Wisdom of Crowds cluster ensemble

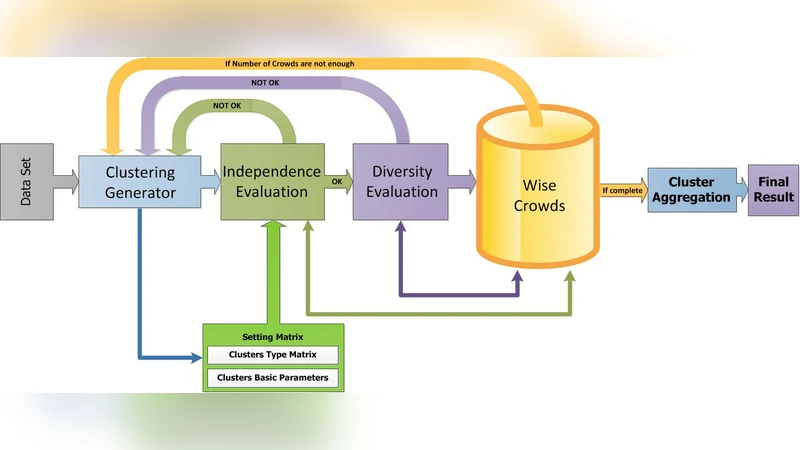

The Wisdom of Crowds is a phenomenon described in social science that suggests four criteria applicable to groups of people. It is claimed that, if these criteria are satisfied, then the aggregate decisions made by a group will often be better than those of its individual members. Inspired by this concept, we present a novel feedback framework for the cluster ensemble problem, which we call Wisdom of Crowds Cluster Ensemble (WOCCE). Although many conventional cluster ensemble methods focusing on diversity have recently been proposed, WOCCE analyzes the conditions necessary for a crowd to exhibit this collective wisdom. These include decentralization criteria for generating primary results, independence criteria for the base algorithms, and diversity criteria for the ensemble members. We suggest appropriate procedures for evaluating these measures, and propose a new measure to assess the diversity. We evaluate the performance of WOCCE against some other traditional base algorithms as well as state-of-the-art ensemble methods. The results demonstrate the efficiency of WOCCE’s aggregate decision-making compared to other algorithms.

💡 Research Summary

The paper introduces a framework called Wisdom of Crowds Cluster Ensemble (WOCCE) that adapts the social‑science concept of the “wisdom of crowds” to the cluster ensemble problem. Classical wisdom‑of‑crowds theory holds that a group’s collective decision will often be better than the judgments of its individual members when four conditions are satisfied: diversity, independence, decentralization, and aggregation (collective wisdom). The authors map each of these conditions onto clustering:

- Decentralization – Base clusterings are generated from different “views” of the data. This is achieved by varying the sampling strategy, selecting different subsets of features, or applying distinct data transformations (e.g., PCA, random projections). The goal is to ensure that each base algorithm focuses on a different region or aspect of the data space.

- Independence – The base algorithms must operate without influencing each other. WOCCE therefore employs heterogeneous clustering methods (K‑means, DBSCAN, Spectral Clustering, Agglomerative, etc.) and randomizes initial seeds and hyper‑parameters. By avoiding shared initialization or common parameter settings, the ensemble reduces correlated errors.

- Diversity – While most ensemble literature measures diversity with Normalized Mutual Information (NMI) or Adjusted Rand Index (ARI), the authors argue that these metrics overlook structural differences such as boundary shape and intra‑cluster cohesion. They propose a new “Diversity Score” that combines (a) a Kullback‑Leibler divergence between label distributions, (b) a boundary‑difference term that captures how cluster borders shift, and (c) a cohesion term reflecting intra‑cluster compactness. Formally, for two base partitions A and B,

\
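The three conditions described above can be illustrated with a minimal sketch. It is not the authors' implementation: all function names (`feature_view`, `kmeans`, `nmi`, `build_ensemble`, `diversity_matrix`) are hypothetical, a tiny k‑means stands in for the heterogeneous base algorithms, and diversity is measured with the conventional 1 − NMI rather than the proposed Diversity Score, whose formula is not given in this summary.

```python
import math
import random
from collections import Counter


def feature_view(data, n_features, rng):
    """Decentralization: keep only a random subset of the feature columns."""
    dims = rng.sample(range(len(data[0])), n_features)
    return [[row[d] for d in dims] for row in data]


def sq_dist(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def kmeans(points, k, rng, n_iter=20):
    """Tiny Lloyd's k-means; a stand-in for any base clustering algorithm."""
    centers = rng.sample(points, k)
    labels = [0] * len(points)
    for _ in range(n_iter):
        labels = [min(range(k), key=lambda c: sq_dist(p, centers[c]))
                  for p in points]
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # an empty cluster keeps its previous center
                centers[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


def nmi(a, b):
    """Normalized Mutual Information (arithmetic-mean normalization)."""
    n = len(a)
    pa, pb, pab = Counter(a), Counter(b), Counter(zip(a, b))

    def entropy(counts):
        return -sum(c / n * math.log(c / n) for c in counts.values())

    mi = sum(c / n * math.log(c * n / (pa[x] * pb[y]))
             for (x, y), c in pab.items())
    denom = (entropy(pa) + entropy(pb)) / 2
    # Two single-cluster partitions are treated as identical (NMI = 1).
    return mi / denom if denom > 0 else 1.0


def build_ensemble(data, n_members, k, n_features, seed=0):
    """Generate base partitions, each from its own view and its own seed."""
    master = random.Random(seed)
    members = []
    for _ in range(n_members):
        # Independence: every member gets a separate random generator.
        rng = random.Random(master.getrandbits(32))
        view = feature_view(data, n_features, rng)
        members.append(kmeans(view, k, rng))
    return members


def diversity_matrix(members):
    """Conventional pairwise diversity, 1 - NMI (assumed, not the paper's score)."""
    return [[1.0 - nmi(a, b) for b in members] for a in members]
```

Because NMI is invariant to label permutations, two partitions that differ only in cluster naming have diversity 0, while statistically unrelated partitions approach 1. A fuller sketch would also vary the base algorithm and its hyper‑parameters per member, as the Independence condition describes.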
