Unsupervised Semantic Action Discovery from Video Collections

Human communication takes many forms, including speech, text and instructional videos. It typically has an underlying structure, with a starting point, ending, and certain objective steps between them. In this paper, we consider instructional videos …

Authors: Ozan Sener, Amir Roshan Zamir, Chenxia Wu

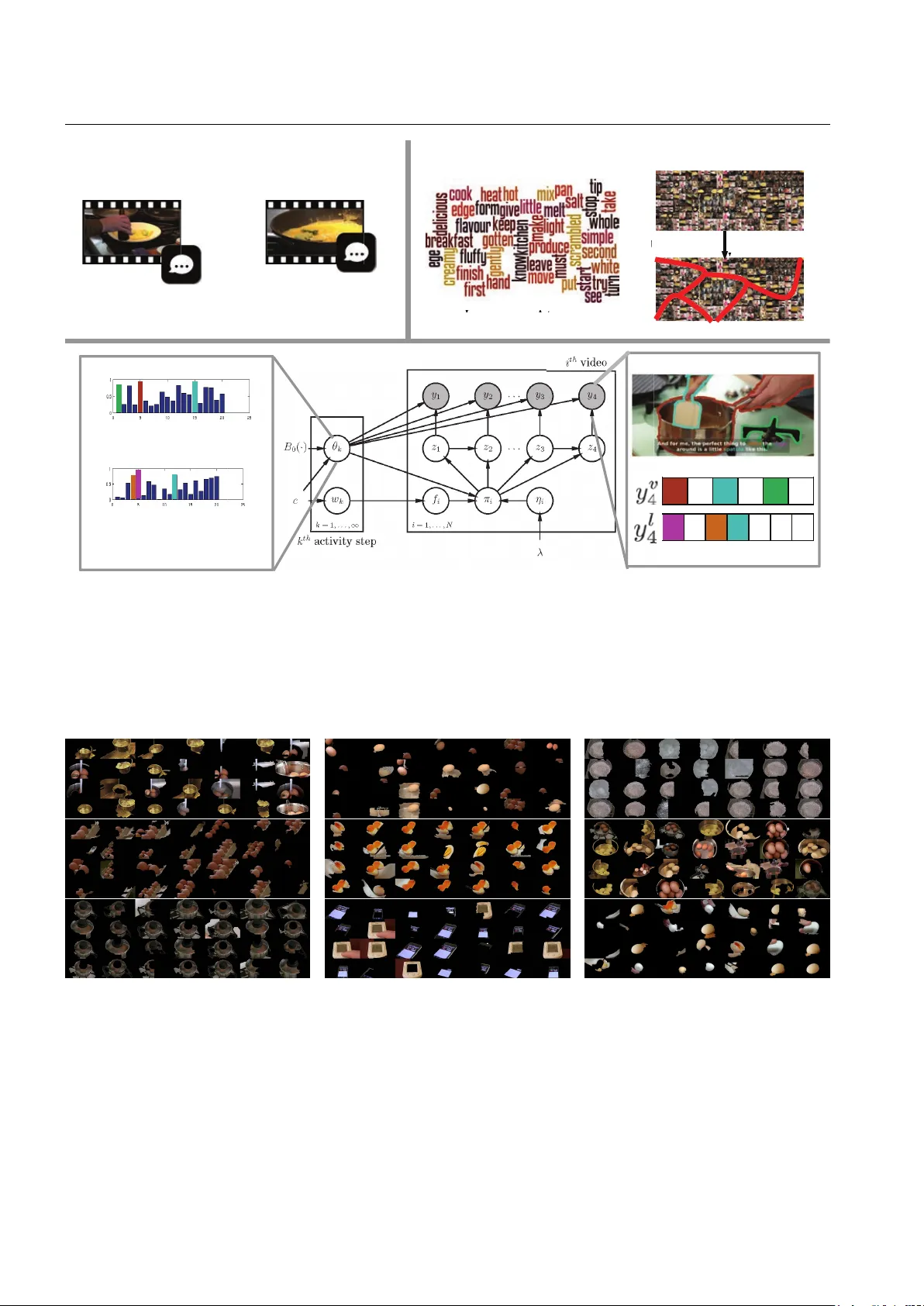

IJCV man uscript No. (will b e inserted b y the editor) Unsup ervised Seman tic Action Disco v ery from Video Collections Ozan Sener · Amir Roshan Zamir · Chenxia W u · Silvio Sav arese · Ash utosh Saxena Received: date / Accepted: date Abstract Human comm unication takes many forms, including sp eec h, text and instructional videos. It t ypi- cally has an underlying structure, with a starting p oin t, ending, and certain ob jective steps betw een them. In this paper, w e consider instructional videos where there are tens of millions of them on the Internet. W e prop ose a metho d for parsing a video into such seman tic steps in an unsup ervised w a y . Our method is capable of pro viding a seman tic “storyline” of the video comp osed of its ob jective steps. W e accomplish this us- ing b oth visual and language cues in a joint generativ e mo del. Our metho d can also pro vide a textual descrip- tion for each of the identified semantic steps and video segmen ts. W e ev aluate our method on a large num ber of complex Y ouT ub e videos and show that our metho d disco vers seman tically correct instructions for a v ariet y of tasks. 1 O. Sener Cornell Universit y , Ithaca NY 14853, USA E-mail: ozan@cs.cornell.edu A.R. Zamir Stanford Universit y , Stanford CA 94305, USA E-mail: zamir@cs.stanford.edu C. W u Cornell Universit y , Ithaca NY 14853, USA E-mail: wu@cs.cornell.edu S. Sav arese Stanford Universit y , Stanford CA 94305, USA E-mail: silvio@stanford.edu A. Saxena Brain of Things Inc, Cup ertino CA 95014, USA E-mail: ashutosh@brainoft.com 1 First version of this pap er app eared in ICCV 2015. This extended version has more details on the learning algorithm and hierarchical clustering with full deriv ation, additional analysis on the robustness to the subtitle noise, and a nov el application on rob otics. 1 In tro duction In the last decade, we hav e seen a significant demo cra- tization in the wa y w e access and generate information. One of the ma jor shifts has been in mo ving from exp ert- curated information sources into cro wd-generated large scale knowledge bases suc h as Wikipedia(Wikip edia 2004). F or example, the wa y we generate and access c o ok- ing r e cip es has been transformed substantially . Go ogle T rends(Google 2016) indicates that in the year of 2005 n umber of Go ogle searches for c o okb o oks were 1 . 56 times larger than the num ber of searches for c o oking vide os . In the year 2016, the num ber of searc hes for c o oking vide os is 8 . 6 times larger than that of c o okb o oks . This b eha vior is mostly due to the large v olume of co oking videos av ailable on the internet. In an era where an a v- erage user gets 2 million videos for the query How to make a p anc ake? , w e need computer vision algorithms that can understand suc h information and represent it to the users in a compact form. Suc h algorithms are not only useful for humans to digest millions of videos but also useful for rob ots to learn concepts from online video collections in order to p erform tasks. Considering the in tractabilit y of sup ervised infor- mation in large-scale video collections, we b elieve the k ey to the unsup ervised grounding is utilizing the struc- tural assumptions. Human communication takes many forms, including language and videos. F or instance, ex- plaining “ho w-to” perform a certain task can be com- m unicated via language (e.g., Do-It-Y ourself b o oks) as w ell as visual (e.g., instructional Y ouT ub e videos) infor- mation. Regardless of the form, such h uman-generated comm unication is generally structured and has a clear b eginning, end, and a set of steps in betw een. Finding this hidden and ob jective steps of human communica- 2 Sener et al. tion is a critical step to understand large video collec- tions. Language and vision provide different, but corre- lating and complemen tary information. Challenge lies in that both video frames and language (from subti- tles generated via Automatic Sp eech Recognition) are only a noisy , partial observ ation of the actions b eing p erformed. Ho wev er, the complementary nature of lan- guage and vision giv es the opp ortunity to understand the activities only from these partial observ ations. In this pap er, we present a unified mo del, considering b oth of the mo dalities, in order to parse human activities into activit y steps with no form of supervision other than re- quiring videos to be the same category (e.g., all co oking eggs, c hanging tires, etc.). Fig. 1: Giv en a large video collection (frames and subtitles) of an instructional category (e.g., Ho w to co ok an ommelette?), w e discov er activity steps (e.g., crac k the eggs). W e also parse the videos based on the disco vered steps. The k ey idea in our approac h is the observ ation that the large collection of videos, p ertaining to the same activit y class, t ypically include only a few ob jective ac- tivit y steps, and the v ariability is the result of exp o- nen tially many wa ys of generating videos from activity steps through subset selection and time ordering. W e study this construction based on the large-scale infor- mation av ailable in Y ouT ube in the form of instruc- tional videos (e.g., “Making panck age”, “How to tie a b ow tie”). Instructional videos hav e many desirable prop erties lik e the v olume of the information and a w ell defined notion of activit y step. How ev er, the proposed parsing metho d is applicable to any t ype of videos as long as they are comp osed of a set of steps. The output of our metho d can b e seen as the se- man tic “storyline” of a rather long and complex video collection (see Fig. 1). This storyline pro vides what par- ticular steps are taking place in the video collection, when they are o ccurring, and what their meaning is ( what-when-how ). This metho d also puts videos per- forming the same ov erall task in common ground and capture their high-lev el relations. In the prop osed approach, giv en a collection of videos, w e first generate a set of language and visual atoms. These atoms are the result of relating ob ject prop osals from eac h frame as w ell as detecting the frequen t w ords from subtitles. W e then emplo y a generativ e b eta pr o- c ess mixtur e mo del , which iden tifies the activity steps shared among the videos of the same category based on a represen tation using learned atoms. Although we do not explicity enforce this steps to b e semantically mean- ingful, our results highly correlate with the semantic steps. In our metho d, we do neither use an y spatial or temp oral lab el on actions/steps nor any lab els on ob ject categories. W e later learn a Marko v language mo del to pro vide a textual description of the activit y steps based on the language atoms it frequen tly uses. W e ev aluate our approach on v arious settings. First of all, we collected a large-scale dataset of instructional videos from Y ouT ub e following the most frequen tly p er- formed how to queries. Then, we ev aluate temp oral parsing qualit y per video and also a seman tic clustering p er category (how-to query). Second of all, we exten- siv ely analyze the contribution of eac h mo dality as w ell as the robustness against the language noise. Robust- ness against the language noise is a critical one since ASR alwa ys expected to ha v e some errors. Moreov er, results suggest that b oth language and vision is critical for semantic parsing. Finally , we discuss and presen t a no vel robotics application. W e start with a single query and generate a detailed physical plan to p erform the task. W e present a comp elling simulation results sug- gesting that our algorithm has a great p otential for rob otics applications. 2 Related W ork Designing an artificial in telligence agent which can un- derstand h uman generated videos hav e been topic of computer vision and rob otics researc hers for decades. Motiv ated by the application of surveillance, video sum- marization was one of the earliest metho ds which are re- lated to our problem. The surveillance applications fur- ther motiv ated the activit y and ev en t recognition meth- o ds. With the help of the a v ailabilit y of larger datasets, researc hers managed to train mac hine learning mo dels whic h can detect certain even ts . Recently , the datasets ha ve gotten larger and cross-modal enabling algorithms whic h can link vision with language. In the mean time, the fo cus of rob otics comm unity w as on parsing recip es directly for manipulation. W e list and discuss related w orks from each field in the following sections. Unsup ervised Semantic Action Discov ery from Video Collections 3 2.1 Video Summarization: Summarizing an input video as a sequence of k ey frames (static) or video clips (dynamic) is useful for b oth mul- timedia search interfaces and retriev al purp oses. Early w orks in the area are summarized in (T ruong and V enk atesh 2007) and mostly fo cus on cho osing keyfr ames . Summarizing videos is particularly imp ortant for some sp ecific domains like ego-centric videos and news rep orts as they are generally long in duration. There are man y succesful w orks (Lee et al 2012; Lu and Grau- man 2013; Rui et al 2000); ho wev er, they mostly rely on c haracteristics sp ecific to the domain. Summarization is also applied to the large image collections by recov ering the temp oral ordering and vi- sual similarit y of images (Kim and Xing 2014), and by Gupta et al. (Gupta et al 2009) to videos in a super- vised framew ork using action annotations. These collec- tions are also used for key-frame selection (Khosla et al 2013) and further extended to video clip selection (Kim et al 2014; Potapov et al 2014). Unlik e all of these meth- o ds whic h focus on forming a set of k ey frames/clips for a compact summary (which is not necessarily semanti- cally meaningful), we pro vide a fresh approach to video summarization by p erforming it through seman tic pars- ing on vision and language. How ev er, regardless of this dissimilarit y , w e exp erimentally compare our metho d against them. 2.2 Mo deling Visual and Language Information: Learning the relationship b etw een the visual and lan- guage data is a crucial problem due to its immense ap- plications. Early metho ds (Barnard et al 2003) in this area fo cus on learning a common multi-modal space in order to join tly represen t language and vision. They are further extended to learning higher level relations b e- t ween ob ject segments and words (So cher and F ei-F ei 2010). Similarly , Zitnic k et al.(Zitnick et al 2013; Zitnick and P arikh 2013) used abstracted clip-arts to under- stand spatial relations of ob jects and their language cor- resp ondences. Kong et al. (Kong et al 2014) and Fidler et al. (Fidler et al 2013) b oth accomplished the task of learning spatial reasoning by only using the image cap- tions. Relations extracted from image-caption pairs, are further used to help seman tic parsing (Y u and Siskind 2013) and activity recognition (Motw ani and Mo oney 2012). Recen t w orks also fo cus on automatic genera- tion of image captions with underlying ideas ranging from finding similar images and transferring their cap- tions (Ordonez et al 2011) to learning language mo dels conditioned on the image features (Kiros et al 2014; So c her et al 2014; F arhadi et al 2010); their emplo y ed approac h to learning language mo dels is typically ei- ther based on graphical mo dels (F arhadi et al 2010) or neural netw orks (So c her et al 2014; Kiros et al 2014; Karpath y and F ei-F ei 2014). All aforementioned metho ds are using supervised la- b els either as strong image-word pairs or w eak image- caption pairs, while our metho d is fully unsup ervised. 2.3 Activit y/Even t Recognition: The literature of activit y recognition is broad. The clos- est techniques to ours are either sup ervised or fo cus on detecting a particular (and often short) action in a w eakly/unsupervised manner. Also, a large b o dy of action recognition metho ds are in tended for trimmed videos clips or remain limited to detecting v ery short actions (Kuehne et al 2011; So omro et al 2012; Niebles et al 2010; Laptev et al 2008; Efros et al 2003; Ryoo and Aggarw al 2009). Ev en though some recent w orks at- tempted action recognition in untrimmed videos (Jiang et al 2014; Oneata et al 2014; Jain et al 2014), they are mostly fully sup ervised. Additionally , sev eral metho d for lo calizing instances of actions in rather longer video sequences hav e been de- v elop ed (Duchenne et al 2009; Hoai et al 2011; Laptev and P ´ erez 2007; Bo jano wski et al 2014; Pirsiav ash and Ramanan 2014). Our w ork is different from those in terms of b eing multimodal, unsup ervised, applicable to a video collection, and not limited to identifying pre- defined actions or the ones with short temporal spans. Also, the previous works on finding action primitiv es suc h as (Niebles et al 2010; Y ao and F ei-F ei 2010; Jain et al 2013; Lan et al 2014b,a) are primarily limited to disco vering atomic sub-actions, and therefore, fail to iden tify complex and high-level parts of a long video. Recen tly , ev en t recounting has attracted m uc h in- terest and in tends to identify the evidential segmen ts for which a video b elongs to a certain class (Sun and Nev atia 2014; Das et al 2013; Barbu et al 2012). Even t recoun ting is a relativ ely new topic and the existing metho ds mostly emplo y a sup ervised approach. Also, their end goal is to iden tify what parts of a video are highly related to an even t, and not parsing the video in to semantic steps. 2.4 Recip e Understanding: F ollowing the interest in comm unit y generated recip es in the web, there ha v e been many attempts to auto- matically pro cess recip es. Recent metho ds on natural language processing (Malmaud et al 2014; T enorth et al 2010) fo cus on semantic parsing of language recipes in 4 Sener et al. order to extract actions and the ob jects in the form of predicates. T enorth et al.(T enorth et al 2010) further pro cess the predicates in order to form a complete logic plan. The aforementioned approac hes fo cus only on the language mo dality and they are not applicable to the videos. The recent adv ances (Beetz et al 2011; Bollini et al 2011) in rob otics use the parsed recip e in order to p erform co oking tasks. They use sup ervised ob ject de- tectors and report a successful autonomous exp eriment. In addition to the language based approaches, Malmaud et al.(Malmaud et al 2015) consider b oth language and vision mo dalities and prop ose a metho d to align an in- put video to a recipe. Ho wev er, it can not extract the steps automatically and requires a ground truth recip e to align. On the con trary , our metho d uses b oth vi- sual and language mo dalities and extracts the actions while autonomously discov ering the steps. There is also an approach which generates multi-modal recip es from exp ert demonstrations (Grabler et al 2009). Ho w ever, it is developed only for the domain of ”teaching user in terfaces” and are not applicable to videos. In summary , three aspects differentiate this w ork from the ma jority of existing techniques: 1) discov ering seman tic steps from a video category , 2) being unsup er- vised, 3) adopting a multi-modal join t vision-language mo del for video parsing. 3 Problem Ov erview Our algorithm takes an how-to sen tence as an input query which w e further use to do wnload a large-collection of videos. W e then learn a m ulti-mo dal dictionary using a nov el hierarchical clustering approach. W e finally use the learned dictionary in order to disco ver and lo calize activit y steps. W e visualize this pro cess in Figure 2 with a to y example. The output of our algorithm is temporal parsing of each video as well as an id for each semantic activit y step. In other w ords, w e not only temporally segmen t each video, we also relate the o ccurrence of same activity ov er multiple videos with each other. W e further visualize the output in Figure 1. A toms: Given a large video-collection comp osed of vi- sual information as well as subtitles, our algorithm starts with learning a set of visual and language atoms which are further used for representing m ultimodal informa- tion (Section 4). These atoms are designed to b e likely to corresp ond to the mid-level seman tic concepts like actions and ob jects. In order to learn language atoms , w e find frequently occurring salient words among the subtitles using tf-idf like approac h. Learning visual atoms is slightly trickier due to the intra-cluster v ariabilit y of visual concepts. W e generate ob ject prop osals and T able 1: Notation of the Paper Learning Atoms I t t th frame of the video L t subtitle for t th frame y t = y v t , y l t feature representation of t th frame x p i,r 1 if p th cluster has r th proposal of i th video, 0 o.w. z t activity ID of frame t Learning Activities - Beta Pro cess HMM x p binary vector for p th cluster f k i 1 if i th video has k th activity 0 o.w . Θ k = Θ v k , Θ l k emission prob. of k th activity η k,k 0 i P ( z t +1 = k 0 | z t = k ) for i th vid π k,k 0 i η k,k 0 i × f k i × f k 0 i join tly-cluster them into mid-lev el atoms to obtain vi- sual atoms. W e develop a hierarchical clustering algo- rithm for this purp ose (Section 5). Disc overing A ctivities: After learning the atoms, we represen t the m ulti-mo dal information in each frame based on the o ccurrence statistics of the atoms. Giv en the multi-modal represen tation of eac h frame, w e dis- co ver set of temp oral clusters occurring ov er m ultiple videos using a non-parametric Bay esian metho d (Sec- tion 6). W e expect these clusters to corresp ond to the activit y steps which construct the high lev el activities. Our empirical results confirms this as the resulting clus- ters significantly correlates with the seman tic activity steps. 4 Multi-Mo dal Represen tation with A toms Finding the set of activity steps o v er large collection of videos ha ving large visual v arieties requires us to rep- resen t the semantic information in addition to the lo w- lev el visual cues. Hence, w e find our language and visual atoms by using mid-lev el cues lik e ob ject prop osals and frequen t words. Learning Visual A toms: In order to learn visual atoms, we create a large collection of ob ject prop os- als by indep endently generating ob ject proposals from eac h frame of each video. These prop osals are generated using the Constrained P arametric Min-Cut (CPMC) (Carreira and Sminchisescu 2010) algorithm based on b oth appearance and motion cues. W e note the k th pro- p osal of t th frame of i th video as r ( i ) ,k t . Moreo v er, w e drop the video index ( i ) if it is clearly implied in the con text. In order to group this ob ject prop osals into mid- lev el visual atoms, we follo w a clustering approach. Al- though any graph clustering approac h (eg. Keysegmen ts (Lee et al 2011)) can be applied for this, the joint Unsup ervised Semantic Action Discov ery from Video Collections 5 pro cessing of a large video collection requires handling large visual v ariabilit y among multiple videos. W e pro- p ose a new metho d to join tly cluster ob ject prop osals o ver multiple videos in Section 5. Eac h cluster of ob ject prop osals corresp ond to a visual atom. Learning Language A toms: W e define the language atoms as the salient w ords whic h o ccur more often than their ordinary rates based on the tf-idf measure. The do cument is defined as the concatenation of all subti- tles of all frames of all videos in the collection as D = S i ∈ N C S t ∈ T ( i ) L i t . Then, w e follow the classical tf-idf measure and use it as tf id f ( w , D ) = f w,D × log 1 + N n w where w is the w ord we are computing the tf-idf score for, f w,D is the frequency of the word in the do cument D , N is the total num ber of video collections w e are pro cessing, and n w is the num ber of video collections whose subtitle include the w ord w . W e sort words with their ”tf-idf” v alues and choose the top K words as language atoms ( K = 100 in our exp eriments ). As an example, we show the language atoms learned for the category making scr amble d e gg in Figure 2 Represen ting F rames with A toms: After learning the visual and language atoms, w e represen t eac h frame via the o ccurrence of atoms (binary histogram). F or- mally , the representation of the t th frame of the i th video is denoted as y ( i ) t and computed as y ( i ) t = [ y ( i ) , l t , y ( i ) , v t ] suc h that k th en try of the y ( i ) , l t is 1 if the subtitle of the frame has the k th language atom and 0 otherwise. y ( i ) , v t is also a binary vector similarly defined o v er vi- sual atoms. W e visualize the representation of a sample frame in the Figure 3. Fig. 3: Represen tation for a sample frame. Three of the ob ject prop osals of sample frame are in the visual atoms and three of the wor ds are in the language atoms. 5 Join t Clustering o v er Video Collection Giv en a set of ob ject prop osals generated from ”mul- tiple videos”, simply combining them into a single col- lection and clustering them into atoms is not desirable for t w o reasons: (1) semantic concepts ha v e large vi- sual differences among different videos and accurately clustering them into a single atom is hard, (2) atoms should con tain ob ject prop osals from m ultiple videos in order to semantically relate the videos. In order to satisfy these requirements, w e prop ose a joint exten- sion to spectral clustering. Note that the purp ose of this clustering is generating atoms where each clusters represen ts an atom. Multi Video Edges Multi Video Edges Proposal Graph for Video 1 Proposal Graph for Video 2 Proposal Graph for Video 3 Fig. 4: Join t prop osal clustering. Here, we show the 1 st N N video graph and 2 nd N N region graph. Eac h ob ject prop osal is linked to its tw o NNs from the video it b elongs and t wo NNs from the videos it is neigh b our of. Black no des are the prop osals selected as part of the cluster and the gra y ones are not selected. Moreo ver, dashed lines are intra-video edges and solid ones are in ter-video edges. Basic Graph Clustering: Consider the set of ob ject prop osals extracted from a single video { r k t } , and a pair- wise similarity metric d ( · , · ) for them. W e follo w the sin- gle cluster graph partitioning (SCGP)(Olson et al 2005) approac h to find the dominant cluster whic h maximizes the in tra-cluster similarity: arg max x k t P ( k 1 ,t 1 ) , ( k 2 ,t 2 ) ∈ K × T x k 1 t 1 x k 2 t 2 d ( r k 1 t 1 , r k 2 t 2 ) P ( k,t ) ∈ K × T x k t (1) where x k t is a binary v ariable which is 1 if r k t is in- cluded in the cluster, T is the n um b er of frames and K is the num ber of clusters p er frame. Adopting the v ector form of the indicator v ariables as x tK + k = x k t and the pairwise distance matrix as A t 1 K + k 1 ,t 2 K + k 2 = d ( r k 1 t 1 , r k 2 t 2 ), equation (1) can b e compactly written as arg max x x T A x x T x This can b e solv ed by finding the dom- inan t eigenv ector of x after relaxing x k t to [0 , 1] (Olson 6 Sener et al. et al 2005; P erona and F reeman 1998). Upon finding the cluster, the members of the selected cluster are re- mo ved from the collection and the same algorithm is applied to find remaining clusters. Join t Clustering: Our extension of the SCGP in to m ultiple videos is based on the assumption that the k ey ob jects occur in most of the videos. Hence, we re- form ulate the problem by enforcing the homogeneity of the cluster o ver all videos. W e first create a kNN graph of the videos based on the distance b etw een their textual descriptions. W e use the χ 2 distance of the bag-of-words computed from the video description. W e also create the kNN graph of ob ject prop osals in eac h video based on the pretrained ”fc7” features of AlexNet(Krizhevsky et al 2012). This hierarc hical graph structure is visualized in Figure 4 for 3 videos sample. After creating this graph, w e imp ose b oth ”in ter-video” and ”intra-video” similarit y among the ob ject prop osals of each cluster. Main rationale b e- hind this construction is ha ving a separate notion of distance for in ter-video and in tra-video relations since the visual similarit y decreases drastically for in ter-video ones. Giv en the in tra-video distance matrices A ( i ) , the bi- nary indicator v ectors x ( i ) , and the in ter-video distance matrices as A ( i , j ) , w e define our optimization problem as; arg max X i ∈ N x ( i ) T A ( i ) x ( i ) x ( i ) T x ( i ) + X i ∈ N X j ∈N ( i ) x ( i ) T A ( i , j ) x ( j ) x ( i ) T 11 T x ( j ) , (2) where N ( i ) is the neighbours of the video i in the kNN graph, 1 is vector of ones and N is the n um ber of videos. Although we can not use the efficien t eigen-decomp osition approac h from (Olson et al 2005; P erona and F ree- man 1998) as a result of the mo dification, we can use Sto c hastic Gradient Descen t as the cost function is quasi- con vex when relaxed. W e use the SGD with the follow- ing analytic gradien t function: ∇ x ( i ) = 2 A ( i ) x ( i ) − 2 x ( i ) r ( i ) x ( i ) T x ( i ) + X i ∈ N A i , j x j − x ( j ) T 1 r ( i,j ) x ( i ) T 11 T x ( j ) , (3) where r ( i ) = x ( i ) T A ( i ) x ( i ) x ( i ) T x ( i ) and r ( i,j ) = x ( i ) T A ( i , j ) x ( j ) x ( i ) T 11 T x ( j ) W e iterativ ely use the metho d to find clusters, and stop after the K = 20 clusters are found as the remain- ing ob ject prop osals were deemed not relev an t to the activit y . Eac h cluster corresponds to a visual atom for our application. In Figure 5, w e visualize some of the atoms (i.e. clusters) w e learned for the query How to Har d Boil an Egg? . As apparent in the figure, the resulting atoms are highly correlated and correspond to semantic ob- jects&concepts regardless of their significant in tra-class v ariability . 6 Unsup ervised Activit y Represen tation In this section, we explain our mo del for disco v ering the activit y steps from a video collection given the lan- guage and visual atoms. The main idea behind this step is utilizing the rep etitive nature of steps. In other words, although there are large num ber of videos in an y c ho- sen category , the underlying set of steps are v ery few. Hence, w e tried to find smallest set of activities whic h can generate all the videos w e crawl. W e note the extracted represen tation of the frame t of video i as y ( i ) t . W e mo del our algorithm based on activit y steps and note the activit y lab el of the t th frame of the i th video as z ( i ) t . W e do not fix the the n um ber of activities and use a non-parametric approac h. In our mo del, each activity step is represented ov er the atoms as the likelihoo d of including them. In other w ords, each activity step is a Bernoulli distribution o v er the visual and language atoms as θ k = [ θ l k , θ v k ] suc h that m th en try of the θ l k is the lik eliho o d of observing m th language atom in the frame of an activit y k . Similarly , m th en try of the θ v k represen ts the lik elihoo d of seeing m th visual atom. In other w ords, each frame’s repre- sen tation y ( i ) t is sampled from the distribution corre- sp onding to its activity as y ( i ) t | z ( i ) t = k ∼ B er ( θ k ). As a prior ov er θ , we use its conjugate distribution – Beta distribution –. Giv en the model ab ov e, w e explain the generative mo del which links activity steps and frames in Sec- tion 6.1. 6.1 Beta Pro cess Hidden Mark ov Mo del F or the understanding of the time-series information, F ox et al.(F o x et al 2014) proposed the Beta Process Hidden Mark o v Mo dels (BP-HMM). In BP-HMM set- ting, each time-series exhibits a subset of a v ailable fea- tures. Similarly , in our setup each video exhibits a sub- set of activit y steps. Our model follo ws the construction of F o x et al.(F ox et al 2014) and differs in the choice of probabilit y distri- butions since (F ox et al 2014) considers Gaussian obser- v ations whereas w e adopt binary observ ations of atoms. In our mo del, eac h video i chooses a set of activity steps through an activit y step v ector f ( i ) suc h that f ( i ) k is 1 if i t h video has the activity step k , and 0 otherwise. Unsup ervised Semantic Action Discov ery from Video Collections 7 When the activity step vectors of all videos are con- catenated, it b ecomes an activity step matrix F such that i t h row of the F is the activit y step v ector f ( i ) . Moreo ver, each activit y step k also has a prior proba- bilit y b k and a distribution parameter θ k whic h is the Bernoulli distribution as we explained in the Section 6. In this setting, the activity step parameters θ k and b k follo w the b eta pr o c ess as; B | B 0 , γ , β ∼ BP( β , γ B o ) , B = ∞ X k =1 b k δ θ k (4) where B 0 and the b k are determined by the underly- ing Poisson pro cess (Griffiths and Ghahramani 2005) and the feature v ector is determined as indep endent Bernoulli dra ws as f ( i ) k ∼ B er ( b k ). After marginaliz- ing o v er the b k and θ k , this distribution is sho wn to b e equiv alent to Indian Buffet Pro cess (IBP)(Griffiths and Ghahramani 2005). In the IBP analogy , each video is a customer and each activity step is a dish in the buffet. The first customer (video) chooses a P oisson( γ ) unique dishes (activit y steps). The following customer (video) i chooses previously sampled dish (activity step) k with probability m k i , prop ortional to the n umber of customers ( m k ) c hosen the dish k , and it also chooses P oisson( γ i ) new dishes(activity steps). Here, γ controls the n umber of selected activities in each video and β promotes the activities getting shared b y videos. The ab ov e IBP construction represen ts the activ- it y step discov ery part of our method. In addition, w e also need to mo del the video parsing o v er discov ered steps. Moreov er, w e need to mo del this tw o steps jointly . W e model the eac h video as an Hidden Mark o v Mo del (HMM) ov er the selected activity steps. Eac h frame has the hidden s tate –activity step– ( z ( i ) t ) and we ob- serv e the multi-modal frame represen tation y ( i ) t . Since w e mo del each activit y step as a Bernoulli distribution, the emission probabilities follow the Bernoulli distribu- tion as p ( y ( i ) t | z ( i ) t ) = B er ( θ z ( i ) t ). F or the transition probabilities of the HMM, we do not put an y constraint and simply mo del it as an y p oint from a probability simplex which can b e sampled by dra wing a set of Gamma random v ariables and normal- izing them (F ox et al 2014). F or each video i , a Gamma random v ariable is sampled for the transition b etw een activit y step j and activit y step k if b oth of the ac- tivit y steps are included by the video (i.e. if f i k and f i j are b oth 1). After sampling these random v ariables, we normalize them to make transition probabilities to sum up 1. This pro cedure can b e represen ted formally as η ( i ) j,k ∼ Gam ( α + κδ j,k , 1) , π ( i ) j = η ( i ) j ◦ f ( i ) P k η ( i ) j,k f ( i ) k (5) Where κ is the p ersistence parameter promoting the self state transitions a.k.a. more coherent temp oral b ound- aries, ◦ is the elemen t-wise pro duct and π i j is the tran- sition probabilities in video i from activit y step j to other steps. This mo del is also presented as a graphical mo del in Figure 6 k = 1 , . . . , ∞ i = 1 , . . . , N c y 2 · · · η i f i y 1 · · · λ k th activity step π i B 0 ( · ) i th video w k z 4 y 3 z 3 y 4 z 1 z 2 θ k Fig. 6: Graphical mo del for BP-HMM: The left plate represen t the activity steps and the right plate represen t the videos. i.e. the left plate is for the activit y step discov ery and right plate is for parsing. Se e Se ction 6.1 for details. 6.2 Gibbs sampling for BP-HMM W e employ Mark ov Chain Mon te Carlo (MCMC) metho d for learning and inference of the BP-HMM. W e follow the exact sampler prop osed b y F ox et al.(F ox et al 2014). It marginalize o v er activity likelihoo ds w and activit y assignments z and samples the rest. MCMC pro cedure iterativ ely samples the conditional lik eliho o d of activity matrix F , activity parameters θ and transi- tion weigh ts η . W e divide the explanation of this sam- plers into tw o sections, sampling the activities through activit y matrix ( F ) and activity parameters ( θ ), and sampling the HMM parameters η . Marginalization ov er activit y assignmen ts follows the efficient dynamic pro- gramming approac h. Sampling the A ctivities: Consider the binary activit y inclusion matrix F such that F i,j is 1 if the i th video has the j th activit y . F ollo wing the sampler of F ox et al.(F o x et al 2014), w e divide the sampling F into tw o parts, namely , sampling the shar ed activities and sampling the no vel activities. Sampling shared activities correspond the re-sampling of existing en tries of F . W e simply it- erate o v er eac h entry and prop ose a flip (i.e. if the i th video has the j th activit y , we prop ose to flip it and not 8 Sener et al. to include j th activit y in the i th video). W e accept or reject this prop osals following the Metrop olis-Hasting rule. In order to sample the nov el activities, we follo w the data-driv en sampler (Hughes and Sudderth 2012). Consider the case in which we wan t to prop ose a nov el activit y b y setting the F i,j +1 to 0. In other w ords, w e in tro duce a new activity ( j + 1 th activit y) such that i th video includes it. In order to sample the parameters θ j +1 of it, w e first sample a temp oral windo w W ov er the i th video. This window is sampled by sampling the starting frame and the length of the windo w from a uni- form distribution. Then, we sample the nov el activity from Beta distribution as; θ k,n | W ∼ Beta( α n , β n ) (6) where θ k,n is the n th en try of θ k , α n is the n um ber of frames in the windo w W which ha v e the atom n , and β n is the num ber of frames which do not hav e the atom n . W e use Beta distribution b ecause it is the conjugate prior of the Bernoul li distribution that w e use to mo del activities. Sampling the HMM Par ameters: When the activities are defined via Θ and each video selects a subset of them via ( F ), we can compute the lik elihoo d of eac h state assignmen t by using the dynamic programming giv en the transition probabilities η . By using the lik eliho o ds, w e sample the state assignments z . When the states are sampled, w e can use the closed- form sampler derived in (F ox et al 2014). F ox et al.(F ox et al 2014) sho ws that the transition probabilities can b e sampled through a Dirichlet random v ariable and scaling it with a Gamma random v ariable as; π ( i ) ∼ D ir ( . . . , N ( i ) j,k + α + δ j,k κ, . . . ) (7) follo wed by η ( i ) = π ( i ) × C ( i ) suc h that C ( i ) ∼ Gamma ( K ( i ) + λ + κ, 1). Here, N ( i ) j,k represen ts the n umber of transitions betw een state j and state k in the video i , α , λ and κ are hyperparameters which w e learn with cross-v alidation, δ j,k is 1 if j = k and 0 o.w., and K ( i ) + is the num ber of activities the i th video has chosen. A t the end of the Gibbs sampling, our algorithm ends with a set of activities each represen ted with re- sp ect to the discov ered atoms i.e. Θ 1 . . . Θ k and lab el of eac h frame among the discov ered activities [1 , . . . , k ]. Θ i can be considered as a generativ e distribution of eac h disco v ered activity . In other w ords, if we wan t to sample a frame from activity i , w e simply sample set of language and visual atoms from Θ i . W e p erform this sampling in order to generate a language caption for eac h discov ered activit y as explained in Section A. W e also consistently visualize the results of disco very using story lines as shown in Figure 1. W e assign a color co de to eac h disco v ered activity and sample k eyframes from 4 four differen t clips of same activit y . W e further gen- erate a natural language description as well as displa y the temporal segmentation of each video as a colored timeline. 7 Exp erimen ts In order to exp eriment the prop osed metho d, w e first collected a dataset (details in Section 7.1). W e lab elled small part of the dataset with frame-wise activity step lab els and used it as an ev aluation corpus. Neither the set of labels, nor the temp oral boundaries are exp osed to our algorithm since the set-up is completely unsup er- vised. W e ev aluate our algorithm against the several unsup ervised clustering baselines and state-of-the-art algorithms from video summarization literature whic h are applicable. 7.1 Dataset W e use WikiHo w(wik 2015) in order to obtain the top100 queries the in ternet users are interested in and choose the ones which are directly related to the ph ysical world. Resulting queries are; How to Bake Boneless Skinless Chicken, Make Jel lo Shots, Co ok Ste ak, Bake Chicken Br e ast, Har d Boil an Egg, Make Y o- gurt, Make a Milkshake, Make Be ef Jerky, Tie a Tie, Cle an a Coffee Maker, Make Scr ambled Eggs, Br oil Steak, Co ok an Omelet, Make Ic e Cr eam, Make Panc akes, R emove Gum fr om Clothes, Unclo g a Bathtub Dr ain F or eac h of the queries, w e crawled Y ouT ub e and got the top 100 videos. W e also do wnloaded the En- glish subtitles if they exist. W e further randomly c hoose 5 videos out of 100 p er query . Although the choice was random, we discarded outlier videos at this stage and re-sampled without replacement to hav e 5 inlier ev alu- ation video per query . Other than outlier remov al, no h uman sup er vision is used to c ho ose ev aluation videos. Hence, w e ha ve total of 125 ev aluation videos and 2375 unlab elled videos. F or each ev aluation video, we asked an indep endent lab eler to lab el them. The dataset is lab eled by 5 in- dep enden t lab elers eac h annotating 5 categories. W e ask ed lab elers to lab el start and end frame of each ac- tivit y step as well as the name of the step. W e simply ask ed them the question What ar e the activity steps and wher e do es e ach of starts and end? . All lab elers are sho wn 5 wikiHo w( ? ) video recip es with detailed steps b efore starting the annotation pro cess as a baseline. Unsup ervised Semantic Action Discov ery from Video Collections 9 7.1.1 Outlier Vide o R emoval The video collection we obtain without an y exp ert in- terv ention might ha v e outliers; since, our queries are t ypical daily activities and there are man y carto ons, funn y videos, and music videos ab out them. Hence, w e hav e an automatic filtering stage. The k ey-idea b e- hind the filtering algorithm is the fact that instructional videos ha ve a distinguishable text descriptions when compared with outliers. T o exploit this, we use a clus- tering algorithm to find the large cluster of instructional videos with no outlier. Giv en a large video collection, w e use the graph we explain in Section 5 and compute the dominan t video cluster b y using the Single Cluster Graph P artitioning (Olson et al 2005) and discards the remaining videos as outlier. W e represent eac h video as a bag-of-words of their textual description. In Figure 7, w e visualize some of the discarded videos. Although our algorithm ha v e false p ositives while detecting outliers, w e alw a ys ha v e enough n um b er of videos (minim um 50) after the outlier detection thanks to the large-scale dataset. Fig. 7: Sample videos whic h our algorithm discards as an outlier for v arious queries. A toy milkshak e, a milkshake charm, a funny video ab out Ho w to NOT make smo othie, a video ab out the danger of a fire, a carto on video, a neck-tie video erroneously lab eled as b o w-tie, a song, and a lamb co oking mislab eled as c hick en. 7.2 Qualitativ e Results After independently running our algorithm on all cat- egories, we discov er activit y steps and parse the videos according to discov ered steps. W e visualize some of these categories qualitatively in Figure 8 with the temp oral parsing of ev aluation videos as w ell as the ground truth parsing. T o visualize the con ten t of each activity step, we displa y key-frames from different videos. W e also train a 3rd order Marko v language mo del(Shannon 2001) by using the subtitles. Moreo v er, w e generate a caption for eac h activit y step b y sampling this mo del conditioned on the θ l k . W e explain the details of this pro cess in the app endix. As shown in the Figures 8a & 8b, resulting steps are seman tically meaningful. Moreov er, the language cap- tions are also quite informative hence we can conclude that there is enough language context within the sub- titles in order to detect activities. On the other hand, some of the activity steps alw a ys occur together and our algorithm merges them into a single step while pro- moting sparsit y . 7.3 Quan titative Results W e compare our algorithm with the follo wing baselines. Lo w-lev el features (LLF): In order to experiment the effect of learned atoms, we compare with lo w-lev el features. As features, we use the state-of-the-art Fisher v ector representation of HOG, HOF and MBH features (Jiang et al 2014). Single mo dality: T o exp eriment the effect of multi- mo dal approach, we compare with single modality ap- proac h by only using the atoms of a single mo dality . Hidden Marko v Mo del (HMM): T o experiment the effect of joint generaiv e mo del, we compare our algo- rithm with an HMM. W e use the Baum-W elc h (Rabiner 1989) with cross-v alidation. Kernel T emp oral Segmen tation(P otap o v et al 2014): Kernel T emp oral Segmentation (KTS) proposed b y P otap ov et al.(Potapov et al 2014) can detect the temp oral b oundaries of the even ts/activities in the video from a time series data without any sup ervision. It en- forces a lo cal similarit y of each resultant segment. Giv en parsing results and the ground truth, we ev al- uate both the quality of temp oral segmentation and the activit y step discov ery . W e base our ev aluation on t wo widely used metrics; in tersection o v er union ( I O U ) and mean av erage precision( mAP ). IOU measures the qualit y of temporal segmen tation and it is defined as; 1 N P N i =1 τ ? i ∩ τ 0 i τ ? i ∪ τ 0 i where N is the n um b er of segmen ts, τ ? i is ground truth segmen t and τ 0 i is the detected segmen t. mAP is defined p er activity step and can b e computed based on a precision-recall curve (Jiang et al 2014). In order to adopt these metrics in to unsup ervised set- ting, w e use cluster similarit y measure(csm)(Liao 2005) whic h enables us to use any metric in unsup ervised setting. It c ho oses a matching of ground truth lab els with predicted lab els b y searching o v er all matching and c ho osing the ones giving highest score. W e use mAP csm and I O U csm as ev aluation metrics. 10 Sener et al. A c cur acy of the temp or al p arsing. W e compute, and plot in Figure9, the I O U cms v alues for all comp et- ing algorithms and all categories. W e also av erage ov er the categories and summarize the results in the T able 2. As the Figure 9 and T able 2 suggests, proposed metho d consisten tly outperforms the comp eting algorithms and its v ariations. One in teresting observ ation is the imp or- tance of both mo dalities as a result of dramatic differ- ence b etw een the accuracy of our metho d and its single mo dal v ersions. Moreo ver, the difference betw een our method and HMM is also significan t. W e b eliev e this is due to the ill-p osed definition of activities in HMM since the gran- ularit y of the activity steps is sub jective. On the other hand, our method starts with the well-defined definition of finding set of steps whic h generate the entire collec- tion. Hence, our algorithm do not suffer from granular- it y problem. Coher ency and ac cur acy of activity step disc ov- ery. Although I O U cms successfully measures the ac- curacy of the temp oral segmentation, it can not mea- sure the qualit y of disco v ered activities. In other w ords, w e also need to ev aluate the consistency of the activit y steps detected o ver multiple videos. F or this, w e use un- sup ervised v ersion of mean av erage precision mAP cms . W e plot the mAP cms v alues per category in Figure 10 and their av erage o ver categories in T able 2. As the Fig- ure 10 and the T able 2 suggests, our proposed metho d outp erforms all competing algorithms. One interesting observ ation is the significant difference b et w een seman- tic and low-lev el features. Hence, the mid-level features are k ey for linking multiple videos. Semantics of activity steps. In order to ev aluate the role of semantics, w e p erformed a sub jective anal- ysis. W e concatenated the activity step lab els in the groun t-truth into a lab el collection. Then, we ask non- exp ert users to choos e a label for each discov ered ac- tivit y for eac h algorithm. In other w ords, we replaced the maximization step with sub jective lab els. W e de- signed our exp erimen ts in a wa y that each clip receiv ed annotations from 5 different users. W e randomized the ordering of videos and algorithms during the sub jective ev aluation. Using the lab els provided b y sub jects, we compute the mean a verage precision ( mAP sem ). Both mAP cms and mAP sem metrics suggest that our metho d consistently outperforms the comp eting ones. There is only one recipe in whic h our method is outp er- formed by our based line of no visual information. This is mostly b ecause of the specific nature of the recip e How to tie a tie? . In such videos the notion of ob ject is not useful since all videos use a single ob ject -tie-. T able 3: Semantic mean-a verage-precision mAP sem . The results suggest that our algorithm outp erforms all baselines. The results also suggest that b oth of the mo dalities are required for accurate parsing for video collections. HMM HMM Ours Ours Ours Our w/ LLF w/Sem w/ LLF w/o Vis w/o Lang full mAP sem 6.44 24.83 7.28 28.93 14.83 39.01 The imp ortanc e of e ach mo dality. As shown in Figure 9 and 10, performance significantly drops when an y of the mo dalities is ignored consistently in all cate- gories. Hence, the joint usage is necessary . One in terest- ing observ ation is the fact that using only language in- formation p erformed slightly b etter than using only vi- sual information. W e b elieve this is due to the less intra- class v ariance in the language modality (i.e. p eople use same w ords for same activities). Ho w ev er, it lac ks many details(less complete) and more noisy than visual infor- mation. Hence these results v alidate the complementary nature of language and vision. Gener alization to generic structur e d vide os. W e exp erimen t the applicability of our method b eyond Ho w- T o videos b y ev aluating it on non-Ho w-T o categories. In Figure 11, we visualize the results for the videos re- triev ed using the query “T rav el San F rancisco”. The resulting clusters follo w semantically meaningful activ- ities and landmarks and show the applicability of our metho d b eyond How-T o queries. It is interesting to note that Chinatown and Clement St ended up in the same cluster. Considering the fact that Clement St is known for its Chinese foo d, this suggests that the discov ered clusters are seman tically meaningful. Noise in the subtitles. W e exp erimen t and analyze the robustness to noise in subtitles. Handling noisy sub- titles is an imp ortan t requirement since the scale of large-video collections makes it in tractable to transcrib e all instructional videos. One study suggest that it w ould tak e 374k human-y ear effort to transcrib e all y outub e videos. Hence, we exp ect to hav e combination of au- tomatic sp eech recognition(ASR) generated subtitles with user uploaded ones as an input to an y unsup er- vised parsing algorithm. W e study the effect of noise, introduced by ASR, b y ev aluating our algorithm on three different video cor- pus. First, w e only use the videos with user uploaded subtitles. Second, we only use the videos with ASR gen- erated subtitles. Third, w e use the entire dataset as Unsup ervised Semantic Action Discov ery from Video Collections 11 T able 2: Average of I O U cms and mAP cms o v er recip es. The results suggest that our algorithm outp erforms all baselines. The results also suggest that b oth of the mo dalities as well as semantic representations of visual information are all required for successful parsing of video collections. KTS (Potapov et al 2014) KTS(Potapov et al 2014) HMM HMM Ours Ours Ours Our w/ LLF w/ Sem w/ LLF w/Sem w/ LLF w/o Vis w/o Lang full I O U cms 16.80 28.01 30.84 37.69 33.16 36.50 29.91 52.36 mAP cms n/a n/a 9.35 32.30 11.33 30.50 19.50 44.09 union of first t w o. The results are summarized in T a- ble 4. Results indicates that noise-free subtitle improv es the accuracy as exp ected. Moreov er, the difference b e- t ween the results obtained with full corpus and user uploaded subtitles corpus is very small when compared with ASR only corpus. Hence, our algorithm can fuse information from noisy and noise-free examples in order to comp ensate for errors in the ASR. T able 4: Average of I O U cms and mAP cms o v er recip es with and without user uploaded subtitles. The results sho w that noise in the subtitles has an effect in the parsing accuracy . The results also indicate that our algorithm sho ws robustness to the noise since our accuracy results are comparable to the v ersion using only user uploaded subtitles. I O U cms mAP cms mAP sem ASR only 47 . 61 39 . 13 33 . 27 User uploaded only 54 . 63 46 . 21 42 . 32 Com bination 52 . 36 44 . 09 39 . 01 8 Grounding in to Rob otic Instructions In this section, w e demonstrate how we can apply our algorithm to the task of grounding recip e steps into rob otic actions. One of the most imp ortant applications of our algo- rithms is in rob otics. In future, rob ots will need to p er- form man y tasks up on user’s requests. W e en vision that the rob ots can use our video parser to first do wnload a large video collection for a task and then parse it. F or example, if a user asks to the robot Ple ase make r amen. , the robot can do wnload all videos returned from the query How to make a r amen. . Rob ot can further parse the scene using any of the av ailable R GB-D/RGB/P oin t Cloud segmentation algorithms (Koppula et al 2011; Ren et al 2012; Armeni et al 2016). Rob ot can use the resulting segmen tation in order to find the most sim- ilar recip e simply using the ob ject categories that we output. In order to demonstrate this application, w e use a state of the art language grounding algorithm (Misra et al 2014, 2015) whic h conv erts the generated descrip- tions in to rob ot actions based on the en vironment. T ell Me Da v e algorithm of Misra et al (Misra et al 2014, 2015) uses a semantic sim ulator which enco des the com- mon sense kno wledge about the physical w orld. It tak es the tuple of language, instructions and the environmen t as an input and outputs a series of robot actions to per- form the task. In order to learn the transformation, it uses a large-scale game log of language instruction, en- vironmen t and rob ot action tuples, and models them in terms of a graphical mo del. The en vironment is defined in terms of ob jects and their 3D p ositions, language is series of free-form English sen tences describing eac h step and actions are lo w-level rob ot commands. In our experimental setup, we c ho ose tw o basic ac- tivites that T ell Me Dav e can simulate; namely , How to make a r amen? and How to make an affo gato? . W e also c hose a random environmen t for eac h query from T ell Me Da v e environmen t dataset. W e directly fee d afore- men tioned how-to queries into Y ouT ub e and parse the resulting video collections. The resulting storylines are visualized in Figure 12. In order to complete the loop un til lo w-lev el rob ot commands, we then man ually lab elled the ob ject cat- egories our algorithm disco v ered. F or example, if the category we disco vered is mostly images of eggs, w e la- b elled this category as e gg . 2 Using these lab els, our algorithm chose the video whose ob jects is a subset to the environmen t our rob ot lives in to make sure all ob- jects of the recipe are accessible by the robot. Finally , w e feed the environmen t and generated captions into the T ell Me Dav e algorithm to obtain the ph ysical plan rob ot needs to execute to p erform eac h of the activ- it y . W e visualize eac h plan and the simulation in the Figure 12. 2 This step can b e automated using any ob ject recognition algorithm (Russako vsky et al 2015). 12 Sener et al. Our results are sho wn in the Figure 12, demonstrat- ing that our approach can b e used for rob otics ap- plications with limited supervision. There were some errors in translation of video storyline steps to actual grounded steps. Example errors include turning on b oth of the knobs of the stov e other than the single one. How- ev er, the resulting plans were still feasible in a w a y they can accomplish the required high-lev el task. While a larger analysis and rob otic exp eriments are outside the scope of this paper, with this demonstration w e b eliev e that our proposed metho d shows a feasible direction for rob otics. 9 Conclusions In this paper, w e captured the underlying structure of h uman communication b y jointly considering visual and language cues. W e exp erimen tally v alidate that given a large-video collection having subtitles, it is possible to disco ver activities without any supervision o v er activ- ities or ob jects. Exp erimental ev aluation also suggests the av ailable noisy and incomplete information is p ow- erful enough to not only discov er activities but also de- scrib e them. W e also demonstrated that the resulting disco vered recip es are useful in rob otics scenarios. So; “is it p ossible to understand large-video col- lections without any supervision?”. Given video and sp eec h information, the storylines we generate success- fully summarize the large video collection. This com- pact represen tation of an h undreds of videos is expected to b e useful in designing user interfaces whic h users in- teract with instructional videos. W e believe this is an imp ortan t step in the direction of future video w eb- pages. Y et another v ery important question is “can ma- c hines understand large-video collections?”. Clearly , we needed a small amoun t of man ual information and ev en used a method whic h is trained with h uman sup ervision in our rob otic demonstration. Hence, it is to o early to claim a success for machines w atc hing large-collection of videos. On the other hand, the results are very promis- ing and we b eliev e algorithms which can conv ert a free- form input query into rob ot tra jectories are a p ossible in near future. W e also b elieve our algorithm is an im- p ortan t step in this ambitious target. A Generation of Language Description In this section, w e explain ho w w e generated the text descrip- tion for the activity steps w e discov ered. W e included these descriptions in Figure 8, 11 & 12 as w ell as in the supplemen- tary videos. In order to generate the descriptions, we simply used a Mark ov text generator. W e collected all subtitles of all videos w e included in our dataset. After com bining them, w e trained a 3 rd order Mark o v model by using the subtitles we do wn- loaded. Main purpose of this training is learning the context dep enden t language mo del. Although this step can be ac- complished by v arious of metho ds in the NLP literature, we choose Mark ov language mo del b ecause of its simplicity . In- deed, this mo del is learned purely for visualization purp oses and neither the activity step disco very nor the parsing algo- rithm uses this mo del. After the model is learned, w e need to generate a text description for eac h discov ered activity . Since each disco v- ered activity is represented as Bernoulli random v ariable, we ha ve likelihoo d for each language atom. Our description gen- eration strategy is sampling a large collection of descriptions and ranking them for their closeness to the discov ered activ- ities. W e compute this closeness with the parameters of the Bernoulli random v ariable. F ormally , given large-set of sam- pled descriptions { S i } i ∈ [1 ,K ] , w e rank them using the w eights of the Bernoulli random v ariable as; r i = P j h S j i = w j i θ j P j h S j i = w j i 1 Here, [ · ] is an indicator function, S j i is the j th wo rd of i th description, w j is the j th wo rd and θ j is the j th entry of the activity description. W e simply choose the description having largest rank. Unsup ervised Semantic Action Discov ery from Video Collections 13 …. Input Query: “How to make an ommelette?” First-K videos with their subtitles. Multi-Modal Representation (Section 4, 5) Language Atoms Visual Atoms M ulti-Video Co-Clustering Language Atoms Language Atoms Mu lti-Video Co-Clustering Multi-Video Co-Clustering Visual Mean Language Mean Visual Mean Language Mean Unsupervised Activity Discovery (Section 6) V isual Mean Language Mean Fig. 2: Summary of our metho d. W e start with a single natural language query like how to make an omelette and then we crawl the top K videos returned by this query from Y ouT ub e. W e learn a multi-modal dictionary comp osed of salient words and ob ject prop osals. Rest of the algorithm represents frames and activities in terms of the learned dictionary . F or example, in the b ottom figure, colors represent such atoms and b oth activit y descriptions Θ and frame representations y t are defined in terms of these atoms. (see Fig 1 for output) Fig. 5: Randomly selected images of four randomly selected clusters learned for How to har d b oil an e gg? Please note that the ob jects in the same cluster is not only coming from a single video but discov ered o ver multiple videos. Hence, this stage helps in linking videos with each other. Resulting clusters are seman tically accurate since they t ypically b elong to a single semantic concept like water filling the p ot. 14 Sener et al. (a) How to make an omelet? (b) How to make a milkshake? Fig. 8: Video storylines for queries How to make an omelet? and How to make a milkshake? T emp oral segmentation of the videos and ground truth segmentation. W e also color co de the activity steps we disco vered and visualize their key-frames and the automatically generated captions. Best viewe d in c olor. mIOU max 60 40 20 0 Unclog a Bathtub Drain Remove Gum from Clothes Make Pancakes Make Ice Cream Cook an Omelet Broil Steak Make Scrambled Eggs Clean a Cof fee maker T ie a T ie Make Beef Jerky Make a Milkshake Make Y ogurt Hard Boil an Egg Bake Chicken Breast Cook Steak Make Jello Shots Bake Boneless Chicken Ours Ours w/o V is Ours w/o Lang Ours with LLF HMM HMM with LLF KTS [47] KTS with LLF Fig. 9: I O U max v alues for all categories, for all comp eting algorithms. Results suggest that our algorithm is outp erforming all other baselines. It also suggests that the visual information is contributing more than language for temp oral in tersection ov er union. This is rather exp ected since p eople tend to talk ab out things they did and will do; hence, language is exp ected to hav e low lo calization accuracy . Unsup ervised Semantic Action Discov ery from Video Collections 15 mAP max 60 40 20 0 Unclog a Bathtub Drain Remove Gum from Clothes Make Pancakes Make Ice Cream Cook an Omelet Broil Steak Make Scrambled Eggs Clean a Cof fee maker T ie a T ie Make Beef Jerky Make a Milkshake Make Y ogurt Hard Boil an Egg Bake Chicken Breast Cook Steak Make Jello Shots Bake Boneless Chicken Ours Ours w/o V is Ours w/o Lang Ours with LLF HMM HMM with LLF Fig. 10: AP max v alues for all categories, for all comp eting algorithms. Results suggest that our algorithm is outp erforming all other baselines in most of the cases. The failure cases included recip es like How to tie a tie? whic h is rather exp ected since a video ab out t ying a tie only includes a tie in the scene which is not informativ e enough to distinguish steps. The results also suggest that language contributes more than visual information for av erage precision, which is also rather exp ected since same step has very high visual v ariance and generally referred b y using same or similar words. Fig. 11: Qualitativ e results for parsing ‘T rav el San F rancisco’ category . The results suggest that our algorithm can generalize categories b ey ond instructional videos. F or example, tra vel videos can also b e parsed using our metho d. 16 Sener et al. Pour two cups of water Heat-up the Ramen on Stove Add the flavor packet(s) from the Ramen seem more like a meal, add chile flakes, Stir W ell moveto Ramen_1 gr asp Ramen_1 moveto S to veF ire_1 turn StoveKnob_1 turn StoveKnob_4 put Ramen_1 In Kettle_1 keep Kettle_1 On S to veF ire_1 gr asp Scoop scoop F rom IceCr eamBox scoop T o Bowl Input Query: Parsing Result Grounding to r obot Actions with Simulations Input Query: Parsing Result Grounding to r obot Actions with Robot Demonstration Place glass in the freezer Scoop some ice cream Pour in one double shot of espross right over ice cream How to make ramen? How to make affogato? Fig. 12: Demonstration on rob otic grounding. W e considered t wo queries by the user: How to make a r amen? and How to make an affo gato? . Given the result b y our video parsing system, w e find the grounded instruction for eac h recip e step. T op ro w sho ws the results as a storyline from our video parser, and the b ottom ro w shows the rob otic simulator and an actual rob otic demonstration resp ectively . During this demonstration, we man ually lab el each ob ject category and fully automate rest of the task. In order to simulate/and implement the resulting steps on rob ots, w e simply used the publicly av ailable sim ulator/source co de distributed by T ell Me Da ve (Misra et al 2014, 2015) Unsup ervised Semantic Action Discov ery from Video Collections 17 Ac kno wledgmen ts The authors would like to thank Jay Hack for dev eloping Mo dalDB and Bart Selman and Ashesh Jain for useful com- ments and discussions. References (2015) Wikiho w-ho w to do anything. http://www.wikihow.com Armeni I, Sener O, Zamir A, Sav arese S (2016) 3d semantic parsing of large-scale indo or spaces. In: CVPR Barbu A, Bridge A, Burchill Z, Coroian D, Dickinson S, Fi- dler S, Michaux A, Mussman S, Naray anaswam y S, Salvi D, et al (2012) Video in sentences out. arXiv preprint arXiv:12042742 Barnard K, Duygulu P , F orsyth D, De F reitas N, Blei DM, Jordan MI (2003) Matc hing w ords and pictures. JMLR 3:1107–1135 Beetz M, Klank U, Kresse I, Maldonado A, Mosenlechner L, P angercic D, Ruhr T, T enorth M (2011) Robotic ro om- mates making pancakes. In: Humanoids Bo janowski P , La jugie R, Bach F, Laptev I, Ponce J, Schmid C, Sivic J (2014) W eakly sup ervised action lab eling in videos under ordering constraints. In: ECCV Bollini M, Barry J, Rus D (2011) Bakebot: Baking co okies with the pr2. In: The PR2 W orkshop, IROS Carreira J, Sminchisescu C (2010) Constrained parametric min-cuts for automatic ob ject segmentation. In: CVPR Das P , Xu C, Do ell RF, Corso JJ (2013) A thousand frames in just a few words: Lingual description of videos through latent topics and sparse ob ject stitching. In: CVPR Duchenne O, Laptev I, Sivic J, Bash F, Ponce J (2009) Au- tomatic annotation of human actions in video. In: ICCV Efros AA, Berg AC, Mori G, Malik J (2003) Recognizing action at a distance. In: ICCV F arhadi A, Hejrati M, Sadeghi MA, Y oung P , Rashtc hian C, Ho c kenmaier J, F orsyth D (2010) Every picture tells a story: Generating sentences from images Fidler S, Sharma A, Urtasun R (2013) A sentence is worth a thousand pixels. In: CVPR, IEEE F ox E, Hughes M, Sudderth E, Jordan M (2014) Joint mo d- eling of m ultiple related time series via the b eta pro cess with application to motion capture segmentation. Annals of Applied Statistics 8(3):1281–1313 Google (2016) Go ogle trends. https://www.google.com/ trends/ , accessed: 2016-04-23 Grabler F, Agra wala M, Li W, Dontc hev a M, Igarashi T (2009) Generating photo manipulation tutorials by demonstration. TOG 28(3):66 Griffiths T, Ghahramani Z (2005) Infinite laten t feature mod- els and the indian buffet pro cess. Gatsby Unit Gupta A, Sriniv asan P , Shi J, Davis LS (2009) Understanding videos, constructing plots learning a visually grounded storyline mo del from annotated videos. In: CVPR Hoai M, Lan ZZ, De la T orre F (2011) Join t segmentation and classification of human actions in video. In: CVPR Hughes MC, Sudderth EB (2012) Nonparametric disco v ery of activity patterns from video collections. In: CVPR W ork- shop, pp 25–32 Jain M, Jegou H, Bouthemy P (2013) Better exploiting mo- tion for b etter action recognition. In: CVPR Jain M, v an Gemert J, Sno ek CG (2014) Univ ersit y of am- sterdam at thumos challenge 2014. ECCV In ternational W orkshop and Comp etition on Action Recognition with a Large Number of Classes Jiang YG, Liu J, Roshan Zamir A, T o derici G, Laptev I, Shah M, Sukthank ar R (2014) THUMOS c hallenge: Ac- tion recognition with a large num ber of classes. http: //crcv.ucf.edu/THUMOS14/ Karpath y A, F ei-F ei L (2014) Deep Visual-Semantic Align- ments for Generating Image Descriptions. ArXiv e-prints 1412.2306 Khosla A, Hamid R, Lin CJ, Sundaresan N (2013) Large-scale video summarization using web-image priors. In: CVPR Kim G, Xing EP (2014) Reconstructing storyline graphs for image recommendation from web communit y photos. In: CVPR Kim G, Sigal L, Xing EP (2014) Joint summarization of large- scale collections of web images and videos for storyline reconstruction. In: CVPR Kiros R, Salakh utdino v R, Zemel R (2014) Multimo dal neural language mo dels. In: ICML Kong C, Lin D, Bansal M, Urtasun R, Fidler S (2014) What are y ou talking ab out? text-to-image coreference. In: CVPR Koppula HS, Anand A, Joac hims T, Saxena A (2011) Semantic labeling of 3d p oint clouds for indo or scenes. In: Shaw e-T aylor J, Zemel RS, Bartlett PL, Pereira F, W einberger KQ (eds) Adv ances in Neural Infor- mation Pro cessing Systems 24, Curran Associates, Inc., pp 244–252, URL http://papers.nips.cc/paper/ 4226- semantic- labeling- of- 3d- point- clouds- for- indoor- scenes. pdf Krizhevsky A, Sutskev er I, Hinton GE (2012) Imagenet clas- sification with deep conv olutional neural netw orks. In: NIPS Kuehne H, Jh uang H, Garrote E, P oggio T, Serre T (2011) Hmdb: a large video database for human motion recogni- tion. In: ICCV Lan T, Chen L, Deng Z, Zhou GT, Mori G (2014a) Learn- ing action primitiv es for m ulti-lev el video ev ent under- standing. In: W orkshop on Visual Surveillance and Re- Identification Lan T, Chen TC, Sa v arese S (2014b) A hierarc hical represen- tation for future action prediction. In: ECCV Laptev I, P´ erez P (2007) Retrieving actions in mo vies. In: ICCV Laptev I, Marszalek M, Sc hmid C, Rozenfeld B (2008) Learn- ing realistic human actions from movies. In: CVPR Lee YJ, Kim J, Grauman K (2011) Key-segments for video ob ject segmentation. In: ICCV Lee YJ, Ghosh J, Grauman K (2012) Discov ering imp ortant p eople and ob jects for ego centric video summarization. In: CVPR Liao TW (2005) Clustering of time series dataa survey . Pat- tern recognition 38(11):1857–1874 Lu Z, Grauman K (2013) Story-driven summarization for ego- centric video. In: CVPR Malmaud J, W agner EJ, Chang N, Murphy K (2014) Co oking with semantics. ACL Malmaud J, Huang J, Ratho d V, Johnston N, Rabinovic h A, Murph y K (2015) What’s Co okin’ ? Interpreting Cook- ing Videos using T ext, Sp eec h and Vision. ArXiv e-prints 1503.01558 Misra DK, Sung J, Lee K, Saxena A (2014) T ell me dav e: Con text-sensitive grounding of natural language to mo- bile manipulation instructions. In: In RSS, Citeseer Misra DK, T ao K, Liang P , Saxena A (2015) Environmen t- driv en lexicon induction for high-level instructions. ACL Mot wani TS, Mo oney RJ (2012) Improving video activity recognition using ob ject recognition and text mining. In: 18 Sener et al. ECAI Niebles JC, Chen CW, F ei-F ei L (2010) Mo deling temporal structure of decomposable motion segments for activit y classification. In: ECCV Olson E, W alter M, T eller SJ, Leonard JJ (2005) Single- cluster sp ectral graph partitioning for robotics applica- tions. In: RSS Oneata D, V erb eek J, Sc hmid C (2014) The lear submission at th umos 2014. ECCV In ternational W orkshop and Com- p etition on Action Recognition with a Large Number of Classes Ordonez V, Kulk arni G, Berg TL (2011) Im2text: Describing images using 1 million captioned photographs. In: NIPS P erona P , F reeman W (1998) A factorization approac h to grouping. In: ECCV Pirsia v ash H, Ramanan D (2014) Parsing videos of actions with segmental grammars. In: CVPR P otap ov D, Douze M, Harc haoui Z, Sc hmid C (2014) Category-specific video summarization. In: ECCV Rabiner LR (1989) A tutorial on hidden marko v mo dels and selected applications in sp eech recognition. In: PRO- CEEDINGS OF THE IEEE, pp 257–286 Ren X, Bo L, F o x D (2012) Rgb-(d) scene lab eling: F eatures and algorithms. In: CVPR Rui Y, Gupta A, Acero A (2000) Automatically extracting highlights for tv baseball programs. In: ACM MM Russak ovsky O, Deng J, Su H, Krause J, Satheesh S, Ma S, Huang Z, Karpath y A, Khosla A, Bernstein M, et al (2015) Imagenet large scale visual recognition challenge. Interna tional Journal of Computer Vision 115(3):211–252 Ry o o M, Aggarwal J (2009) Spatio-temporal relationship match : Video structure comparison for recognition of complex human activities. In: ICCV Shannon CE (2001) A mathematical theory of comm unica- tion. ACM SIGMOBILE Mobile Computing and Com- mun ications Review 5(1):3–55 Socher R, F ei-F ei L (2010) Connecting mo dalities: Semi- supe rvised segmentation and annotation of images using unaligned text corp ora. In: CVPR, pp 966–973 Socher R, Karpathy A, Le QV, Manning CD, Ng A Y (2014) Grounded comp ositional semantics for finding and de- scribing images with sentences. T ACL 2:207–218 Soomro K, Roshan Zamir A, Shah M (2012) UCF101: A dataset of 101 human actions classes from videos in the wild. In: CRCV-TR-12-01 Sun C, Nev atia R (2014) Disco v er: Discov ering imp ortant seg- ments for classification of video ev en ts and recoun ting. In: CVPR T enorth M, Nyga D, Beetz M (2010) Understanding and exe- cuting instructions for everyda y manipulation tasks from the world wide web. In: ICRA T ruong BT, V enk atesh S (2007) Video abstraction: A system- atic review and classification. ACM TOMM 3(1):3 Wikip edia (2004) Wikip edia, the free encyclop edia. URL http://en.wikipedia.org/ , [Online; accessed 22-April- 2016] Y ao B, F ei-F ei L (2010) Mo deling m utual con text of ob ject and human pose in human-ob ject interaction activities. In: CVPR Y u H, Siskind JM (2013) Grounded language learning from video describ ed with sentences. In: ACL Zitnick CL, P arikh D (2013) Bringing semantics in to fo cus using visual abstraction. In: CVPR Zitnick CL, Parikh D, V anderwende L (2013) Learning the visual interpretation of sentences. In: CVPR

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment