Common Variable Learning and Invariant Representation Learning using Siamese Neural Networks

We consider the statistical problem of learning a common source of variability in data that are synchronously captured by multiple sensors, and demonstrate that Siamese neural networks can be naturally applied to this problem. This approach is particularly useful in exploratory, data-driven applications, where neither a model nor label information is available. In recent years, many researchers have successfully applied Siamese neural networks to obtain an embedding of data that corresponds to a “semantic similarity”. We present an interpretation of this “semantic similarity” as learning of equivalence classes. We discuss properties of the embedding obtained by Siamese networks and provide empirical results that demonstrate the ability of Siamese networks to learn common variability.

💡 Research Summary

The paper tackles the problem of extracting a common latent variable from data streams captured simultaneously by multiple sensors, without any supervision, model knowledge, or class labels. Traditional supervised regression or model‑based signal processing requires either ground‑truth labels or an explicit generative model, which are often unavailable in exploratory data analysis. The authors propose to exploit “coincidence”: measurements taken at the same time by different sensors share the same value of the hidden common variable X, while each sensor also records its own idiosyncratic nuisance variables (Y for sensor 1, Z for sensor 2).

Mathematically, the hidden triplet (X, Y, Z) follows a joint distribution π(X, Y, Z), with Y and Z conditionally independent given X. Two sensors apply bi-Lipschitz functions g₁ and g₂ to produce observable measurements S¹ = g₁(X, Y) and S² = g₂(X, Z). The goal is to learn functions f₁ and f₂ (implemented by neural networks) such that f₁(S¹) = f₂(S²) whenever the underlying X is the same, while ensuring that different X values map to distinct embeddings. This requirement defines an equivalence relation on the observation space, and the desired embedding space is the quotient space of that relation: a representation of “semantic similarity” that actually encodes the common variable.
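The measurement model above can be made concrete with a small simulation. The sketch below is an illustration only: the sensor maps `g1` and `g2` are hypothetical invertible linear maps (chosen because they are trivially bi-Lipschitz), the distributions of X, Y, Z are arbitrary, and `oracle_f1` / `oracle_f2` are hand-written stand-ins for the functions a network would have to learn. None of these choices come from the paper.

```python
import random

def g1(x, y):
    # Hypothetical sensor 1: invertible linear map of (X, Y), hence bi-Lipschitz.
    return (x + y, x - y)

def g2(x, z):
    # Hypothetical sensor 2: invertible linear map of (X, Z), hence bi-Lipschitz.
    return (2.0 * x + z, z)

def sample_coincident(n, seed=0):
    """Draw n hidden triplets and return (x, s1, s2) for each time point.

    Y and Z are drawn independently of each other and of X, which is one
    simple way to satisfy conditional independence given X (an assumption
    made for this example).
    """
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        x = rng.uniform(0.0, 1.0)   # common variable
        y = rng.gauss(0.0, 1.0)     # nuisance seen only by sensor 1
        z = rng.gauss(0.0, 1.0)     # nuisance seen only by sensor 2
        samples.append((x, g1(x, y), g2(x, z)))
    return samples

def oracle_f1(s1):
    # Hand-derived inverse for this toy g1: recovers X and discards Y.
    return 0.5 * (s1[0] + s1[1])

def oracle_f2(s2):
    # Hand-derived inverse for this toy g2: recovers X and discards Z.
    return 0.5 * (s2[0] - s2[1])

if __name__ == "__main__":
    for x, s1, s2 in sample_coincident(3):
        print(round(x, 4), round(oracle_f1(s1), 4), round(oracle_f2(s2), 4))
```

In this toy setting the two oracle maps agree on every coincident pair and differ whenever X differs, which is exactly the property required of f₁ and f₂. In practice g₁ and g₂ are unknown, which is why the embeddings have to be learned from data.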

To achieve this, the authors employ a Siamese neural network architecture consisting of two subnetworks N₁ and N₂ (one per sensor) feeding into a single comparison unit. Training data are constructed purely from coincidence: a set D₊ of positive pairs (S¹ᵢ, S²ᵢ) that were recorded simultaneously, and a set D₋ of negative pairs formed by randomly mixing measurements from different time points, thereby approximating pairs with different X values. The loss has a contrastive form: for positive pairs it encourages ‖f₁(S¹) − f₂(S²)‖² to be small, and for negative pairs it encourages that distance to be large. Each term is passed through a sigmoid so that its contribution is bounded, and an L₂ penalty on the network weights is added as regularization.
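The pair construction and the shape of the loss can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's exact objective: the summary does not give the precise formula, so the `margin` parameter, the way the sigmoid is applied, the averaging over pairs, and the `weight_decay` value are all hypothetical choices. The embeddings `f1` and `f2` are passed in as plain callables standing in for the subnetworks N₁ and N₂.

```python
import math
import random

def make_pairs(s1, s2, seed=0):
    """Build D+ (coincident pairs) and D- (randomly mismatched time indices)."""
    n = len(s1)
    if n != len(s2) or n < 2:
        raise ValueError("need two aligned sequences with at least 2 samples")
    rng = random.Random(seed)
    positives = [(s1[i], s2[i]) for i in range(n)]
    negatives = []
    for i in range(n):
        j = rng.randrange(n - 1)
        if j >= i:
            j += 1                  # guarantees j != i
        negatives.append((s1[i], s2[j]))
    return positives, negatives

def sq_dist(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))

def sigmoid(t):
    # Numerically stable logistic function.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def pair_loss(f1, f2, positives, negatives, weights,
              margin=1.0, weight_decay=1e-3):
    """Bounded contrastive-style loss plus L2 weight penalty (assumed form)."""
    # Positive pairs: small when the squared distance is below the margin.
    pos = sum(sigmoid(sq_dist(f1(a), f2(b)) - margin) for a, b in positives)
    # Negative pairs: small when the squared distance exceeds the margin.
    neg = sum(sigmoid(margin - sq_dist(f1(a), f2(b))) for a, b in negatives)
    reg = weight_decay * sum(w * w for w in weights)
    return (pos + neg) / (len(positives) + len(negatives)) + reg
```

Because every term is bounded between 0 and 1, a few badly mismatched pairs cannot dominate the objective. An embedding that collapses all inputs to a single point is also penalized through the negative pairs, which is what keeps different X values apart.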

Training proceeds by minimizing this loss over the parameters of N₁ and N₂. After convergence, N₁ and N₂ provide the desired mappings f₁ and f₂. Because the loss forces the embeddings of simultaneous measurements to coincide, the learned representation is invariant to the sensor‑specific nuisance variables Y and Z, while still being discriminative with respect to different values of X.

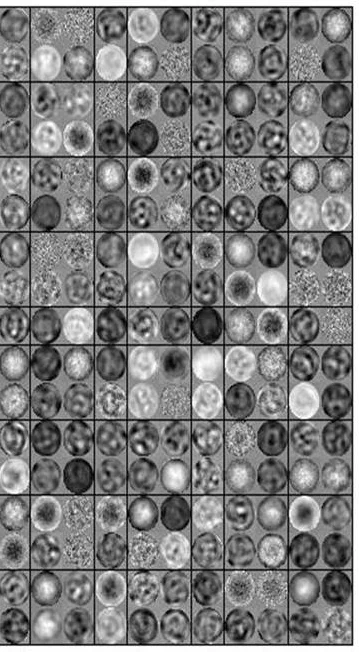

The paper validates the approach with two experimental settings. The first, a synthetic “toy” dataset, places three objects (Yoda, a bulldog, and a bunny) on rotating tables and captures simultaneous images with two cameras. The bulldog’s rotation angle X is the common hidden variable; Y and Z correspond to the rotations of Yoda and the bunny, which are visible only to one camera each. Using only the coincidence‑based positive and randomly generated negative pairs, the Siamese network learns a two‑dimensional embedding that varies smoothly with the bulldog’s angle while ignoring the other objects. The second experiment uses high‑dimensional synthetic data where X lies on a low‑dimensional manifold (e.g., a sphere) and Y, Z are high‑dimensional noise. Again, the network recovers an embedding that faithfully reflects the manifold structure of X, demonstrating robustness to complex sensor transformations.

Key contributions are: (1) framing common‑variable learning as a Siamese network problem driven solely by temporal coincidence; (2) providing a rigorous interpretation of “semantic similarity” as equivalence classes of the common variable, with the embedding representing the quotient space; (3) showing empirically that the method works without any label information or explicit model of the sensors.

Limitations include reliance on the assumption that randomly mixed negative pairs almost always involve different X values; if the underlying process changes slowly or sensor synchronization is imperfect, the negative set may contain many false negatives, degrading performance. Moreover, the theoretical guarantees depend on the bi‑Lipschitz property of g₁ and g₂; severe non‑linear sensor distortions could violate this condition.
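The false-negative concern can be quantified in simulations where the hidden variable is known. The helper below is a hypothetical diagnostic, not something described in the paper: it draws one random mismatched partner per time index and reports the fraction of such "negative" pairs whose X values are in fact within a tolerance `tol` of each other.

```python
import random

def false_negative_rate(xs, tol, seed=0):
    """Fraction of randomly mixed pairs (i, j), j != i, with |x_i - x_j| <= tol.

    xs  : known values of the common variable, one per time point
          (only available in simulation; an assumption of this diagnostic)
    tol : distance below which two X values are treated as "the same"
    """
    n = len(xs)
    if n < 2:
        raise ValueError("need at least 2 samples")
    rng = random.Random(seed)
    hits = 0
    for i in range(n):
        j = rng.randrange(n - 1)
        if j >= i:
            j += 1                  # guarantees j != i
        if abs(xs[i] - xs[j]) <= tol:
            hits += 1
    return hits / n
```

For example, if X takes only k equally likely discrete values, roughly 1/k of randomly mixed pairs share the same X and are therefore mislabeled as negatives. When X is continuous and well spread out, the rate is close to zero for small `tol`.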

Future work suggested by the authors involves more sophisticated negative‑pair generation (e.g., temporal windows to ensure distinct X), extending the framework to more than two sensors, and applying the method to real‑world multimodal data such as EEG/MEG recordings, multi‑camera video, or robotic sensor suites. Additionally, downstream tasks such as clustering, anomaly detection, or control could benefit from the invariant embeddings learned by this unsupervised Siamese approach.
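One way to read the temporal-window idea is to draw negative partners only from time points that are at least some minimum gap away. The sketch below is an assumption about how that could look, not the authors' method: it presumes samples are stored in time order and that X drifts slowly, so a larger time gap makes a distinct X more likely (though not guaranteed, for instance when X is periodic). The function name and the `min_gap` parameter are hypothetical.

```python
import random

def gapped_negative_indices(n, min_gap, seed=0):
    """Return (i, j) index pairs with |i - j| >= min_gap for each i in range(n)."""
    rng = random.Random(seed)
    pairs = []
    for i in range(n):
        # All time indices far enough from i to serve as a negative partner.
        candidates = [j for j in range(n) if abs(i - j) >= min_gap]
        if not candidates:
            raise ValueError("min_gap is too large for a sequence of length %d" % n)
        pairs.append((i, rng.choice(candidates)))
    return pairs
```

The candidate list is rebuilt for every index, which costs O(n²) overall. That is acceptable for a sketch, but a long recording would call for sampling an offset directly instead.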
