On-Average KL-Privacy and its equivalence to Generalization for Max-Entropy Mechanisms

We define On-Average KL-Privacy and present its properties and connections to differential privacy, generalization and information-theoretic quantities including max-information and mutual information. The new definition significantly weakens differe…

Authors: Yu-Xiang Wang, Jing Lei, Stephen E. Fienberg

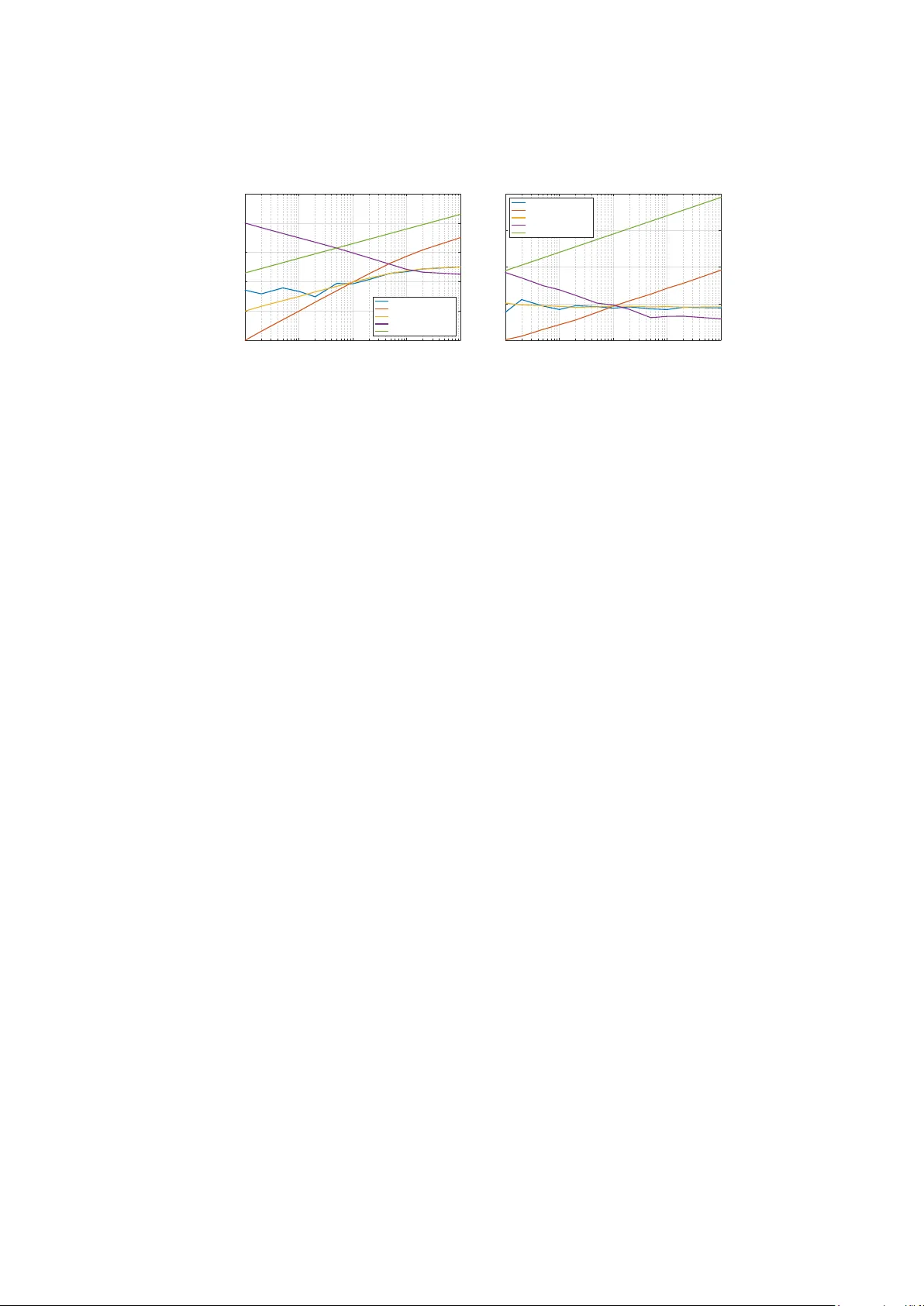

Yu-Xiang Wang (1,2), Jing Lei (2), and Stephen E. Fienberg (1,2)
(1) Machine Learning Department, (2) Department of Statistics, and the Heinz College, Carnegie Mellon University, Pittsburgh, PA 15213, USA

Abstract. We define On-Average KL-Privacy and present its properties and connections to differential privacy, generalization and information-theoretic quantities including max-information and mutual information. The new definition significantly weakens differential privacy, while preserving its minimalistic design features such as composition over small groups and multiple queries, as well as closedness to post-processing. Moreover, we show that On-Average KL-Privacy is equivalent to generalization for a large class of commonly-used tools in statistics and machine learning that sample from Gibbs distributions, a class of distributions that arises naturally from the maximum entropy principle. In addition, a byproduct of our analysis yields a lower bound for generalization error in terms of mutual information, which reveals an interesting interplay with known upper bounds that use the same quantity.

Keywords: differential privacy, generalization, stability, information theory, maximum entropy, statistical learning theory

1 Introduction

Increasing privacy concerns have become a major obstacle for collecting, analyzing and sharing data, as well as for communicating the results of a data analysis in sensitive domains. For example, the second Netflix Prize competition was canceled in response to a lawsuit and Federal Trade Commission privacy concerns, and the National Institutes of Health decided in August 2008 to remove aggregate Genome-Wide Association Studies (GWAS) data from the public web site after learning about a potential privacy risk.
These concerns are well-grounded in the context of the Big-Data era, as stories about privacy breaches from improperly handled data sets appear very regularly (e.g., medical records [3], Netflix [27], NYC Taxi [36]). These incidents highlight the need for formal methods that provably protect the privacy of individual-level data points while allowing database-level utility similar to that of the non-private counterpart.

There is a long history of attempts to address these problems and the risk-utility tradeoff in statistical agencies [9, 19, 8], but most of the methods developed do not provide clear and quantifiable privacy guarantees. Differential privacy [14, 10] succeeds in the first task. While it allows a clear quantification of the privacy loss, it provides a worst-case guarantee, and in practice it often requires adding noise of very large magnitude (if finite at all), resulting in unsatisfactory utility, cf. [32, 37, 16].

A growing literature focuses on weakening the notion of differential privacy to make it applicable and to obtain a more favorable privacy-utility trade-off. Popular attempts include $(\epsilon, \delta)$-approximate differential privacy [13], personalized differential privacy [15, 22], random differential privacy [17] and so on. Each has pros and cons and is useful in its specific context. There is a related literature addressing the folklore observation that "differential privacy implies generalization" [12, 18, 30, 11, 5, 35]. Implying generalization is a minimal property that we feel any notion of privacy should have. This brings us to the natural question:

– Is there a weak notion of privacy that is equivalent to generalization?

In this paper, we provide a partial answer to this question.
Specifically, we define On-Average Kullback-Leibler (KL)-Privacy and show that it characterizes On-Average Generalization¹ for algorithms that draw samples from an important class of maximum entropy/Gibbs distributions, i.e., distributions with probability/density proportional to $\exp(-\mathcal{L}(\text{Output}, \text{Data}))\,\pi(\text{Output})$ for a loss function $\mathcal{L}$ and a (possibly improper) prior distribution $\pi$. We argue that this is a fundamental class of algorithms that covers a large portion of the tools in modern data analysis, including Bayesian inference, empirical risk minimization in statistical learning, and the private release of database queries through Laplace and Gaussian noise adding. From here onwards, we will refer to this class of distributions as "MaxEnt distributions" and to an algorithm that outputs a sample from a MaxEnt distribution as "posterior sampling".

Related work: This work is closely related to the various notions of algorithmic stability in learning theory [21, 7, 26, 29]. In fact, we can treat differential privacy as a very strong notion of stability. Thus On-Average KL-privacy may well be called On-Average KL-stability. Stability implies generalization in many different settings, but it is often only a sufficient condition. Exceptions include [26, 29], who show that notions of stability are also necessary for the consistency of empirical risk minimization and the distribution-free learnability of any algorithm. Our specific stability definition, its equivalence to generalization, and its properties as a privacy measure have not been studied before. KL-Privacy first appears in [4] and is shown to imply generalization in [5]. On-Average KL-privacy further weakens KL-privacy.

¹ We will formally define these quantities.
A high-level connection can be made to leave-one-out cross validation, which is often used as a (slightly biased) empirical measure of generalization, e.g., see [25].

2 Symbols and Notation

We use the standard statistical learning terminology, where $z \in \mathcal{Z}$ is a data point, $h \in \mathcal{H}$ is a hypothesis and $\ell: \mathcal{Z} \times \mathcal{H} \to \mathbb{R}$ is the loss function. One can think of the negative loss as a measure of the utility of $h$ on data point $z$. Lastly, $\mathcal{A}$ is a possibly randomized algorithm that maps a data set $Z \in \mathcal{Z}^n$ to some hypothesis $h \in \mathcal{H}$. For example, if $\mathcal{A}$ is empirical risk minimization (ERM), then $\mathcal{A}$ chooses $h^* = \operatorname{argmin}_{h \in \mathcal{H}} \sum_{i=1}^n \ell(z_i, h)$.

Many data analysis tasks can be cast in this form. In linear regression, $z_i = (x_i, y_i) \in \mathbb{R}^d \times \mathbb{R}$, $h$ is the coefficient vector and $\ell$ is just $(y_i - x_i^T h)^2$. In k-means clustering, $z \in \mathbb{R}^d$ is just the feature vector, $h = \{h_1, \dots, h_k\}$ is the collection of $k$ cluster centers and $\ell(z, h) = \min_j \|z - h_j\|^2$. Simple calculations of statistical quantities can often be represented in this form too. For example, calculating the mean is equivalent to linear regression with identity design, and calculating the median is the same as ERM with loss function $|z - h|$.

We also consider cases where the loss function is defined over the whole data set $Z \in \mathcal{Z}$. In this case the loss is evaluated on the whole data set by the structured loss $\mathcal{L}: \mathcal{H} \times \mathcal{Z} \to \mathbb{R}$. We do not require $Z$ to be drawn from some product distribution, but rather from any distribution $\mathcal{D}$. Generally speaking, $Z$ could be a string of text, a news article, a sequence of transactions of a credit card user, or simply the entire data set of $n$ iid samples. We will revisit this generalization with more concrete examples later.
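The sketch below is a minimal illustration of the ERM formulation above. It codes the per-example losses for linear regression, k-means and the median in Python with NumPy, and runs brute-force ERM over a finite grid of scalar hypotheses. The function names and the grid search are illustrative assumptions, not the paper's code. The grid is used only so that the median and mean examples can be checked without an optimizer.

```python
import numpy as np

def squared_loss(z, h):
    """Linear regression: z = (x, y) with x in R^d, loss (y - x^T h)^2."""
    x, y = z
    return (y - x @ h) ** 2

def kmeans_loss(z, h):
    """k-means: h is a (k, d) array of centers, loss min_j ||z - h_j||^2."""
    return np.min(np.sum((h - z) ** 2, axis=1))

def abs_loss(z, h):
    """Median as ERM: loss |z - h|."""
    return abs(z - h)

def empirical_risk(loss, data, h):
    """(1/n) * sum_i loss(z_i, h)."""
    return np.mean([loss(z, h) for z in data])

def erm_on_grid(loss, data, grid):
    """Brute-force ERM over a finite grid of candidate scalar hypotheses."""
    risks = [empirical_risk(loss, data, h) for h in grid]
    return grid[int(np.argmin(risks))]
```

For instance, with data $\{3, -1, 7, 2, 10\}$ and a grid of step 0.01, ERM with $|z - h|$ should return the median 3. ERM with $(z - h)^2$ should return the mean 4.2, up to grid resolution.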
However, we would like to point out that this structured-loss setting is equivalent to the above case when we have only one (much more complicated) data point and the algorithm $\mathcal{A}$ is applied to only one sample.

3 Main Results

We first describe differential privacy, after which it will become intuitive where KL-privacy and On-Average KL-privacy come from. Roughly speaking, differential privacy requires that for any datasets $Z$ and $Z'$ that differ by only one data point, the algorithms $\mathcal{A}(Z)$ and $\mathcal{A}(Z')$ sample the output $h$ from two distributions that are very similar to each other. Define the "Hamming distance"

$$d(Z, Z') := \#\{i = 1, \dots, n : z_i \neq z'_i\}. \qquad (1)$$

Definition 1 ($\epsilon$-Differential Privacy [10]). We call an algorithm $\mathcal{A}$ $\epsilon$-differentially private (or in short $\epsilon$-DP) if
$$\mathbb{P}(\mathcal{A}(Z) \in H) \le \exp(\epsilon)\, \mathbb{P}(\mathcal{A}(Z') \in H)$$
for all $Z, Z'$ obeying $d(Z, Z') = 1$ and any measurable subset $H \subseteq \mathcal{H}$.

More transparently, assume the range of $\mathcal{A}$ is the whole space $\mathcal{H}$, and that $\mathcal{A}(Z)$ defines a density on $\mathcal{H}$ with respect to a base measure on $\mathcal{H}$ (see footnote 2). Then $\epsilon$-Differential Privacy requires
$$\sup_{Z, Z': d(Z, Z') \le 1}\ \sup_{h \in \mathcal{H}} \log \frac{p_{\mathcal{A}(Z)}(h)}{p_{\mathcal{A}(Z')}(h)} \le \epsilon.$$
Replacing the second supremum with an expectation over $h \sim \mathcal{A}(Z)$, we get the maximum KL-divergence over the outputs from two adjacent datasets. This is KL-Privacy as defined in Barber and Duchi [4]. Replacing both suprema with expectations gives what we call On-Average KL-Privacy. For $Z \in \mathcal{Z}^n$ and $z \in \mathcal{Z}$, denote by $[Z_{-1}, z] \in \mathcal{Z}^n$ the data set obtained by replacing the first entry of $Z$ with $z$. Also recall that the KL-divergence between two distributions $F$ and $G$ is $D_{KL}(F \| G) = \mathbb{E}_F\big[\log \frac{dF}{dG}\big]$.

Definition 2 (On-Average KL-Privacy). We say $\mathcal{A}$ obeys $\epsilon$-On-Average KL-privacy for some distribution $\mathcal{D}$ if
$$\mathbb{E}_{Z \sim \mathcal{D}^n, z \sim \mathcal{D}}\, D_{KL}\big(\mathcal{A}(Z) \,\|\, \mathcal{A}([Z_{-1}, z])\big) \le \epsilon.$$
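Here is a minimal sketch that makes the contrast between Definitions 1 and 2 concrete. It uses one mechanism that is our own choice, not the paper's: releasing the mean of $n$ points in $[0, 1]$ plus Laplace noise of scale $b$. The DP parameter is the worst-case log density ratio, $1/(nb)$. The On-Average KL-privacy is estimated by Monte Carlo under an assumed data distribution $\mathcal{D} = \text{Uniform}[0, 1]$. The estimate uses the closed-form KL divergence between two Laplace laws with a common scale, $|\mu_1 - \mu_2|/b + e^{-|\mu_1 - \mu_2|/b} - 1$. The function names, $\mathcal{D}$ and the parameter values are illustrative assumptions.

```python
import numpy as np

def laplace_kl(mu1, mu2, b):
    """Closed-form KL( Laplace(mu1, b) || Laplace(mu2, b) ) for a common scale b."""
    d = np.abs(mu1 - mu2) / b
    return d + np.exp(-d) - 1.0

def dp_epsilon(n, b):
    """Definition 1 for mean(Z) + Laplace(b) with z_i in [0, 1]:
    replacing one entry moves the mean by at most 1/n (the sensitivity)."""
    return (1.0 / n) / b

def on_avg_kl_laplace_mean(n, b, num_trials=200_000, seed=0):
    """Definition 2 by Monte Carlo, assuming D = Uniform[0, 1].
    mean(Z) - mean([Z_{-1}, z]) = (z_1 - z) / n, so only z_1 and z are sampled."""
    rng = np.random.default_rng(seed)
    z1 = rng.uniform(0.0, 1.0, num_trials)   # first entry of Z
    z = rng.uniform(0.0, 1.0, num_trials)    # replacement point
    return float(np.mean(laplace_kl(z1 / n, z / n, b)))
```

With $n = 100$ and $b = 0.05$, the worst-case $\epsilon$ is 0.2. The on-average KL estimate is more than an order of magnitude smaller, because a typical replacement moves the mean much less than the worst-case $1/n$.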
Note that by the properties of the KL-divergence, On-Average KL-Privacy is always nonnegative. It is 0 if and only if the two distributions are the same almost everywhere, which in the above setting happens, e.g., when $z = z_1$. Differential privacy provides a uniform privacy guarantee for any user in $\mathcal{Z}$. On-Average KL-Privacy, by contrast, is a distribution-specific quantity. It measures the average privacy loss that an average data point $z \sim \mathcal{D}$ suffers when the data analysis $\mathcal{A}$ is run on a data set $Z$ drawn iid from the same distribution $\mathcal{D}$.

² These assumptions are only for simplicity of presentation. The notion of On-Average KL-privacy can naturally handle mixtures of densities and point masses.

We argue that this kind of average privacy protection is practically useful because it adapts to benign distributions and is much less sensitive to outliers. After all, differential privacy may fail to provide a meaningful $\epsilon$ because of peculiar data sets that exist in $\mathcal{Z}^n$ but rarely appear in practice. In that case we would still be interested in gauging how well a randomized algorithm protects a typical user's privacy.

Now we define what we mean by generalization. Let the empirical risk be $\hat{R}(h, Z) = \frac{1}{n}\sum_{i=1}^n \ell(h, z_i)$ and the actual risk be $R(h) = \mathbb{E}_{z \sim \mathcal{D}}\, \ell(h, z)$.

Definition 3 (On-Average Generalization). We say an algorithm $\mathcal{A}$ has on-average generalization error $\epsilon$ if
$$\mathbb{E}\, R(\mathcal{A}(Z)) - \mathbb{E}\, \hat{R}(\mathcal{A}(Z), Z) \le \epsilon.$$

This is slightly weaker than the standard notion of generalization in machine learning, which requires $\mathbb{E}\,|R(\mathcal{A}(Z)) - \hat{R}(\mathcal{A}(Z), Z)| \le \epsilon$. Nevertheless, on-average generalization is sufficient for proving consistency of methods that approximately minimize the empirical risk.
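Below is a minimal Monte Carlo sketch of Definition 3. It rests on our own assumptions: $\mathcal{D} = N(0, 1)$, squared loss $\ell(h, z) = (z - h)^2$, and $\mathcal{A}$ the deterministic ERM solution, i.e., the sample mean. In this setting $R(h) = 1 + h^2$ in closed form. We also have $\mathbb{E} R(\mathcal{A}(Z)) = 1 + 1/n$ and $\mathbb{E}\hat{R}(\mathcal{A}(Z), Z) = (n-1)/n$, so the on-average generalization error is exactly $2/n$, which the estimate should reproduce. The function name is hypothetical.

```python
import numpy as np

def on_avg_gen_error_mean(n, num_trials=100_000, seed=0):
    """Monte Carlo estimate of E R(A(Z)) - E R_hat(A(Z), Z) (Definition 3) for
    A(Z) = sample mean (ERM under squared loss) and D = N(0, 1)."""
    rng = np.random.default_rng(seed)
    Z = rng.standard_normal((num_trials, n))            # num_trials datasets of size n
    h = Z.mean(axis=1)                                  # A(Z) for each dataset
    true_risk = 1.0 + h ** 2                            # R(h) = E_z (z - h)^2 when z ~ N(0, 1)
    emp_risk = np.mean((Z - h[:, None]) ** 2, axis=1)   # R_hat(h, Z)
    return float(np.mean(true_risk - emp_risk))
```

For $n = 10$ the estimate should be close to $0.2$, up to Monte Carlo error.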
3.1 The equivalence to generalization

It turns out that when $\mathcal{A}$ takes a special form, namely sampling from a Gibbs distribution, we can completely characterize the generalization of $\mathcal{A}$ using On-Average KL-Privacy. This class of algorithms includes the exponential mechanism [23], the most general mechanism for differential privacy, which casts many other noise-adding procedures as special cases. In Section 3.3 we give a more compelling reason why restricting our attention to this class is not limiting.

Theorem 4 (On-Average KL-Privacy ⇔ Generalization). Let the loss function be $\ell(z, h) = -\log p(z \mid h)$ for some model $p$ parameterized by $h$, and let
$$\mathcal{A}(Z):\ h \sim p(h \mid Z) \propto \exp\Big(-\sum_{i=1}^n \ell(z_i, h) - r(h)\Big).$$
Assume in addition that for every $Z$ the distribution $p(h \mid Z)$ is well-defined (in that the normalization constant is finite). Then $\mathcal{A}$ satisfies $\epsilon$-On-Average KL-Privacy if and only if $\mathcal{A}$ has on-average generalization error $\epsilon$.

The proof, given in the Appendix, uses a ghost sample trick. It also relies on the fact that the expected log-normalization constants of the sampling distributions over $Z$ and $Z'$ are the same.

Remark 1 (Structural Loss). Take $n = 1$ and let the loss function be $\mathcal{L}$. Consider an algorithm $\mathcal{A}$ that samples with probability proportional to $\exp(-\mathcal{L}(h, Z) - r(h))$. For such an algorithm, $\epsilon$-On-Average KL-Privacy is equivalent to $\epsilon$-generalization of the structural loss.

Remark 2 (Dispersion parameter $\gamma$). The case $\mathcal{A} \propto \exp(-\gamma[\mathcal{L}(h, Z) - r(h)])$ for a constant $\gamma$ can be handled by redefining $\mathcal{L}' = \gamma\mathcal{L}$. In that case, $\epsilon$-On-Average KL-Privacy with respect to $\mathcal{L}'$ implies $\epsilon/\gamma$-generalization with respect to $\mathcal{L}$. For this reason, a larger $\gamma$ may not imply strictly better generalization.

Remark 3 (Comparing to differential privacy). Note that here we do not require $\ell$ to be uniformly bounded, but if we do, i.e.
$\sup_{z \in \mathcal{Z}, h \in \mathcal{H}} |\ell(z, h)| \le B$, then the same algorithm $\mathcal{A}$ above obeys $4B\gamma$-Differential Privacy [23, 34], which implies $O(B\gamma)$-generalization. This bound, however, could be much larger than the actual generalization error (see our examples in Section 6).

3.2 Preservation of other properties of DP

We now show that despite being much weaker than DP, On-Average KL-privacy does inherit some of the major properties of differential privacy (under mild additional assumptions in some cases).

Lemma 5 (Closedness to Post-processing). Let $f$ be any (possibly randomized) measurable function from $\mathcal{H}$ to another domain $\mathcal{H}'$. Then for any $Z, Z'$,
$$D_{KL}\big(f(\mathcal{A}(Z)) \,\|\, f(\mathcal{A}(Z'))\big) \le D_{KL}\big(\mathcal{A}(Z) \,\|\, \mathcal{A}(Z')\big).$$

Proof. This follows directly from the data processing inequality for the Rényi divergence in Van Erven and Harremoës [33, Theorem 1].

Lemma 6 (Small group privacy). Let $k \le n$. Assume $\mathcal{A}$ is posterior sampling as in Theorem 4. Then for any $k = 1, \dots, n$, we have
$$\mathbb{E}_{[Z, z_{1:k}] \sim \mathcal{D}^{n+k}}\, D_{KL}\big(\mathcal{A}(Z) \,\|\, \mathcal{A}([Z_{-1:k}, z_{1:k}])\big) = k\, \mathbb{E}_{[Z, z] \sim \mathcal{D}^{n+1}}\, D_{KL}\big(\mathcal{A}(Z) \,\|\, \mathcal{A}([Z_{-1}, z])\big).$$

Lemma 7 (Adaptive Composition Theorem). Let $\mathcal{A}$ be $\epsilon_1$-(On-Average) KL-Private. Let $\mathcal{B}(\cdot, h)$ be $\epsilon_2$-(On-Average) KL-Private for every $h \in \Omega_{\mathcal{A}}$, where $\Omega_{\mathcal{A}}$ is the support of the random function $\mathcal{A}$. Then $(\mathcal{A}, \mathcal{B})$ jointly is $(\epsilon_1 + \epsilon_2)$-(On-Average) KL-Private.

We prove Lemmas 6 and 7 in the appendix.

3.3 Posterior Sampling as Max-Entropy solutions

In this section, we give a few theoretical justifications for why restricting to posterior sampling does not limit the applicability of Theorem 4 much. First of all, the Laplace, Gaussian and Exponential Mechanisms in the differential privacy literature are special cases of this class.
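The Gaussian case gives a quick numerical sanity check of Theorem 4 and Lemma 6. The setting is our own toy example, not the paper's experiments. Take the model $p(z \mid h) = N(h, 1)$, so $\ell(z, h) = (z - h)^2/2 + \tfrac{1}{2}\log 2\pi$, with a flat (improper) prior $r(h) = 0$. Posterior sampling then draws $h \sim N(\bar{z}, 1/n)$, and the KL divergence between two such posteriors is $n(\bar{z} - \bar{z}')^2/2$. The data distribution is assumed to be $\mathcal{D} = \text{Uniform}(-1, 1)$, deliberately different from the model. Under this assumption, the On-Average KL-privacy and the on-average generalization error of $\ell$ should both come out close to $\operatorname{Var}(z)/n = 1/(3n)$. Replacing $k$ entries should multiply the KL by $k$. All function names are hypothetical.

```python
import numpy as np

def sample_data(rng, shape):
    # Assumed data distribution D = Uniform(-1, 1), with variance 1/3.
    return rng.uniform(-1.0, 1.0, shape)

def nll(z, h):
    # ell(z, h) = -log p(z | h) for the model p(z | h) = N(h, 1).
    return 0.5 * (z - h) ** 2 + 0.5 * np.log(2.0 * np.pi)

def on_avg_kl_gaussian(n, k=1, num_trials=200_000, seed=0):
    """E D_KL(A(Z) || A(Z with its first k entries replaced by fresh draws from D)).
    With a flat prior A(Z) = N(mean(Z), 1/n); KL(N(a, 1/n) || N(b, 1/n)) = n (a - b)^2 / 2,
    and only the replaced entries affect a - b."""
    rng = np.random.default_rng(seed)
    z_old = sample_data(rng, (num_trials, k))
    z_new = sample_data(rng, (num_trials, k))
    mean_shift = (z_old - z_new).sum(axis=1) / n
    return float(np.mean(n * mean_shift ** 2 / 2.0))

def on_avg_gen_error_gaussian(n, num_trials=100_000, seed=1):
    """E[ell(z, h) - (1/n) sum_i ell(z_i, h)] with h ~ A(Z) = N(mean(Z), 1/n) and fresh z ~ D."""
    rng = np.random.default_rng(seed)
    Z = sample_data(rng, (num_trials, n))
    h = Z.mean(axis=1) + rng.standard_normal(num_trials) / np.sqrt(n)  # one posterior draw per Z
    z_fresh = sample_data(rng, num_trials)
    gap = nll(z_fresh, h) - nll(Z, h[:, None]).mean(axis=1)
    return float(np.mean(gap))
```

With, e.g., $n = 20$, both estimates should be near $1/60 \approx 0.0167$, and the $k = 3$ KL should be roughly three times that, up to Monte Carlo error.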
Secondly, among all distributions to sample from, the Gibbs distribution is the variational solution that simultaneously maximizes the conditional entropy and the utility. To make this claim precise, we first define conditional entropy.

Definition 8 (Conditional Entropy). The conditional entropy is
$$H(\mathcal{A}(Z) \mid Z) = -\mathbb{E}_Z\, \mathbb{E}_{h \sim \mathcal{A}(Z)} \log p(h \mid Z),$$
where $\mathcal{A}(Z) \sim p(h \mid Z)$.

Theorem 9. Let $Z \sim \mathcal{D}^n$ for any distribution $\mathcal{D}$. A variational solution to the convex optimization problem
$$\min_{\mathcal{A}}\ -\frac{1}{\gamma}\, \mathbb{E}_{Z \sim \mathcal{D}^n} H(\mathcal{A}(Z) \mid Z) + \mathbb{E}_{Z \sim \mathcal{D}^n}\, \mathbb{E}_{h \sim \mathcal{A}(Z)} \sum_{i=1}^n \ell_i(h, z_i) \qquad (2)$$
is the $\mathcal{A}$ that outputs $h$ with distribution $p(h \mid Z) \propto \exp\big(-\gamma \sum_{i=1}^n \ell_i(h, z_i)\big)$.

Proof. This is an instance of Theorem 3 in Mir [24] (which first appeared in Tishby et al. [31]), obtained by taking the distortion function to be the empirical risk. This is a simple convex optimization over functions. The proof substitutes the solution into the optimality condition, with a specific Lagrange multiplier chosen to adjust the normalization constant appropriately. ∎

The above theorem is distribution-free and in fact holds for every instance of $Z$ separately. Conditioned on each $Z$, the variational optimization finds the distribution with maximum information entropy among all distributions that satisfy a set of utility constraints. This corresponds to the well-known principle of maximum entropy (MaxEnt) [20]. Many philosophical justifications of this principle have been proposed. We focus on the statistical perspective and treat it as a form of regularization that penalizes the complexity of the chosen distribution (akin to the Akaike Information Criterion [1]), hence avoiding overfitting to the data.
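For a fixed $Z$, Theorem 9 can be checked directly when $\mathcal{H}$ is finite. The sketch below is our own illustration. It uses made-up loss values standing in for $\sum_i \ell_i(h, z_i)$ on ten hypotheses and evaluates the per-$Z$ objective $-\frac{1}{\gamma}H(p) + \mathbb{E}_{h \sim p}\mathcal{L}(h)$. It then confirms two things. First, the Gibbs distribution $p(h) \propto \exp(-\gamma\mathcal{L}(h))$ attains the value $-\frac{1}{\gamma}\log\sum_h e^{-\gamma\mathcal{L}(h)}$. Second, randomly drawn alternative distributions never do better. The names `gibbs` and `maxent_objective` are hypothetical.

```python
import numpy as np

def gibbs(losses, gamma):
    """Gibbs/MaxEnt distribution p(h) proportional to exp(-gamma * L(h)) on a finite H."""
    logits = -gamma * np.asarray(losses, dtype=float)
    logits -= logits.max()              # stabilize the exponentials
    p = np.exp(logits)
    return p / p.sum()

def maxent_objective(p, losses, gamma):
    """Objective (2) for one fixed Z: -(1/gamma) * entropy(p) + E_{h ~ p} L(h)."""
    p = np.asarray(p, dtype=float)
    nz = p > 0                          # use the convention 0 * log 0 = 0
    entropy = -np.sum(p[nz] * np.log(p[nz]))
    return -entropy / gamma + float(np.dot(p, losses))

rng = np.random.default_rng(0)
losses = rng.uniform(0.0, 5.0, size=10)   # hypothetical L(h, Z) values for 10 hypotheses
gamma = 2.0
p_star = gibbs(losses, gamma)
best = maxent_objective(p_star, losses, gamma)
closed_form = -np.log(np.sum(np.exp(-gamma * losses))) / gamma
alternatives = [maxent_objective(rng.dirichlet(np.ones(10)), losses, gamma) for _ in range(1000)]
```

The gap between any $p$ and the Gibbs solution equals $D_{KL}(p \,\|\, p^*)/\gamma \ge 0$. This is why no alternative should beat `best`.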
For more information, we refer readers to references on MaxEnt's connections to thermodynamics [20], to Bayesian inference and convex duality [2], and to its modern use in modeling natural languages [6].

Note that the above characterization also allows any form of prior $\pi(h)$ to be assumed. Denote the prior entropy by $\tilde{H}(h) = -\mathbb{E}_{h \sim \pi(h)} \log \pi(h)$, and define the information gain $\tilde{I}(h; Z) = \tilde{H}(h) - H(h \mid Z)$. The variational solution $p(h \mid Z)$ that minimizes $\tilde{I}(h; Z) + \gamma\, \mathbb{E}_Z \mathbb{E}_{h \mid Z} \mathcal{L}(Z, h)$ is proportional to $\exp(-\mathcal{L}(Z, h))\,\pi(h)$. This provides an alternative way of seeing the class of algorithms $\mathcal{A}$ that we consider. $\tilde{I}(h; Z)$ can be thought of as an information-theoretic quantification of privacy loss, as described in [38, 24]. As a result, we can think of the class of $\mathcal{A}$ that samples from MaxEnt distributions as the most private among all algorithms that achieve a given utility constraint.

4 Max-Information and Mutual Information

Recently, Dwork et al. [11] defined approximate max-information and used it as a tool to prove generalization (with high probability). Russo and Zou [28] showed that the weaker mutual information implies on-average generalization. Their result requires a distributional assumption on the entire space $\{\mathcal{L}(h, Z) \mid h \in \mathcal{H}\}$ induced by the distribution of $Z$. In this section, we compare On-Average KL-Privacy with these two notions. Note that we will use $Z$ and $Z'$ to denote two completely different datasets, rather than adjacent ones as in differential privacy.

Definition 10 (Max-Information, Definition 11 in [11]). We say $\mathcal{A}$ has a $\beta$-approximate max-information of $k$ if for every distribution $\mathcal{D}$,
$$I^\beta_\infty(Z; \mathcal{A}(Z)) = \max_{H \subseteq \mathcal{H},\, \tilde{Z} \subseteq \mathcal{Z}^n:\ \mathbb{P}(h \in H, Z \in \tilde{Z}) > \beta}\ \log \frac{\mathbb{P}(h \in H, Z \in \tilde{Z}) - \beta}{\mathbb{P}(h \in H)\, \mathbb{P}(Z \in \tilde{Z})} \le k.$$
This is alternatively denoted by $I^\beta_\infty(\mathcal{A}, n) \le k$.
We say $\mathcal{A}$ has a pure max-information of $k$ if $\beta = 0$.

It is shown in [11] that differential privacy and short description length imply bounds on max-information, and hence generalization. Here we show that pure max-information implies a very strong On-Average KL-Privacy for any distribution $\mathcal{D}$ when we take $\mathcal{A}$ to be a posterior sampling mechanism.

Lemma 11 (Relationship to max-information). If $\mathcal{A}$ is a posterior sampling mechanism as described in Theorem 4, then $I_\infty(\mathcal{A}, n) \le k$ implies that $\mathcal{A}$ obeys $k/n$-On-Average KL-Privacy for any data generating distribution $\mathcal{D}$.

An immediate corollary of this connection is a much simpler proof of "max-information ⇒ generalization" for posterior sampling algorithms.

Corollary 12. Let $\mathcal{A}$ be a posterior sampling algorithm. Then $I_\infty(\mathcal{A}, n) \le k$ implies that $\mathcal{A}$ generalizes with rate $k/n$.

We now compare to mutual information and draw connections to [28].

Definition 13 (Mutual Information). The mutual information is
$$I(\mathcal{A}(Z); Z) = \mathbb{E}_Z\, \mathbb{E}_{h \sim \mathcal{A}(Z)} \log \frac{p(h, Z)}{p(h)\, p(Z)},$$
where $\mathcal{A}(Z) \sim p(h \mid Z)$, $p(Z) = \mathcal{D}^n$ and $p(h) = \int p(h \mid Z)\, p(Z)\, dZ$.

Lemma 14 (Relationship to Mutual Information). For any randomized algorithm $\mathcal{A}$, let $\mathcal{A}(Z)$ be a random variable, and let $Z, Z'$ be two independent datasets of size $n$. Then, with the KL-divergence averaged over $Z$ and $Z'$,
$$I(\mathcal{A}(Z); Z) = D_{KL}\big(\mathcal{A}(Z) \,\|\, \mathcal{A}(Z')\big) + \mathbb{E}_Z\, \mathbb{E}_{h \sim \mathcal{A}(Z)}\Big[\mathbb{E}_{Z'} \log p(\mathcal{A}(Z')) - \log \mathbb{E}_{Z'}\, p(\mathcal{A}(Z'))\Big].$$
By Jensen's inequality, this implies $I(\mathcal{A}(Z); Z) \le D_{KL}(\mathcal{A}(Z) \,\|\, \mathcal{A}(Z'))$.

A natural observation is that for a MaxEnt $\mathcal{A}$ defined with $\mathcal{L}$, mutual information lower bounds the generalization error. On the other hand, Proposition 1 in Russo and Zou [28] gives an upper bound. It states that if $\mathcal{L}(h, Z)$ is $\sigma^2$-subgaussian for every $h$, then the on-average generalization error is always smaller than $\sigma\sqrt{2 I(\mathcal{A}(Z); \mathcal{L}(\cdot, Z))}$.
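Lemma 14 can be verified exactly on a toy finite problem. The sketch below is an illustration under our own assumptions. The dataset takes one of finitely many values with probabilities `p_z`, and the hypothesis space is finite. Row `i` of `P` is the output distribution of $\mathcal{A}$ on the $i$-th dataset. All entries of `P` are assumed strictly positive so that every logarithm is finite. The code computes three quantities: $I(\mathcal{A}(Z); Z)$, the averaged term $\mathbb{E}_{Z,Z'} D_{KL}(\mathcal{A}(Z) \| \mathcal{A}(Z'))$, and the Jensen-gap term of the lemma. All function names are hypothetical.

```python
import numpy as np

def mutual_information(p_z, P):
    """I(A(Z); Z) with p_z[i] = P(Z = i) and P[i, j] = P(A(Z) = h_j | Z = i)."""
    m = p_z @ P                                       # marginal p(h)
    return float(np.sum(p_z[:, None] * P * np.log(P / m)))

def expected_kl(p_z, P):
    """E_{Z, Z' iid} D_KL(A(Z) || A(Z'))."""
    log_P = np.log(P)
    # kl[i, k] = sum_j P[i, j] * (log P[i, j] - log P[k, j])
    kl = np.sum(P * log_P, axis=1)[:, None] - P @ log_P.T
    return float(p_z @ kl @ p_z)

def jensen_gap(p_z, P):
    """Second term of Lemma 14: E_Z E_{h|Z} [E_{Z'} log p(h|Z') - log E_{Z'} p(h|Z')], always <= 0."""
    m = p_z @ P
    return float(m @ (p_z @ np.log(P) - np.log(m)))

rng = np.random.default_rng(0)
p_z = rng.dirichlet(np.ones(4))          # 4 possible datasets
P = rng.dirichlet(np.ones(6), size=4)    # output distributions over 6 hypotheses
mi, ekl = mutual_information(p_z, P), expected_kl(p_z, P)
```

The identity $I = \mathbb{E}D_{KL} + \text{gap}$ holds exactly, and the gap is nonpositive. If $\mathcal{A}$ ignores its input (all rows of `P` equal), both sides vanish.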
Similar results hold for sub-exponential $\mathcal{L}(h, Z)$ [28, Proposition 3]. Note that in their bounds, $I(\mathcal{A}(Z); \mathcal{L}(\cdot, Z))$ is the mutual information between the choice of hypothesis $h$ and the loss function on which generalization is defined. By the data processing inequality, $I(\mathcal{A}(Z); \mathcal{L}(\cdot, Z)) \le I(\mathcal{A}(Z); Z)$. Further, when $\mathcal{A}$ is posterior sampling, it depends on $Z$ only through $\mathcal{L}(\cdot, Z)$. In other words, $\mathcal{L}(\cdot, Z)$ is a sufficient statistic for $\mathcal{A}$, and as a result $\mathcal{A} \perp Z \mid \mathcal{L}(\cdot, Z)$. Therefore $I(\mathcal{A}(Z); \mathcal{L}(\cdot, Z)) = I(\mathcal{A}(Z); Z)$. Combining this observation with Lemma 14 and Theorem 4, we get the following characterization of generalization through mutual information.

Corollary 15 (Mutual information and generalization). Let $\mathcal{A}$ be an algorithm that samples $h \propto \exp(-\gamma\mathcal{L}(h, Z))$, and suppose $\mathcal{L}(h, Z) - R(h)$ is $\sigma^2$-subgaussian for any $h \in \mathcal{H}$. Then
$$\frac{1}{\gamma} I(\mathcal{A}(Z); Z) \le \mathbb{E}_Z\, \mathbb{E}_{h \sim \mathcal{A}(Z)}\big[R(h) - \mathcal{L}(h, Z)\big] \le \sigma\sqrt{2 I(\mathcal{A}(Z); Z)}.$$

Table 1. Summary of different privacy definitions.

| Privacy definition | $Z$ | $z$ | Distance (pseudo)metric |
|---|---|---|---|
| Pure-DP | $\sup_{Z \in \mathcal{Z}^n}$ | $\sup_{z \in \mathcal{Z}}$ | $D_\infty(P \Vert Q)$ |
| Approx-DP | $\sup_{Z \in \mathcal{Z}^n}$ | $\sup_{z \in \mathcal{Z}}$ | $D^\delta_\infty(P \Vert Q)$ |
| Personal-DP | $\sup_{Z \in \mathcal{Z}^n}$ | for each $z$ | $D_\infty(P \Vert Q)$ or $D^\delta_\infty(P \Vert Q)$ |
| KL-Privacy | $\sup_{Z \in \mathcal{Z}^n}$ | $\sup_{z \in \mathcal{Z}}$ | $D_{KL}(P \Vert Q)$ |
| TV-Privacy | $\sup_{Z \in \mathcal{Z}^n}$ | $\sup_{z \in \mathcal{Z}}$ | $\lVert P - Q \rVert_{TV}$ |
| Rand-Privacy | $1 - \delta_1$, any $\mathcal{D}^n$ | $1 - \delta_1$, any $\mathcal{D}$ | $D^{\delta_2}_\infty(P \Vert Q)$ |
| On-Avg KL-Privacy | $\mathbb{E}_{Z \sim \mathcal{D}^n}$, for each $\mathcal{D}$ | $\mathbb{E}_{z \sim \mathcal{D}}$, for each $\mathcal{D}$ | $D_{KL}(P \Vert Q)$ |

Fig. 1. Relationship of different privacy definitions and generalization. [The diagram did not survive extraction. It relates Pure-DP, Approx-DP, Personal-DP, Random-DP, KL-Privacy, TV-Privacy and On-Avg KL-Privacy to notions of generalization. Its arrows carry labels such as "Markov", "Pinsker", "Jensen", "If bounded", "If small variance", "For insensitive queries" and "For MaxEnt sampling".]
If $\mathcal{L}(h, Z) - R(h)$ is instead sub-exponential with parameters $(\sigma, b)$, then we have the weaker upper bound $b\, I(\mathcal{A}(Z); Z) + \sigma^2/(2b)$.

The corollary implies that for each $\gamma$ we have an intriguing bound, $I(\mathcal{A}(Z); Z) \le 2\gamma^2\sigma^2$. It holds for any distribution of $Z$, $\mathcal{H}$ and $\mathcal{L}$ such that $\mathcal{L}(\cdot, Z)$ is $\sigma^2$-subgaussian. One interesting case is $\gamma = 1/\sigma$, which gives
$$\sigma\, I(\mathcal{A}(Z); Z) \le \mathbb{E}_Z\, \mathbb{E}_{h \sim \mathcal{A}(Z)}\big[R(h) - \mathcal{L}(h, Z)\big] \le \sigma\sqrt{2 I(\mathcal{A}(Z); Z)}.$$
The lower bound is therefore sharp up to a multiplicative factor of $\sqrt{2/I(\mathcal{A}(Z); Z)}$.

5 Connections to Other Attempts to Weaken DP

We compare and contrast On-Average KL-Privacy with other notions of privacy that are designed to weaken the original DP. The (certainly incomplete) list includes $(\epsilon, \delta)$-approximate differential privacy (Approx-DP) [13], random differential privacy (Rand-DP) [17], Personalized Differential Privacy (Personal-DP) [15, 22] and Total-Variation-Privacy (TV-Privacy) [4, 5]. Table 1 summarizes and compares these definitions.

Fig. 2. Comparison of On-Avg KL-Privacy and Differential Privacy on two examples. [Plot not recoverable from the extracted text. It has two panels, "Normal mean estimation" and "Linear regression", both on log-log axes with the parameter $\gamma$ on the horizontal axis. The curves are Est. Generalization, KL-Privacy, KL-Privacy/$\gamma$, Excess risk and Diff. Privacy loss.]

A key difference between On-Average KL-Privacy and almost all other previous definitions of privacy is that, in those definitions, the probability is defined only over the random coins of the private algorithm. For this reason, even if we convert our bound into a high-probability form, the meaning of the small probability $\delta$ would be very different from that in Approx-DP.
The only exception in the list is Rand-DP, which assumes, as we do, that the $n + 1$ data points in adjacent data sets $Z$ and $Z'$ are drawn iid from a distribution. Ours is weaker than Rand-DP in that it is a distribution-specific quantity.

Among these notions of privacy, Pure-DP and Approx-DP have been shown to imply generalization with high probability [12, 5]. TV-privacy has been shown to imply generalization (in expectation) for a restricted class of queries (loss functions) [5]. The relationship between our proposal and these known results is illustrated in Fig. 1. To the best of our knowledge, our result is the first of its kind that crisply characterizes generalization.

Lastly, we point out that while each of these definitions retains some properties of differential privacy, they might not possess all of them simultaneously and satisfactorily. For example, $(\epsilon, \delta)$-Approx-DP does not have a satisfactory group privacy guarantee, as $\delta$ grows exponentially with the group size.

6 Experiments

In this section, we validate our theoretical results through numerical simulation. Specifically, we use two simple examples to compare four quantities: the $\epsilon$ of differential privacy, the $\epsilon$ of On-Average KL-privacy, the generalization error, and the utility, measured as the excess population risk.

The first example is the private release of a mean. We take $Z$ to be the mean of 100 samples from the standard normal distribution truncated to $[-2, 2]$. The hypothesis space is $\mathcal{H} = \mathbb{R}$ and the loss function is $\mathcal{L}(Z, h) = |Z - h|$. $\mathcal{A}$ samples with probability proportional to $\exp(-\gamma|Z - h|)$. Note that this is the simple Laplace mechanism for differential privacy. The global sensitivity is 4, so this algorithm is $4\gamma$-differentially private.

The second example we consider is a simple linear regression in 1D.
We generate the data from a univariate linear regression model $y = xh + \text{noise}$, where $x$ and the noise are both sampled iid from the uniform distribution on $[-1, 1]$. The true $h$ is chosen to be 1. We use the standard square loss $\ell(z_i, h) = (y_i - x_i h)^2$. The data domain is $\mathcal{Z} = \mathcal{X} \times \mathcal{Y} = [-1, 1] \times [-2, 2]$. If we constrain $\mathcal{H}$ to the bounded set $[-2, 2]$, then $\sup_{x, y, h}(y - xh)^2 \le 16$, and posterior sampling with parameter $\gamma$ obeys $64\gamma$-DP.

Fig. 2 plots the results over an exponential grid of the parameter $\gamma$. In both examples, we calculate On-Average KL-Privacy using the known formulas for the KL-divergence of Laplace and Gaussian distributions, and then estimate the expectation over data stochastically. We estimate the generalization error directly from its definition by evaluating on fresh samples. As the plots show, appropriately scaled On-Average KL-Privacy characterizes the generalization error precisely, as the theory predicts. On the other hand, compare the privacy losses themselves. The average loss over a random dataset, given by On-Avg KL-Privacy, is smaller than the worst-case loss in DP by orders of magnitude.

7 Conclusion

We presented On-Average KL-privacy as a new notion of privacy (or stability) on average. We showed that this new definition preserves properties of differential privacy, including closedness to post-processing, small group privacy and adaptive composition. Moreover, we showed that On-Average KL-privacy/stability characterizes a weak form of generalization for a large class of sampling distributions that simultaneously maximize entropy and utility. This equivalence, together with connections to certain information-theoretic quantities, allowed us to provide the first lower bound on generalization using mutual information.
Lastly, we conducted numerical simulations, which confirm our theory and demonstrate a substantially more favorable privacy-utility trade-off.

A Proofs of technical results

Proof (of Theorem 4). We prove this result using a ghost sample trick.
$$\begin{aligned}
&\mathbb{E}_{z \sim \mathcal{D}, Z \sim \mathcal{D}^n}\, \mathbb{E}_{h \sim \mathcal{A}(Z)}\Big[\ell(z, h) - \frac{1}{n}\sum_{i=1}^n \ell(z_i, h)\Big] \\
&= \mathbb{E}_{Z' \sim \mathcal{D}^n, Z \sim \mathcal{D}^n}\, \mathbb{E}_{h \sim \mathcal{A}(Z)}\Big[\frac{1}{n}\sum_{i=1}^n \ell(z'_i, h) - \frac{1}{n}\sum_{i=1}^n \ell(z_i, h)\Big] \\
&= \frac{1}{n}\sum_{i=1}^n \mathbb{E}_{z'_i \sim \mathcal{D}, Z \sim \mathcal{D}^n}\, \mathbb{E}_{h \sim \mathcal{A}(Z)}\big[\ell(z'_i, h) - \ell(z_i, h)\big] \\
&= \frac{1}{n}\sum_{i=1}^n \mathbb{E}_{z'_i \sim \mathcal{D}, Z \sim \mathcal{D}^n}\, \mathbb{E}_{h \sim \mathcal{A}(Z)}\Big[\ell(z'_i, h) + \sum_{j \ne i}\ell(z_j, h) + r(h) - \ell(z_i, h) - \sum_{j \ne i}\ell(z_j, h) - r(h)\Big] \\
&= \frac{1}{n}\sum_{i=1}^n \mathbb{E}_{z'_i \sim \mathcal{D}, Z \sim \mathcal{D}^n}\, \mathbb{E}_{h \sim \mathcal{A}(Z)}\Big[-\log p_{\mathcal{A}([Z_{-i}, z'_i])}(h) + \log p_{\mathcal{A}(Z)}(h) + \log K_i - \log K'_i\Big] \\
&= \frac{1}{n}\sum_{i=1}^n \mathbb{E}_{z'_i \sim \mathcal{D}, Z \sim \mathcal{D}^n}\, \mathbb{E}_{h \sim \mathcal{A}(Z)}\Big[\log p_{\mathcal{A}(Z)}(h) - \log p_{\mathcal{A}([Z_{-i}, z'_i])}(h)\Big] \\
&= \mathbb{E}_{z \sim \mathcal{D}, Z \sim \mathcal{D}^n}\, \mathbb{E}_{h \sim \mathcal{A}(Z)}\Big[\log p_{\mathcal{A}(Z)}(h) - \log p_{\mathcal{A}([Z_{-1}, z])}(h)\Big].
\end{aligned}$$
Here $K_i$ and $K'_i$ are the partition functions of $p_{\mathcal{A}(Z)}(h)$ and $p_{\mathcal{A}([Z_{-i}, z'_i])}(h)$, respectively. Since $z_i$ and $z'_i$ are identically distributed, $\mathbb{E}\log K_i - \mathbb{E}\log K'_i = 0$. To complete the proof, note that On-Average KL-privacy is always non-negative. So is the difference between the actual risk and the expected empirical risk, since it equals the On-Average KL-privacy. We can therefore take absolute values without changing the equivalence. ∎

Proof (of Lemma 6). Let $k = 2$. We have
$$\begin{aligned}
&\mathbb{E}_{[Z, z'_1, z'_2] \sim \mathcal{D}^{n+2}}\, \mathbb{E}_{h \sim \mathcal{A}(Z)} \log \frac{p_{\mathcal{A}(Z)}(h)}{p_{\mathcal{A}([Z_{-1:2}, z'_1, z'_2])}(h)} \\
&= \mathbb{E}_{[Z, z'_1] \sim \mathcal{D}^{n+1}}\, \mathbb{E}_{h \sim \mathcal{A}(Z)} \log \frac{p_{\mathcal{A}(Z)}(h)}{p_{\mathcal{A}([Z_{-1}, z'_1])}(h)} + \mathbb{E}_{[Z, z'_1, z'_2] \sim \mathcal{D}^{n+2}}\, \mathbb{E}_{h \sim \mathcal{A}(Z)} \log \frac{p_{\mathcal{A}([Z_{-1}, z'_1])}(h)}{p_{\mathcal{A}([Z_{-1:2}, z'_1, z'_2])}(h)} \\
&\le \epsilon + \mathbb{E}_{[Z, z'_1, z'_2] \sim \mathcal{D}^{n+2}}\, \mathbb{E}_{h \sim \mathcal{A}(Z)} \log \frac{p_{\mathcal{A}([Z_{-1}, z'_1])}(h)}{p_{\mathcal{A}([Z_{-1:2}, z'_1, z'_2])}(h)}.
\end{aligned}$$
The technical issue is that in the second term, $h$ is drawn from $\mathcal{A}(Z)$ rather than from the distribution in the numerator, so the expectation is not directly a KL-divergence. Because $\mathcal{A}$ is a posterior sampling algorithm, we can rewrite the second term of the above equation as
$$\mathbb{E}_{Z \sim \mathcal{D}^n, z'_1, z'_2 \sim \mathcal{D}}\, \mathbb{E}_{h \sim \mathcal{A}(Z)}\big[\log p(z_2, h) - \log p(z'_2, h) - \log K + \log K'\big].$$
Here $K$ and $K'$ are the normalization constants of $\exp\big(\log p(z'_1, h) + \sum_{i=2}^n \log p(z_i, h)\big)$ and $\exp\big(\log p(z'_1, h) + \log p(z'_2, h) + \sum_{i=3}^n \log p(z_i, h)\big)$, respectively. The expected log-partition functions are the same. We can therefore replace them with the normalization constants of $\exp\big(\sum_{i=1}^n \log p(z_i, h)\big)$ and $\exp\big(\log p(z'_2, h) + \sum_{i \ne 2} \log p(z_i, h)\big)$. By adding and subtracting the missing log-likelihood functions on $z_1, z_3, \dots, z_n$, we get
$$\mathbb{E}_{Z \sim \mathcal{D}^n, z'_1, z'_2 \sim \mathcal{D}}\, \mathbb{E}_{h \sim \mathcal{A}(Z)} \log \frac{p_{\mathcal{A}([Z_{-1}, z'_1])}(h)}{p_{\mathcal{A}([Z_{-1:2}, z'_1, z'_2])}(h)} = \mathbb{E}_{Z \sim \mathcal{D}^n, z'_2 \sim \mathcal{D}}\, \mathbb{E}_{h \sim \mathcal{A}(Z)} \log \frac{p_{\mathcal{A}(Z)}(h)}{p_{\mathcal{A}([Z_{-2}, z'_2])}(h)} \le \epsilon.$$
This completes the proof for $k = 2$. Applying the same argument recursively with different decompositions gives the results for $k = 3, \dots, n$. The second statement follows by the same argument with every "≤" changed into "=". ∎

Proof (of Lemma 7).
$$\begin{aligned}
&\mathbb{E}_{h_1 \sim \mathcal{A}(Z)}\, \mathbb{E}_{h_2 \sim \mathcal{B}(Z, h_1)} \log \frac{p_{\mathcal{B}(Z, h_1)}(h_2)\, p_{\mathcal{A}(Z)}(h_1)}{p_{\mathcal{B}(Z', h_1)}(h_2)\, p_{\mathcal{A}(Z')}(h_1)} \\
&= \mathbb{E}_{h_1 \sim \mathcal{A}(Z)}\, \mathbb{E}_{h_2 \sim \mathcal{B}(Z, h_1)} \log \frac{p_{\mathcal{B}(Z, h_1)}(h_2)}{p_{\mathcal{B}(Z', h_1)}(h_2)} + \mathbb{E}_{h_1 \sim \mathcal{A}(Z)}\, \mathbb{E}_{h_2 \sim \mathcal{B}(Z, h_1)} \log \frac{p_{\mathcal{A}(Z)}(h_1)}{p_{\mathcal{A}(Z')}(h_1)} \\
&\le \sup_{h_1 \in \Omega_{\mathcal{A}}} \mathbb{E}_{h_2 \sim \mathcal{B}(Z, h_1)} \log \frac{p_{\mathcal{B}(Z, h_1)}(h_2)}{p_{\mathcal{B}(Z', h_1)}(h_2)} + \mathbb{E}_{h_1 \sim \mathcal{A}(Z)} \log \frac{p_{\mathcal{A}(Z)}(h_1)}{p_{\mathcal{A}(Z')}(h_1)}.
\end{aligned}$$
Taking the supremum over $Z$ and $Z'$ gives the adaptive composition result for KL-Privacy.
Taking the expectation over $Z$ and $z$ with $Z'=[Z_{-1},z]$ gives the adaptive composition result for On-Average KL-privacy. ∎

Proof (Proof of Lemma 11). By Lemma 12 in Dwork et al. [11], $I_\infty(\mathcal{A},n)=\sup_{Z,Z'\in\mathcal{Z}^n}D_\infty(\mathcal{A}(Z)\|\mathcal{A}(Z'))$. Moreover,

$$
\begin{aligned}
D_\infty(\mathcal{A}(Z)\|\mathcal{A}(Z'))
&=\sup_h\log\frac{p_{\mathcal{A}(Z)}(h)}{p_{\mathcal{A}(Z')}(h)}
=\sup_h\sum_{i=1}^n\log\frac{p_{\mathcal{A}([Z_{-1:(i-1)},Z'_{1:(i-1)}])}(h)}{p_{\mathcal{A}([Z_{-1:i},Z'_{1:i}])}(h)}\\
&\ge\mathbb{E}_{h\sim\mathcal{A}(Z)}\sum_{i=1}^n\log\frac{p_{\mathcal{A}([Z_{-1:(i-1)},Z'_{1:(i-1)}])}(h)}{p_{\mathcal{A}([Z_{-1:i},Z'_{1:i}])}(h)}\\
&=\sum_{i=1}^n\mathbb{E}_{h\sim\mathcal{A}(Z)}\log\frac{p_{\mathcal{A}([Z_{-1:(i-1)},Z'_{1:(i-1)}])}(h)}{p_{\mathcal{A}([Z_{-1:i},Z'_{1:i}])}(h)}\\
&=\sum_{i=1}^n\mathbb{E}_{h\sim\mathcal{A}(Z)}\big(\log p(z_i;h)-\log p(z'_i;h)-\log K_i+\log K'_i\big),
\end{aligned}
$$

where $K_i$ and $K'_i$ are the normalization constants of $p_{\mathcal{A}([Z_{-1:(i-1)},Z'_{1:(i-1)}])}(h)$ and $p_{\mathcal{A}([Z_{-1:i},Z'_{1:i}])}(h)$, respectively.

Take the expectation over $Z$ and $Z'$ on both sides. By symmetry, the expected log-normalization constants are equal no matter which size-$n$ subset of $[Z,Z']$ the posterior distribution of $h$ is defined over. Define $Z^{(i)}:=[z_1,\ldots,z_{i-1},z'_i,z_{i+1},\ldots,z_n]$. Let $K$ be the normalization constant of $\mathcal{A}(Z)$ and $K^{(i)}$ the normalization constant of $\mathcal{A}(Z^{(i)})$. We get

$$
\begin{aligned}
\mathbb{E}_{Z,Z'\sim\mathcal{D}^n}D_\infty(\mathcal{A}(Z)\|\mathcal{A}(Z'))
&\ge\mathbb{E}_{Z,Z'\sim\mathcal{D}^n}\sum_{i=1}^n\mathbb{E}_{h\sim\mathcal{A}(Z)}\big(\log p(z_i;h)-\log p(z'_i;h)\big)\\
&=\mathbb{E}_{Z,Z'\sim\mathcal{D}^n}\sum_{i=1}^n\mathbb{E}_{h\sim\mathcal{A}(Z)}\Big(\sum_{j=1}^n\log p(z_j;h)-\sum_{j\neq i}\log p(z_j;h)-\log p(z'_i;h)-\log K+\log K^{(i)}\Big)\\
&=\mathbb{E}_{Z,Z'\sim\mathcal{D}^n}\sum_{i=1}^n\mathbb{E}_{h\sim\mathcal{A}(Z)}\left[\log\frac{p_{\mathcal{A}(Z)}(h)}{p_{\mathcal{A}(Z^{(i)})}(h)}\right]\\
&=\sum_{i=1}^n\mathbb{E}_{Z\sim\mathcal{D}^n,\,z'_i\sim\mathcal{D}}\,\mathbb{E}_{h\sim\mathcal{A}(Z)}\left[\log\frac{p_{\mathcal{A}(Z)}(h)}{p_{\mathcal{A}(Z^{(i)})}(h)}\right].
\end{aligned}
$$

Note that the right-hand side equals $n\,\mathbb{E}_{Z\sim\mathcal{D}^n,\,z\sim\mathcal{D}}\,\mathrm{KL}(\mathcal{A}(Z)\|\mathcal{A}([Z_{-1},z]))$, and the left-hand side satisfies

$$
\mathbb{E}_{Z,Z'\sim\mathcal{D}^n}D_\infty(\mathcal{A}(Z)\|\mathcal{A}(Z'))\le\sup_{Z,Z'\in\mathcal{Z}^n}D_\infty(\mathcal{A}(Z)\|\mathcal{A}(Z'))=I_\infty(\mathcal{A},n).
$$

Collecting the three chains of inequalities above, we get that $\mathcal{A}$ is $k/n$-On-Average KL-private, as claimed. ∎

Proof (Proof of Lemma 14). Denote $p(\mathcal{A}(Z))=p(h\mid Z)$, so that $p(h,Z)=p(h\mid Z)\,p(Z)$. The marginal distribution of $h$ is therefore $p(h)=\int p(h,Z)\,dZ=\mathbb{E}_Z\,p(\mathcal{A}(Z))$. By definition,

$$
\begin{aligned}
I(\mathcal{A}(Z);Z)
&=\mathbb{E}_Z\,\mathbb{E}_{h\mid Z}\log\frac{p(h\mid Z)\,p(Z)}{p(h)\,p(Z)}\\
&=\mathbb{E}_Z\,\mathbb{E}_{h\mid Z}\log p(h\mid Z)-\mathbb{E}_Z\,\mathbb{E}_{h\mid Z}\log\mathbb{E}_{Z'}\,p(h\mid Z')\\
&=\mathbb{E}_Z\,\mathbb{E}_{h\mid Z}\log p(h\mid Z)-\mathbb{E}_{Z,Z'}\,\mathbb{E}_{h\mid Z}\log p(h\mid Z')
+\mathbb{E}_{Z,Z'}\,\mathbb{E}_{h\mid Z}\log p(h\mid Z')-\mathbb{E}_Z\,\mathbb{E}_{h\mid Z}\log\mathbb{E}_{Z'}\,p(h\mid Z')\\
&=\mathbb{E}_{Z,Z'}\,\mathbb{E}_{h\mid Z}\log\frac{p(h\mid Z)}{p(h\mid Z')}
+\mathbb{E}_{Z,Z'}\,\mathbb{E}_{h\mid Z}\log p(h\mid Z')-\mathbb{E}_Z\,\mathbb{E}_{h\mid Z}\log\mathbb{E}_{Z'}\,p(h\mid Z')\\
&=\mathbb{E}_{Z,Z'}\,D_{\mathrm{KL}}(\mathcal{A}(Z)\|\mathcal{A}(Z'))
+\mathbb{E}_Z\,\mathbb{E}_{h\mid Z}\Big[\mathbb{E}_{Z'}\log p(h\mid Z')-\log\mathbb{E}_{Z'}\,p(h\mid Z')\Big]\\
&\le\mathbb{E}_{Z,Z'}\,D_{\mathrm{KL}}(\mathcal{A}(Z)\|\mathcal{A}(Z')).
\end{aligned}
$$

The last line follows from Jensen's inequality: since $\log$ is concave, $\mathbb{E}_{Z'}\log p(h\mid Z')\le\log\mathbb{E}_{Z'}\,p(h\mid Z')$. ∎

Bibliography

[1] Akaike, H.: Likelihood of a model and information criteria. Journal of Econometrics 16(1), 3–14 (1981)
[2] Altun, Y., Smola, A.: Unifying divergence minimization and statistical inference via convex duality. In: Learning Theory, pp. 139–153. Springer (2006)
[3] Anderson, N.: “Anonymized” data really isn't and here's why not. http://arstechnica.com/tech-policy/2009/09/your-secrets-live-online-in-databases-of-ruin/ (2009)
[4] Barber, R.F., Duchi, J.C.: Privacy and statistical risk: Formalisms and minimax bounds. arXiv preprint arXiv:1412.4451 (2014)
[5] Bassily, R., Nissim, K., Smith, A., Steinke, T., Stemmer, U., Ullman, J.: Algorithmic stability for adaptive data analysis. arXiv preprint arXiv:1511.02513 (2015)
[6] Berger, A.L., Pietra, V.J.D., Pietra, S.A.D.: A maximum entropy approach to natural language processing. Computational Linguistics 22(1), 39–71 (1996)
[7] Bousquet, O., Elisseeff, A.: Stability and generalization. Journal of Machine Learning Research 2, 499–526 (2002)
[8] Duncan, G.T., Elliot, M., Salazar-González, J.J.: Statistical Confidentiality: Principle and Practice. Springer (2011)
[9] Duncan, G.T., Fienberg, S.E., Krishnan, R., Padman, R., Roehrig, S.F.: Disclosure limitation methods and information loss for tabular data. Confidentiality, Disclosure and Data Access: Theory and Practical Applications for Statistical Agencies pp. 135–166 (2001)
[10] Dwork, C.: Differential privacy. In: Automata, Languages and Programming, pp. 1–12. Springer (2006)
[11] Dwork, C., Feldman, V., Hardt, M., Pitassi, T., Reingold, O., Roth, A.: Generalization in adaptive data analysis and holdout reuse. In: Advances in Neural Information Processing Systems (NIPS-15). pp. 2341–2349 (2015)
[12] Dwork, C., Feldman, V., Hardt, M., Pitassi, T., Reingold, O., Roth, A.L.: Preserving statistical validity in adaptive data analysis. In: ACM on Symposium on Theory of Computing (STOC-15). pp. 117–126. ACM (2015)
[13] Dwork, C., Kenthapadi, K., McSherry, F., Mironov, I., Naor, M.: Our data, ourselves: Privacy via distributed noise generation. In: Advances in Cryptology-EUROCRYPT 2006, pp. 486–503. Springer (2006)
[14] Dwork, C., McSherry, F., Nissim, K., Smith, A.: Calibrating noise to sensitivity in private data analysis. In: Theory of Cryptography, pp. 265–284. Springer (2006)
[15] Ebadi, H., Sands, D., Schneider, G.: Differential privacy: Now it's getting personal. In: ACM Symposium on Principles of Programming Languages. pp. 69–81. ACM (2015)
[16] Fienberg, S.E., Rinaldo, A., Yang, X.: Differential privacy and the risk-utility tradeoff for multi-dimensional contingency tables. In: Privacy in Statistical Databases. pp. 187–199. Springer (2010)
[17] Hall, R., Rinaldo, A., Wasserman, L.: Random differential privacy. arXiv preprint arXiv:1112.2680 (2011)
[18] Hardt, M., Ullman, J.: Preventing false discovery in interactive data analysis is hard. In: IEEE Symposium on Foundations of Computer Science (FOCS-14). pp. 454–463. IEEE (2014)
[19] Hundepool, A., Domingo-Ferrer, J., Franconi, L., Giessing, S., Nordholt, E.S., Spicer, K., De Wolf, P.P.: Statistical Disclosure Control. John Wiley & Sons (2012)
[20] Jaynes, E.T.: Information theory and statistical mechanics. Physical Review 106(4), 620 (1957)
[21] Kearns, M., Ron, D.: Algorithmic stability and sanity-check bounds for leave-one-out cross-validation. Neural Computation 11(6), 1427–1453 (1999)
[22] Liu, Z., Wang, Y.X., Smola, A.: Fast differentially private matrix factorization. In: ACM Conference on Recommender Systems (RecSys'15). pp. 171–178. ACM (2015)
[23] McSherry, F., Talwar, K.: Mechanism design via differential privacy. In: IEEE Symposium on Foundations of Computer Science (FOCS-07). pp. 94–103 (2007)
[24] Mir, D.J.: Information-theoretic foundations of differential privacy. In: Foundations and Practice of Security, pp. 374–381. Springer (2013)
[25] Mosteller, F., Tukey, J.W.: Data analysis, including statistics (1968)
[26] Mukherjee, S., Niyogi, P., Poggio, T., Rifkin, R.: Learning theory: stability is sufficient for generalization and necessary and sufficient for consistency of empirical risk minimization. Advances in Computational Mathematics 25(1-3), 161–193 (2006)
[27] Narayanan, A., Shmatikov, V.: Robust de-anonymization of large sparse datasets. In: Security and Privacy, 2008. SP 2008. IEEE Symposium on. pp. 111–125. IEEE (2008)
[28] Russo, D., Zou, J.: Controlling bias in adaptive data analysis using information theory. In: International Conference on Artificial Intelligence and Statistics (AISTATS-16) (2016)
[29] Shalev-Shwartz, S., Shamir, O., Srebro, N., Sridharan, K.: Learnability, stability and uniform convergence. Journal of Machine Learning Research 11, 2635–2670 (2010)
[30] Steinke, T., Ullman, J.: Interactive fingerprinting codes and the hardness of preventing false discovery. arXiv preprint (2014)
[31] Tishby, N., Pereira, F.C., Bialek, W.: The information bottleneck method. arXiv preprint physics/0004057 (2000)
[32] Uhler, C., Slavković, A., Fienberg, S.E.: Privacy-preserving data sharing for genome-wide association studies. Journal of Privacy and Confidentiality 5(1), 137 (2013)
[33] Van Erven, T., Harremoës, P.: Rényi divergence and Kullback-Leibler divergence. Information Theory, IEEE Transactions on 60(7), 3797–3820 (2014)
[34] Wang, Y.X., Fienberg, S.E., Smola, A.: Privacy for free: Posterior sampling and stochastic gradient Monte Carlo. In: International Conference on Machine Learning (ICML-15) (2015)
[35] Wang, Y.X., Lei, J., Fienberg, S.E.: Learning with differential privacy: Stability, learnability and the sufficiency and necessity of ERM principle. Journal of Machine Learning Research (2016), to appear
[36] Yau, N.: Lessons from improperly anonymized taxi logs. http://flowingdata.com/2014/06/23/lessons-from-improperly-anonymized-taxi-logs/ (2014)
[37] Yu, F., Fienberg, S.E., Slavković, A.B., Uhler, C.: Scalable privacy-preserving data sharing methodology for genome-wide association studies. Journal of Biomedical Informatics 50, 133–141 (2014)
[38] Zhou, S., Lafferty, J., Wasserman, L.: Compressed and privacy-sensitive sparse regression. Information Theory, IEEE Transactions on 55(2), 846–866 (2009)
