Device and System Level Design Considerations for Analog-Non-Volatile-Memory Based Neuromorphic Architectures

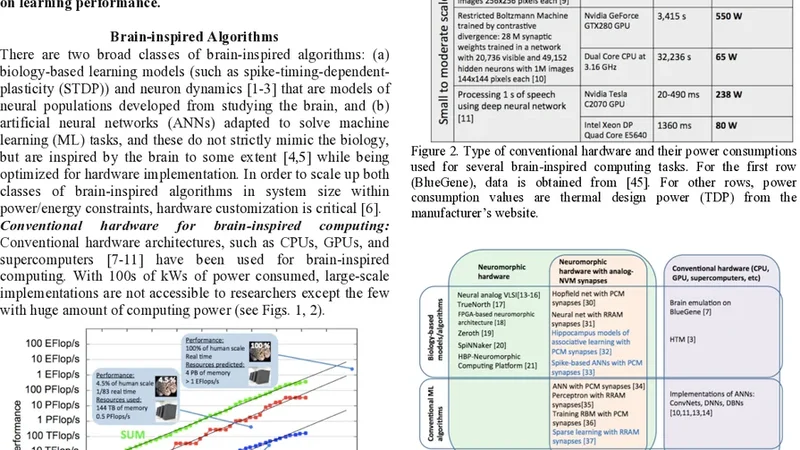

This paper gives an overview of recent progress in the brain-inspired computing field, with a focus on implementations that use emerging memories as electronic synapses. Design considerations and challenges, such as requirements and design targets for multilevel states, device variability, programming energy, array-level connectivity, fan-in/fan-out, wire energy, and IR drop, are presented. Wires are increasingly important in design decisions, especially for large systems, and cycle-to-cycle variations have a large impact on learning performance.

💡 Research Summary

The paper provides a comprehensive review of the state‑of‑the‑art in brain‑inspired computing with a focus on using emerging analog non‑volatile memory (NVM) devices as electronic synapses. It begins by motivating neuromorphic hardware: the brain achieves massive parallelism, high connectivity, and ultra‑low energy consumption by co‑locating memory and computation, a property that conventional digital CMOS cannot replicate efficiently because of the von Neumann bottleneck. Emerging memories such as resistive‑RAM (RRAM), phase‑change memory (PCM), magnetic‑RAM (MRAM) and ferroelectric‑FET (FeFET) are highlighted because they can store conductance in an analog, continuously tunable fashion, enabling multi‑level weight representation directly in the cross‑bar array.
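To make the idea of co-locating memory and computation concrete, the following is a minimal sketch of an idealized cross-bar read. It assumes perfectly linear devices and zero wire resistance, so each column current is simply the sum of row voltage times cell conductance (Ohm's law plus Kirchhoff's current law). The function name and the numeric values are illustrative and do not come from the paper.

```python
def crossbar_currents(voltages, conductances):
    """Column currents of an ideal cross-bar: I_j = sum_i V_i * G_ij.

    voltages:     list of row voltages in volts (length n_rows)
    conductances: n_rows x n_cols nested list of conductances in siemens
    Idealized: no wire resistance, no device non-linearity (assumptions).
    """
    n_rows = len(conductances)
    n_cols = len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("need one voltage per row")
    return [
        sum(voltages[i] * conductances[i][j] for i in range(n_rows))
        for j in range(n_cols)
    ]


# Illustrative 2 x 2 array: conductances in siemens, read voltages in volts.
G = [[1e-6, 2e-6],
     [3e-6, 4e-6]]
V = [0.1, 0.2]
I = crossbar_currents(V, G)
print(I)  # column currents in amperes
```

The stored conductances act as the weight matrix, and the multiply-accumulate happens in the array itself rather than in a separate processing unit.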

The authors then delineate the design targets at the device level. To support realistic neural networks, each synapse must support at least four bits of resolution (16 distinct conductance levels). However, real devices exhibit strong non‑linearity, temperature dependence, and process‑induced spread, which make the spacing between levels uneven. Precise programming pulses (controlled voltage amplitude and width) together with closed‑loop feedback are required to achieve repeatable multi‑level states. Variability is split into two categories: (1) inter‑device (static) variability arising from fabrication tolerances, which can be mitigated by initial calibration and per‑cell trimming; and (2) cycle‑to‑cycle (dynamic) variability caused by stochastic filament formation or phase‑change nucleation, which directly perturbs weight updates during learning. Experimental data in the paper show that when cycle‑to‑cycle variation stays below ~5 %, standard stochastic gradient descent converges with negligible loss penalty, whereas variations above ~10 % cause a sharp increase in training error and slow convergence. Consequently, the authors argue that either the hardware must be engineered to keep dynamic variation low, or learning algorithms must be made robust (e.g., by weight clipping, regularization, or adaptive learning rates).
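The closed-loop programming described above can be sketched as a simple write-verify loop. This is a toy model, not the authors' scheme: it assumes 16 evenly spaced target levels, a fixed nominal conductance change per pulse, and cycle-to-cycle variation modeled as a Gaussian scaling of each pulse's effect. All names (`level_targets`, `program_closed_loop`) and parameter values are hypothetical.

```python
import random


def level_targets(g_min, g_max, bits=4):
    """Evenly spaced target conductances for 2**bits levels (an idealization)."""
    n_levels = 2 ** bits
    spacing = (g_max - g_min) / (n_levels - 1)
    return [g_min + k * spacing for k in range(n_levels)]


def program_closed_loop(target, g_start, step, sigma_c2c, tolerance, rng,
                        max_pulses=200):
    """Write-verify loop: pulse, read back, repeat until within tolerance.

    Each pulse nominally changes the conductance by +/- step; the actual
    change is scaled by a Gaussian factor with relative spread sigma_c2c
    (assumed model of cycle-to-cycle variation).
    Returns (final conductance, number of pulses used).
    """
    g = g_start
    for pulse in range(max_pulses):
        error = target - g          # verify step: compare read-back to target
        if abs(error) <= tolerance:
            return g, pulse
        nominal = step if error > 0 else -step
        g += nominal * rng.gauss(1.0, sigma_c2c)
    return g, max_pulses


rng = random.Random(0)              # fixed seed for reproducibility
levels = level_targets(1e-6, 16e-6, bits=4)
target = levels[10]
tolerance = 0.25e-6                 # a quarter of the level spacing (assumed)
g_final, pulses = program_closed_loop(
    target, g_start=1e-6, step=0.2e-6, sigma_c2c=0.05,
    tolerance=tolerance, rng=rng)
print(f"reached {g_final:.3e} S after {pulses} pulses")
```

Because the loop reads back after every pulse, random pulse-to-pulse deviations are corrected at the cost of extra pulses. During on-line learning such verification is usually too expensive, which is why dynamic variation perturbs weight updates directly.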

Programming energy is examined next. By scaling the programming voltage and limiting the current, a single‑bit update can be performed with 5–10 nJ, which is comparable to biological synaptic events. However, achieving finer granularity for multi‑bit updates requires tighter voltage steps, leading to a non‑linear increase in energy. The paper recommends a pragmatic compromise: target a minimum of 4‑bit resolution for most inference‑oriented networks, and supplement lower‑resolution devices with digital correction logic when higher precision is needed.
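As a rough illustration of how voltage scaling and current limiting affect programming energy, the sketch below treats each programming pulse as rectangular, so its energy is voltage times current times pulse width. The helper names and all numeric values are assumptions picked only to land in the nanojoule range quoted above; they are not device data from the paper.

```python
def pulse_energy(voltage_v, current_a, width_s):
    """Energy in joules of one rectangular pulse: E = V * I * t."""
    return voltage_v * current_a * width_s


def update_energy(voltage_v, current_a, width_s, n_pulses):
    """Energy of an update that needs n_pulses identical pulses."""
    return n_pulses * pulse_energy(voltage_v, current_a, width_s)


# Illustrative values only: 2 V pulse, 50 us wide.
e_100ua = pulse_energy(2.0, 100e-6, 50e-6)   # current limited to 100 uA
e_50ua = pulse_energy(2.0, 50e-6, 50e-6)     # current limit halved
e_multi = update_energy(2.0, 50e-6, 50e-6, n_pulses=4)

print(f"{e_100ua * 1e9:.1f} nJ, {e_50ua * 1e9:.1f} nJ, {e_multi * 1e9:.1f} nJ")
```

In this simple model, halving the current limit halves the energy per pulse, while any scheme that needs several pulses to hit a finer level multiplies the cost by the pulse count.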

Array‑level considerations dominate the discussion of scalability. The canonical cross‑bar topology is analyzed for fan‑in/fan‑out limits, line resistance, and parasitic capacitance. Using a 64 × 64 array as a reference, the authors calculate that supporting up to 128 inputs per neuron requires line resistance below 1 Ω and line capacitance under 0.2 pF to keep IR‑drop and RC‑delay within acceptable bounds. As the array grows, wire energy (the energy spent charging and discharging interconnects) can account for 30–40 % of total system energy, eclipsing the intrinsic device programming cost. IR‑drop not only reduces the effective read voltage but also skews the programmed conductance, degrading inference accuracy. To combat these effects, the paper proposes voltage‑level‑restoration circuits, distributed power‑delivery networks, and three‑dimensional (3‑D) stacked cross‑bars that shorten wire lengths.
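A first-order feel for IR drop and wire energy can be had from the sketch below. It models one row line as a chain of equal wire segments, with every cell drawing the same fixed current. That is a simplifying assumption which overestimates the drop, since real cell currents shrink as the local voltage falls. Wire energy is estimated as C·V² per full charge/discharge cycle. Treating the 1 Ω figure as the total resistance of a 64-cell line, as well as the 10 µA cell current and 1 V swing, are assumptions made for illustration.

```python
def ir_drop_profile(n_cells, r_segment, i_cell):
    """Cumulative voltage drop (V) at each cell along a driven line.

    Segment k (counted from the driver) carries the current of all cells
    at or beyond position k, so the drop grows fastest near the driver.
    Assumes every cell draws the same constant current i_cell.
    """
    drops = []
    drop = 0.0
    for k in range(1, n_cells + 1):
        segment_current = (n_cells - k + 1) * i_cell
        drop += r_segment * segment_current
        drops.append(drop)
    return drops


def wire_switching_energy(c_line, v_swing):
    """Energy (J) drawn from the supply to charge and discharge a line once."""
    return c_line * v_swing ** 2


n = 64
drops = ir_drop_profile(n_cells=n, r_segment=1.0 / n, i_cell=10e-6)
e_wire = wire_switching_energy(c_line=0.2e-12, v_swing=1.0)

print(f"far-end IR drop: {drops[-1] * 1e3:.3f} mV")
print(f"wire energy per cycle: {e_wire * 1e12:.2f} pJ")
```

In this model the far-end drop scales roughly with the square of the number of cells on the line, which is one way to see why wire parasitics become more restrictive as arrays grow.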

System‑level integration is presented as a co‑design problem. Device‑level variability can be compensated algorithmically (by modeling noise in the loss function or by using noise‑aware training), while circuit techniques (temperature regulation, current‑mirroring networks, on‑chip calibration) aim to suppress variability physically. Power‑management strategies, such as dynamic voltage scaling and event‑driven activation of peripheral circuits, are essential because wire energy dominates at large scales. The authors stress that the traditional memory design flow must be extended to include neuromorphic metrics like fan‑in, spike‑based activity, and learning‑induced weight drift.
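One simple way to explore algorithmic compensation is to inject device-like noise into a simulated training loop. The sketch below is a toy under stated assumptions rather than the paper's method: it fits a single weight by stochastic gradient descent, scales every weight update by a Gaussian factor to mimic cycle-to-cycle variation, and clips the weight to a bounded range as a stand-in for the finite conductance window. The function name, the noise model, and all parameter values are hypothetical.

```python
import random


def train_with_noisy_updates(samples, lr, sigma_c2c, w_min, w_max,
                             epochs, seed=0):
    """SGD on y = w * x with noisy, clipped weight updates.

    Each ideal update is multiplied by a Gaussian factor (mean 1, relative
    spread sigma_c2c) to imitate stochastic device programming, then the
    weight is clipped to [w_min, w_max].
    """
    rng = random.Random(seed)
    w = 0.0
    for _ in range(epochs):
        for x, y in samples:
            grad = (w * x - y) * x               # d/dw of 0.5 * (w*x - y)**2
            ideal_delta = -lr * grad
            actual_delta = ideal_delta * rng.gauss(1.0, sigma_c2c)
            w = min(w_max, max(w_min, w + actual_delta))
    return w


# Noise-free toy data generated with a true weight of 2.0.
samples = [(x, 2.0 * x) for x in (-1.0, -0.5, 0.5, 1.0)]
w_final = train_with_noisy_updates(
    samples, lr=0.1, sigma_c2c=0.1, w_min=-4.0, w_max=4.0, epochs=200)
print(f"learned weight: {w_final:.6f}")
```

This toy problem is far too easy to reproduce the variation thresholds reported in the paper. Its purpose is only to show where a device noise model and a weight clip would sit inside a training loop, so that the same structure can be applied to a realistic network and noise model.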

In conclusion, the paper asserts that analog NVM‑based neuromorphic hardware is still in its infancy but holds great promise for achieving brain‑scale density and energy efficiency. The key challenges (multi‑level programming accuracy, device variability, wire energy, and IR‑drop) must be addressed through a tight hardware‑software‑algorithm co‑design loop. Future research directions include 3‑D integration of cross‑bars with on‑chip thermal control, development of learning algorithms robust to stochastic weight updates, and the creation of comprehensive system‑level simulation frameworks that capture device physics, circuit parasitics, and algorithmic behavior in a unified environment.