Dynamic Clustering of Histogram Data Based on Adaptive Squared Wasserstein Distances

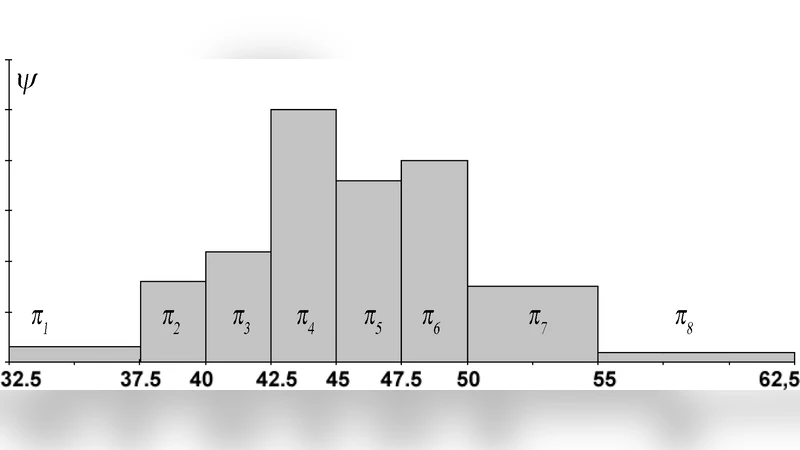

This paper deals with clustering methods based on adaptive distances for histogram data using a dynamic clustering algorithm. Histogram data describe individuals in terms of empirical distributions. Such data can be considered complex descriptions of phenomena observed on complex objects: images, groups of individuals, spatially or temporally varying data, results of queries, environmental data, and so on. The Wasserstein distance is used to compare two histograms. The squared Wasserstein distance between histograms can be decomposed into two components: the first related to the means, and the second to the internal dispersions (standard deviation, skewness, kurtosis, and so on) of the histograms. To cluster sets of histogram data, we propose a Dynamic Clustering Algorithm based on adaptive squared Wasserstein distances, a k-means-like algorithm that partitions a set of individuals into $K$ classes fixed a priori. The main aim of this research is to provide a tool for clustering histograms that emphasizes the different contributions of the histogram variables, and of their components, to the definition of the clusters. We demonstrate that this can be achieved using adaptive distances. Two kinds of adaptive distances are considered: the first takes into account the variability of each component of each descriptor over the whole set of individuals; the second takes into account the variability of each component of each descriptor within each cluster. We provide tools for interpreting the obtained partition, based on an extension of the classical measures (indexes) to the use of adaptive distances in the clustering criterion function. Applications to synthetic and real-world data corroborate the proposed procedure.

💡 Research Summary

The paper addresses the problem of clustering objects that are described by histograms, i.e., empirical probability distributions. Unlike traditional approaches that treat histograms as high-dimensional frequency vectors, the authors adopt the L2-Wasserstein (Mallows) distance, which naturally respects the geometry of distributions. A key property of the squared Wasserstein distance is its exact decomposition into a location component (the squared Euclidean distance between the means) and a dispersion component (the squared Wasserstein distance between the centered histograms). By exploiting this decomposition, the authors propose adaptive distance measures in which separate weights are attached to each variable and to each of the two components.
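In terms of quantile functions $F^{-1}$ and $G^{-1}$ with means $\mu_f$ and $\mu_g$, the decomposition reads $d_W^2(f,g)=\int_0^1 (F^{-1}(t)-G^{-1}(t))^2\,dt=(\mu_f-\mu_g)^2+\int_0^1 \big((F^{-1}(t)-\mu_f)-(G^{-1}(t)-\mu_g)\big)^2\,dt$. The sketch below checks this numerically. It assumes each histogram is given as bin edges plus strictly positive bin frequencies, with mass spread uniformly inside each bin (so the quantile function is piecewise linear), and it approximates the integral on a midpoint grid of probability levels instead of using a closed form. The helper names `quantile_function` and `wasserstein2_sq` are hypothetical.

```python
import numpy as np


def quantile_function(edges, freqs, t):
    """Quantile function of a histogram with uniform mass inside each bin.

    edges: increasing bin boundaries, length n_bins + 1
    freqs: bin frequencies, length n_bins (normalized here)
    t:     probability levels in (0, 1)
    """
    edges = np.asarray(edges, dtype=float)
    freqs = np.asarray(freqs, dtype=float)
    if np.any(freqs <= 0):
        raise ValueError("this sketch assumes every bin has positive frequency")
    # The CDF is piecewise linear between bin edges, so its inverse is too.
    cdf = np.concatenate([[0.0], np.cumsum(freqs / freqs.sum())])
    return np.interp(t, cdf, edges)


def wasserstein2_sq(h1, h2, n_levels=10_000):
    """Squared L2-Wasserstein distance split into location and dispersion parts."""
    t = (np.arange(n_levels) + 0.5) / n_levels      # midpoint grid on (0, 1)
    q1, q2 = quantile_function(*h1, t), quantile_function(*h2, t)
    m1, m2 = q1.mean(), q2.mean()                   # means of the two histograms
    total = np.mean((q1 - q2) ** 2)
    location = (m1 - m2) ** 2
    dispersion = np.mean(((q1 - m1) - (q2 - m2)) ** 2)
    return total, location, dispersion


if __name__ == "__main__":
    h1 = ([0, 1, 2, 3], [0.2, 0.5, 0.3])
    h2 = ([1, 2, 4], [0.6, 0.4])
    total, loc, disp = wasserstein2_sq(h1, h2)
    print(total, loc, disp, loc + disp)
```

On the grid, the location term is the squared mean of $Q_1(t)-Q_2(t)$ and the dispersion term is its variance over $t$, so the two always add up to the total; a rigid shift of one histogram changes only the location term.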

Two adaptive schemes are introduced. In the first, a single set of weights is estimated globally from the whole data set; these weights reflect the overall variability of each variable/component and down-weight highly variable features. In the second scheme, weights are estimated locally for each cluster, allowing the algorithm to capture clusters that differ not only in location but also in the relative importance of variables. Both schemes are embedded in a Dynamic Clustering (DC) framework, which is a generalization of k-means. The algorithm iterates three steps: (1) assignment of each object to the nearest prototype using the current weighted squared Wasserstein distance, (2) recomputation of each prototype as the Wasserstein barycenter of its cluster (the histogram whose quantile function is the average of the members' quantile functions), and (3) update of the weights based on within-cluster variability (for the local scheme) or on total variability (for the global scheme). The process repeats until the objective function, i.e. the sum of weighted squared Wasserstein distances, stabilizes.
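A compact sketch of the three steps follows, starting from an initial partition. It assumes every object is already represented by the quantile functions of its $p$ histogram variables on a common grid of $m$ probability levels (an array of shape `(n, p, m)`), and it normalizes the weights with the product-to-one constraint often used for adaptive distances in dynamic clustering, so each weight equals the geometric mean of the dispersions divided by its own dispersion. Computing the global weights from the within-cluster dispersion pooled over all clusters is likewise an assumption, not necessarily the paper's exact update, and all names (`components`, `product_one_weights`, `dynamic_clustering`, `normal_quantiles`) are hypothetical.

```python
from statistics import NormalDist

import numpy as np


def components(X, G):
    """Location and dispersion parts of the squared Wasserstein distance.

    X: (n, p, m) quantile functions of n objects on p variables at m levels.
    G: (K, p, m) prototype quantile functions.
    Returns (n, K, p, 2): [..., 0] is location, [..., 1] is dispersion.
    """
    mx, mg = X.mean(axis=2), G.mean(axis=2)             # means, (n, p) and (K, p)
    loc = (mx[:, None, :] - mg[None, :, :]) ** 2
    cx, cg = X - mx[..., None], G - mg[..., None]       # centered quantile functions
    disp = ((cx[:, None] - cg[None]) ** 2).mean(axis=3)
    return np.stack([loc, disp], axis=-1)


def product_one_weights(D, eps=1e-12):
    """Minimize sum(w * D) subject to prod(w) == 1 over the last two axes."""
    D = D + eps                                         # guard against zero dispersion
    geo = np.exp(np.log(D).mean(axis=(-2, -1), keepdims=True))
    return geo / D


def dynamic_clustering(X, K, scheme="global", init=None, max_iter=100, seed=0):
    """Hypothetical DC loop with global or per-cluster ('local') adaptive weights."""
    n = X.shape[0]
    rng = np.random.default_rng(seed)
    # Initial partition; the random default gives every cluster at least one object.
    labels = rng.permutation(np.arange(n) % K) if init is None else np.array(init)
    G = np.zeros((K,) + X.shape[1:])
    for _ in range(max_iter):
        # Representation: the 1-D Wasserstein barycenter averages quantile functions.
        for k in range(K):
            if np.any(labels == k):                      # keep previous prototype if empty
                G[k] = X[labels == k].mean(axis=0)
        comp = components(X, G)                          # (n, K, p, 2)
        within = np.einsum("nk,nkpc->kpc", np.eye(K)[labels], comp)
        # Weighting: one weight set shared by all clusters, or one set per cluster.
        if scheme == "global":
            w = np.broadcast_to(product_one_weights(within.sum(axis=0)), within.shape)
        else:
            w = product_one_weights(within)
        # Allocation: nearest prototype under the weighted squared distance.
        dist = np.einsum("kpc,nkpc->nk", w, comp)
        new_labels = dist.argmin(axis=1)
        if np.array_equal(new_labels, labels):
            break
        labels = new_labels
    return labels, w, dist[np.arange(n), labels].sum()


def normal_quantiles(mean, std, t):
    """Quantile function of N(mean, std**2) at probability levels t (demo data only)."""
    z = np.array([NormalDist().inv_cdf(v) for v in t])
    return mean + std * z


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    t = (np.arange(200) + 0.5) / 200                     # common probability grid
    truth = np.repeat([0, 1], 20)
    X = np.empty((40, 2, t.size))
    for i, k in enumerate(truth):
        # Variable 0 separates the two groups; variable 1 is noise shared by both.
        X[i, 0] = normal_quantiles(10.0 * k + rng.normal(0, 0.5), rng.uniform(0.8, 1.2), t)
        X[i, 1] = normal_quantiles(rng.normal(0, 3.0), rng.uniform(0.8, 1.2), t)
    labels, w, crit = dynamic_clustering(X, K=2, scheme="global")
    print(labels, w[0], crit)
```

Under the product constraint, a variable/component with large within-cluster dispersion gets a small weight, so once the two groups are separated the noisy variable 1 receives a much smaller location weight than variable 0. Random initial partitions can still lead to local optima, so several starts with the lowest final criterion kept would be the usual safeguard.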

The authors also extend the classical inertia decomposition (total = within + between) to the adaptive Wasserstein setting. By incorporating the weights into the definitions of total (T), within‑cluster (W) and between‑cluster (B) inertia, they obtain a Huygens‑type decomposition that holds exactly. This enables the computation of variable‑wise contribution ratios, providing an interpretable diagnostic of how each variable and each component (mean vs. dispersion) drives the clustering.
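For the global-weight case the Huygens decomposition can be verified component by component: both the location part (squared differences of means) and the dispersion part (squared $L_2$ distances between centered quantile functions) are squared Euclidean norms, and a cluster barycenter is the average of its members' quantile functions, so $T = W + B$ holds for every variable and component separately and therefore for their weighted sum. The sketch below reuses the `(n, p, m)` quantile-array convention, takes a fixed weight array of shape `(p, 2)`, and uses hypothetical names; how the paper handles cluster-specific weights inside $T$ and $B$ is not reproduced here.

```python
import numpy as np


def split_components(A, B):
    """Location and dispersion parts of d_W^2 between quantile arrays (..., m)."""
    ma, mb = A.mean(axis=-1), B.mean(axis=-1)
    loc = (ma - mb) ** 2
    disp = (((A - ma[..., None]) - (B - mb[..., None])) ** 2).mean(axis=-1)
    return np.stack([loc, disp], axis=-1)


def inertia_decomposition(X, labels, w):
    """Weighted total, within and between inertia per variable and component.

    X: (n, p, m) quantile functions; labels: (n,) cluster ids; w: (p, 2) weights.
    Returns three (p, 2) arrays T, W, B.
    """
    grand = X.mean(axis=0)                                   # overall barycenter (p, m)
    T = (w * split_components(X, grand[None])).sum(axis=0)
    W = np.zeros_like(T)
    B = np.zeros_like(T)
    for k in np.unique(labels):
        Xk = X[labels == k]
        gk = Xk.mean(axis=0)                                 # cluster barycenter (p, m)
        W += (w * split_components(Xk, gk[None])).sum(axis=0)
        B += len(Xk) * w * split_components(gk, grand)
    return T, W, B
```

The ratios `B / T` (per variable and component) and `B.sum() / T.sum()` (overall) can then serve as between-to-total inertia indexes of the kind the decomposition is meant to support.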

Experimental validation proceeds in two parts. Synthetic data are generated with known differences in variability across variables. The global‑weight version correctly identifies the less informative variables (assigning them low weights) and improves clustering accuracy compared to a non‑adaptive Wasserstein k‑means. The local‑weight version further adapts to clusters that have distinct variable relevance, yielding higher Adjusted Rand Index scores. Real‑world applications include image colour histograms, environmental measurement histograms, and financial transaction flow histograms. In these cases the adaptive method produces more coherent clusters, avoids merging objects that differ mainly in a highly variable feature, and supplies a clear ranking of variable importance that can be inspected by analysts.

In summary, the paper contributes a principled method for histogram clustering that (i) respects the distributional nature of the data via the Wasserstein metric, (ii) decomposes the distance into interpretable components, (iii) learns adaptive weights either globally or per cluster, and (iv) provides extended inertia‑based tools for result interpretation. The approach is shown to outperform standard Euclidean‑based k‑means on both synthetic and real datasets, and it opens avenues for further research such as incorporating full covariance information for multivariate distributions, scalable approximations for large‑scale data, and hybrid supervised‑unsupervised frameworks.
