Image Colorization Using a Deep Convolutional Neural Network

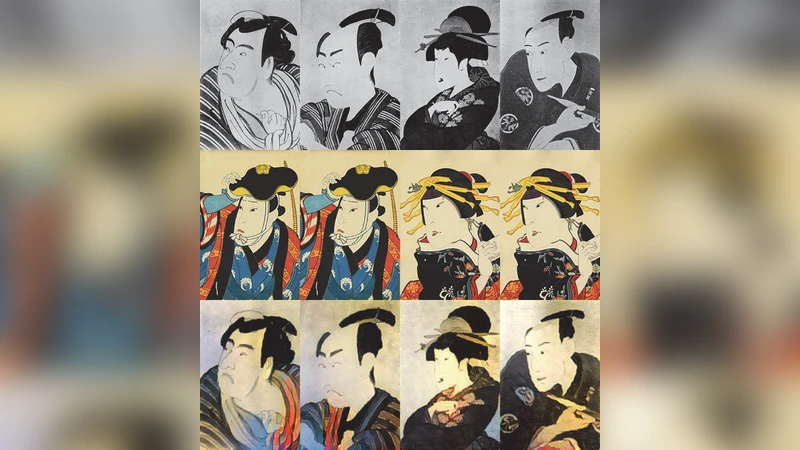

In this paper, we present a novel approach that uses deep learning techniques for colorizing grayscale images. By utilizing a pre-trained convolutional neural network originally designed for image classification, we are able to separate the content and style of different images and recombine them into a single image. We then propose a method that adds colors to a grayscale image by combining its content with the style of a color image that is semantically similar to the grayscale one. As an application, to our knowledge the first of its kind, we use the proposed method to colorize images of ukiyo-e, a genre of Japanese painting, and obtain interesting results, showing the potential of this method in the growing field of computer-assisted art.

💡 Research Summary

The paper introduces a novel deep‑learning framework for automatically colorizing grayscale images by leveraging a pre‑trained convolutional neural network originally built for image classification. The authors repurpose the network as a dual‑purpose feature extractor: intermediate activations from a mid‑level layer (e.g., conv4_2) serve as a “content” representation of the grayscale input, while Gram matrices computed from multiple layers of a color reference image capture its “style,” i.e., the distribution of colors and textures. By defining a loss that combines a content term (L2 distance between the generated image’s content features and those of the grayscale input), a style term (L2 distance between the generated image’s style features and those of the reference), and a total‑variation regularizer, the method iteratively optimizes a random color map until the generated image simultaneously preserves the original structure and adopts the color palette of the reference.
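The loss described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the authors' implementation: feature maps are assumed to be arrays of shape `(C, H, W)` already extracted from the network, the function names are hypothetical, and the Gram normalization and the weights `alpha`, `beta`, and `gamma` are placeholder choices that do not come from the paper.

```python
import numpy as np

def gram_matrix(features):
    """Channel-by-channel inner products of a (C, H, W) feature map."""
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    # Dividing by the number of elements is an assumed normalization.
    return flat @ flat.T / (c * h * w)

def content_loss(gen_feat, content_feat):
    """Mean squared difference between two feature maps of equal shape."""
    return float(np.mean((gen_feat - content_feat) ** 2))

def style_loss(gen_feats, style_feats):
    """Sum over layers of the mean squared difference between Gram matrices."""
    return float(sum(
        np.mean((gram_matrix(g) - gram_matrix(s)) ** 2)
        for g, s in zip(gen_feats, style_feats)
    ))

def total_variation(image):
    """Squared differences between neighboring pixels of an (H, W, 3) image."""
    dh = image[1:, :, :] - image[:-1, :, :]
    dw = image[:, 1:, :] - image[:, :-1, :]
    return float(np.sum(dh ** 2) + np.sum(dw ** 2))

def total_loss(gen_content, target_content, gen_styles, target_styles, image,
               alpha=1.0, beta=1e3, gamma=1e-4):
    """Weighted sum of the three terms; the weights are illustrative only."""
    return (alpha * content_loss(gen_content, target_content)
            + beta * style_loss(gen_styles, target_styles)
            + gamma * total_variation(image))
```

Because a Gram matrix has shape `(C, C)` regardless of spatial size, the style term does not require the reference image to have the same resolution as the grayscale input. In an actual pipeline, the gradient of `total_loss` with respect to the image pixels would drive the iterative optimization.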

A critical component of the approach is the selection of a semantically similar reference image. The authors employ image‑search techniques or tag‑based cosine similarity to match the grayscale image with a color counterpart that shares scene type, object categories, or overall composition. Experiments are conducted on standard grayscale‑to‑color benchmarks as well as on ukiyo‑e, a genre of Japanese painting. Qualitative results show that when the reference is well‑matched, the colorized outputs exhibit natural hue transitions and retain the artistic mood of the original works, whereas mismatched references lead to color bleeding or unrealistic tones.
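As a rough illustration of tag‑based matching, the sketch below ranks candidate reference images by the cosine similarity of their tag counts. The function names, file names, and tags are hypothetical, and treating tags as simple bag‑of‑words count vectors is an assumption; the summary does not specify how tags are represented.

```python
import math
from collections import Counter

def tag_cosine(tags_a, tags_b):
    """Cosine similarity between two tag lists treated as count vectors."""
    a, b = Counter(tags_a), Counter(tags_b)
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty tag list matches nothing
    return dot / (norm_a * norm_b)

def pick_reference(query_tags, candidates):
    """Return the name of the candidate whose tags best match the query.

    `candidates` maps an image name to its list of tags.
    """
    return max(candidates, key=lambda name: tag_cosine(query_tags, candidates[name]))

# Hypothetical example data.
query = ["woman", "kimono", "river"]
candidates = {
    "portrait.jpg": ["woman", "kimono", "garden"],
    "cityscape.jpg": ["building", "street", "car"],
}
best = pick_reference(query, candidates)
```

Here `best` is `"portrait.jpg"`, since it shares two of three tags with the query while the other candidate shares none.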

The methodology has several advantages: it avoids the need for large paired color‑grayscale datasets, it can be applied to any domain where a suitable reference exists, and it provides an intuitive control knob, since changing the reference image directly alters the color palette. However, the reliance on reference selection introduces variability, and the optimization‑based pipeline (using L‑BFGS or Adam) is computationally intensive, limiting real‑time applicability.

Future work suggested includes developing an end‑to‑end trainable network that jointly learns content‑style separation and color synthesis, automating reference retrieval with a learned semantic matcher, incorporating user‑guided color constraints, and extending evaluation to a broader range of cultural heritage artifacts. Overall, the paper demonstrates that style‑transfer techniques can be effectively repurposed for image colorization, opening new possibilities for digital restoration, artistic creation, and assisted photography.
