Co-Localization of Audio Sources in Images Using Binaural Features and Locally-Linear Regression

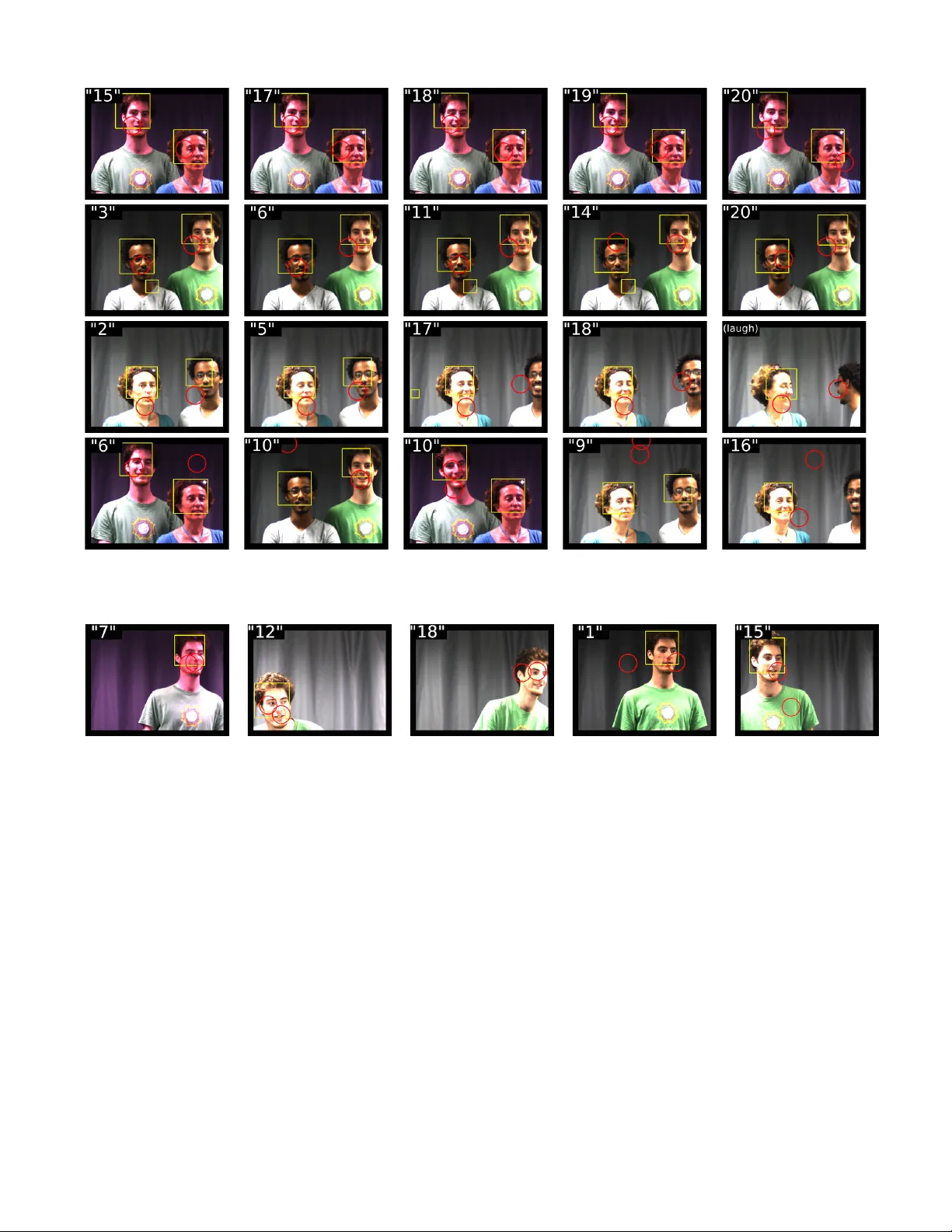

This paper addresses the problem of localizing audio sources using binaural measurements. We propose a supervised formulation that simultaneously localizes multiple sources at different locations. The approach is intrinsically efficient because, cont…

Authors: Antoine Deleforge, Radu Horaud, Yoav Schechner