Safe Probability

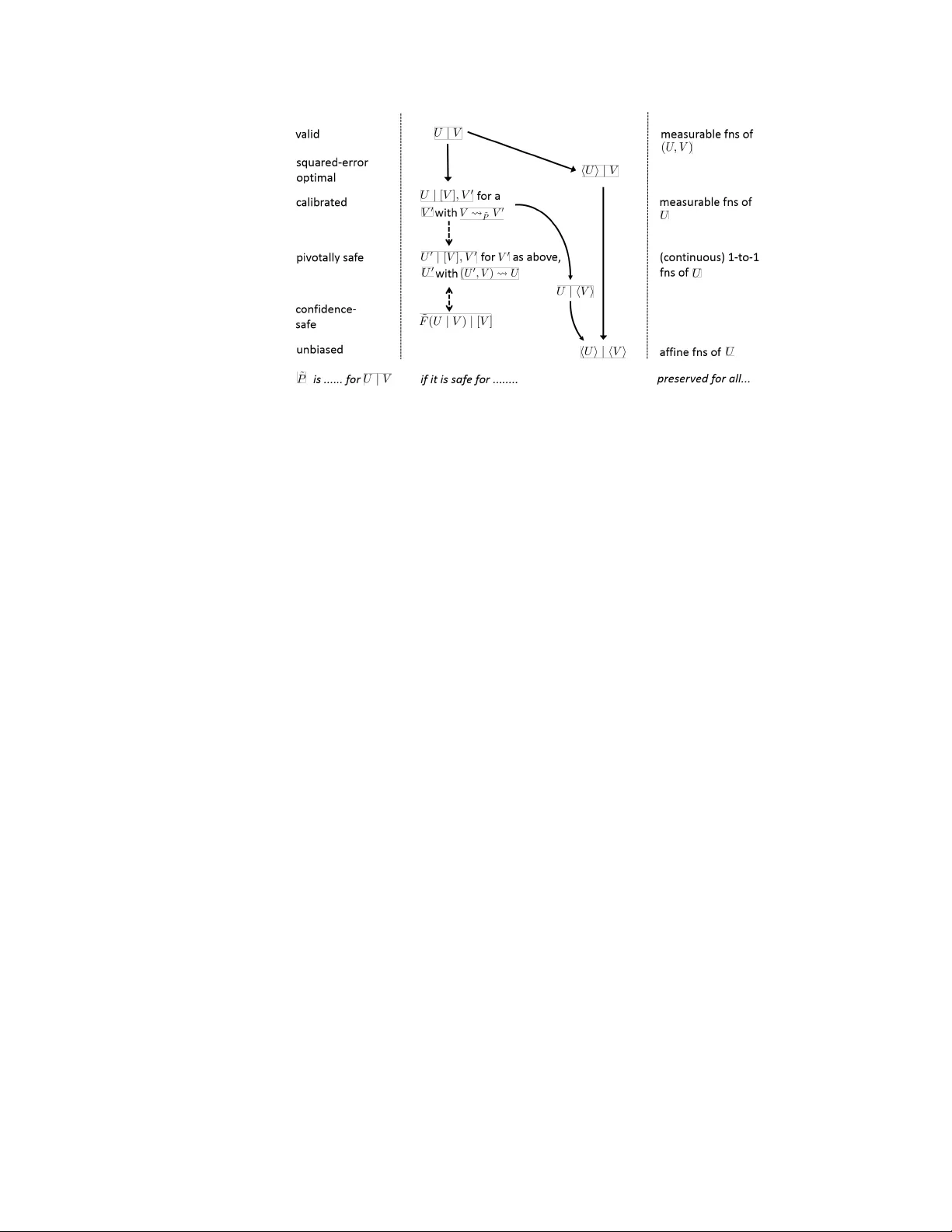

We formalize the idea of probability distributions that lead to reliable predictions about some, but not all aspects of a domain. The resulting notion of `safety' provides a fresh perspective on foundational issues in statistics, providing a middle g…

Authors: Peter Gr"unwald