Partial Recovery Bounds for the Sparse Stochastic Block Model

In this paper, we study the information-theoretic limits of community detection in the symmetric two-community stochastic block model, with intra-community and inter-community edge probabilities $\frac{a}{n}$ and $\frac{b}{n}$ respectively. We consid…

Authors: Jonathan Scarlett, Volkan Cevher

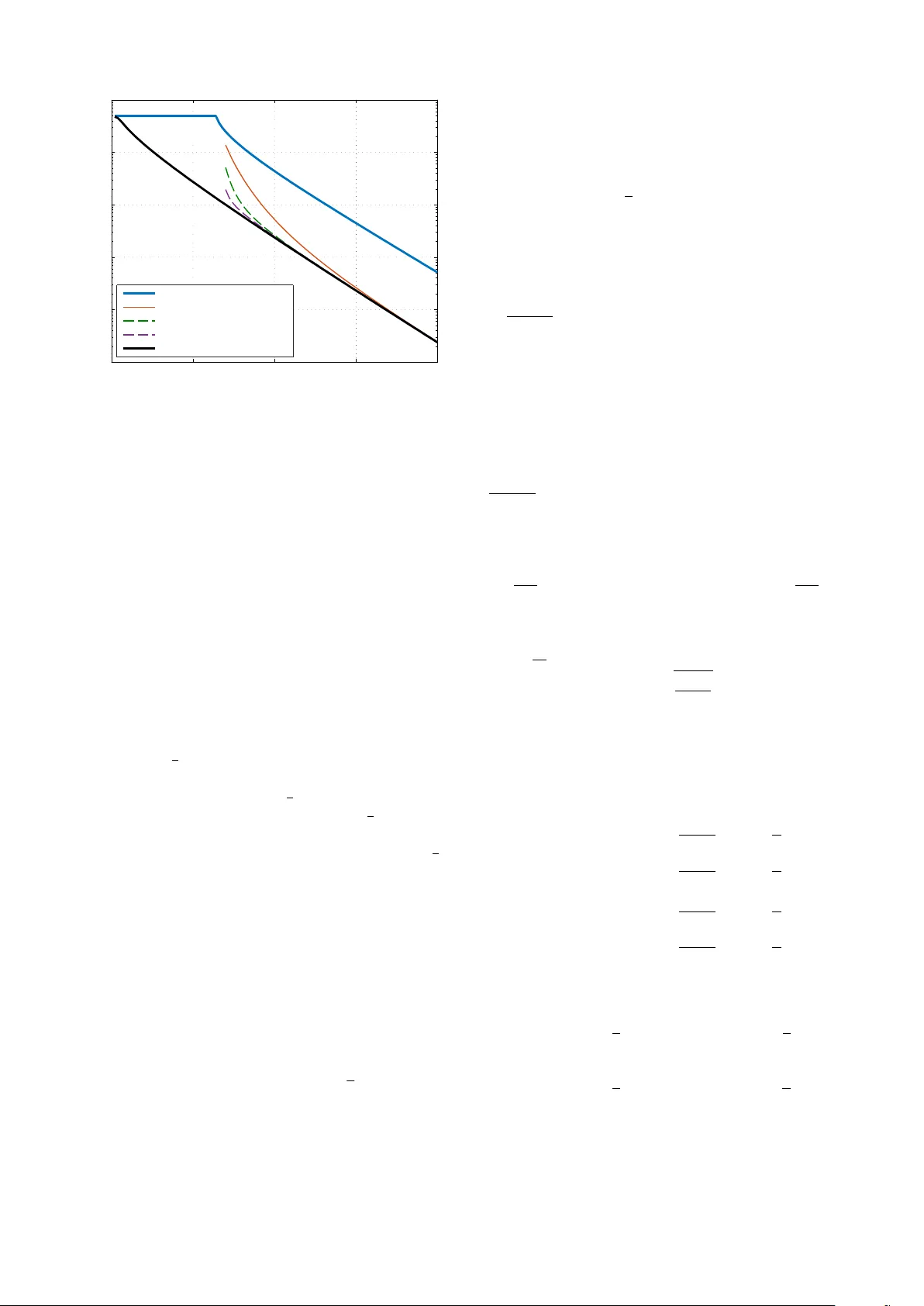

Jonathan Scarlett and Volkan Cevher
Laboratory for Information and Inference Systems (LIONS), École Polytechnique Fédérale de Lausanne (EPFL)
Email: {jonathan.scarlett,volkan.cevher}@epfl.ch

This work was supported in part by the European Commission under Grant ERC Future Proof, SNF 200021-146750 and SNF CRSII2-147633, and 'EPFL Fellows' Horizon2020 grant 665667.

Abstract: In this paper, we study the information-theoretic limits of community detection in the symmetric two-community stochastic block model, with intra-community and inter-community edge probabilities $\frac{a}{n}$ and $\frac{b}{n}$ respectively. We consider the sparse setting, in which $a$ and $b$ do not scale with $n$, and provide upper and lower bounds on the proportion of community labels recovered on average. We provide a numerical example for which the bounds are near-matching for moderate values of $a - b$, and matching in the limit as $a - b$ grows large.

I. INTRODUCTION

The problem of identifying community structures in undirected graphs is a fundamental problem in network analysis, machine learning, and computer science [1], and is relevant to numerous practical applications such as social networks, recommendation systems, image processing, and biology.

The stochastic block model (SBM) is a widely-used statistical model for studying this problem. Despite its simplicity, this model has helped to provide significant insight into the problem, has led to the development of several powerful community detection algorithms, and still comes with a variety of interesting open problems.

One such open problem, and the focus of the present paper, is to characterize the necessary and sufficient conditions for partial recovery, in which one seeks to correctly recover a fixed proportion of the community assignments. This is arguably of more practical interest compared to exact recovery, which is usually too stringent to be expected in practice, and compared to correlated recovery, which only seeks to marginally beat a random guess.

A. The Symmetric Two-Community SBM

We focus on the simplest SBM, in which there are only two communities and the edge probabilities are symmetric. Specifically, the $n$ nodes, labeled $\{1, \dots, n\}$, are randomly assigned community labels $\sigma = \{\sigma_1, \dots, \sigma_n\}$, where each $\sigma_i$ equals 1 or 2 with probability $\frac{1}{2}$ each. Given the community labels, a set of $\binom{n}{2}$ unordered edges $E = \{E_{ij} : i \ne j\}$ is generated according to

$$\mathbb{P}[E_{ij} = 1 \mid \sigma] = \begin{cases} \frac{a}{n} & \sigma_i = \sigma_j \\ \frac{b}{n} & \sigma_i \ne \sigma_j, \end{cases} \tag{1}$$

for some constants $a, b > 0$, with independence between different $(i, j)$ pairs. We assume throughout the paper that $a$ and $b$ are fixed (i.e., not scaling with $n$), and hence the graph is sparse. We also assume that $a > b$ (i.e., on average there are more intra-community edges than inter-community edges).

Given the edge set $E$, a decoder forms an estimate $\hat{\sigma} := \{\hat{\sigma}_1, \dots, \hat{\sigma}_n\}$ of the communities. Note that in this paper, we assume that $a$ and $b$ are known; this assumption is common in the literature, though sometimes avoided [2], [3].

B. Previous Work and Contributions

Studies of the SBM can roughly be categorized according to the recovery criteria of correlated recovery, exact recovery, and partial recovery. A comprehensive review is not possible here, so we mention only some key relevant works.

The correlated recovery problem only seeks to determine whether any community structure is present or absent, thus insisting on classifying only a proportion $\frac{1}{2}(1 + \epsilon)$ correctly for some arbitrarily small $\epsilon > 0$. An exact phase transition between success and failure is known to occur according to whether $(a - b)^2 > 2(a + b)$ [4], [5], as was conjectured in an earlier work based on tools from statistical physics [6].
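As a concrete illustration of the model in (1) and of the correlated-recovery condition quoted above, the following Python sketch samples a graph from the symmetric two-community SBM and checks whether $(a - b)^2 > 2(a + b)$. The function names `sample_sbm` and `above_correlated_recovery_threshold`, the 0-based node indexing, and the requirement $n \ge a$ (so that $a/n$ is a valid probability) are assumptions of this sketch rather than part of the paper.

```python
import random


def sample_sbm(n, a, b, rng):
    """Sample labels and edges from the symmetric two-community SBM in (1).

    Nodes are indexed 0..n-1 here (the text uses 1..n); labels are 1 or 2.
    Assumes n >= a > b > 0 so that a/n and b/n are valid probabilities.
    """
    labels = [rng.choice((1, 2)) for _ in range(n)]
    edges = set()
    for i in range(n):
        for j in range(i + 1, n):
            # Intra-community pairs connect w.p. a/n, inter-community w.p. b/n.
            p = a / n if labels[i] == labels[j] else b / n
            if rng.random() < p:
                edges.add((i, j))
    return labels, edges


def above_correlated_recovery_threshold(a, b):
    """Check the condition (a - b)^2 > 2(a + b) quoted from [4], [5]."""
    return (a - b) ** 2 > 2 * (a + b)


if __name__ == "__main__":
    rng = random.Random(0)
    labels, edges = sample_sbm(n=500, a=10.0, b=2.0, rng=rng)
    print("edges:", len(edges))
    print("above threshold:", above_correlated_recovery_threshold(10.0, 2.0))
```

Since roughly half of the node pairs are intra-community, the expected number of edges is about $n(a + b)/4$, so the average degree stays bounded as $n$ grows; this is the sense in which the graph is sparse.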
In the exact recovery problem, one seeks to perfectly recover the two communities. This is impossible with the above-mentioned scaling laws; instead, the main scaling regime of interest is $a, b = \Theta(\log n)$, in which a phase transition occurs according to whether $\frac{1}{\log n}\left(\frac{a + b}{2} - \sqrt{ab}\right) > 1$ [7]. Furthermore, this is achievable via practical methods [7], [8], and extensions to the case of multiple communities and non-symmetric settings have been given [9].

Several works have provided partial recovery bounds for the case that $a$ and $b$ exhibit certain scaling laws, or are finite but sufficiently large. In [10], it is shown that a practical algorithm based on belief propagation achieves the optimal recovery proportion when $(a - b)^2 > C(a + b)$ for sufficiently large $C$. Bounds for several asymptotic scalings of $a$ and $b$ are given in [3], [11]–[13], with [3], [11] considering a regime where the recovery proportion tends to zero, and [12], [13] considering cases where the proportion tends to a constant. A non-asymptotic bound is given in [14], but the conditions on $a$ and $b$ are written in terms of a loose constant whose optimization is not attempted. We are not aware of any previous works seeking tight performance bounds at finite values of $a$ and $b$.

In this paper, our goal is to partially close this gap by providing partial recovery bounds specifically targeted at the case that $a$ and $b$ are fixed and not necessarily large. We consider the partial recovery criterion

$$r(\sigma, \hat{\sigma}) := \min_{\pi \in \Pi} \frac{1}{n} \sum_{i=1}^{n} \mathbb{1}\{\sigma_i \ne \pi(\hat{\sigma}_i)\}, \tag{2}$$

where $\Pi$ contains the two permutations of $\{1, 2\}$; this is included since one can only hope to recover the communities up to relabeling. Note that $r(\sigma, \hat{\sigma})$ is a random variable; we will primarily be interested in characterizing its expectation, but we will also present a high-probability bound.

C. Notation

All logarithms have base $e$, and we define the binary entropy function in nats as $H_2(\alpha) := -\alpha \log \alpha - (1 - \alpha) \log(1 - \alpha)$. The indicator function is denoted by $\mathbb{1}\{\cdot\}$, and we use the standard asymptotic notations $O(\cdot)$, $o(\cdot)$, and $\Theta(\cdot)$.

II. MAIN RESULTS

Here we present our main results, namely, information-theoretic bounds characterizing how the proportion of errors $r(\sigma, \hat{\sigma})$ can behave. The proofs are given in Section III.

A. Necessary Condition

We begin with a necessary condition that must hold for any decoding procedure.

Theorem 1. (Necessary Condition) Under the symmetric SBM with fixed parameters $a > b > 0$, any decoder must yield

$$\liminf_{n \to \infty} \mathbb{E}[r(\sigma, \hat{\sigma})] \ge \mathbb{P}[Z_1 < Z_2] + \frac{1}{2} \mathbb{P}[Z_1 = Z_2], \tag{3}$$

where $Z_1 \sim \mathrm{Poisson}\left(\frac{a}{2}\right)$ and $Z_2 \sim \mathrm{Poisson}\left(\frac{b}{2}\right)$ are independent.

The proof is based on a global to local relation from [11], roughly stating that the best average error rate is equal to the best average error rate in estimating a single assignment (node 1, say). Assuming the best case scenario that all other nodes are estimated correctly, the estimation of the remaining node roughly amounts to performing a Poisson hypothesis test [9], thus yielding the expression in (3) in terms of Poisson random variables.

B. Sufficient Conditions

Next, we provide our sufficient conditions. Note that these are purely information-theoretic, as the decoders used in the proofs are not computationally feasible.

We first provide a high probability bound based on a minimum-bisection decoder, which has also been considered in previous works such as [7]. We will see that this bound is reasonable but sometimes loose; nevertheless, it will provide the starting point for an improved bound given in Theorem 3 below.

Theorem 2. (High-Probability Sufficient Condition) Under the symmetric SBM with fixed parameters $a > b > 0$, there exists a decoder such that, for any $\epsilon > 0$, there exists $\psi > 0$ such that

$$\mathbb{P}[r(\sigma, \hat{\sigma}) > \alpha + \epsilon] \le e^{-\psi n} + \frac{1}{n^2}, \tag{4}$$

for sufficiently large $n$, where $\alpha \in \left(0, \frac{1}{2}\right)$ is defined to be the solution to

$$\frac{a + b}{2} - \sqrt{ab} = \frac{H_2(\alpha)}{\alpha(1 - \alpha)} \tag{5}$$

if such a solution exists, and $\alpha = 0.5$ otherwise.

Our main sufficient condition is given as follows.

Theorem 3. (Refined Sufficient Condition) Under the symmetric SBM with fixed parameters $a > b > 0$, suppose that there exists a value $\alpha \in \left(0, \frac{1}{4}\right)$ satisfying (5). Then there exists a decoding procedure such that

$$\limsup_{n \to \infty} \mathbb{E}[r(\sigma, \hat{\sigma})] \le \mathbb{P}[Z_{1,\alpha} < Z_{2,\alpha}] + \frac{1}{2} \mathbb{P}[Z_{1,\alpha} = Z_{2,\alpha}], \tag{6}$$

where $Z_{1,\alpha} \sim \mathrm{Poisson}\left(\frac{a}{2}(1 - \alpha) + \frac{b}{2}\alpha\right)$ and $Z_{2,\alpha} \sim \mathrm{Poisson}\left(\frac{b}{2}(1 - \alpha) + \frac{a}{2}\alpha\right)$ are independent.

The proof uses a two-step decoding procedure inspired by [3], in which the first step uses the decoder from Theorem 2, and the second step performs local refinements. We again liken this to a Poisson-based testing procedure to obtain (6). Note that this condition takes a similar form to that in (3); we will see numerically in Section II-D that the gap between the two is often small, particularly when $a - b$ is large.

C. Discussion and a Conjectured Sufficient Condition

The proof of our main achievability bound, Theorem 3, is based on using a high probability bound in the first step, and then obtaining an improved bound in the second step using local refinements. If we could show that the average-distortion bound in Theorem 3 also holds with high probability (e.g., $1 - o\left(\frac{1}{n}\right)$), then we could use this overall procedure in the first step of a new two-step procedure, and then obtain a further improved bound of the form (6), with our current achievability bound (6) playing the role of $\alpha$. One could then imagine repeating this argument several times, further improving the bound on each iteration.
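The bounds in Theorems 1 to 3, and the iterative argument sketched in this subsection, can be evaluated numerically. The following Python sketch is one possible way to do so under stated assumptions: the Poisson sums are truncated at a heuristic cutoff, and the solution of (5) is found by bisection, relying on the monotonicity of $H_2(\alpha)/(\alpha(1-\alpha))$ on $(0, \frac{1}{2})$ that is stated in the proof of Theorem 2. All function names are illustrative. The two "conjectured" values simply feed the output of (6) back in as $\alpha$, which is exactly the unproven step discussed above.

```python
import math


def poisson_pmf(k, lam):
    """P[Z = k] for Z ~ Poisson(lam) with lam > 0, computed in log-space."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def tie_split_error(lam1, lam2):
    """P[Z1 < Z2] + 0.5 * P[Z1 = Z2] for independent Poisson(lam1), Poisson(lam2)."""
    top = max(lam1, lam2)
    kmax = int(top + 12.0 * math.sqrt(top) + 30.0)  # heuristic truncation point
    err, below = 0.0, 0.0  # below tracks P[Z1 < k]
    for k in range(kmax + 1):
        p1 = poisson_pmf(k, lam1)
        err += poisson_pmf(k, lam2) * (below + 0.5 * p1)
        below += p1
    return err


def entropy_ratio(alpha):
    """Right-hand side of (5): H_2(alpha) / (alpha * (1 - alpha)), in nats."""
    h2 = -alpha * math.log(alpha) - (1.0 - alpha) * math.log(1.0 - alpha)
    return h2 / (alpha * (1.0 - alpha))


def solve_alpha(a, b):
    """Solve (5) for alpha by bisection; return 0.5 if no solution exists."""
    c = 0.5 * (a + b) - math.sqrt(a * b)
    if c <= entropy_ratio(0.5):
        return 0.5
    lo, hi = 1e-300, 0.5  # entropy_ratio is decreasing on this interval
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if entropy_ratio(mid) > c:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def converse_bound(a, b):
    """Right-hand side of (3)."""
    return tie_split_error(0.5 * a, 0.5 * b)


def refined_bound(a, b, alpha):
    """Right-hand side of (6) for a given alpha."""
    lam1 = 0.5 * a * (1.0 - alpha) + 0.5 * b * alpha
    lam2 = 0.5 * b * (1.0 - alpha) + 0.5 * a * alpha
    return tie_split_error(lam1, lam2)


if __name__ == "__main__":
    a, b = 100.0, 50.0
    alpha = solve_alpha(a, b)
    print("Thm. 1 converse:      ", converse_bound(a, b))
    print("Thm. 2 alpha from (5):", alpha)
    if alpha < 0.25:  # Theorem 3 requires alpha < 1/4
        thm3 = refined_bound(a, b, alpha)
        conj1 = refined_bound(a, b, thm3)   # conjectured, first iteration
        conj2 = refined_bound(a, b, conj1)  # conjectured, second iteration
        print("Thm. 3 achievability: ", thm3)
        print("Conjectured 1 and 2:  ", conj1, conj2)
```

With $a = 2b$, the quantity $\frac{a+b}{2} - \sqrt{ab}$ equals $(\frac{3}{2} - \sqrt{2})\,b$, so `solve_alpha` returns 0.5 until $b$ is large enough for this to exceed $4 \log 2$.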
See Section II-D for a numerical example.

Even if this argument can be formalized, there is still a major hurdle in handling small values of $a - b$: We require an initial high probability bound with a fraction of errors strictly smaller than $\frac{1}{4}$. Theorem 2 does not suffice for this purpose in general, and refined methods for obtaining such bounds would be of significant interest. Alternatively, one could seek to adjust the two-step procedure so that one may start with a high probability bound considering any fraction of errors in $\left(0, \frac{1}{2}\right)$, rather than just $\left(0, \frac{1}{4}\right)$.

D. Numerical Example

In Figure 1, we plot our asymptotic bounds for various values of $(a, b)$ such that $a = 2b$. Thus, higher values of $a$ (or equivalently, $b$) correspond to a larger gap between $a$ and $b$, making the community detection problem easier. Along with the main achievability and converse bounds, we plot the high probability achievability bound (i.e., the solution to (5)). Moreover, we plot the bounds that would arise from the first two iterations of the iterative procedure corresponding to the conjectured sufficient condition described in Section II-C.

Figure 1: Asymptotic partial recovery bounds with $a = 2b$. The vertical axis gives the limit of $\mathbb{E}[r(\sigma, \hat{\sigma})]$ as $n \to \infty$. (Horizontal axis: value of $a$, from 0 to 200. Vertical axis: error rate, log scale from $10^{-5}$ to $10^{0}$. Curves: Thm. 2 Achievability, Thm. 3 Achievability, Conjectured Achievability 1, Conjectured Achievability 2, Thm. 1 Converse.)

While the high probability bound provides a similar rate of decay to the converse bound as $a$ increases, the gap between the two at finite values of $a$ remains significant. In contrast, our main achievability bound from the two-step procedure approaches the converse bound as $a \to \infty$, which is to be expected since this procedure bears similarity to the asymptotically optimal two-step procedure proposed in [3].

On the other hand, our bounds have more room for improvement at low values of $a$.
In particular, results from the correlated recovery problem [4], [5] reveal that one can achieve an error rate better than $\frac{1}{2}$ if and only if $(a - b)^2 > 2(a + b)$, or equivalently $a > 12$ (since we are considering the case $a = 2b$). Our converse bound is below $\frac{1}{2}$ for all $a > 0$, our high-probability achievability bound is still equal to $\frac{1}{2}$ for $a = 60$, and our refined achievability bound is only valid for $a \gtrsim 70$, since it relies on the high-probability bound being below $\frac{1}{4}$.

Closing these gaps for small values of $a$ and $b$ is a challenging but interesting direction for future work. While our conjectured sufficient condition appears likely to help significantly at moderate values of $a$ and $b$, it still has the same limitations when these values are small. The techniques of [12] may also be useful, since the genie argument used in the converse part is more general than the one we use, and the belief propagation decoder used in the achievability part is potentially more powerful at small values of $a$ and $b$.

III. PROOFS

Here we provide the proofs of Theorems 1–3. Due to space constraints, we omit some details that are in common with previous works such as [7] and [11].

A. Proof of Necessary Condition (Theorem 1)

The proof is based on a global to local lemma given in [11]. Recall that $\Pi$ is the set of permutations of $\{1, 2\}$ corresponding to reassignments (of which there are only two, since we consider the two-community case), and define

$$S(\sigma, \hat{\sigma}) = \left\{ \sigma' : \sigma' = \pi(\hat{\sigma}),\ r(\sigma, \hat{\sigma}) = \frac{1}{n} \sum_{i=1}^{n} \mathbb{1}\{\sigma_i \ne \pi(\hat{\sigma}_i)\},\ \pi \in \Pi \right\},$$

containing the reassignments of $\hat{\sigma}$ corresponding to the set of permutations achieving the minimum in (2) (typically a singleton).

Lemma 1. (Global to local [11]) The minimum value of $\mathbb{E}[r(\sigma, \hat{\sigma})]$ over all decoders is equal to the minimum value of $\mathbb{E}\left[\frac{1}{|S(\sigma, \hat{\sigma})|} \sum_{\sigma' \in S(\sigma, \hat{\sigma})} \mathbb{1}\{\sigma_1 \ne \sigma'_1\}\right]$ over all decoders.
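To make the quantities in Lemma 1 concrete, the following Python sketch computes the error fraction in (2), the set $S(\sigma, \hat{\sigma})$ of minimizing relabelings, and the single-node quantity averaged over $S(\sigma, \hat{\sigma})$. It assumes that the indicator in (2) compares each true label $\sigma_i$ with the relabeled estimate $\pi(\hat{\sigma}_i)$, in line with the definition $\sigma' = \pi(\hat{\sigma})$ above. The function names and the list-based label representation are illustrative choices.

```python
# The two permutations of {1, 2}: identity and swap.
PERMUTATIONS = ({1: 1, 2: 2}, {1: 2, 2: 1})


def mismatches(sigma, sigma_hat, perm):
    """Number of indices i with sigma_i != perm(sigma_hat_i)."""
    return sum(1 for s, t in zip(sigma, sigma_hat) if s != perm[t])


def error_fraction(sigma, sigma_hat):
    """r(sigma, sigma_hat) from (2): minimum mismatch fraction over relabelings."""
    return min(mismatches(sigma, sigma_hat, p) for p in PERMUTATIONS) / len(sigma)


def minimizing_relabelings(sigma, sigma_hat):
    """S(sigma, sigma_hat): relabelings of sigma_hat achieving the minimum in (2)."""
    counts = [mismatches(sigma, sigma_hat, p) for p in PERMUTATIONS]
    best = min(counts)
    return [[p[t] for t in sigma_hat]
            for p, c in zip(PERMUTATIONS, counts) if c == best]


def local_error(sigma, sigma_hat):
    """Average over S of the indicator that the first node is mislabeled."""
    s_set = minimizing_relabelings(sigma, sigma_hat)
    return sum(1 for sp in s_set if sp[0] != sigma[0]) / len(s_set)


if __name__ == "__main__":
    sigma = [1, 1, 2, 2]
    print(error_fraction(sigma, [2, 2, 1, 1]))          # labels swapped globally
    print(minimizing_relabelings(sigma, [1, 2, 1, 2]))  # a tie: both relabelings
    print(local_error(sigma, [1, 2, 1, 2]))
```

The tie case shows why $S(\sigma, \hat{\sigma})$ is described as only typically a singleton: when both relabelings give the same number of mismatches, the local quantity averages over both.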
This result essentially allows us to obtain a lower bound on the error rate $\mathbb{E}[r(\sigma, \hat{\sigma})]$ via a lower bound on the error rate corresponding to the first node. For the latter, we consider a genie-aided setting in which the true assignments of nodes $2, \dots, n$ are revealed to the decoder, which is left to estimate node 1. We can then assume without loss of optimality that $\hat{\sigma}_i = \sigma_i$ for $i = 2, \dots, n$, and in this case we have $S(\sigma, \hat{\sigma}) = \{\hat{\sigma}\}$. Thus, we are left to bound

$$\mathbb{E}\left[\frac{1}{|S(\sigma, \hat{\sigma})|} \sum_{\sigma' \in S(\sigma, \hat{\sigma})} \mathbb{1}\{\sigma_1 \ne \sigma'_1\}\right] = \mathbb{P}[\sigma_1 \ne \hat{\sigma}_1].$$

Note that the information from the genie only makes the recovery of $\sigma_1$ easier, and hence any converse bound for this setting is also valid for the original setting.

Suppose that, among the revealed nodes $2, \dots, n$, there are $n_1 := \frac{n - 1}{2}(1 + \delta)$ nodes in community 1, and $n_2 := \frac{n - 1}{2}(1 - \delta)$ in community 2, for some $\delta \in [-1, 1]$. Since the community assignments are independent and equiprobable, Hoeffding's inequality [15, Ch. 2] gives the following with probability at least $1 - \frac{1}{n^2}$:

$$|\delta| \le 2\sqrt{\frac{\log n}{n - 1}}. \tag{7}$$

For fixed $\delta$, the study of the error event $\{\sigma_1 \ne \sigma'_1\}$ in the genie-aided setting comes down to a binary hypothesis testing problem, where hypothesis $H_\nu$ ($\nu = 1, 2$) is that $\sigma_1 = \nu$. Letting $\ell_\nu$ denote the number of edges from node 1 to nodes from $2, \dots, n$ that are in the $\nu$-th community, we have

$$H_1 : \ell_1 \sim \mathrm{Binomial}\left(\frac{n - 1}{2}(1 + \delta), \frac{a}{n}\right), \quad \ell_2 \sim \mathrm{Binomial}\left(\frac{n - 1}{2}(1 - \delta), \frac{b}{n}\right) \tag{8}$$

$$H_2 : \ell_1 \sim \mathrm{Binomial}\left(\frac{n - 1}{2}(1 + \delta), \frac{b}{n}\right), \quad \ell_2 \sim \mathrm{Binomial}\left(\frac{n - 1}{2}(1 - \delta), \frac{a}{n}\right). \tag{9}$$

We now observe, as in [9], that this problem can be approximated by a Poisson hypothesis testing problem of the form

$$H'_1 : \ell_1 \sim \mathrm{Poisson}\left(\frac{a}{2}(1 + \delta)\right), \quad \ell_2 \sim \mathrm{Poisson}\left(\frac{b}{2}(1 - \delta)\right) \tag{10}$$

$$H'_2 : \ell_1 \sim \mathrm{Poisson}\left(\frac{b}{2}(1 + \delta)\right), \quad \ell_2 \sim \mathrm{Poisson}\left(\frac{a}{2}(1 - \delta)\right). \tag{11}$$

Specifically, we have from Le Cam's inequality [9, Eq. (32)] that each Binomial distribution above differs from the corresponding Poisson distribution by $O\left(\frac{1}{n}\right)$ in the total-variation norm, and hence the difference in the error rates resulting from the two hypothesis testing problems is also $O\left(\frac{1}{n}\right)$.

Figure 2: Sizes of true communities and their estimates in the case that $\delta > 0$ (i.e., $n_1 > n_2$).

Recalling that our hypotheses are equiprobable, a substitution of the Poisson probability mass function (PMF) $p_k = \frac{\lambda^k}{k!} e^{-\lambda}$ into (10)–(11) reveals that the decision rule minimizing the error rate is to choose $H'_1$ if and only if

$$\ell_1 \ge \ell_2 + \frac{\delta(b - a)}{\log \frac{a}{b}}. \tag{12}$$

Using (7) and the fact that $a$ and $b$ do not scale with $n$, we find that $\left|\frac{\delta(b - a)}{\log \frac{a}{b}}\right| < 1$ for sufficiently large $n$, and hence the decision simply amounts to testing which of $\ell_1$ and $\ell_2$ is larger, with ties broken according to whether $\delta$ is positive (choose $H'_1$), negative (choose $H'_2$), or zero (choose randomly). For example, under $H'_1$ with $\delta = 0$, we find that the probability of incorrectly choosing $H'_2$ is

$$\mathbb{P}[Z'_1 < Z'_2] + \frac{1}{2} \mathbb{P}[Z'_1 = Z'_2], \tag{13}$$

where $Z'_1 \sim \mathrm{Poisson}\left(\frac{a}{2}(1 + \delta)\right)$ and $Z'_2 \sim \mathrm{Poisson}\left(\frac{b}{2}(1 - \delta)\right)$. Since $\delta \to 0$ by (7), the error rate in (13) approaches that given in (3). By handling the other cases of $H$ and $\mathrm{sign}(\delta)$ similarly, we find that the overall error rate also approaches the right-hand side of (3), thus completing the proof.

B. Proof of High-Probability Sufficient Condition (Theorem 2)

The theorem is trivial for $\alpha = \frac{1}{2}$, since even a random guess recovers half of the communities correctly on average; we thus focus on the case that $\alpha \in \left(0, \frac{1}{2}\right)$. We also assume that $n$ is even; otherwise, the same result follows by simply ignoring an arbitrary node and assigning its community at random.
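The minimum-bisection decoder behind Theorem 2 selects the balanced split of the nodes with the fewest inter-community edges. The following Python sketch illustrates this rule by exhaustive search on a toy graph. It is exponential in $n$ and is meant only to make the decoding rule concrete, consistent with the earlier remark that the decoders used in the proofs are not computationally feasible. The function name `min_bisection`, the 0-based node indexing, and the tie-breaking rule (the first minimizer found is kept) are assumptions of this sketch.

```python
from itertools import combinations


def min_bisection(n, edges):
    """Brute-force minimum bisection of nodes 0..n-1 (n even, toy sizes only).

    Returns (labels, cut), where labels[v] is 1 or 2 and cut is the number of
    edges whose endpoints receive different labels.
    """
    if n % 2 != 0:
        raise ValueError("n must be even")
    best_labels, best_cut = None, None
    # Fix node 0 in community 1 so each split is enumerated once (up to relabeling).
    for rest in combinations(range(1, n), n // 2 - 1):
        side1 = {0, *rest}
        cut = sum(1 for i, j in edges if (i in side1) != (j in side1))
        if best_cut is None or cut < best_cut:
            best_cut = cut
            best_labels = [1 if v in side1 else 2 for v in range(n)]
    return best_labels, best_cut


if __name__ == "__main__":
    # Two triangles joined by a single edge.
    edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
    print(min_bisection(6, edges))
```

Because the estimate is only meaningful up to relabeling, its quality should be judged through the criterion in (2) rather than by comparing labels directly.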
We consider a minimum-bisection decoder that splits the $n$ nodes into two communities of size $\frac{n}{2}$, such that the number of inter-community connections is minimized. This decoder was studied in several previous works such as [7], [11].

We begin by conditioning on the true community assignments having $n_1 = \frac{n}{2}(1 + \delta)$ nodes in community 1, and $n_2 = \frac{n}{2}(1 - \delta)$ nodes in community 2. As we showed in the converse proof, we have with probability at least $1 - \frac{1}{n^2}$ that $\delta$ satisfies (7); this is what leads to the second term in (4).

Consider a fixed estimate $\hat{\sigma}$ of the communities from the above procedure, and suppose that there are $k_\nu$ indices such that $\sigma_i = \nu$ but $\hat{\sigma}_i \ne \nu$ ($\nu = 1, 2$). See Figure 2 for an illustration. Since the decoder always declares exactly $\frac{n}{2}$ nodes to be in each of the two communities, we must have $\frac{n}{2}(1 + \delta) - k_1 + k_2 = \frac{n}{2}$ and $\frac{n}{2}(1 - \delta) - k_2 + k_1 = \frac{n}{2}$, and hence $k_1 - k_2 = \frac{n}{2}\delta$, or equivalently $k_1 + k_2 = 2k_2 + \frac{n}{2}\delta$. Since $k_1 + k_2$ corresponds to the total number of mislabeled nodes, and since $\delta$ satisfies (7), in order to have $r(\sigma, \hat{\sigma}) > \alpha(1 + \eta)$ for a fixed $\eta > 0$, it is necessary that $k_2 > \frac{n}{2}\alpha$ and $k_2 < \frac{n}{2}(1 - \alpha)$ for sufficiently large $n$ (recall from (2) that the recovery is only defined up to relabeling).

We now consider the probability that a fixed estimate yielding some $(k_1, k_2)$ pair is chosen by the minimum-bisection decoder. We focus on the case that $k_2 \in \left(\frac{n}{2}\alpha, \frac{n}{4}\right]$ and $k_1 \ge k_2$ (i.e., $\delta \ge 0$), since the cases with $k_2 \in \left(\frac{n}{4}, \frac{n}{2}(1 - \alpha)\right)$ or $k_2 > k_1$ are handled analogously. In order for an error to occur, the true assignment must yield a lower number of inter-community connections than the assignment obtained by swapping $k_1$ incorrect nodes from community 1 with $k_1$ incorrect nodes from community 2.
Such a swap causes $k_1(n_1 - k_1) + k_1(n_2 - k_2) = k_1(n - k_1 - k_2)$ inter-community edges to have probability $\frac{b}{n}$ instead of $\frac{a}{n}$, as well as $k_1(k_1 - k_2) = k_1 \frac{n}{2}\delta$ inter-community edges to have probability $\frac{a}{n}$ instead of $\frac{b}{n}$. Thus, in order for an error to occur, a random variable of the following form (corresponding to the inter-community edges differing in the two assignments) must be non-negative:

$$\Psi_{k_1, k_2} := W_{1,b} - W_{1,a} + W_{2,a} - W_{2,b}, \tag{14}$$

where $W_{1,a} \sim \mathrm{Binomial}\left(k_1(n - k_1 - k_2), \frac{a}{n}\right)$ and $W_{2,a} \sim \mathrm{Binomial}\left(k_1 \frac{n}{2}\delta, \frac{a}{n}\right)$, and analogously for $W_{1,b}$ and $W_{2,b}$ with $b$ in place of $a$. Applying the union bound and a simple counting argument, we obtain

$$\mathbb{P}[\mathrm{error} \mid \sigma] \le 2 \sum_{k_2 = \frac{n}{2}\alpha}^{\frac{n}{4}} \binom{n_1}{k_1} \binom{n_2}{k_2} \mathbb{P}[\Psi_{k_1, k_2} > 0], \tag{15}$$

where $k_1 = k_2 + \frac{n}{2}\delta$, and $\sigma$ is an arbitrary assignment with $n_\nu$ nodes in community $\nu$ ($\nu = 1, 2$). The factor of 2 here arises from a symmetry argument with respect to the estimates with $k_2 < \frac{n}{4}$ and $k_2 > \frac{n}{4}$.

Let $P_A$ and $P_B$ denote Bernoulli PMFs with parameters $\frac{a}{n}$ and $\frac{b}{n}$, respectively. An application of the Chernoff bound yields for any $\lambda > 0$ that

$$\mathbb{P}[\Psi_{k_1, k_2} > 0] \le \left( \sum_{z_a, z_b} P_A(z_a) P_B(z_b) e^{\lambda(z_b - z_a)} \right)^{m_1} \times \left( \sum_{z_a, z_b} P_A(z_a) P_B(z_b) e^{\lambda(z_a - z_b)} \right)^{m_2}, \tag{16}$$

where $m_1 := k_1(n - k_1 - k_2)$, $m_2 := k_1 \frac{n}{2}\delta$, and $z_a, z_b \in \{0, 1\}$. It is straightforward to show that the choice of $\lambda$ minimizing the first summation is $\lambda = \frac{1}{2} \log \frac{\frac{a}{n}\left(1 - \frac{b}{n}\right)}{\frac{b}{n}\left(1 - \frac{a}{n}\right)}$, and that the summation evaluates to $2\sqrt{\frac{a}{n}\left(1 - \frac{b}{n}\right)\frac{b}{n}\left(1 - \frac{a}{n}\right)} + \frac{a}{n}\frac{b}{n} + \left(1 - \frac{a}{n}\right)\left(1 - \frac{b}{n}\right)$. The second summation also behaves as $1 + \Theta\left(\frac{1}{n}\right)$, and since $m_1 = \Theta(n^2)$ but $m_2 = o(n^2)$, we obtain the following after applying some asymptotic expansions:

$$-\frac{1}{n} \log \mathbb{P}[\Psi_{k_1, k_2} > 0] \ge \frac{m_1}{n^2} \cdot 2\left(\frac{a + b}{2} - \sqrt{ab}\right) + o(1). \tag{17}$$

Supposing now that $k_2 = \frac{n}{2}\alpha'$ for some $\alpha' \in \left(\alpha, \frac{1}{2}\right]$ (see (15)), we readily obtain from (7) that $k_1 = \frac{n}{2}\alpha'(1 + o(1))$ and $m_1 = \frac{1}{2}n^2\alpha'(1 - \alpha')(1 + o(1))$, and we similarly have $n_1 = \frac{n}{2}(1 + o(1))$ and $n_2 = \frac{n}{2}(1 + o(1))$. Substituting these estimates and (17) into (15) and using the identity $\frac{1}{N} \log \binom{N}{\theta N} = H_2(\theta)(1 + o(1))$, we find that the right-hand side of (15) decays to zero exponentially fast provided that

$$H_2(\alpha') - \alpha'(1 - \alpha')\left(\frac{a + b}{2} - \sqrt{ab}\right) < 0 \tag{18}$$

for all $\alpha' \in \left(\alpha, \frac{1}{2}\right]$. Since $\frac{H_2(\alpha')}{\alpha'(1 - \alpha')}$ is monotonically decreasing in this range, this holds provided that $\alpha$ satisfies (5).

C. Proof of Refined Sufficient Condition (Theorem 3)

We again assume that $n$ is even, and the case that $n$ is odd follows similarly by ignoring one node and assigning its community randomly. Theorem 2 allows us to prove Theorem 3 via the following two-step procedure [3]:

1) For each $j = 1, \dots, n$, do the following:
   a) Apply the decoder from Theorem 2 to the set of nodes $\{1, \dots, n\} \setminus \{j\}$ to obtain the estimates $\{\tilde{\sigma}^{(j)}_i\}_{i \ne j}$. Choose the remaining estimate $\tilde{\sigma}^{(j)}_j$ in such a way that there are an equal number of nodes with $\tilde{\sigma}^{(j)}_i = 1$ and $\tilde{\sigma}^{(j)}_i = 2$.
   b) If there are more values of $i$ with $\tilde{\sigma}^{(j)}_i = \tilde{\sigma}^{(1)}_i$ than $\tilde{\sigma}^{(j)}_i \ne \tilde{\sigma}^{(1)}_i$, set each $\hat{\sigma}^{(j)}_i = \tilde{\sigma}^{(j)}_i$. Otherwise, set each $\hat{\sigma}^{(j)}_i$ to be the value differing from $\tilde{\sigma}^{(j)}_i$.
2) For each $j = 1, \dots, n$, set the final estimate $\hat{\sigma}_j = 1$ if there are more edges from node $j$ to nodes with $\hat{\sigma}^{(j)}_i = 1$ than to nodes with $\hat{\sigma}^{(j)}_i = 2$, and set $\hat{\sigma}_j = 2$ otherwise.

We again write $n_1 = \frac{n}{2}(1 + \delta)$ and $n_2 = \frac{n}{2}(1 - \delta)$, and note that $\delta$ satisfies (7) with probability at least $1 - \frac{1}{n^2}$. Let $\alpha'$ be an arbitrary value in the range $\left(\alpha, \frac{1}{4}\right)$. For each $j = 1, \dots, n$, let $\tilde{k}^{(j)}_\nu$ ($\nu = 1, 2$) be the number of nodes from the $\nu$-th community such that the $j$-th decoder in Step 1 outputs $\tilde{\sigma}^{(j)}_i \ne \nu$, and let $k^{(j)}_\nu$ be defined similarly with $\hat{\sigma}^{(j)}_i$ in place of $\tilde{\sigma}^{(j)}_i$. By Theorem 2 and the union bound, with probability $1 - O\left(\frac{1}{n}\right)$, we have for all $j$ that either $\tilde{k}^{(j)}_1 + \tilde{k}^{(j)}_2 \le n\alpha'$ or $\tilde{k}^{(j)}_1 + \tilde{k}^{(j)}_2 \ge n(1 - \alpha')$. We consider the case that $\tilde{k}^{(1)}_1 + \tilde{k}^{(1)}_2 \le n\alpha'$; the other case $\tilde{k}^{(1)}_1 + \tilde{k}^{(1)}_2 \ge n(1 - \alpha')$ is handled analogously.

From the above definitions and Step 1b above, we trivially have $k^{(1)}_\nu = \tilde{k}^{(1)}_\nu$, and hence $k^{(1)}_1 + k^{(1)}_2 \le n\alpha'$. We claim that it is also the case that $k^{(j)}_1 + k^{(j)}_2 \le n\alpha'$ for $j = 2, \dots, n$. Indeed, since $\alpha' < \frac{1}{4}$, the contrary would imply that less than a quarter of the $\hat{\sigma}^{(1)}_i$ differ from the true assignments and more than three quarters of the $\hat{\sigma}^{(j)}_i$ differ from the true assignments, in turn implying that more than half of the $\hat{\sigma}^{(1)}_i$ differ from the $\hat{\sigma}^{(j)}_i$, in contradiction with Step 1b above.

By definition, among the $\hat{\sigma}^{(j)}_i$, there are $\frac{n}{2}(1 + \delta) - k^{(j)}_1 + k^{(j)}_2$ nodes estimated to be in community 1, and $\frac{n}{2}(1 - \delta) - k^{(j)}_2 + k^{(j)}_1$ to be in community 2. Since the decoder from Step 1 outputs an estimate with an equal number $\frac{n}{2}$ of nodes in each community, this implies that $k^{(j)}_1 - k^{(j)}_2 = \frac{n}{2}\delta$. Summing this with $k^{(j)}_1 + k^{(j)}_2 \le n\alpha'$, we obtain $k^{(j)}_1 \le \frac{n}{2}\left(\alpha' + \frac{\delta}{2}\right)$, and subtracting the two equations similarly gives $k^{(j)}_2 \le \frac{n}{2}\left(\alpha' - \frac{\delta}{2}\right)$.

Finally, we consider the testing procedure given in Step 2 above. We have the following when $\sigma_j = 1$: (i) To nodes with $\hat{\sigma}^{(j)}_i = 1$ there are $n_1 - k^{(j)}_1$ potential edges having probability $\frac{a}{n}$ and $k^{(j)}_2$ having probability $\frac{b}{n}$; (ii) To nodes with $\hat{\sigma}^{(j)}_i = 2$ there are $n_2 - k^{(j)}_2$ potential edges having probability $\frac{b}{n}$ and $k^{(j)}_1$ having probability $\frac{a}{n}$.
When $\sigma_j = 2$, the same is true with the roles of $a$ and $b$ reversed.

The proof is now completed in the same way as Section III-A by approximating each of these numbers of edges by a Poisson distribution. The above estimates, along with (7), reveal that $n_1$ and $n_2$ behave as $\frac{n}{2} + o(n)$, and each $k^{(j)}_\nu$ is upper bounded by $\frac{n}{2}\alpha' + o(n)$. In the worst case scenario that these upper bounds are met with equality, the parameters of the resulting Poisson distributions converge to $\frac{a}{2}(1 - \alpha') + \frac{b}{2}\alpha'$ and $\frac{b}{2}(1 - \alpha') + \frac{a}{2}\alpha'$. Since $\alpha'$ can be chosen to be arbitrarily close to $\alpha$, this leads to the final bound given in (6).

REFERENCES

[1] S. Fortunato, "Community detection in graphs," Physics Reports, vol. 486, no. 3, pp. 75–174, 2010.
[2] E. Abbe and C. Sandon, "Recovering communities in the general stochastic block model without knowing the parameters," 2015, http://arxiv.org/abs/1506.03729.
[3] C. Gao, Z. Ma, A. Y. Zhang, and H. H. Zhou, "Achieving optimal misclassification proportion in stochastic block model," 2015, http://arxiv.org/abs/1505.03772.
[4] L. Massoulié, "Community detection thresholds and the weak Ramanujan property," in Proc. ACM-SIAM Symp. Disc. Alg. (SODA), 2014, pp. 694–703.
[5] E. Mossel, J. Neeman, and A. Sly, "Stochastic block models and reconstruction," 2012, http://arxiv.org/abs/1202.1499.
[6] A. Decelle, F. Krzakala, C. Moore, and L. Zdeborová, "Asymptotic analysis of the stochastic block model for modular networks and its algorithmic applications," Physical Review E, vol. 84, no. 6, 2011.
[7] E. Abbe, A. Bandeira, and G. Hall, "Exact recovery in the stochastic block model," IEEE Trans. Inf. Theory, vol. 62, no. 1, pp. 471–487, Jan. 2016.
[8] B. Hajek, Y. Wu, and J. Xu, "Achieving exact cluster recovery threshold via semidefinite programming," 2014, http://arxiv.org/abs/1412.6156.
[9] E. Abbe and C. Sandon, "Community detection in general stochastic block models: Fundamental limits and efficient recovery algorithms," 2015, http://arxiv.org/abs/1503.00609.
[10] E. Mossel, J. Neeman, and A. Sly, "Belief propagation, robust reconstruction, and optimal recovery of block models," 2013, http://arxiv.org/abs/1309.1380.
[11] A. Y. Zhang and H. H. Zhou, "Minimax rates of community detection in stochastic block models," 2015, http://arxiv.org/abs/1507.05313.
[12] E. Mossel and J. Xu, "Density evolution in the degree-correlated stochastic block model," 2015, http://arxiv.org/pdf/1509.03281v1.pdf.
[13] Y. Deshpande, E. Abbe, and A. Montanari, "Asymptotic mutual information for the two-groups stochastic block model," 2015, http://arxiv.org/abs/1507.08685.
[14] O. Guédon and R. Vershynin, "Community detection in sparse networks via Grothendieck's inequality," 2014.
[15] S. Boucheron, G. Lugosi, and P. Massart, Concentration Inequalities: A Nonasymptotic Theory of Independence. OUP Oxford, 2013.
