Mean-Field Inference in Gaussian Restricted Boltzmann Machine

A Gaussian restricted Boltzmann machine (GRBM) is a Boltzmann machine defined on a bipartite graph and is an extension of usual restricted Boltzmann machines. A GRBM consists of two different layers: a visible layer composed of continuous visible var…

Authors: Chako Takahashi, Muneki Yasuda

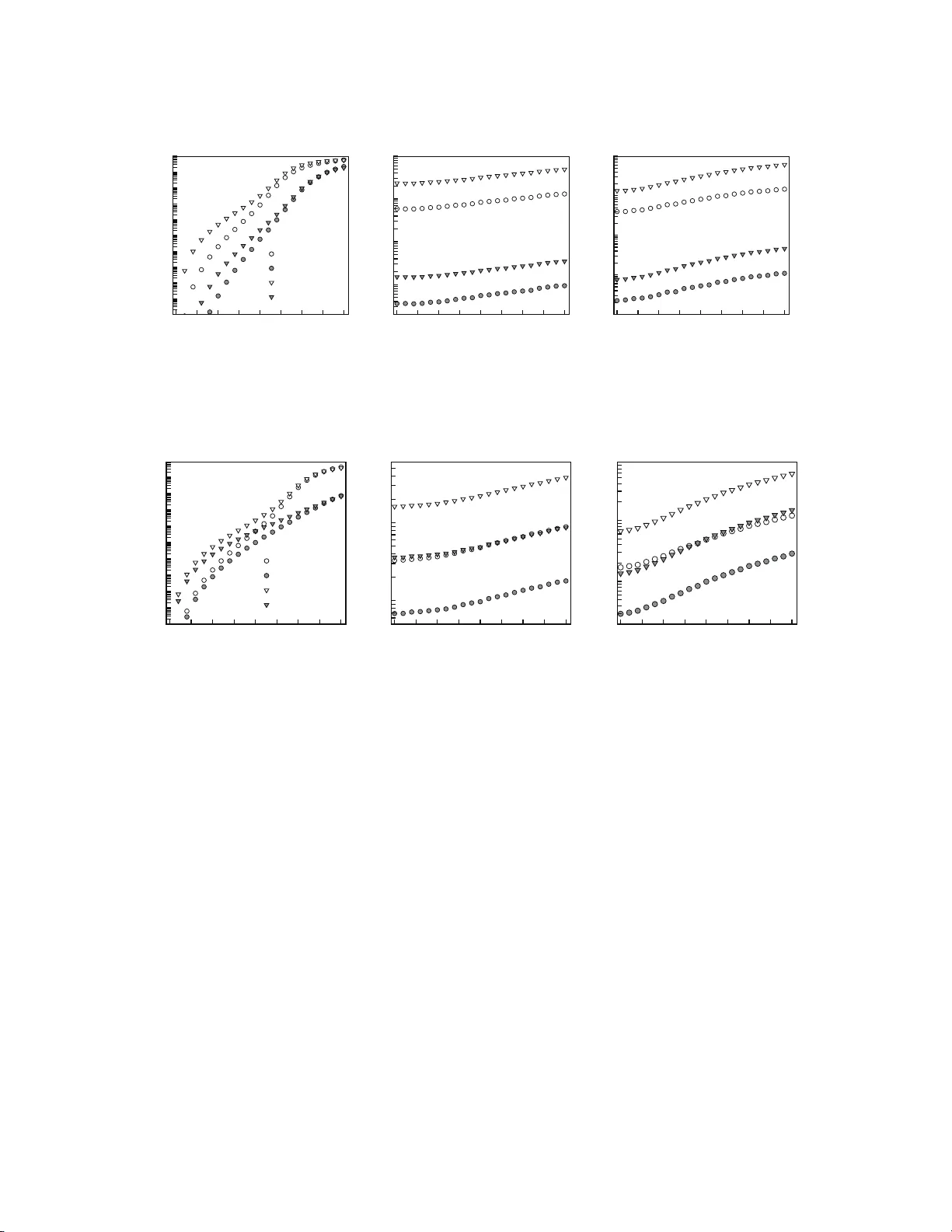

Chako Takahashi and Muneki Yasuda¹
Graduate School of Science and Engineering, Yamagata University, Japan

Abstract: A Gaussian restricted Boltzmann machine (GRBM) is a Boltzmann machine defined on a bipartite graph and is an extension of usual restricted Boltzmann machines. A GRBM consists of two different layers: a visible layer composed of continuous visible variables and a hidden layer composed of discrete hidden variables. In this paper, we derive two different inference algorithms for GRBMs based on the naive mean-field approximation (NMFA). One is an inference algorithm for whole variables in a GRBM, and the other is an inference algorithm for partial variables in a GRBM. We compare the two methods analytically and numerically and show that the latter method is better.

1 Introduction

A restricted Boltzmann machine (RBM) is a statistical machine learning model that is defined on a bipartite graph [1, 2] and forms a fundamental component of deep learning [3, 4]. The increasing use of deep learning techniques in various fields is leading to a growing demand for the analysis of computational algorithms for RBMs. The computational procedure for RBMs is divided into two main stages: the learning stage and the inference stage. We train an RBM using an observed data set in the learning stage, and we compute some statistical quantities, e.g., expectations of variables, for the trained RBM in the inference stage. For the learning stage, many efficient algorithms, e.g., contrastive divergence [2], have been developed. On the other hand, algorithms for the inference stage have not witnessed much sophistication. Methods based on Gibbs sampling and the naïve mean-field approximation (NMFA) are mainly used for the inference stage, for example in references [5, 6].
However, some new algorithms [7, 8, 9] based on advanced mean-field methods [10, 11] have emerged in recent years.

In this paper, we focus on a model referred to as the Gaussian restricted Boltzmann machine (GRBM), which is a slightly extended version of a Gaussian-Bernoulli restricted Boltzmann machine (GBRBM) [4, 12, 13]. A GBRBM enables us to treat continuous data and is a fundamental component of a Gaussian-Bernoulli deep Boltzmann machine [14]. In GBRBMs, hidden variables are binary, whereas in GRBMs, hidden variables can take arbitrary discrete values. A statistical mechanical analysis for GBRBMs was presented in reference [15]. For GRBMs, we study inference algorithms based on the NMFA. Since the NMFA is one of the most important foundations of advanced mean-field methods, gaining a deeper understanding of the NMFA for RBMs will provide us with some important insights into subsequent inference algorithms based on the advanced mean-field methods. For GRBMs, it is possible to obtain two different types of NMFAs: the NMFA for the whole system and the NMFA for a marginalized system. First, we derive the two approximations and then compare them analytically and numerically. Finally, we show that the latter approximation is better.

The remainder of this paper is organized as follows. The definition of GRBMs is presented in Section 2. The two different types of NMFAs are formulated in Sections 3.1 and 3.2. Then, we compare the two methods analytically in Section 4.1 and numerically in Section 4.2, and we show that the NMFA for a marginalized system is better. Finally, Section 5 concludes the paper.

2 Gaussian Restricted Boltzmann Machine

Let us consider a bipartite graph consisting of two different layers: a visible layer and a hidden layer.
The continuous visible variables, $\mathbf{v} = \{ v_i \in (-\infty, \infty) \mid i \in V \}$, are assigned to the vertices in the visible layer and the discrete hidden variables with a sample space $\mathcal{X}$, $\mathbf{h} = \{ h_j \in \mathcal{X} \mid j \in H \}$, are assigned to the vertices in the hidden layer, where $V$ and $H$ are the sets of vertices in the visible and the hidden layers, respectively. Figure 1 shows the bipartite graph.

¹ Corresponding author: muneki@yz.yamagata-u.ac.jp

Figure 1: Bipartite graph consisting of two layers: the visible layer $V$ and the hidden layer $H$.

On the graph, we define the energy function as

$$E(\mathbf{v}, \mathbf{h}; \theta) := \frac{1}{2} \sum_{i \in V} \frac{(v_i - b_i)^2}{\sigma_i^2} - \sum_{i \in V} \sum_{j \in H} \frac{w_{ij}}{\sigma_i^2} v_i h_j - \sum_{j \in H} c_j h_j, \tag{1}$$

where $b_i$, $\sigma_i^2$, $c_j$, and $w_{ij}$ are the parameters of the energy function and they are collectively denoted by $\theta$. Specifically, $b_i$ and $c_j$ are the biases for the visible and the hidden variables, respectively, $w_{ij}$ are the couplings between the visible and the hidden variables, and $\sigma_i^2$ are the parameters related to the variances of the visible variables. The GRBM is defined by

$$P(\mathbf{v}, \mathbf{h} \mid \theta) := \frac{1}{Z(\theta)} \exp\bigl( -E(\mathbf{v}, \mathbf{h}; \theta) \bigr) \tag{2}$$

in terms of the energy function in Equation (1). Here, $Z(\theta)$ is the partition function defined by

$$Z(\theta) := \int \sum_{\mathbf{h}} \exp\bigl( -E(\mathbf{v}, \mathbf{h}; \theta) \bigr) \, d\mathbf{v},$$

where $\int (\cdots) \, d\mathbf{v} = \int_{-\infty}^{\infty} \int_{-\infty}^{\infty} \cdots \int_{-\infty}^{\infty} (\cdots) \, dv_1 \, dv_2 \cdots dv_{|V|}$ is the multiple integration over all the possible realizations of the visible variables and $\sum_{\mathbf{h}} = \sum_{h_1 \in \mathcal{X}} \sum_{h_2 \in \mathcal{X}} \cdots \sum_{h_{|H|} \in \mathcal{X}}$ is the multiple summation over those of the hidden variables. When $\mathcal{X} = \{+1, -1\}$, the GRBM corresponds to a GBRBM [13].

The distribution of the visible variables conditioned with the hidden variables is

$$P(\mathbf{v} \mid \mathbf{h}, \theta) = \prod_{i \in V} \mathcal{N}\bigl( v_i \mid \mu_i(\mathbf{h}), \sigma_i^2 \bigr), \tag{3}$$

where $\mathcal{N}(x \mid \mu, \sigma^2)$ is the Gaussian over $x \in (-\infty, \infty)$ with mean $\mu$ and variance $\sigma^2$ and

$$\mu_i(\mathbf{h}) := b_i + \sum_{j \in H} w_{ij} h_j. \tag{4}$$

On the other hand, the distribution of the hidden variables conditioned with the visible variables is

$$P(\mathbf{h} \mid \mathbf{v}, \theta) = \prod_{j \in H} \frac{\exp\bigl( \lambda_j(\mathbf{v}) h_j \bigr)}{\sum_{h \in \mathcal{X}} \exp\bigl( \lambda_j(\mathbf{v}) h \bigr)}, \tag{5}$$

where

$$\lambda_j(\mathbf{v}) := c_j + \sum_{i \in V} \frac{w_{ij}}{\sigma_i^2} v_i. \tag{6}$$

From Equations (3) and (5), it is ensured that if one layer is conditioned, the variables in the other are statistically independent of each other. This property is referred to as conditional independence.

The marginal distribution of the hidden variables is

$$P(\mathbf{h} \mid \theta) = \int P(\mathbf{v}, \mathbf{h} \mid \theta) \, d\mathbf{v} = \frac{z_H(\theta)}{Z(\theta)} \exp\Bigl( \sum_{j \in H} B_j h_j + \sum_{j \in H} D_j h_j^2 + \sum_{j} \cdots$$
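
As an illustration of Equations (1) and (3) to (6), the following NumPy sketch evaluates the energy function and the two conditional distributions, and uses them for one block Gibbs sweep, one of the inference methods mentioned in Section 1. It is a minimal sketch under stated assumptions rather than the authors' implementation: the function names, the array layout (`w` has shape $|V| \times |H|$), the sample space $\mathcal{X} = \{-1, 0, +1\}$, and the random parameter values are all choices made for this example.

```python
import itertools
import numpy as np


def energy(v, h, b, c, w, sigma2):
    """Energy function of Equation (1); w has shape (|V|, |H|)."""
    quadratic = 0.5 * np.sum((v - b) ** 2 / sigma2)
    interaction = (v / sigma2) @ w @ h
    return quadratic - interaction - c @ h


def visible_mean(h, b, w):
    """mu_i(h) of Equation (4), for all i at once."""
    return b + w @ h


def hidden_field(v, c, w, sigma2):
    """lambda_j(v) of Equation (6), for all j at once."""
    return c + w.T @ (v / sigma2)


def hidden_conditional(v, c, w, sigma2, X):
    """P(h_j = x | v) of Equation (5); row j, one column per state x in X."""
    logits = np.outer(hidden_field(v, c, w, sigma2), X)
    logits -= logits.max(axis=1, keepdims=True)  # numerical stability only
    p = np.exp(logits)
    return p / p.sum(axis=1, keepdims=True)


def gibbs_step(v, b, c, w, sigma2, X, rng):
    """One block Gibbs sweep: h ~ P(h | v), then v ~ P(v | h)."""
    p = hidden_conditional(v, c, w, sigma2, X)
    h = np.array([rng.choice(X, p=p_j) for p_j in p])
    v_new = rng.normal(visible_mean(h, b, w), np.sqrt(sigma2))
    return v_new, h


# Hypothetical toy model: 3 visible units, 2 hidden units, X = {-1, 0, +1}.
rng = np.random.default_rng(0)
n_v, n_h = 3, 2
X = np.array([-1.0, 0.0, 1.0])
b, c = rng.normal(size=n_v), rng.normal(size=n_h)
w = rng.normal(size=(n_v, n_h))
sigma2 = rng.uniform(0.5, 2.0, size=n_v)
v = rng.normal(size=n_v)

# Brute-force P(h | v), proportional to exp(-E(v, h)), over all |X|^|H| states.
states = [np.array(s) for s in itertools.product(X, repeat=n_h)]
weights = np.array([np.exp(-energy(v, h, b, c, w, sigma2)) for h in states])
brute_force = weights / weights.sum()

# The same distribution from the factorized form of Equation (5).
p = hidden_conditional(v, c, w, sigma2, X)
factorized = np.array([
    np.prod([p[j, idx[j]] for j in range(n_h)])
    for idx in itertools.product(range(len(X)), repeat=n_h)
])

print("max deviation:", np.max(np.abs(brute_force - factorized)))
v_next, h_next = gibbs_step(v, b, c, w, sigma2, X, rng)
print("sampled h:", h_next)
```

The brute-force comparison is only feasible for very small hidden layers, since it enumerates all $|\mathcal{X}|^{|H|}$ hidden configurations. It is included here solely to check that the factorized form in Equation (5) follows from the energy function in Equation (1).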
