Quantifying information transfer and mediation along causal pathways in complex systems

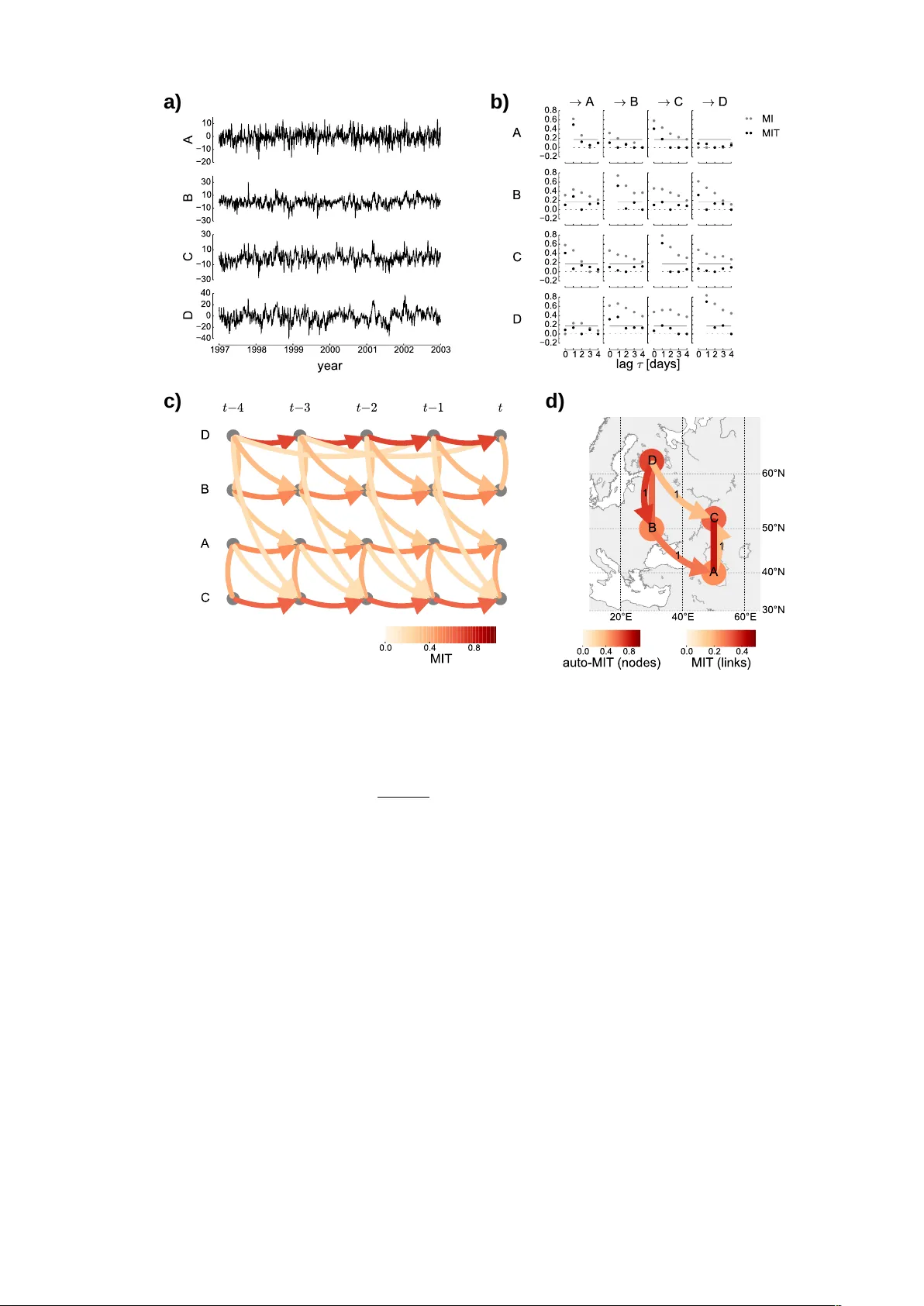

Measures of information transfer have become a popular approach for analyzing interactions in complex systems, such as the Earth or the human brain, from measured time series. Recent work has focused on causal definitions of information transfer excluding…
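For readers new to these measures, the sketch below shows one classic bivariate measure of information transfer, transfer entropy, estimated with simple plug-in (counting) probabilities on discrete time series. It is not the causal measure developed in this work. It conditions only on the target's own one-step past and ignores all other variables. The function name `transfer_entropy`, the history length of 1, and the binary test data are illustrative assumptions.

```python
import math
import random
from collections import Counter


def transfer_entropy(x, y):
    """Plug-in transfer entropy from x to y in bits, history length 1 (illustrative sketch).

    TE_{X->Y} = sum p(y1, y0, x0) * log2[ p(y1 | y0, x0) / p(y1 | y0) ],
    with y1 = y[t+1], y0 = y[t], x0 = x[t]. Inputs are equal-length
    sequences of hashable discrete symbols.
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have equal length >= 2")
    # One (future target, present target, present source) triple per time step.
    triples = list(zip(y[1:], y[:-1], x[:-1]))
    n = len(triples)
    c_full = Counter(triples)                              # counts of (y1, y0, x0)
    c_y0x0 = Counter((y0, x0) for _, y0, x0 in triples)    # counts of (y0, x0)
    c_y1y0 = Counter((y1, y0) for y1, y0, _ in triples)    # counts of (y1, y0)
    c_y0 = Counter(y0 for _, y0, _ in triples)             # counts of y0
    te = 0.0
    for (y1, y0, x0), c in c_full.items():
        p_joint = c / n
        p_given_both = c / c_y0x0[(y0, x0)]                # p(y1 | y0, x0)
        p_given_self = c_y1y0[(y1, y0)] / c_y0[y0]         # p(y1 | y0)
        te += p_joint * math.log2(p_given_both / p_given_self)
    return te


# Toy system (hypothetical): x is i.i.d. fair bits and y copies x with a one-step lag.
random.seed(0)
x = [random.randint(0, 1) for _ in range(10000)]
y = [0] + x[:-1]  # y[t+1] = x[t]

te_xy = transfer_entropy(x, y)  # information flowing from x into y
te_yx = transfer_entropy(y, x)  # reverse direction
print(te_xy, te_yx)
```

In this toy setup the source fully determines the target's next value while the target's own past carries no information about it, so `te_xy` should come out near 1 bit. `te_yx` should be near 0, apart from a small positive bias that plug-in estimators show on finite samples.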

Authors: Jakob Runge