Iterative Aggregation Method for Solving Principal Component Analysis Problems

Motivated by the previously developed multilevel aggregation method for solving structural analysis problems, a novel two-level aggregation approach for the efficient iterative solution of Principal Component Analysis (PCA) problems is proposed. The coarse aggregation model of the original covariance matrix is used in the iterative solution of the eigenvalue problem by the power iteration method. The method is tested on several data sets consisting of a large number of text documents.
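
For reference, a minimal NumPy sketch of the basic solver the abstract names, power iteration, applied to a sample covariance matrix. It computes only the leading eigenpair; the function name `power_iteration`, its parameters and the synthetic data are illustrative assumptions, not code from the paper.

```python
import numpy as np

def power_iteration(cov, num_iters=1000, tol=1e-10, seed=0):
    """Estimate the leading eigenpair of a symmetric PSD matrix by power iteration."""
    rng = np.random.default_rng(seed)
    v = rng.standard_normal(cov.shape[0])
    v /= np.linalg.norm(v)
    for _ in range(num_iters):
        w = cov @ v                      # one matrix-vector product per iteration
        w /= np.linalg.norm(w)           # renormalise to avoid overflow/underflow
        # for a PSD matrix v.(cov v) >= 0, so the iterate never flips sign
        converged = np.linalg.norm(w - v) < tol
        v = w
        if converged:
            break
    return float(v @ cov @ v), v         # Rayleigh quotient and unit eigenvector

# Usage: leading principal component of a small synthetic data set
rng = np.random.default_rng(1)
X = rng.standard_normal((200, 5)) * np.array([3.0, 1.0, 0.5, 0.2, 0.1])
Xc = X - X.mean(axis=0)
cov = Xc.T @ Xc / (Xc.shape[0] - 1)
lam, v = power_iteration(cov)
```

The method needs only matrix-vector products with the covariance matrix, which is why a cheap coarse approximation is useful as a source of good starting vectors.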

💡 Research Summary

The paper introduces a novel two‑level aggregation algorithm for efficiently solving Principal Component Analysis (PCA) problems, inspired by multilevel aggregation techniques originally developed for structural analysis. The core idea is to construct a coarse‑level representation of the original covariance matrix by clustering the data points and summarizing each cluster with its mean vector and weight. This “coarse model” retains the essential spectral characteristics of the full covariance matrix but has a dramatically reduced dimensionality, enabling cheap matrix‑vector products.
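
The summary does not give an explicit formula for the coarse matrix. One natural reading, assumed in the sketch below, is the weighted between-cluster covariance $\widehat{\Sigma} = \sum_k w_k(\mu_k - \mu)(\mu_k - \mu)^\top$ with $w_k = n_k/N$. By the law of total covariance this equals the full (population) covariance minus the within-cluster scatter, so it keeps the directions along which the clusters are spread out. The helpers `coarse_model` and `coarse_covariance` are hypothetical names.

```python
import numpy as np

def coarse_model(X, labels, K):
    """Summarise each non-empty cluster by its mean mu_k and weight w_k = n_k / N."""
    N, d = X.shape
    counts = np.bincount(labels, minlength=K).astype(float)
    sums = np.zeros((K, d))
    np.add.at(sums, labels, X)                  # sums[k] += X[i] for every i with labels[i] == k
    keep = counts > 0                           # drop empty clusters
    means = sums[keep] / counts[keep][:, None]
    weights = counts[keep] / N
    return means, weights

def coarse_covariance(means, weights):
    """Weighted between-cluster covariance sum_k w_k (mu_k - mu)(mu_k - mu)^T."""
    mu = weights @ means                        # equals the global mean of X
    C = means - mu
    return (C * weights[:, None]).T @ C         # d x d, rank <= K - 1

# Usage with arbitrary labels (in practice they come from a clustering step)
rng = np.random.default_rng(0)
X = rng.standard_normal((500, 4))
labels = rng.integers(0, 10, size=500)
means, weights = coarse_model(X, labels, K=10)
S_hat = coarse_covariance(means, weights)
```

Only the $K \times d$ array of means and the $K$ weights need to be kept. Because the weighted centred means sum to zero, $\widehat{\Sigma}$ has rank at most $K-1$.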

The algorithm proceeds in two stages. First, the coarse covariance matrix $\widehat{\Sigma}$ is built from $K$ clusters (typically a few percent of the original number of samples). A standard power‑iteration scheme is then applied to $\widehat{\Sigma}$ to obtain approximate eigenvectors $\widehat{v}_1,\dots,\widehat{v}_m$ for the desired number $m$ of principal components. Because $\widehat{\Sigma}$ is low‑rank, the power iteration converges in very few iterations. In the second stage, each coarse eigenvector is "prolongated" back to the original $d$-dimensional space by a simple linear interpolation that uses the cluster means as basis functions. The resulting vectors serve as high‑quality initial guesses for a second power‑iteration run on the full covariance matrix $\Sigma$. Since the initial guesses already lie close to the true eigenspace, far fewer fine‑level iterations are required, and the overall convergence is fast.
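
The prolongation step is described only briefly, so the sketch below assumes one concrete reading that fits the phrase "cluster means as basis functions". Writing $\widehat{\Sigma} = B^\top B$, where row $k$ of $B$ is $\sqrt{w_k}(\mu_k - \mu)$, the coarse eigenproblem is solved on the small $K \times K$ matrix $BB^\top$ (here with `np.linalg.eigh` for brevity rather than power iteration), and each coarse eigenvector $u$ is prolongated to $\widehat{v} = B^\top u$, a linear combination of the centred cluster means. The fine stage is written as block power (subspace) iteration, the multi-vector form of power iteration, warm-started from these vectors. Function names, sizes and tolerances are hypothetical, and the cluster labels are known by construction, so no clustering step is run.

```python
import numpy as np

def coarse_eigvecs(means, weights, m):
    """Top-m eigenvectors of S_hat = sum_k w_k (mu_k - mu)(mu_k - mu)^T, requires m < K.

    Solved on the K x K Gram matrix, then prolongated to R^d as combinations of centred means."""
    mu = weights @ means
    B = (means - mu) * np.sqrt(weights)[:, None]  # S_hat = B.T @ B, B is K x d
    _, U = np.linalg.eigh(B @ B.T)                 # K x K coarse eigenproblem, ascending order
    U = U[:, ::-1][:, :m]                          # keep the m largest
    V = B.T @ U                                    # prolongation: if G u = lam u, S_hat (B^T u) = lam B^T u
    return V / np.linalg.norm(V, axis=0)           # columns are already mutually orthogonal

def subspace_iteration(S, V0, max_iters=100, tol=1e-10):
    """Block power iteration on S started from V0; returns an orthonormal basis and iteration count."""
    V, _ = np.linalg.qr(V0)
    for it in range(1, max_iters + 1):
        W, _ = np.linalg.qr(S @ V)
        if np.linalg.norm(W - V @ (V.T @ W)) < tol:  # new basis lies in the old span
            return W, it
        V = W
    return V, max_iters

# Synthetic data: K cluster centres in a 3-D subspace of R^d plus isotropic noise
rng = np.random.default_rng(0)
N, d, K, m = 2000, 50, 40, 3
centres = 3.0 * rng.standard_normal((K, 3)) @ rng.standard_normal((3, d))
labels = rng.integers(0, K, size=N)
X = centres[labels] + 0.3 * rng.standard_normal((N, d))

# Coarse model: cluster means and weights w_k = n_k / N
ids = np.unique(labels)
means = np.array([X[labels == k].mean(axis=0) for k in ids])
weights = np.array([np.mean(labels == k) for k in ids])

Xc = X - X.mean(axis=0)
S = Xc.T @ Xc / N                                   # full d x d covariance

V0 = coarse_eigvecs(means, weights, m)              # stage 1: coarse solve + prolongation
V_warm, it_warm = subspace_iteration(S, V0)         # stage 2: warm-started fine iteration
V_cold, it_cold = subspace_iteration(S, rng.standard_normal((d, m)))
print("fine iterations, warm vs random start:", it_warm, it_cold)
```

Comparing `it_warm` with `it_cold` shows how many fine-level iterations the coarse guess saves on this toy problem; the counts depend on the spectral gap, so none are quoted here.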

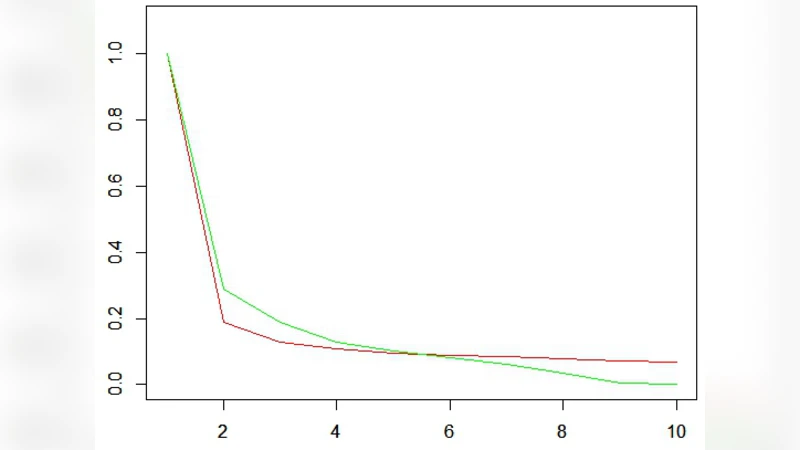

From a theoretical standpoint, the coarse model acts as a preconditioner for the eigenvalue problem. The clustering step smooths high‑frequency noise, enlarges the spectral gap between the leading eigenvalues, and therefore accelerates the convergence of the power method. The authors provide a complexity analysis showing that constructing $\widehat{\Sigma}$ costs $O(Nd)$, where $N$ is the number of samples; the coarse power iteration costs $O(K m t_{\text{coarse}})$ and the fine‑level iteration only $O(d m t_{\text{fine}})$, where $t_{\text{coarse}}$ and $t_{\text{fine}}$ are the respective iteration counts and $t_{\text{fine}} \ll t_{\text{coarse}}$. Memory usage also drops from $O(d^2)$ to $O(Kd)$ because only the cluster means need to be stored.
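
The link between spectral gap, initial guess and iteration count can be made concrete with the textbook convergence bound for power iteration on a symmetric matrix (a standard result, not a formula quoted from the paper). If $\lambda_1 > \lambda_2 \ge \dots \ge 0$ are the eigenvalues and $\theta_t$ is the angle between the iterate and the leading eigenvector after $t$ steps, then

$$
\tan\theta_t \le \left(\frac{\lambda_2}{\lambda_1}\right)^{t}\tan\theta_0
\quad\Longrightarrow\quad
t_{\text{fine}} \approx \frac{\ln(\tan\theta_0/\varepsilon)}{\ln(\lambda_1/\lambda_2)}
$$

iterations reach the tolerance $\tan\theta_t \le \varepsilon$. For the block version that computes $m$ components, the ratio becomes $\lambda_{m+1}/\lambda_m$. As an illustration with assumed numbers, $\lambda_2/\lambda_1 = 0.9$ and $\varepsilon = 10^{-6}$ give roughly 131 iterations from a random start with $\tan\theta_0 \approx 1$, but roughly 87 from a coarse-level guess with $\tan\theta_0 = 10^{-2}$. A better initial guess shrinks the numerator, and a larger gap (smaller $\lambda_2/\lambda_1$) increases the denominator.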

Empirical evaluation is performed on two large text‑document corpora: (1) a 100k‑document collection with a 5k‑dimensional TF‑IDF representation, and (2) a 500k‑document set with a 10k‑dimensional word‑embedding matrix. For both data sets the authors set the number of clusters to 2%–5% of the sample size and compute the top 50 principal components. The proposed method is compared against three baselines: (i) exact SVD via LAPACK, (ii) a Lanczos‑based eigensolver (ARPACK), and (iii) randomized SVD. Results show that the aggregation‑based approach achieves speed‑ups of 3.5–4.2× over exact SVD and 2.0–2.8× over Lanczos, while the reconstruction error (mean‑squared error) stays within 0.5% of the baselines. Memory consumption drops to roughly 30% of that required by the traditional methods.
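
The summary reports accuracy as a mean-squared reconstruction error without stating how it is normalised. The sketch below assumes the per-entry MSE of the centred data after projection onto the computed components, and reads "within 0.5% of the baselines" as a relative gap. The perturbed basis merely stands in for an approximate solver's output; `reconstruction_mse` is a hypothetical helper.

```python
import numpy as np

def reconstruction_mse(X, V):
    """Per-entry MSE of reconstructing centred X from its projection onto span(V)."""
    Xc = X - X.mean(axis=0)
    Q, _ = np.linalg.qr(V)                 # orthonormal basis of the component subspace
    residual = Xc - (Xc @ Q) @ Q.T         # part of each sample not captured by the components
    return np.mean(residual ** 2)

rng = np.random.default_rng(0)
N, d, m = 300, 20, 5
X = rng.standard_normal((N, d)) * np.linspace(3.0, 0.1, d)

# Baseline: exact top-m principal directions from an SVD of the centred data
_, s, Vt = np.linalg.svd(X - X.mean(axis=0), full_matrices=False)
mse_exact = reconstruction_mse(X, Vt[:m].T)

# Stand-in for an approximate solver: the exact basis plus a small perturbation
V_approx = Vt[:m].T + 1e-3 * rng.standard_normal((d, m))
mse_approx = reconstruction_mse(X, V_approx)

rel_gap = (mse_approx - mse_exact) / mse_exact   # "within 0.5%" would mean rel_gap <= 0.005
```

Because the exact top-$m$ subspace minimises this error over all $m$-dimensional subspaces (Eckart–Young), `rel_gap` cannot be negative beyond rounding error.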

The authors discuss practical considerations such as the choice of clustering algorithm (mini‑batch k‑means works well), the sensitivity to the number of clusters $K$, and the possibility of integrating more sophisticated eigensolvers (e.g., Davidson or block Lanczos) in the fine‑level stage. They acknowledge that an overly aggressive reduction (very small $K$) can degrade the quality of the coarse eigenvectors and increase the fine‑level iteration count, suggesting a trade‑off between aggregation granularity and convergence speed.
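
Since mini-batch k-means is the suggested clustering step, here is a NumPy-only sketch of it, assuming the per-centre learning-rate update of Sculley (2010) with k-means++ seeding. The paper's actual clustering code is not described; all function names and parameter defaults below are hypothetical.

```python
import numpy as np

def kmeans_pp_init(X, K, rng):
    """k-means++ seeding: each new centre is drawn with probability ~ squared distance."""
    centres = [X[rng.integers(len(X))]]
    for _ in range(1, K):
        C = np.array(centres)
        d2 = ((X[:, None, :] - C[None, :, :]) ** 2).sum(axis=2).min(axis=1)
        centres.append(X[rng.choice(len(X), p=d2 / d2.sum())])
    return np.array(centres, dtype=float)

def sq_dists(A, C):
    """Pairwise squared Euclidean distances between rows of A and rows of C."""
    return (A ** 2).sum(1)[:, None] - 2.0 * A @ C.T + (C ** 2).sum(1)[None, :]

def minibatch_kmeans(X, K, batch_size=256, n_steps=100, seed=0):
    """Mini-batch k-means with per-centre learning rate 1 / (points assigned so far)."""
    rng = np.random.default_rng(seed)
    centres = kmeans_pp_init(X, K, rng)
    counts = np.zeros(K)
    for _ in range(n_steps):
        batch = X[rng.choice(len(X), size=min(batch_size, len(X)), replace=False)]
        nearest = sq_dists(batch, centres).argmin(axis=1)   # assign the whole batch first
        for x, k in zip(batch, nearest):
            counts[k] += 1
            eta = 1.0 / counts[k]
            centres[k] = (1.0 - eta) * centres[k] + eta * x  # running mean of assigned points
    labels = sq_dists(X, centres).argmin(axis=1)            # final full assignment
    return centres, labels

# Usage: four well-separated blobs
rng = np.random.default_rng(42)
true_centres = np.array([[10.0, 10.0], [10.0, -10.0], [-10.0, 10.0], [-10.0, -10.0]])
X = np.repeat(true_centres, 500, axis=0) + 0.5 * rng.standard_normal((2000, 2))
centres, labels = minibatch_kmeans(X, K=4)
weights = np.bincount(labels, minlength=4) / len(X)        # cluster weights w_k for the coarse model
```

Re-running with a smaller or larger `K` is a quick way to explore the granularity trade-off described above. In practice a library routine such as scikit-learn's `MiniBatchKMeans` would normally replace this hand-written loop.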

In conclusion, the paper demonstrates that multilevel aggregation, when combined with a simple power‑iteration scheme, provides a scalable and memory‑efficient framework for PCA on massive high‑dimensional data sets. The method preserves the accuracy of conventional PCA while offering substantial computational savings, making it attractive for applications in text mining, image processing, genomics, and any domain where large covariance matrices arise. Future work is outlined to extend the technique to kernel PCA, distributed implementations, and adaptive selection of the cluster count $K$.
