A Gibbs-Newton Technique for Enhanced Inference of Multivariate Polya Parameters and Topic Models

Hyper-parameters play a major role in the learning and inference process of latent Dirichlet allocation (LDA). In order to begin the LDA latent variables learning process, these hyper-parameters values need to be pre-determined. We propose an extensi…

Authors: Osama Khalifa, David Wolfe Corne, Mike Chantler

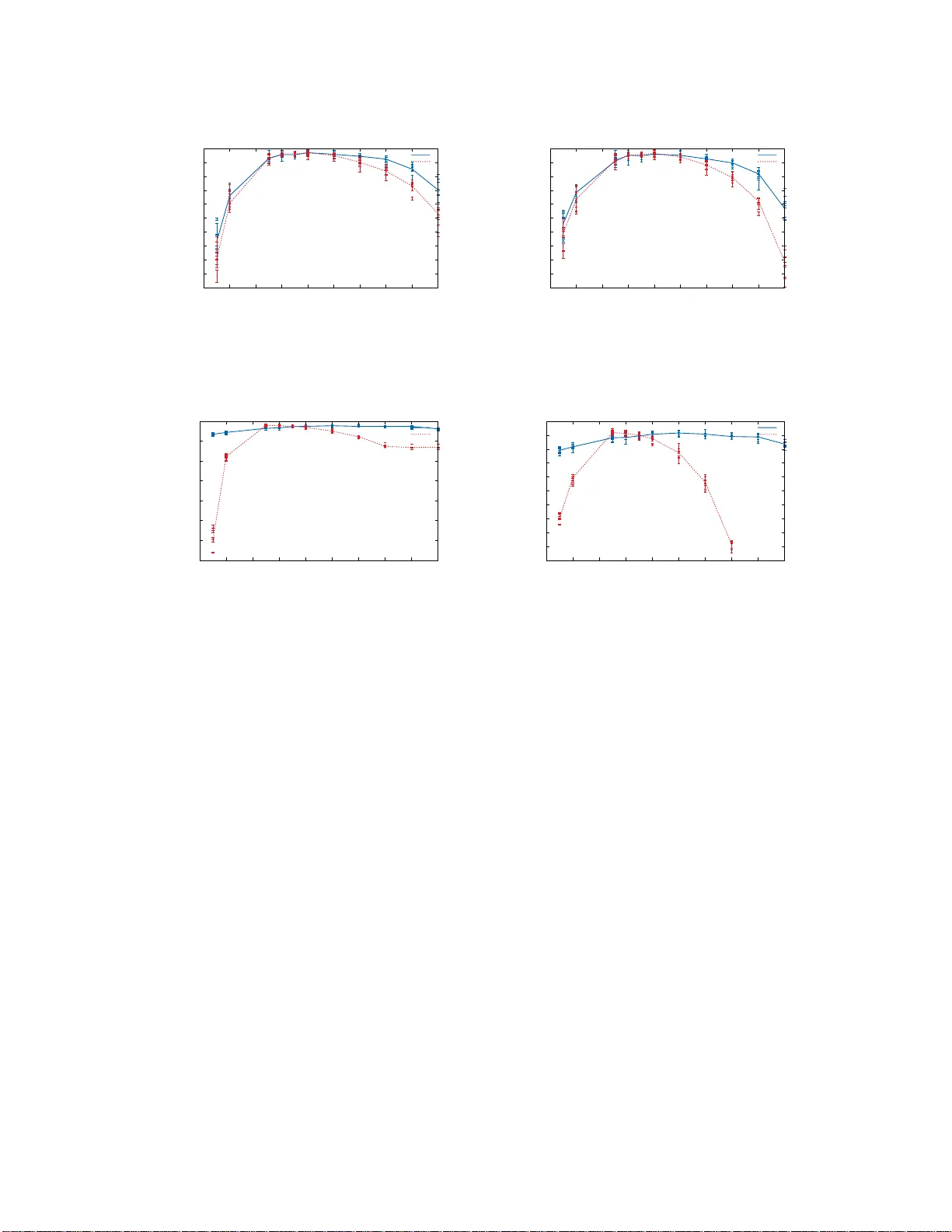

Osama Khalifa (ok32@hw.ac.uk), David Wolfe Corne (d.w.corne@hw.ac.uk), Mike Chantler (m.j.chantler@hw.ac.uk)
Mathematical and Computer Science, Heriot-Watt University, Riccarton, Edinburgh, United Kingdom

Abstract

Hyper-parameters play a major role in the learning and inference process of latent Dirichlet allocation (LDA). In order to begin the LDA latent variables learning process, these hyper-parameter values need to be pre-determined. We propose an extension for LDA that we call ‘Latent Dirichlet allocation Gibbs Newton’ (LDA-GN), which places non-informative priors over these hyper-parameters and uses Gibbs sampling to learn appropriate values for them. At the heart of LDA-GN is our proposed ‘Gibbs-Newton’ algorithm, which is a new technique for learning the parameters of multivariate Polya distributions. We report Gibbs-Newton performance results compared with two prominent existing approaches to the latter task: Minka’s fixed-point iteration method and the Moments method. We then evaluate LDA-GN in two ways: (i) by comparing it with standard LDA in terms of the ability of the resulting topic models to generalize to unseen documents; (ii) by comparing it with standard LDA in its performance on a binary classification task.

Keywords: Topic Modelling, LDA, Gibbs Sampling, Perplexity, Dirichlet, Multivariate Polya

1. Introduction

Large text corpora are increasingly abundant as a result of ever-speedier computational processing capabilities and ever-cheaper means of data storage.
This has led to increased interest in the automated extraction of useful information from such corpora, and particularly in the task of automated characterization and/or summarization of each document in a corpus, as well as the corpus as a whole.

It is generally tacitly understood that the first step in characterizing or describing an individual document is to identify the topics that are covered in that document. Thus, there is much current research into topic modelling methods such as in (Hofmann, 1999; Blei et al., 2003; Griffiths and Steyvers, 2004; Buntine and Jakulin, 2004); these are algorithms that extract structured semantic topics from a collection of documents. These algorithms have many applications in various fields such as genetics (Pritchard et al., 2000), image analysis (Fei-Fei and Perona, 2005; Russell et al., 2006; Barnard et al., 2003), survey data processing (Erosheva et al., 2007) and social media analysis (Airoldi et al., 2007). Most current topic modelling methods are based on the well-known ‘bag-of-words’ representation; in this approach, a document is represented simply as a bag of words, where word counts are preserved but their order in the original document is ignored. Current topic modelling methods also tend to use probabilistic models, involving many observed and hidden variables which need to be learned from training data. Latent Dirichlet allocation (LDA) (Blei et al., 2003), the springboard for many other topic modelling methods, is the simplest topic modelling approach and the most common one in use. However, pre-determined hyper-parameters play a major role in LDA’s learning and inference process; most authors, whether they use LDA or other algorithms, use fixed hyper-parameter values.

In this paper, a new extension for LDA is proposed, which removes the need to pre-determine the hyper-parameters. The basic idea behind this new version of LDA, which we call ‘Latent Dirichlet allocation Gibbs Newton’ (LDA-GN), is to place non-informative uniform priors over the LDA hyper-parameters $\alpha$ and $\beta$. Each component in $\alpha$ and $\beta$ is sampled from a uniform distribution. A non-informative prior is used since we generally do not have prior information about these parameters. We evaluate LDA-GN by comparing it with standard LDA using its recommended settings for $\alpha$ and $\beta$ as described and tested in (Wallach et al., 2009a). This comparison is based on two evaluation metrics. Firstly, the perplexity of the inferred topic model, measured on unseen test documents (this is a common approach in the literature to evaluate topic models); secondly, we test the inferred topic model’s performance on a supervised task such as spam filtering.

At the heart of LDA-GN is what we call a ‘Gibbs-Newton’ (GN) approach for learning the hyper-parameters of a multivariate Polya distribution. Within LDA-GN, the role of GN is to learn the parameters for what amounts to the combination of two distinct multivariate Polya distributed data streams that are assumed in the LDA model. However, we also extract GN as a standalone method, since it is able to learn the parameters for any data distributed under a multivariate Polya distribution, and we compare it with two prominent methods for this task: the Moments method suggested by Ronning (1989) and Minka’s fixed-point iteration method (Minka, 2000) enhanced by Wallach (2008). A Java implementation for LDA-GN and also for standalone GN is provided at http://is.gd/GNTMOD.

The rest of this paper is organised as follows. Firstly, for completeness, we provide a brief description of standard LDA; this includes an elaboration of the inference and learning algorithms involved in standard LDA, and some discussion of the effect of the hyper-parameters. Following that, we briefly review current algorithms for learning the parameters of multivariate Polya distributions. In the succeeding section, we present the proposed GN algorithm, and we evaluate it by comparison with other methods in terms of accuracy and speed. Afterwards, the proposed new LDA extension, LDA-GN, is detailed. We then move on to a discussion of our evaluation metrics, followed by an evaluation of LDA-GN, before a concluding discussion.

2. An Overview of Latent Dirichlet Allocation

Latent Dirichlet Allocation (LDA) (Blei et al., 2003) is an unsupervised generative model to discover hidden topics in a collection of documents or corpus $W = \{W_1, W_2, \ldots, W_M\}$, where $M$ is the total number of documents in the corpus $W$ and $W_i$ is the $i$-th document in this corpus. This model treats words as observed variables and topics as unobserved latent variables. The basic idea is to consider each document $W_d$ as having been generated by sampling from a mixture of latent topics $\theta_d$. Each topic $\varphi_k$ is a multinomial distribution over the full set of vocabulary terms in the corpus. By ‘vocabulary terms’ we mean the list of unique word tokens that appear in the corpus. Dirichlet distributions $Dir(\theta \mid \alpha)$ and $Dir(\varphi \mid \beta)$ are used to model the $\theta$ and $\varphi$ variables respectively, where $\alpha$ and $\beta$ are the concentration parameters of the Dirichlet distributions and the model’s hyper-parameters.

Figure 1: LDA model (plate diagram with nodes $\alpha$, $\theta$, $Z$, $W$, $\varphi$, $\beta$ and plates $N$, $M$, $K$).

Figure 1 shows a graphical representation of the LDA model using plate notation, where $K$ is the total number of latent topics and $Z$ is the topic assignments for each word in each document. Thus, the LDA model’s generative process can be described as follows: at the beginning of the process, $K$ topic distributions $\varphi$ are sampled from a Dirichlet distribution over all vocabulary terms with parameter $\beta$. This set of topics is then used to represent all documents in the corpus. For each document $W_d$ in the corpus, a topic mixture $\theta_d$ is sampled from a Dirichlet distribution with parameter $\alpha$. Then, in order to sample $W_{d,t}$, the $t$-th word in the document $W_d$, a topic $Z_{d,t}$ is sampled from a multinomial distribution with parameter $\theta_d$. After that, the word $W_{d,t}$ itself is sampled from the multinomial distribution with parameter $\varphi_{Z_{d,t}}$, which is the word distribution of topic $Z_{d,t}$. Consequently, there are two Dirichlet distributions. The first one is a distribution over the $K$-dimensional simplex, which is used to model topic mixtures. The second one is a distribution over the $V$-dimensional simplex, which is used to model topic distributions, where $V$ is the total number of corpus vocabulary terms. LDA assumes that the samples of each specific variable are i.i.d.

2.1 LDA Learning and Inference

From Figure 1 and the generative process described above, the LDA joint distribution is given by the following equation:

$$P(W, Z, \theta, \varphi \mid \alpha, \beta) = \prod_{k=1}^{K} P(\varphi_k \mid \beta) \prod_{d=1}^{M} P(\theta_d \mid \alpha) \prod_{t=1}^{N_d} P(Z_{d,t} \mid \theta_d)\, P(W_{d,t} \mid \varphi_{Z_{d,t}}),$$

where $N_d$ is the number of words in the document $W_d$. The conjugacy between the Dirichlet and multinomial distributions allows $\theta$ and $\varphi$ to be marginalized out:

$$P(W, Z \mid \alpha, \beta) = \prod_{d=1}^{M} \frac{B(n_{d,\circ} + \alpha)}{B(\alpha)} \cdot \prod_{k=1}^{K} \frac{B(n^{k}_{\circ} + \beta)}{B(\beta)}.$$
where $n_{d,\circ}$ is a vector of length $K$, and each component value $n^{k}_{d,\circ}$ represents the number of words in document $W_d$ assigned to the topic $k$. On the other hand, $n^{k}_{\circ}$ is a vector of length $V$; each component value $n^{k}_{\circ,r}$ represents the number of instances of term $r$ in the whole corpus that are assigned to topic $k$. $B(\alpha)$ is the Dirichlet distribution’s normalization constant, a multivariate generalization of the bivariate Beta function (which is itself the normalizing constant of the Beta distribution). The Dirichlet distribution’s normalization constant is given by the following formula:

$$B(\alpha) = \frac{\prod_{k=1}^{K} \Gamma(\alpha_k)}{\Gamma\left(\sum_{k=1}^{K} \alpha_k\right)}.$$

The key inference problem is the computation of the posterior distribution given by the formula:

$$P(Z \mid W, \alpha, \beta) = \frac{P(W, Z \mid \alpha, \beta)}{P(W \mid \alpha, \beta)} = \frac{\prod_{d=1}^{M} \prod_{t=1}^{N_d} P(W_{d,t}, Z_{d,t} \mid \alpha, \beta)}{\prod_{d=1}^{M} \prod_{t=1}^{N_d} \sum_{k=1}^{K} P(Z_{d,t} = k, W_{d,t} \mid \alpha, \beta)}.$$

Unfortunately, the exact calculation of the posterior distribution is generally intractable due to the denominator. Its calculation involves summing over all possible settings of the topic assignment variable $Z$. The number of such settings is exponential, namely $K^N$, where $N = \sum_{d=1}^{M} N_d$ is the total number of corpus words. However, there are several approximation algorithms to sample from the posterior distribution which can be used for LDA, such as variational inference methods (Blei et al., 2003; Hoffman et al., 2013), expectation propagation (Minka and Lafferty, 2002), and Gibbs sampling (Steyvers and Griffiths, 2007; Griffiths and Steyvers, 2004; Pritchard et al., 2000). Variational methods and Markov-chain Monte Carlo methods such as Gibbs sampling are widely used in the literature. Gibbs sampling, despite being the slowest, is widely considered to provide the most accurate results (Namata et al., 2009). However, Asuncion et al.
(2009) show that with appropriate values of the hyper-parameters $\alpha$ and $\beta$ these methods provide almost the same level of accuracy. In this paper, Gibbs sampling is used for all experiments.

2.1.1 The LDA Gibbs Sampler

Gibbs sampling is a Markov-chain Monte Carlo (MCMC) algorithm, which can be seen as a special case of the Metropolis–Hastings algorithm (Metropolis et al., 1953). It can be used to obtain an observation sequence from a high-dimensional multivariate probability distribution. Consequently, the sequence can be used to approximate a marginal distribution for one or a subset of the model’s variables. In addition, it can be used to compute an integral over one of the hidden variables, and consequently compute its expected value. Thanks to the conjugacy between the multinomial and Dirichlet distributions, a ‘collapsed’ Gibbs sampler (Liu, 1994) can be implemented for LDA, where the $\theta$ and $\varphi$ variables can be analytically integrated out before carrying out the Gibbs sampling process. This allows us to sample directly from the distribution $P(Z \mid W, \alpha, \beta)$ instead of the distribution $P(Z \mid \theta, \varphi, W, \alpha, \beta)$. This in turn reduces the number of hidden variables in the LDA model, and makes inference and learning faster.

In order to build a Gibbs sampler for a model with one multidimensional hidden variable $x$ and observed variable $D$, the full conditionals $P(x_i \mid x_{\neg i}, D)$ need to be calculated, where $x_{\neg i}$ represents all other dimensions of variable $x$ excluding dimension $i$. Thus, the Gibbs sampling process involves repetition of two steps:

1. Choose a dimension $i$ (order is not important).
2. Sample $x_i$ from the distribution $P(x_i \mid x_{\neg i}, D)$.
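
The two generic Gibbs steps can be illustrated on a toy target that is not part of the paper: a zero-mean, unit-variance bivariate normal with correlation `rho`, whose full conditionals are known in closed form. The function names, the target distribution and the burn-in length below are assumptions chosen only for illustration; there is no observed variable $D$ in this toy case.

```python
import math
import random


def gibbs_bivariate_normal(rho, n_samples, burn_in=500, seed=0):
    """Gibbs sampling for a standard bivariate normal with correlation rho."""
    rng = random.Random(seed)
    x = [0.0, 0.0]                          # current state (x_1, x_2)
    cond_sd = math.sqrt(1.0 - rho * rho)    # std. dev. of each full conditional
    samples = []
    for it in range(burn_in + n_samples):
        for i in (0, 1):                    # step 1: choose a dimension i
            other = x[1 - i]
            # step 2: draw x_i from P(x_i | x_not_i) = N(rho * other, 1 - rho^2)
            x[i] = rng.gauss(rho * other, cond_sd)
        if it >= burn_in:
            samples.append((x[0], x[1]))
    return samples


def correlation(samples):
    """Empirical correlation of a list of (x_1, x_2) pairs."""
    n = len(samples)
    m0 = sum(s[0] for s in samples) / n
    m1 = sum(s[1] for s in samples) / n
    cov = sum((s[0] - m0) * (s[1] - m1) for s in samples) / n
    v0 = sum((s[0] - m0) ** 2 for s in samples) / n
    v1 = sum((s[1] - m1) ** 2 for s in samples) / n
    return cov / math.sqrt(v0 * v1)


samples = gibbs_bivariate_normal(rho=0.8, n_samples=20000)
estimated_rho = correlation(samples)   # should lie close to the target 0.8
```

The retained sequence approximates the joint distribution, so marginal quantities such as the correlation can be estimated directly from it.
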

For LDA, samples are required from the posterior distribution $P(Z \mid W, \alpha, \beta)$; thus the full conditional distributions $P(Z_{(d,t)} \mid Z_{\neg(d,t)}, W, \alpha, \beta)$ should be defined, where $Z_{\neg(d,t)}$ represents the topic assignment values for all corpus words excluding the $t$-th word in document $W_d$. Assuming that the $t$-th word in document $W_d$ is a word instance of term $v$, that is $W_{(d,t)} = v$, then:

$$\begin{aligned}
P(Z_{(d,t)} = k \mid Z_{\neg(d,t)}, W, \alpha, \beta)
&= \frac{P(Z_{(d,t)} = k, Z_{\neg(d,t)}, W \mid \alpha, \beta)}{P(Z_{\neg(d,t)}, W_{\neg(d,t)} \mid \alpha, \beta)\, P(W_{(d,t)} \mid \alpha, \beta)} \\
&\propto \frac{P(Z_{(d,t)} = k, Z_{d,\neg t}, W_d \mid \alpha, \beta)}{P(Z_{d,\neg t}, W_{d,\neg t} \mid \alpha, \beta)} \\
&\propto \left(n^{k,\neg(d,t)}_{d,\circ} + \alpha_k\right) \frac{n^{k,\neg(d,t)}_{\circ,v} + \beta_v}{\sum_{r=1}^{V} \left(n^{k,\neg(d,t)}_{\circ,r} + \beta_r\right)},
\end{aligned}$$

where $W_{d,\neg t}$ is the words of document $W_d$ excluding its $t$-th word and $Z_{d,\neg t}$ is the topic assignments of the words in $W_{d,\neg t}$. Also, $n^{k,\neg(d,t)}_{d,\circ}$ is the number of words in document $W_d$ assigned to topic $k$ excluding the document’s $t$-th word, whereas $n^{k,\neg(d,t)}_{\circ,v}$ is the number of word instances of term $v$ assigned to topic $k$ from all corpus documents excluding the $t$-th word in document $W_d$.

Finally, we need to construct values of $\theta$ and $\varphi$ which correspond to the setting $Z$. By definition, these two variables are parameters of multinomial distributions with Dirichlet priors. Thus, by conjugacy, their posteriors given $Z$ are again Dirichlet distributions:

$$\theta_d \mid Z_d, \alpha \sim Dir(n_{d,\circ} + \alpha), \qquad \varphi_k \mid Z, \beta \sim Dir(n^{k}_{\circ} + \beta),$$

where $n_{d,\circ}$ is a vector of topic observation counts in the document $W_d$ and $n^{k}_{\circ}$ is a vector of term observation counts for topic $k$. Therefore, using the expectation of the Dirichlet distribution, $\theta$ and $\varphi$ corresponding to the setting $Z$ are given by:

$$\theta^{k}_{d} = \frac{n^{k}_{d,\circ} + \alpha_k}{\sum_{i=1}^{K} \left(n^{i}_{d,\circ} + \alpha_i\right)} \tag{1}$$

$$\varphi^{v}_{k} = \frac{n^{k}_{\circ,v} + \beta_v}{\sum_{r=1}^{V} \left(n^{k}_{\circ,r} + \beta_r\right)}. \tag{2}$$

Consequently, LDA’s collapsed Gibbs sampling algorithm is given by Algorithm 1, where $n^{k}_{\circ,\circ} = \sum_{r=1}^{V} n^{k}_{\circ,r}$ and $\beta_\circ = \sum_{r=1}^{V} \beta_r$.

    Algorithm 1: LDA collapsed Gibbs sampler
    Input: W, the words of the corpus; α and β, the model parameters.
    Output: Z, the topic assignments; θ, the topic mixtures; ϕ, the topic distributions.
    Randomly initialize Z with integers ∈ [1..K] and set the counts n accordingly
    repeat
        for d = 1 to M do
            for t = 1 to N_d do
                v ← W_{d,t}; k ← Z_{d,t}
                n^k_{d,∘} ← n^k_{d,∘} − 1; n^k_{∘,v} ← n^k_{∘,v} − 1; n^k_{∘,∘} ← n^k_{∘,∘} − 1
                k ∼ (n^k_{d,∘} + α_k) · (n^k_{∘,v} + β_v) / (n^k_{∘,∘} + β_∘)
                Z_{d,t} ← k
                n^k_{d,∘} ← n^k_{d,∘} + 1; n^k_{∘,v} ← n^k_{∘,v} + 1; n^k_{∘,∘} ← n^k_{∘,∘} + 1
            end for
        end for
    until convergence
    Calculate θ using Equation 1
    Calculate ϕ using Equation 2
    return Z, θ, ϕ

2.2 LDA Model Hyper-parameters

The hyper-parameters $\alpha$ and $\beta$ play a large role in learning and building high-quality topic models (Asuncion et al., 2009; Wallach et al., 2009a; Hutter et al., 2014). Typically, symmetric values of $\alpha$ and $\beta$ are used in the literature. Using symmetric $\alpha$ values means that all topics have the same chance to be assigned to a fixed number of documents. Symmetric $\beta$ values mean that all terms, frequent and infrequent ones alike, have the same chance to be assigned to a fixed number of topics. However, according to Wallach et al. (2009a), using asymmetric $\alpha$ and symmetric $\beta$ tends to give the best performance in terms of the inferred model’s ability to generalise to unseen documents. The hyper-parameters $\alpha$ and $\beta$ generally have a smoothing effect over multinomial variables and they control the sparsity of $\theta$ and $\varphi$ respectively. The sparsity of $\theta$ is controlled by $\alpha$; hence smaller $\alpha$ values make the model prefer to describe each document using a smaller number of topics.
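
The effect of the concentration parameter on sparsity can be checked numerically. The sketch below is only an illustration under assumed settings (a symmetric parameter, 10 topics, 2000 simulated documents and a weight threshold of 0.05, none of which come from the paper): it draws topic mixtures from a Dirichlet distribution and counts how many topics receive a non-negligible weight.

```python
import random


def sample_dirichlet(alpha, rng):
    """Draw one sample from Dir(alpha) by normalising Gamma(alpha_i, 1) draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [g / total for g in draws]


def mean_active_topics(alpha_value, n_topics=10, n_docs=2000,
                       threshold=0.05, seed=1):
    """Average number of topics whose weight exceeds `threshold`
    when theta ~ Dir(alpha_value, ..., alpha_value)."""
    rng = random.Random(seed)
    alpha = [alpha_value] * n_topics
    active = 0
    for _ in range(n_docs):
        theta = sample_dirichlet(alpha, rng)
        active += sum(1 for weight in theta if weight > threshold)
    return active / n_docs


sparse = mean_active_topics(0.1)    # small alpha: a few dominant topics
dense = mean_active_topics(10.0)    # large alpha: weight spread over most topics
```

With these assumed settings the small-parameter case should use markedly fewer topics per simulated document than the large-parameter case, which is the behaviour described above for $\theta$; the same reasoning applies to $\beta$ and $\varphi$.
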
The sparsity of $\varphi$ is controlled by $\beta$; hence smaller $\beta$ values make the model reluctant to assign the corresponding terms to multiple topics. Consequently, similar words with similarly small $\beta$ values tend to be assigned to the same subset of topics.

3. Estimation of Multivariate Polya Distribution Parameters

The multivariate Polya distribution, also known as the Dirichlet-Multinomial distribution, is a compound distribution. Sampling from the multivariate Polya distribution involves sampling a vector $\rho$ from a $K$-dimensional Dirichlet distribution with parameter $\alpha$ and then drawing a set of discrete samples from a categorical distribution with parameter $\rho$. This process corresponds to the ‘Polya urn’, which comprises sampling with replacement from an urn containing coloured balls. Every time a ball is sampled, its colour is observed and it is replaced into the urn; then an additional ball with the same colour is added to the urn.

An inspection of the LDA model reveals that the model comprises two multivariate Polya distributions to model the data. The first distribution is used to model the distribution of the documents over topics given multinomial counts. These counts represent the numbers of words assigned to each topic for each document. The second distribution models the distribution of the topics over vocabulary terms, given multinomial counts of the word instances assigned to different topics in the corpus as a whole. Thus, accurate methods to learn multivariate Polya distribution parameters can enhance the quality of LDA topic modelling at the level of documents over topics, as well as at the level of topics over vocabulary terms.

Consider a set of data-count vectors $D = \{\pi_1, \pi_2, \ldots, \pi_N\}$, where $\pi^{i}_{j}$ is the number of times the outcome was $i$ in the $j$-th sample.
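
Count vectors of this kind can be simulated directly with the urn scheme described above. The following is a minimal sketch, assuming a hypothetical parameter vector and sample sizes; the initial urn content for colour $i$ is taken to be the (possibly fractional) weight $\alpha_i$.

```python
import random


def polya_urn_counts(alpha, n_draws, rng):
    """Simulate one multivariate Polya count vector with the urn scheme.

    The urn starts with weight alpha[i] for colour i; every drawn ball is
    returned together with one additional ball of the same colour.
    """
    counts = [0] * len(alpha)
    for _ in range(n_draws):
        weights = [a + c for a, c in zip(alpha, counts)]
        colour = rng.choices(range(len(alpha)), weights=weights)[0]
        counts[colour] += 1
    return counts


rng = random.Random(42)
alpha = [0.5, 1.0, 2.0]     # hypothetical parameter vector, K = 3
# D = {pi_1, ..., pi_5}: five samples of 100 draws each
data = [polya_urn_counts(alpha, 100, rng) for _ in range(5)]
```

Each entry `data[j][i]` plays the role of $\pi^{i}_{j}$, the number of times outcome $i$ was observed in sample $j$.
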
Assuming that these data are distributed according to a multivariate Polya distribution with parameter $\alpha$, the basic idea behind learning this parameter from data is to maximize the likelihood:

$$\mathcal{L}(\alpha \mid D) = \prod_{j=1}^{N} P(\pi_j \mid \alpha) = \prod_{j=1}^{N} \int_{\rho_j} P(\pi_j \mid \rho_j)\, P(\rho_j \mid \alpha)\, d\rho_j = \prod_{j=1}^{N} \frac{\Gamma(\alpha_\circ)}{\Gamma(\pi^{\circ}_{j} + \alpha_\circ)} \prod_{i=1}^{K} \frac{\Gamma(\pi^{i}_{j} + \alpha_i)}{\Gamma(\alpha_i)},$$

where $\alpha_\circ = \sum_{i=1}^{K} \alpha_i$ and $\pi^{\circ}_{j} = \sum_{i=1}^{K} \pi^{i}_{j}$. The research literature is replete with methods to estimate multivariate Polya parameters; however, there is no exact closed-form solution available (Ronning, 1989; Wicker et al., 2008). One of the most accurate methods is Minka’s fixed-point iteration method (Minka, 2000). On the other hand, one of the fastest methods is the Moments method (Minka, 2000; Ronning, 1989; Leeds and Gelfand, 1989). Both will now be briefly described and reviewed.

3.1 The Moments Method

The Moments method is an approximate maximum likelihood technique that is useful as an initialization step for other methods. It provides a fast way to learn approximations to Dirichlet or multivariate Polya distribution parameters directly from data. The Moments method uses known formulae for the first and second moments of the distribution’s density function to calculate the parameter. The first moment (mean) of the multivariate Polya density function is given by the following formula:

$$E[\pi^{i}] = \pi^{\circ} \frac{\alpha_i}{\alpha_\circ}. \tag{3}$$

It is easy to calculate the empirical mean value from data counts. Consequently, all that is required is to calculate the value $\alpha_\circ$ in order to determine the value of the parameter $\alpha$. This can be done using the second moment (variance) value.
The variance of one dimension is enough to calculate $\alpha_\circ$ (Dishon and Weiss, 1980):

$$\mathrm{var}[\pi^{i}] = \frac{E[\pi^{i}]\,(\pi^{\circ} - E[\pi^{i}])\,(\pi^{\circ} + \alpha_\circ)}{\pi^{\circ}\,(1 + \alpha_\circ)},$$

which gives:

$$\alpha_\circ = \frac{\pi^{\circ}\left(E[\pi^{i}]\,(\pi^{\circ} - E[\pi^{i}]) - \mathrm{var}[\pi^{i}]\right)}{\pi^{\circ}\left(\mathrm{var}[\pi^{i}] - E[\pi^{i}]\right) + E[\pi^{i}]^{2}}.$$

However, Ronning (1989) suggests that using the first $K - 1$ dimensions gives more accurate results:

$$\log \alpha_\circ = \frac{1}{K-1} \sum_{i=1}^{K-1} \log\left(\frac{\pi^{\circ}\left(E[\pi^{i}]\,(\pi^{\circ} - E[\pi^{i}]) - \mathrm{var}[\pi^{i}]\right)}{\pi^{\circ}\left(\mathrm{var}[\pi^{i}] - E[\pi^{i}]\right) + E[\pi^{i}]^{2}}\right). \tag{4}$$

3.2 Minka’s Fixed-Point Iteration Method

The basic idea behind Minka’s fixed-point iteration method for maximizing the likelihood is as follows. Starting from an initial guess of the multivariate Polya distribution parameter $\alpha$, a simple lower bound on the likelihood, which is tight at $\alpha$, is constructed. The maximum of this new lower bound is calculated in closed form and becomes a new estimate of $\alpha$ (Minka, 2000). This process is repeated until convergence. Thus, the objective is to maximise the likelihood function for the multivariate Polya distribution:

$$\mathcal{L}(\alpha \mid D) = \prod_{j=1}^{N} \frac{\Gamma(\alpha_\circ)}{\Gamma(\pi^{\circ}_{j} + \alpha_\circ)} \prod_{i=1}^{K} \frac{\Gamma(\pi^{i}_{j} + \alpha_i)}{\Gamma(\alpha_i)}. \tag{5}$$

The following two lower bounds can be used to facilitate the calculation of the maximum likelihood:

$$\frac{\Gamma(x)}{\Gamma(\eta + x)} \ge \frac{\Gamma(\hat{x})}{\Gamma(\eta + \hat{x})}\, e^{(\hat{x} - x)\left(\Psi(\eta + \hat{x}) - \Psi(\hat{x})\right)} \tag{6}$$

and

$$\frac{\Gamma(\eta + x)}{\Gamma(x)} \ge \frac{\Gamma(\hat{x} + \eta)}{\Gamma(\hat{x})} \left(\frac{x}{\hat{x}}\right)^{\hat{x}\left[\Psi(\hat{x} + \eta) - \Psi(\hat{x})\right]}, \tag{7}$$

where $\eta \in \mathbb{Z}_{\ge 0}$ is a non-negative integer, and $\hat{x} \in \mathbb{R}_{>0}$ and $x \in \mathbb{R}_{>0}$ are strictly positive real numbers. The $\Psi$ function is the first derivative of the log-gamma function, known as the digamma function (Davis, 1972):

$$\Psi(x) = \frac{\partial \left[\log \Gamma(x)\right]}{\partial x} = \frac{\Gamma'(x)}{\Gamma(x)}.$$

Substituting Equation 6 and Equation 7 in Equation 5 leads to:

$$\mathcal{L}(\alpha \mid D) \ge \prod_{j=1}^{N} e^{-\alpha_\circ\left[\Psi(\pi^{\circ}_{j} + \alpha^{\star}_{\circ}) - \Psi(\alpha^{\star}_{\circ})\right]} \prod_{i=1}^{K} \alpha_i^{\,\alpha^{\star}_{i}\left[\Psi(\pi^{i}_{j} + \alpha^{\star}_{i}) - \Psi(\alpha^{\star}_{i})\right]} \cdot C, \tag{8}$$

where $\alpha^{\star}_{i}$ and $\alpha^{\star}_{\circ}$ are two real values close to the original values of $\alpha_i$ and $\alpha_\circ$ respectively. The values used here are the previous guesses of $\alpha_i$ and $\alpha_\circ$. $C$ is a constant that comprises all terms which do not involve $\alpha$. Thus, taking the logarithm of both sides of Equation 8 leads to:

$$\log \mathcal{L}(\alpha \mid D) \ge F(\alpha) + C,$$

where $F$ is a function given by the following formula:

$$F(\alpha) = \sum_{j=1}^{N} \left( -\alpha_\circ \left[\Psi(\pi^{\circ}_{j} + \alpha^{\star}_{\circ}) - \Psi(\alpha^{\star}_{\circ})\right] + \sum_{i=1}^{K} \log(\alpha_i) \left[\Psi(\pi^{i}_{j} + \alpha^{\star}_{i}) - \Psi(\alpha^{\star}_{i})\right] \alpha^{\star}_{i} \right).$$

Consequently, it is possible to find the maximum of this bound in closed form, by first calculating the derivative of $F$ with respect to $\alpha_i$ and then solving the equation $\partial F(\alpha) / \partial \alpha_i = 0$:

$$\frac{\partial F(\alpha)}{\partial \alpha_i} = \sum_{j=1}^{N} \left( \left[\Psi(\pi^{i}_{j} + \alpha^{\star}_{i}) - \Psi(\alpha^{\star}_{i})\right] \frac{\alpha^{\star}_{i}}{\alpha_i} - \left[\Psi(\pi^{\circ}_{j} + \alpha^{\star}_{\circ}) - \Psi(\alpha^{\star}_{\circ})\right] \right) = 0.$$

This first-degree equation has a simple solution:

$$\alpha_i = \alpha^{\star}_{i}\, \frac{\sum_{j=1}^{N} \left[\Psi(\pi^{i}_{j} + \alpha^{\star}_{i}) - \Psi(\alpha^{\star}_{i})\right]}{\sum_{j=1}^{N} \left[\Psi(\pi^{\circ}_{j} + \alpha^{\star}_{\circ}) - \Psi(\alpha^{\star}_{\circ})\right]}. \tag{9}$$

Wallach (2008) provides a faster version of this algorithm by using the digamma function recurrence relation. This is done by representing the data count samples as histograms. In other words, let $N$ be the number of samples for a $K$-dimensional multivariate Polya distribution. Then, a more efficient representation would be as $K$ vectors of counts of elements, where the $m$-th cell of the $i$-th vector represents the number of times the count $m$ is observed in the set of $N$ values related to the dimension $i$. This value is represented by:

$$C^{m}_{i} = \sum_{j=1}^{N} \delta(\pi^{i}_{j} - m). \tag{10}$$

Similarly, $C^{m}_{\circ}$ represents the number of times the sum $m$ is observed in the set of $N$ sum values, taken over all dimensions, of the sample counts:

$$C^{m}_{\circ} = \sum_{j=1}^{N} \delta(\pi^{\circ}_{j} - m), \tag{11}$$

where $\pi^{\circ}_{j} = \sum_{i=1}^{K} \pi^{i}_{j}$.
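
The histogram representation of Equations 10 and 11 can be sketched in a few lines. The function name and the toy data are assumptions made for illustration; index 0 of each histogram is kept for convenience even though a count of zero contributes nothing to the later sums.

```python
def count_histograms(data):
    """Build the histograms of Equations 10 and 11 from a list of count vectors.

    data[j][i] is the count of outcome i in sample j. Returns (C, C_total),
    where C[i][m] is the number of samples whose count in dimension i equals m
    and C_total[m] is the number of samples whose total count equals m.
    """
    n_dims = len(data[0])
    totals = [sum(sample) for sample in data]
    C = []
    for i in range(n_dims):
        hist = [0] * (max(sample[i] for sample in data) + 1)
        for sample in data:
            hist[sample[i]] += 1
        C.append(hist)
    C_total = [0] * (max(totals) + 1)
    for total in totals:
        C_total[total] += 1
    return C, C_total


data = [[2, 0, 1], [2, 1, 0], [0, 1, 1]]    # N = 3 toy samples, K = 3
C, C_total = count_histograms(data)
# C       -> [[1, 0, 2], [1, 2], [1, 2]]
# C_total -> [0, 0, 1, 2]
```

When many samples share the same count value, each histogram is much shorter than the list of samples, which is where the saving comes from.
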
Equation 10 and Equation 11 allow us to rewrite Equation 9 in a more efficient way:

$$\alpha_i = \alpha^{\star}_{i}\, \frac{\sum_{m=1}^{\dim(C_i)} C^{m}_{i} \left[\Psi(m + \alpha^{\star}_{i}) - \Psi(\alpha^{\star}_{i})\right]}{\sum_{m=1}^{\dim(C_\circ)} C^{m}_{\circ} \left[\Psi(m + \alpha^{\star}_{\circ}) - \Psi(\alpha^{\star}_{\circ})\right]}, \tag{12}$$

where $C_i$ is a histogram vector for all counts in the $N$ samples associated with the dimension $i$, and $C_\circ$ is a histogram vector for all count sums over all dimensions in the $N$ samples. $\dim(C_i)$ and $\dim(C_\circ)$ are the numbers of elements in the vectors $C_i$ and $C_\circ$ respectively. This new formula speeds up the computation to an extent that depends on how many repeated count values occur in each dimension $i \in [1..K]$. The more repeated values there are, the faster the computation is. Unfortunately, the digamma function call is time-consuming in practice; however, Wallach (2008) suggests that there is room for improving the performance by getting rid of the digamma function call completely. This can be done by taking into consideration the digamma recurrence relation in (Davis, 1972):

$$\Psi(x + 1) = \Psi(x) + \frac{1}{x}.$$

This formula can be extended to any positive integer $m$:

$$\Psi(x + m) = \Psi(x) + \sum_{l=1}^{m} \frac{1}{x + l - 1}.$$

Rewriting gives:

$$\Psi(x + m) - \Psi(x) = \sum_{l=1}^{m} \frac{1}{x + l - 1}. \tag{13}$$

Substituting Equation 13 in Equation 12 leads to:

$$\alpha_i = \alpha^{\star}_{i}\, \frac{\sum_{m=1}^{\dim(C_i)} C^{m}_{i} \sum_{l=1}^{m} \frac{1}{\alpha^{\star}_{i} + l - 1}}{\sum_{m=1}^{\dim(C_\circ)} C^{m}_{\circ} \sum_{l=1}^{m} \frac{1}{\alpha^{\star}_{\circ} + l - 1}}. \tag{14}$$

An efficient implementation of Minka’s fixed-point iteration using Equation 14 is described in Algorithm 2.

Figure 2: Polya distribution generative model (plate diagram with nodes $a$, $\alpha$, $\rho$, $\pi$ and plates $K$, $N$).

3.3 The Proposed GN Method

The parameters of a multivariate Polya distribution or Dirichlet distribution can be learned from data using standard Bayesian methods. In this paper, we focus on the multivariate Polya distribution as it plays a major role in LDA.
Thus, given $N$ samples from a multivariate Polya distribution, the data can be modelled using the generative model shown in Figure 2. The generative process in this case amounts to first sampling a value for each of the $K$ components of $\alpha$ from a uniform distribution with parameters $0$ and $a$. Then, a vector $\rho$ of dimension $K$ is sampled from a Dirichlet distribution with parameter $\alpha$. Finally, a multinomial variable $\pi$ is sampled from the multinomial distribution with parameter $\rho$. A non-informative uniform prior is placed on each component $\alpha_i$ of the parameter vector $\alpha$ because we have no prior knowledge about their values. The model’s joint probability is:

$$P(\alpha, \pi \mid a) = \left[\prod_{j=1}^{N} \int_{\rho} P(\pi_j \mid \rho)\, P(\rho \mid \alpha)\, d\rho\right] \prod_{i=1}^{K} P(\alpha_i \mid a),$$

where $P(\pi \mid \rho) \sim Multinomial(\rho)$, $P(\rho \mid \alpha) \sim Dir(\alpha)$ and $P(\alpha_i \mid a) \sim Uniform(0, a)$. Substituting the probability densities and simplifying further leads to:

$$P(\alpha, \pi \mid a) = \frac{1}{a^{K}} \prod_{j=1}^{N} \frac{\Gamma(\alpha_\circ)}{\Gamma(\pi^{\circ}_{j} + \alpha_\circ)} \prod_{i=1}^{K} \frac{\Gamma(\pi^{i}_{j} + \alpha_i)}{\Gamma(\alpha_i)},$$

where $\pi^{i}_{j}$ represents the count in sample $j$ and dimension $i$, whereas $\pi^{\circ}_{j}$ represents the count sum in sample $j$ over all dimensions. In order to learn values of the hidden variable $\alpha$, a Gibbs sampler needs to be designed.

    Algorithm 2: Minka's fixed-point iteration method
    Input: C, the sample count histograms; C_∘, the sample length histogram.
    Output: α, the parameter of the multivariate Polya distribution.
    Initialize α using Equation 3 and Equation 4 (the Moments method).
    repeat
        α_∘ ← Σ_{i=1..K} α_i
        Dgma ← 0; Dntr ← 0
        for m = 1 to dim(C_∘) do
            Dgma ← Dgma + 1 / (α_∘ + m − 1)
            Dntr ← Dntr + C_∘^m · Dgma
        end for
        for i = 1 to K do
            Dgma ← 0; Nmtr ← 0
            for m = 1 to dim(C_i) do
                Dgma ← Dgma + 1 / (α_i + m − 1)
                Nmtr ← Nmtr + C_i^m · Dgma
            end for
            α_i ← α_i · Nmtr / Dntr
        end for
    until convergence
    return α
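
Algorithm 2 (Minka’s fixed-point iteration with the digamma recurrence) can be sketched in Python as follows. This is an illustrative sketch, not the authors’ Java implementation: the helper names, the toy data and the starting point (all ones rather than the Moments method) are assumptions, and the loop runs a fixed number of iterations instead of testing convergence. The log-likelihood of Equation 5 is tracked only to show that each update does not decrease it.

```python
import math


def histogram(values):
    """hist[m] = number of entries of `values` equal to m (Equations 10, 11)."""
    hist = [0] * (max(values) + 1)
    for v in values:
        hist[v] += 1
    return hist


def weighted_digamma_diff(hist, x):
    """sum_m hist[m] * (Psi(x + m) - Psi(x)), using the recurrence (Eq. 13)."""
    running, total = 0.0, 0.0
    for m in range(1, len(hist)):
        running += 1.0 / (x + m - 1)     # running == Psi(x + m) - Psi(x)
        total += hist[m] * running
    return total


def fixed_point_step(alpha, data):
    """One update of every alpha_i according to Equation 14."""
    hist_total = histogram([sum(sample) for sample in data])
    denom = weighted_digamma_diff(hist_total, sum(alpha))
    new_alpha = []
    for i, a_i in enumerate(alpha):
        hist_i = histogram([sample[i] for sample in data])
        numer = weighted_digamma_diff(hist_i, a_i)
        new_alpha.append(a_i * numer / denom)
    return new_alpha


def log_likelihood(alpha, data):
    """log L(alpha | D) from Equation 5, computed with math.lgamma."""
    a0 = sum(alpha)
    ll = 0.0
    for sample in data:
        ll += math.lgamma(a0) - math.lgamma(sum(sample) + a0)
        for a, count in zip(alpha, sample):
            ll += math.lgamma(count + a) - math.lgamma(a)
    return ll


# Toy count vectors (N = 5, K = 3); every dimension has a positive count.
data = [[5, 1, 0], [2, 3, 1], [7, 0, 2], [1, 4, 1], [3, 2, 2]]
alpha = [1.0, 1.0, 1.0]                  # arbitrary positive starting point
history = [log_likelihood(alpha, data)]
for _ in range(200):
    alpha = fixed_point_step(alpha, data)
    history.append(log_likelihood(alpha, data))
```

Because each step maximizes a lower bound that is tight at the current estimate, the recorded log-likelihood values form a non-decreasing sequence, up to floating-point rounding.
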
The goal of Gibbs sampling here is to approximate the distribution $P(\alpha \mid \pi, a)$. To do so, the full conditional distribution $P(\alpha_k \mid \alpha_{\neg k}, \pi, a)$ is calculated, and each $\alpha_k$ value is then sampled separately:

$$P(\alpha_k \mid \alpha_{\neg k}, \pi, a) = \frac{P(\alpha_k, \alpha_{\neg k}, \pi \mid a)}{P(\alpha_{\neg k}, \pi \mid a)} \propto P(\alpha, \pi \mid a).$$

It is not necessary to calculate the exact probability for Gibbs sampling. A ratio of probabilities is sufficient; thus, starting from the joint distribution:

$$\begin{aligned}
P(\alpha_k \mid \alpha_{\neg k}, \pi, a)
&\propto \prod_{j=1}^{N} \frac{\Gamma(\alpha_\circ)}{\Gamma(\pi^{\circ}_{j} + \alpha_\circ)}\, \frac{\Gamma(\pi^{k}_{j} + \alpha_k)}{a\, \Gamma(\alpha_k)} \prod_{i \ne k} \frac{\Gamma(\pi^{i}_{j} + \alpha_i)}{\Gamma(\alpha_i)} \\
&\propto \prod_{j=1}^{N} \frac{\Gamma(\alpha_\circ)}{\Gamma(\pi^{\circ}_{j} + \alpha_\circ)}\, \frac{\Gamma(\pi^{k}_{j} + \alpha_k)}{\Gamma(\alpha_k)}.
\end{aligned}$$

Instead of sampling from this distribution, the value which maximizes the logarithm of this density function is taken. Thus, the task is to maximize the function $F(\alpha_k)$ which is given by the following formula:

$$F(\alpha_k) = \sum_{j=1}^{N} \left[\log \Gamma(\pi^{k}_{j} + \alpha_k) - \log \Gamma(\alpha_k)\right] - \left[\log \Gamma(\pi^{\circ}_{j} + \alpha_\circ) - \log \Gamma(\alpha_\circ)\right].$$

Setting the first derivative of $F(\alpha_k)$ to zero gives:

$$\frac{\partial F(\alpha_k)}{\partial \alpha_k} = \sum_{j=1}^{N} \left[\Psi(\pi^{k}_{j} + \alpha_k) - \Psi(\alpha_k)\right] - \left[\Psi(\pi^{\circ}_{j} + \alpha_\circ) - \Psi(\alpha_\circ)\right] = 0. \tag{15}$$

Unfortunately, there is no trivial solution for Equation 15, so we use Newton’s method to find its root. Although Equation 15 has multiple roots, we are interested in the positive real root. In order to apply Newton’s method, we need to find the second derivative of $F(\alpha_k)$:

$$\frac{\partial^{2} F(\alpha_k)}{\partial \alpha_k^{2}} = \sum_{j=1}^{N} \left[\Psi_1(\pi^{k}_{j} + \alpha_k) - \Psi_1(\alpha_k)\right] - \left[\Psi_1(\pi^{\circ}_{j} + \alpha_\circ) - \Psi_1(\alpha_\circ)\right],$$

where $\Psi_1(x) = \partial^{2} \log \Gamma(x) / \partial x^{2}$ is the second derivative of the log-gamma function, called the trigamma function (Davis, 1972). It is not important to find a solution with high precision at the beginning, because Equation 15 includes the coefficient $\alpha_\circ = \sum_{k=1}^{K} \alpha_k$.
This coefficient is not accurate in the first iteration of Gibbs sampling as it represents a sum of guessed values. The value of $\alpha_\circ$ is updated after each full iteration of the Gibbs sampler; in other words, after processing all $\alpha_k$ values. Thus, only one iteration of Newton’s method is used for each $\alpha_k$:

$$\alpha_k = \alpha^{\star}_{k} - \frac{\sum_{j=1}^{N} \left( \left[\Psi(\pi^{k}_{j} + \alpha^{\star}_{k}) - \Psi(\alpha^{\star}_{k})\right] - \left[\Psi(\pi^{\circ}_{j} + \alpha^{\star}_{\circ}) - \Psi(\alpha^{\star}_{\circ})\right] \right)}{\sum_{j=1}^{N} \left( \left[\Psi_1(\pi^{k}_{j} + \alpha^{\star}_{k}) - \Psi_1(\alpha^{\star}_{k})\right] - \left[\Psi_1(\pi^{\circ}_{j} + \alpha^{\star}_{\circ}) - \Psi_1(\alpha^{\star}_{\circ})\right] \right)}.$$

Rewriting by taking into consideration the recurrence formulae for the digamma and trigamma functions,

$$\Psi(x + \eta) - \Psi(x) = \sum_{l=1}^{\eta} \frac{1}{x + l - 1}, \qquad \Psi_1(x + \eta) - \Psi_1(x) = \sum_{l=1}^{\eta} \frac{-1}{(x + l - 1)^{2}},$$

gives:

$$\alpha_k = \alpha^{\star}_{k} - \frac{\sum_{j=1}^{N} \left( \sum_{l=1}^{\pi^{k}_{j}} \frac{1}{\alpha^{\star}_{k} + l - 1} - \sum_{l=1}^{\pi^{\circ}_{j}} \frac{1}{\alpha^{\star}_{\circ} + l - 1} \right)}{\sum_{j=1}^{N} \left( \sum_{l=1}^{\pi^{k}_{j}} \frac{-1}{(\alpha^{\star}_{k} + l - 1)^{2}} - \sum_{l=1}^{\pi^{\circ}_{j}} \frac{-1}{(\alpha^{\star}_{\circ} + l - 1)^{2}} \right)}.$$

Rewriting using the histogram counts $C$ for more computational efficiency:

$$\alpha_k = \alpha^{\star}_{k} - \frac{L_1 - \sum_{m=1}^{\dim(C_k)} C^{m}_{k} \sum_{l=1}^{m} \frac{1}{\alpha^{\star}_{k} + l - 1}}{L_2 - \sum_{m=1}^{\dim(C_k)} C^{m}_{k} \sum_{l=1}^{m} \frac{-1}{(\alpha^{\star}_{k} + l - 1)^{2}}},$$

where $L_1$ and $L_2$ are given by the following formulae:

$$L_1 = \sum_{m=1}^{\dim(C_\circ)} C^{m}_{\circ} \sum_{l=1}^{m} \frac{1}{\alpha^{\star}_{\circ} + l - 1}, \qquad L_2 = \sum_{m=1}^{\dim(C_\circ)} C^{m}_{\circ} \sum_{l=1}^{m} \frac{-1}{(\alpha^{\star}_{\circ} + l - 1)^{2}}.$$

The complete GN method is described in Algorithm 3.

3.4 Experimental Evaluation: GN

In this section, two main experiments are designed to assess the performance of the GN method against the Moments method and Minka’s fixed-point iteration. The first experiment is intended to evaluate accuracy, whereas the second experiment is aimed at assessing its efficiency. Artificial data are used in this section, allowing us to compare methods under a wide variety of conditions.
The number of multivariate Polya samples used ranges from 10 to 1000, and the number of elements used to generate each sample falls in the range [1000, 20000].

3.4.1 Accuracy Benchmark

In order to assess the accuracy of the proposed method, two categories of data sets are considered. Both categories are designed to use a 10-dimensional multivariate Polya distribution with known parameters $\alpha$. The first category has small component values in $\alpha$, being real numbers sampled uniformly from the range ]0, 1]. The second category has relatively large $\alpha$ component values, in the range ]0, 50], again sampled uniformly. Each category can contain from 50 to 1000 multinomial count vectors or multivariate Polya samples.

Algorithm 3: GN method pseudocode
Input: $C$, the sample count histograms; $C_\circ$, the sample length histogram.
Output: $\alpha$, the parameter of the multivariate Polya distribution.
  Initialize $\alpha$ using Equation 3 and Equation 4 (the Moments method).
  repeat
    $Dgma \leftarrow 0$, $Tgma \leftarrow 0$
    $L_1 \leftarrow 0$, $L_2 \leftarrow 0$
    $\alpha_\circ \leftarrow \sum_{i=1}^{K} \alpha_i$
    for $m = 1$ to $\dim(C_\circ)$ do
      $Dgma \leftarrow Dgma + \frac{1}{\alpha_\circ + m - 1}$
      $Tgma \leftarrow Tgma - \frac{1}{(\alpha_\circ + m - 1)^2}$
      $L_1 \leftarrow L_1 + C_\circ^m \, Dgma$
      $L_2 \leftarrow L_2 + C_\circ^m \, Tgma$
    end for
    for $i = 1$ to $K$ do
      $Dgma \leftarrow 0$, $Tgma \leftarrow 0$
      $Nmtr \leftarrow 0$, $Dntr \leftarrow 0$
      for $m = 1$ to $\dim(C_i)$ do
        $Dgma \leftarrow Dgma + \frac{1}{\alpha_i + m - 1}$
        $Tgma \leftarrow Tgma - \frac{1}{(\alpha_i + m - 1)^2}$
        $Nmtr \leftarrow Nmtr + C_i^m \, Dgma$
        $Dntr \leftarrow Dntr + C_i^m \, Tgma$
      end for
      $\alpha_i^{new} \leftarrow \alpha_i - \frac{L_1 - Nmtr}{L_2 - Dntr}$
      if $\alpha_i^{new} < 0$ then
        $\alpha_i^{new} \leftarrow \alpha_i / 2$
      end if
      $\alpha_i \leftarrow \alpha_i^{new}$
    end for
  until convergence
  return $\alpha$

Figure 3: The differences between actual and learned values of $\alpha$ parameter components for small values of $\alpha$, $\alpha_i \in\ ]0, 1]$. The smaller the difference the better. (a) The proposed GN method, (b) Minka's fixed-point iteration method, (c) The Moments method. Each panel plots Difference against $\alpha_i$.

Figure 4: The differences between actual and learned values of $\alpha$ parameter components for large values of $\alpha$, $\alpha_i \in\ ]0, 50]$. The smaller the difference the better. (a) The proposed GN method, (b) Minka's fixed-point iteration method, (c) The Moments method. Each panel plots Difference against $\alpha_i$.

The Moments method, Minka's fixed-point iteration method, and the proposed GN method are used to learn the parameter $\alpha$ vectors from the data. Given the resulting $\alpha$ vector, the difference between each component $\alpha_i$ and its actual value is calculated and registered. 80 experiments were done, 40 for each category of data set, allowing these methods to be evaluated under highly varied settings in terms of data sparsity and number of samples needed. Figure 3 displays the differences between small $\alpha$ components and their actual values using the first set of data. Figure 4 shows the differences when $\alpha_i$ has relatively large values, in the second category of data. The figures indicate that Minka's fixed-point iteration and the GN method record similar levels of accuracy, and both are clearly better than the Moments method in this respect. This is not surprising, as Minka's fixed-point iteration method and the GN method are ultimately maximizing the same log-likelihood function.
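One sweep of the GN update can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' Java implementation: it works directly on raw count vectors (the recurrence form of the update) rather than on the histograms $C$ of Algorithm 3, the function names `rising_sums`, `gn_sweep` and `gn_fit` are hypothetical, and the initial $\alpha$ is supplied by the caller (the paper initializes it with the Moments method). The tolerance and iteration cap in `gn_fit` are assumed defaults.

```python
from typing import List, Sequence, Tuple


def rising_sums(x: float, n: int) -> Tuple[float, float]:
    """Return (Psi(x+n) - Psi(x), Psi_1(x+n) - Psi_1(x)) via the recurrences."""
    d = 0.0  # sum_{l=1..n}  1 / (x + l - 1)
    t = 0.0  # sum_{l=1..n} -1 / (x + l - 1)^2
    for l in range(n):
        d += 1.0 / (x + l)
        t -= 1.0 / (x + l) ** 2
    return d, t


def gn_sweep(counts: Sequence[Sequence[int]], alpha: Sequence[float]) -> List[float]:
    """One pass over all alpha_k, each receiving a single Newton step."""
    alpha_sum = sum(alpha)  # alpha_o, held fixed for the whole sweep
    l1 = l2 = 0.0
    for row in counts:
        d, t = rising_sums(alpha_sum, sum(row))
        l1 += d
        l2 += t
    updated = []
    for k, a in enumerate(alpha):
        nmtr = dntr = 0.0
        for row in counts:
            d, t = rising_sums(a, row[k])
            nmtr += d
            dntr += t
        denom = l2 - dntr
        # Keep the old value if the Newton step is undefined (assumption).
        new_a = a if denom == 0.0 else a - (l1 - nmtr) / denom
        # Algorithm 3 halves alpha_i when the step goes negative; "<=" is used
        # here so that an exact zero is also rejected (assumption).
        if new_a <= 0.0:
            new_a = a / 2.0
        updated.append(new_a)
    return updated


def gn_fit(counts, alpha0, tol=1e-6, max_iter=1000):
    """Repeat sweeps until the largest change in any alpha_k falls below tol."""
    alpha = list(alpha0)
    for _ in range(max_iter):
        new_alpha = gn_sweep(counts, alpha)
        change = max(abs(n - o) for n, o in zip(new_alpha, alpha))
        alpha = new_alpha
        if change < tol:
            break
    return alpha
```

As a hand-checkable case, a single sample with counts [1, 1] and a starting value of $\alpha = (1, 1)$ gives a first derivative of $1 - (1/2 + 1/3) = 1/6$ and a second derivative of $-1 + (1/4 + 1/9) = -23/36$, so one sweep moves both components to $1 + 6/23 = 29/23$.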
Wallach (2008) benchmarks Minka's fixed-point iteration method alongside other methods involving Minka's Newton iteration on the log evidence (Minka, 2000), fixed-point iteration on the leave-one-out log evidence (Minka, 2000), and fixed-point iteration on the log evidence introduced by MacKay and Peto (1995). She finds that her efficient implementation of Minka's fixed-point iteration is the fastest and the most accurate. In this paper, we compare the proposed method with Wallach's efficient implementation of Minka's fixed-point iteration method and with the Moments method (Ronning, 1989). The MALLET (McCallum, 2002) implementation of the Moments method is used. It can be seen from Figure 3 and Figure 4 that GN and Minka's fixed-point iteration method provide the same level of accuracy. However, it is also useful to benchmark those two methods against each other in terms of speed.

Figure 5: Execution time for GN and Minka's fixed-point iteration (Minka FPI) for a 10-dimensional multivariate Polya distribution using different values of number of samples and different values of number of elements used to generate each sample. (Axes: Elements, Samples, Time (ms).)

3.4.2 Speed Benchmark

Another two data sets are generated for this purpose. The first set is generated using a 10-dimensional multivariate Polya distribution, whereas the second set is generated using a 1000-dimensional multivariate Polya distribution. These two datasets are used to test the performance of the proposed algorithm against Minka's fixed-point iteration in relatively low and high dimensional cases respectively. Both distributions have a known parameter vector $\alpha$, where all components $\alpha_i$ are in ]0, 1].
For both sets, the number of multinomial count vectors (the number of samples) falls in the range 10 to 1000, starting from 10 and increasing in steps of 50. The total number of elements used to generate each sample has a value between 1000 and 20000, starting at 1000 and increasing with step size 1000. Using the first data set, and for each combination of number of samples and number of elements, the data set is generated from given random $\alpha$ values, and then the time taken by the estimation method is measured. The solution is considered to be converged (for both methods) when the maximum value among differences between previous guesses of alpha component values and their current estimates is less than 1.0e-6. This process is repeated 100 times, and the mean time is plotted as a dot on the 3D surface shown in Figure 5. The whole process was repeated 100 times again, this time using the higher dimensional samples. The corresponding 3D surface for the high-dimensional trials is shown in Figure 6.

Figure 6: Execution time for GN and Minka's fixed-point iteration (Minka FPI) for a 1000-dimensional multivariate Polya distribution using different values of number of samples and different values of number of elements used to generate each sample. (Axes: Elements, Samples, Time (ms).)

Figure 5 and Figure 6 show that the proposed method is faster than Minka's fixed-point iteration under all settings. Although the GN method requires more computation inside each iteration over all alpha values, it requires less than half the number of iterations required by Minka's fixed-point iteration method until convergence.
This speedup is more pronounced in the case of the lower-dimensional dataset; however, the number of iterations needed is less than that required by Minka's fixed-point iteration algorithm under all settings.

4. LDA-GN: Incorporating Hyper-Parameter Inference for Enhanced Topic Models

LDA-GN is a variant of LDA that incorporates the proposed GN method, using it to learn variables $\varphi$, $\theta$, $Z$, $\alpha$ and $\beta$. The main idea behind LDA-GN is to allow similar words to have similar beta values and consequently to be distributed similarly over topics. Thus, an asymmetric beta prior should be used in this case. In order to learn beta values, the LDA model can be extended by placing a non-informative prior before the beta variables, as shown in Figure 7. This gives corpus words the ability to be distributed differently over topics. This is useful and necessary because some terms need to participate in a higher number of topics compared with other terms. On the other hand, when a symmetric beta is used, all words have to participate in roughly the same number of topics, which can be seen as a limitation in the original LDA model. Further, it may be argued that topics should not be bounded by the number of documents that they are distributed over. Thus, an asymmetric alpha prior is advisable as well. The same technique is applied to alpha; in other words, a non-informative prior is placed before the alpha variables, as also shown in Figure 7.

The generative process associated with LDA-GN is described in Algorithm 4. The LDA-GN generative process is similar to the standard LDA generative process, with an extra pair of steps. The first step is sampling each $\alpha$ vector component value from a uniform distribution with parameters 0 and $a$.
The second step is sampling each $\beta$ vector component value from a uniform distribution with parameters 0 and $b$. This will give $\alpha_k$ and $\beta_v$ the ability to take any suitable value in the range $[0, a]$ and $[0, b]$ respectively, where $a$ and $b$ are positive real numbers. The remaining steps of the generative process are exactly the same as in the standard LDA model.

Figure 7: LDA-GN model

Algorithm 4: LDA-GN generative process
  for $v = 1$ to $V$ do
    Choose a beta value $\beta_v \sim Uniform(0, b)$
  end for
  for $k = 1$ to $K$ do
    Choose an alpha value $\alpha_k \sim Uniform(0, a)$
    Choose a distribution over terms $\varphi_k \sim Dir(\beta)$
  end for
  for $d = 1$ to $M$ do
    Draw a topic proportion $\theta_d \sim Dir(\alpha)$
    for $t = 1$ to $N_d$ do
      Draw a topic assignment $z_{d,t} \sim Multinomial(\theta_d)$, $z_{d,t} \in 1..K$
      Draw a word $w_{d,t} \sim Multinomial(\varphi_{z_{d,t}})$
    end for
  end for

4.1 LDA-GN Model Inference

From Figure 7 and the LDA-GN generative process described in Algorithm 4, the joint distribution is given by the following equation:

$$P(W, Z, \theta, \varphi, \beta, \alpha \mid a, b) = \prod_{r=1}^{V} P(\beta_r \mid b) \prod_{k=1}^{K} P(\alpha_k \mid a)\, P(\varphi_k \mid \beta) \prod_{d=1}^{M} P(\theta_d \mid \alpha) \prod_{t=1}^{N_d} P(Z_{d,t} \mid \theta_d)\, P(W_{d,t} \mid \varphi_{Z_{d,t}}).$$

Again, the conjugacy between Dirichlet and multinomial distributions allows $\theta$ and $\varphi$ to be marginalized out:

$$P(W, Z, \beta, \alpha \mid a, b) = \prod_{k=1}^{K} \frac{1}{a} \prod_{r=1}^{V} \frac{1}{b} \prod_{d=1}^{M} \frac{B(n_{d,\circ} + \alpha)}{B(\alpha)} \prod_{k=1}^{K} \frac{B(n^{k}_{\circ} + \beta)}{B(\beta)}.$$
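The generative process of Algorithm 4 can be simulated with a short Python sketch. It is illustrative only and uses just the standard library: the names `sample_dirichlet` and `generate_corpus` are hypothetical, topics and vocabulary terms are indexed from 0 rather than 1, and the small `floor` on the uniform draws is an assumption added to keep the Gamma shape parameters strictly positive (the paper's priors are simply uniform on $[0, a]$ and $[0, b]$).

```python
import random
from typing import List, Sequence, Tuple


def sample_dirichlet(params: Sequence[float], rng: random.Random) -> List[float]:
    """Draw from Dir(params) by normalizing independent Gamma(p, 1) draws."""
    draws = [rng.gammavariate(p, 1.0) for p in params]
    total = sum(draws)
    if total == 0.0:
        # Every draw underflowed (possible for very small params):
        # fall back to a one-hot vector (assumption).
        hot = rng.randrange(len(params))
        return [1.0 if i == hot else 0.0 for i in range(len(params))]
    return [d / total for d in draws]


def generate_corpus(
    V: int,
    K: int,
    doc_lengths: Sequence[int],
    a: float,
    b: float,
    seed: int = 0,
    floor: float = 1e-3,
) -> Tuple[List[float], List[float], List[List[int]]]:
    rng = random.Random(seed)
    # Extra LDA-GN steps: hyper-parameters drawn from uniform priors.
    beta = [max(rng.uniform(0.0, b), floor) for _ in range(V)]
    alpha = [max(rng.uniform(0.0, a), floor) for _ in range(K)]
    # One distribution over vocabulary terms per topic.
    phi = [sample_dirichlet(beta, rng) for _ in range(K)]
    docs = []
    for n_d in doc_lengths:
        theta = sample_dirichlet(alpha, rng)  # topic proportions of this document
        words = []
        for _ in range(n_d):
            z = rng.choices(range(K), weights=theta)[0]   # topic assignment
            w = rng.choices(range(V), weights=phi[z])[0]  # word drawn from topic z
            words.append(w)
        docs.append(words)
    return alpha, beta, docs
```

For example, `generate_corpus(V=6, K=3, doc_lengths=[4, 0, 7], a=1.0, b=0.5)` returns the sampled $\alpha$ and $\beta$ vectors together with three documents of word indices of the requested lengths.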
Gibbs sampling equations:

$$P(Z_{(d,t)} = k \mid Z_{\neg(d,t)}, W, \alpha, \beta, a, b) \propto \left(n^{k,\neg(d,t)}_{d,\circ} + \alpha_k\right) \frac{n^{k,\neg(d,t)}_{\circ,v} + \beta_v}{\sum_{r=1}^{V} \left(n^{k,\neg(d,t)}_{\circ,r} + \beta_r\right)}$$

$$P(\alpha_k \mid \alpha_{\neg k}, Z, W, \beta, a, b) \propto \prod_{d=1}^{M} \frac{\Gamma(\alpha_\circ)\,\Gamma(n^{k}_{d,\circ} + \alpha_k)}{\Gamma(\alpha_k)\,\Gamma(n^{\circ}_{d,\circ} + \alpha_\circ)} \propto \prod_{d=1}^{M} \frac{\prod_{l=0}^{n^{k}_{d,\circ} - 1} (\alpha_k + l)}{\prod_{l=0}^{n^{\circ}_{d,\circ} - 1} (\alpha_\circ + l)}$$

$$P(\beta_v \mid \beta_{\neg v}, Z, W, \alpha, a, b) \propto \prod_{k=1}^{K} \frac{\Gamma(\beta_\circ)\,\Gamma(n^{k}_{\circ,v} + \beta_v)}{\Gamma(\beta_v)\,\Gamma(n^{k}_{\circ,\circ} + \beta_\circ)} \propto \prod_{k=1}^{K} \frac{\prod_{l=0}^{n^{k}_{\circ,v} - 1} (\beta_v + l)}{\prod_{l=0}^{n^{k}_{\circ,\circ} - 1} (\beta_\circ + l)},$$

where $n^{k}_{\circ,\circ}$ is the total number of words assigned to the topic $k$ in the whole corpus, and $n^{\circ}_{d,\circ}$ is the total number of words in the document $W_d$. Because $P(\theta_d \mid Z_d, \alpha)$ and $P(\varphi_k \mid Z, \beta)$ are samples from a multivariate Polya distribution, Equation 1 and Equation 2 can still be used to calculate the variables $\theta$ and $\varphi$ respectively. This calculation can take place after Gibbs sampling convergence by using a good sample. Consequently, the LDA-GN collapsed Gibbs sampling algorithm is given by Algorithm 5.

5. Evaluation

Due to the unsupervised nature of LDA-based topic modelling algorithms, evaluation of inferred topic models is a difficult task. However, there are some popular methods in the literature to attempt this evaluation. A topic model's perplexity, under a hold-out set of test documents, is usually used as a standard evaluation metric. On the other hand, a topic model's performance in a supervised task can also be used to assess its performance against other models. These methods are further described next.

5.1 Perplexity

One common way to evaluate a topic model is to calculate its perplexity under a set of unseen test documents. Perplexity is a measure to benchmark a topic model's ability to generalize to unseen documents.
In other words, it provides a numerical value indicating, in effect, how much the topic model is 'surprised' by new data. The higher the probability of test document words given the model, the smaller the perplexity value becomes. Consequently, a model with a smaller perplexity value can be considered to have better ability to generalize to unseen documents. Let $\tilde{W}$ be an unseen test corpus which contains $\tilde{M}$ documents. The perplexity is calculated by exponentiating the negative mean log-likelihood value of the whole set of document words given the model. Perplexity is given by the following formula:

$$Perplexity(\tilde{W} \mid W, Z, \alpha, \beta) = \exp\left(-\frac{\sum_{j=1}^{\tilde{M}} \log P(\tilde{W}_j \mid W, Z, \alpha, \beta)}{\sum_{j=1}^{\tilde{M}} \tilde{N}_j}\right) \qquad (16)$$

where $j \in 1..\tilde{M}$ and $\tilde{N}_j$ is the number of words in test document $\tilde{W}_j$. Unfortunately, the exact value of the marginal distributions $P(\tilde{W}_j \mid W, Z, \alpha, \beta)$ is intractable due to the need to sum over all different $Z$ settings (which is exponential in the number of words in the corpus). However, there are multiple methods in the literature to approximate this marginal probability, such as annealed importance sampling (AIS) (Neal, 2001), the harmonic mean method (Newton and Raftery, 1994), and Chib-style estimation (Chib, 1995; Wallach et al., 2009b). One of the best methods in the literature is the Left-To-Right algorithm (Wallach et al., 2009b; Buntine, 2009).

Algorithm 5: LDA-GN collapsed Gibbs sampler
Input: $W$, the words of the corpus.
Output: $Z$ topic assignments, $\theta$ topic mixtures, $\varphi$ topic distributions, $\alpha$ and $\beta$ the model parameters.
  Randomly initialize $Z$ with integers $\in [1..K]$
  repeat
    for $k = 1$ to $K$ do
      $\alpha_k \leftarrow \arg\max_{\alpha_k} \prod_{d=1}^{M} \frac{\Gamma(\alpha_\circ)\,\Gamma(n^{k}_{d,\circ} + \alpha_k)}{\Gamma(\alpha_k)\,\Gamma(n^{\circ}_{d,\circ} + \alpha_\circ)}$
    end for
    for $v = 1$ to $V$ do
      $\beta_v \leftarrow \arg\max_{\beta_v} \prod_{k=1}^{K} \frac{\Gamma(\beta_\circ)\,\Gamma(n^{k}_{\circ,v} + \beta_v)}{\Gamma(\beta_v)\,\Gamma(n^{k}_{\circ,\circ} + \beta_\circ)}$
    end for
    for $d = 1$ to $M$ do
      for $t = 1$ to $N_d$ do
        $v \leftarrow W_{d,t}$; $k \leftarrow Z_{d,t}$
        $n^{k}_{d,\circ} \leftarrow n^{k}_{d,\circ} - 1$; $n^{k}_{\circ,v} \leftarrow n^{k}_{\circ,v} - 1$; $n^{k}_{\circ,\circ} \leftarrow n^{k}_{\circ,\circ} - 1$
        $k \sim (n^{k}_{d,\circ} + \alpha_k) \frac{n^{k}_{\circ,v} + \beta_v}{n^{k}_{\circ,\circ} + \beta_\circ}$
        $Z_{d,t} \leftarrow k$
        $n^{k}_{d,\circ} \leftarrow n^{k}_{d,\circ} + 1$; $n^{k}_{\circ,v} \leftarrow n^{k}_{\circ,v} + 1$; $n^{k}_{\circ,\circ} \leftarrow n^{k}_{\circ,\circ} + 1$
      end for
    end for
  until convergence
  Calculate $\theta$ using Equation 1
  Calculate $\varphi$ using Equation 2
  return $Z$, $\theta$, $\varphi$, $\alpha$, $\beta$

5.1.1 The Left-To-Right Algorithm

The Left-To-Right method is based on breaking the problem into a series of parts:

$$P(\tilde{W}_j \mid W, Z, \alpha, \beta) = \prod_{t=1}^{\tilde{N}_j} P(\tilde{W}_{j,t} \mid \tilde{W}_{j,1}, \tilde{W}_{j,2}, \ldots, \tilde{W}_{j,t-1}, W, Z, \alpha, \beta) = \prod_{t=1}^{\tilde{N}_j} \sum_{\tilde{Z}_{j,1}, \ldots, \tilde{Z}_{j,t}} P(\tilde{W}_{j,t}, \tilde{Z}_{j,1}, \ldots, \tilde{Z}_{j,t} \mid \tilde{W}_{j,1}, \ldots, \tilde{W}_{j,t-1}, W, Z, \alpha, \beta),$$

where $\tilde{Z}_j$ gives the topic assignments of test document $\tilde{W}_j$. It can be seen that the previous equation involves marginalizing out the variable $\tilde{Z}_j$; this is intractable for large test documents and a high number of topics $K$. Luckily, the previous sum over all possible value settings of $\tilde{Z}_{j,1}, \ldots, \tilde{Z}_{j,t}$ can be approximated using sequential Monte Carlo techniques (Del Moral et al., 2006) with $R$ particles.
Thus, let

$$(\tilde{Z}_{j,1}, \ldots, \tilde{Z}_{j,t}) \sim P(\tilde{Z}_{j,1}, \ldots, \tilde{Z}_{j,t} \mid \tilde{W}_{j,1}, \ldots, \tilde{W}_{j,t-1}, W, Z, \alpha, \beta).$$

Consequently, the approximation can be calculated using $R$ samples from the previous distribution as follows:

$$\sum_{\tilde{Z}_{j,1}, \ldots, \tilde{Z}_{j,t}} P(\tilde{W}_{j,t}, \tilde{Z}_{j,1}, \ldots, \tilde{Z}_{j,t} \mid \tilde{W}_{j,1}, \ldots, \tilde{W}_{j,t-1}, W, Z, \alpha, \beta) \approx \frac{1}{R} \sum_{r=1}^{R} P(\tilde{W}_{j,t} \mid \tilde{W}_{j,1}, \ldots, \tilde{W}_{j,t-1}, \tilde{Z}^{r}_{j,1}, \ldots, \tilde{Z}^{r}_{j,t-1}, W, Z, \alpha, \beta)$$

$$= \frac{1}{R} \sum_{r=1}^{R} \sum_{k=1}^{K} \frac{n^{k}_{\circ,\tilde{W}_{j,t}} + \beta_{\tilde{W}_{j,t}}}{n^{k}_{\circ,\circ} + \beta_\circ} \cdot \frac{\tilde{n}^{k,r}_{j,\circ} + \alpha_k}{\sum_{i=1}^{K} \left(\tilde{n}^{i,r}_{j,\circ} + \alpha_i\right)},$$

where $\tilde{n}^{i,r}_{j,\circ}$ is the number of words in test document $\tilde{W}_j$ that are assigned to topic $i$ in the sample $r$, and $\tilde{Z}^{r}_{j,t}$ is the topic assignment for the $t$-th word in test document $\tilde{W}_j$ and sample $r$. Thus, the Left-To-Right algorithm is given by Algorithm 6.

Algorithm 6: The Left-To-Right algorithm to estimate the value $\log P(\tilde{W}_j \mid W, Z, \alpha, \beta)$
Input: $W$, the words of the training corpus; $\tilde{W}_j$, the words of the $j$-th test document; $Z$, the topic assignments of the training corpus; $\alpha$ and $\beta$, the model parameters.
Output: $l = \log P(\tilde{W}_j \mid W, Z, \alpha, \beta)$, the log-likelihood of the test document $\tilde{W}_j$ given a trained LDA model.
  $l \leftarrow 0$
  for $t = 1$ to $\tilde{N}_j$ do
    $P_t \leftarrow 0$
    for $r = 1$ to $R$ do
      for $t' = 1$ to $t - 1$ do
        $v \leftarrow \tilde{W}_{j,t'}$; $k \leftarrow \tilde{Z}_{j,t'}$
        $\tilde{n}^{k}_{j,\circ} \leftarrow \tilde{n}^{k}_{j,\circ} - 1$
        $k \sim (\tilde{n}^{k}_{j,\circ} + \alpha_k) \frac{n^{k}_{\circ,v} + \beta_v}{n^{k}_{\circ,\circ} + \beta_\circ}$
        $\tilde{Z}_{j,t'} \leftarrow k$
        $\tilde{n}^{k}_{j,\circ} \leftarrow \tilde{n}^{k}_{j,\circ} + 1$
      end for
      $P_t \leftarrow P_t + \sum_{k=1}^{K} \frac{n^{k}_{\circ,\tilde{W}_{j,t}} + \beta_{\tilde{W}_{j,t}}}{n^{k}_{\circ,\circ} + \beta_\circ} \cdot \frac{\tilde{n}^{k,r}_{j,\circ} + \alpha_k}{\sum_{i=1}^{K} \left(\tilde{n}^{i,r}_{j,\circ} + \alpha_i\right)}$
    end for
    $l \leftarrow l + \log \frac{P_t}{R}$
    $k \sim (\tilde{n}^{k}_{j,\circ} + \alpha_k) \frac{n^{k}_{\circ,\tilde{W}_{j,t}} + \beta_{\tilde{W}_{j,t}}}{n^{k}_{\circ,\circ} + \beta_\circ}$
    $\tilde{n}^{k}_{j,\circ} \leftarrow \tilde{n}^{k}_{j,\circ} + 1$
    $\tilde{Z}_{j,t} \leftarrow k$
  end for
  return $l$

5.2 Spam Filtering Performance

Another way to evaluate a topic model is to use the model in a supervised task such as classification or spam filtering. The topic model's performance can then be tested against some other models' performance. In this paper, we chose a spam filtering task to compare LDA-GN against standard LDA. There are multiple ways to use a topic model for spam filtering. For example, after treating the spam filtering task as a binary classification problem, a topic model can be used as a document dimensionality reduction technique to choose features and then carry out classification using standard methods (Blei et al., 2003). However, in this paper we use the 'Multi-Corpus LDA' approach from (Bíró, 2009; Bíró et al., 2008); that approach is described next.

5.2.1 Multi-Corpus LDA

In the Multi-Corpus LDA (MC-LDA) approach (Bíró, 2009; Bíró et al., 2008), two distinct LDA models are inferred using the same vocabulary words. The first model is inferred from the collection of spam documents with $K^{(s)}$ topics, whereas the second model is inferred from the collection of non-spam documents with $K^{(n)}$ topics. Consequently, the word distributions for $K^{(n)} + K^{(s)}$ topics are learned. The idea of MC-LDA is to merge the previous two models and create a unified model with $K^{(n)} + K^{(s)}$ topics. This is done by simply encoding the topic identification numbers of the spam topic model to begin from $K^{(n)} + 1$ instead of beginning from 1. Thus, for an unseen document $\tilde{W}_{\tilde{d}}$, the inference in the unified model can be made using the following formula:

$$P(\tilde{Z}_{(\tilde{d},t)} = k \mid \tilde{Z}_{\neg(\tilde{d},t)}, \tilde{W}, W, Z, \alpha, \beta) \propto \left(\tilde{n}^{k,\neg(\tilde{d},t)}_{\tilde{d},\circ} + \alpha_k\right) \frac{n^{k,\neg(\tilde{d},t)}_{\circ,v} + \beta_v}{\sum_{r=1}^{V} \left(n^{k,\neg(\tilde{d},t)}_{\circ,r} + \beta_r\right)},$$

where $\tilde{n}^{k,\neg(\tilde{d},t)}_{\tilde{d},\circ}$ represents the number of words in test document $\tilde{W}_{\tilde{d}}$ that are assigned to topic $k$, excluding the $t$-th word in that document.
However, the count $n^{k,\neg(\tilde{d},t)}_{\circ,v}$, which represents the number of word instances of vocabulary term $v$ from all documents assigned to topic $k$, is unknown. Thus the second factor of the previous Multi-Corpus inference formula can be approximated using the $\varphi^{v}_{k}$ value. Let $\tilde{W}_{(\tilde{d},t)}$, which is the $t$-th word in test document $\tilde{W}_{\tilde{d}}$, be $v$; then:

$$P(\tilde{Z}_{(\tilde{d},t)} = k \mid \tilde{Z}_{\neg(\tilde{d},t)}, \tilde{W}, W, Z, \alpha, \beta) \;\overset{\sim}{\propto}\; \left(\tilde{n}^{k,\neg(\tilde{d},t)}_{\tilde{d},\circ} + \alpha_k\right) \varphi^{v}_{k}.$$

As a result of the inference process, and after a sufficient number of iterations, the word topic assignment $Z_{\tilde{d}}$ is calculated. Consequently, the document topic distribution $\theta_{\tilde{d}}$ is calculated using:

$$\theta^{k}_{\tilde{d}} = \frac{\tilde{n}^{k}_{\tilde{d},\circ} + \alpha_k}{\sum_{i=1}^{K} \left(\tilde{n}^{i}_{\tilde{d},\circ} + \alpha_i\right)}.$$

In order to classify a document $\tilde{W}_{\tilde{d}}$, the LDA prediction value $\tau = \sum_{i=K^{(n)}+1}^{K^{(n)}+K^{(s)}} \theta^{i}_{\tilde{d}}$ is calculated, that is, the total weight the document places on the spam topics of the unified model. If the LDA prediction value $\tau$ is above a specific threshold, the document is classified as spam. Otherwise, the document is classified as legitimate.

6. Experimental Evaluation of LDA-GN

In this section, evaluation results using the methods detailed in the previous section are displayed. This mainly involves evaluating LDA-GN using the perplexity metric and using its performance on a spam filtering task. In both cases, LDA-GN is compared with the standard LDA model suggested in (Wallach et al., 2009a). According to Wallach et al. (2009a), the standard LDA model uses an asymmetric Dirichlet prior over documents-over-topics distributions $\theta$ and a symmetric Dirichlet prior over topics-over-words distributions $\varphi$. However, in this paper the new method LDA-GN has asymmetric Dirichlet priors over both documents-over-topics distributions $\theta$ and topics-over-words distributions $\varphi$.

6.1 Perplexity Score

Using Algorithm 5, an LDA-GN model Gibbs sampler is implemented.
On the other hand, the MALLET (McCallum, 2002) LDA implementation is used for standard LDA. Both are implemented using Java. Recommended settings suggested by Wallach et al. (2009a) are used for the standard LDA model, which are: an asymmetric Dirichlet prior over documents-over-topics distributions and a symmetric Dirichlet prior over topics-over-words distributions.

In order to train and evaluate these models, two corpora are used. The first corpus is the EPSRC corpus (623 documents containing 122672 words and 13035 vocabulary terms) (Khalifa et al., 2013), which comprises summaries of projects in Information and Communication Technology (ICT) funded by the Engineering and Physical Sciences Research Council (EPSRC). The second corpus is the News corpus (2213 documents containing 453462 words and 38500 vocabulary terms), which is a subset of Associated Press (AP) data from the First Text Retrieval Conference (TREC-1) (Harman, 1992). Both corpora are provided at http://is.gd/GNTMOD. All standard English stop words are removed from the corpora before learning or inference application. Each corpus is divided into two parts: the first part is used for training, whereas the second part is used for evaluation purposes. The first part, which comprises 80% of corpus documents, is used to train both LDA and LDA-GN models. The remaining 20% is used to calculate perplexity scores using Equation 16. In order to calculate probabilities $P(\tilde{W}_j \mid W, Z, \alpha, \beta)$, a Java implementation of the Left-To-Right algorithm (Algorithm 6) is used, where $j \in [1..\tilde{M}]$ is the test document index, $\tilde{W}_j$ is the $j$-th test document and $\tilde{M}$ is the total number of test documents. Thus, a better model should have a higher probability $P(\tilde{W}_j \mid W, Z, \alpha, \beta)$ value and consequently a lower perplexity score.
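Once per-document log-likelihoods are available (for instance from a Left-To-Right estimator), Equation 16 reduces to a few lines. The following Python sketch shows the arithmetic only; the function name `perplexity` and its argument names are hypothetical, and the log-likelihoods are assumed to be natural logarithms.

```python
import math
from typing import Sequence


def perplexity(doc_log_likelihoods: Sequence[float], doc_lengths: Sequence[int]) -> float:
    """Equation 16: exp of the negative mean per-word log-likelihood."""
    total_words = sum(doc_lengths)
    if total_words == 0:
        raise ValueError("test corpus contains no words")
    return math.exp(-sum(doc_log_likelihoods) / total_words)
```

As a sanity check, a two-word test document whose words each have probability 0.25 under the model has log-likelihood $2 \log 0.25$, so its perplexity is $\exp(-\log 0.25) = 4$: the model is as 'surprised' as if it were choosing uniformly among four words.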
Initial values of variable $\alpha$ are set as $\alpha_k = 50/K$ for all topics $k \in [1..K]$. The $\beta$ variable values are initialized as $\beta_v = 0.01$ for all vocabulary terms $v \in [1..V]$. These initial values are recommended in the MALLET package documentation (McCallum, 2002). After that, the standard LDA model's MALLET implementation is run using a training corpus as an input. For the first 50 iterations (the burn-in period), both $\alpha$ and $\beta$ values are kept fixed. After the burn-in period, Minka's fixed-point iteration is used to learn $\alpha$ and $\beta$ values from the sampler's histograms. The $\alpha$ and $\beta$ values learning process is repeated once every 20 iterations. After 2000 iterations, the model is considered fully trained. On the other hand, the LDA-GN model is trained using the same training corpus which is used for standard LDA. Asymmetric $\alpha$ and $\beta$ values are used in this model. Similarly to the standard LDA model, the LDA-GN model is considered fully trained after 2000 iterations.

The performance of standard LDA and LDA-GN is tested over a range of scenarios. Both approaches are run five times for each of the following settings for number of topics: 5, 10, 25, 50, 100, 150, 200, 300, and 600 topics. Following every individual run, a fresh split is used to generate training and testing corpora. Figure 8 and Figure 9 show perplexity values of unseen test data for models inferred by LDA-GN and LDA, on the EPSRC and News corpora respectively. Error bars are drawn for each point in the figures.
Figure 8 and Figure 9 show that LDA-GN outperforms standard LDA for all settings in these two corpora used for evaluation, suggesting that topic models inferred via LDA-GN are better able to generalize than models inferred via standard LDA.

Figure 8: EPSRC corpus, LDA and LDA-GN perplexity values for different numbers of topics. (Axes: Number of Topics, Perplexity.)

Figure 9: NEWS corpus, LDA and LDA-GN perplexity values for different numbers of topics. (Axes: Number of Topics, Perplexity.)

6.2 Spam Filtering Score

Another way to evaluate a topic model is to check its performance in a supervised task such as spam filtering. Thus, two spam filters are built using the MC-LDA method elaborated before. The first one is built using standard LDA, whereas the second one is built using LDA-GN. Three spam corpora are used for evaluation purposes: (i) the Enron corpus (Metsis et al., 2006), which comprises a subset of Enron emails from the period from 1999 until 2002; this corpus contains 16545 legitimate messages and 17169 spam messages; (ii) the LingSpam corpus (Sakkis et al., 2003), which contains 2412 legitimate messages and 481 spam messages; (iii) the SMS Collection v.1 (Almeida et al., 2011), which contains 4827 legitimate SMS messages and 747 spam SMS messages. Standard English stop words are removed from these three corpora. Each corpus is split into two parts: the first part, which comprises 80% of the corpus, is used for training, whereas the remaining 20% is used for testing purposes. Using only the training part, two MC-LDA models are built using standard LDA and LDA-GN respectively.
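The MC-LDA decision rule of Section 5.2.1, which both spam filters share, can be sketched in Python as follows. This is a hedged illustration rather than the authors' code: the function names are hypothetical, topics are indexed from 0, and the unified model is assumed to list the legitimate topics first and the spam topics after them, so that the spam score $\tau$ is the sum of $\theta$ over the trailing topics.

```python
from typing import List, Sequence


def doc_topic_distribution(topic_counts: Sequence[int], alpha: Sequence[float]) -> List[float]:
    """theta^k = (n^k + alpha_k) / sum_i (n^i + alpha_i) for one test document."""
    total = sum(topic_counts) + sum(alpha)
    return [(n + a) / total for n, a in zip(topic_counts, alpha)]


def spam_score(theta: Sequence[float], num_legit_topics: int) -> float:
    """tau: total weight on the spam topics, which follow the legitimate ones."""
    return sum(theta[num_legit_topics:])


def is_spam(
    topic_counts: Sequence[int],
    alpha: Sequence[float],
    num_legit_topics: int,
    threshold: float,
) -> bool:
    theta = doc_topic_distribution(topic_counts, alpha)
    return spam_score(theta, num_legit_topics) > threshold
```

For a toy unified model with two legitimate topics and one spam topic, topic counts (1, 0, 3) and $\alpha = (0.5, 0.5, 1.0)$ give $\theta = (1.5, 0.5, 4)/6$ and therefore $\tau = 2/3$, so the document is flagged as spam at a threshold of 0.5 but not at 0.7.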
Figure 10: Enron corpus, LDA and LDA-GN spam filtering performance using different threshold settings. (a) Spam filtering accuracy, (b) Spam filtering f-Measure. (Horizontal axis: Threshold.)

The first MC-LDA model, which is built using standard LDA, comprises two LDA models combined. The first one is trained using only legitimate messages and 50 topics, whereas the second one is trained using only spam messages and 10 topics. On the other hand, the second MC-LDA model, which is built using LDA-GN, comprises two LDA-GN models combined. Again, the first one is trained using legitimate messages and 50 topics, whereas the second one is trained using spam messages with 10 topics. Given two fully trained MC-LDA models, an inference is performed for all test documents. In order to fully test the models' classification abilities, multiple thresholds are used. The threshold values used are: 0.05, 0.1, 0.25, 0.3, 0.35, 0.4, 0.5, 0.6, 0.7, 0.8 and 0.9. For each threshold, and given the trained MC-LDA models, the inference is applied three times for each model. Mean values of accuracy and f-Measure are calculated, then these points are registered in a graph. Standard deviation or standard error (SEM) values of accuracy and f-Measure are calculated as well, and shown as error bars. The whole process is repeated 5 times, every time with a fresh train/test split. Finally, the median of the five points associated with each threshold value is calculated and a curve is drawn. Figure 10a, Figure 11a and Figure 12a show accuracy scores for both LDA-GN and standard LDA models for the Enron, LingSpam and SMS Collection v.1 corpora respectively.
Moreover, Figure 10b, Figure 11b and Figure 12b show f-Measure scores for both LDA-GN and standard LDA models for the Enron, LingSpam and SMS Collection v.1 corpora respectively. Perusal of these figures shows that models inferred via LDA-GN lead to results that are less sensitive to the threshold value. However, when the right threshold value is chosen, both models are able to provide almost the same level of accuracy. Since LDA-GN models provide less sensitivity to threshold values, it can be argued that the topic models inferred by LDA-GN have higher discrimination than those inferred by standard LDA.

In the MC-LDA approach, a document is classified as spam if its score $\tau = \sum_{i=K^{(n)}+1}^{K^{(n)}+K^{(s)}} \theta^{i}_{\tilde{d}}$ is larger than the specific threshold value. On the one hand, when the threshold value is less than its optimal value, the spam filter tends to become more strict. This means that more legitimate documents are classified as spam. On the other hand, when the threshold value is larger than its optimal value, the spam filter tends to become more tolerant, consequently classifying more spam documents as legitimate.
MC-LDA based on LDA-GN is able to achieve higher accuracy and f-measure scores than MC-LDA based on the LDA model when the threshold is less or more than its optimal value. More specifically, Figure 13 shows that LDA-GN, in the Enron case, has better precision for threshold values less than optimal, and better recall scores for threshold values more than optimal. Thus, a legitimate document's score over LDA-GN spam topics is always less than its score over standard LDA spam topics. On the contrary, a spam document's score over LDA-GN spam topics is always higher than its score over standard LDA spam topics. So, the topic model inferred by LDA-GN provides a better representation of the corpus than that inferred by standard LDA.

Figure 11: LingSpam corpus, LDA and LDA-GN spam filtering performance using different threshold settings. (a) Spam filtering accuracy, (b) Spam filtering f-Measure. (Horizontal axis: Threshold.)

Figure 12: SMS Collection v.1 corpus, LDA and LDA-GN spam filtering performance using different threshold settings. (a) Spam filtering accuracy, (b) Spam filtering f-Measure. (Horizontal axis: Threshold.)

7. Conclusions

In this paper, two main contributions are offered. Firstly, a new algorithm to learn multivariate Polya distribution parameters, named 'GN', is described and evaluated. Secondly, based on GN, a new extension for LDA, dubbed 'LDA-GN', is proposed and evaluated.
In order to assess its performance, GN is compared with two other appropriate methods: the Moments method, a quick and approximate approach, and Minka's fixed-point iteration method, a more accurate but slower method. GN is able to infer more accurate values than the Moments method, and it is able to provide the same level of accuracy as Minka's fixed-point iteration method. However, the time taken by GN to compute its results is invariably less than the time consumed by Minka's fixed-point iteration method for the same accuracy. The GN algorithm can be used in all applications that use the Dirichlet distribution or multivariate Polya distributions to learn parameters from the data itself.

[Figure 13: Enron corpus, LDA and LDA-GN spam filtering precision and recall scores using different threshold settings. Panels: (a) spam filtering precision, (b) spam filtering recall, each plotted against threshold.]

The new extension LDA-GN shows better performance compared with standard LDA. Our experiments using two corpora suggest that its ability to generalise to unseen documents is greater, since it shows lower perplexity values over unseen documents. To measure how both models perform in a supervised task, standard LDA and LDA-GN were used in the context of the MC-LDA method in a spam classification task. Generally, LDA-GN showed better performance in this task over multiple choices of the threshold value. However, both models were able to provide the same levels of accuracy given judicious choices of the threshold value.
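To make the notion of threshold sensitivity concrete, the following Python sketch sweeps a few thresholds over a set of document scores and reports accuracy, precision, recall and f-measure. It is an illustrative sketch only: the scores and labels are invented, the function name is hypothetical, and the f-measure is assumed to be the usual harmonic mean of precision and recall (F1), which the paper does not state explicitly.

```python
from typing import Dict, Sequence


def evaluate_threshold(
    scores: Sequence[float], labels: Sequence[bool], threshold: float
) -> Dict[str, float]:
    """Accuracy, precision, recall and f-measure for one threshold.

    labels[i] is True when document i is really spam; a document is
    predicted to be spam when its score is strictly above the threshold.
    """
    tp = fp = tn = fn = 0
    for score, really_spam in zip(scores, labels):
        predicted_spam = score > threshold
        if predicted_spam and really_spam:
            tp += 1
        elif predicted_spam:
            fp += 1
        elif really_spam:
            fn += 1
        else:
            tn += 1
    total = tp + fp + tn + fn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # Assumed definition: F1, the harmonic mean of precision and recall.
    denom = precision + recall
    f_measure = 2 * precision * recall / denom if denom else 0.0
    accuracy = (tp + tn) / total if total else 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f_measure": f_measure,
    }


# Hypothetical spam-topic scores and true labels for six documents.
scores = [0.05, 0.20, 0.35, 0.55, 0.80, 0.95]
labels = [False, False, False, True, True, True]

for threshold in (0.1, 0.5, 0.9):
    print(threshold, evaluate_threshold(scores, labels, threshold))
```

In this toy data a threshold that is too low costs precision (legitimate documents are flagged), while one that is too high costs recall (spam slips through). A model whose scores separate the two classes more widely loses less on either side, which is one way to read the flatter LDA-GN curves.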
The lower sensitivity to threshold in the spam classification tasks, as shown by models inferred using LDA-GN, suggests that LDA-GN was able to infer higher quality topic models than LDA, being better representations and more discriminatory of the legitimate and spam parts of these corpora.

Recommended settings described in (Wallach et al., 2009a), which are mainly using asymmetric alpha and symmetric beta priors, lead to different words generally being constrained to contribute to the same number of topics. When a symmetric beta is used, and all $\beta_v$ have a relatively large value, some words that should really only appear in a small number of topics are encouraged to spread to other topics. On the other hand, when $\beta_v$ has a relatively small value, all words tend to be distributed over a small number of topics, despite the fact that some words could legitimately appear in many more topics. Consequently, topic models built with these constraints can typically contain many irrelevant words among the topics. In contrast, in LDA-GN every vocabulary term has the freedom to be distributed over any number of topics with no restriction. However, with no such restriction, stop words will be encouraged to be distributed over all topics evenly. So, it is important to remove stop words before an LDA-GN model is learnt. That is why all stop words were removed in advance for both LDA and LDA-GN in this paper.

One potential area of future work for LDA-GN is to investigate the placement of informed priors over the alpha and beta variables. Such priors may be available for many applications (including, for example, updating a topic model following extension of the corpus).
Meanwhile, the quality of the topic models learned by LDA-GN seems to augur well for their use in supervised learning tasks; spam classification is one example, but we believe other tasks in the general area of supervised document classification may benefit from LDA-GN in the context of the MC-LDA approach. This may be especially fruitful in the case of discrimination tasks that involve 'close' categories (e.g. 'finance' vs 'insurance').

References

Edoardo M. Airoldi, David M. Blei, Stephen E. Fienberg, and Eric P. Xing. Combining stochastic block models and mixed membership for statistical network analysis. In Edoardo Airoldi, David M. Blei, Stephen E. Fienberg, Anna Goldenberg, Eric P. Xing, and Alice X. Zheng, editors, Statistical Network Analysis: Models, Issues, and New Directions, Lecture Notes in Computer Science, pages 57–74. Springer Berlin Heidelberg, 2007.

Tiago A. Almeida, José María G. Hidalgo, and Akebo Yamakami. Contributions to the study of SMS spam filtering: New collection and results. In Proceedings of the 11th ACM Symposium on Document Engineering, DocEng '11, pages 259–262. ACM, 2011.

Arthur Asuncion, Max Welling, Padhraic Smyth, and Yee Whye Teh. On smoothing and inference for topic models. In Proceedings of the Twenty-Fifth Conference on Uncertainty in Artificial Intelligence, UAI '09, pages 27–34, Arlington, Virginia, United States, 2009. AUAI Press.

Kobus Barnard, Pinar Duygulu, David Forsyth, Nando de Freitas, David M. Blei, and Michael I. Jordan. Matching words and pictures. J. Mach. Learn. Res., 3:1107–1135, March 2003.

István Bíró. Document Classification with Latent Dirichlet Allocation. PhD thesis, Eötvös Loránd University, Faculty of Informatics, 2009.

István Bíró, Jácint Szabó, and András A. Benczúr. Latent Dirichlet allocation in web spam filtering. In Adversarial Information Retrieval on the Web, pages 29–32, 2008.

David M. Blei, Andrew Y. Ng, and Michael I. Jordan. Latent Dirichlet allocation. J. Mach. Learn. Res., 3:993–1022, March 2003.

Wray Buntine. Estimating likelihoods for topic models. In Zhi-Hua Zhou and Takashi Washio, editors, Advances in Machine Learning, volume 5828 of Lecture Notes in Computer Science, pages 51–64. Springer Berlin Heidelberg, 2009.

Wray Buntine and Aleks Jakulin. Applying discrete PCA in data analysis. In Proceedings of the 20th Conference on Uncertainty in Artificial Intelligence, UAI '04, pages 59–66. AUAI Press, 2004.

Siddhartha Chib. Marginal likelihood from the Gibbs output. Journal of the American Statistical Association, 90(432):1313–1321, 1995.

Philip J. Davis. Handbook of Mathematical Functions, With Formulas, Graphs, and Mathematical Tables, chapter Gamma Function and Related Functions. Dover Publications Inc., 1972.

Pierre Del Moral, Arnaud Doucet, and Ajay Jasra. Sequential Monte Carlo samplers. Journal of the Royal Statistical Society: Series B (Statistical Methodology), 68(3):411–436, 2006.

Menachem Dishon and George H. Weiss. Small sample comparison of estimation methods for the beta distribution. Journal of Statistical Computation and Simulation, 11(1):1–11, 1980.

Elena A. Erosheva, Stephen E. Fienberg, and Cyrille Joutard. Describing disability through individual-level mixture models for multivariate binary data. The Annals of Applied Statistics, 1(2):502–537, 12 2007.

Li Fei-Fei and Pietro Perona. A Bayesian hierarchical model for learning natural scene categories. In Computer Vision and Pattern Recognition, 2005. CVPR 2005. IEEE Computer Society Conference on, pages 524–531, June 2005.

Thomas L. Griffiths and Mark Steyvers. Finding scientific topics. Proceedings of The National Academy of Sciences, 101:5228–5235, April 2004.

Donna Harman. Overview of the first text retrieval conference (TREC-1). In Proceedings of the First Text Retrieval Conference (TREC-1), pages 1–20, 1992.

Matthew D. Hoffman, David M. Blei, Chong Wang, and John Paisley. Stochastic variational inference. J. Mach. Learn. Res., 14:1303–1347, May 2013.

Thomas Hofmann. Probabilistic latent semantic indexing. In Proceedings of the 22nd Annual International ACM SIGIR Conference on Research and Development in Information Retrieval, SIGIR '99, pages 50–57. ACM, 1999.

Frank Hutter, Holger Hoos, and Kevin Leyton-Brown. An efficient approach for assessing hyperparameter importance. In Proceedings of the 31st International Conference on Machine Learning, ICML 2014, pages 754–762. JMLR.org, June 2014.

Osama Khalifa, David Wolfe Corne, Mike Chantler, and Fraser Halley. Multi-objective topic modeling. In Robin C. Purshouse, Peter J. Fleming, Carlos M. Fonseca, Salvatore Greco, and Jane Shaw, editors, Evolutionary Multi-Criterion Optimization, volume 7811 of Lecture Notes in Computer Science, pages 51–65. Springer Berlin Heidelberg, 2013.

Steve Leeds and Alan E. Gelfand. Estimation for Dirichlet mixed models. Naval Research Logistics (NRL), 36(2):197–214, 1989.

Jun S. Liu. The collapsed Gibbs sampler in Bayesian computations with application to a gene regulation problem. Journal of the American Statistical Association, 89(427):958–966, 1994.

David J. C. MacKay and Linda C. Bauman Peto. A hierarchical Dirichlet language model. Journal of Natural Language Engineering, 1:289–308, 9 1995.

Andrew Kachites McCallum. MALLET: A machine learning for language toolkit. http://mallet.cs.umass.edu, 2002.

Nicholas Metropolis, Arianna W. Rosenbluth, Marshall N. Rosenbluth, Augusta H. Teller, and Edward Teller. Equation of state calculations by fast computing machines. The Journal of Chemical Physics, 21(6):1087–1092, 1953.

Vangelis Metsis, Ion Androutsopoulos, and Georgios Paliouras. Spam filtering with naive Bayes - which naive Bayes? In Third Conference on Email and Anti-Spam (CEAS), 2006.

Thomas Minka and John Lafferty. Expectation-propagation for the generative aspect model. In Proceedings of the Eighteenth Conference on Uncertainty in Artificial Intelligence, UAI'02, pages 352–359, San Francisco, CA, USA, 2002. Morgan Kaufmann Publishers Inc.

Thomas P. Minka. Estimating a Dirichlet distribution. Technical report, Microsoft, 2000.

Galileo Mark Namata, Prithviraj Sen, Mustafa Bilgic, and Lise Getoor. Collective classification for text classification. In Mehran Sahami and Ashok Srivastava, editors, Text Mining: Classification, Clustering, and Applications. Taylor and Francis Group, 2009.

Radford M. Neal. Annealed importance sampling. Statistics and Computing, 11(2):125–139, 2001.

Michael A. Newton and Adrian E. Raftery. Approximate Bayesian inference with the weighted likelihood bootstrap. Journal of the Royal Statistical Society. Series B (Methodological), 56(1):3–48, 1994.

Jonathan K. Pritchard, Matthew Stephens, and Peter Donnelly. Inference of population structure using multilocus genotype data. Genetics, 155(2):945–959, February 2000.

Gerd Ronning. Maximum likelihood estimation of Dirichlet distributions. Journal of Statistical Computation and Simulation, 32(4):215–221, 1989.

Bryan C. Russell, William T. Freeman, Alexei A. Efros, Josef Sivic, and Andrew Zisserman. Using multiple segmentations to discover objects and their extent in image collections. In Proceedings of the 2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition - Volume 2, pages 1605–1614. IEEE Computer Society, 2006.

Georgios Sakkis, Ion Androutsopoulos, and Constantine D. Spyropoulos. A memory-based approach to anti-spam filtering for mailing lists. Information Retrieval, 6:49–73, 2003.

Mark Steyvers and Tom Griffiths. Handbook of Latent Semantic Analysis, chapter Probabilistic Topic Models, pages 427–448. University of Colorado Institute of Cognitive Science Series. Lawrence Erlbaum Associates, 2007. ISBN 9780805854183.

Hanna M. Wallach. Structured Topic Models for Language. PhD thesis, University of Cambridge, 2008.

Hanna M. Wallach, David Mimno, and Andrew McCallum. Rethinking LDA: Why priors matter. In Proceedings of Neural Information Processing Systems, NIPS '22, pages 1973–1981, 2009a.

Hanna M. Wallach, Iain Murray, Ruslan Salakhutdinov, and David Mimno. Evaluation methods for topic models. In Proceedings of the 26th Annual International Conference on Machine Learning, ICML '09, pages 1105–1112. ACM, 2009b.

Nicolas Wicker, Jean Muller, Ravi Kiran Reddy Kalathur, and Olivier Poch. A maximum likelihood approximation method for Dirichlet's parameter estimation. Computational Statistics and Data Analysis, 52:1315–1322, 2008.
