Learning Laplacian Matrix in Smooth Graph Signal Representations

The construction of a meaningful graph plays a crucial role in the success of many graph-based representations and algorithms for handling structured data, especially in the emerging field of graph signal processing. However, a meaningful graph is not always readily available from the data, nor easy to define depending on the application domain. In particular, it is often desirable in graph signal processing applications that a graph is chosen such that the data admit certain regularity or smoothness on the graph. In this paper, we address the problem of learning graph Laplacians, which is equivalent to learning graph topologies, such that the input data form graph signals with smooth variations on the resulting topology. To this end, we adopt a factor analysis model for the graph signals and impose a Gaussian probabilistic prior on the latent variables that control these signals. We show that the Gaussian prior leads to an efficient representation that favors the smoothness property of the graph signals. We then propose an algorithm for learning graphs that enforces this property and is based on minimizing the variations of the signals on the learned graph. Experiments on both synthetic and real-world data demonstrate that the proposed graph learning framework can efficiently infer meaningful graph topologies from signal observations under the smoothness prior.

💡 Research Summary

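The paper tackles the fundamental problem of inferring a graph structure directly from data when a suitable graph is not given a priori. The authors focus on the class of graph signals that are smooth with respect to the underlying graph, i.e., signals whose values change little across edges with large weights. Smoothness is quantified by the Laplacian quadratic form \(x^{\top} L x = \tfrac{1}{2}\sum_{k,l} W_{kl}\,\bigl(x(k) - x(l)\bigr)^2\), where \(W\) is the weighted adjacency matrix, \(D\) the diagonal degree matrix, and \(L = D - W\) the combinatorial Laplacian of the graph. Each edge contributes its weight times the squared difference of the signal values at its endpoints, so a small value means the signal is smooth on the graph.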
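
The edge-wise form of the quadratic form can be checked numerically. The sketch below assumes NumPy and a made-up 4-node path graph with unit weights (not an example from the paper); it builds \(L = D - W\) and compares a slowly varying signal with one that flips sign across every edge.

```python
import numpy as np


def laplacian(W):
    """Combinatorial Laplacian L = D - W of a symmetric, non-negative weight matrix."""
    return np.diag(W.sum(axis=1)) - W


def smoothness(x, L):
    """Laplacian quadratic form x^T L x; small values mean x varies little across heavy edges."""
    return float(x @ L @ x)


# Hypothetical 4-node path graph 0-1-2-3 with unit edge weights.
W = np.zeros((4, 4))
for i in range(3):
    W[i, i + 1] = W[i + 1, i] = 1.0
L = laplacian(W)

x_smooth = np.array([1.0, 1.1, 1.2, 1.3])   # neighbouring values close together
x_rough = np.array([1.0, -1.0, 1.0, -1.0])  # sign flips across every edge

# Same quantity via the edge-wise form: sum over edges of w_kl * (x(k) - x(l))^2.
edge_form = sum(W[k, l] * (x_smooth[k] - x_smooth[l]) ** 2
                for k in range(4) for l in range(k + 1, 4))

print(smoothness(x_smooth, L), edge_form)
print(smoothness(x_rough, L))
```

The smooth signal scores about 0.03 (three edges, each with a squared difference of 0.01), while the alternating signal scores 12, since each of its three edges contributes \(2^2 = 4\).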

To connect smoothness with a statistical model, the authors extend the classical factor analysis framework to graph signals. They assume that each observed signal vector \(x_i \in \mathbb{R}^n\) is a linear combination of latent variables \(z_i\) through a transformation matrix \(U\) obtained from the eigendecomposition of the Laplacian, \(L = U\Lambda U^{\top}\), plus a mean vector and additive Gaussian noise; taking the mean to be zero, \(x_i = U z_i + \epsilon_i\). The latent variables are given a zero-mean Gaussian prior with covariance \(\Lambda^{\dagger}\), the Moore–Penrose pseudo-inverse of \(\Lambda\). A plain inverse does not exist, because the smallest Laplacian eigenvalue is zero. Under this prior, the \(k\)-th latent coefficient has variance \(1/\lambda_k\), so high-frequency (large-eigenvalue) components are strongly damped and the representation concentrates on the low-frequency (small-eigenvalue) components of the Laplacian, which translates directly into smooth signals.
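
A minimal sketch of sampling from this factor model, assuming NumPy, a hypothetical 20-node ring graph, a zero mean, and a noise level \(\sigma = 0.1\) chosen only for illustration. Latent coefficients are drawn with covariance \(\Lambda^{\dagger}\) (inverting only the non-zero eigenvalues), mapped through \(U\), and the average \(x^{\top} L x\) is compared against white noise of the same overall scale.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical ring graph on n nodes with unit edge weights.
n = 20
W = np.zeros((n, n))
for i in range(n):
    W[i, (i + 1) % n] = W[(i + 1) % n, i] = 1.0
L = np.diag(W.sum(axis=1)) - W

# Eigendecomposition L = U diag(lam) U^T (eigh returns eigenvalues in ascending order).
lam, U = np.linalg.eigh(L)

# Pseudo-inverse of Lambda: invert non-zero eigenvalues, keep the zero (constant) mode at 0.
lam_pinv = np.zeros_like(lam)
nonzero = lam > 1e-10
lam_pinv[nonzero] = 1.0 / lam[nonzero]

p = 1000      # number of sampled signals (assumed)
sigma = 0.1   # noise standard deviation (assumed)
H = np.sqrt(lam_pinv)[:, None] * rng.standard_normal((n, p))  # columns h ~ N(0, Lambda^+)
X = U @ H + sigma * rng.standard_normal((n, p))                # columns x = U h + eps


def mean_smoothness(X, L):
    """Average of x^T L x over the columns of X, i.e. tr(X^T L X) / p."""
    return np.sum(X * (L @ X)) / X.shape[1]


# White-noise signals with the same overall standard deviation, for comparison.
X_white = X.std() * rng.standard_normal((n, p))

print(mean_smoothness(X, L))        # in expectation: n - 1 + sigma**2 * tr(L)
print(mean_smoothness(X_white, L))
```

For the model signals the average quadratic form should be close to \(n - 1 + \sigma^2 \operatorname{tr}(L)\), because each non-zero eigenvalue contributes \(\lambda_k \cdot (1/\lambda_k) = 1\). White noise spreads its energy evenly across all frequencies, including the high ones, and scores noticeably higher.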

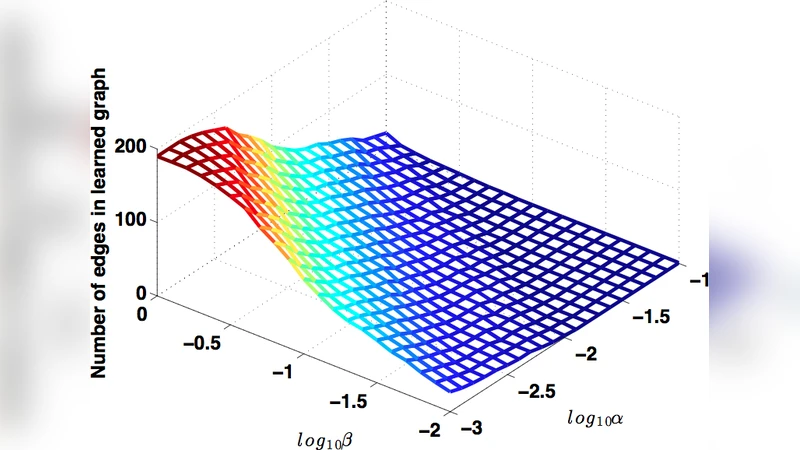

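Assuming additive Gaussian noise on the observations, maximum a posteriori (MAP) estimation of the latent variables under the Gaussian prior gives, for each signal, \(\min_{y_i}\ \|x_i - y_i\|_2^2 + \alpha\, y_i^{\top} L y_i\), where \(y_i = U z_i\) and \(\alpha\) is proportional to the noise variance. Treating \(L\) as unknown as well, and collecting the signals as the columns of \(X\) and \(Y\), leads to the following optimization problem:

\[
\min_{L,\,Y}\ \|X - Y\|_F^2 + \alpha\,\operatorname{tr}\!\left(Y^{\top} L Y\right) + \beta\,\|L\|_F^2
\quad \text{s.t.}\quad \operatorname{tr}(L) = n,\quad L_{kl} = L_{lk} \le 0\ (k \neq l),\quad L\mathbf{1} = \mathbf{0},
\]

where the Frobenius-norm term controls the distribution of the edge weights and the trace constraint rules out the trivial solution \(L = 0\).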
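
The learning problem \(\min_{L,Y} \|X - Y\|_F^2 + \alpha\operatorname{tr}(Y^{\top} L Y) + \beta\|L\|_F^2\) over valid Laplacians with \(\operatorname{tr}(L) = n\) can be attacked by alternating between \(Y\) and \(L\). The sketch below is a minimal version of that idea under stated assumptions: NumPy only, \(L\) parametrized by non-negative upper-triangular edge weights \(w\) with \(\sum w = n/2\) (equivalent to \(\operatorname{tr}(L) = n\)), and projected gradient descent with a sort-based simplex projection swapped in for the \(L\)-step. With \(Y\) fixed that step is a quadratic program, which could also be handed to a general QP solver. The function names, \(\alpha\), \(\beta\), iteration counts, and the toy ring-graph data are hypothetical.

```python
import numpy as np


def project_simplex(v, s):
    """Euclidean projection of v onto the scaled simplex {w >= 0, sum(w) = s}."""
    u = np.sort(v)[::-1]
    css = np.cumsum(u) - s
    k = np.arange(1, v.size + 1)
    rho = np.nonzero(u - css / k > 0)[0][-1]  # last index where the condition holds
    theta = css[rho] / (rho + 1)
    return np.maximum(v - theta, 0.0)


def weights_to_laplacian(w, n, iu):
    """Build L = D - W from the upper-triangular edge weights w."""
    W = np.zeros((n, n))
    W[iu] = w
    W = W + W.T
    return np.diag(W.sum(axis=1)) - W


def learn_graph(X, alpha, beta, n_outer=20, n_inner=200):
    """Alternate an L-step (projected gradient) and a Y-step (closed form)."""
    n = X.shape[0]                     # rows of X are nodes, columns are signals
    iu = np.triu_indices(n, k=1)
    m = iu[0].size
    w = np.full(m, (n / 2) / m)        # feasible start: sum(w) = n/2, i.e. tr(L) = n
    step = 1.0 / (4.0 * beta * n)      # 1 / Lipschitz constant of the L-step gradient
    Y = X.copy()
    for _ in range(n_outer):
        # L-step: tr(Y^T L Y) = sum_{k<l} w_kl * ||y_k - y_l||^2 over node rows of Y.
        sq = np.sum(Y ** 2, axis=1)
        z = np.maximum(sq[:, None] + sq[None, :] - 2.0 * Y @ Y.T, 0.0)[iu]
        for _ in range(n_inner):
            W = np.zeros((n, n))
            W[iu] = w
            d = (W + W.T).sum(axis=1)  # node degrees
            # Gradient of alpha * sum(w * z) + beta * ||L||_F^2 with respect to w.
            grad = alpha * z + 2.0 * beta * (d[iu[0]] + d[iu[1]]) + 4.0 * beta * w
            w = project_simplex(w - step * grad, n / 2)
        L = weights_to_laplacian(w, n, iu)
        # Y-step: the minimizer of ||X - Y||_F^2 + alpha * tr(Y^T L Y) solves (I + alpha L) Y = X.
        Y = np.linalg.solve(np.eye(n) + alpha * L, X)
    return L, Y


# Usage on hypothetical data: smooth signals generated on an 8-node ring graph.
rng = np.random.default_rng(1)
n, p = 8, 500
W_true = np.zeros((n, n))
for i in range(n):
    W_true[i, (i + 1) % n] = W_true[(i + 1) % n, i] = 1.0
L_true = np.diag(W_true.sum(axis=1)) - W_true
lam, U = np.linalg.eigh(L_true)
scale = np.zeros(n)
scale[lam > 1e-10] = 1.0 / np.sqrt(lam[lam > 1e-10])
X = U @ (scale[:, None] * rng.standard_normal((n, p))) + 0.1 * rng.standard_normal((n, p))

L_hat, Y_hat = learn_graph(X, alpha=0.01, beta=1.0)
print(np.round(-L_hat, 2))  # learned edge weights (off-diagonal entries of -L)
```

The weight parametrization keeps symmetry, non-positive off-diagonal entries, and zero row sums true by construction, so only the trace constraint has to be enforced by the projection. How closely the learned weights match the ring depends on \(\alpha\) and \(\beta\). Larger \(\beta\) spreads weight over more edges, and smaller \(\beta\) concentrates it on the pairs with the smallest signal differences.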
