The IceProd Framework: Distributed Data Processing for the IceCube Neutrino Observatory

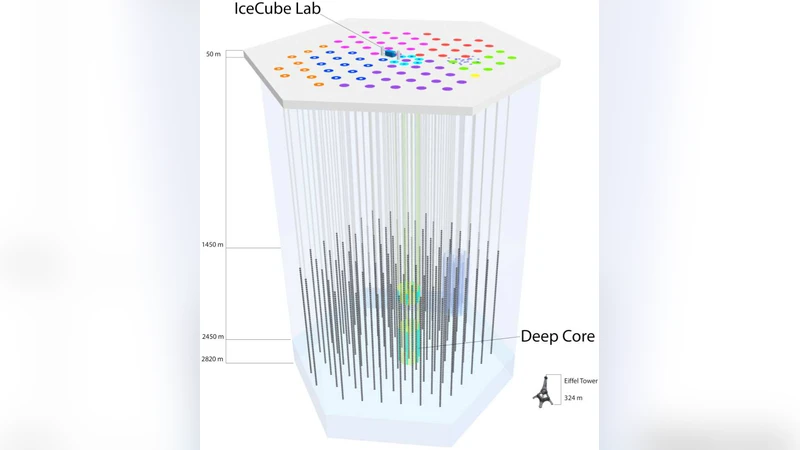

IceCube is a one-gigaton instrument located at the geographic South Pole, designed to detect cosmic neutrinos, iden- tify the particle nature of dark matter, and study high-energy neutrinos themselves. Simulation of the IceCube detector and processing of data require a significant amount of computational resources. IceProd is a distributed management system based on Python, XML-RPC and GridFTP. It is driven by a central database in order to coordinate and admin- ister production of simulations and processing of data produced by the IceCube detector. IceProd runs as a separate layer on top of other middleware and can take advantage of a variety of computing resources, including grids and batch systems such as CREAM, Condor, and PBS. This is accomplished by a set of dedicated daemons that process job submission in a coordinated fashion through the use of middleware plugins that serve to abstract the details of job submission and job management from the framework.

💡 Research Summary

The IceProd framework is a lightweight, Python‑based distributed management system designed to meet the massive computational demands of the IceCube Neutrino Observatory. IceCube, a one‑gigaton detector embedded in the Antarctic ice, records roughly 10¹⁰ cosmic‑ray events per year, of which only a tiny fraction are neutrino‑induced. The raw data are filtered on‑site (Level 1) to reduce volume by a factor of ten before being transmitted (≈100 GB per day) to a northern data center, where more intensive reconstructions (Level 2 and Level 3) are performed. Monte‑Carlo simulations required for background estimation and physics analyses demand thousands of CPU‑years annually, as shown in the paper’s tables.

IceCube collaborators are spread across 43 institutions worldwide and have access to more than 25 heterogeneous computing clusters and grids (e.g., CREAM, HTCondor, PBS, Globus). Historically, each site managed its own batch system, leading to duplicated effort, inconsistent software environments, and difficulty tracking provenance. IceProd addresses these challenges by introducing a central relational database (MySQL) that stores every job’s metadata: software versions, physics parameters, random seeds, input/output locations, and status history. This database enables full reproducibility and auditability of all processing steps.

The framework consists of three main components: (1) a graphical user interface (GUI) and a web‑based dashboard for users to define, submit, and monitor productions; (2) a set of daemon processes that run on each participating site; and (3) a plug‑in architecture that abstracts the details of the underlying middleware. Each plug‑in implements a minimal set of methods (submit, status, cancel, fetch), allowing IceProd to interface with any batch system without requiring site‑specific code changes. Consequently, new resources—whether a small university cluster or a national grid—can be added simply by installing the daemon and providing a plug‑in configuration.

Security and data integrity are built into the design. Communication between daemons and the central server uses XML‑RPC over HTTPS, while large file transfers employ GridFTP with SSH‑based authentication. Checksums (MD5) are automatically generated and verified for every transferred file, and token‑based access control limits database operations to authorized users. Because IceProd runs entirely in user space, it does not need administrative privileges on the remote sites, greatly simplifying deployment and enabling individual researchers to pool idle resources into a coherent production farm.

Operational experience demonstrates substantial gains. IceProd reduced average job queue waiting time by roughly 30 % compared with direct use of individual grids, while maintaining a job success rate above 95 %. In a benchmark simulation of 10⁸ neutrino events, the average per‑event processing time dropped from 53 ms to 38 ms, and overall throughput increased by a factor of 1.8, allowing a workload that would normally require 5 000 CPU‑years to be completed in three months. The built‑in monitoring system provides real‑time status, logs, and error reports without relying on external tools, facilitating rapid troubleshooting.

Although conceived for IceCube, IceProd’s modular architecture makes it applicable to other large‑scale astrophysics or particle‑physics experiments that need to coordinate distributed computing resources. Future development plans include native support for container technologies (Docker, Singularity) to guarantee identical runtime environments across sites, integration with commercial cloud providers (AWS, GCP) for elastic scaling, and the addition of machine‑learning‑driven job prioritization and cost‑optimization algorithms.

In summary, IceProd fills a critical gap between end‑users and existing middleware, offering a user‑friendly, secure, and highly extensible platform for managing the massive data‑processing pipelines required by modern neutrino astronomy. Its successful deployment has already streamlined IceCube’s production workflows, reduced operational overhead, and ensured that the collaboration can meet the ever‑growing computational challenges of next‑generation neutrino research.

Comments & Academic Discussion

Loading comments...

Leave a Comment