Adversarial Top-$K$ Ranking

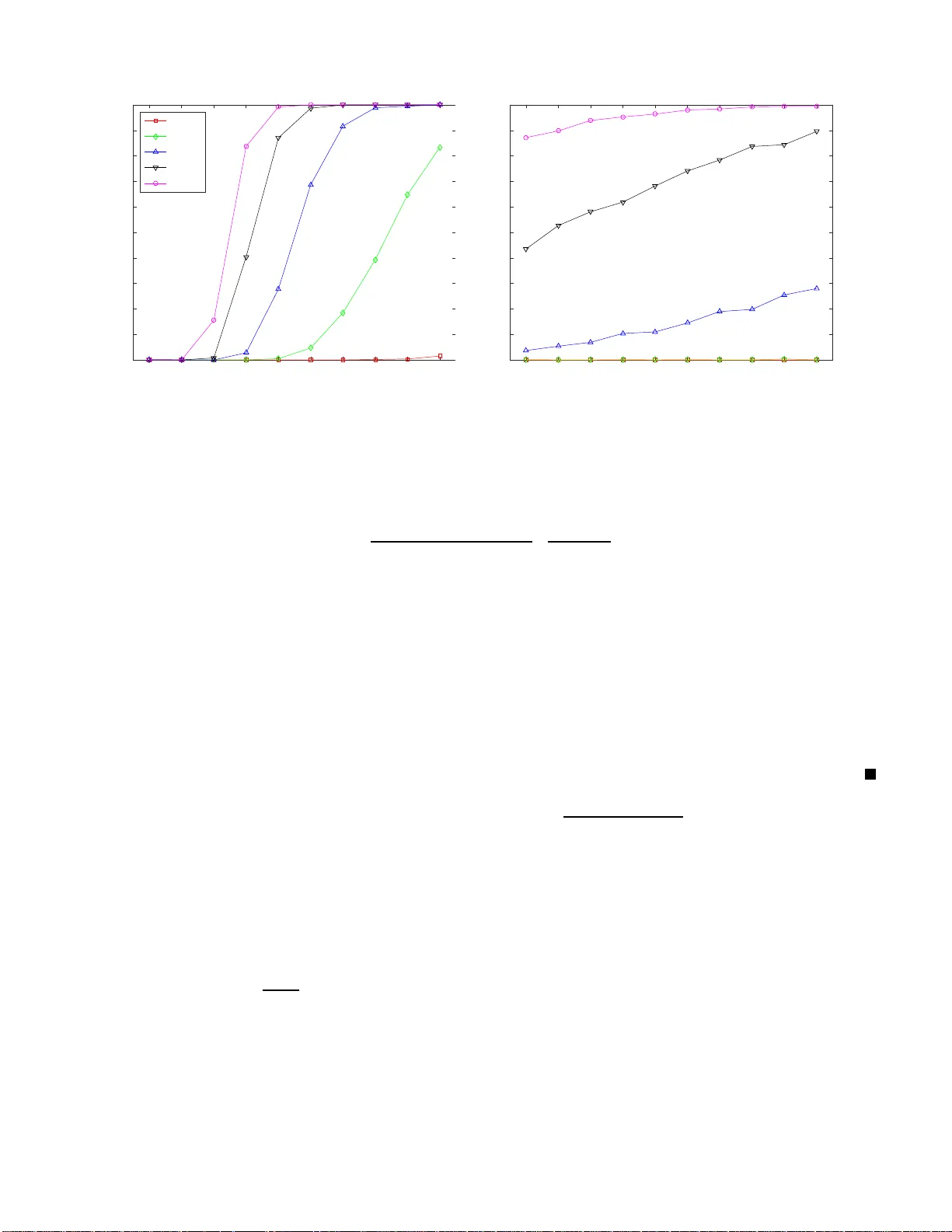

We study the top-$K$ ranking problem, where the goal is to recover the set of top-$K$ ranked items from a large collection based on partially revealed preferences. We consider an adversarial crowdsourced setting where there are two populati…

Authors: Changho Suh, Vincent Y. F. Tan, Renbo Zhao