Greedy Deep Dictionary Learning

In this work we propose a new deep learning tool called deep dictionary learning. Multi-level dictionaries are learnt in a greedy fashion, one layer at a time. Each layer requires solving a simple (shallow) dictionary learning problem, the solution to which is well known. We apply the proposed technique to some benchmark deep learning datasets. We compare our results with other deep learning tools, such as the stacked autoencoder and deep belief network, and with state-of-the-art supervised dictionary learning tools, such as discriminative KSVD and label consistent KSVD. Our method yields better results than all of them.

💡 Research Summary

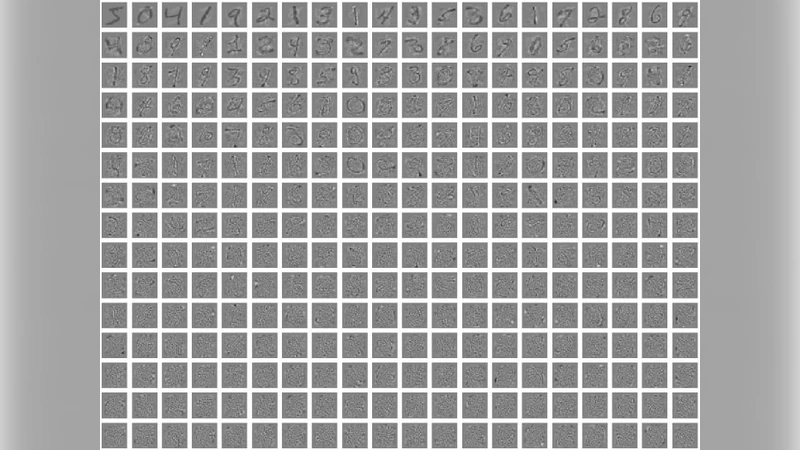

The paper introduces Greedy Deep Dictionary Learning (GDDL), a novel deep learning framework that builds hierarchical representations through a sequence of shallow dictionary learning steps performed in a greedy, layer‑by‑layer fashion. Traditional deep models such as stacked autoencoders (SAE) or deep belief networks (DBN) rely on nonlinear activations and complex joint optimization, which often leads to slow convergence and demanding hyper‑parameter tuning. In contrast, GDDL replaces the nonlinear pre‑training stage with linear dictionary learning (e.g., K‑SVD) and sparse coding. For each layer ℓ, the algorithm learns a dictionary D⁽ℓ⁾ and a sparse code X⁽ℓ⁾ from the output of the previous layer, using well‑established sparse coding solvers such as OMP or LARS. Because each layer becomes a self‑contained shallow problem once the previous layer's codes are fixed, the method can reuse mature optimization tools and requires less memory. Within a layer, sparse coding is independent per sample and therefore parallelizes naturally.
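The layer‑by‑layer procedure can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: it substitutes ISTA (iterative soft thresholding) for the OMP/LARS solvers and a least‑squares (MOD‑style) dictionary update for K‑SVD, and the function names, the penalty weight `lam`, and the iteration counts are all hypothetical choices.

```python
import numpy as np


def soft_threshold(v, t):
    """Elementwise soft-thresholding, the proximal operator of t * ||.||_1."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)


def sparse_code_ista(D, Y, lam, n_iter=50):
    """Approximately solve min_X 0.5*||Y - D X||_F^2 + lam*||X||_1 with ISTA.

    Assumption: ISTA stands in for the OMP/LARS solvers named in the text.
    """
    # Step size from the Lipschitz constant of the gradient (squared spectral norm).
    lipschitz = np.linalg.norm(D, 2) ** 2 + 1e-12
    X = np.zeros((D.shape[1], Y.shape[1]))
    for _ in range(n_iter):
        grad = D.T @ (D @ X - Y)
        X = soft_threshold(X - grad / lipschitz, lam / lipschitz)
    return X


def learn_dictionary(Y, n_atoms, lam=0.1, n_outer=10, seed=0):
    """Shallow dictionary learning by alternating sparse coding and a
    least-squares dictionary update (MOD-style, used here instead of K-SVD)."""
    rng = np.random.default_rng(seed)
    D = rng.standard_normal((Y.shape[0], n_atoms))
    D /= np.linalg.norm(D, axis=0, keepdims=True)
    for _ in range(n_outer):
        X = sparse_code_ista(D, Y, lam)
        D = Y @ np.linalg.pinv(X)  # least-squares fit of D for fixed codes
        # Renormalize atoms; the guard avoids division by zero for unused atoms.
        D /= np.maximum(np.linalg.norm(D, axis=0, keepdims=True), 1e-12)
    return D, sparse_code_ista(D, Y, lam)


def greedy_deep_dictionary(Y, layer_sizes, lam=0.1):
    """Greedy stacking: the codes of layer l become the data for layer l + 1.

    Y has shape (n_features, n_samples); layer_sizes lists atoms per layer.
    Returns the list of dictionaries and the topmost codes.
    """
    dictionaries, codes = [], Y
    for n_atoms in layer_sizes:
        D, codes = learn_dictionary(codes, n_atoms, lam)
        dictionaries.append(D)  # earlier layers are never revisited
    return dictionaries, codes


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    Y = rng.standard_normal((20, 100))  # toy data: 20 features, 100 samples
    dictionaries, top_codes = greedy_deep_dictionary(Y, layer_sizes=[15, 8])
    print([D.shape for D in dictionaries], top_codes.shape)
```

In this sketch the topmost codes returned by `greedy_deep_dictionary` would play the role of the features that the summary says are passed to a linear classifier.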

The key insight is that greedily fixing the representation after each layer gives the subsequent layer a compact, sparsified input that already captures salient structure. This yields two practical benefits: (1) computational efficiency, since training a deep network reduces to a series of inexpensive shallow tasks; and (2) regularization, since sparse linear transformations limit over‑fitting, allowing a simple linear classifier (e.g., SVM) on the topmost sparse codes to achieve strong performance.
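Written out, the greedy scheme amounts to one shallow problem per layer. The formulation below is a sketch under assumed notation that the summary does not fix: the input data is denoted $Y = X^{(0)}$, sparsity is enforced with an ℓ₁ penalty of weight $\lambda_\ell$, and the atoms $d_k$ of each dictionary are norm‑bounded to rule out trivial rescaling.

$$
\left(D^{(\ell)}, X^{(\ell)}\right) \in \arg\min_{D,\,X} \; \tfrac{1}{2}\left\| X^{(\ell-1)} - D X \right\|_F^2 + \lambda_\ell \left\| X \right\|_1
\quad \text{s.t. } \|d_k\|_2 \le 1 \ \ \forall k,
\qquad \ell = 1, \dots, L, \quad X^{(0)} = Y .
$$

Once layer $\ell$ is solved, $X^{(\ell)}$ is held fixed and passed on as the data for layer $\ell + 1$; no earlier dictionary is revisited, which is what makes the procedure greedy.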

The authors benchmark GDDL on three standard datasets: MNIST, CIFAR‑10, and a subset of the 20 Newsgroups text corpus. They construct 2‑ and 3‑layer models, keep dictionary size and sparsity parameters constant across methods, and evaluate the final sparse representation with a linear SVM. GDDL consistently outperforms all four baselines: SAE, DBN, Discriminative K‑SVD, and Label‑Consistent K‑SVD. On MNIST, a 2‑layer GDDL reaches 98.7% accuracy (≈2% higher than SAE and DBN, and ≈0.6% higher than the supervised K‑SVD variants). On CIFAR‑10, a 3‑layer configuration attains 73.2% accuracy, surpassing the best baseline by roughly 2%. Similar gains are observed on the text dataset, where GDDL improves the F1 score by 2–3% over competing methods. The experiments also show a monotonic improvement as layers are added, suggesting that the greedy stacking accumulates richer features with depth.

Despite these promising results, the paper acknowledges limitations. The current formulation is restricted to linear dictionaries and ℓ₁/ℓ₀ sparsity, which cannot directly model highly nonlinear manifolds. The authors suggest extending the framework with kernelized or neural‑network‑based dictionaries, and integrating automatic hyper‑parameter search to reduce sensitivity to dictionary size and sparsity level.

In summary, GDDL offers a simple yet powerful alternative to conventional deep learning pre‑training. By leveraging well‑understood shallow dictionary learning in a greedy hierarchical fashion, it achieves state‑of‑the‑art performance on several benchmarks while maintaining computational efficiency and ease of implementation. Future work aimed at incorporating nonlinear dictionary structures and end‑to‑end training could further broaden its applicability across diverse domains.
