Automated Analysis of Behavioural Variability and Filial Imprinting of Chicks (G. gallus), using Autonomous Robots

Inter-individual variability has various impacts on animal social behaviour. This implies that not only collective behaviours but also the behavioural variability of each group member must be studied. To understand these effects on group behaviour, we develop a quantitative methodology based on automated ethograms and autonomous robots to study inter-individual variability among social animals. We choose chicks of Gallus gallus domesticus as a classic social animal model system because they are well suited to laboratory and controlled experimentation. Moreover, even domesticated chickens present social structures involving forms of leadership and filial imprinting. We develop an imprinting methodology on autonomous robots to study the individual and social behaviour of free-moving animals. This allows us to quantify the behaviours of a large number of animals. We develop an automated experimental methodology that allows relatively fast controlled experiments and efficient data analysis. Our analyses are based on high-throughput data, allowing a fine quantification of individual behavioural traits. We quantify the efficiency of various state-of-the-art algorithms for automating data analysis and producing automated ethograms. We show that the use of robots makes it possible to provide controlled and quantified stimuli to the animals in the absence of human intervention. We quantify the individual behaviour of 205 chicks obtained from hatching after synchronized fertilisation. Our results show a high variability of individual behaviours and of imprinting quality and success. Three classes of chicks are observed, with various levels of imprinting. Our study shows that the concomitant use of autonomous robots and automated ethograms allows detailed and quantitative analysis of the behavioural patterns of animals in controlled laboratory experiments.

💡 Research Summary

This paper presents an integrated methodology that combines autonomous robots with high‑throughput video analysis to quantify individual behavioural variability and filial imprinting in domestic chicks (Gallus gallus domesticus). The authors designed a small autonomous robot, “PoulBot,” equipped with a coloured shell, a 360° omnidirectional camera, and infrared proximity sensors. The robot provides the visual, auditory, and motion cues required for filial imprinting, effectively acting as a surrogate mother.

A total of 205 chicks, hatched from synchronized fertilisation, were individually tested in a circular arena for one hour each. High‑speed video recordings were processed to extract per‑frame features such as the chick’s position, speed, acceleration, and distance to the robot. These raw features were reduced via Principal Component Analysis (PCA) to two principal components for downstream classification.
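
The per-frame feature extraction described above can be sketched in a few lines. This is a minimal, dependency-free illustration assuming that chick and robot positions are already tracked as (x, y) pairs at a constant frame rate; the function name `frame_features` and the backward finite-difference scheme are assumptions, not the authors' code. The PCA reduction to two components would then be applied to the resulting feature table, for example with scikit-learn's `PCA(n_components=2)`.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def frame_features(chick: List[Point], robot: List[Point], fps: float) -> List[Dict[str, float]]:
    """Hypothetical helper: per-frame position, speed, acceleration (rate of
    change of speed) and chick-robot distance from two synchronised tracks.

    Backward finite differences are used, so speed is 0 in the first frame
    and acceleration is 0 in the first two frames.
    """
    if len(chick) != len(robot):
        raise ValueError("chick and robot tracks must have the same length")
    dt = 1.0 / fps
    features = []
    prev_speed = 0.0
    for i, ((x, y), (rx, ry)) in enumerate(zip(chick, robot)):
        if i == 0:
            speed = 0.0
        else:
            px, py = chick[i - 1]
            speed = math.hypot(x - px, y - py) / dt
        # Acceleration needs two valid speeds, i.e. three positions.
        accel = (speed - prev_speed) / dt if i >= 2 else 0.0
        features.append({
            "x": x,
            "y": y,
            "speed": speed,
            "accel": accel,
            "dist_robot": math.hypot(x - rx, y - ry),
        })
        prev_speed = speed
    return features
```

For example, `frame_features([(0, 0), (1, 0), (3, 0)], [(0, 4)] * 3, fps=1.0)` yields speeds of 0, 1 and 2, an acceleration of 1 in the last frame, and a final chick-robot distance of 5.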

The first analytical goal was to classify each chick’s response to the robot into three categories: (1) imprinted – the chick follows and stays close to the robot, (2) indifferent – the chick shows little or no reaction, and (3) avoider – the chick actively avoids the robot. A supervised learning approach was adopted: 64 chicks (31.22 % of the dataset) were manually labelled and used to train a linear Support Vector Machine (SVM) on three features (mean distance to robot, mean speed, speed standard deviation). The trained SVM achieved 98.05 % accuracy on the full set of 205 individuals, revealing that 55.12 % were imprinted, 36.10 % indifferent, and 8.78 % avoiders. Misclassifications (four individuals) corresponded to mixed behavioural patterns that were also ambiguous to human observers.
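
As an illustration of this classification step, the sketch below computes the three per-chick features named above and trains a one-vs-rest linear SVM by plain full-batch subgradient descent on the regularised hinge loss, using only the Python standard library. The function names, the hyper-parameters (`lam`, `lr`, `epochs`) and the one-vs-rest scheme are assumptions: the summary does not say which SVM implementation or multi-class strategy was used, and in practice the features would usually be standardised first (for example with scikit-learn's `StandardScaler` followed by `LinearSVC`).

```python
import statistics
from typing import Dict, List, Sequence, Tuple

Model = Dict[str, Tuple[List[float], float]]


def summary_features(dists: Sequence[float], speeds: Sequence[float]) -> Tuple[float, float, float]:
    """Mean distance to robot, mean speed and (population) speed standard deviation."""
    return (statistics.fmean(dists), statistics.fmean(speeds), statistics.pstdev(speeds))


def _score(w: List[float], b: float, x: Sequence[float]) -> float:
    return sum(wj * xj for wj, xj in zip(w, x)) + b


def train_binary_svm(X, y, lam=0.01, lr=0.1, epochs=2000):
    """Linear SVM with labels +1/-1, trained by full-batch subgradient descent on
    lam/2 * ||w||^2 + mean(max(0, 1 - y * (w.x + b))); the bias b is not regularised."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w = [lam * wj for wj in w]
        grad_b = 0.0
        for xi, yi in zip(X, y):
            if yi * _score(w, b, xi) < 1.0:  # inside the margin or misclassified
                for j in range(d):
                    grad_w[j] -= yi * xi[j] / n
                grad_b -= yi / n
        w = [wj - lr * g for wj, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def train_one_vs_rest(X, labels) -> Model:
    """One binary SVM per class, each separating that class from all the others."""
    return {c: train_binary_svm(X, [1.0 if lab == c else -1.0 for lab in labels])
            for c in sorted(set(labels))}


def predict(model: Model, x: Sequence[float]) -> str:
    """Return the class whose binary SVM gives the highest decision score."""
    return max(model, key=lambda c: _score(*model[c], x))
```

For reference, four misclassifications among 205 chicks correspond to 201/205 ≈ 98.05 % accuracy, and 64 labelled chicks are 64/205 ≈ 31.22 % of the dataset, consistent with the figures above.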

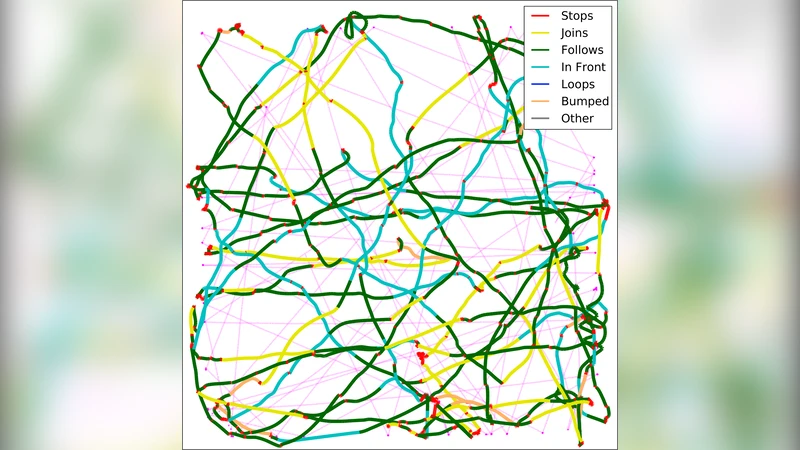

The second analytical goal was to generate automated ethograms for each chick. The authors implemented a two‑step pipeline: (i) segmentation of the trajectory into temporal windows based on speed and distance dynamics, and (ii) labelling of each segment using either clustering (k‑means) or supervised classifiers. Five behavioural states were defined – walking/stopping, tracking, avoidance, exploration, and resting. This “automated ethology” approach produced ethograms comparable to those created by expert human observers but with a three‑fold reduction in processing time and higher consistency.
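
The two-step pipeline above can be illustrated with a dependency-free sketch. Fixed-length windows stand in for the speed- and distance-based segmentation (an assumption; the summary does not specify the segmentation rule), each window is summarised by its mean speed and mean distance to the robot, and windows are grouped with Lloyd's k-means using a deterministic farthest-point initialisation. All function names are hypothetical. Cluster indices are arbitrary: mapping them onto the five named states would still require inspection or a supervised classifier, and because speed and distance have different units they would normally be rescaled before clustering.

```python
import statistics
from typing import List, Sequence, Tuple

Vec = Tuple[float, ...]


def window_features(speeds: Sequence[float], dists: Sequence[float], window: int) -> List[Vec]:
    """Split the track into consecutive fixed-length windows (a trailing partial
    window is dropped) and describe each one by (mean speed, mean distance)."""
    n_win = len(speeds) // window
    return [
        (statistics.fmean(speeds[k * window:(k + 1) * window]),
         statistics.fmean(dists[k * window:(k + 1) * window]))
        for k in range(n_win)
    ]


def _sq_dist(a: Vec, b: Vec) -> float:
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def kmeans(points: List[Vec], k: int, iters: int = 100) -> List[int]:
    """Lloyd's k-means; initial centroids are chosen by farthest-point selection."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(max(points, key=lambda p: min(_sq_dist(p, c) for c in centroids)))
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: nearest centroid for every window.
        labels = [min(range(k), key=lambda j: _sq_dist(p, centroids[j])) for p in points]
        # Update step: centroid = mean of its members (unchanged if the cluster is empty).
        new_centroids = []
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            new_centroids.append(
                tuple(statistics.fmean(dim) for dim in zip(*members)) if members else centroids[j]
            )
        if new_centroids == centroids:
            break
        centroids = new_centroids
    return labels


def to_bouts(labels: List[int], window: int) -> List[Tuple[int, int, int]]:
    """Merge consecutive windows with the same cluster into (state, start_frame, end_frame) bouts."""
    bouts: List[Tuple[int, int, int]] = []
    for k, lab in enumerate(labels):
        if bouts and bouts[-1][0] == lab:
            bouts[-1] = (lab, bouts[-1][1], (k + 1) * window)
        else:
            bouts.append((lab, k * window, (k + 1) * window))
    return bouts
```

For a 12-frame track that is stationary near the robot, then moves fast far from it, then stops near it again, windows of 4 frames give the ethogram `[(0, 0, 4), (1, 4, 8), (0, 8, 12)]`.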

Behavioural analyses showed clear quantitative differences among the three groups. Imprinted chicks spent more time near the robot, exhibited variable speeds, and alternated between walking and short stops. Indifferent chicks remained largely stationary, while avoiders moved at higher, more constant speeds along the arena walls. Distributions of speed, distance, and stop‑walk ratios provided robust metrics for distinguishing the groups.
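
The group comparisons above rest on simple per-chick summaries. A minimal sketch of such metrics follows, assuming per-frame speed and chick-robot distance series as input. The thresholds `stop_speed` and `near_radius`, the count of stop/walk switches, and the use of the coefficient of variation as a "constant speed" indicator are illustrative assumptions, not values or metrics reported by the authors.

```python
import math
import statistics
from typing import Dict, Sequence


def behaviour_metrics(speeds: Sequence[float], dists: Sequence[float],
                      stop_speed: float = 1.0, near_radius: float = 10.0) -> Dict[str, float]:
    """Hypothetical per-chick metrics for comparing imprinted, indifferent and avoider chicks.

    stop_speed and near_radius are illustrative thresholds, not values from the study.
    """
    if not speeds or len(speeds) != len(dists):
        raise ValueError("need equal-length, non-empty speed and distance series")
    stopped = [s < stop_speed for s in speeds]
    n_stop = sum(stopped)
    n_walk = len(stopped) - n_stop
    mean_speed = statistics.fmean(speeds)
    return {
        # Fraction of frames spent within near_radius of the robot.
        "frac_near_robot": sum(d <= near_radius for d in dists) / len(dists),
        # Stopped frames per walking frame (inf if the chick never walks).
        "stop_walk_ratio": n_stop / n_walk if n_walk else math.inf,
        # Number of stop<->walk transitions: high when walks alternate with short stops.
        "stop_walk_switches": sum(a != b for a, b in zip(stopped, stopped[1:])),
        "mean_speed": mean_speed,
        # Coefficient of variation of speed: low for steady, constant-speed movement.
        "speed_cv": statistics.pstdev(speeds) / mean_speed if mean_speed else 0.0,
    }
```

A chick that alternates between stopping and walking at speed 2, for instance `behaviour_metrics([0, 2, 2, 0, 2, 0], [5, 5, 20, 20, 5, 5])`, gets a stop/walk ratio of 1.0, four switches and spends two thirds of its frames near the robot.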

The study demonstrates that autonomous robots can deliver controlled, repeatable stimuli without human interference, and that high‑throughput video analytics coupled with machine‑learning classifiers can efficiently capture fine‑grained behavioural traits across large populations. Limitations include potential bias introduced by the robot’s specific colour, sound, and motion patterns, and the reliance on a linear SVM, which may miss non‑linear behavioural nuances. The authors suggest that future work explore deep‑learning time‑series models, adaptive robot stimulus modulation, and cross‑species extensions. Overall, the work offers a scalable, reproducible framework for dissecting individual variability in social animals, bridging the gap between collective‑level studies and fine‑scale ethological analysis.
