Solving the G-problems in less than 500 iterations: Improved efficient constrained optimization by surrogate modeling and adaptive parameter control

Constrained optimization of high-dimensional numerical problems plays an important role in many scientific and industrial applications. Function evaluations in many industrial applications are severely limited and no analytical information about obje…

Authors: Samineh Bagheri, Wolfgang Konen, Michael Emmerich, Thomas Bäck

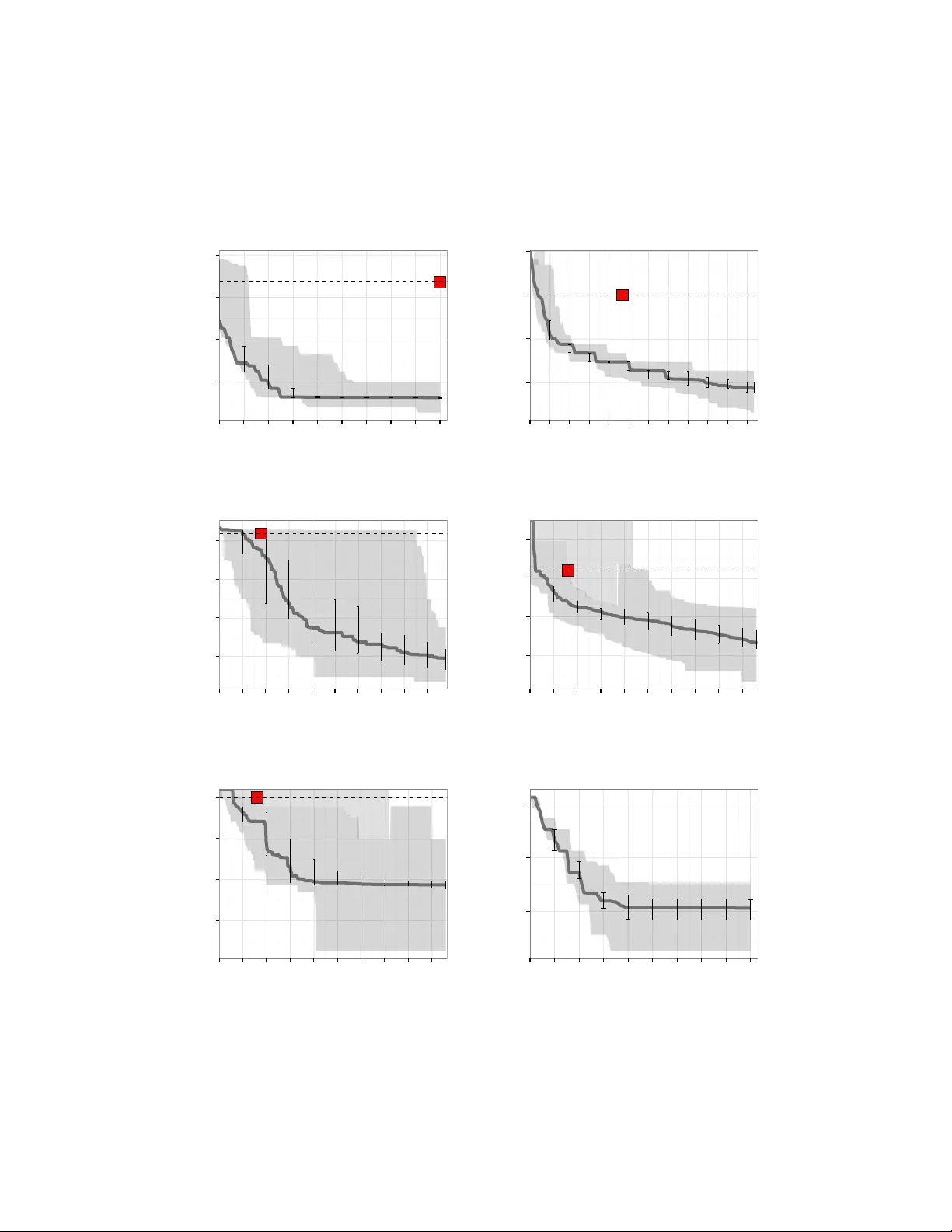

Samineh Bagheri (a), Wolfgang Konen (a, ∗), Michael Emmerich (b), Thomas Bäck (b)

(a) Department of Computer Science, TH Köln (University of Applied Sciences), 51643 Gummersbach, Germany
(b) Leiden University, LIACS, 2333 CA Leiden, The Netherlands

Abstract

Constrained optimization of high-dimensional numerical problems plays an important role in many scientific and industrial applications. Function evaluations in many industrial applications are severely limited and no analytical information about objective function and constraint functions is available. For such expensive black-box optimization tasks, the constrained optimization algorithm COBRA was proposed, making use of RBF surrogate modeling for both the objective and the constraint functions. COBRA has shown remarkable success in reliably solving complex benchmark problems in less than 500 function evaluations. Unfortunately, COBRA requires careful adjustment of parameters in order to do so.

In this work we present a new self-adjusting algorithm SACOBRA, which is based on COBRA and capable of achieving high-quality results with very few function evaluations and no parameter tuning. It is shown with the help of performance profiles on a set of benchmark problems (G-problems, MOPTA08) that SACOBRA consistently outperforms any COBRA algorithm with fixed parameter setting. We analyze the importance of the several new elements in SACOBRA and find that each element of SACOBRA plays a role in boosting the overall optimization performance. We discuss the reasons behind this and gain in this way a better understanding of high-quality RBF surrogate modeling.
Keywords: optimization; constrained optimization; expensive black-box optimization; radial basis function; self-adjustment

∗ Corresponding author.
Email addresses: {samineh.bagheri,wolfgang.konen}@th-koeln.de (Wolfgang Konen), {m.t.m.emmerich,T.H.W.Baeck}@liacs.leidenuniv.nl (Thomas Bäck)

Preprint submitted to Applied Soft Computing, January 1, 2016

1. Introduction

Real-world optimization problems are often subject to constraints, restricting the feasible region to a smaller subset of the search space. It is the goal of constrained optimizers to avoid infeasible solutions and to stay in the feasible region, in order to converge to the optimum. However, the search in constrained black-box optimization can be difficult, since we usually have no a-priori knowledge about the feasible region and the fitness landscape. This problem becomes even harder when only a limited number of function evaluations is allowed for the search. Yet in industry good solutions are requested in very restricted time frames. An example is the well-known benchmark MOPTA08 [17].

In the past different strategies have been proposed to handle constraints. For example, repair methods try to guide infeasible solutions into the feasible area. Penalty functions give a negative bias to the objective function value when constraints are violated. Many constraint handling methods are available in the scientific literature, but they often demand a large number of function evaluations (e.g., results in [35, 21]). Up to now, only little work has been devoted to efficient constrained optimization (severely reduced number of function evaluations). A possible solution in that regard is to use surrogate models for the objective and the constraint functions. While the real function might be expensive to evaluate, evaluations on the surrogate functions are usually cheap.
As an example for this approach, the solver COBRA (Constrained Optimization by Radial Basis Function Approximation) was proposed by Regis [31] and outperforms many other algorithms on a large number of benchmark functions.

In our previous work [18, 19] we have studied a reimplementation of COBRA in R [30] enhanced by a new repair mechanism and reported its strengths and weaknesses. Although good results were obtained, each new problem required tedious manual tuning of the many parameters in COBRA. In this paper we follow a more unifying path and present SACOBRA (Self-Adjusting COBRA), an extension of COBRA which starts with the same settings on all problems and adjusts all necessary parameters internally.¹ This is adaptive parameter control according to the terminology introduced by Eiben et al. [10]. We present extensive tests of SACOBRA and other algorithms on a well-known and popular benchmark from the literature: The so-called G-problem or G-function benchmark was introduced by Michalewicz and Schoenauer [22] and Floudas and Pardalos [14]. It provides a set of constrained optimization problems with a wide range of different conditions.

We define the following research questions for the constrained optimization experiments in this work:

(H1) Do numerical instabilities occur in RBF surrogates and is it possible to avoid them?
(H2) Is it possible with SACOBRA to start with the same initial parameters on all G-problems and to solve them by self-adjusting the parameters on-line?
(H3) Is it possible with SACOBRA to solve all G-problems in less than 500 function evaluations?

¹ SACOBRA is available as open-source R-package from CRAN: https://cran.r-project.org/web/packages/SACOBRA

1.1. Related work

Following the surveys on constrained optimization given by Michalewicz and Schoenauer [22], Eiben and Smith [11], Coello Coello [7], Jiao et al. [16], and Kramer [20], several approaches are available for constraint handling:

(i) unconstrained optimization with a penalty added to the fitness value for infeasible solutions
(ii) feasible solution preference methods and stochastic ranking
(iii) repair algorithms to resolve constraint violations during the search
(iv) multi-objective optimization, where the constraint functions are defined as additional objectives

A frequently used approach to handle constraints is to incorporate static or dynamic penalty terms (i) in order to stay in the feasible region [7, 20, 24]. Penalty functions can be very helpful for solving constrained problems, but their main drawback is that they often require additional parameters for balancing the fitness and penalty terms. Tessema and Yen [38] propose an interesting adaptive penalty method which does not need any parameter tuning.

Feasible solution preference methods (ii) [9, 23] always prefer feasible solutions to infeasible solutions. They may use too little information from infeasible solutions and risk getting stuck in local optima. Deb [9] improves this method by introducing a diversity mechanism. Stochastic ranking [35, 36] is a similar and very successful improvement: With a certain probability an infeasible solution is ranked not behind, but (according to its fitness value) among the feasible solutions. Stochastic ranking has shown good results on all 11 G-problems. However, it usually requires a large number of function evaluations (300 000 and more) and is thus not well suited for efficient optimization.

Repair algorithms (iii) try to transform infeasible solutions into feasible ones [5, 40, 19]. The work of Chootinan and Chen [5] shows very good results on 11 G-problems, but requires a large number of function evaluations (5 000 – 500 000) as well.
In recent years, multi-objective optimization techniques (iv) have attracted increasing attention for solving constrained optimization problems. The general idea is to treat the constraints as one or more objective functions to be optimized in conjunction with the fitness function. Coello Coello and Montes [8] use Pareto dominance-based tournament selection for a genetic algorithm (GA). Similarly, Venkatraman and Yen [39] propose a two-phase GA, where the second phase is formulated as a bi-objective optimization problem which uses non-dominated ranking. Jiao et al. [16] use a novel selection strategy based on bi-objective optimization and get improved reliability on a large number of benchmark functions. Emmerich et al. [12] use Kriging models for approximating constraints in a multi-objective optimization scheme.

In the field of model-assisted optimization algorithms for constrained problems, support vector machines (SVMs) have been used by Poloczek and Kramer [26]. They make use of SVMs as a classifier for predicting the feasibility of solutions, but achieve only slight improvements. Powell [28] proposes COBYLA, a direct search method which models the objective and the constraints using linear approximation. Recently, Regis [31] developed COBRA, an efficient solver that makes use of Radial Basis Function (RBF) interpolation to model objective and constraint functions, and outperforms most algorithms in terms of required function evaluations on a large number of benchmark functions. Tenne and Armfield [37] present an adaptive topology RBF network to tackle highly multimodal functions, but they consider only unconstrained optimization.

Most optimization algorithms need their parameters to be set with respect to the specific optimization problem in order to show good performance. Eiben et al. [10] introduced a terminology for parameter settings for evolutionary algorithms: They distinguish parameter tuning (before the run) and parameter control (online). Parameter control is further subdivided into predefined control schemes (deterministic), control with feedback from the optimization run (adaptive), or control where the parameters are part of the evolvable chromosome (self-adaptive).

Several papers deal with adaptive or self-adaptive parameter control in unconstrained or constrained optimization: Qin and Suganthan [29] propose a self-adaptive differential evolution (DE) algorithm. Brest et al. [2] propose another self-adaptive DE algorithm. But they do not handle constraints, whereas Zhang et al. [41] describe a constraint-handling mechanism for DE. We will later compare our results with the DE implementation DEoptimR ², which is based on both works [2, 41]. Farmani and Wright [13] propose a self-adaptive fitness formulation and test it on all 11 G-problems. They show comparable results to stochastic ranking [35], but require many function evaluations (above 300 000) as well. Coello Coello [6] and Tessema and Yen [38] propose self-adaptive penalty approaches. A survey of self-adaptive penalty approaches is given in [10].

The area of efficient constrained optimization, that is optimization under a severely limited budget of less than 1000 function evaluations, has been attracting more and more attention in recent years: Besides the already mentioned COBRA approach [31], Regis proposed a trust-region evolutionary algorithm [33] which uses RBF surrogates as well and which exhibits high-quality results on many but not all G-functions in less than 1000 function evaluations. Jiao et al. [16] propose a self-adaptive selection method to combine informative feasible and infeasible solutions and they formulate it as a multi-objective problem.
Their algorithm can solve some of the G-functions (G08, G11, G12) really fast in less than 500 evaluations, some others are solved with less than 10 000 evaluations, but the remaining G-functions (G01-G03, G07, G10) require 20 000 to 120 000 evaluations to be solved. Zahara and Kao [40] show similar results (1000 – 20 000 evaluations) on some G-functions, but they investigate only G04, G08, and G12. To the best of our knowledge there is currently no approach which can solve all 11 G-problems in less than 1 000 evaluations. Tenne and Armfield [37] present an interesting approach with approximating RBFs to optimize highly multimodal functions in less than 200 evaluations, but their results are only for unconstrained functions and they are not competitive in terms of precision.

The rest of this paper is organized as follows: In Sec. 2 we present the constrained optimization problem and our methods: the RBF surrogate modeling technique, the COBRA-R algorithm and the SACOBRA algorithm. In Sec. 3 we perform a thorough experimental study on analytical test functions and on a real-world benchmark function MOPTA08 [17] from the automotive domain. We analyze with the help of data profiles the impact of the various SACOBRA elements on the overall performance. The results are discussed in Sec. 4 and we give concluding remarks in Sec. 5.

² R-package DEoptimR, available from https://cran.r-project.org/web/packages/DEoptimR

2. Methods

2.1. Constrained optimization

A constrained optimization problem can be defined by the minimization of an objective function $f$ subject to constraint function(s) $g_1, \dots, g_m$:

$$
\begin{aligned}
\text{Minimize}\quad & f(x) \\
\text{subject to}\quad & g_i(x) \le 0, \quad i = 1, 2, \dots, m, \quad x \in [a, b] \subset \mathbb{R}^d
\end{aligned}
\tag{1}
$$

In this paper we always consider minimization problems. Maximization problems are transformed to minimization without loss of generality.
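The problem form of Eq. (1) can be made concrete with a small Python sketch. It is only an illustration: the helper names `is_feasible` and `as_minimization` and the toy problem are assumptions introduced here, not part of COBRA or SACOBRA.

```python
from typing import Callable, List, Sequence

Vector = Sequence[float]


def is_feasible(x: Vector,
                constraints: List[Callable[[Vector], float]],
                lower: Vector, upper: Vector) -> bool:
    """Check x in [a, b] and g_i(x) <= 0 for all i, as in Eq. (1)."""
    in_box = all(lo <= xi <= hi for xi, lo, hi in zip(x, lower, upper))
    return in_box and all(g(x) <= 0.0 for g in constraints)


def as_minimization(f_max: Callable[[Vector], float]) -> Callable[[Vector], float]:
    """Turn a maximization objective into a minimization objective."""
    return lambda x: -f_max(x)


# Hypothetical toy problem: minimize x0 + x1 subject to 1 - x0*x1 <= 0 on [0, 3]^2.
objective = lambda x: x[0] + x[1]
constraints = [lambda x: 1.0 - x[0] * x[1]]
lower, upper = [0.0, 0.0], [3.0, 3.0]

print(is_feasible([1.0, 1.0], constraints, lower, upper))  # on the constraint boundary
print(is_feasible([0.5, 0.5], constraints, lower, upper))  # violates g_1
```

Negating the objective leaves the location of the optimum unchanged, which is why the restriction to minimization loses no generality.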
Problems with equality constraints are first transformed to inequalities (see Sec. 3.1 and Sec. 4.2).

2.2. Radial Basis Functions

The COBRA algorithm incorporates optimization on auxiliary functions, e.g. regression models over the search space. Although numerous regression models are available, we employ interpolating RBF models [4, 27], since they outperform other models in terms of efficiency and quality. In this paper we use the same notation as Regis [32]. RBF models require as input a set of design points (a training set): $n$ points $u^{(1)}, \dots, u^{(n)} \in \mathbb{R}^d$ are evaluated on the real function, giving $f(u^{(1)}), \dots, f(u^{(n)})$. We use an interpolating radial basis function as approximation:

$$
s^{(n)}(x) = \sum_{i=1}^{n} \lambda_i \, \varphi(\|x - u^{(i)}\|) + p(x), \quad x \in \mathbb{R}^d
\tag{2}
$$

Here, $\|\cdot\|$ is the Euclidean norm, $\lambda_i \in \mathbb{R}$ for $i = 1, \dots, n$, $p(x) = c_0 + c\,x$ is a linear polynomial in $d$ variables with $d + 1$ coefficients $c' = (c_0, c)^T = (c_0, c_1, \dots, c_d)^T \in \mathbb{R}^{d+1}$, and $\varphi$ is of cubic form $\varphi(r) = r^3$. An alternative to cubic RBFs are Gaussian RBFs $\varphi(r) = e^{-r^2/(2\sigma^2)}$, which introduce an additional width parameter $\sigma$.

The RBF model fit requires a distance matrix $\Phi \in \mathbb{R}^{n \times n}$: $\Phi_{ij} = \varphi(\|u^{(i)} - u^{(j)}\|)$, $i, j = 1, \dots, n$. The RBF model requires the solution of the following linear system of equations:

$$
\begin{pmatrix} \Phi & P \\ P^T & 0_{(d+1) \times (d+1)} \end{pmatrix}
\begin{pmatrix} \lambda \\ c' \end{pmatrix}
=
\begin{pmatrix} F \\ 0_{d+1} \end{pmatrix}
\tag{3}
$$

for the unknowns $\lambda, c'$. Here $P \in \mathbb{R}^{n \times (d+1)}$ is a matrix with $(1, u^{(i)})$ in its $i$-th row, $0_{(d+1) \times (d+1)} \in \mathbb{R}^{(d+1) \times (d+1)}$ is a zero matrix, $0_{d+1}$ is a vector of zeros, $F = (f(u^{(1)}), \dots, f(u^{(n)}))^T$, and $\lambda = (\lambda_1, \dots, \lambda_n)^T \in \mathbb{R}^n$. The matrix in Eq. (3) is invertible if it has full rank. For this reason it is necessary to provide independent points in the initial design. This is usually the case if $d + 1$ linearly independent points are provided.
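To illustrate Eqs. (2) and (3), the following is a minimal pure-Python sketch of fitting a cubic RBF with a linear tail. It is not the authors' R implementation: the function names are hypothetical, and a plain Gaussian elimination with partial pivoting is used here only to keep the example dependency-free.

```python
import math


def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[piv][col]) < 1e-12:
            raise ValueError("matrix is (numerically) singular")
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


def fit_cubic_rbf(points, values):
    """Build the block system of Eq. (3) and return the model s(x) of Eq. (2)."""
    n, d = len(points), len(points[0])
    size = n + d + 1
    A = [[0.0] * size for _ in range(size)]  # lower-right block stays zero
    for i, u in enumerate(points):
        for j, v in enumerate(points):
            A[i][j] = math.dist(u, v) ** 3   # Phi_ij = phi(||u_i - u_j||)
        row_p = [1.0] + list(u)              # i-th row of P: (1, u_i)
        for k in range(d + 1):
            A[i][n + k] = row_p[k]           # block P
            A[n + k][i] = row_p[k]           # block P^T
    rhs = list(values) + [0.0] * (d + 1)
    sol = solve_linear(A, rhs)
    lam, c = sol[:n], sol[n:]

    def model(x):
        rbf = sum(l * math.dist(x, u) ** 3 for l, u in zip(lam, points))
        return rbf + c[0] + sum(ck * xk for ck, xk in zip(c[1:], x))

    return model


# Usage: five 1-D design points of the linear function f(x) = 3x + 1.
pts = [[0.0], [0.5], [1.0], [1.5], [2.0]]
vals = [3.0 * p[0] + 1.0 for p in pts]
s = fit_cubic_rbf(pts, vals)
print(s([1.0]), s([3.0]))
```

Because the target is linear and the model contains a linear tail, the solution of the system has all $\lambda_i$ equal to zero (up to rounding) in this well-scaled example, so the model also extrapolates correctly.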
The matrix inversion can be done efficiently by using singular value decomposition (SVD) or similar algorithms.

Figure 1: The influence of scaling (panel title: scale artefacts). From left to right the plots show the RBF model fit for scale S = 1, 1000, 10000 (upper facet bar), with RMSE = 1.6e-12, 3.7e-02 and 8.2e+00, respectively. Axes: x and f(x); legend: true function, RBF model.

The linear polynomial $p(x)$ in Eq. (2) serves to ease the fit of simple linear functions $f()$, which otherwise have to be approximated by superimposing many RBFs in a complicated way. Polynomials of higher order may be used as well. We consider here the option of additional direct squares, where $p(x)$ in Eq. (2) is replaced by

$$
p_{sq}(x) = p(x) + e_1 x_1^2 + \dots + e_d x_d^2
\tag{4}
$$

with additional coefficients $e = (e_1, \dots, e_d)^T$. The matrix in Eq. (3) is extended in a straightforward manner from an $(n + d + 1) \times (n + d + 1)$-matrix to an $(n + 2d + 1) \times (n + 2d + 1)$-matrix.

2.3. Common pitfalls in surrogate-assisted optimization

RBF models are very fast to train, even in high dimensions. They often provide good approximation accuracy even when only few training points are given. This makes them ideally suited as surrogate models for high-dimensional optimization problems with a large number of constraints. There are, however, some pitfalls which should be avoided to achieve good modeling results for any surrogate-assisted black-box optimization.

2.3.1. Rescaling the input space

If a model is fitted with too large values in input space, a striking failure may occur. Consider the following simple example:

$$
f(x) = 3\,\frac{x}{S} + 1
\tag{5}
$$

where $x \in [0, 2S]$. If $S$ is large, the $x$-values (which enter the RBF model) will be large, although the output produced by Eq. (5) is exactly the same. Since the function $f(x)$ to be modeled is exactly linear and the RBF model contains a linear tail as well, one would expect at first sight a perfect fit (small RMSE) for each surrogate model. But, as Fig. 1 shows, this is not the case for large $S$: The fit (based on the same set of five points) is perfect for $S = 1$, weaker for $S = 1000$, and extremely bad in extrapolation for $S = 10000$.

The reason for this behavior: Large values for $x$ lead to computationally singular (ill-conditioned) coefficient matrices, because the cubic coefficients tend to be many orders of magnitude larger than the coefficients for the linear part. Either the linear equation solver will stop with an error or it produces a result which may have a large RMSE, as demonstrated in the right plot of Fig. 1. The solver sets the linear tail of the RBF model to zero in order to avoid numerical instabilities. The RBF model thus attempts to approximate the linear function with a superposition of cubic RBFs. This is bound to fail if the RBF model has to extrapolate beyond the green sample points.

Exactly this effect occurs in problem G10, where the objective function is a simple linear function $x_1 + x_2 + x_3$ and the ranges of the input dimensions are large and differ from each other, e.g. $[100, 10000]$ for $x_1$ and $[10, 1000]$ for $x_3$.

The solution to this pitfall is simple: Rescale a given problem in all its input dimensions to a small and identical range, e.g. either to $[0, 1]$ or to $[-1, 1]$ for all $x_i$.

Figure 2: The influence of large output ranges (panel title: range artefacts). Left (PLOG: FALSE, RMSE = 1228): Fitting the original function with a cubic RBF model. Right (PLOG: TRUE, RMSE = 50): Fitting the plog-transformed function with an RBF model and transforming the fit back to original space with $\mathrm{plog}^{-1}$. Axes: x and f(x); legend: true function, RBF model.

2.3.2. Logarithmic transform for large output ranges

Another pitfall is a large output range in objective or constraint functions. As an example consider the function

$$
f(x) = e^{x^2}
\tag{6}
$$

which has small values $< 10$ in the interval $[-1, 1]$ around its minimum, but quickly grows to large values above 8000 at $x = 3$. If we fit the original function with an RBF model using the green sample points shown in Fig. 2, we see in the left plot an oscillating behavior in the RBF function. This results in a large RMSE (approximation error).

The reason is that the RBF model tries to avoid large slopes. Instead the fitted model is similar to a spline function. Therefore it is a useful remedy to apply a logarithmic transform which puts the output into a smaller range and results in smaller slopes. Regis and Shoemaker [34] define the function

$$
\mathrm{plog}(y) =
\begin{cases}
+\ln(1 + y) & \text{if } y \ge 0 \\
-\ln(1 - y) & \text{if } y < 0
\end{cases}
\tag{7}
$$

which, in contrast to the plain logarithm, has no singularities and is strictly monotonic for all $y \in \mathbb{R}$. The RBF model can fit the plog-transformed function perfectly. Afterward we transform the fit with $\mathrm{plog}^{-1}$ back to the original space and the back-transform takes care of the large slopes. As a result we get a much smaller approximation error RMSE in the original space, as the right-hand side of Fig. 2 shows.

We will apply the plog-transform only to functions with steep slopes in our surrogate-assisted optimization SACOBRA. For functions with flat or constant slope (e.g. linear functions) our experiments have shown that, due to the nonlinear nature of plog, the RBF approximation for $\mathrm{plog}(f)$ is less accurate.

2.4. COBRA-R

The COBRA algorithm was developed by Regis [31] with the aim of solving constrained optimization tasks with severely limited budgets.
The main idea of COBRA is to do most of the costly optimization on surrogate models (RBF models, both for the objective function $f$ and the constraint functions $g_i$). We reimplemented this algorithm in R [30] with a few small modifications. We give a short review of this algorithm COBRA-R in the following.

COBRA-R starts by generating an initial population $P$ with $n_{init}$ points (i.e. a random initial design ³, see Fig. 3) to build the first set of surrogate models. The minimum number of points is $n_{init} = d + 1$, but usually a larger choice $n_{init} = 3d$ gives better results.

Until the budget is exhausted, the following steps are iterated on the current population $P = \{x_1, \dots, x_n\}$: The constrained optimization problem is executed by optimizing on the surrogate functions: That is, the true functions $f, g_1, \dots, g_m$ are approximated with RBF surrogate models $s_0^{(n)}, s_1^{(n)}, \dots, s_m^{(n)}$, given the $n$ points in the current population $P$. In each iteration the COBRA-R algorithm solves with any standard constrained optimizer ⁴ the constrained surrogate subproblem

$$
\begin{aligned}
\text{Minimize}\quad & s_0^{(n)}(x) \\
\text{subject to}\quad & x \in [a, b] \subset \mathbb{R}^d, \\
& s_i^{(n)}(x) + \epsilon^{(n)} \le 0, \quad i = 1, 2, \dots, m, \\
& \rho_n - \|x - x_j\| \le 0, \quad j = 1, \dots, n.
\end{aligned}
\tag{8}
$$

Compared to the original problem in Eq. (1) this subproblem uses surrogates and it contains two new elements $\epsilon^{(n)}$ and $\rho_n$ which are explained in the next subsections. Before going into these details we finish the description of the main loop:

The optimizer returns a new solution $x_{n+1}$. If $x_{n+1}$ is not feasible, a repair algorithm RI2 described in our previous work [19] tries to replace it with a feasible solution in the vicinity. ⁵ In any case, the new solution $x_{n+1}$ is evaluated on the true functions $f, g_1, \dots, g_m$. It is compared to the best feasible solution found so far and replaces it if better. The new solution $x_{n+1}$ is added to the population $P = \{x_1, \dots, x_{n+1}\}$ and the next iteration starts with incremented $n$.

³ usually a Latin hypercube sampling (LHS)
⁴ Regis [31] uses MATLAB's fmincon, an interior-point optimizer, which is not available in the R environment. In COBRA-R we use mostly Powell's COBYLA, but other constrained optimizers like ISRES are implemented in our R-package https://cran.r-project.org/web/packages/SACOBRA as well.
⁵ RI2 is only rarely invoked on the G-problem benchmark but more often in the MOPTA08 case.

Figure 3: COBRA-R flow chart. Nodes: Generate & evaluate initial design; Fit RBF surrogates of objective and constraints; Run optimization on surrogates; Run repair heuristic; Solution repaired or feasible? (Yes/No); Evaluate solution on real functions; Add solution to the population; Update the best solution; Budget exhausted? (Yes/No).

2.4.1. Distance requirement cycle

COBRA [31] applies a distance requirement factor which determines how close the next solution $x_{n+1} \in \mathbb{R}^d$ is allowed to be to all previous ones. The idea is to avoid frequent updates in the neighborhood of the current best solution. The distance requirement can be passed by the user as external parameter vector $\Xi = \langle \xi^{(1)}, \xi^{(2)}, \dots, \xi^{(\kappa)} \rangle$ with $\xi^{(i)} \in \mathbb{R}_{\ge 0}$. In each iteration $n$, COBRA selects the next element $\rho_n = \xi^{(i)}$ of $\Xi$ and adds the constraints $\|x - x_j\| \ge \rho_n$, $j = 1, \dots, n$ to the set of constraints. This measures the distance between the proposed infill solution and all $n$ previous infill points. The distance requirement cycle (DRC) is a clever idea, since small elements in $\Xi$ lead to more exploitation of the search space, while larger elements lead to more exploration. If the last element of $\Xi$ is reached, the selection starts with the first element again. The size of $\Xi$ and its single components can be chosen arbitrarily.

2.4.2. Uncertainty of constraint predictions

COBRA [31] aims at finding feasible solutions by extensive search on the surrogate functions. However, as the RBF models are probably not exact, especially in the initial phase of the search, a factor $\epsilon^{(n)}$ is used to handle wrong predictions of the constraint surrogates. Starting with $\epsilon^{(n)} = 0.005 \cdot l$, where $l$ is the diameter of the search space, a point $x$ is said to be feasible in iteration $n$ if

$$
s_i^{(n)}(x) + \epsilon^{(n)} \le 0 \quad \forall\, i = 1, \dots, m
\tag{9}
$$

holds. That is, we tighten the constraints by adding the factor $\epsilon^{(n)}$, which is adapted during the search. The $\epsilon^{(n)}$-adaptation is done by counting the feasible and infeasible infill points $C_{feas}$ and $C_{infeas}$ over the last iterations. When one of these counters reaches its threshold, $T_{feas}$ or $T_{infeas}$ respectively, we halve or double $\epsilon^{(n)}$ (doubling only up to a given maximum $\epsilon_{max}$). When $\epsilon^{(n)}$ is decreased, solutions are allowed to move closer to the real constraint boundaries (the imaginary boundary is relaxed), since the last $T_{feas}$ infill points were feasible. Otherwise, when no feasible infill point is found for $T_{infeas}$ iterations, $\epsilon^{(n)}$ is increased in order to keep the points further away from the real constraint boundary.

2.4.3. Differences COBRA vs. COBRA-R

Although COBRA and COBRA-R share many common principles, there are several differences which can lead to different results on identical problems. The main differences between COBRA [31] and COBRA-R [18] are listed as follows:

• COBRA is implemented in MATLAB while COBRA-R is implemented in R.
• Internal optimizer: COBRA uses MATLAB's fmincon, an interior-point optimizer, COBRA-R uses COBYLA. ⁶
• Skipping phase 1: COBRA has an additional phase 1 for searching the first feasible point. ⁷
• Repair algorithm: COBRA-R has an additional repair algorithm RI2 [19].
• Rescaling the input space: COBRA rescales each dimension to [0,1], COBRA-R in its initial form [18] does not. See Sec. 2.5.1 for further remarks on rescaling.

⁶ Other optimizers like ISRES and unconstrained optimizers with penalty are also available in the COBRA-R / SACOBRA package, but not used in this paper.
⁷ Phase 1 uses an objective function which rewards constraint fulfillment. We implemented this in COBRA-R as well but found it to be unnecessary for our problems. In this paper, COBRA-R always skips phase 1 and directly proceeds with phase 2 even if no feasible solution is found in the initialization phase.

2.5. SACOBRA

COBRA and COBRA-R achieve good results on most of the G-problems and on MOPTA08, as studies from Regis [31] and our previous work [19] have shown. However, it was necessary in both papers to carefully adjust the parameters of the algorithm to each problem and sometimes even to modify the problem by applying a plog-transform (Eq. (7)) to the objective function or linear transformations to the constraints or by rescaling the input space. In real black-box optimization all these adjustments would probably require either knowledge of the problem or several executions of the optimization code.

It is the main contribution of the current paper to present with SACOBRA (Self-Adjusting COBRA) an enhanced COBRA algorithm which needs no manual adjustment to the problem at hand. Instead, SACOBRA extracts information about the specific problem during its execution (either after the initialization phase or online during iterations) and internally takes appropriate measures to adjust its parameters or to transform functions.

We present in Fig. 4 the flowchart of SACOBRA, where the five new elements compared to COBRA-R are highlighted as gray boxes. The complete SACOBRA algorithm is presented in detail in Algorithms 1 – 3.

Figure 4: SACOBRA flow chart. Nodes: Rescale input space; Generate & evaluate initial design; Adjust constraint function(s); Adjust DRC; Online adjustment of fitness function; Fit RBF surrogates of objective and constraints; Select start point (RS); Run optimization on surrogates; Run repair heuristic; Solution repaired or feasible? (Yes/No); Evaluate new point on real functions; Add solution to the population; Update the best solution; Budget exhausted? (Yes/No).

Algorithm 1 SACOBRA. Input: Objective function $f$, set of constraint function(s) $g = (g_1, \dots, g_m) : [a, b] \subset \mathbb{R}^d \to \mathbb{R}$ (see Eq. (1)), initial starting point $x_{init} \in [a, b]$, maximum evaluation budget $N_{max}$. Output: The best solution $x_{best}$ found by the algorithm.

1: function SACOBRA($f$, $g$, $x_{init}$, $N_{max}$)
2:   Rescale the input space to $[-1, 1]^d$
3:   Generate a random initial population: $P = \{x_1, x_2, \dots, x_{3 \cdot d}\}$
4:   $(\widehat{FR}, \widehat{GR}_i)$ = AnalyseInitialPopulation($P$, $f$, $g$)
5:   $\tilde{g}$ ← AdjustConstraintFunctions($\widehat{GR}_i$, $g$)
6:   $\Xi$ ← AdjustDRC($\widehat{FR}$)
7:   $Q$ ← AnalysePlogEffect($f$, $P$, $x_{init}$)
8:   $x_{best}$ ← $x_{init}$
9:   while (budget not exhausted, $|P| < N_{max}$) do
10:     $n$ ← $|P|$
11:     $\tilde{f}()$ ← ( $Q > 1$ ? $\mathrm{plog}(f())$ : $f()$ )   ▷ see function plog in Eq. (7)
12:     Build surrogate models $s^{(n)} = (s_0^{(n)}, s_1^{(n)}, \dots, s_m^{(n)})$ for $(\tilde{f}, \tilde{g}_1, \dots, \tilde{g}_m)$
13:     Select $\rho_n \in \Xi$ and $\epsilon_i^{(n)}$ according to COBRA base algorithm
14:     $x_{start}$ ← RandomStart($x_{best}$, $N_{max}$)
15:     $x_{new}$ ← OptimCOBRA($x_{start}$, $s^{(n)}$)   ▷ see Eq. (8)
16:     Evaluate $x_{new}$ on the real functions $\tilde{f}$, $\tilde{g}$
17:     if ($|P|$ mod 10 == 0) then   ▷ every 10th iteration
18:       $Q$ ← AnalysePlogEffect($f$, $P$, $x_{new}$)
19:     end if
20:     $x_{new}$ ← repairRI2($x_{new}$)   ▷ see Koch et al. [19] for details on RI2 (repair algo)
21:     $(P, x_{best})$ ← updateBest($P$, $x_{new}$, $x_{best}$)
22:   end while
23:   return $x_{best}$
24: end function
25: function updateBest($P$, $x_{new}$, $x_{best}$)
26:   $P$ ← $P \cup \{x_{new}\}$
27:   if ($x_{new}$ is feasible AND $f(x_{new}) < f(x_{best})$) then
28:     return $(P, x_{new})$
29:   end if
30:   return $(P, x_{best})$
31: end function

Algorithm 2 SACOBRA adjustment functions

1: function AnalyseInitialPopulation($P$, $f$, $g$)
2:   $\widehat{FR} = \max_P f(P) - \min_P f(P)$   ▷ range of objective function
3:   $\widehat{GR}_i = \max_P g_i(P) - \min_P g_i(P) \quad \forall\, i = 1, \dots, m$
4: end function
5: function AdjustConstraintFunctions($\widehat{GR}_i$, $g$)
6:   $g_i()$ ← $g_i() \cdot \dfrac{\mathrm{avg}(\widehat{GR}_i)}{\widehat{GR}_i} \quad \forall\, i = 1, \dots, m$   ▷ see Eq. (10)
7:   return $g$
8: end function
9: function AdjustDRC($\widehat{FR}$)
10:   if $\widehat{FR} > FR_l$ then   ▷ Threshold $FR_l = 1000$
11:     $\Xi = \Xi_s = \langle 0.001, 0.0 \rangle$
12:   else
13:     $\Xi = \Xi_l = \langle 0.3, 0.05, 0.001, 0.0005, 0.0 \rangle$
14:   end if
15: end function
16: function AnalysePlogEffect($f$, $P$, $x_{new}$)   ▷ $x_{new} \notin P$
17:   $S_f$ ← surrogate model for $f()$ using all points in $P$
18:   $S_p$ ← surrogate model for $\mathrm{plog}(f())$ using all points in $P$   ▷ see function plog in Eq. (7)
19:   $E$ ← $E \cup \left\{ \dfrac{|S_f(x_{new}) - f(x_{new})|}{|\mathrm{plog}^{-1}(S_p(x_{new})) - f(x_{new})|} \right\}$   ▷ $E$, the set of approximation error ratios, is initially empty
20:   return $Q = \log_{10}(\mathrm{median}(E))$
21: end function

Algorithm 3 RandomStart (RS). Input: $x_{best}$: the ever-best feasible solution. Parameters: restart probabilities $p_1 = 0.125$, $p_2 = 0.4$. Output: New starting point $x_{start}$.

1: function RandomStart($x_{best}$)
2:   $F_{low}$ ← ($|P_{feas}| / |P| < 0.05$)   ▷ True if less than 5% of the population are feasible
3:   $p$ ← ($F_{low}$ = TRUE ? $p_2$ : $p_1$)
4:   $\epsilon$ ← a random value $\in [0, 1]$
5:   if ($\epsilon < p$) then
6:     $x_{start}$ ← a random point in search space
7:   else
8:     $x_{start}$ ← $x_{best}$
9:   end if
10:   return ($x_{start}$)
11: end function
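Some of the adjustment steps in Algorithm 2 can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not the SACOBRA R package code: all function names are hypothetical, the constraint scaling returns only the factors avg(GR)/GR_i instead of wrapped functions, and the error ratios are passed in as precomputed pairs rather than obtained from fitted surrogates. The DRC sets and the threshold of 1000 are taken from Algorithm 2.

```python
import math
from statistics import median


def plog(y):
    """Eq. (7): sign-preserving logarithm, defined for all real y."""
    return math.log1p(y) if y >= 0 else -math.log1p(-y)


def plog_inv(z):
    """Inverse of plog, used to map a surrogate prediction back."""
    return math.expm1(z) if z >= 0 else -math.expm1(-z)


XI_SMALL = [0.001, 0.0]
XI_LARGE = [0.3, 0.05, 0.001, 0.0005, 0.0]


def adjust_drc(f_values, threshold=1000.0):
    """Pick the small DRC if the estimated objective range exceeds the threshold."""
    f_range = max(f_values) - min(f_values)
    return XI_SMALL if f_range > threshold else XI_LARGE


def constraint_scale_factors(g_values):
    """g_values[i] holds the values of constraint i on the initial population.

    Returns the factors avg(GR) / GR_i (assumes every range GR_i is nonzero).
    """
    ranges = [max(v) - min(v) for v in g_values]
    avg_range = sum(ranges) / len(ranges)
    return [avg_range / r for r in ranges]


def plog_quality(error_pairs):
    """Q = log10(median(E)) with E = |error without plog| / |error with plog|.

    Assumes the plog-model errors are nonzero.
    """
    ratios = [abs(e_f) / abs(e_p) for e_f, e_p in error_pairs]
    return math.log10(median(ratios))


# Usage with made-up numbers:
print(adjust_drc([0.0, 5000.0]))                          # steep objective
print(constraint_scale_factors([[0.0, 1.0], [0.0, 3.0]]))
print(plog_quality([(10.0, 1.0), (100.0, 1.0), (1000.0, 1.0)]))
```

Using the median of the collected ratios means a single badly predicted point does not flip the decision between modeling $f$ and modeling $\mathrm{plog}(f)$.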
We describe in the following the five new elements in the order of their appearance:

2.5.1. Rescaling the input space

The input vector $x$ is element-wise rescaled to $[-1, +1]$. This is done before the initialization phase. It helps to explore the search space more evenly because all dimensions are treated the same. More importantly, it avoids numerical instabilities caused by high values of $x$, as shown in Sec. 2.3.1.

2.5.2. Adjusting constraint function(s) (aCF)

aCF is done by normalizing the range of constraint functions for each problem. The range $\widehat{GR}_i$ for the $i$-th constraint is estimated from the initial population in Algorithm 2. Normalizing each $\widehat{GR}_i$ by the average constraint range

$$\mathrm{avg}(\widehat{GR}_i) = \frac{1}{m} \sum_i \widehat{GR}_i \qquad (10)$$

helps to shift the range of all constraints as little as possible. Including this step aCF boosts the optimization performance because all constraints now operate in a similar range.

2.5.3. Adjusting DRC parameter (aDRC)

aDRC is done after the initialization phase. Our experimental analysis showed that large DRC values can be harmful for problems with a very steep objective function, because a large move in the input space yields a very large change in the output space. This may spoil the RBF model in a sense similar to Sec. 2.3.2 and consequently lead to large approximation errors. Therefore, we developed an automatic DRC adjustment which selects the appropriate DRC set according to the information extracted after the initialization phase. Function AdjustDRC in Algorithm 2 selects the 'small' DRC $\Xi_s$ if the estimated objective function range $\widehat{FR}$ is larger than a threshold, otherwise it selects the 'large' DRC $\Xi_l$.

2.5.4. Random start algorithm (RS)

Normally COBRA starts optimization from the current best point.
With RS (Algorithm 3), the optimization starts from a random point in the search space with a certain constant probability $p_1$. If the rate of feasible individuals in the population $P$ drops below 5%, then we replace $p_1$ with a larger probability $p_2$. RS is especially beneficial when the search gets stuck in local optima or in a region where no feasible point can be found.

2.5.5. Online adjustment of fitness function (aFF)

Our analysis in Sec. 2.3.2 has shown that a fitness function $f$ with steep slopes poses a problem for RBF approximation. For some problems, modeling $plog(f)$ instead of $f$ and transforming the RBF result back with $plog^{-1}$ boosts the optimization performance significantly. On the other hand, our tests have shown that the plog-transform is harmful for some other problems. Therefore, a careful decision whether to use plog or not should be made.

The idea of our online adjustment algorithm (Algorithm 2, function AnalysePlogEffect) is the following: Given the population $P$, we build RBFs for $f$ and $plog(f)$, take a new point $x_{new}$ not yet added to $P$, and calculate the ratio of approximation errors on $x_{new}$ (line 19 of Algorithm 2). We do this in every $k$-th iteration (usually $k = 10$) and collect these ratios in a set $E$. If

$$Q = \log_{10}(\mathrm{median}(E)) \qquad (11)$$

is above 0, then the RBF for $plog(f)$ is better in the majority of the cases. Otherwise, the RBF on $f$ is better (footnote 8).

Step 11 of Algorithm 1 decides on the basis of this criterion $Q$ which function $\tilde f$ is used as RBF surrogate in the optimization step. Note that the decision for $\tilde f$ taken in earlier iterations can be revoked in later iterations, if the majority of the elements in $E$ shows that now the other choice is more promising.

This completes the description of our SACOBRA algorithm. SACOBRA is available as open-source R package from CRAN (footnote 9).

2.6.
Performance Measures

In many papers on optimization the strength of an optimization technique is measured by comparing the final solution achieved by different algorithms [35]. This approach only provides information about the quality of the results and neglects the speed of convergence, which is a very important measure for expensive optimization problems. Comparing the convergence curve over time (number of function evaluations) is also one of the common benchmarking approaches [31]. Although a convergence curve provides good information about the speed of convergence and the final quality of the optimization result, it can be used to compare the performance of several algorithms only on one problem. It is often interesting to compare the overall capability of a technique on solving a group of problems. The data and performance profiles developed by Moré and Wild [25] are a good approach to analyze the performance of any optimization algorithm on a whole test suite and are now used frequently in the optimization literature [3, 33].

2.6.1. Performance Profiles

Performance profiles are defined with the help of the performance ratio

$$r_{p,s} = \frac{t_{p,s}}{\min \{ t_{p,s'} : s' \in S \}}, \quad p \in P \qquad (12)$$

where $P$ is a set of problems, $S$ is a set of solvers and $t_{p,s}$ is the number of iterations solver $s \in S$ requires to solve problem $p \in P$. A problem is said to be solved when a feasible objective value $f(x)$ is found which is not more than $\tau$ larger than the best objective $f_L$ determined by any solver in $S$:

$$f(x) - f_L \le \tau \qquad (13)$$

We use $\tau = 0.05$ for all our experiments below. Smaller values are more desirable for the performance ratio $r_{p,s}$. When using the best solver $s$ to solve problem $p$, then $r_{p,s} = 1$. If a solver $s$ cannot solve problem $p$, the performance ratio is set to infinity. The performance profile $\rho_s$ is now defined as a function of the steerable performance factor $\alpha$:

$$\rho_s(\alpha) = \frac{1}{|P|} \left| \{ p \in P : r_{p,s} \le \alpha \} \right| . \qquad (14)$$

Footnote 8: Our experimental analysis on the G-problem test suite will show (Sec. 3.4) that a threshold 1 is slightly more robust than 0. We use this threshold 1 in step 11 of Algorithm 1, but the difference to threshold 0 is only marginal.

Footnote 9: https://cran.r-project.org/web/packages/SACOBRA

2.6.2. Data Profiles

Data profiles are appropriate for evaluating optimization algorithms on expensive problems. They are defined as

$$d_s(\alpha) = \frac{1}{|P|} \left| \left\{ p \in P : \frac{t_{p,s}}{d_p + 1} \le \alpha \right\} \right| , \qquad (15)$$

with $P$, $S$ and $t_{p,s}$ defined as above and $d_p$ as the dimension of problem $p$.

We prefer data profiles over performance profiles, because the performance factor $\alpha$ has a more intuitive meaning for data profiles: If we allow for each problem with dimension $d_p$ a budget of $B_\alpha = \alpha (d_p + 1)$ function evaluations, then the value $d_s(\alpha)$ can be interpreted as the fraction of problems which solver $s$ can solve within this budget $B_\alpha$.

Table 1: Characteristics of the G-functions: $d$: dimension, type of fitness function, $\rho^*$: feasibility rate (%) after changing equality constraints to inequality constraints, FR: range of the fitness values, GR: ratio of largest to smallest constraint range, LI: number of linear inequalities, NI: number of nonlinear inequalities, NE: number of nonlinear equalities, $a$: number of constraints active at the optimum.

| Fct. | d | type | ρ* | FR | GR | LI | NI | NE | a |
|---|---|---|---|---|---|---|---|---|---|
| G01 | 13 | quadratic | 0.0003% | 298.14 | 1.969 | 9 | 0 | 0 | 6 |
| G02 | 10 | nonlinear | 99.997% | 0.57 | 2.632 | 1 | 1 | 0 | 1 |
| G03 | 20 | nonlinear | 0.0000% | 92684985979.23 | 1.000 | 0 | 0 | 1 | 1 |
| G04 | 5 | quadratic | 26.9217% | 9832.45 | 2.161 | 0 | 6 | 0 | 2 |
| G05 | 4 | nonlinear | 0.0919% | 8863.69 | 1788.74 | 2 | 0 | 3 | 3 |
| G06 | 2 | nonlinear | 0.0072% | 1246828.23 | 1.010 | 0 | 2 | 0 | 2 |
| G07 | 10 | quadratic | 0.0000% | 5928.19 | 12.671 | 3 | 5 | 0 | 6 |
| G08 | 2 | nonlinear | 0.8751% | 1821.61 | 2.393 | 0 | 2 | 0 | 0 |
| G09 | 7 | nonlinear | 0.5207% | 10013016.18 | 25.05 | 0 | 4 | 0 | 2 |
| G10 | 8 | linear | 0.0008% | 27610.89 | 3842702 | 3 | 3 | 0 | 3 |
| G11 | 2 | linear | 66.7240% | 4.99 | 1.000 | 0 | 0 | 1 | 1 |

3.
Experiments

3.1. Experimental Setup

We evaluate SACOBRA by using a well-studied test suite of G-problems described in [14, 22]. The diversity of the G-problem characteristics makes them a very challenging benchmark for optimization techniques. In Table 1 we show features of these problems. The features $\rho^*$, FR and GR (defined in Table 1) are measured by Monte Carlo sampling with $10^6$ points in the search space of each G-problem.

Equality constraints are treated by replacing each equality operator with an inequality operator of the appropriate direction. This approach (same as in Regis' work [31]) takes as "appropriate direction" the side of the equality hyperplane where the objective function increases.

Table 2: The default parameter setting used for COBRA. $l$ is the length of the smallest side of the search space (after rescaling, if rescaling is done). The settings for $T_{feas}$, $T_{infeas}$ proportional to $\sqrt{d}$ ($d$: dimension of problem) are taken from [31].

| parameter | value for COBRA-R | value for SACOBRA |
|---|---|---|
| $\epsilon_{init}$ | $0.005 \cdot l$ | $0.005 \cdot l$ |
| $\epsilon_{max}$ | $0.01 \cdot l$ | $0.01 \cdot l$ |
| $T_{feas}$ | $\lfloor 2\sqrt{d} \rfloor$ | $\lfloor 2\sqrt{d} \rfloor$ |
| $T_{infeas}$ | $\lfloor 2\sqrt{d} \rfloor$ | $\lfloor 2\sqrt{d} \rfloor$ |
| $\Xi$ | {0.3, 0.05, 0.001, 0.0005, 0.0} | Adaptive |
| plog(.) | Never | Adaptive |
| aCF | Never | Always |
| RS | Never | Adaptive |

The MOPTA08 benchmark by Jones [17] is a substitute for a high-dimensional real-world problem encountered in the automotive industry: It is a problem with $d = 124$ dimensions and with 68 constraints. The problem should be solved within $1860 = 15 \cdot d$ function evaluations. This corresponds to one month of computation time on a high-performance computer for the real automotive problem, since the real problem requires time-consuming crash-test simulations.

The COBRA-R optimization framework allows the user to choose between several initialization approaches: Latin hypercube sampling (LHS), Biased and Optimized [18].
While LHS initialization is always possible (and is in fact used for all runs of the G-problem benchmark with $n_{init} = 3d$), the other algorithms are only possible if a feasible starting point is provided. In COBRA [31] the initialization is always done randomly by means of Latin hypercube sampling for functions without feasible starting point. In the case of MOPTA08 a feasible point is known. We use the Optimized initialization approach, where an initial optimization run is started from this feasible point with the Hooke & Jeeves pattern search algorithm [15]. This initial run provides a set of $n_{init} = 500$ points in the vicinity of the feasible point. This set serves as initial design for MOPTA08.

Table 2 shows the parameter settings used for COBRA-R and SACOBRA. All G-problems were optimized with exactly the same initial parameter settings. In contrast to that, the COBRA results in Regis [31] and our previous work [18] were obtained by manually activating plog for some G-problems and by manually adjusting constraint factors and other parameters.

3.2. Convergence Curves

Figures 5-7 show the SACOBRA convergence plots for all G-problems. It is clearly visible that all problems except G02 are solved in the majority of runs, if we define solved as a target error below $\tau = 0.05$ in comparison to the true optimum. In some cases (G03, G05, G09, G10) the worst error does not meet the target, but in the other cases it does. In most cases, as indicated by the red squares, there is a clear improvement over Regis' COBRA results [31].
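The "solved" criterion used here and the data profile of Eq. (15) can be sketched in a few lines. This is a minimal Python illustration under stated assumptions, not the evaluation code used for the paper: the function names and the convention of passing `math.inf` for an unsolved problem are choices made for this example.

```python
import math


def is_solved(f_value, f_best, feasible, tau=0.05):
    """Eq. (13): a feasible value counts as solved if it is at most tau
    above the best known objective value f_best."""
    return feasible and (f_value - f_best) <= tau


def data_profile(evals_to_solve, dims, alpha):
    """Eq. (15): fraction of problems solved within alpha * (d_p + 1)
    function evaluations.

    evals_to_solve[p]: evaluations t_{p,s} the solver needed on problem p,
                       math.inf if the problem was never solved.
    dims[p]:           dimension d_p of problem p.
    """
    hits = sum(
        1 for t, d in zip(evals_to_solve, dims) if t / (d + 1) <= alpha
    )
    return hits / len(dims)


# Three hypothetical problems: solved after 30 and 100 evaluations, one unsolved.
evals = [30, math.inf, 100]
dims = [2, 10, 4]
profile = [data_profile(evals, dims, a) for a in (10, 20, 1000)]
```

Because an unsolved problem has an infinite evaluation count, the profile saturates below 1 no matter how large the budget factor becomes, which is how a curve such as the one for G02 never reaching the target shows up in the plots.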
[Figure 5 plots omitted. Panels: G01 problem (d=13, m=9), G03 problem (d=20, m=1), G04 problem (d=5, m=6), G05 problem (d=4, m=5). x-axis: function evaluations; y-axis: f(x)−f(x*), logarithmic.]

Figure 5: SACOBRA optimization process for G01-G05. The gray curve shows the median of the error for 30 independent trials. The error is calculated with respect to the true minimum $f(x^*)$. The gray shade around the median shows the worst and the best error. The error bars mark the 25% and 75% quartile. The red square is the result reported by Regis [31] after 100 iterations.

[Figure 6 plots omitted. Panels: G06 problem (d=2, m=2), G07 problem (d=10, m=8), G08 problem (d=2, m=2), G09 problem (d=7, m=4), G10 problem (d=8, m=6), G11 problem (d=2, m=1). x-axis: function evaluations; y-axis: f(x)−f(x*), logarithmic.]

Figure 6: Same as Fig. 5 for G06-G11.
[Figure 7 plots omitted. Panels: G02-10d problem (d=10, m=2), G02-20d problem (d=20, m=2). x-axis: function evaluations; y-axis: f(x)−f(x*).]

Figure 7: Same as Fig. 5 for G02 in 10 and 20 dimensions.

3.3. Performance Profiles

Our main result is shown in Fig. 8. It shows the data profiles for different SACOBRA variants in comparison with the data profile for COBRA-R. COBRA-R was run with a fixed parameter set (footnote 10). We note in passing that other fixed parameter settings for COBRA-R were tested. They were perhaps better on some of the runs but inevitably worse on other runs, so that in the end a similar or slightly worse data profile for COBRA-R would emerge.

SACOBRA significantly increases the success rate on the G-problem benchmark suite. In addition, we analyze in Fig. 8 the effect of the five elements of SACOBRA: The "\"-data profiles present the SACOBRA results when one specific of the five SACOBRA elements is switched off. We see that the strongest effects occur when rescale is switched off (early iterations) or when aFF is switched off (later iterations).

Fig. 9 shows that each of these elements has its relevance for some of the G-problems: The full SACOBRA method is compared with other SACOBRA or COBRA variants $M^*$ on 30 runs. SACOBRA is significantly better than each $M^*$ at least for some G-problems (each column has a dark cell). And each G-problem benefits from one or more SACOBRA extensions (each row has a dark cell). The only exception from this rule is G11, but for a simple reason: G11 is an easy problem which is solved by all SACOBRA variants in each run, so none is significantly better than the others.

3.4.
Fitness Function Adjustment

By comparing the convergence curves of G-functions we realized that applying the logarithmic transform is strictly harmful for three of the G-functions, significantly beneficial for two other problems, and with negligible effect on the other problems. Therefore, a careful selection should be done. Although we demonstrated in Sec. 2.3.2 that steep functions can be better modeled after the logarithmic transformation, it is not trivial to define a correct threshold to classify steep functions. Also, there is no direct relation between steepness of the function and the effect of logarithmic transformation on optimization.

Footnote 10: In our previous work [18, 19] we reported good results with COBRA-R, but this was with varying parameters and with tedious parameter tuning on each specific G-problem.

[Figure 8 plot omitted. Title: G-problems, τ = 0.05. x-axis: performance factor α (10 to 50); y-axis: data profile (0.4 to 0.9). Legend: SACOBRA, SACOBRA\aDRC, SACOBRA\aCF, SACOBRA\rescale, SACOBRA\RS, SACOBRA\aFF, COBRA-R(rescale), COBRA-R(no rescale).]

Figure 8: Analyzing the impact of different elements of SACOBRA on the G-problems. Data profile of SACOBRA, SACOBRA\rescale (SACOBRA without rescaling the input space), and other "\"-algorithms with a similar meaning. COBRA-R is the old version of COBRA [18], i.e. SACOBRA with all adjustment extensions switched off. These algorithms are run on 330 different problems (11 test problems from the G-function suite, each initialized with 30 different initial designs).

We defined in Sec. 2.5.5 and Algorithm 2, function AnalysePlogEffect, a measure called $Q$ in order to quantify online whether RBF models with and without plog transformation are better or worse. Here we test by experiments whether the $Q$-value does a good job. Fig. 10 shows the $Q$-value for all G-problems.
The G-problems are ranked on the horizontal axis according to the impact of logarithmic transformation of the fitness function on the optimization outcome. This means that applying the plog-transformation has the worst effect for modeling the fitness of G01 and the best effect for G03.

We measure the impact on optimization in the following way: For each G-problem we perform 30 runs with plog inactive and with plog active. We calculate the median of the final optimization error in both cases and take the ratio

$$R = \frac{\mathrm{median}(E_{opt})}{\mathrm{median}(E_{opt}^{(plog)})} . \qquad (16)$$

[Figure 9 plot omitted. Rows: G01 to G11; columns: M1 to M7; cell color by p-value: 0.5 < p ≤ 1, 0.05 < p ≤ 0.5, p ≤ 0.05.]

Figure 9: Wilcoxon rank sum test, paired, one sided, significance level 5%. Shown is the p-value for the hypothesis that for a specific G-problem the full SACOBRA method at the final iteration is better than another solver $M^*$. Significant improvements ($p \le 5\%$) are marked as cells with dark blue color. Optimization methods: M1: SACOBRA\rescale (SACOBRA without rescaling the input space), M2: SACOBRA\RS (SACOBRA without random start), M3: SACOBRA\aDRC, M4: SACOBRA\aFF, M5: SACOBRA\aCF, M6: COBRA ($\Xi = \Xi_s$), M7: COBRA ($\Xi = \Xi_l$).

Note that $R$ is usually not available in normal optimization mode. If $R$ is {close to zero, close to 1, much larger than 1}, then the effect of plog on optimization performance is {harmful, neutral, beneficial}. It is a striking feature of Fig. 10 that the $Q$-ranks are very similar to the $R$-ranks (footnote 11). This means that the beneficial or harmful effect of plog is strongly correlated with the RBF approximation error.

Our experiments have shown that for all problems with $Q \in [-1, 1]$ the optimization performance is only weakly influenced by the logarithmic transformation of the fitness function. Therefore, in the fitness function adjustment (step 11 of Algorithm 1), any threshold in $[-1, 1]$ will work.
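The online measure $Q$ of Eq. (11) and the resulting plog decision can be illustrated with a short Python sketch. Two things are assumptions here and are not taken from this section: the concrete form of plog (the sign-symmetric logarithm $\pm\ln(1 + |y|)$, which is the usual definition; Eq. (7) itself is not reproduced above) and all function names. The sketch also assumes the plog-based model is never exactly right, so that the error ratio is finite.

```python
import math
from statistics import median


def plog(y):
    """Assumed form of the plog transform: sign(y) * ln(1 + |y|)."""
    return math.log(1.0 + y) if y >= 0 else -math.log(1.0 - y)


def plog_inv(z):
    """Inverse of plog, used to map surrogate output back to f-space."""
    return math.exp(z) - 1.0 if z >= 0 else 1.0 - math.exp(-z)


def error_ratio(sf_pred, sp_pred, f_true):
    """Ratio collected in the set E (Algorithm 2, line 19).

    sf_pred: prediction of the surrogate built on f
    sp_pred: prediction of the surrogate built on plog(f)
    f_true:  real objective value at the new point
    """
    return abs(sf_pred - f_true) / abs(plog_inv(sp_pred) - f_true)


def q_value(error_ratios):
    """Eq. (11): Q = log10(median(E))."""
    return math.log10(median(error_ratios))


def use_plog(error_ratios, threshold=1.0):
    """Model plog(f) instead of f only if Q exceeds the threshold."""
    return q_value(error_ratios) > threshold
```

A ratio above 1 means the plain surrogate was further off than the plog-based one at that point; taking the median over all collected ratios makes the decision robust against a single badly predicted point.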
We choose the threshold 1, because it has the largest margin to the colored bars in Fig. 10.

The G-problems for which plog is beneficial are G03 and G09: These are according to Table 1 the two problems with the largest fitness function range FR, thus strengthening our hypothesis from Sec. 2.3.2: For such functions a plog-transform should be used to get good RBF models. The G-problems for which plog is harmful are G01, G07, and G10: Looking at the analytical form of the objective function in those problems (footnote 12) we can see that these are the only three functions being of quadratic type (Table 1) and having no mixed quadratic terms. Those functions can be fitted perfectly by the polynomial tail (Eq. (4)) in SACOBRA, if plog is inactive. With plog they become nonlinear and a more complicated approximation by the radial basis functions is needed. This results in a larger approximation error.

Footnote 11: The only notable difference, namely the switch in the order of G07 and G10, can be seen as an imperfection of measure $R$. Although G10 has rank 3 in $R$, it has weaker worst-case behavior than G07 because two G10 runs never produce a feasible solution if plog is active.

[Figure 10 plot omitted. x-axis: G01, G07, G10, G04, G05, G08, G06, G11, G09, G03; y-axis: Q (−6 to 6); bar groups: harmful, neutral, beneficial.]

Figure 10: $Q$-value (Eq. (11)) at end of optimization for all G-problems. The G-problems are ordered along the x-axis according to the $R$-value defined in Eq. (16), which measures the impact of plog on the optimization performance. Any threshold for $Q$ in $[-1, 1]$ will clearly separate the harmful from the beneficial problems. This figure shows that the online available $Q$ is a good predictor of the impact of plog on the overall optimization performance.

3.5. Comparison with other optimizers

Table 3 shows the comparison with different state-of-the-art optimizers on the G-problem suite.
While ISRES (Improved Stochastic Ranking [36]) and DE (Differential Evolution [2]) are the best optimizers in terms of solution quality, they require the highest number of function evaluations as well. SACOBRA has on most G-problems (except G02) the same solution quality, only G09 and G10 are very slightly worse. At the same time SACOBRA requires only a small fraction of function evaluations (fe): roughly 1/1000 as compared to ISRES and RGA and 1/300 as compared to DE (row average fe in Table 3).

G02 is marked in red cell color in Table 3 because it is not solved to the same level of accuracy by most of the optimizers. ISRES and RGA (Repair GA [5]) get close, but only after more than 300 000 fe. DE performs even better on G02, but requires more than 200 000 fe as well. SACOBRA and COBRA cannot solve G02.

Footnote 12: The analytical form is available in the appendices of [35] or [36].

Table 3: Different optimizers: median (m) of best feasible results and (fe) average number of function evaluations. Results from 30 independent runs with different random number seeds. Numbers in boldface (blue): distance to the optimum ≤ 0.001. Numbers in italic (red): reportedly better than the true optimum. COBYLA sometimes returns slightly infeasible solutions (number of infeasible runs in brackets).

| Fct. | Optimum | | SACOBRA [this work] | COBRA [31] | ISRES [36, 35] | RGA 10% [5] | COBYLA [28] (infeas) | DE [2, 41] |
|---|---|---|---|---|---|---|---|---|
| G01 | -15.0 | m | -15.0 | NA | -15.0 | -15.0 | -13.83 | -15.0 |
| | | fe | 100 | NA | 350000 | 95512 | 12743 | 59129 |
| G02 | -0.8036 | m | -0.3466 | NA | -0.7931 | -0.7857 | -0.197 (5) | -0.8036 |
| | | fe | 400 | NA | 349600 | 331972 | 97391 | 226994 |
| G03 | -1.0 | m | -1.0 | -0.09 | -1.001 | -0.9999 | -1.0 (3) | -0.9999 |
| | | fe | 300 | 100 | 349200 | 399804 | 31069 | 211966 |
| G04 | -30665.539 | m | -30665.539 | -30665.15 | -30665.539 | -30665.539 | -30665.539 | -30665.539 |
| | | fe | 200 | 100 | 192000 | 26981 | 418 | 33963 |
| G05 | 5126.497 | m | 5126.498 | 5126.51 | 5126.497 | 5126.498 | 5126.498 (7) | 5126.498 |
| | | fe | 200 | 100 | 195600 | 39459 | 194 | 13375 |
| G06 | -6961.81 | m | -6961.81 | -6834.48 | -6961.81 | -6961.81 | -6961.81 (3) | -6961.81 |
| | | fe | 100 | 100 | 168800 | 13577 | 134 | 2857 |
| G07 | 24.306 | m | 24.306 | 25.32 | 24.306 | 24.471 | 24.306 (6) | 24.306 |
| | | fe | 200 | 100 | 350000 | 428314 | 13072 | 94313 |
| G08 | -0.0958 | m | -0.0958 | -0.1 | -0.0958 | -0.0958 | -0.0282 | -0.0958 |
| | | fe | 200 | 100 | 160000 | 6217 | 553 | 990 |
| G09 | 680.630 | m | 680.761 | 3953.97 | 680.630 | 680.638 | 680.630 (2) | 680.630 |
| | | fe | 300 | 100 | 271200 | 388453 | 8973 | 34836 |
| G10 | 7049.248 | m | 7049.253 | 18031.74 | 7049.248 | 7049.566 | 7064.8 (22) | 7049.248 |
| | | fe | 300 | 100 | 348800 | 572629 | 270840 | 74875 |
| G11 | 0.75 | m | 0.75 | NA | 0.75 | 0.75 | 0.75 | 0.75 |
| | | fe | 100 | NA | 137200 | 7215 | 11788 | 2190 |
| average fe | | | 218 | 100 | 261127 | 210012 | 40652 | 68681 |
| total fe | | | 2400 | 800 | 2872400 | 2310133 | 447175 | 755488 |

[Figure 11 plots omitted. Two panels, both titled G-problems, τ = 0.05. x-axis: performance factor α (left: 0 to 50, right: 0 to 1000); y-axis: data profile. Legend: SACOBRA, COBRA-R, COBYLA, DE.]

Figure 11: Comparing the performance of the algorithms SACOBRA, COBRA-R (with rescale), Differential Evolution (DE), and COBYLA on optimizing all G-problems G01-G11 (30 runs with different initial random populations).

The results in columns SACOBRA, DE and COBYLA are from our own calculation in R, while the results in columns COBRA, ISRES and RGA were taken from the papers cited.
In two cases (red italic numbers in Table 3) the reported solution is better than the true optimum, possibly due to a slight infeasibility. This is explicitly stated in the case of ISRES [35, p. 288], because the equality constraint $h(x) = 0$ of G03 is transformed into an approximate inequality $|h(x)| \le \epsilon$ with $\epsilon = 0.0001$.

COBRA [31] comes close to SACOBRA in terms of efficiency (function evaluations), but it has to be noted that [31] does not present results for all G-problems (G01 and G11 are missing and G02 results are for 10 dimensions, but the commonly studied version of G02 has 20 dimensions). Furthermore, for many G-problems (G03, G06, G07, G09, G10) a manual transformation of the original fitness function or the constraint functions was done in [31] prior to optimization. SACOBRA starts without such transformations and proposes instead self-adjusting mechanisms to find suitable transformations (after the initialization phase or online).

COBYLA often produces slightly infeasible solutions; the numbers of such runs are given in brackets. If such infeasible runs occur, the median was only taken over the remaining feasible runs, which is in principle too optimistic in favor of COBYLA.

Fig. 11 shows the comparison of SACOBRA and COBRA-R with other well-known constraint optimization solvers available in R, namely DE (footnote 13) and COBYLA (footnote 14). The right plot in Fig. 11 shows that DE achieves good results after many function evaluations, in accordance with Table 3. But the left plot in Fig. 11 shows that DE is not really competitive if very tight bounds on the budget are set.

Table 4 shows that SACOBRA greatly reduces the number of infeasible runs as compared to COBRA-R.
Most of the SACOBRA variants have less than 2% infeasible runs whereas COBRA-R has 7-11%. The full SACOBRA method has no infeasible runs at all.

Footnote 13: R package DEoptimR, available from https://cran.r-project.org/web/packages/DEoptimR

Footnote 14: R package nloptr, available from https://cran.r-project.org/web/packages/nloptr

Table 4: Number of infeasible runs among 330 runs returned by each method on the G-problem benchmark. A run is infeasible if the final best solution is infeasible.

| method | infeasible runs | functions |
|---|---|---|
| SACOBRA | 0 | - |
| SACOBRA\rescale | 4 | G05 |
| SACOBRA\RS | 13 | G03, G05, G07, G09, G10 |
| SACOBRA\aDRC | 0 | - |
| SACOBRA\aFF | 1 | G10 |
| SACOBRA\aCF | 0 | - |
| COBRA-R(no rescale) | 37 | G03, G05, G07, G09, G10 |
| COBRA-R(rescale) | 23 | G05, G07, G09, G10 |
| COBYLA | 48 | G02, G03, G05, G06, G07, G09, G10 |
| DE | 0 | - |

Table 5: Comparing different algorithms on optimizing MOPTA08 after 1000 function evaluations.

| Algorithm | best | median | mean | worst |
|---|---|---|---|---|
| COBRA-R [19] | 226.3 | 227.0 | 227.3 | 229.5 |
| TRB [33] | 225.5 | 226.2 | 226.4 | 227.4 |
| SACOBRA\RS | 222.4 | 223.1 | 223.6 | 224.8 |
| SACOBRA | 223.0 | 223.3 | 223.3 | 223.8 |

3.6. MOPTA08

Fig. 12 shows that we get good results with SACOBRA on the high-dimensional MOPTA08 problem ($d = 124$) as well. A problem is said to be solved in the data profile of Fig. 12 if it is not more than $\tau = 0.4$ away from the best value obtained in all runs by all algorithms.

Table 5 shows the results after 1000 iterations for Regis' recent trust-region based approach TRB [33] and our algorithms. We can improve the already good mean best feasible results of 227.3 and 226.4 obtained with COBRA-R [19] and TRB [33], respectively, to 223.3 with SACOBRA. The reason that SACOBRA\RS is slightly better than COBRA-R [19] is that SACOBRA uses an improved DRC.

4. Discussion

4.1. SACOBRA and surrogate modeling

SACOBRA is an algorithm capable of self-adjusting its parameters to a wide-ranging set of problems in constraint optimization.
We analyzed the different elements of SACOBRA and their importance for efficient optimization on the G-problem benchmark. It turned out that the two most important elements are rescaling (especially in the early phase of optimization) and automatic fitness function adjustment (aFF, especially in the later phase of optimization). Exclusion of either one of these two elements led to the largest performance drop in Fig. 8 compared to the full SACOBRA algorithm.

[Figure 12 plot omitted. Title: MOPTA08, τ = 0.4. x-axis: performance factor α (5 to 20); y-axis: data profile (0.1 to 1.0). Legend: SACOBRA, SACOBRA\RS, COBRA-R.]

Figure 12: Data profile for MOPTA08: Same as Fig. 8 but with 10 runs on MOPTA08 with different initial designs. The curves for SACOBRA without rescale, aDRC, aFF, or aCF are identical to full SACOBRA, since in the case of MOPTA08 the objective function and the constraints are already normalized.

We may step back for a moment and ask why these two elements are important. Both of them are directly related to accurate RBF modeling, as our analysis in Sec. 2.3.1 has shown. If we do not rescale, then the RBF model for a problem like G10 will have large approximation errors due to numeric instabilities. If we do not perform the plog-transformation in problems like G03 with a very large fitness range FR (Table 1) and thus very steep regions, then such problems cannot be solved. This can be attributed to large RBF approximation errors as well.

We found that the quality of the surrogate models depends on the correct choice of the DRC parameter, which controls the step size in each iteration. It is more desirable to choose a set of smaller step sizes for functions with steep slopes. An automatic adjustment step in SACOBRA can identify steep functions after a few function evaluations and decide whether to use a large DRC or a small one.
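The DRC decision described in the last paragraph is simple enough to state in a few lines. The following Python sketch mirrors function AdjustDRC of Algorithm 2, with the two DRC sets and the threshold of 1000 taken from that listing; the function name and the choice of passing the raw objective values of the initial design are assumptions made for this illustration.

```python
# DRC sets and threshold as given in Algorithm 2 (AdjustDRC).
XI_SMALL = (0.001, 0.0)
XI_LARGE = (0.3, 0.05, 0.001, 0.0005, 0.0)


def adjust_drc(initial_objective_values, fr_threshold=1000.0):
    """Choose the distance requirement cycle from the initial design.

    The objective range FR is estimated as max - min over the objective
    values of the initial population. A range above the threshold is taken
    as a sign of a steep objective, for which only small steps are used.
    """
    fr = max(initial_objective_values) - min(initial_objective_values)
    return XI_SMALL if fr > fr_threshold else XI_LARGE
```

Note that the range estimate depends on the initial design, so two runs with different initial populations could in principle end up with different DRC sets for a problem whose range lies near the threshold.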
For the G-problem suite the constraint functions vary in number, type and range. Our experiments showed that handling all constraints can be challenging, especially when the constraint functions have widely different ranges. For that reason, we introduced an automatic adjustment approach to normalize all the constraints by using the information gained about the constraints after the evaluation of the initial population.

The SACOBRA algorithm also benefits from using a random start mechanism to avoid getting stuck in a local optimum of the fitness surrogate.

4.2. Limitations of SACOBRA

4.2.1. Highly multimodal functions

Surrogate models like RBF are a powerful tool for efficient optimization and probably the only way to solve constrained optimization problems in less than 500 iterations. But highly multimodal functions are a current limit of surrogate modeling. G02 is such a function; it has a large number of local minima. Those functions usually have large first and higher order derivatives. If a surrogate model interpolates isolated points of such a function, it tends to overshoot in other parts of the function. To the best of our knowledge, highly multimodal problems cannot be solved so far by surrogate models, at least not for higher dimensions with high accuracy. This is also true for SACOBRA. Usually the RBF model has a good approximation only in the region of one of the local minima and a bad approximation in the rest of the search space. Further research on highly multimodal function approximation is required to solve this problem.

4.2.2. Equality constraints

The current approach in COBRA (and in SACOBRA as well) can only handle inequality constraints. The reason is that equality constraints do not work together with the uncertainty mechanism of Sec. 2.4. A reformulation of an equality constraint $h(x) = 0$ as inequality $|h(x)| \le 0.0001$ as in [35] is not well-suited for COBRA and for RBF modeling. We used in this work the same approach as Regis [31] and replaced each equality operator with an inequality operator of the appropriate direction. It has to be noted, however, that such an approach contradicts a true black-box handling of constraints and that, although it is viable for the problems G01-G11, it is not viable for more complicated objective functions having their minima on both sides of equality constraints. In a forthcoming paper [1] we will address this problem separately.

5. Conclusion

We summarize our discussion by stating that a good understanding of the capabilities and limitations of RBF surrogate models, which is not often undertaken in the surrogate literature we are aware of, is an important prerequisite for efficient and effective constrained optimization. The analysis of the errors and problems occurring initially for some G-problems in the COBRA algorithm has given us a better understanding of RBF models and led to the development of the enhancing elements in SACOBRA.

By studying a widely varying set of problems we observed certain challenges when modeling very steep or relatively flat functions with RBF. This can result in large approximation errors. SACOBRA tackles this problem by making use of a conditional plog-transform for the objective function. We proposed a new online mechanism to let SACOBRA decide automatically when to use plog and when not.

Numerical issues in training RBF models can also occur in the case of a very large input space. A simple solution to this problem is to rescale the input space. Although many other optimizers recommend rescaling the input, this work has shown the reason behind it and has demonstrated its importance empirically.
Therefore, we can answer our first research question (H1) positively: Numerical instabilities can occur in RBF modeling, but it is possible to avoid them with the proper function transformations and search space adjustments.

SACOBRA benefits from all its extension elements introduced in Sec. 2.5. Each element boosts the optimization performance on a subset of all problems without harming the optimization process on the other ones. As a result, the overall optimization performance on the whole set of problems is improved by 50% as compared to COBRA (with a fixed parameter set). About 90% of the tested problems can be solved efficiently by SACOBRA (Fig. 8). The answer to (H2) is: SACOBRA is capable of coping with many diverse challenges in constraint optimization. It is the main contribution of this paper to propose with SACOBRA the first surrogate-assisted constrained optimizer which efficiently solves the G-problem benchmark and requires no parameter tuning or manual function transformations.

Finally, let us provide a result to (H3): SACOBRA requires less than 500 function evaluations to solve 10 out of 11 G-problems (exception: G02) with similar accuracy as other state-of-the-art algorithms. Those other algorithms often need between 300 and 1000 times more function evaluations.

Our future research will be devoted to overcoming the current limitations of SACOBRA mentioned in Sec. 4.2. These are: (a) highly multimodal functions like G02 and (b) equality constraints.

Acknowledgements

This work has been supported by the Bundesministerium für Wirtschaft (BMWi) under the ZIM grant MONREP (AiF FKZ KF3145102, Zentrales Innovationsprogramm Mittelstand).

References

[1] S. Bagheri, T. Bäck, and W. Konen. Equality constraint handling for surrogate-assisted constrained optimization. In WCCI'2016, Vancouver, Canada, in preparation, 2016.
[2] J. Brest, S. Greiner, B. Bošković, M. Mernik, and V.
Žumer. Self-adapting control parameters in differential evolution: a comparative study on numerical benchmark problems. Evolutionary Computation, IEEE Transactions on, 10(6):646–657, 2006.
[3] D. Brockhoff, T.-D. Tran, and N. Hansen. Benchmarking numerical multiobjective optimizers revisited. In Genetic and Evolutionary Computation Conference (GECCO 2015), Madrid, Spain, July 2015.
[4] M. D. Buhmann. Radial Basis Functions: Theory and Implementations. Cambridge University Press, 2003.
[5] P. Chootinan and A. Chen. Constraint handling in genetic algorithms using a gradient-based repair method. Computers & Operations Research, 33(8):2263–2281, 2006.
[6] C. A. Coello Coello. Use of a self-adaptive penalty approach for engineering optimization problems. Computers in Industry, 41(2):113–127, 2000.
[7] C. A. Coello Coello. Constraint-handling techniques used with evolutionary algorithms. In Proc. 14th International Conference on Genetic and Evolutionary Computation Conference (GECCO), pages 849–872. ACM, 2012.
[8] C. A. Coello Coello and E. M. Montes. Constraint-handling in genetic algorithms through the use of dominance-based tournament selection. Advanced Engineering Informatics, 16(3):193–203, 2002.
[9] K. Deb. An efficient constraint handling method for genetic algorithms. Computer methods in applied mechanics and engineering, 186(2):311–338, 2000.
[10] A. E. Eiben, R. Hinterding, and Z. Michalewicz. Parameter control in evolutionary algorithms. Evolutionary Computation, IEEE Transactions on, 3(2):124–141, 1999.
[11] A. E. Eiben and J. E. Smith. Introduction to evolutionary computing. Springer, 2003.
[12] M. Emmerich, K. C. Giannakoglou, and B. Naujoks. Single- and multiobjective evolutionary optimization assisted by gaussian random field metamodels. Evolutionary Computation, IEEE Transactions on, 10(4):421–439, 2006.
[13] R. Farmani and J. Wright.
Self-adaptive fitness formulation for constrained optimization. Evolutionary Computation, IEEE Transactions on, 7(5):445–455, 2003.
[14] C. A. Floudas and P. M. Pardalos. A Collection of Test Problems for Constrained Global Optimization Algorithms. Springer-Verlag New York, Inc., New York, NY, USA, 1990.
[15] R. Hooke and T. Jeeves. Direct search solution of numerical and statistical problems. Journal of the ACM (JACM), 8(2):212–229, 1961.
[16] L. Jiao, L. Li, R. Shang, F. Liu, and R. Stolkin. A novel selection evolutionary strategy for constrained optimization. Information Sciences, 239:122–141, 2013.
[17] D. R. Jones. Large-scale multi-disciplinary mass optimization in the auto industry. In Conference on Modeling And Optimization: Theory And Applications (MOPTA), Ontario, Canada, pages 1–58, 2008.
[18] P. Koch, S. Bagheri, W. Konen, C. Foussette, P. Krause, and T. Bäck. Constrained optimization with a limited number of function evaluations. In F. Hoffmann and E. Hüllermeier, editors, Proc. 24. Workshop Computational Intelligence, pages 119–134. Universitätsverlag Karlsruhe, 2014.
[19] P. Koch, S. Bagheri, W. Konen, C. Foussette, P. Krause, and T. Bäck. A new repair method for constrained optimization. In Proceedings of the 2015 on Genetic and Evolutionary Computation Conference (GECCO), pages 273–280. ACM, 2015.
[20] O. Kramer. A review of constraint-handling techniques for evolution strategies. Applied Computational Intelligence and Soft Computing, 2010:1–11, 2010.
[21] O. Kramer and H.-P. Schwefel. On three new approaches to handle constraints within evolution strategies. Natural Computing, 5(4):363–385, 2006.
[22] Z. Michalewicz and M. Schoenauer. Evolutionary algorithms for constrained parameter optimization problems. Evolutionary Computation, 4(1):1–32, 1996.
[23] E. M. Montes and C. A. Coello Coello.
A simple multimembered evolution strategy to solve constrained optimization problems. IEEE Transactions on Evolutionary Computation, 9(1):1–17, 2005.
[24] E. M. Montes and C. A. Coello Coello. Constraint handling in nature-inspired numerical optimization: past, present and future. Swarm and Evolutionary Computation, 1(4):173–194, 2011.
[25] J. J. Moré and S. M. Wild. Benchmarking derivative-free optimization algorithms. SIAM J. Optimization, 20(1):172–191, 2009.
[26] J. Poloczek and O. Kramer. Local SVM constraint surrogate models for self-adaptive evolution strategies. In KI 2013: Advances in Artificial Intelligence, pages 164–175. Springer, 2013.
[27] M. J. D. Powell. The theory of radial basis function approximation in 1990. Advances In Numerical Analysis, 2:105–210, 1992.
[28] M. J. D. Powell. A direct search optimization method that models the objective and constraint functions by linear interpolation. In Advances In Optimization And Numerical Analysis, pages 51–67. Springer, 1994.
[29] A. K. Qin and P. N. Suganthan. Self-adaptive differential evolution algorithm for numerical optimization. In IEEE Congress on Evolutionary Computation (CEC), 2005, volume 2, pages 1785–1791. IEEE, 2005.
[30] R Core Team. R: A Language and Environment for Statistical Computing. R Foundation for Statistical Computing, Vienna, Austria, 2013.
[31] R. G. Regis. Constrained optimization by radial basis function interpolation for high-dimensional expensive black-box problems with infeasible initial points. Engineering Optimization, 46(2):218–243, 2014.
[32] R. G. Regis. Particle swarm with radial basis function surrogates for expensive black-box optimization. Journal of Computational Science, 5(1):12–23, 2014.
[33] R. G. Regis. Trust regions in surrogate-assisted evolutionary programming for constrained expensive black-box optimization. In R. Datta and K.
Deb, editors, Evolutionary Constrained Optimization, pages 51–94. Springer, 2015.
[34] R. G. Regis and C. A. Shoemaker. A quasi-multistart framework for global optimization of expensive functions using response surface models. Journal of Global Optimization, 1:1, 2012.
[35] T. P. Runarsson and X. Yao. Stochastic ranking for constrained evolutionary optimization. IEEE Transactions on Evolutionary Computation, 4(3):284–294, 2000.
[36] T. P. Runarsson and X. Yao. Search biases in constrained evolutionary optimization. IEEE Transactions on Systems, Man, and Cybernetics, Part C: Applications and Reviews, 35(2):233–243, 2005.
[37] Y. Tenne and S. W. Armfield. A memetic algorithm assisted by an adaptive topology RBF network and variable local models for expensive optimization problems. In W. Kosinski, editor, Advances in Evolutionary Algorithms, page 468. INTECH Open Access Publisher, 2008.
[38] B. Tessema and G. G. Yen. An adaptive penalty formulation for constrained evolutionary optimization. Systems, Man and Cybernetics, Part A: Systems and Humans, IEEE Transactions on, 39(3):565–578, 2009.
[39] S. Venkatraman and G. G. Yen. A generic framework for constrained optimization using genetic algorithms. Evolutionary Computation, IEEE Transactions on, 9(4):424–435, Aug 2005.
[40] E. Zahara and Y.-T. Kao. Hybrid Nelder–Mead simplex search and particle swarm optimization for constrained engineering design problems. Expert Systems with Applications, 36(2):3880–3886, 2009.
[41] H. Zhang and G. Rangaiah. An efficient constraint handling method with integrated differential evolution for numerical and engineering optimization. Computers & Chemical Engineering, 37:74–88, 2012.

Vitae

Samineh Bagheri received her B.Sc. degree in electrical engineering specialized in electronics from Shahid Beheshti University, Tehran, Iran in 2011. She received her M.Sc.
in Industrial Automation & IT from Cologne University of Applied Sciences, where she is currently a research assistant and Ph.D. student in cooperation with Leiden University. Her research interests are machine learning, evolutionary computation and constrained and multiobjective optimization tasks.

Wolfgang Konen is Professor of Computer Science and Mathematics at Cologne University of Applied Sciences, Germany. He received his Diploma in physics and his Ph.D. degree in theoretical physics from the University of Mainz, Germany, in 1987 and 1990, respectively. He worked in the area of neuroinformatics and computer vision at Ruhr-University Bochum, Germany, and in several companies. He is a founding member of the Research Centers Computational Intelligence, Optimization & Data Mining (http://www.gociop.de) and CIplus (http://ciplus-research.de). He co-authored more than 100 papers and his research interests include, but are not limited to: efficient optimization, neuroevolution, machine learning, data mining, and computer vision.

Dr. Michael Emmerich is Assistant Professor at LIACS, Leiden University, and leader of the Multicriteria Optimization and Decision Analysis research group. He received his doctorate in 2005 from Dortmund University (H.-P. Schwefel, promoter) and worked as a researcher at ICD e.V. (Germany), IST Lisbon, University of the Algarve (Portugal), ACCESS Material Science e.V. (Germany), and the FOM/AMOLF institute (Netherlands). He is known for pioneering work on model-assisted and indicator-based multiobjective optimization, and has co-authored more than 100 papers in machine learning, multicriteria optimization and surrogate-assisted optimization and its applications in chemoinformatics and engineering optimization.

Thomas Bäck is head of the Natural Computing Research Group at the Leiden Institute of Advanced Computer Science (LIACS).
He received his PhD in Computer Science from Dortmund University, Germany, in 1994. He has been Associate Professor of Computer Science at Leiden University since 1996 and full Professor for Natural Computing since 2002. Thomas Bäck has more than 150 publications on natural computing technologies. His main research interests are theory and applications of evolutionary algorithms (adaptive optimization methods gleaned from the model of organic evolution), cellular automata, data-driven modeling and applications of those methods in medicinal chemistry, pharmacology, and engineering.
