Mining Top-K Co-Occurrence Items

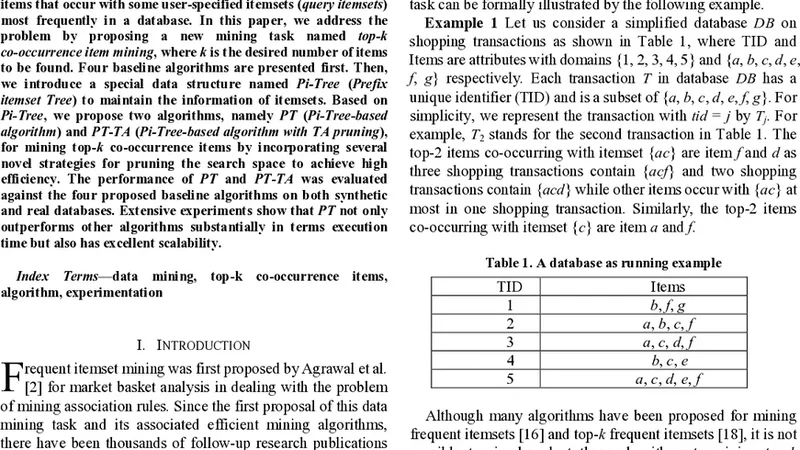

Frequent itemset mining has emerged as a fundamental problem in data mining and plays an important role in many data mining tasks, such as association analysis, classification, etc. In the framework of frequent itemset mining, the results are itemsets that are frequent in the whole database. However, in some applications, such recommendation systems and social networks, people are more interested in finding out the items that occur with some user-specified itemsets (query itemsets) most frequently in a database. In this paper, we address the problem by proposing a new mining task named top-k co-occurrence item mining, where k is the desired number of items to be found. Four baseline algorithms are presented first. Then, we introduce a special data structure named Pi-Tree (Prefix itemset Tree) to maintain the information of itemsets. Based on Pi-Tree, we propose two algorithms, namely PT (Pi-Tree-based algorithm) and PT-TA (Pi-Tree-based algorithm with TA pruning), for mining top-k co-occurrence items by incorporating several novel strategies for pruning the search space to achieve high efficiency. The performance of PT and PT-TA was evaluated against the four proposed baseline algorithms on both synthetic and real databases. Extensive experiments show that PT not only outperforms other algorithms substantially in terms execution time but also has excellent scalability.

💡 Research Summary

The paper introduces a novel data‑mining task called Top‑K Co‑Occurrence Item Mining, which focuses on finding the K items that most frequently appear together with a user‑specified query itemset Q, rather than identifying globally frequent itemsets. After formally defining the problem, the authors first present four baseline approaches: (1) an Apriori‑style candidate generation and filtering method, (2) a direct transaction scan that counts co‑occurrences, (3) an FP‑Tree based technique that extracts counts from a compressed tree, and (4) an inverted‑index method that intersects transaction lists of the query items. These baselines suffer from issues such as candidate explosion, high I/O cost, limited pruning capability, or excessive memory consumption.

To overcome these limitations, the authors propose a new data structure named Pi‑Tree (Prefix itemset Tree). Pi‑Tree inserts each transaction in a globally fixed item order (typically descending frequency) and shares common prefixes among transactions, thereby compressing the dataset. Each node stores the item identifier, a count of how many transactions pass through the node, and a “sub‑transaction count” that represents the total number of transactions in the subtree. This structure enables rapid access to all transactions that contain a given prefix and supports efficient pruning.

Based on Pi‑Tree, two algorithms are developed. PT (Pi‑Tree‑based algorithm) locates the nodes corresponding to the query itemset Q, then traverses the relevant subtrees to accumulate co‑occurrence frequencies for all other items. PT employs an Upper‑Bound Pruning strategy: before descending into a subtree, it computes the maximum possible additional count that could be contributed by that subtree and aborts the traversal if this bound cannot improve the current top‑K list.

PT‑TA extends PT with a Threshold Algorithm (TA) style pruning. It maintains sorted inverted lists for each item and, during the accumulation phase, compares the highest unseen count in these lists with the current K‑th highest co‑occurrence count (the threshold). Items whose potential counts fall below the threshold are eliminated from further consideration, dramatically reducing the number of subtree visits, especially when K is small and the candidate space is large.

The experimental evaluation uses both synthetic datasets (varying transaction count, average length, and item universe size) and real‑world datasets (retail point‑of‑sale logs and social‑network hashtag streams). Across different scales and values of K (e.g., 10, 50, 100), PT consistently outperforms all four baselines, achieving speedups of 3–10× on average. PT‑TA provides even larger gains in the most demanding scenarios, sometimes exceeding 20× faster than the best baseline. Memory consumption is reduced by 30–50 % compared with FP‑Tree, and the number of visited nodes drops to less than 5 % of the total when pruning is active.

The paper’s contributions are threefold: (1) it defines a practically relevant mining problem that aligns with recommendation and social‑network analysis needs; (2) it introduces Pi‑Tree, a compact prefix‑tree representation that enables fast co‑occurrence counting; and (3) it devises PT and PT‑TA, which integrate novel pruning techniques to achieve superior time and space efficiency. The authors acknowledge that Pi‑Tree construction incurs an upfront cost, that the choice of global item order influences performance, and that the current methods assume a static database. Future work is suggested on incremental updates, optimal ordering heuristics, and distributed implementations to handle streaming or massive‑scale environments.