On the Differential Privacy of Bayesian Inference

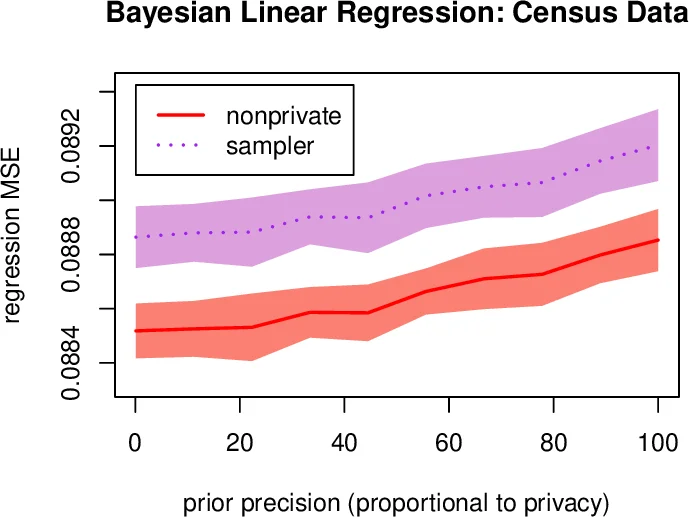

We study how to communicate findings of Bayesian inference to third parties, while preserving the strong guarantee of differential privacy. Our main contributions are four different algorithms for private Bayesian inference on probabilistic graphical models. These include two mechanisms for adding noise to the Bayesian updates, either directly to the posterior parameters, or to their Fourier transform so as to preserve update consistency. We also utilise a recently introduced posterior sampling mechanism, for which we prove bounds for the specific but general case of discrete Bayesian networks; and we introduce a maximum-a-posteriori private mechanism. Our analysis includes utility and privacy bounds, with a novel focus on the influence of graph structure on privacy. Worked examples and experiments with Bayesian naïve Bayes and Bayesian linear regression illustrate the application of our mechanisms.

💡 Research Summary

This paper addresses the problem of releasing Bayesian posterior distributions to third parties while guaranteeing differential privacy (DP). The authors focus on probabilistic graphical models (PGMs), where the structure of conditional independencies can be exploited to improve privacy‑utility trade‑offs. Four distinct mechanisms are proposed and analyzed:

- Laplace Mechanism on Posterior Parameters – For exponential‑family likelihoods with conjugate priors (e.g., Beta‑Bernoulli), the algorithm adds independent Laplace noise to each posterior count update (Δα, Δβ). The global sensitivity is shown to be 2|V|, where |V| is the number of variables in the graph. After noise addition, the counts are truncated to the feasible interval
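A minimal sketch of the Laplace mechanism described in this item, for a single Beta-Bernoulli variable. It is an illustration under stated assumptions, not the authors' implementation: the names `laplace_noise` and `private_beta_update` are hypothetical, the sensitivity 2|V| is taken from the summary above, and clipping the noisy count updates below at zero is an assumed stand-in for the truncation step, whose exact interval is not given here.

```python
import random


def laplace_noise(scale, rng):
    """Draw one Laplace(0, scale) sample.

    The difference of two i.i.d. exponential variables with mean `scale`
    follows a Laplace distribution with location 0 and that scale.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_beta_update(alpha, beta, data, epsilon, num_variables=1, rng=None):
    """Beta-Bernoulli posterior update with Laplace noise on the count updates.

    alpha, beta   -- prior Beta parameters
    data          -- iterable of 0/1 observations
    epsilon       -- privacy budget for this release
    num_variables -- |V|; the sensitivity 2|V| follows the summary above
    """
    rng = rng or random.Random()
    data = list(data)

    # Exact (non-private) count updates.
    delta_alpha = sum(data)
    delta_beta = len(data) - delta_alpha

    # Laplace scale = sensitivity / epsilon.
    scale = (2 * num_variables) / epsilon
    noisy_da = delta_alpha + laplace_noise(scale, rng)
    noisy_db = delta_beta + laplace_noise(scale, rng)

    # ASSUMPTION: clip at zero so the posterior parameters never fall below
    # the prior. The source's exact truncation interval is not shown.
    noisy_da = max(0.0, noisy_da)
    noisy_db = max(0.0, noisy_db)

    return alpha + noisy_da, beta + noisy_db


if __name__ == "__main__":
    rng = random.Random(0)
    print(private_beta_update(1.0, 1.0, [1, 1, 0, 1], epsilon=1.0, rng=rng))
```

Clipping is post-processing of an already private output, so it does not weaken the privacy guarantee, although it can bias the released parameters when the true counts are small.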
