Signal Representations on Graphs: Tools and Applications

We present a framework for representing and modeling data on graphs. Based on this framework, we study three typical classes of graph signals: smooth graph signals, piecewise-constant graph signals, and piecewise-smooth graph signals. For each class, we provide an explicit definition of the graph signals and construct a corresponding graph dictionary with desirable properties. We then study how each graph dictionary performs in two standard tasks, approximation and sampling followed by recovery, from both theoretical and algorithmic perspectives. Finally, for each class, we present a case study of a real-world problem using the proposed methodology.

💡 Research Summary

The paper proposes a comprehensive framework for representing and processing signals defined on graphs, addressing three canonical classes of graph signals: smooth, piecewise‑constant, and piecewise‑smooth. It begins by reviewing the fundamentals of graph signal processing (GSP), introducing the graph shift operator and the graph Laplacian as two interchangeable ways to encode graph structure. By defining a graph structure matrix \(R\) (either a shift matrix \(A\) or a Laplacian \(L\)), the authors derive the graph Fourier transform, in which the eigenvectors of \(R\) serve as the graph Fourier basis. The variation of a signal \(x\) is quantified either through the shift operator, by comparing \(x\) with its shifted version \(Ax\) after normalizing \(A\) by its spectral norm, or through the Laplacian quadratic form \(x^{\top}Lx\). Either measure provides a principled way to order the eigenvectors from low‑frequency (smooth) to high‑frequency (oscillatory) components.
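As a minimal sketch of the Laplacian route described above, the snippet below builds the graph Fourier basis for a small unweighted path graph and compares the quadratic-form variation of a slowly varying and an alternating signal. The path graph, the helper names (`path_laplacian`, `variation`), and the test signals are illustrative assumptions, not taken from the paper.

```python
import numpy as np

def path_laplacian(n):
    """Combinatorial Laplacian L = D - W of an unweighted path graph (assumed example graph)."""
    W = np.zeros((n, n))
    idx = np.arange(n - 1)
    W[idx, idx + 1] = 1.0
    W[idx + 1, idx] = 1.0
    return np.diag(W.sum(axis=1)) - W

n = 8
L = path_laplacian(n)

# eigh returns eigenvalues in ascending order, so the columns of V run from
# low-frequency (smooth) to high-frequency (oscillatory) basis vectors.
eigvals, V = np.linalg.eigh(L)

def variation(x):
    """Laplacian quadratic form x^T L x; equals the sum of squared differences over edges."""
    return float(x @ L @ x)

x_smooth = np.linspace(0.0, 1.0, n)                  # slowly varying along the path
x_rough = np.array([(-1.0) ** i for i in range(n)])  # sign flips at every edge

x_hat = V.T @ x_smooth   # forward graph Fourier transform
x_back = V @ x_hat       # inverse transform reproduces the signal

print(variation(x_smooth), variation(x_rough))
```

For a unit-norm eigenvector the quadratic form equals its eigenvalue, which is why sorting by eigenvalue orders the basis by variation.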

The core of the framework consists of three components: (1) a graph signal model that captures the essential properties of a class of signals, either descriptively (by bounding the output of an operator applied to the signal) or generatively (by specifying a dictionary representation); (2) a graph dictionary, designed either passively (solely from the graph structure) or actively (using training signals), for which the authors enumerate desirable properties (frame bounds, sparsity guarantees, and uncertainty principles); and (3) graph signal processing tasks, focusing on approximation (sparse coding) and sampling‑plus‑recovery (linear dimension reduction followed by reconstruction).
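The approximation task can be illustrated with a greedy sparse-coding sketch. The function below is a plain orthogonal matching pursuit written for this summary; the name `omp`, the random orthonormal dictionary, and the chosen coefficients are assumptions for illustration and do not reproduce the paper's algorithms or dictionaries.

```python
import numpy as np

def omp(D, x, k):
    """Greedy k-sparse approximation of x over the columns (atoms) of D."""
    residual = x.astype(float).copy()
    support = []
    coef = np.zeros(0)
    for _ in range(k):
        # Pick the atom most correlated with the current residual.
        j = int(np.argmax(np.abs(D.T @ residual)))
        if j in support:  # no new atom is selected; stop early
            break
        support.append(j)
        # Refit all selected atoms jointly by least squares.
        coef, *_ = np.linalg.lstsq(D[:, support], x, rcond=None)
        residual = x - D[:, support] @ coef
    a = np.zeros(D.shape[1])
    a[support] = coef
    return a

# Hypothetical dictionary: a random orthonormal basis of R^16.
rng = np.random.default_rng(0)
D, _ = np.linalg.qr(rng.standard_normal((16, 16)))

a_true = np.zeros(16)
a_true[3], a_true[7] = 2.0, -1.0
x = D @ a_true            # a signal that is exactly 2-sparse in D

a_hat = omp(D, x, k=2)
```

With an orthonormal dictionary the correlations equal the true coefficients, so two iterations recover both atoms; for a redundant dictionary, recovery depends on how coherent the atoms are.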

For each signal class the paper defines a concrete model and constructs a tailored dictionary:

- Smooth graph signals are modeled by a small Laplacian quadratic form \(x^{\top}Lx\). The dictionary consists of the low‑frequency eigenvectors of \(R\) (graph Fourier basis). The authors extend the classical uncertainty principle to graphs, showing a trade‑off between spectral concentration and vertex‑domain localization. Approximation experiments demonstrate that a handful of low‑frequency atoms suffice to reconstruct smooth signals with low error. For sampling, they propose selecting vertices based on spectral leverage scores or deterministic criteria, and recover signals via linear interpolation or sparse coding, achieving provable error bounds. A case study on a co‑authorship network illustrates that the smooth‑signal dictionary captures collaborative intensity patterns efficiently.

-

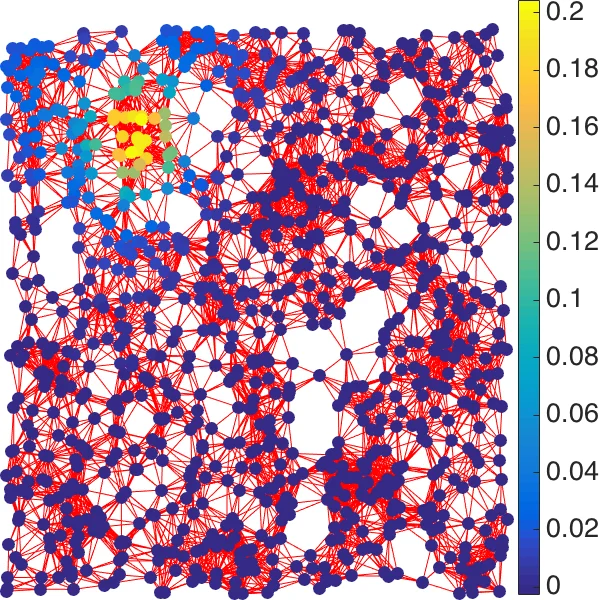

Piecewise‑constant graph signals are characterized by a small number of constant regions separated by sharp edges. The authors introduce a multiresolution decomposition of the graph into local sets (clusters) and construct three related dictionaries: (a) a local‑set based piecewise‑constant dictionary, (b) a local‑set based wavelet basis, and (c) a hierarchical partition dictionary. These dictionaries are designed to be tight frames with explicit bounds, and they promote sparsity because a signal constant on a set can be represented by a single atom. Approximation results show superior performance over global Fourier bases, especially when the number of regions is small. Sampling strategies exploit the locality of the atoms: sampling one vertex per active local set and reconstructing via graph‑Laplacian smoothing yields accurate recovery. The epidemic‑process case study demonstrates that the method can quickly identify infected clusters from few observations.

- Piecewise‑smooth graph signals combine the previous two ideas: within each region the signal varies smoothly, while across region boundaries there may be abrupt changes. The authors propose a local‑set based piecewise‑smooth dictionary that fuses low‑frequency Laplacian eigenvectors (for intra‑region smoothness) with wavelet‑like atoms (for inter‑region transitions). This hybrid dictionary retains frame bounds and sparsity guarantees. Approximation experiments reveal that fewer atoms are needed compared with using either smooth or piecewise‑constant dictionaries alone. In the environmental‑change‑detection case study (satellite‑image time series), the method successfully isolates subtle land‑cover changes with limited samples, outperforming baseline methods.
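To make the sparsity argument for piecewise-constant signals concrete, the sketch below builds a Haar-like orthonormal basis from a recursive bisection of the vertex set and compares it with the graph Fourier basis on a two-region signal. Bisecting by vertex index on a path graph is a simplifying assumption: the paper derives its local sets from a multiresolution graph partition, and `haar_like_basis` is a hypothetical helper, not the authors' construction.

```python
import numpy as np

def haar_like_basis(n):
    """Orthonormal Haar-like basis from recursively halving the vertex list 0..n-1."""
    atoms = [np.ones(n) / np.sqrt(n)]          # constant atom on the whole graph
    stack = [np.arange(n)]
    while stack:
        s = stack.pop()
        if len(s) < 2:
            continue
        left, right = s[: len(s) // 2], s[len(s) // 2:]
        atom = np.zeros(n)
        # Scaling makes each atom unit-norm and zero-sum on its local set.
        atom[left] = np.sqrt(len(right) / (len(left) * len(s)))
        atom[right] = -np.sqrt(len(left) / (len(right) * len(s)))
        atoms.append(atom)
        stack.extend([left, right])
    return np.column_stack(atoms)

n = 8
B = haar_like_basis(n)

# Graph Fourier basis of an unweighted path graph, for comparison.
W = np.zeros((n, n))
i = np.arange(n - 1)
W[i, i + 1] = W[i + 1, i] = 1.0
L = np.diag(W.sum(axis=1)) - W
_, V = np.linalg.eigh(L)

# Two constant regions whose boundary coincides with the top-level split.
x = np.array([3.0] * 4 + [-1.0] * 4)

tol = 1e-10
n_wavelet = int(np.sum(np.abs(B.T @ x) > tol))   # nonzero wavelet coefficients
n_fourier = int(np.sum(np.abs(V.T @ x) > tol))   # nonzero Fourier coefficients
print(n_wavelet, n_fourier)
```

The signal needs only the constant atom and the top-level wavelet atom here, whereas the jump spreads over several Fourier coefficients. The advantage shrinks when region boundaries do not align with the partition.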

Across all three classes, the paper provides rigorous theoretical analysis: bounds on approximation error as a function of the number of selected atoms, conditions for exact recovery under noiseless sampling, and stability guarantees under noise. The sampling analysis distinguishes three regimes (uniform random, deterministic, and active or adaptive sampling), each with corresponding recovery algorithms (least‑squares, convex optimization, or greedy pursuit). The authors also discuss computational aspects, noting that many dictionary atoms can be pre‑computed offline, and that sparse coding can be solved efficiently via orthogonal matching pursuit or Lasso.
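The noiseless sampling-and-recovery case can be sketched for a smooth (bandlimited) signal as follows. This is a minimal example under stated assumptions: a path graph, a signal spanned by the first `K` Laplacian eigenvectors, uniform random vertex sampling, and least-squares recovery. The sizes `n`, `K`, `M` are arbitrary choices, not values from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
n, K, M = 20, 4, 8            # vertices, bandwidth, number of sampled vertices

# Laplacian of an unweighted path graph and its low-frequency eigenvectors.
W = np.zeros((n, n))
i = np.arange(n - 1)
W[i, i + 1] = W[i + 1, i] = 1.0
L = np.diag(W.sum(axis=1)) - W
_, V = np.linalg.eigh(L)
V_K = V[:, :K]                # dictionary of the K smoothest atoms

# A signal that lies exactly in the span of the K low-frequency atoms.
x = V_K @ rng.standard_normal(K)

# Uniform random sampling without replacement: observe x on M vertices only.
sample = np.sort(rng.choice(n, size=M, replace=False))
y = x[sample]

# Least-squares recovery: fit the K coefficients on the sampled rows,
# then interpolate to all vertices.
coef, *_ = np.linalg.lstsq(V_K[sample], y, rcond=None)
x_rec = V_K @ coef

print(np.max(np.abs(x_rec - x)))
```

Recovery is exact (up to rounding) whenever the sampled rows of `V_K` have full column rank `K`. With noisy samples, the error is governed by the conditioning of that submatrix, which is what designed or adaptive sampling aims to improve.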

In conclusion, the work establishes a unified “model → dictionary → task” pipeline for graph‑based data, demonstrating that dictionaries aligned with the signal structure improve both compression (approximation) and inference (sampling‑plus‑recovery). The framework is validated on three real‑world problems (academic collaboration networks, epidemic spreading, and environmental monitoring), showing practical relevance. The authors suggest future extensions toward graph neural networks, dynamic graphs, and large‑scale distributed implementations, positioning the framework as a foundational tool for the growing field of graph signal processing.
