Information entropy as an anthropomorphic concept

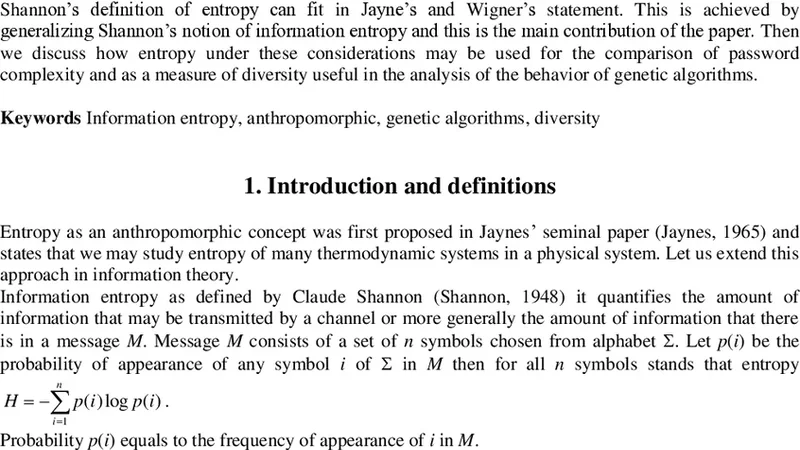

According to E.T. Jaynes and E.P. Wigner, entropy is an anthropomorphic concept in the sense that many thermodynamic systems correspond to a single physical system. The physical system can be examined from many points of view, each time considering different variables and calculating entropy differently. In this paper we discuss how this concept may be applied to information entropy, that is, how Shannon’s definition of entropy fits Jaynes’s and Wigner’s statement. This is achieved by generalizing Shannon’s notion of information entropy, which is the main contribution of the paper. We then discuss how entropy under these considerations may be used to compare password complexity and as a measure of diversity useful in analyzing the behavior of genetic algorithms.

💡 Research Summary

The paper “Information entropy as an anthropomorphic concept” builds a bridge between the philosophical view of entropy in statistical physics and its quantitative use in information theory. Starting from the statements of E.T. Jaynes and E.P. Wigner, the authors recall that a physical system can be described by many different sets of macroscopic variables, each leading to a distinct thermodynamic entropy. In this sense entropy is “anthropomorphic”: its numerical value depends on the observer’s choice of variables and on the questions the observer wishes to answer.

The authors transpose this idea to Shannon’s information entropy. Instead of treating entropy as a single scalar derived from one probability distribution over a message space, they propose a generalized framework in which a data object is represented by a vector of attributes (a₁, a₂, …, a_k). For each attribute a_j a separate probability distribution p^{(j)} is estimated, and its Shannon entropy H_j = –∑_i p^{(j)}_i log p^{(j)}_i is computed. A set of weights w_j, reflecting the relevance of each attribute to the problem at hand, is then applied, and the total entropy is defined as

H_total = Σ_{j=1}^k w_j H_j.

In this formulation the entropy value is explicitly dependent on the observer’s perspective: by changing the attribute set or the weight vector, the same underlying data can be assigned different entropy values. This formalism therefore captures the “anthropomorphic” nature of entropy within the domain of information theory.
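The observer-dependence described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names `shannon_entropy` and `total_entropy`, the toy data, and the two attribute functions are assumptions introduced only for illustration, and each p^{(j)} is estimated by simple frequency counting.

```python
import math
from collections import Counter

def shannon_entropy(values, base=2.0):
    """Plug-in Shannon entropy of the empirical distribution of `values`."""
    counts = Counter(values)
    n = sum(counts.values())
    # -sum p log p, written as sum p log(1/p) with p = c / n
    return sum((c / n) * math.log(n / c, base) for c in counts.values())

def total_entropy(objects, attributes, weights):
    """H_total = sum_j w_j * H_j, with one H_j per attribute function a_j."""
    return sum(
        w * shannon_entropy([a(obj) for obj in objects])
        for a, w in zip(attributes, weights)
    )

# Hypothetical data objects and attributes (not taken from the paper).
words = ["abc", "abd", "xyz", "xy"]

def first_char(s):   # attribute a_1
    return s[0]

def length(s):       # attribute a_2
    return len(s)

# Same data, three different "observers".
h_first = total_entropy(words, [first_char], [1.0])
h_length = total_entropy(words, [length], [1.0])
h_mixed = total_entropy(words, [first_char, length], [0.5, 0.5])

print(h_first, h_length, h_mixed)
```

The three values differ even though `words` never changes: only the attribute set and the weight vector do, which is the sense in which the summary calls the measure anthropomorphic.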

To demonstrate the practical relevance of the generalized entropy, the paper presents two case studies.

- Password‑strength evaluation – Conventional password meters usually count character classes (uppercase, lowercase, digits, symbols) and length, then compute a simple log‑based entropy. The authors argue that real‑world security also depends on patterns that humans tend to reuse (dictionary words, keyboard sequences, repeated substrings). They decompose a password into three layers: (i) the set of character classes used, (ii) the positional distribution of those classes, and (iii) a pattern‑frequency model derived from large password leaks. Separate probability models are built for each layer, weighted according to security relevance, and the combined entropy is calculated. Experiments on a corpus of leaked passwords show that the new metric correlates much more strongly with actual cracking success rates than the traditional metric, indicating that a human‑centered entropy measure can capture subtle weaknesses that a naïve count‑based approach misses.

- Diversity measurement in genetic algorithms (GAs) – In evolutionary computation, maintaining population diversity is crucial to avoid premature convergence. Existing diversity indicators (average fitness, Hamming distance, etc.) often ignore the structural distribution of genes. The authors treat each gene locus as an attribute, compute the frequency distribution of allele values across the population, and obtain a per‑locus entropy. By aggregating these with locus‑specific weights (e.g., higher weight for loci known to affect fitness), they obtain a population‑wide entropy that drops sharply when a particular gene becomes fixed. The paper reports that using this entropy as a trigger for adaptive mutation rates or for early‑stop decisions leads to faster convergence to high‑quality solutions on benchmark optimisation problems. Moreover, the framework allows the practitioner to focus on a subset of “critical” genes, providing a targeted view of diversity that aligns with the problem’s objective function.
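The per‑locus diversity measure from the GA case study can be sketched as follows. This is a hedged illustration rather than the paper's code: the bit‑string encoding, the uniform weights, the function names, and the threshold and rates in `mutation_rate` are all hypothetical choices made for the example.

```python
import math
from collections import Counter

def locus_entropy(population, locus):
    """Shannon entropy (bits) of the allele distribution at one locus."""
    counts = Counter(individual[locus] for individual in population)
    n = len(population)
    return sum((c / n) * math.log2(n / c) for c in counts.values())

def population_entropy(population, weights):
    """Weighted sum of per-locus entropies: sum_j w_j * H_j."""
    return sum(w * locus_entropy(population, j) for j, w in enumerate(weights))

def mutation_rate(entropy, threshold=0.6, base_rate=0.01, boosted_rate=0.10):
    """Hypothetical trigger: raise the mutation rate when diversity is low."""
    return boosted_rate if entropy < threshold else base_rate

# Toy populations of 4-bit chromosomes (assumed encoding).
diverse = ["0101", "1010", "0011", "1100"]
converged = ["0101", "0100", "0111", "0110"]  # loci 0 and 1 are fixed

weights = [0.25, 0.25, 0.25, 0.25]  # uniform weights, an assumption

h_diverse = population_entropy(diverse, weights)
h_converged = population_entropy(converged, weights)

print(h_diverse, mutation_rate(h_diverse))
print(h_converged, mutation_rate(h_converged))
```

In the second population two of the four loci carry a single allele, so their entropy terms vanish and the weighted total halves. Raising the weight of a locus believed to affect fitness would make fixation at that locus dominate the signal, which corresponds to the targeted view of "critical" genes mentioned above.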

The experimental results in both domains confirm that the anthropomorphic entropy is more sensitive to the aspects that matter to the human analyst (security policy in the password case, convergence control in the GA case) than traditional, single‑distribution entropy measures.

In the discussion, the authors emphasise that the anthropomorphic view does not diminish the objectivity of entropy; rather, it recognises that any quantitative assessment of uncertainty must be anchored to a set of variables chosen by the analyst. By making this choice explicit through attribute selection and weighting, the generalized entropy becomes a flexible tool that can be adapted to any context where the notion of “information content” is tied to human goals.

Finally, the paper suggests several avenues for future work: (i) automated methods for selecting and weighting attributes based on information‑theoretic criteria, (ii) extensions to continuous‑valued data using differential entropy, and (iii) integration with machine‑learning pipelines where feature importance can be reflected directly in the entropy calculation. The authors conclude that embracing the anthropomorphic nature of entropy opens new possibilities for more nuanced, goal‑directed quantification of uncertainty across a wide range of scientific and engineering disciplines.