Automatic exploration of structural regularities in networks

Complex networks provide a powerful mathematical representation of complex systems in nature and society. To understand complex networks, it is crucial to explore their internal structures, also called structural regularities. The task of network structure exploration is to determine how many groups a complex network contains and how to assign its nodes to those groups. Most existing structure exploration methods require either the number of groups or a specific type of structure to be specified when they are applied to a network. In the real world, however, neither the number of groups nor the type of structure a network has is usually known in advance. To explore structural regularities in complex networks automatically, without any prior knowledge of the number of groups or the type of structure, we take a probabilistic mixture model that can handle networks with any type of structure but requires the number of groups to be specified, and extend it using Bayesian nonparametric theory. The result is a novel Bayesian nonparametric model, called the Bayesian nonparametric mixture (BNPM) model. Experiments conducted on a large number of networks with different structures show that the BNPM model is able to automatically explore structural regularities in networks with stable, state-of-the-art performance.

💡 Research Summary

The paper addresses the fundamental problem of discovering structural regularities in complex networks without any prior knowledge of the number of groups or the type of structure present. Traditional methods either require the user to specify the number of communities in advance or assume a particular structural pattern (e.g., pure community, role‑based, or hierarchical). Such assumptions limit their applicability to real‑world networks where the underlying organization is often unknown and may combine several patterns.

To overcome these limitations, the authors extend a probabilistic mixture model for networks by incorporating Bayesian non‑parametric theory, resulting in the Bayesian Nonparametric Mixture (BNPM) model. The core idea is to treat the latent group assignment of each node as a draw from a Dirichlet Process (DP). The DP’s concentration parameter α controls the propensity to create new groups, and α itself is given a Gamma hyper‑prior, allowing the model to infer the appropriate number of groups directly from the data. For each pair of groups (g, h), a connection probability φ_{gh} is introduced with a Beta prior (for binary undirected graphs) or a Gaussian‑logistic formulation (for weighted or directed graphs). This flexible parameterisation enables the model to capture pure community structures, role‑based structures, and mixtures thereof within a single framework.
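
To make the role of α concrete, the following is a minimal sketch of drawing group labels from the Chinese Restaurant Process view of a Dirichlet Process prior. It is not the authors' code: the function name `crp_partition`, the `seed` argument, and the use of a fixed `alpha` (rather than one drawn from a Gamma hyper-prior) are assumptions made for illustration.

```python
import random


def crp_partition(n_nodes, alpha, seed=0):
    """Draw group labels for n_nodes from a Chinese Restaurant Process.

    Hypothetical helper for illustration; alpha is held fixed here,
    whereas the summary describes a Gamma hyper-prior on it.
    """
    rng = random.Random(seed)
    labels = []
    counts = []  # counts[k] = number of nodes currently in group k
    for _ in range(n_nodes):
        # An existing group k is chosen with weight counts[k];
        # a brand-new group is opened with weight alpha.
        weights = counts + [alpha]
        k = rng.choices(range(len(weights)), weights=weights)[0]
        if k == len(counts):
            counts.append(1)  # open a new group
        else:
            counts[k] += 1
        labels.append(k)
    return labels, counts


labels, counts = crp_partition(n_nodes=50, alpha=1.0)
print(len(counts), "groups drawn for 50 nodes")
```

Larger values of `alpha` make the new-group branch more likely, so the prior favours more groups; the number of groups is never passed in as an argument.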

Inference is performed via Gibbs sampling, a form of Markov Chain Monte Carlo (MCMC). At each iteration the algorithm (1) resamples the group label of a node conditioned on the current labels of all other nodes and the current φ values, (2) updates the φ parameters using the conjugate Beta‑Bernoulli relationship, and (3) samples α from its posterior Gamma distribution. The Chinese Restaurant Process representation of the DP naturally handles the creation of new groups during sampling, thereby eliminating the need for an externally supplied group count.
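
Steps (1) and (2) can be sketched for a binary undirected graph as follows. This is a simplified illustration under stated assumptions, not the paper's implementation: the helper names `sample_phi` and `label_log_weights`, the Beta(1, 1) default prior, and the dense adjacency-matrix input are all hypothetical, and the weight for opening a new group as well as the α update of step (3) are deliberately left out.

```python
import math
import random


def sample_phi(adj, labels, n_groups, a=1.0, b=1.0, seed=0):
    """Step (2): draw phi[g][h] ~ Beta(a + edges, b + non_edges).

    adj is a symmetric 0/1 matrix; a and b are assumed prior parameters.
    """
    rng = random.Random(seed)
    edges = [[0] * n_groups for _ in range(n_groups)]
    pairs = [[0] * n_groups for _ in range(n_groups)]
    n = len(labels)
    for i in range(n):
        for j in range(i + 1, n):
            g, h = sorted((labels[i], labels[j]))
            pairs[g][h] += 1
            edges[g][h] += adj[i][j]
    phi = [[0.0] * n_groups for _ in range(n_groups)]
    for g in range(n_groups):
        for h in range(g, n_groups):
            non_edges = pairs[g][h] - edges[g][h]
            phi[g][h] = phi[h][g] = rng.betavariate(a + edges[g][h], b + non_edges)
    return phi


def label_log_weights(i, adj, labels, phi, counts_without_i):
    """Step (1), existing groups only: unnormalised log-weights for node i.

    counts_without_i[k] is the size of group k with node i removed.
    """
    eps = 1e-12  # guards against log(0)
    log_w = []
    for k, n_k in enumerate(counts_without_i):
        if n_k == 0:
            log_w.append(float("-inf"))
            continue
        lw = math.log(n_k)  # CRP prior term: proportional to group size
        for j, z_j in enumerate(labels):
            if j == i:
                continue
            p = min(max(phi[k][z_j], eps), 1.0 - eps)
            lw += math.log(p) if adj[i][j] else math.log(1.0 - p)
        log_w.append(lw)
    return log_w


# Toy graph: two triangles with no edges between them.
adj = [[0, 1, 1, 0, 0, 0], [1, 0, 1, 0, 0, 0], [1, 1, 0, 0, 0, 0],
       [0, 0, 0, 0, 1, 1], [0, 0, 0, 1, 0, 1], [0, 0, 0, 1, 1, 0]]
labels = [0, 0, 0, 1, 1, 1]
phi = sample_phi(adj, labels, n_groups=2)
print(label_log_weights(0, adj, labels, phi, counts_without_i=[2, 3]))
```

A full sampler would normalise these weights together with a new-group term, draw the label, and repeat over all nodes before resampling φ and α.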

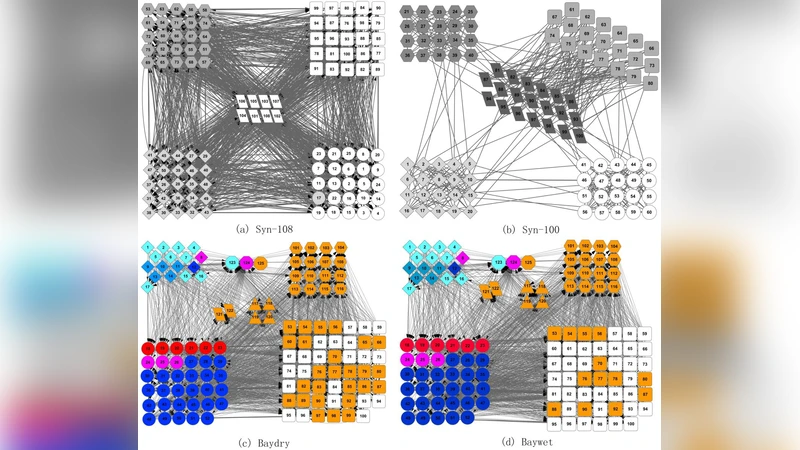

The authors evaluate BNPM on a diverse collection of twenty‑plus real networks, including social, biological, citation, and technological graphs. Baselines comprise modularity‑based community detection (Louvain), spectral clustering, Mixed Membership Stochastic Block Models (MMSB), and recent graph‑neural‑network clustering approaches. Performance is measured using Normalized Mutual Information (NMI), Adjusted Rand Index (ARI), precision, and recall, as well as qualitative visual inspection of the discovered partitions. BNPM consistently identifies a number of groups that closely matches the ground‑truth or expert‑derived expectations, and it outperforms baselines on networks that exhibit mixed structural patterns. The adaptive learning of α proves crucial in preventing both under‑ and over‑clustering.
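
For readers unfamiliar with NMI, here is a small sketch of how it compares a discovered partition with a reference one. Several normalisations are in use; this version divides the mutual information by the arithmetic mean of the two entropies, which is an assumption and not necessarily the variant used in the paper. The function name `nmi` is hypothetical.

```python
import math
from collections import Counter


def nmi(labels_a, labels_b):
    """Normalised mutual information between two labelings of the same nodes.

    Uses arithmetic-mean normalisation (an assumed choice).
    """
    n = len(labels_a)
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    joint = Counter(zip(labels_a, labels_b))
    h_a = -sum(c / n * math.log(c / n) for c in count_a.values())
    h_b = -sum(c / n * math.log(c / n) for c in count_b.values())
    mi = sum(
        c / n * math.log(c * n / (count_a[x] * count_b[y]))
        for (x, y), c in joint.items()
    )
    denom = (h_a + h_b) / 2
    # Convention: two single-group labelings count as identical.
    return 1.0 if denom == 0 else mi / denom


# Same grouping under different label names scores 1; an unrelated split scores 0.
print(nmi([0, 0, 1, 1], [1, 1, 0, 0]))
print(nmi([0, 0, 1, 1], [0, 1, 0, 1]))
```

Because the score depends only on which nodes are grouped together, it is unaffected by how the groups are numbered, which matters when the sampler creates groups in arbitrary order.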

Despite its strengths, the paper acknowledges that MCMC sampling can be computationally intensive for very large networks, and convergence diagnostics may be non‑trivial. The authors suggest future extensions such as variational Bayesian inference, stochastic gradient MCMC, and GPU‑accelerated sampling to improve scalability.

In conclusion, the BNPM model offers a principled, fully unsupervised solution for structural regularity discovery in complex networks. By leveraging Bayesian non‑parametric priors, it automatically determines the appropriate number of groups and accommodates a wide variety of structural motifs, making it a valuable tool for analysts confronting networks with unknown or heterogeneous organization.
