A Hebbian/Anti-Hebbian Network for Online Sparse Dictionary Learning Derived from Symmetric Matrix Factorization

Olshausen and Field (OF) proposed that neural computations in the primary visual cortex (V1) can be partially modeled by sparse dictionary learning. By minimizing the regularized representation error they derived an online algorithm, which learns Gabor-filter receptive fields from a natural image ensemble in agreement with physiological experiments. Whereas the OF algorithm can be mapped onto the dynamics and synaptic plasticity in a single-layer neural network, the derived learning rule is nonlocal - the synaptic weight update depends on the activity of neurons other than just pre- and postsynaptic ones - and hence biologically implausible. Here, to overcome this problem, we derive sparse dictionary learning from a novel cost-function - a regularized error of the symmetric factorization of the input’s similarity matrix. Our algorithm maps onto a neural network of the same architecture as OF but using only biologically plausible local learning rules. When trained on natural images our network learns Gabor-filter receptive fields and reproduces the correlation among synaptic weights hard-wired in the OF network. Therefore, online symmetric matrix factorization may serve as an algorithmic theory of neural computation.

💡 Research Summary

The paper revisits the classic Olshausen‑Field (OF) model of sparse dictionary learning, which successfully reproduces V1‑like Gabor receptive fields from natural images but relies on a non‑local synaptic update rule that depends on the activity of neurons other than the pre‑ and postsynaptic pair. To obtain a biologically plausible alternative, the authors formulate a novel cost function based on the regularized error of a symmetric factorization of the input similarity matrix S = XXᵀ. The objective is

J(W,Y) = ‖S − YYᵀ‖_F² + λ‖Y‖₁,

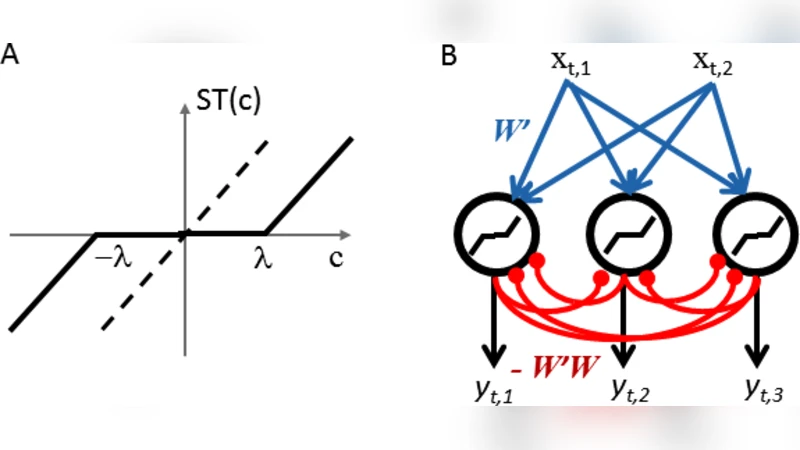

where Y denotes the neural activity matrix and W the feed‑forward weight matrix. Minimizing J with respect to Y yields a soft‑thresholded linear projection Y = SoftThresh(WX, λ), enforcing sparsity. Minimizing with respect to W leads to the update rule

Δw_{ij} ∝ y_i x_j − y_i y_j w_{ij}.

The first term is a classic Hebbian potentiation (co‑activation of pre‑ and postsynaptic neurons), while the second term implements an anti‑Hebbian decay proportional to the current weight and the product of the two neuronal activities. Crucially, this rule depends only on the local variables x_j, y_i, and w_{ij}, satisfying strict locality.
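As a rough illustration of the objective and the soft-thresholded code described above, the following NumPy sketch evaluates J on toy data. It assumes one sample per row of X, so that XXᵀ and YYᵀ are both sample-by-sample matrices of the same size; under that layout the projection is computed as X Wᵀ. The array sizes, the random data, the initial W, and the function names `soft_threshold` and `objective` are hypothetical choices made for the example, not taken from the paper.

```python
import numpy as np

def soft_threshold(u, lam):
    """Elementwise soft-thresholding: shrink magnitudes by lam, zero anything smaller."""
    return np.sign(u) * np.maximum(np.abs(u) - lam, 0.0)

def objective(X, Y, lam):
    """J = ||X X^T - Y Y^T||_F^2 + lam * ||Y||_1, assuming one sample per row."""
    S = X @ X.T                       # input similarity (Gram) matrix
    residual = S - Y @ Y.T            # mismatch between input and output similarities
    return np.sum(residual ** 2) + lam * np.sum(np.abs(Y))

rng = np.random.default_rng(0)
X = rng.standard_normal((5, 8))          # 5 samples, 8 input dimensions (toy sizes)
W = 0.5 * rng.standard_normal((3, 8))    # 3 output neurons, hypothetical initial weights
lam = 0.1

Y = soft_threshold(X @ W.T, lam)         # sparse code, one row per sample
J = objective(X, Y, lam)
print(Y.shape, J)
```

Soft-thresholding sets every projection whose magnitude is below λ to exactly zero, which is where the sparsity of the code comes from.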

The algorithm operates online: for each incoming image patch x_t, the network computes a sparse code y_t using the current W, then immediately updates W according to the Hebbian/anti‑Hebbian rule. No batch processing or global error signals are required.
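The online procedure described above can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions rather than the authors' implementation: random Gaussian vectors stand in for image patches, the learning rate `eta`, the threshold `lam`, and the layer sizes are hypothetical, and the decay term is read as y_i² w_{ij} (an Oja-style normalization), because the index j in the summary's y_i y_j w_{ij} refers to an input rather than to an output neuron.

```python
import numpy as np

def soft_threshold(u, lam):
    """Elementwise soft-thresholding used to produce a sparse code."""
    return np.sign(u) * np.maximum(np.abs(u) - lam, 0.0)

def online_step(W, x, lam, eta):
    """Process one patch: compute the sparse code, then apply a local weight update."""
    y = soft_threshold(W @ x, lam)
    # Hebbian term y_i * x_j minus a decay y_i^2 * w_ij (assumed reading of the rule).
    # Each weight change uses only its own x_j, y_i, and w_ij.
    W = W + eta * (np.outer(y, x) - (y ** 2)[:, None] * W)
    return W, y

rng = np.random.default_rng(0)
n_inputs, n_outputs = 16, 4                      # toy sizes, not the paper's
W = 0.1 * rng.standard_normal((n_outputs, n_inputs))

for t in range(500):
    x = rng.standard_normal(n_inputs)            # stand-in for an image patch x_t
    W, y = online_step(W, x, lam=0.1, eta=0.01)  # code first, then update W

print(W.shape, np.linalg.norm(W, axis=1))
```

Each sample is seen once and then discarded, so memory use does not grow with the number of patches processed.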

Empirical evaluation on a large set of natural image patches (12 × 12 pixels) demonstrates that the network learns a set of 256 filters that are virtually indistinguishable from Gabor functions, matching the orientation, spatial frequency, and phase selectivity observed in V1 neurons. Moreover, the statistical relationships among the learned weights (positive correlations between neighboring filters, negative correlations between oppositely oriented filters) replicate those reported for the original OF network, indicating that the symmetric matrix factorization captures the same structural constraints.

Theoretical analysis shows that W converges toward the principal components of the data, while Y provides a sparse representation of those components. The anti‑Hebbian term prevents unbounded growth of synaptic strengths and enforces a balanced, energy‑efficient coding regime.

In summary, by reframing sparse dictionary learning as a symmetric matrix factorization problem, the authors derive a fully local Hebbian/anti‑Hebbian learning scheme that retains the functional advantages of the OF model while eliminating its biological implausibility. This work offers a compelling algorithmic account of how cortical circuits might perform online, unsupervised feature extraction using only local synaptic information, and opens avenues for extending the framework to deeper hierarchies and other sensory modalities.
