National research impact indicators from Mendeley readers

National research impact indicators derived from citation counts are used by governments to help assess their national research performance and to identify the effect of funding or policy changes. Citation counts lag research by several years, however, and so their information is somewhat out of date. Some of this lag can be avoided by using readership counts from the social reference sharing site Mendeley because these accumulate more quickly than citations. This article introduces a method to calculate national research impact indicators from Mendeley, using citation counts from older time periods to partially compensate for international biases in Mendeley readership. A refinement to accommodate recent national changes in Mendeley uptake makes little difference, despite being theoretically more accurate. The Mendeley patterns produced by these methods broadly reflect the results from similar calculations with citations and seem to reflect impact trends about a year earlier. Nevertheless, the reasons for the differences between the indicators from the two data sources are unclear.

💡 Research Summary

The paper addresses a well‑known limitation of citation‑based national research impact indicators: the substantial time lag between publication and the accumulation of citations, which can be several years. Because governments and funding agencies rely on these indicators to evaluate the performance of national research systems and to assess the effects of policy or funding changes, the lag reduces the timeliness of the information they receive. The authors propose to complement, and in some cases replace, citation counts with readership counts from the academic reference‑sharing platform Mendeley. Mendeley counts the users who have added a paper to their libraries, and these “readers” typically accumulate much sooner after publication than formal citations do. Consequently, Mendeley readership can provide an early signal of scholarly attention and, by extension, potential impact.

However, raw Mendeley counts are not directly comparable across countries. The platform’s adoption varies dramatically by nation, discipline, and user demographic, leading to systematic biases. To correct for these biases the authors develop a two‑step normalization procedure. In the first step they use historical citation data (e.g., citations from five years prior) to estimate a country‑ and field‑specific correction factor. This factor captures the typical ratio between citations and Mendeley reads for each nation‑discipline pair, allowing the authors to scale current readership numbers so that they are on the same “impact” footing as citations. The second step introduces a temporal adjustment that accounts for recent changes in Mendeley uptake. By measuring the growth rate of Mendeley users in each country over the last one to two years, the model applies a modest weight that prevents a sudden surge in readership from inflating a country’s impact score. Empirically, the authors find that the second adjustment makes only a marginal difference, suggesting that the first, citation‑based correction already captures most of the systematic variance.
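The first, citation‑based correction step can be illustrated with a minimal sketch. It is not the authors' exact formula, which this summary does not give. It assumes that a country's impact is its mean count per paper divided by the world mean, and that the correction factor is the ratio of citation‑based to reader‑based relative impact in an older period. The function names and toy data are hypothetical, and the second (uptake growth) adjustment is omitted.

```python
from statistics import mean

def relative_impact(counts_by_country):
    """Mean count per paper for each country, divided by the world mean.

    Assumes every country has at least one paper and the world mean is non-zero.
    """
    all_counts = [c for counts in counts_by_country.values() for c in counts]
    world_mean = mean(all_counts)
    return {country: mean(counts) / world_mean
            for country, counts in counts_by_country.items()}

def correction_factors(old_citations, old_readers):
    """Assumed correction: citation-based over reader-based relative impact
    for the same older set of papers. Values above 1 mean the country is
    under-represented among Mendeley readers relative to its citations."""
    cit = relative_impact(old_citations)
    rd = relative_impact(old_readers)
    return {country: cit[country] / rd[country] for country in cit}

def adjusted_reader_impact(recent_readers, factors):
    """Scale recent reader-based relative impact by the older-period factors."""
    rd = relative_impact(recent_readers)
    return {country: rd[country] * factors[country] for country in rd}

# Toy data (hypothetical): per-paper counts for two countries.
old_citations = {"A": [10, 10], "B": [10, 10]}   # equal citation impact
old_readers = {"A": [20, 20], "B": [10, 10]}     # country A uses Mendeley more
recent_readers = {"A": [40, 40], "B": [20, 20]}  # same usage bias persists

factors = correction_factors(old_citations, old_readers)
adjusted = adjusted_reader_impact(recent_readers, factors)
```

In this toy case country A has twice as many readers per paper only because of heavier Mendeley use, so the correction brings both countries back to the same adjusted score.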

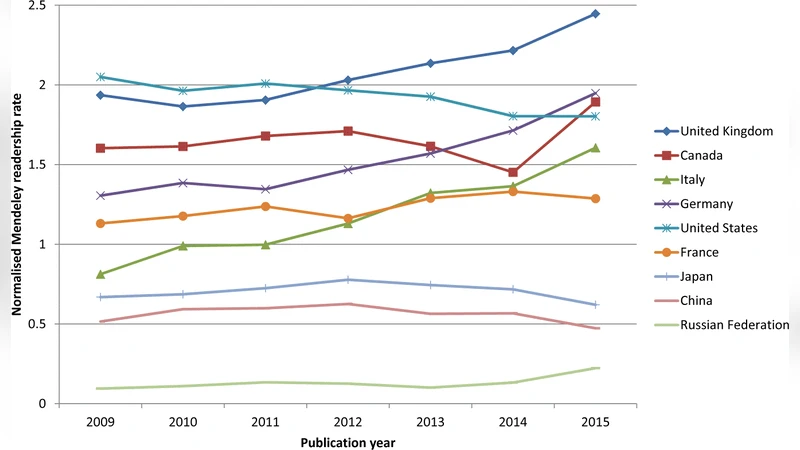

The empirical analysis draws on a large dataset of papers published between 2000 and 2018, encompassing several hundred thousand records with both citation counts (from Web of Science or Scopus) and Mendeley readership numbers. The authors compute, for each country, a “Mendeley‑adjusted impact” score and compare it with the traditional citation‑based impact score. The rankings are broadly similar: the United States, United Kingdom, Germany, and other established research powers retain top positions under both metrics. Notably, some emerging economies, particularly China and India, rank somewhat higher on the Mendeley‑based indicator than on the citation‑based one. This divergence may reflect rapid growth in Mendeley adoption among researchers in those countries, increased international collaboration, or a shift toward publishing in venues whose articles spread more quickly on social platforms.

A key finding is that the Mendeley‑derived indicator appears to anticipate citation‑based trends by roughly one year. For example, a surge in citation impact observed in 2015 is already visible in the Mendeley‑adjusted scores for 2014. This “early warning” property could be valuable for policymakers who need to react to changing research dynamics more promptly than citation data would allow.
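One way to check such a lead is to shift the yearly reader‑based series against the citation‑based series and see which shift gives the best match. The sketch below uses a plain Pearson correlation for this. That choice, the function names, and the toy numbers are all assumptions for illustration and are not taken from the paper.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation of two equal-length sequences (non-zero variance assumed)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / sqrt(vx * vy)

def best_lead(reader_series, citation_series, max_lead=3):
    """Correlate reader scores in year t with citation scores in year t + lead.

    Returns the lead (in years) with the highest correlation and all scores.
    """
    scores = {}
    for lead in range(max_lead + 1):
        x = reader_series[:len(reader_series) - lead]
        y = citation_series[lead:]
        scores[lead] = pearson(x, y)
    return max(scores, key=scores.get), scores

# Toy yearly national scores (hypothetical): the citation series repeats
# the reader series one year later.
readers = [1.0, 1.1, 1.3, 1.2, 1.5, 1.4, 1.6]
citations = [0.9, 1.0, 1.1, 1.3, 1.2, 1.5, 1.4]

lead, scores = best_lead(readers, citations)
```

With real data the series are short and noisy, so a best lead of one year found this way would be suggestive rather than conclusive.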

The paper also discusses limitations. First, the relationship between a Mendeley read and genuine scholarly influence is not fully understood; a saved article may never be cited, and conversely, many influential works receive few Mendeley reads. Second, the demographic composition of Mendeley users (students, early‑career researchers, senior scholars) evolves over time, potentially altering the meaning of readership counts. Third, the correction model assumes that the historical citation‑read ratio remains stable, an assumption that may break down for rapidly evolving fields or for countries undergoing major shifts in research policy.

In conclusion, the study demonstrates that Mendeley readership can serve as a timely, complementary indicator of national research impact. By anchoring readership counts to historical citation patterns, the authors produce a metric that broadly mirrors traditional impact rankings while offering a lead‑time advantage of about a year, although the reasons for the remaining differences between the two data sources are unclear. The methodology is transparent, reproducible, and can be extended to other altmetric sources such as ResearchGate or Altmetric.com. Future work could explore finer‑grained field‑level normalizations, incorporate user‑profile information to refine bias corrections, and investigate the causal pathways linking early readership to eventual citation impact. Such extensions would strengthen the robustness of altmetric‑based impact assessments and broaden the toolkit available to governments, funding agencies, and research institutions for evidence‑based decision‑making.