Authorship Attribution through Function Word Adjacency Networks

A method for authorship attribution based on function word adjacency networks (WANs) is introduced. Function words are parts of speech that express grammatical relationships between other words but do not carry lexical meaning on their own. In the WANs in this paper, nodes are function words and directed edges represent the likelihood of finding the sink word in the ordered vicinity of the source word. WANs of different authors can be interpreted as transition probabilities of a Markov chain and are therefore compared in terms of their relative entropies. Optimal selection of WAN parameters is studied and attribution accuracy is benchmarked across a diverse pool of authors and varying text lengths. This analysis shows that, since function words are independent of content, their use tends to be specific to an author and that the relational data captured by function WANs is a good summary of stylometric fingerprints. Attribution accuracy is observed to exceed that achieved by methods that rely on word frequencies alone. Combining WANs with such frequency-based methods increases attribution accuracy further, indicating that the two sources of information encode different aspects of authorial style.

💡 Research Summary

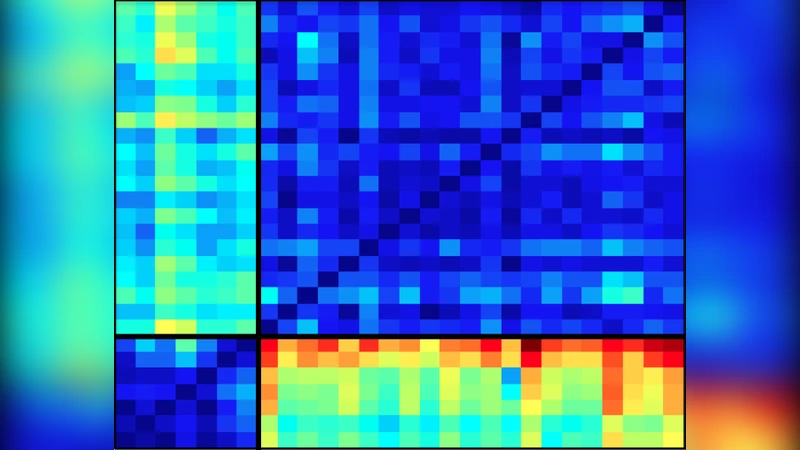

The paper introduces a novel authorship attribution method that builds on the relational structure of function words rather than their mere frequencies. Function words—such as prepositions, conjunctions, determiners, and modals—are extracted from each sentence of a text. For any ordered pair of function words (f_i, f_j) that appear within a fixed window of D positions (the authors use D = 10), a directed proximity weight α^{d‑1} is assigned, where d is the distance between the words and α (≈ 0.8) is a discount factor that reduces the influence of more distant pairs. Summing these weighted proximities over all sentences yields a raw co‑occurrence matrix Q_t for each text t.
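The construction of the raw matrix Q_t can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function-word list `FUNCTION_WORDS` is a tiny hypothetical sample, tokenization is a plain whitespace split, and the distance d is assumed to be counted in word positions within the sentence (content words included).

```python
from collections import defaultdict

# Hypothetical, deliberately tiny function-word list (an assumption for illustration).
FUNCTION_WORDS = {"the", "of", "and", "to", "in", "a", "that", "on", "with", "by"}


def wan_counts(sentences, function_words=FUNCTION_WORDS, D=10, alpha=0.8):
    """Return the raw weighted co-occurrence matrix Q_t as a dict keyed by (source, sink)."""
    Q = defaultdict(float)
    for sentence in sentences:
        # Naive tokenization; a real pipeline would also strip punctuation.
        words = sentence.lower().split()
        for i, src in enumerate(words):
            if src not in function_words:
                continue
            # Look ahead at most D positions, staying inside the sentence.
            for d in range(1, D + 1):
                j = i + d
                if j >= len(words):
                    break
                if words[j] in function_words:
                    # Discounted proximity weight alpha^(d-1).
                    Q[(src, words[j])] += alpha ** (d - 1)
    return dict(Q)


if __name__ == "__main__":
    for pair, weight in sorted(wan_counts(["the cat sat on the mat"]).items()):
        print(pair, round(weight, 3))
```

Storing Q_t as a sparse dictionary keeps the sketch short; a dense array indexed by function-word id would be equally valid.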

Because Q_t scales with text length, each row is normalized by its row sum, producing a stochastic matrix Q̂_t whose rows sum to one. This matrix can be interpreted as the transition matrix of a discrete‑time Markov chain (MC) P_t that models the probability of encountering a particular function word after another. For each author a_c, the authors aggregate the Q_t matrices of all known texts written by that author, normalize the aggregate, and obtain an author‑specific MC P_c that serves as a stylometric fingerprint.
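Row normalization and per-author aggregation might look like the sketch below. It assumes each raw matrix is a dictionary mapping (source, sink) pairs to positive weights; the names `normalize_rows` and `author_chain` are hypothetical and do not come from the paper.

```python
def normalize_rows(Q):
    """Divide every entry by the sum of its row so that each row sums to one."""
    row_sums = {}
    for (src, _), weight in Q.items():
        row_sums[src] = row_sums.get(src, 0.0) + weight
    return {(src, dst): weight / row_sums[src] for (src, dst), weight in Q.items()}


def author_chain(text_matrices):
    """Sum the raw matrices of an author's known texts, then normalize the aggregate."""
    total = {}
    for Q in text_matrices:
        for key, weight in Q.items():
            total[key] = total.get(key, 0.0) + weight
    return normalize_rows(total)


if __name__ == "__main__":
    Q1 = {("the", "of"): 1.0, ("the", "and"): 3.0}
    Q2 = {("the", "of"): 2.0}
    print(normalize_rows(Q1))
    print(author_chain([Q1, Q2]))
```

Summing raw matrices before normalizing, rather than averaging already-normalized ones, lets longer texts contribute proportionally more to the author profile, which matches the aggregate-then-normalize order described above.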

To compare two MCs, the authors employ the relative entropy (Kullback‑Leibler divergence) H(P₁, P₂) = ∑_{i,j} π_i P₁(i,j) log [P₁(i,j) / P₂(i,j)], where π is the stationary distribution of P₁.
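For reference, the relative entropy between two Markov chains is conventionally the stationary-weighted sum of π_i P₁(i,j) log(P₁(i,j) / P₂(i,j)). The sketch below computes it for dense transition matrices given as lists of rows. Two choices here are assumptions rather than details taken from the summary: the stationary distribution is estimated by power iteration (which presumes an ergodic chain), and terms where either transition probability is zero are skipped to keep the sum finite.

```python
import math


def stationary(P, iters=1000):
    """Estimate the stationary distribution of P by power iteration (assumes ergodicity)."""
    n = len(P)
    pi = [1.0 / n] * n
    for _ in range(iters):
        pi = [sum(pi[i] * P[i][j] for i in range(n)) for j in range(n)]
    return pi


def relative_entropy(P1, P2):
    """Relative entropy H(P1, P2) between two chains on the same state space."""
    pi = stationary(P1)
    n = len(P1)
    h = 0.0
    for i in range(n):
        for j in range(n):
            # Assumption: skip transitions missing from either chain.
            if P1[i][j] > 0 and P2[i][j] > 0:
                h += pi[i] * P1[i][j] * math.log(P1[i][j] / P2[i][j])
    return h


if __name__ == "__main__":
    P1 = [[0.9, 0.1], [0.5, 0.5]]
    P2 = [[0.5, 0.5], [0.5, 0.5]]
    print(relative_entropy(P1, P1))
    print(relative_entropy(P1, P2))
```

The measure is not symmetric, so H(P₁, P₂) and H(P₂, P₁) generally differ; which chain plays the role of P₁ is a modelling decision.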
