Solvers for $\mathcal{O}(N)$ Electronic Structure in the Strong Scaling Limit

We present a hybrid OpenMP/Charm++ framework for solving the $\mathcal{O}(N)$ Self-Consistent-Field eigenvalue problem with parallelism in the strong scaling regime, $P \gg N$, where $P$ is the number of cores and $N$ is a measure of system size, e.g., the number of matrix rows/columns, basis functions, atoms, or molecules. This result is achieved with a nested approach to Spectral Projection and the Sparse Approximate Matrix Multiply [Bock and Challacombe, SIAM J. Sci. Comput. 35, C72, 2013], and involves a recursive, task-parallel algorithm, often employed by generalized $N$-Body solvers, applied to the occlusion and culling of negligible products in the case of matrices with decay. Employing classic techniques associated with generalized $N$-Body solvers, including over-decomposition, recursive task parallelism, orderings that preserve locality, and persistence-based load balancing, we obtain scaling beyond hundreds of cores per molecule for small water clusters ([H${}_2$O]${}_N$, $N \in \{30, 90, 150\}$, $P/N \approx \{819, 273, 164\}$) and find evidence of increasingly strong scalability with increasing system size $N$.
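
Spectral Projection obtains the density matrix from the Fock (or Hamiltonian) matrix through a sequence of matrix polynomials instead of diagonalization, so matrix multiplication becomes the dominant kernel. The abstract does not name the exact recursion; the sketch below assumes the second-order spectral projection (SP2) scheme of Niklasson, uses dense pure-Python matrices, and scales the spectrum with Gershgorin bounds. The function names (`sp2_density`, `gershgorin_bounds`) are hypothetical, and in the framework described here each `X·X` product would instead be a SpAMM call.

```python
def matmul(A, B):
    """Dense product of two square list-of-lists matrices."""
    n = len(A)
    return [[sum(A[i][p] * B[p][j] for p in range(n)) for j in range(n)] for i in range(n)]

def trace(A):
    return sum(A[i][i] for i in range(len(A)))

def gershgorin_bounds(H):
    """Cheap lower/upper bounds on the spectrum of a symmetric matrix H."""
    n = len(H)
    radii = [sum(abs(H[i][j]) for j in range(n) if j != i) for i in range(n)]
    return (min(H[i][i] - radii[i] for i in range(n)),
            max(H[i][i] + radii[i] for i in range(n)))

def sp2_density(H, n_occ, tol=1e-10, max_iter=100):
    """Projector onto the n_occ lowest eigenvectors of H (hypothetical SP2 sketch)."""
    n = len(H)
    e_min, e_max = gershgorin_bounds(H)
    # Map the spectrum linearly onto [0, 1], with the lowest energies closest to 1.
    X = [[((e_max if i == j else 0.0) - H[i][j]) / (e_max - e_min) for j in range(n)]
         for i in range(n)]
    for _ in range(max_iter):
        X2 = matmul(X, X)  # the only expensive step; SpAMM would be used here
        tr_x, tr_x2 = trace(X), trace(X2)
        if tr_x > n_occ:
            X = X2  # x -> x^2 lowers the trace
        else:
            # x -> 2x - x^2 raises the trace
            X = [[2.0 * X[i][j] - X2[i][j] for j in range(n)] for i in range(n)]
        # tr(X) - tr(X^2) = sum of lambda * (1 - lambda), which vanishes once X is idempotent.
        if tr_x - tr_x2 < tol:
            break
    return X
```

For example, `sp2_density([[0.0, 1.0], [1.0, 0.0]], 1)` returns `[[0.5, -0.5], [-0.5, 0.5]]`, the projector onto the eigenvector with eigenvalue -1.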

💡 Research Summary

The paper introduces a hybrid OpenMP/Charm++ framework designed to solve the self‑consistent‑field (SCF) eigenvalue problem with linear‑scaling (O(N)) complexity in the strong‑scaling regime, where the number of processing cores far exceeds the problem size. Traditional SCF solvers scale as O(N³) and quickly become prohibitive for systems larger than a few thousand atoms. Recent O(N) methods exploit the locality of electronic interactions, but most implementations have only demonstrated weak‑scaling performance (constant P/N). The authors address the remaining challenges—communication overhead, load imbalance, and integration of multiple quantum‑chemical solvers—by combining three key ideas.

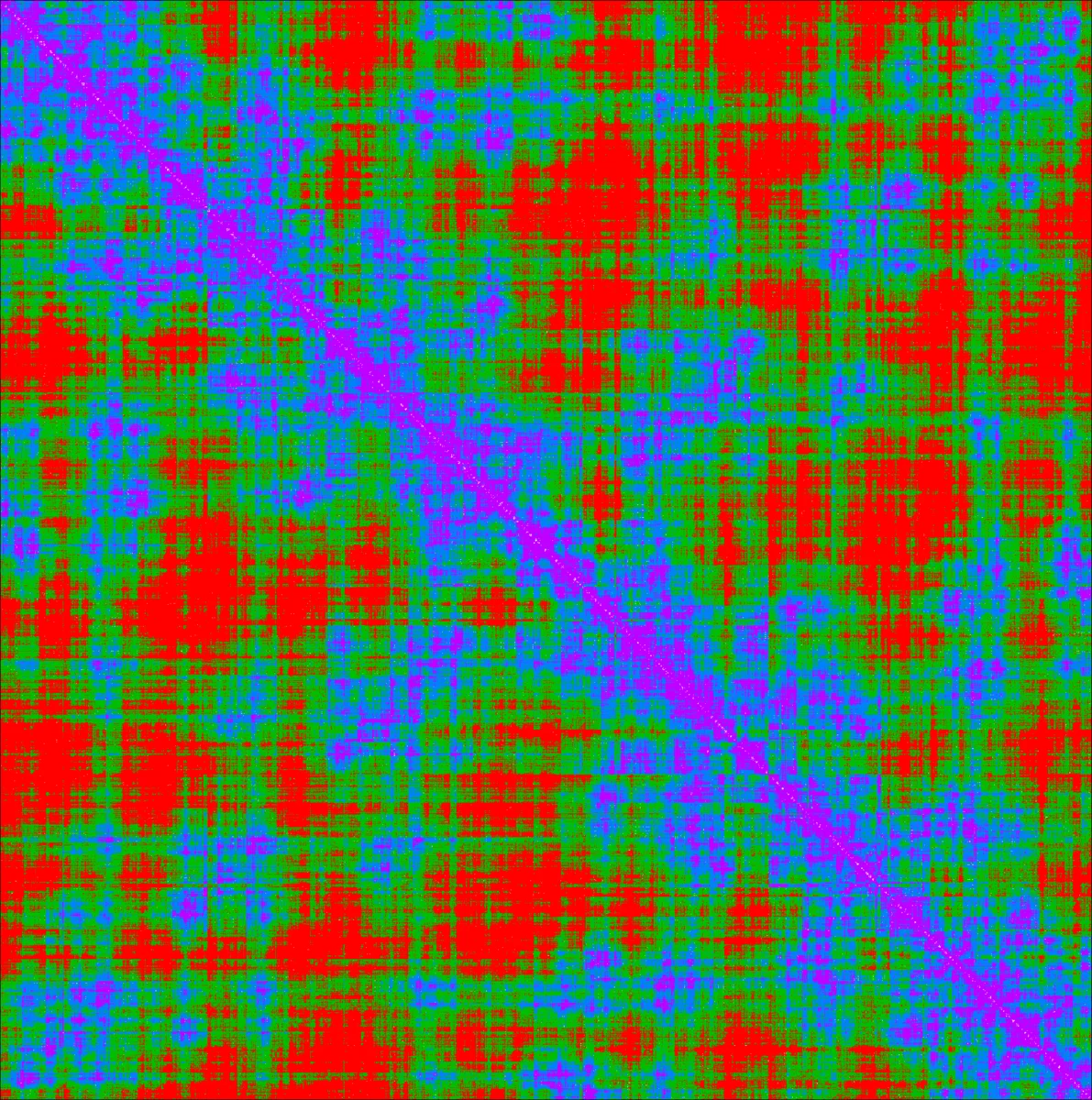

First, they employ nested Spectral Projection together with the Sparse Approximate Matrix Multiply (SpAMM). SpAMM targets matrices whose elements decay exponentially or algebraically with distance. Each matrix is represented as a quadtree in which every node stores the Frobenius norm of its sub‑block. During multiplication, the product of two sub‑blocks is skipped (occluded) if the product of their norms falls below a user‑defined threshold τ; by the sub‑multiplicative inequality ‖AB‖ ≤ ‖A‖‖B‖, the norm of every skipped product is then itself below τ. This early culling reduces the effective work to O(N log N) while keeping the error controllable through τ.
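
The norm-based culling can be illustrated with a small recursive multiply. This is a minimal sketch under simplifying assumptions: square matrices whose dimension is a power-of-two multiple of a leaf size `NB` (set to 2 purely for illustration), a dense output matrix instead of an output quadtree, and no parallelism. `Node`, `build`, `spamm`, and `multiply` are hypothetical names, not the authors' code.

```python
import math

NB = 2  # hypothetical leaf block size; the summary quotes Nb of roughly 4-8

class Node:
    """Quadtree node storing the Frobenius norm of its sub-block."""
    def __init__(self, norm, children=None, block=None):
        self.norm = norm
        self.children = children  # 2x2 nested list of Node-or-None (interior nodes)
        self.block = block        # dense NB x NB list-of-lists (leaf nodes)

def build(M, r0, c0, n):
    """Quadtree over the n x n sub-block of M at (r0, c0); zero blocks become None."""
    if n <= NB:
        block = [row[c0:c0 + n] for row in M[r0:r0 + n]]
        norm = math.sqrt(sum(x * x for row in block for x in row))
        return Node(norm, block=block) if norm > 0.0 else None
    h = n // 2
    kids = [[build(M, r0 + i * h, c0 + j * h, h) for j in range(2)] for i in range(2)]
    norm = math.sqrt(sum(k.norm ** 2 for row in kids for k in row if k is not None))
    return Node(norm, children=kids) if norm > 0.0 else None

def spamm(a, b, C, r0, c0, n, tau):
    """Accumulate a*b into dense C at (r0, c0); return the number of leaf products computed."""
    if a is None or b is None or a.norm * b.norm < tau:
        return 0  # occluded: ||ab||_F <= ||a||_F * ||b||_F < tau
    if a.block is not None:  # both trees have equal depth, so b is a leaf too
        for i in range(n):
            for j in range(n):
                C[r0 + i][c0 + j] += sum(a.block[i][p] * b.block[p][j] for p in range(n))
        return 1
    h = n // 2
    count = 0
    for i in range(2):
        for j in range(2):
            for k in range(2):  # C_ij += A_ik * B_kj
                count += spamm(a.children[i][k], b.children[k][j],
                               C, r0 + i * h, c0 + j * h, h, tau)
    return count

def multiply(A, B, tau):
    n = len(A)
    C = [[0.0] * n for _ in range(n)]
    count = spamm(build(A, 0, 0, n), build(B, 0, 0, n), C, 0, 0, n, tau)
    return C, count
```

Because each occluded term satisfies ‖ab‖_F ≤ ‖a‖_F‖b‖_F < τ, the Frobenius error of the result is bounded by τ times the number of occluded terms, while the returned count shows how many leaf products were actually computed.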

Second, the authors map the three‑dimensional convolution space (indices of A‑blocks, B‑blocks, and the resulting C‑blocks) onto a large number of lightweight tasks. Over‑decomposing the work into far more tasks than cores enables fine‑grained dynamic scheduling. The recursive structure of the algorithm mirrors N‑Body methods: tasks are generated on the fly as the quadtree is traversed. OpenMP 3.0 tasking handles intra‑node parallelism, while Charm++ manages inter‑node distribution and load balancing. A persistence‑based load balancer reuses the task execution history from previous SCF iterations, which keeps rebalancing inexpensive as long as the sparsity pattern remains similar across iterations.
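
Persistence-based balancing rests on the assumption that a task's cost in the next SCF iteration is close to its measured cost in the previous one. The sketch below substitutes a generic greedy heuristic (longest task first, always onto the least-loaded core) for whatever strategy the Charm++ runtime actually applies; `persistence_balance` and its inputs are hypothetical.

```python
import heapq

def persistence_balance(task_times, num_procs):
    """Assign tasks to cores using costs measured in the previous iteration.

    task_times: dict task_id -> measured seconds from the last SCF iteration.
    Returns (assignment: task_id -> core, per-core predicted loads)."""
    heap = [(0.0, p) for p in range(num_procs)]  # (current load, core id)
    heapq.heapify(heap)
    assignment = {}
    # Longest-processing-time first: place expensive tasks before cheap ones.
    for task, t in sorted(task_times.items(), key=lambda kv: kv[1], reverse=True):
        load, p = heapq.heappop(heap)  # least-loaded core so far
        assignment[task] = p
        heapq.heappush(heap, (load + t, p))
    loads = [0.0] * num_procs
    for task, p in assignment.items():
        loads[p] += task_times[task]
    return assignment, loads
```

Over-decomposition is what makes this work: with many more tasks than cores, the final spread between the most and least loaded core is at most the cost of a single task, which shrinks as tasks become finer.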

Third, memory layout is optimized for modern cache hierarchies. Sub‑trees are stored in contiguous chunks of size Nc × Nc, and leaf blocks of size Nb × Nb are chosen to fit L1/L2 caches (Nb≈4–8). This improves data locality, reduces cache miss penalties, and facilitates vectorization without hand‑written assembly. The authors deliberately avoid aggressive low‑level tuning to retain portability across CPUs, Xeon Phi, and GPUs (via source‑to‑source translation).
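
One common way to make every sub-tree occupy a contiguous chunk is to store blocks in Z-order (Morton order), which interleaves the bits of a block's row and column indices. The summary does not specify the authors' ordering, so this is an assumed illustration; `morton_index`, `z_order_layout`, and `subtree_range` are hypothetical helpers, and `nblocks` is assumed to be a power of two.

```python
def morton_index(row, col, bits):
    """Interleave bits: col bits at even positions, row bits at odd positions."""
    idx = 0
    for b in range(bits):
        idx |= ((col >> b) & 1) << (2 * b)
        idx |= ((row >> b) & 1) << (2 * b + 1)
    return idx

def z_order_layout(nblocks):
    """Storage order of the (row, col) blocks of an nblocks x nblocks grid."""
    bits = nblocks.bit_length() - 1  # nblocks assumed to be a power of two
    cells = [(r, c) for r in range(nblocks) for c in range(nblocks)]
    return sorted(cells, key=lambda rc: morton_index(rc[0], rc[1], bits))

def subtree_range(r0, c0, size, bits):
    """Half-open index range of the size x size sub-tree with top-left block (r0, c0).

    Assumes size is a power of two and r0, c0 are multiples of size."""
    start = morton_index(r0, c0, bits)
    return start, start + size * size
```

With this layout, an aligned sub-tree of `size` × `size` blocks maps to one contiguous index range, which lets a recursive traversal stream through memory instead of jumping across rows.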

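The implementation is evaluated on small water clusters, [H₂O]_N with N ∈ {30, 90, 150} (P/N ≈ 819, 273, and 164, respectively), where it scales beyond hundreds of cores per molecule; the results suggest that strong scalability improves with increasing system size.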
