A Critical Review of Recurrent Neural Networks for Sequence Learning

Countless learning tasks require dealing with sequential data. Image captioning, speech synthesis, and music generation all require that a model produce outputs that are sequences. In other domains, such as time series prediction, video analysis, and musical information retrieval, a model must learn from inputs that are sequences. Interactive tasks, such as translating natural language, engaging in dialogue, and controlling a robot, often demand both capabilities. Recurrent neural networks (RNNs) are connectionist models that capture the dynamics of sequences via cycles in the network of nodes. Unlike standard feedforward neural networks, recurrent networks retain a state that can represent information from an arbitrarily long context window. Although recurrent neural networks have traditionally been difficult to train, and often contain millions of parameters, recent advances in network architectures, optimization techniques, and parallel computation have enabled successful large-scale learning with them. In recent years, systems based on long short-term memory (LSTM) and bidirectional (BRNN) architectures have demonstrated ground-breaking performance on tasks as varied as image captioning, language translation, and handwriting recognition. In this survey, we review and synthesize the research that over the past three decades first yielded and then made practical these powerful learning models. When appropriate, we reconcile conflicting notation and nomenclature. Our goal is to provide a self-contained explication of the state of the art together with a historical perspective and references to primary research.

💡 Research Summary

This paper provides a comprehensive survey of recurrent neural networks (RNNs) for sequence learning, tracing three decades of research from early theoretical foundations to modern large‑scale applications. It begins by motivating the need for explicit sequence modeling, arguing that feed‑forward architectures and traditional classifiers such as SVMs treat each example as independent and therefore cannot capture long‑range dependencies required in dialogue systems, autonomous robots, or any task where context spans many time steps. The authors contrast RNNs with Markov models, highlighting that hidden Markov models (HMMs) suffer from a discrete, limited state space and quadratic (or worse) computational complexity as the number of states grows, making them impractical for modeling long‑range structure.
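To make the quadratic cost concrete, here is a minimal sketch of the standard forward algorithm for a discrete HMM in plain Python. The function name `hmm_forward` and the toy probabilities are assumptions chosen for illustration; they do not come from the paper.

```python
def hmm_forward(init, trans, emit, observations):
    """Return P(observations) under a discrete HMM via the forward algorithm.

    init[i]     : probability of starting in state i
    trans[i][j] : probability of moving from state i to state j
    emit[i][o]  : probability of emitting symbol o from state i
    """
    n_states = len(init)
    # alpha[j] = P(observations so far, current state = j)
    alpha = [init[j] * emit[j][observations[0]] for j in range(n_states)]
    for obs in observations[1:]:
        # Each of the n_states new entries sums over n_states predecessors,
        # so one time step costs n_states ** 2 multiply-adds.
        alpha = [
            emit[j][obs] * sum(alpha[i] * trans[i][j] for i in range(n_states))
            for j in range(n_states)
        ]
    return sum(alpha)


# Toy two-state, two-symbol model (made-up numbers, for illustration only).
init = [0.6, 0.4]
trans = [[0.7, 0.3], [0.4, 0.6]]
emit = [[0.9, 0.1], [0.2, 0.8]]
likelihood = hmm_forward(init, trans, emit, [0, 1, 0])
```

The inner sum over predecessor states is where the quadratic term arises: doubling the number of states roughly quadruples the work per time step, which is why enlarging the state space to remember more context quickly becomes expensive.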

The survey then discusses the expressive power of RNNs. It cites the classic result that a finite-size RNN with sigmoidal activations can simulate a universal Turing machine, so in principle it can compute anything a conventional program can. The paper stresses, however, that this theoretical universality does not guarantee practical learnability. What makes RNNs usable in practice is that, unlike arbitrary programs, they are differentiable end to end, which permits gradient-based optimization, and that regularization techniques (weight decay, dropout, early stopping) can mitigate over-fitting.

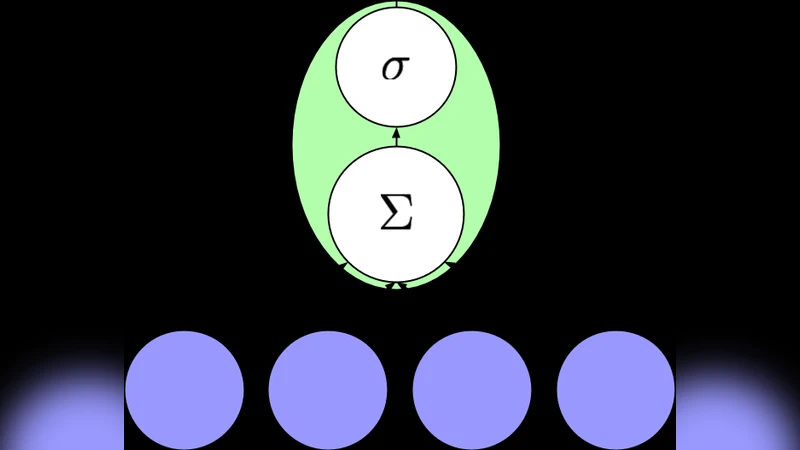

Training challenges are examined in depth. The authors explain the vanishing and exploding gradient problems that plagued early RNNs and describe how Long Short-Term Memory (LSTM) units and Gated Recurrent Units (GRUs) address them with gates that control information flow across time steps. Bidirectional RNNs (BRNNs) are presented as a complementary idea: rather than stabilizing gradients, they let each output depend on both past and future context. The authors detail back-propagation through time (BPTT), its memory and computational costs, and practical remedies such as gradient clipping, truncation, and mini-batch processing. The impact of modern hardware (GPUs, TPUs, and parallel computation frameworks) is highlighted as a key factor that now enables training of models with millions of parameters on massive datasets.
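The vanishing and exploding gradient behaviour and the clipping remedy described above can be sketched with a one-unit recurrent network. This is a toy illustration under stated assumptions, not the paper's code: the helper names `scalar_rnn_gradient` and `clip_by_global_norm`, the scalar recurrence, and the all-zero inputs are all chosen here for simplicity.

```python
import math


def scalar_rnn_gradient(w, inputs, h0=0.0):
    """Return d h_T / d h_0 for the recurrence h_t = tanh(w * h_{t-1} + x_t).

    By the chain rule, the gradient is a product of per-step factors
    w * (1 - h_t ** 2), which is what BPTT multiplies together.
    """
    h = h0
    grad = 1.0
    for x in inputs:
        h = math.tanh(w * h + x)
        grad *= w * (1.0 - h * h)
    return grad


def clip_by_global_norm(grads, max_norm):
    """Rescale a list of gradient values so their L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]


steps = [0.0] * 50  # zero inputs keep h at 0, so each factor is exactly w
vanishing = scalar_rnn_gradient(0.5, steps)  # 0.5 ** 50, about 8.9e-16
exploding = scalar_rnn_gradient(2.0, steps)  # 2.0 ** 50, about 1.1e15

clipped = clip_by_global_norm([3.0, 4.0], max_norm=1.0)  # norm 5 scaled to 1
```

With a recurrent weight below 1 in magnitude the product shrinks geometrically, and above 1 it grows geometrically. Clipping bounds the size of an update but keeps its direction, so it helps with explosion and does nothing for vanishing gradients, which is the gap that gated units are meant to fill.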

A significant portion of the paper is devoted to notation and terminology inconsistencies across the literature. The authors propose a unified scheme: time indices are denoted with parenthesized superscripts (t), neuron indices with subscripts (j), activation functions with ℓ_j, and weighted sums with a_j. They clarify the often‑confused direction of weight matrices (w_{j←i} versus w_{i→j}) and stress the importance of explicit edge‑time annotations in graphical models. This effort aims to improve reproducibility and reduce the cognitive load for newcomers.
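As a rough illustration of how these conventions combine, the update of one hidden node in a simple recurrent layer might be written as follows. The symbols $x$, $h$, and $b$ and the superscripts $hx$ and $hh$ on the weights are assumptions introduced for this sketch; only the parenthesized time index, the node subscript, $a_j$, $\ell_j$, and the arrow-style weight subscript are taken from the description above.

```latex
a_j^{(t)} = \sum_{i} w^{hx}_{j \leftarrow i}\, x_i^{(t)}
          + \sum_{k} w^{hh}_{j \leftarrow k}\, h_k^{(t-1)} + b_j,
\qquad
h_j^{(t)} = \ell_j\!\left(a_j^{(t)}\right)
```

Here $w_{j \leftarrow i}$ is read as the weight on the edge from node $i$ into node $j$, and the $(t-1)$ superscript on the recurrent term makes explicit that the edge spans one time step.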

The survey also reviews seminal works: early cognitive-science papers (Hopfield 1982, Jordan 1986, Elman 1990) that emphasized biological plausibility; engineering-focused studies (Schuster & Paliwal 1997, Karpathy & Fei-Fei 2014) that prioritized empirical performance; and more recent comprehensive resources such as the book by Graves (2012) on supervised sequence labeling and the doctoral thesis of Gers (2001). It also notes specialized surveys on language modeling (De Mulder et al. 2015) and on gradient computation in continuous-time RNNs (Pearlmutter 1995).

Finally, the authors outline future research directions. They call for memory-efficient architectures capable of capturing very long dependencies without prohibitive computational cost, suggesting hybrid models that combine RNNs with attention-based Transformers. Model compression techniques (pruning, quantization, and knowledge distillation) are identified as essential for deploying RNNs on edge devices. The paper encourages exploration of non-temporal sequences (graphs, trees) and multimodal sequence learning, as well as tighter integration with reinforcement learning for interactive robotics and autonomous driving.

In summary, this review synthesizes theoretical insights, architectural innovations, training methodologies, and practical considerations, offering a self‑contained reference that clarifies the state of the art in recurrent neural networks for sequence learning and points toward promising avenues for future investigation.
