Across neighbourhood search for numerical optimization

Population-based search algorithms (PBSAs), including swarm intelligence algorithms (SIAs) and evolutionary algorithms (EAs), are competitive alternatives for solving complex optimization problems and have been widely applied to real-world problems in many fields. In this study, a novel population-based across neighbourhood search (ANS) is proposed for numerical optimization. ANS is motivated by two straightforward assumptions and three important issues that arise in designing and improving efficient PBSAs. In ANS, a group of individuals collaboratively searches the solution space for an optimal solution to the problem under consideration. A collection of superior solutions found by individuals so far is maintained and updated dynamically. At each generation, an individual directly searches across the neighbourhoods of multiple superior solutions with the guidance of a Gaussian distribution. This search strategy is referred to as across neighbourhood search. The characteristics of ANS are discussed and conceptual comparisons with other PBSAs are given. The principle behind ANS is simple. Moreover, ANS is easy to implement and apply, with only three parameters to tune. Extensive experiments on 18 benchmark optimization functions of different types show that ANS has well-balanced exploration and exploitation capabilities and performs competitively compared with many efficient PBSAs (the Matlab code used in the experiments is available from http://guohuawunudt.gotoip2.com/publications.html).

💡 Research Summary

The paper introduces a novel population‑based optimization method called Across Neighbourhood Search (ANS). ANS is built on two intuitive assumptions: (1) individuals can improve their search efficiency by exploiting a dynamically maintained set of superior solutions discovered so far, and (2) searching simultaneously around multiple superior solutions reduces the risk of premature convergence to local optima. These assumptions lead to a simple yet powerful mechanism that differentiates ANS from traditional swarm intelligence and evolutionary algorithms.

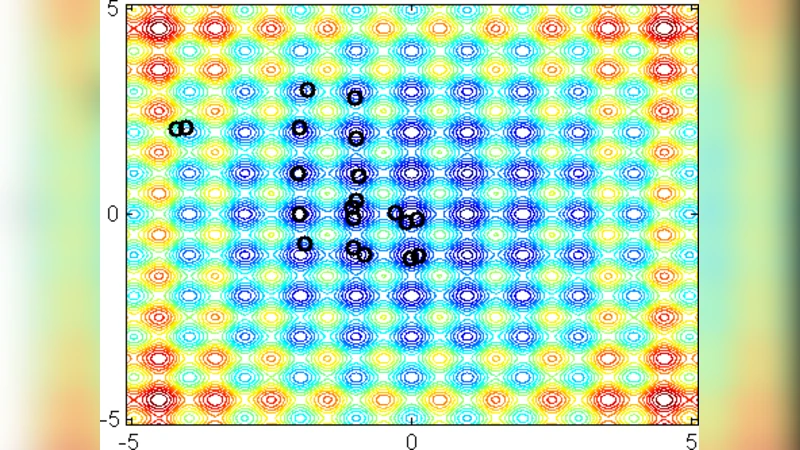

In ANS, a “superior solution set” of size K is kept and updated each generation. Whenever a newly generated candidate outperforms the worst member of this set, it replaces that member, ensuring that the set always contains the best solutions found so far. Each individual then performs a Gaussian‑guided move not toward a single leader but across the neighbourhoods of all members of the superior set. Formally, for individual i and superior solution j, the new position is $x_i^{\text{new}} = x_j + \sigma \cdot N(0,1)$, where $\sigma$ is a standard‑deviation parameter and $N(0,1)$ denotes a standard normal random variable. This “across‑neighbourhood” operation enables a broad exploration of the search space while still biasing the search toward promising regions.
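The mechanism described above can be sketched in a few lines of Python. This is a minimal illustration of the simplified update rule as stated in this summary, not the authors' Matlab implementation. The function name `ans_sketch`, the uniform random choice of the superior solution j, the clipping to the bounds, and all default parameter values are assumptions made for the example.

```python
import random


def sphere(x):
    """Illustrative objective: f(x) = sum of squares, minimum 0 at the origin."""
    return sum(v * v for v in x)


def ans_sketch(objective, dim, bounds, pop_size=20, k=5, sigma=0.3,
               generations=200, seed=0):
    """Simplified across-neighbourhood search (minimization).

    pop_size, k and sigma play the roles of N, K and sigma in the text.
    """
    rng = random.Random(seed)
    lo, hi = bounds
    # Initial population, sampled uniformly inside the bounds.
    population = [[rng.uniform(lo, hi) for _ in range(dim)]
                  for _ in range(pop_size)]
    # Superior set: the k best (fitness, position) pairs seen so far.
    scored = [(objective(x), x) for x in population]
    superior = sorted(scored, key=lambda p: p[0])[:k]
    history = [superior[0][0]]

    for _ in range(generations):
        for i in range(pop_size):
            # Assumption: superior solution j is picked uniformly at random.
            _, x_j = rng.choice(superior)
            # x_new = x_j + sigma * N(0, 1), per dimension, clipped to bounds.
            candidate = [min(hi, max(lo, v + sigma * rng.gauss(0.0, 1.0)))
                         for v in x_j]
            population[i] = candidate
            f_new = objective(candidate)
            # Replace the worst member of the superior set if the candidate beats it.
            worst = max(range(len(superior)), key=lambda idx: superior[idx][0])
            if f_new < superior[worst][0]:
                superior[worst] = (f_new, candidate)
        history.append(min(f for f, _ in superior))

    best_f, best_x = min(superior, key=lambda p: p[0])
    return best_f, best_x, history


if __name__ == "__main__":
    best_f, best_x, _ = ans_sketch(sphere, dim=5, bounds=(-5.0, 5.0))
    print("best value found:", best_f)
```

Because a candidate only ever replaces the worst member of the superior set, the best value in that set cannot get worse from one generation to the next. The choice of `sigma` controls how far candidates land from the superior solution they are sampled around.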

Three design issues are explicitly addressed: (i) the trade‑off between exploration range and exploitation intensity, (ii) the frequency of information exchange among individuals, and (iii) the simplicity of parameterisation. ANS resolves (i) by adjusting σ, (ii) by controlling the size K of the superior set and the population size N, and (iii) by requiring only three parameters (N, σ, K). Consequently, the algorithm is easy to implement and tune compared with many existing PBSA variants that often involve multiple mutation, crossover, and learning‑rate parameters.

The authors evaluate ANS on 18 benchmark functions covering unimodal, multimodal, separable, non‑separable, and high‑dimensional cases (dimensions ranging from 10 to 30). Competing algorithms include representative population‑based methods (PSO, ABC, DE, FA) and several evolutionary strategies. All algorithms are run under identical stopping criteria (10,000 function evaluations) and repeated 30 times to obtain statistically reliable results. Performance metrics comprise mean best‑found value, standard deviation, and success rate.
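The protocol above (a fixed evaluation budget, 30 independent runs, then mean, standard deviation, and success rate) could be scripted roughly as follows. This is a sketch under stated assumptions: `random_search` is only a placeholder optimizer, and the success tolerance `success_tol` is a hypothetical threshold, since the summary does not say how success is defined.

```python
import random
import statistics


def sphere(x):
    """Illustrative objective with known minimum 0 at the origin."""
    return sum(v * v for v in x)


def random_search(objective, dim, bounds, max_evals, rng):
    """Placeholder optimizer; any algorithm with this signature can be swapped in."""
    lo, hi = bounds
    best = float("inf")
    for _ in range(max_evals):
        x = [rng.uniform(lo, hi) for _ in range(dim)]
        best = min(best, objective(x))
    return best


def summarize_runs(optimizer, objective, dim, bounds, max_evals=10_000,
                   runs=30, success_tol=1e-2, base_seed=0):
    """Run the optimizer `runs` times with different seeds and summarize the results."""
    results = []
    for r in range(runs):
        rng = random.Random(base_seed + r)  # one independent seed per run
        results.append(optimizer(objective, dim, bounds, max_evals, rng))
    return {
        "mean": statistics.mean(results),
        "std": statistics.stdev(results),  # sample standard deviation
        # Assumption: a run succeeds if its best value is within success_tol of 0.
        "success_rate": sum(v <= success_tol for v in results) / runs,
        "results": results,
    }


if __name__ == "__main__":
    summary = summarize_runs(random_search, sphere, dim=10, bounds=(-5.0, 5.0))
    print(summary["mean"], summary["std"], summary["success_rate"])
```

Giving every algorithm the same seeds and the same `max_evals` keeps the comparison fair in the sense described above.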

Experimental results show that ANS consistently achieves lower mean errors and tighter deviations than the competitors. Its advantage is especially pronounced on multimodal landscapes where the ability to sample across several elite basins prevents stagnation in sub‑optimal valleys. Convergence curves illustrate a rapid early‑stage exploration followed by a stable exploitation phase, confirming the claimed balance. Non‑parametric Wilcoxon rank‑sum tests reveal that ANS’s superiority is statistically significant in the majority of cases.
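For readers unfamiliar with the Wilcoxon rank‑sum test mentioned above, the following sketch shows how two sets of per‑run results could be compared. It uses the normal approximation with average ranks for ties and no tie correction in the variance. The function name `rank_sum_test` and the sample data are illustrative only and are not taken from the paper.

```python
import math


def rank_sum_test(a, b):
    """Two-sided Wilcoxon rank-sum test (normal approximation).

    Returns (z, p). A negative z means the values in `a` tend to be smaller.
    """
    combined = sorted([(v, 0) for v in a] + [(v, 1) for v in b],
                      key=lambda p: p[0])
    ranks = [0.0] * len(combined)
    i = 0
    while i < len(combined):
        # Find the block of tied values starting at position i.
        j = i
        while j + 1 < len(combined) and combined[j + 1][0] == combined[i][0]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for t in range(i, j + 1):
            ranks[t] = avg_rank
        i = j + 1

    n1, n2 = len(a), len(b)
    w = sum(r for r, (_, group) in zip(ranks, combined) if group == 0)
    mean_w = n1 * (n1 + n2 + 1) / 2
    sd_w = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - mean_w) / sd_w
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value
    return z, p


if __name__ == "__main__":
    # Hypothetical final errors from two algorithms over 10 runs each.
    errors_a = [0.01 * k for k in range(1, 11)]
    errors_b = [0.01 * k for k in range(11, 21)]
    z, p = rank_sum_test(errors_a, errors_b)
    print(f"z = {z:.3f}, p = {p:.5f}")
```

In practice a library routine such as `scipy.stats.ranksums` would normally be used instead; the hand‑written version is shown only to make the computation explicit.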

The paper also discusses limitations. Because the movement operator relies on a Gaussian distribution, ANS is best suited for continuous search spaces; discrete or highly rugged landscapes may require alternative probability models. Future work suggested includes integrating other probability distributions, hybridising ANS with local search or constraint‑handling techniques, and applying the framework to real‑world problems such as engineering design, scheduling, and dynamic control.

In summary, Across Neighbourhood Search offers a conceptually simple yet effective alternative to existing population‑based optimisers. By maintaining a dynamic pool of elite solutions and allowing each individual to “search across” their neighbourhoods with a Gaussian bias, ANS achieves a well‑balanced exploration‑exploitation trade‑off, requires minimal parameter tuning, and demonstrates competitive performance across a broad suite of benchmark problems. This makes it a promising candidate for both academic study and practical deployment in complex numerical optimisation tasks.