ParallelPC: an R package for efficient constraint based causal exploration

Discovering causal relationships from data is the ultimate goal of many research areas. Constraint based causal exploration algorithms, such as PC, FCI, RFCI, PC-simple, IDA and Joint-IDA have achieved significant progress and have many applications.…

Authors: Thuc Duy Le, Tao Hoang, Jiuyong Li

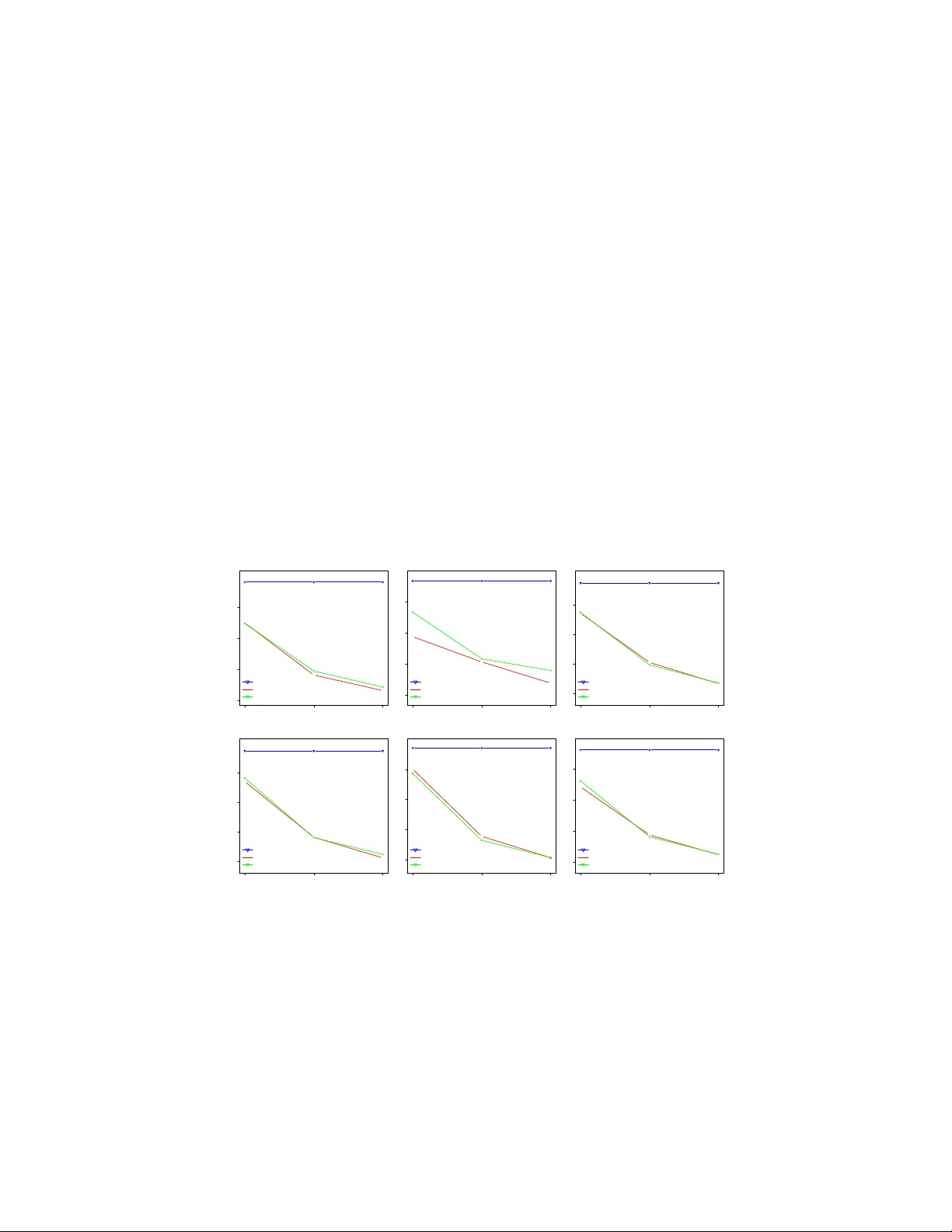

Journal of Machine Learning Research 1 (2000) 1-48 Submitted 4/00; Published 10/00 P arallelPC: an R pac k age for efficien t constrain t based causal exploration Th uc D. Le Thuc.Le@unisa.edu.au T ao Hoang hoa tn002@mymail.unisa.edu.au Jiuy ong Li Jiuyong.Li@unisa.edu.au Lin Liu Lin.Liu@unisa.edu.au Sh u Hu huysy011@mymail.unisa.edu.au Scho ol of Information T e chnolo gy and Mathematic al Scienc es University of South Austr alia Mawson L akes, SA, Austr alia Editor: Abstract Disco vering causal relationships from data is the ultimate goal of many researc h areas. Constrain t based causal exploration algorithms, such as PC, FCI, RF CI, PC-simple, IDA and Join t-ID A ha ve ac hieved significan t progress and hav e many applications. A common problem with these methods is the high computational complexit y , whic h hinders their applications in real w orld high dimensional datasets, e.g gene expression datasets. In this paper, we presen t an R pack age, Par al lelPC , that includes the parallelised versions of these causal exploration algorithms. The parallelised algorithms help sp eed up the pro cedure of exp erimen ting big datasets and reduce the memory used when running the algorithms. The pac k age is not only suitable for sup er-computers or clusters, but also con venien t for researc hers using p ersonal computers with multi core CPUs. Our experiment results on real w orld datasets sho w that using the parallelised algorithms it is now practical to explore causal relationships in high dimensional datasets with thousands of v ariables in a single m ulticore computer. Par al lelPC is av ailable in CRAN repository at h ttps://cran.r- pro ject.org/web/pac k ages/P arallelPC/index.html. Keyw ords: Causalit y disco very , Ba yesian netw orks, Parallel computing, Constrain t- based metho ds. 1. In tro duction Inferring causal relationships b etw een v ariables is an ultimate goal of man y researc h ar- eas, e.g. in v estigating the causes of cancer, finding the factors affecting life exp ectancy . Therefore, it is imp ortant to develop to ols for causal exploration from real world datasets. One of the most adv anced theories with widespread recognition in discov ering causality is the Causal Bay esian Netw ork (CBN), Pearl (2009). In this framework, causal relation- ships are represen ted with a Directed Acyclic Graph (DA G). There are t wo main approaches for learning the D AG from data: the search and score approach, and the constraint based approac h. While the search and score approach raises an NP-hard problem, the complexity of the constraint based approac h is exp onential to the n um b er of v ariables. Constrain t based approach for causality discov ery has b een adv anced in the last decade and has b een c 2000 Marina Meil˘ a and Michael I. Jordan. Thuc D. Le et al. sho wn to b e useful in some real world applications. The approach includes causal structure learning metho ds, e.g. PC (Spirtes et al. (2000)), FCI and RFCI (Colombo et al. (2012)), causal inference metho ds, e.g. ID A (Maathuis et al. (2009)) and Joint-ID A (Nandy et al. (2014)), and lo cal causal structure learning such as PC-Simple ( B ¨ uhlmann et al. (2010)). Ho wev er, the high computational complexity has hindered the applications of causal dis- co very approac hes to high dimensional datasets, e.g. gene expression datasets where the n umber of genes (v ariables) is large and the n umber of samples is normally small. In Le et al. (2015a), w e presented a metho d that is based on parallel computing tec hnique to sp eed up the PC algorithm. Here in the Par al lelPC pack age, we parallelise a family of causal structure learning and causal inference metho ds, including PC, FCI, RF CI, PC- simple, IDA, and Joint-ID A. W e also collate 12 differen t conditional independence (CI) tests that can b e used in these algorithms. The algorithms in this pack age return the same results as those in the pcal g pac k age, Kalisch et al. (2012), but the runtime is m uch low er dep ending on the n um b er of cores CPU sp ecified by users. Our exp erimen t results sho w that with the Par al lelPC pac k age it is no w practical to apply those metho ds to high dimensional datasets in a mo dern p ersonal computer. 2. Con train t based algorithms and their parallelised v ersions ● ● ● 6 11 16 21 BR51 Number of cores Runtime (mins) 65.05 18.43 10.08 7.6 4 8 12 18.35 10.72 8.18 ● Sequential−PC Parallel−PC Parallel−PC−mem ● ● ● 20 26 32 38 BR51 Number of cores Runtime (mins) 83.28 31.28 26.42 22.35 4 8 12 36 27.02 24.78 ● Sequential−FCI Parallel−FCI Parallel−FCI−mem ● ● ● 7 11 15 19 BR51 Number of cores Runtime (mins) 63.48 17.89 11.18 8.3 4 8 12 17.99 10.87 8.37 ● Sequential−RFCI Parallel−RFCI Parallel−RFCI−mem ● ● ● 11 15 19 23 BR51 Number of cores Runtime (mins) 100.4 21.83 14.25 11.43 4 8 12 22.3 14.2 11.9 ● Sequential−IDA Parallel−ID A Parallel−ID A−mem ● ● ● 12 19 26 33 adultAll Number of cores Runtime (mins) 99.47 33.06 17.45 12.47 4 8 12 32.09 16.55 12.49 ● Sequential−PCSelect Parallel−PCSelect Parallel−PCSelect−mem ● ● ● 7 12 17 22 BR51 Number of cores Runtime (mins) 75.1 19.05 11.36 8.19 4 8 12 20.02 11.07 8.26 ● Sequential−jointIDA Parallel−jointID A Parallel−jointID A−mem Figure 1: Run time of the sequen tial and parallelised versions (with and without the memory efficien t option) of PC, FCI, RF CI, ID A, PC-simple, and Joint-ID A W e paralellised the following causal discov ery and inference algorithms. • PC, Spirtes et al. (2000). The PC algorithm is the state of the art metho d in constraint based approach for learning causal structures from data. It has t wo main steps. In the first step, it learns from data a skeleton graph, whic h contains only undirected 2 P arallelPC edges. In the second step, it orien ts the undirected edges to form an equiv alence class of D AGs. In the skeleton learning step, the PC algorithm starts with the fully connected net work and uses the CI tests to decide if an edge is remov ed or retained. The stable v ersion of the PC algorithm (the Stable-PC algorithm, Colombo and Maath uis (2014)) up dates the graph at the end of each level (the size of the conditioning set) of the algorithm rather than after eac h CI test. Stable-PC limits the problem of the PC algorithm, whic h is dep enden t on the order of the CI tests. It is not possible to parallelise the Stable-PC algorithm globally , as the CI tests across different lev els in the Stable-PC algorithm are dep endent to one another. In Le et al. (2015a), w e prop osed the P arallel-PC algorithm whic h parallelised the CI tests inside each lev el of the Stable-PC algorithm. The Parallel-PC algorithm is more efficient and returns the same results as that of the Stable-PC algorithm (see Figure 1). • F CI, Colom b o et al. (2012). FCI is designed for learning the causal structure that takes laten t v ariables in to consideration. In real world datasets, there are often unmeasured v ariables and they will affect the lean t causal structure. F CI w as implemen ted in the pcal g pac k age and it uses PC algorithm as the first step. The sk eleton of the causal structure learnt by PC algorithm will b e refined b y p erforming more CI test. Therefore, FCI is not efficient for large datasets. • RF CI, Colombo et al. (2012). RFCI is an improv emen t of the FCI algorithm to sp eed up the running time when the underlying graph is sparse. Ho wev er, our exp eriment results show that it is still impractical for high dimensional datasets. • PC-simple, B ¨ uhlmann et al. (2010). PC-simple is a lo cal causal disco very algorithm to search for parents and children of the target v ariable. In dense datasets where the target v ariable has large n umber of causes and effects, the algorithm is not efficien t. W e utilise the idea of taking order-indep endent approac h (Le et al. (2015a)) on the lo cal structure learning problem to parallelise the PC-simple algorithm. • ID A , Maath uis et al. (2009). ID A is a causal inference metho d whic h infers the causal effect that a v ariable has on another v ariable. It firstly learns the causal structure from data, and then based on the learn t causal structure, it estimates the causal effect b et ween a cause no de and an effect no de by adjusting the effects of the parents of the cause. Learning the causal structure is time consuming. Therefore, IDA will b e efficient when the causal structure learning step is impro ved. Figure 1 sho ws that our parallelised version of the IDA impro ves the efficiency of the IDA algorithm significan tly . • Join t-IDA, Nandy et al. (2014). Joint-ID A estimates the effect of the target v ari- able when jointly in tervening a group of v ariables. Similar to IDA, Join t-IDA learns the causal structure from data and the effects of in tervening m ultiple v ariables are estimated. T o illustrate the effectiv eness of the parallelised algorithms, we apply the sequen tial and parallelised versions of PC, F CI, RF CI, ID A and Joint-ID A algorithms to a breast 3 Thuc D. Le et al. cancer gene expression dataset. The dataset includes 50 expression samples with 92 mi- croRNAs (a class of gene regulators) and 1500 messenger RNAs that w as used to infer the relationships b et ween miRNAs and mRNAs downloaded from Le et al. (2015b). As PC-simple (PC-Select) is efficien t in small datasets, w e use the Adult dataset from UCI Mac hine Learning Repository with 48842 samples. W e use the binary discretised version from Li et al. (2015) and select 100 binary v ariables for the experiment with PC-simple. W e run all the exp eriments on a Linux serv er with 2.7 GB memory and 2.6 Ghz p er core CPU. As shown in Figure 1, the parallelised versions of the algorithms are muc h more efficient than the sequen tial v ersions as exp ected, while they are still generating the same results. The parallelised algorithms also detect the free memory of the running computer to estimate the num b er of CI tests that will b e distributed ev enly to the cores. This step is to ensure that each core of the computer will not hold off a big amount of memory while waiting for the synchronisation step. The memory-effcient pro cedure may consume a little bit more time compared to the original parallel version. Ho w ever, this option is recommended for computers with limited memory resources or for big datasets. 3. Conditional indep endence tests for constrain t based metho ds It is shown that different CI tests may lead to different results for a particular constraint based algorithm, and a CI test ma y b e suitable for a certain t yp e of datasets. In this pac k age, w e collate 12 CI tests in the pcal g (Kalisch et al. (2012)) and bnl ear n (Scutari (2009)) pack ages to provide options for function calls of the constraint-based metho ds. These CI tests can b e used separately for the purp ose of testing (conditional) dep endency b et ween v ariables. They can also b e used within the constraint based algorithms (b oth sequen tial and parallelised algorithms) in the Par al lelPC pac k age. The following codes sho w an example of running the FCI algorithm using the sequen tial v ersion in the pcal g pac k age, the parallelised versions with or without the memory efficient option, and using a differen t CI test (m utual information) rather than the Gaussian CI test. ## Using the FCI-stable algorithm in the pcalg package library(pcalg) data("gmG") p<-ncol(gmG$x) suffStat<-list(C=cor(gmG$x),n=nrow(gmG$x)) fci_stable(suffStat, indepTest=gaussCItest, p=p, skel.method="stable", alpha=0.01) ## Using fci_parallel without the memory efficient option fci_parallel(suffStat, indepTest=gaussCItest, p=p, skel.method="parallel", alpha=0.01, num.cores=2) ## Using fci_parallel with the memory efficient option fci_parallel(suffStat, indepTest=gaussCItest, p=p, skel.method="parallel", alpha=0.01, num.cores=2, mem.efficient=TRUE) ## Using fci_parallel with mutual information test fci_parallel(gmG$x, indepTest=mig, p=p, skel.method="parallel", alpha=0.01, num.cores=2, mem.efficient=TRUE) 4 P arallelPC Ac kno wledgments This work has b een supp orted by Australian Research Council Disco very Pro ject DP140103617. References P eter B ¨ uhlmann, Markus Kalisch, and Marloes H Maath uis. V ariable selection in high- dimensional linear mo dels: partially faithful distributions and the PC-simple algorithm. Biometrika , 97(2):261–278, 2010. Diego Colom b o and Marloes H Maath uis. Order-indep endent constraint-based causal struc- ture learning. The Journal of Machine L e arning R ese ar ch , 15(1):3741–3782, 2014. Diego Colombo, Marlo es H Maathuis, Markus Kalisch, Thomas S Richardson, et al. Learn- ing high-dimensional directed acyclic graphs with laten t and selection v ariables. The A nnals of Statistics , 40(1):294–321, 2012. Markus Kalisch, Martin M¨ ac hler, Diego Colombo, Marloes H Maathu is, and Peter B ¨ uhlmann. Causal inference using graphical mo dels with the R pack age p calg. Jour- nal of Statistic al Softwar e , 47(11):1–26, 2012. Th uc Duy Le, T ao Hoang, Jiuyong Li, Lin Liu, and Huaw en Liu. A fast PC algorithm for high dimensional causal disco v ery with m ulti-core PCs. arXiv pr eprint arXiv:1502.02454 , 2015a. Th uc Duy Le, Lin Liu, Junp eng Zhang, Bing Liu, and Jiuyong Li. F rom miRNA regulation to miRNA–TF co-regulation: computational approaches and c hallenges. Briefings in bioinformatics , 16(3):475–496, 2015b. Jiuy ong Li, Thuc Duy Le, Lin Liu, Jixue Liu, Zhou Jin, Bingyu Sun, and Saisai Ma. F rom observ ational studies to causal rule mining. arXiv pr eprint arXiv:1508.03819 , 2015. Marlo es H Maathuis, Markus Kalisch, Peter B ¨ uhlmann, et al. Estimating high-dimensional in terven tion effects from observ ational data. The Annals of Statistics , 37(6A):3133–3164, 2009. Preetam Nandy , Marlo es H Maath uis, and Thomas S Richardson. Estimating the effect of joint in terven tions from observ ational data in sparse high-dimensional settings. arXiv pr eprint arXiv:1407.2451 , 2014. Judea Pearl. Causality . Cambridge univ ersity press, 2009. Marco Scutari. Learning bay esian netw orks with the bnlearn R pack age. arXiv pr eprint arXiv:0908.3817 , 2009. P eter Spirtes, Clark N Glymour, and Richard Scheines. Causation, pr e diction, and se ar ch , v olume 81. MIT press, 2000. 5

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment