Integration of physical equipment and simulators for on-campus and online delivery of practical networking labs

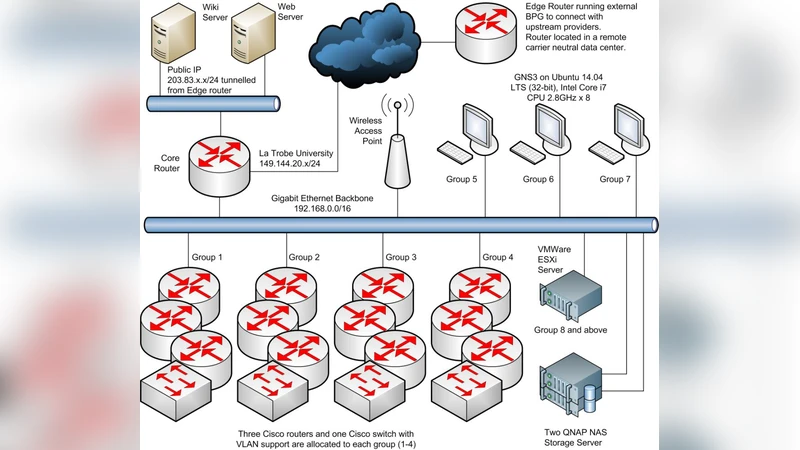

This paper presents the design and development of a networking laboratory that integrates physical networking equipment with the open source GNS3 network simulators for delivery of practical networking classes simultaneously to both on-campus and online students. This transformation work has resulted in significant increase in laboratory capacity, reducing repeating classes. The integrated platform offers students the real world experience of using real equipment, and the convenience of easy setup and reconfiguration by using simulators. A practical exercise in setting up an OSPF/BGP network is presented as an example to illustrate the experimental design before and after the integration of GNS3 simulators. In summary, we report our experiences with the integrated platform, from infrastructure, network design to experiment design; and the learning and teaching experiences of using GNS3 in classes.

💡 Research Summary

The paper describes the design, implementation, and evaluation of a hybrid networking laboratory that seamlessly blends physical networking hardware with the open‑source GNS3 network simulator to serve both on‑campus and remote learners simultaneously. The authors begin by outlining the challenges faced by traditional networking labs: limited physical equipment, time‑consuming re‑cabling for each class, and the sudden need to accommodate large numbers of online students during the pandemic. While prior work either relied exclusively on virtual simulators or on physical gear, each approach suffered from either a lack of realism or from scalability constraints.

To address these issues, the research team adopted a “minimum‑physical, maximum‑virtual” strategy. They first audited their existing lab, discovering that roughly half of the devices used in a typical OSPF/BGP lab were redundant from a pedagogical standpoint. Consequently, they retained a core set of Cisco 2900 series routers and Catalyst switches for hands‑on interaction, and replaced the remaining devices with GNS3 virtual routers running IOS‑XE or VIOS‑L2 images. The physical devices were connected to a dedicated Ubuntu 22.04 server via Ethernet; this server hosted Docker‑based GNS3 containers orchestrated by Kubernetes. Each virtual router ran as a QEMU/KVM instance, bridged to the physical NIC so that it appeared on the same Layer‑2 segment as the real hardware.

The infrastructure was automated with Ansible playbooks that provisioned router images, configured interfaces, and applied NTP and SNMP settings. A “terraform‑like” topology script defined the network layout (nodes, links, IP schemes) and instantiated the entire lab with a single command. For user access, the authors integrated the lab into their Learning Management System (LMS) via a web portal that authenticates students and launches a WebSocket‑based VNC session. This session provides both the GNS3 graphical topology editor and a terminal window for CLI interaction. Additionally, a Jupyter‑Lab environment was offered, allowing students to write Python scripts that use Netmiko or NAPALM to automate configuration tasks, thereby linking theory, practice, and automation.

The paper’s central case study is a combined OSPF/BGP lab. In the legacy setup, eight physical routers and four switches were manually wired, requiring roughly 45 minutes of preparation per class and making it impossible to repeat the lab without significant downtime. In the hybrid setup, four physical routers and four virtual routers were interlinked, and the entire topology could be deployed in under five minutes. After each session, a GNS3 snapshot restored the lab to a pristine state, eliminating the need for manual re‑cabling. Quantitatively, the lab’s throughput increased by a factor of 3.2, enabling three times as many concurrent student groups. Performance metrics showed that latency and packet loss remained comparable to the all‑physical lab for the scale used, while CPU utilization on the host server peaked at 70 % during peak routing calculations.

Pedagogically, the authors report that students appreciated the “real‑world feel” of handling physical routers while benefiting from the rapid reset and visual feedback that GNS3 provides. Survey data indicated an 8 % improvement in average lab grades for online participants and a 92 % satisfaction rate overall. However, the study also identified limitations: virtual routers experienced noticeable CPU‑bound slowdown when routing tables exceeded several thousand entries, and mismatched MTU settings between physical and virtual interfaces occasionally caused packet fragmentation. The initial capital outlay for high‑performance servers, redundant power supplies, and storage was non‑trivial, and ongoing maintenance (image updates, security patches) required dedicated staff time.

In the discussion, the authors propose extending the architecture toward a full “digital twin” lab by migrating virtual routers to cloud providers (AWS, Azure) and integrating a Software‑Defined Networking (SDN) controller such as OpenDaylight. This would further reduce on‑premises hardware, enable global access, and allow experimentation with emerging protocols (e.g., Segment Routing, EVPN). They also suggest exploring container‑native network functions (CNFs) and Kubernetes‑based network service meshes to keep the lab aligned with industry trends.

In conclusion, the paper demonstrates that a thoughtfully engineered hybrid lab can dramatically increase capacity, reduce operational overhead, and enhance learning outcomes without sacrificing the tactile experience that is essential for networking education. The authors’ experience provides a practical blueprint for other institutions seeking to modernize their networking curricula in a post‑pandemic, increasingly distributed learning environment.