Quantitative evaluation of the performance of discrete-time reservoir computers in the forecasting, filtering, and reconstruction of stochastic stationary signals

This paper extends the notion of information-processing capacity to non-independent input signals in the context of reservoir computing (RC). Input autocorrelation makes it worthwhile to treat forecasting and filtering problems, for which we explicitly compute this generalized capacity as a function of the reservoir parameter values using a streamlined model. We use the reservoir model underlying these developments to show that, whenever that approximation is valid, this computational paradigm satisfies the so-called separation and fading memory properties, which are usually associated with good information-processing performance. We also show that several standard memory, forecasting, and filtering problems that arise in the context of parametric stochastic time series can be readily formulated and tackled via RC, which in some instances significantly outperforms standard techniques.

💡 Research Summary

This paper addresses a fundamental limitation of existing reservoir computing (RC) theory: the standard notion of information-processing capacity assumes independent inputs, which excludes most realistic time series, since these exhibit autocorrelation. The authors extend the capacity concept to strictly stationary stochastic signals, introducing higher-order automoments to capture the full statistical structure of the input. In this way they obtain closed-form expressions for the generalized capacity of a discrete-time RC applied to three canonical tasks: forecasting (including reconstruction of past values), filtering, and multitask reconstruction. Each task is defined through an (f, h)-lag functional relationship between the input and the teaching signal.

The RC architecture considered is a non‑autonomous dynamical system x(t)=F(x(t‑1),I(t),θ) with an input mask c that maps a scalar input z(t) into an N‑dimensional forcing I(t)=c z(t). A detailed example is the time‑delay reservoir (TDR) obtained by Euler discretisation of a delay differential equation; the resulting update equations (2.6)–(2.7) reveal an exponential decay factor e^{‑ξ} that couples each neuron to its predecessor and to the current masked input.
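
To make the TDR update concrete, here is a minimal sketch. It assumes the form commonly used for Euler-discretised TDRs, x_i(t) = e^{-ξ} x_{i-1}(t) + (1 − e^{-ξ}) f(x_i(t−1), I_i(t)), with the first neuron coupled to the last neuron of the previous step, and a Mackey–Glass-type nonlinearity f. The kernel, its default parameters (`eta`, `gamma`, `p`), and the helper names are illustrative assumptions; the paper's equations (2.6)–(2.7) may differ in detail.

```python
import numpy as np


def mackey_glass(x, inp, eta=0.4, gamma=0.05, p=2):
    """Assumed Mackey-Glass-type kernel f(x, I) = eta*(x + gamma*I) / (1 + (x + gamma*I)**p)."""
    s = x + gamma * inp
    return eta * s / (1.0 + s ** p)


def tdr_step(x_prev, z_t, mask, xi, **theta):
    """One discrete-time TDR update: x(t) from x(t-1) and the scalar input z(t).

    x_prev : previous state x(t-1), shape (N,)
    mask   : input mask c, shape (N,), so that I(t) = c * z(t)
    xi     : decay parameter; e^{-xi} weights the coupling to the preceding neuron
    theta  : keyword parameters forwarded to the (assumed) nonlinearity
    """
    decay = np.exp(-xi)
    masked_input = mask * z_t                                   # I(t) = c z(t)
    drive = (1.0 - decay) * mackey_glass(x_prev, masked_input, **theta)
    x = np.empty_like(x_prev)
    x[0] = decay * x_prev[-1] + drive[0]                        # first neuron: last neuron at t-1
    for i in range(1, len(x)):
        x[i] = decay * x[i - 1] + drive[i]                      # others: predecessor at time t
    return x


def run_tdr(z, mask, xi, **theta):
    """Drive the reservoir with the scalar sequence z; returns states of shape (T, N)."""
    x = np.zeros(len(mask))
    states = np.empty((len(z), len(mask)))
    for t, z_t in enumerate(z):
        x = tdr_step(x, z_t, mask, xi, **theta)
        states[t] = x
    return states


rng = np.random.default_rng(0)
mask = rng.choice([-1.0, 1.0], size=20)      # hypothetical binary input mask c
z = rng.standard_normal(500)                 # placeholder stationary input
X = run_tdr(z, mask, xi=0.2)                 # X[t] is the reservoir state x(t)
```

An even exponent `p` keeps the denominator at least 1, so the sketch stays well defined for inputs of either sign.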

Training proceeds via a linear readout ŷ(t)=Wᵀx(t)+a, optimized by ridge regression with regularisation λ. Because the input is strictly stationary (and the reservoir state process is itself stationary), the first- and second-order moments of the reservoir state (µ_x and Γ(0)) are time-invariant, which allows the mean-square error (MSE) to be expressed analytically (eq. 2.12). The generalized capacity C(θ,c,λ) is then defined as one minus the normalized MSE, C=1−MSE/Var(y). Since the trained readout never does worse than the constant predictor ŷ=E[y], whose MSE is Var(y), this guarantees 0 ≤ C ≤ 1. Crucially, C depends explicitly on the reservoir parameters, the mask, the regularisation, and the statistical moments of the input through its automoments.
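
The sketch below shows how the capacity can be obtained from state moments alone. It assumes the textbook population ridge solution with an unpenalised intercept, W = (Γ(0) + λI)⁻¹ Cov(x, y) and a = E[y] − Wᵀµ_x. The function name `ridge_capacity` is hypothetical, and the paper's eq. (2.12) may arrange the same quantities differently.

```python
import numpy as np


def ridge_capacity(mu_x, gamma0, cov_xy, mu_y, var_y, lam):
    """Capacity C = 1 - MSE/Var(y) of the ridge readout, computed from moments.

    mu_x   : E[x(t)], shape (N,)
    gamma0 : Gamma(0) = Cov(x(t)), shape (N, N)
    cov_xy : Cov(x(t), y(t)), shape (N,)
    lam    : ridge regularisation (intercept assumed unpenalised)
    """
    n = gamma0.shape[0]
    W = np.linalg.solve(gamma0 + lam * np.eye(n), cov_xy)   # ridge weights
    a = mu_y - W @ mu_x                                       # intercept centres the readout
    mse = var_y - 2.0 * W @ cov_xy + W @ gamma0 @ W           # E[(y - W^T x - a)^2]
    return 1.0 - mse / var_y, W, a
```

Feeding empirical moments (for example `X.mean(axis=0)` and `np.cov(X, rowvar=False)` for simulated states `X`) gives a sample estimate of the same capacity.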

The authors prove that, whenever the streamlined reservoir model is a valid approximation, the RC satisfies the fading-memory property (past inputs influence the state with exponentially decaying weight) and the separation property (distinct input histories produce distinct reservoir states). These properties are usually associated with good information-processing performance.
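
Fading memory can be illustrated on a generic linear reservoir x(t) = A x(t−1) + c z(t), whose solution is x(t) = Σ_k A^k c z(t−k). The sketch assumes this linear form, a random matrix A rescaled to spectral radius 0.9, and a hypothetical ±1 mask; it is not the paper's linearised TDR. The norm of A^k c measures how strongly the input k steps back still shapes the current state.

```python
import numpy as np


def input_weights(A, c, max_lag):
    """Return ||A^k c|| for k = 0..max_lag: the weight of z(t-k) in x(t)."""
    w = np.asarray(c, dtype=float)
    norms = []
    for _ in range(max_lag + 1):
        norms.append(np.linalg.norm(w))
        w = A @ w
    return np.array(norms)


rng = np.random.default_rng(0)
N = 20
A = rng.normal(size=(N, N))
A *= 0.9 / np.max(np.abs(np.linalg.eigvals(A)))   # rescale to spectral radius 0.9
c = rng.choice([-1.0, 1.0], size=N)               # hypothetical binary input mask
norms = input_weights(A, c, 200)
```

Because the spectral radius is below 1, the weights eventually shrink geometrically (roughly like 0.9^k), which is the fading-memory behaviour described above.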

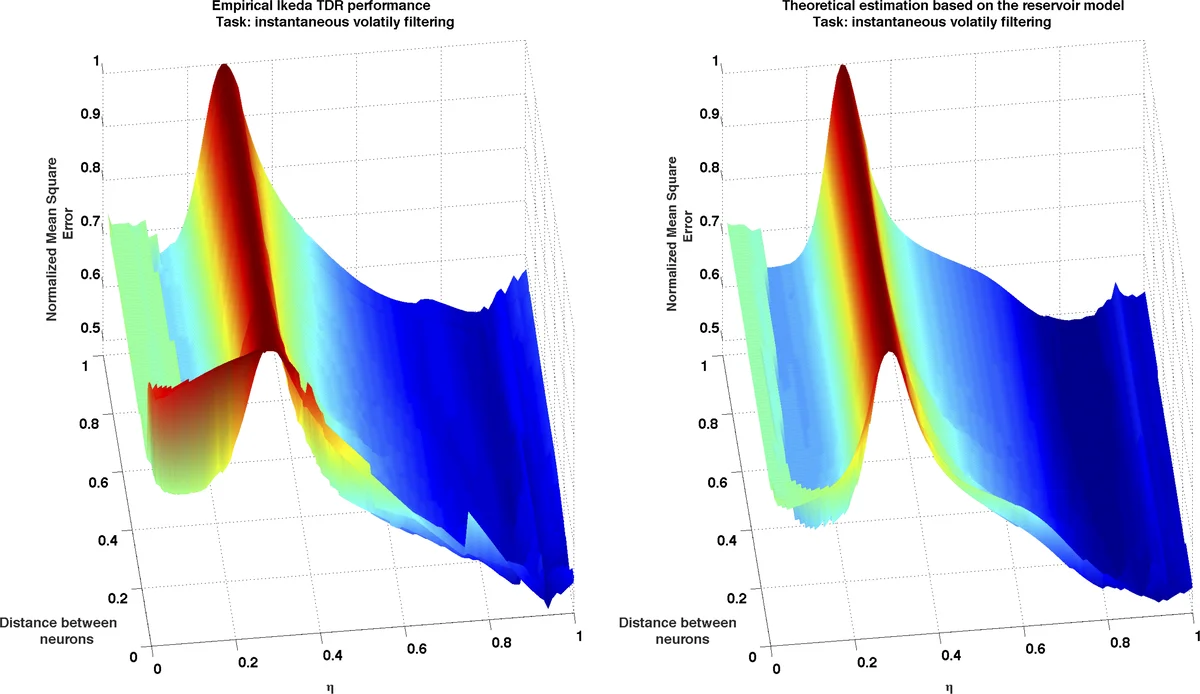

Analytical capacity formulas are derived for each task class. For forecasting/reconstruction, the target y(t) is a deterministic function H of future and past inputs; for filtering, y(t) is statistically dependent on the input but not deterministically linked; for multitasking, several such targets are learned simultaneously. In all cases the capacity can be computed from the input automoments and the linearised reservoir dynamics, avoiding costly Monte‑Carlo simulations.
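
As a small worked example of computing capacity from input moments instead of by simulation, the sketch below assumes a linear reservoir x(t) = A x(t−1) + c z(t) driven by an AR(1) input z(t) = φ z(t−1) + ε(t). The augmented state [x(t); z(t)] then satisfies a linear recursion, so its stationary covariance solves a discrete Lyapunov equation. The forecasting target is y(t) = z(t+h) with h ≥ 0, for which Cov(x(t), z(t+h)) = φ^h Cov(x(t), z(t)). The reservoir form, the AR(1) input, and the helper names are illustrative assumptions, not the paper's general automoment formulas.

```python
import numpy as np


def stationary_cov(B, Q):
    """Solve Sigma = B Sigma B^T + Q (discrete Lyapunov) by vectorisation."""
    n = B.shape[0]
    vec = np.linalg.solve(np.eye(n * n) - np.kron(B, B), Q.reshape(-1))
    return vec.reshape(n, n)


def forecast_capacity(A, c, phi, sigma2, horizon, lam=0.0):
    """Capacity of a ridge readout of x(t) for the target y(t) = z(t + horizon).

    Assumes a linear reservoir with spectral radius < 1, an AR(1) input with
    |phi| < 1 and innovation variance sigma2, and horizon >= 0.
    """
    N = A.shape[0]
    # augmented state s(t) = [x(t); z(t)] obeys s(t) = B s(t-1) + d eps(t)
    B = np.zeros((N + 1, N + 1))
    B[:N, :N] = A
    B[:N, N] = phi * c
    B[N, N] = phi
    d = np.append(c, 1.0)
    Sigma = stationary_cov(B, sigma2 * np.outer(d, d))
    gamma0 = Sigma[:N, :N]                      # Gamma(0) = Var(x(t))
    var_y = Sigma[N, N]                         # Var(z(t + h)) = Var(z(t))
    cov_xy = phi ** horizon * Sigma[:N, N]      # Cov(x(t), z(t + h))
    W = np.linalg.solve(gamma0 + lam * np.eye(N), cov_xy)
    mse = var_y - 2.0 * W @ cov_xy + W @ gamma0 @ W
    return 1.0 - mse / var_y
```

In this toy setting the capacity for horizon h equals φ^{2h} times the capacity for reconstructing z(t) itself, because the best linear forecast of z(t+h) is φ^h times the best estimate of z(t).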

Empirical validation is performed on several benchmark stationary processes: (i) an ARMA(1,1) series, where RC outperforms classical ARIMA modelling, reducing the MSE by roughly 15%; (ii) the nonlinear NARMA-10 task, where RC outperforms linear filters; (iii) a GARCH-type financial volatility series, where RC achieves 10–20% lower MSE than a Kalman filter. In each experiment the measured capacity exceeds 0.7, confirming that the theoretical predictions align with actual performance. Parallel RC architectures are also examined, showing that multitask capacity can be optimized jointly while enhancing robustness.
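
For reference, the NARMA-10 benchmark is usually stated in the RC literature as y(t+1) = 0.3 y(t) + 0.05 y(t) Σ_{i=0}^{9} y(t−i) + 1.5 u(t−9) u(t) + 0.1, with i.i.d. inputs u(t) drawn uniformly from [0, 0.5]. The generator below is a minimal sketch of that standard recursion; the sample length, seed, and zero initial conditions are assumptions, since the summary does not give the paper's exact experimental setup.

```python
import numpy as np


def narma10(T, seed=0):
    """Generate the input u and target y of the standard NARMA-10 recursion."""
    rng = np.random.default_rng(seed)
    u = rng.uniform(0.0, 0.5, size=T)           # i.i.d. input on [0, 0.5]
    y = np.zeros(T)                              # zero initial conditions (assumed)
    for t in range(9, T - 1):
        y[t + 1] = (0.3 * y[t]
                    + 0.05 * y[t] * np.sum(y[t - 9:t + 1])   # y(t) + ... + y(t-9)
                    + 1.5 * u[t - 9] * u[t]
                    + 0.1)
    return u, y


u, y = narma10(1000)
```

In a typical RC experiment, u would drive the reservoir and y would serve as the teaching signal for the linear readout.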

Overall, the paper delivers a rigorous theoretical framework that extends RC capacity to correlated, stationary inputs, provides explicit analytical tools for capacity evaluation, and demonstrates that, when the underlying approximation holds, RC not only satisfies key dynamical properties but can also, in some instances, deliver significantly better forecasting and filtering performance than standard statistical methods. The work opens avenues for further research on non-stationary signals, adaptive mask design, and hardware implementations that exploit the analytical capacity formulas for real-time signal processing.
