"Memory foam" approach to unsupervised learning

We propose an alternative approach to construct an artificial learning system, which naturally learns in an unsupervised manner. Its mathematical prototype is a dynamical system, which automatically shapes its vector field in response to the input signal. The vector field converges to a gradient of a multi-dimensional probability density distribution of the input process, taken with negative sign. The most probable patterns are represented by the stable fixed points, whose basins of attraction are formed automatically. The performance of this system is illustrated with musical signals.

💡 Research Summary

The paper introduces an unsupervised learning framework inspired by the physical behavior of memory foam, intended as an alternative to the algorithmic learning paradigms used in artificial neural networks. The authors model a continuous dynamical system whose vector field adapts automatically to incoming stimuli and converges to the negative gradient of the probability density function (PDF) of the input process. In the one‑dimensional formulation, a scalar “foam” profile U(x,t) evolves according to ∂U/∂t = –g(x‑η(t)) – kU, where η(t) is the external input, g(·) is a non‑negative bell‑shaped kernel (typically Gaussian), and k is an elasticity (forgetting) constant. Normalizing the profile as V(x,t) = U(x,t)/t and substituting U = tV gives ∂V/∂t = –[V + g(x‑η(t))]/t – kV. In the limit k → 0, V(x,t) is simply the running time average of –g(x‑η(t)), so when η(t) is generated by a stationary ergodic process, V converges to a stationary potential equal to the negative of the kernel‑smoothed PDF, whose minima coincide with the modes of that density. Thus, stable fixed points of the system correspond to the most probable input patterns, and their basins of attraction automatically define class regions.
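As an illustration of the one‑dimensional dynamics, here is a minimal sketch rather than the authors' implementation. It Euler‑integrates the normalized equation in the form that follows from differentiating V = U/t, namely ∂V/∂t = –[V + g(x‑η(t))]/t – kV, on a fixed grid. The bimodal test input, the kernel width, the grid, the step size, and the choice k = 0 are all assumptions made for this example.

```python
import math
import random


def gaussian_kernel(u, width):
    """Non-negative bell-shaped kernel g(u), normalized to unit area."""
    return math.exp(-0.5 * (u / width) ** 2) / (width * math.sqrt(2.0 * math.pi))


def run_foam(signal, grid, width, k=0.0, dt=0.01):
    """Euler-integrate dV/dt = -(V + g(x - eta(t))) / t - k*V on a fixed grid."""
    V = [0.0] * len(grid)
    t = dt  # start at t = dt so the 1/t term is finite
    for eta in signal:
        V = [v + dt * (-(v + gaussian_kernel(x - eta, width)) / t - k * v)
             for v, x in zip(V, grid)]
        t += dt
    return V


# Hypothetical input (an assumption): two equally likely Gaussian modes at -1 and +1.
random.seed(0)
signal = [random.gauss(random.choice([-1.0, 1.0]), 0.3) for _ in range(2000)]
grid = [-2.0 + 0.05 * i for i in range(81)]
V = run_foam(signal, grid, width=0.3)

# The deepest point of V on each side of x = 0 estimates one mode of the input.
left_mode = min((v, x) for v, x in zip(V, grid) if x < 0)[1]
right_mode = min((v, x) for v, x in zip(V, grid) if x > 0)[1]
print(left_mode, right_mode)
```

With k = 0 the Euler update reduces to a running mean of –g, so the result does not depend on `dt`; a positive `k` would add the forgetting term, whose effect on convergence is not explored in this sketch.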

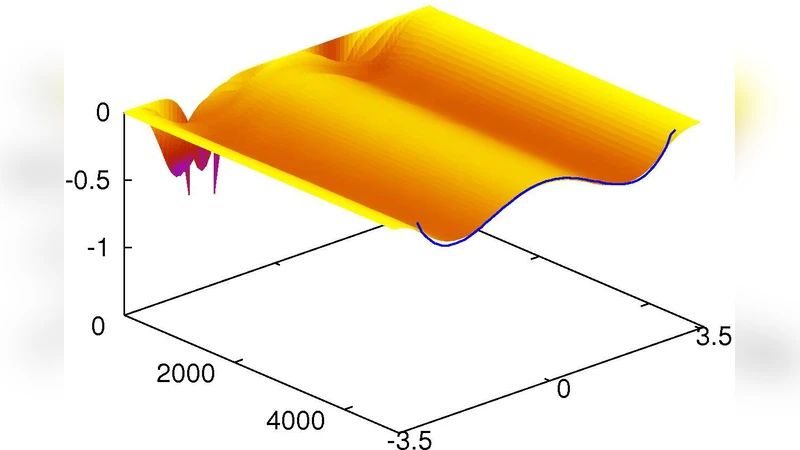

The model extends naturally to N‑dimensional inputs by treating x and η as vectors and using a multivariate Gaussian kernel g. In this setting the system performs a continuous, online version of kernel density estimation (KDE) that requires neither independent samples nor discrete updates. Numerical simulations with two types of synthetic stimuli (uncorrelated Gaussian‑transformed noise and correlated double‑well dynamics) demonstrate that the foam reproduces the true input PDF. Convergence is faster for uncorrelated inputs, yet both cases ultimately yield the correct density shape.
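The link to KDE can be checked numerically. The sketch below is an assumption‑laden illustration, not code from the paper: it tracks V at a few query points in two dimensions, updating once per input vector with k = 0, and compares the result with a batch kernel density estimate over the same samples. The class `OnlineFoam`, the autoregressive test input, and the kernel width are all hypothetical choices.

```python
import math
import random


def mv_gaussian(x, eta, width):
    """Isotropic multivariate Gaussian kernel g(x - eta) with unit integral."""
    d = len(x)
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, eta))
    norm = (2.0 * math.pi) ** (d / 2.0) * width ** d
    return math.exp(-0.5 * sq_dist / width ** 2) / norm


class OnlineFoam:
    """Tracks V at fixed query points, one input vector at a time (k = 0)."""

    def __init__(self, points, width):
        self.points = points
        self.width = width
        self.n = 0
        self.V = [0.0] * len(points)

    def update(self, eta):
        # Unit-step Euler update of dV/dt = -(V + g)/t, i.e. a running mean of -g.
        self.n += 1
        self.V = [v - (v + mv_gaussian(p, eta, self.width)) / self.n
                  for v, p in zip(self.V, self.points)]


def batch_kde(samples, point, width):
    """Ordinary kernel density estimate at one point, for comparison."""
    return sum(mv_gaussian(point, s, width) for s in samples) / len(samples)


# Hypothetical correlated 2-D input (an assumption): a first-order autoregressive process.
random.seed(1)
state, samples = [0.0, 0.0], []
for _ in range(1000):
    state = [0.9 * s + random.gauss(0.0, 0.2) for s in state]
    samples.append(state)

points = [(0.0, 0.0), (0.5, -0.5), (1.0, 1.0)]
foam = OnlineFoam(points, width=0.25)
for eta in samples:
    foam.update(eta)

kde = [batch_kde(samples, p, 0.25) for p in points]
print(foam.V, kde)
```

The online values match the negated batch estimate up to rounding, whether or not successive samples are correlated; correlation affects only how quickly the estimate approaches the true density.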

To showcase practical utility, the authors apply the method to musical signal processing. A child’s rendition of “Mary Had a Little Lamb” on a flute is recorded at 8 kHz. Short‑time Fourier transforms (window length 0.75 s) extract the dominant frequency f(t) for each note. Feeding f(t) into a one‑dimensional foam produces fixed points near 440 Hz (A4), 494 Hz (B4), and 392 Hz (G4), matching the expected notes despite natural pitch variations. For phrase recognition, a four‑dimensional foam receives the vector ψ(t) = (f(t), f(t+τ), f(t+2τ), f(t+3τ)) with τ = 0.75 s, effectively encoding four successive notes. The resulting 4‑D potential landscape exhibits distinct minima corresponding to frequently occurring four‑note sequences, which are visualized as colored polygons on overlapping half‑axes. The darkest polygons indicate the most probable melodic phrases, and a particle placed in the foam would settle into these minima, achieving online phrase classification.
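The phrase‑recognition step can be sketched as follows. This is a simplified illustration under stated assumptions, not the authors' procedure: the note sequence is a made‑up three‑note pattern with artificial pitch jitter, one pitch value stands in for each 0.75 s step, and the particle's continuous descent in the foam is replaced by a mean‑shift iteration, which moves a point toward the nearest density maximum (equivalently, the nearest minimum of V). The names `delay_embed` and `settle` are hypothetical.

```python
import math
import random

NOTE_HZ = {"G": 392.0, "A": 440.0, "B": 494.0}  # G4, A4, B4 as quoted in the summary


def delay_embed(f, dim=4, lag=1):
    """Build psi_i = (f[i], f[i+lag], ..., f[i+(dim-1)*lag])."""
    span = (dim - 1) * lag
    return [tuple(f[i + j * lag] for j in range(dim)) for i in range(len(f) - span)]


def settle(start, samples, width, steps=50):
    """Mean-shift iteration: climbs the kernel density, i.e. descends V = -density."""
    x = list(start)
    for _ in range(steps):
        weights = [math.exp(-0.5 * sum((a - b) ** 2 for a, b in zip(x, s)) / width ** 2)
                   for s in samples]
        total = sum(weights)
        if total == 0.0:  # too far from all data: nothing to follow
            break
        x = [sum(w * s[j] for w, s in zip(weights, samples)) / total
             for j in range(len(x))]
    return x


# Hypothetical note sequence (an assumption), repeated six times with pitch jitter.
random.seed(2)
pattern = "BAGABBBAAABBB"
f = [NOTE_HZ[n] + random.gauss(0.0, 3.0) for n in pattern * 6]
psi = delay_embed(f)

# A slightly off-pitch rendition of B-A-G-A should relax to that phrase's minimum.
settled = settle((502.0, 434.0, 397.0, 433.0), psi, width=10.0)
print([round(v, 1) for v in settled])
```

The kernel width of 10 Hz is chosen here so that neighboring notes, which are at least 48 Hz apart, form separate minima while the jitter within a note is smoothed over.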

The discussion emphasizes that the memory‑foam approach offers a fully analog learning mechanism, potentially bridging the gap between digital computers and biological brains. It inherently supports unsupervised learning, yet supervision can be introduced at any stage if desired. The system combines learning and recognition in a single dynamical process, enabling hierarchical pattern formation, from individual notes to complex phrases, without explicit training phases. The authors acknowledge open challenges: extending the model to non‑stationary inputs, incorporating nonlinear elasticity, and realizing physical hardware implementations (e.g., electronic or mechanical analogs of foam). They suggest future work on adaptive kernel shapes, robustness to noise, and integration with existing AI architectures.

In summary, the paper presents a mathematically grounded, physically motivated dynamical system that continuously estimates the probability density of incoming data and uses its energy landscape for unsupervised pattern discovery and classification. The memory‑foam paradigm demonstrates promising results on synthetic data and real musical signals, opening avenues for analog, online, and hierarchical learning machines.
