Achieving Optimal Misclassification Proportion in Stochastic Block Model

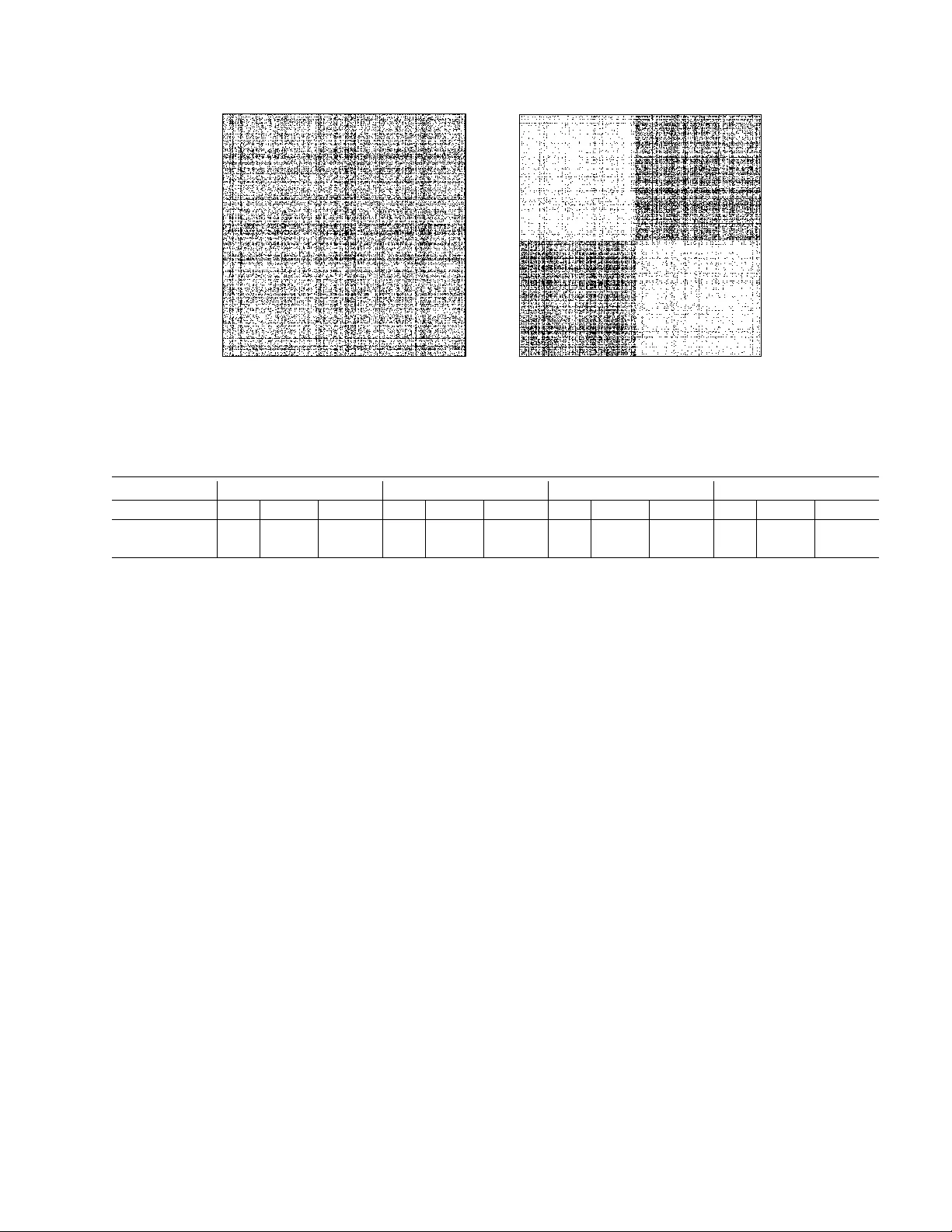

Community detection is a fundamental statistical problem in network data analysis. Many algorithms have been proposed to tackle it, but most are not guaranteed to achieve statistical optimality for the problem, while proc…

Authors: Chao Gao, Zongming Ma, Anderson Y. Zhang