Variational Information Maximisation for Intrinsically Motivated Reinforcement Learning

The mutual information is a core statistical quantity that has applications in all areas of machine learning, whether this is in training of density models over multiple data modalities, in maximising the efficiency of noisy transmission channels, or…

Authors: Shakir Mohamed, Danilo Jimenez Rezende

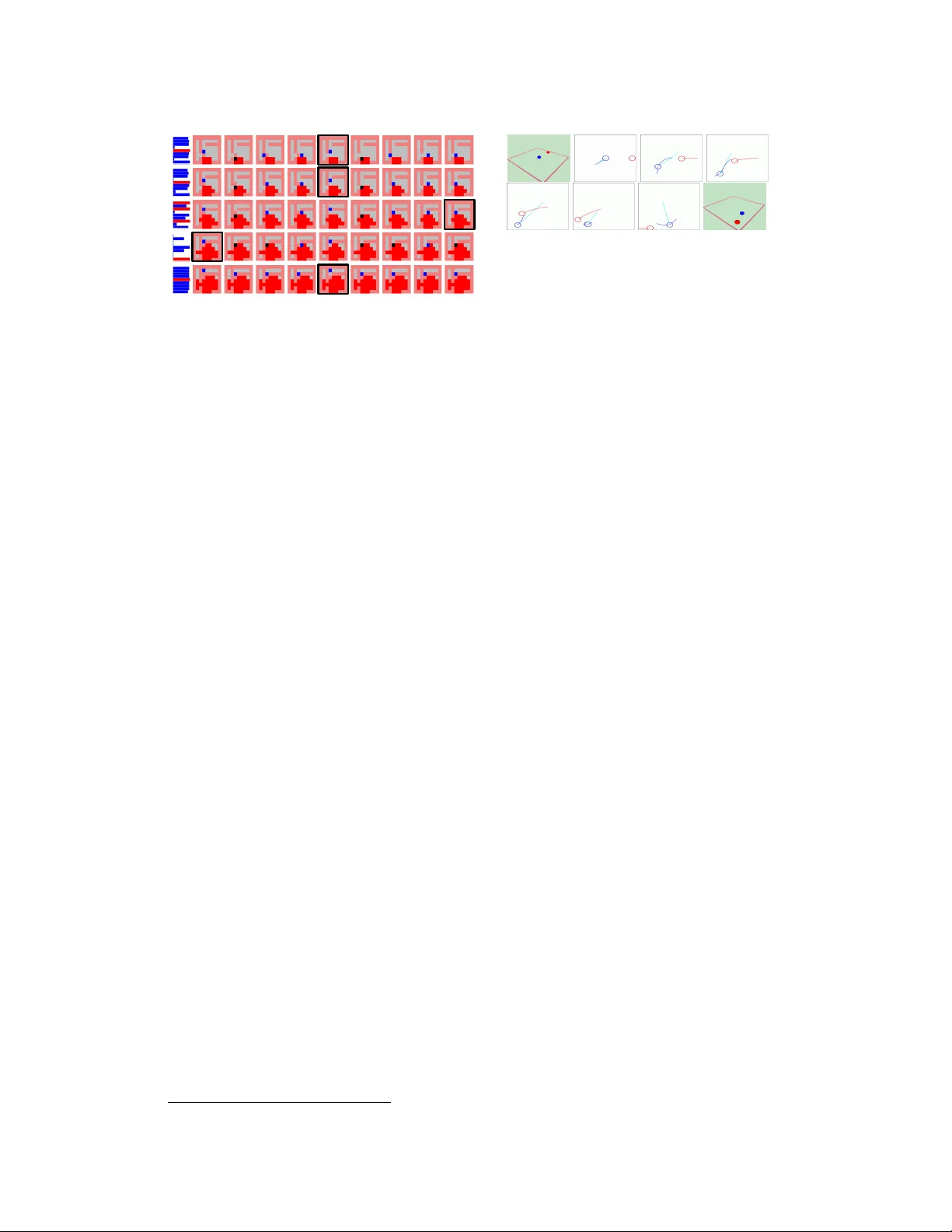

V ariational Inf ormation Maximisation f or Intrinsically Motiv ated Reinf orcement Lear ning Shakir Mohamed and Danilo J. Rezende Google DeepMind, London { shakir, danilor } @google.com Abstract The mutual information is a core statistical quantity that has applications in all ar - eas of machine learning, whether this is in training of density models o ver multiple data modalities, in maximising the efficiency of noisy transmission channels, or when learning behaviour policies for exploration by artificial agents. Most learn- ing algorithms that inv olve optimisation of the mutual information rely on the Blahut-Arimoto algorithm — an enumerative algorithm with exponential com- plexity that is not suitable for modern machine learning applications. This paper provides a new approach for scalable optimisation of the mutual information by merging techniques from v ariational inference and deep learning. W e de velop our approach by focusing on the problem of intrinsically-moti vated learning, where the mutual information forms the definition of a well-kno wn internal driv e kno wn as empo werment. Using a v ariational lower bound on the mutual information, combined with con volutional networks for handling visual input streams, we de- velop a stochastic optimisation algorithm that allo ws for scalable information maximisation and empo werment-based reasoning directly from pix els to actions. 1 Introduction The problem of measuring and harnessing dependence between random variables is an inescapable statistical problem that forms the basis of a lar ge number of applications in machine learning, includ- ing rate distortion theory [4], information bottleneck methods [26], population coding [1], curiosity- driv en exploration [24, 19], model selection [3], and intrinsically-motiv ated reinforcement learning [20]. In all these problems the core quantity that must be reasoned about is the mutual information. In general, the mutual information (MI) is intractable to compute and few existing algorithms are useful for realistic applications. The recei ved algorithm for estimating mutual information is the Blahut-Arimoto algorithm [29] that ef fecti vely solves for the MI by enumeration — an approach with e xponential complexity that is not suitable for modern machine learning applications. By com- bining the best current practice from variational inference with that of deep learning, we bring the generality and scalability seen in other problem domains to information maximisation problems. W e provide a ne w approach for maximisation of the mutual information that has significantly lo wer complexity , allows for computation with high-dimensional sensory inputs, and that allo ws us to exploit modern computational resources. The technique we deriv e is generally applicable, but we shall describe and develop our approach by focussing on one popular and increasingly topical application of the mutual information: as a measure of ‘empo werment’ in intrinsically-moti vated reinforcement learning. Reinforcement learn- ing (RL) has seen a number of successes in recent years that has now established it as a practical, scalable solution for realistic agent-based planning and decision making [15, 12]. A limitation of the standard RL approach is that an agent is only able to learn using external re wards obtained from its en vironment; truly autonomous agents will often exist in environments that lack such external rew ards or in environments where rew ards are sparsely distributed. Intrinsically-motivated rein- for cement learning [23] attempts to address this shortcoming by equipping an agent with a number of internal drives or intrinsic rew ard signals, such as hunger , boredom or curiosity that allows the agent to continue to explore, learn and act meaningfully in a re ward-sparse world. There are many 1 External Environment Internal Environment Planner Option KB Critic State Repr. State Embedding Option Observation Action Agent Environment Figure 1: Perception-action loop sepa- rating en vironment into internal and e x- ternal facets. z a 1 z a 2 z a K … Decoder Source ω (A|s) ψ (s) State Representation s’ s q ( a 1 ,...,a K | s, s 0 ) h ( a 1 ,...,a K | s ) z a 1 z a 2 z a K … x x’ Figure 2: Computational graph for variational in- formation maximisation. ways in which to formally define internal driv es, but what all such definitions have in common is that they , in some unsupervised fashion, allo w an agent to reason about the v alue of information in the action-observ ation sequences it experiences. The mutual information allo ws for exactly this type of reasoning and forms the basis of one popular intrinsic rew ard measure, known as empowerment . Our paper begins by describing the framework we use for online and self-motiv ated learning (sec- tion 2) and then describes the general problem associated with mutual information estimation and empowerment (section 3). W e then make the follo wing contributions: • W e dev elop stochastic variational information maximisation , a new algorithm for scalable es- timation of the mutual information and channel capacity that is applicable to both discrete and continuous settings. • W e combine variational information optimisation and tools from deep learning to de velop a scal- able algorithm for intrinsically-motivated r einfor cement learning , demonstrating a ne w applica- tion of the variational theory for problems in reinforcement learning and decision making. • W e demonstrate that empowerment-based behaviours obtained using v ariational information max- imisation match those using the exact computation. W e then apply our algorithms to a broad range of high-dimensional problems for which it is not possible to compute the exact solution, but for which we are able to act according to empowerment – learning dir ectly fr om pixel information . 2 Intrinsically-motivated Reinf or cement Learning Intrinsically- or self-motiv ated learning attempts to address the question of where rewards come from and how they are used by an autonomous agent. Consider an online learning system that must model and reason about its incoming data streams and interact with its en vironment. This perception-action loop is common to man y areas such as acti ve learning, process control, black-box optimisation, and reinforcement learning. An extended view of this framework was presented by Singh et al. [23], who describe the en vironment as factored into external and internal components (figure 1). An agent receives observations and takes actions in the external en vironment. Impor- tantly , the source and nature of any re ward signals are not assumed to be provided by an oracle in the e xternal en vironment, b ut is mo ved to an internal en vironment that is part of the agent’ s decision- making system; the internal en vironment handles the efficient processing of all input data and the choice and computation of an appropriate internal rew ard signal. There are two important components of this framework: the state representation and the critic. W e are principally interested in vision-based self-motiv ated systems, for which there are no solutions currently developed. T o achie ve this, our state r epr esentation system is a con volutional neural net- work [13]. The critic in figure 1 is responsible for pro viding intrinsic re wards that allo w the agent to act under different types of internal motiv ations, and is where information maximisation enters the intrinsically-motiv ated learning problem. The nature of the critic and in particular, the rew ard signal it provides is the main focus of this paper . A wide variety of re ward functions hav e been proposed, and include: missing information or Bayesian surprise, which uses the KL di vergence to measure the change in an agents internal belief after the observ ation of new data [8, 22]; measures based on prediction errors of future states such predicted L 1 change, predicted mode change or probability gain [16], or salient ev ent prediction [23]; and measures based on information-theoretic quantities such as predicted information gain (PIG) [14], causal entropic forces [28] or empo werment [21]. The paper by Oudeyer & Kaplan [18] 2 currently provides the widest singular discussion of the breadth of intrinsic motiv ation measures. Although we hav e a wide choice of intrinsic reward measures, none of the available information- theoretic approaches are ef ficient to compute or scalable to high-dimensional problems: they require either knowledge of the true transition probability or summation over all configurations of the state space, which is not tractable for complex en vironments or when the states are large images. 3 Mutual Inf ormation and Empowerment The mutual information is a core information-theoretic quantity that acts as a general measure of dependence between two random v ariables x and y , defined as: I ( x , y ) = E p ( y | x ) p ( x ) log p ( x , y ) p ( x ) p ( y ) , (1) where the p ( x , y ) is a joint distribution over the random variables, and p ( x ) and p ( y ) are the cor- responding marginal distributions. x and y can be many quantities of interest: in computational neuroscience they are the sensory inputs and the spiking population code; in telecommunications they are the input signal to a channel and the receiv ed transmission; when learning exploration policies in RL, they are the current state and the action at some time in the future, respecti vely . For intrinsic motiv ation, we use an internal reward measure referred to as empowerment [11, 21] that is obtained by searching for the maximal mutual information I ( · , · ) , conditioned on a starting state s , between a sequence of K actions a and the final state reached s 0 : E ( s ) = max ω I ω ( a , s 0 | s ) = max ω E p ( s 0 | a,s ) ω ( a | s ) log p ( a , s 0 | s ) ω ( a | s ) p ( s 0 | s ) , (2) where a = { a 1 , . . . , a K } is a sequence of K primitive actions a k leading to a final state s 0 , and p ( s 0 | a , s ) is the K -step transition probability of the environment. p ( a , s 0 | s ) is the joint distribution of action sequences and the final state, ω ( a | s ) is a distribution ov er K -step action sequences, and p ( s 0 | s ) is the joint probability marginalised o ver the action sequence. Equation (2) is the definition of the channel capacity in information theory and is a measure of the amount of information contained in the action sequences a about the future state s 0 . This measure is compelling since it provides a well-grounded, task-independent measure for intrinsic motiv ation that fits naturally within the framework for intrinsically motiv ated learning described by figure 1. Furthermore, empowerment, like the state- or action-value function in reinforcement learning, as- signs a v alue E ( s ) to each state s in an en vironment. An agent that seeks to maximise this v alue will mov e to wards states from which it can reach the largest number of future states within its planning horizon K . It is this intuition that has led authors to describe empowerment as a measure of agent ‘preparedness’, or as a means by which an agent may quantify the extent to which it can reliably influence its en vironment — motiv ating an agent to mov e to states of maximum influence [21]. An empowerment-based agent generates an open-loop sequence of actions K steps into the future — this is only used by the agent for its internal planning using ω ( a | s ) . When optimised using (2), the distribution ω ( a | s ) becomes an efficient exploration policy that allo ws for uniform exploration of the state space reachable at horizon K , and is another compelling aspect of empowerment (we provide more intuition for this in appendix A). But this policy is not what is used by the agent for acting: when an agent must act in the world, it follows a closed-loop policy obtained by a planning algorithm using the empo werment v alue (e.g., Q-learning); we expand on this in sect. 4.3. A further consequence is that while acting, the agent is only ‘curious’ about parts of its en vironment that can be reached within its internal planning horizon K . W e shall not explore the ef fect of the horizon in this work, b ut this has been widely-explored and we defer to the insights of Salge et al. [21]. 4 Scalable Inf ormation Maximisation The mutual information (MI) as we hav e described it thus far , whether it be for problems in empo w- erment, channel capacity or rate distortion, hides two difficult statistical problems. Firstly , comput- ing the MI in volv es expectations over the unknown state transition probability . This can be seen by rewriting the MI in terms of the dif ference between conditional entropies H ( · ) as: I ( a , s 0 | s ) = H ( a | s ) − H ( a | s 0 , s ) , (3) where H ( a | s ) = − E ω ( a | s ) [log ω ( a | s )] and H ( a | s 0 , s ) = − E p ( s 0 | a,s ) ω ( a | s ) [log p ( a | s 0 , s )] . This com- putation requires mar ginalisation ov er the K -step transition dynamics of the en vironment p ( s 0 | a , s ) , 3 which is unknown in general. W e could estimate this distribution by building a generativ e model of the en vironment, and then use this model to compute the MI. Since learning accurate generati ve models remains a challenging task, a solution that a voids this is preferred (and we also describe one approach for model-based empowerment in appendix B). Secondly , we currently lack an efficient algorithm for MI computation. There exists no scalable algorithm for computing the mutual information that allows us to apply empowerment to high- dimensional problems and that allow us to easily exploit modern computing systems. The current solution is to use the Blahut-Arimoto algorithm [29], which essentially enumerates over all states, thus being limited to small-scale problems and not being applicable to the continuous domain. More scalable non-parametric estimators hav e been developed [7, 6]: these have a high memory footprint or require a very large number of observations, an y approximation may not be a bound on the MI making reasoning about correctness harder , and they cannot easily be composed with existing (gradient-based) systems that allow us to design a unified (end-to-end) system. In the continuous domain, Monte Carlo integration has been proposed [10], but applications of Monte Carlo estimators can require a large number of draws to obtain accurate solutions and manageable variance. W e hav e also explored Monte Carlo estimators for empo werment and describe an alternative importance sampling-based estimator for the MI and channel capacity in appendix B.1. 4.1 V ariational Inf ormation Lower Bound The MI can be made more tractable by deriving a lower bound to it and maximising this instead — here we present the bound deri ved by Barber & Ag akov [1]. Using the entropy formulation of the MI (3) re veals that bounding the conditional entrop y component is suf ficient to bound the entire mutual information. By using the non-negativity property of the KL di ver gence, we obtain the bound: KL [ p ( x | y ) k q ( x | y )] ≥ 0 ⇒ H ( x | y ) ≤ − E p ( x | y ) [log q ξ ( x | y )] I ω ( s ) = H ( a | s ) − H ( a | s 0 , s ) ≥ H ( a ) + E p ( s 0 | a,s ) ω θ ( a | s ) [log q ξ ( a | s 0 , s )] = I ω ,q ( s ) (4) where we have introduced a variational distribution q ξ ( · ) with parameters ξ ; the distribution ω θ ( · ) has parameters θ . This bound becomes exact when q ξ ( a | s 0 , s ) is equal to the true action posterior distribution p ( a | s 0 , s ) . Other lo wer bounds for the mutual information are also possible: Jaakkola & Jordan [9] present a lower bound by using the conv exity bound for the logarithm; Brunel & Nadal [2] use a Gaussian assumption and appeal to the Cramer-Rao lo wer bound. The bound (4) is highly con venient (especially when compared to other bounds) since the transition probability p ( s 0 | a , s ) appears linearly in the e xpectation and we ne ver need to ev aluate its probability — we can thus ev aluate the expectation directly by Monte Carlo using data obtained by interaction with the en vironment. The bound is also intuitive since we operate using the marginal distribution on action sequences ω θ ( a | s ) , which acts as a source (exploration distribution), the transition distrib ution p ( s 0 | a , s ) acts as an encoder (transition distribution) from a to s 0 , and the variational distribution q ξ ( a | s 0 , s ) con veniently acts as a decoder (planning distribution) taking us from s 0 to a . 4.2 V ariational Inf ormation Maximisation A straightforward optimisation procedure based on (4) is an alternating optimisation for the param- eters of the distributions q ξ ( · ) and ω θ ( · ) . Barber & Agako v [1] made the connection between this approach and the generalised EM algorithm and refer to it as the IM (information maximisation) algorithm and we follow the same optimisation principle. From an optimisation perspectiv e, the maximisation of the bound I ω ,q ( s ) in (4) w .r .t. ω ( a | s ) can be ill-posed (e.g., in Gaussian models, the v ariances can di ver ge). W e av oid such di ver gent solutions by adding a constraint on the v alue of the entropy H ( a ) , which results in the constrained optimisation problem: ˆ E ( s ) = max ω ,q I ω ,q ( s ) s.t. H ( a | s ) < , ˆ E ( s ) = max ω ,q E p ( s 0 | a,s ) ω ( a | s ) [ − 1 β ln ω ( a | s ) + ln q ξ ( a | s 0 , s )] (5) where a is the action sequence performed by the agent when mo ving from s to s 0 and β is an in verse temperature (which is a function of the constraint ). At all times we use very general source and decoder distributions formed by complex non-linear functions using deep networks, and use stochastic gradient ascent for optimisation. W e refer to our approach as stochastic variational information maximisation to highlight that we do all our computation on a mini-batch of recent experience from the agent. The optimisation for the decoder q ξ ( · ) becomes a maximum likelihood problem, and the optimisation for the source ω θ ( · ) requires computation of an unnormalised ener gy-based model, which we describe next. W e summmarise the ov erall procedure in algorithm 1. 4 4.2.1 Maximum Likelihood Decoder The first step of the alternating optimisation is the optimisation of equation (5) w .r .t. the decoder q , and is a supervised maximum likelihood problem. Giv en a set of data from past interactions with the en vironment, we learn a distribution from the start and termination states s , s 0 , respecti vely , to the action sequences a that have been taken. W e parameterise the decoder as an auto-regressiv e distribution o ver the K -step action sequence: q ξ ( a | s 0 , s ) = q ( a 1 | s , s 0 ) K Y k =2 q ( a k | f ξ ( a k − 1 , s , s 0 )) , (6) W e are free to choose the distributions q ( a k ) for each action in the sequence, which we choose as categorical distributions whose mean parameters are the result of the function f ξ ( · ) with parameters ξ . f is a non-linear function that we specify using a two-layer neural network with rectified-linear activ ation functions. By maximising this log-likelihood, we are able to make stochastic updates to the variational parameters ξ of this distrib ution. The neural network models used are e xpanded upon in appendix D. 4.2.2 Estimating the Source Distrib ution Giv en a current estimate of the decoder q , the variational solution for the distribu- tion ω ( a | s ) computed by solving the functional deriv ati ve δ I ω ( s ) /δ ω ( a | s ) = 0 under the constraint that P a ω ( a | s ) = 1 , is giv en by ω ? ( a | s ) = 1 Z ( s ) exp ( ˆ u ( s , a )) , where u ( s , a ) = E p ( s 0 | s,a ) [ln q ξ ( a | s , s 0 )] , ˆ u ( s , a ) = β u ( s , a ) and Z ( s ) = P a e ˆ u ( s,a ) is a normalisation term. By substituting this optimal distribution into the original objectiv e (5) we find that it can be expressed in terms of the normalisation function Z ( s ) only , E ( s ) = 1 β log Z ( s ) . The distribution ω ? ( a | s ) is implicitly defined as an unnormalised distribution — there are no direct mechanisms for sampling actions or computing the normalising function Z ( s ) for such distributions. W e could use Gibbs or importance sampling, but these solutions are not satisfactory as they would require sev eral ev aluations of the unknown function u ( s , a ) per decision per state. W e obtain a more con venient problem by approximating the unnormalised distribution ω ? ( a | s ) by a normalised (directed) distribution h θ ( a | s ) . This is equiv alent to approximating the energy term ˆ u ( s , a ) by a function of the log-likelihood of the directed model, r θ : ω ? ( a | s ) ≈ h θ ( a | s ) ⇒ ˆ u ( s , a ) ≈ r θ ( s , a ); r θ ( s , a ) = ln h θ ( a | s ) + ψ θ ( s ) . (7) W e introduced a scalar function ψ θ ( s ) into the approximation, but since this is not dependent on the action sequence a it does not change the approximation (7), and can be verified by substituting (7) into ω ? ( a | s ) . Since h θ ( a | s ) is a normalised distribution, this leav es ψ θ ( s ) to account for the normalisation term log Z ( s ) , verified by substituting ω ? ( a | s ) and (7) into (5). W e therefore obtain a cheap estimator of empowerment E ( s ) ≈ 1 β ψ θ ( s ) . T o optimise the parameters θ of the directed model h θ and the scalar function ψ θ we can minimise any measure of discrepancy between the two sides of the approximation (7). W e minimise the squared error , giving the loss function L ( h θ ,ψ θ ) for optimisation as: L ( h θ ,ψ θ ) = E p ( s 0 | s,A ) ( β ln q ξ ( a | s , s 0 ) − r θ ( s , a )) 2 . (8) At con vergence of the optimisation, we obtain a compact function with which to compute the em- powerment that only requires forward e valuation of the function ψ . h θ ( a | s ) is parameterised using an auto-regressi ve distribution similar to (18), with conditional distributions specified by deep net- works. The scalar function ψ θ is also parameterised using a deep network. Further details of these networks are provided in appendix D. 4.3 Empowerment-based Beha viour policies Using empowerment as an intrinsic reward measure, an agent will seek out states of maximal em- powerment. W e can treat the empowerment value E ( s ) as a state-dependent reward and can then utilise any standard planning algorithm, e.g., Q-learning, policy gradients or Monte Carlo search. W e use the simplest planning strategy by using a one-step greedy empowerment maximisation. This amounts to choosing actions a = arg max a C ( s , a ) , where C ( s , a ) = E p ( s 0 | s,a ) [ E ( s )] . This policy does not account for the ef fect of actions beyond the planning horizon K . A natural enhancement is to use v alue iteration [25] to allo w the agent to tak e actions by maximising its long term (potentially 5 Algorithm 1: Stochastic V ariational Information Maximisation for Empowerment Parameters: ξ variational, λ con volutional, θ source while not con ver ged do x ← { Read current state } s = Con vNet λ ( x ) { Compute state repr . } A ∼ ω ( a | s ) { Draw action sequence. } Obtain data ( x , a , x 0 ) { Acting in en v . } s 0 = Con vNet λ ( x 0 ) { Compute state repr . } ∆ ξ ∝ ∇ ξ log q ξ ( a | s , s 0 ) (18) ∆ θ ∝ ∇ θ L ( h θ , ψ θ ) (8) ∆ λ ∝ ∇ λ log q ξ ( a | s , s 0 ) + ∇ λ L ( h θ , ψ θ ) end while E ( s ) = 1 β ψ θ ( s ) { Empowerment } T rue MI T rue MI Bound MI y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 y = − 0.083 + 1 ⋅ x , r 2 = 0.903 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 1.5 2.0 2.5 3.0 1.5 2.0 2.5 3.0 T r ue Empo w er ment (nats) Appro ximate Empo w er ment (nats) Bound MI Approximate Empowerment (nats) T rue Empowerment (nats) Figure 3: Comparing exact vs approx- imate empowerment. Heat maps: em- powerment in 3 en vironments: tw o rooms, cross room, two-rooms; Scatter plot: agreement for two-rooms. discounted) empowerment. A third approach would be to use empo werment as a potential function and the dif ference between the current and pre vious state’ s empowerment as a shaping function with in the planning [17]. A fourth approach is one where the agent uses the source distribution ω ( a | s ) as its behaviour policy . The source distribution has similar properties to the greedy behaviour pol- icy and can also be used, but since it effecti vely acts as an empowered agents internal exploration mechanism, it has a large variance (it is designed to allow uniform exploration of the state space). Understanding this choice of behaviour polic y is an important line of ongoing research. 4.4 Algorithm Summary and Complexity The system we have described is a scalable and general purpose algorithm for mutual information maximisation and we summarise the core components using the computational graph in figure 2 and in algorithm 1. The state representation mechanism used throughout is obtained by transforming ra w observations x , x 0 to produce the start and final states s , s 0 , respecti vely . When the ra w observ ations are pixels from vision, the state representation is a con volutional neural network [13, 15], while for other observations (such as continuous measurements) we use a fully-connected neural network – we indicate the parameters of these models using λ . Since we use a unified loss function, we can apply gradient descent and backpropagate stochastic gradients through the entire model allo wing for joint optimisation of both the information and representation parameters. For optimisation we use a preconditioned optimisation algorithm such as Adagrad [5]. The computational complexity of empowerment estimators inv olves the planning horizon K , the number of actions N , and the number of states S . For the exact computation we must enumerate over the number of states, which for grid-worlds is S ∝ D 2 (for D × D grids), or for binary images is S = 2 D 2 . The complexity of using the Blahut-Arimoto (B A) algorithm is O ( N K S 2 ) = O ( N K D 4 ) for grid worlds or O ( N K 2 2 D 2 ) for binary images. The B A algorithm, e ven in environments with a small number of interacting objects becomes quickly intractable, since the state space gro ws exponentially with the number of possible interactions, and is also exponential in the planning horizon. In contrast, our approach deals directly on the image dimensions. Using visual inputs, the con volutional network produces a vector of size P , upon which all subsequent computation is based, consisting of an L - layer neural network. This gives a complexity for state representation of O ( D 2 P + LP 2 ) . The autoregressi ve distrib utions ha ve comple xity of O ( H 2 K N ) , where H is the size of the hidden layer . Thus, our approach has at most quadratic comple xity in the size of the hidden layers used and linear in other quantities, and matches the complexity of any currently employed large-scale vision-based models. In addition, since we use gradient descent throughout, we are able to leverage the po wer of GPUs and distributed gradient computations. 5 Results W e demonstrate the use of empowerment and the effecti veness of v ariational information maximi- sation in two types of en vironments. Static en vir onments consists of rooms and mazes in different configurations in which there are no objects with which the agent can interact, or other moving ob- 6 Figure 4: Empo werment for a room en viron- ment, showing a) an empty room, b) room with an obstacle c) room with a mov eable box, d) room with row of mo veable box es. Figure 5: Left: empowerment landscape for agent and key scenario. Y ellow is the key and green is the door . Right: Agent in a corridor with flowing la va. The agent places a bricks to stem the flow of la v a. jects. The number of states in these settings is equal to the number of locations in the en vironment, so is still manageable for approaches that rely on state enumeration. In dynamic envir onments , as- pects of the environment change, such as flowing lav a that causes the agent to reset, or a predator that chases the agent. For the most part, we consider discrete action settings in which the agent has fiv e actions (up, down, left, right, do nothing). The agent may have other actions, such as picking up a key or laying down a brick. There are no external rew ards available and the agent must reason purely using visual (pixel) information. For all these experiments we used a horizon of K = 5 . 5.1 Effectiveness of the MI Bound W e first establish that the use of the variational information lower bound results in the same be- haviour as that obtained using the exact mutual information in a set of static environments. W e consider en vironments that hav e at most 400 discrete states and compute the true mutual informa- tion using the Blahut-Arimoto algorithm. W e compute the variational information bound on the same en vironment using pixel information (on 20 × 20 images). T o compare the tw o approaches we look at the empo werment landscape obtained by computing the empowerment at ev ery location in the en vironment and show these as heatmaps. For action selection, what matters is the location of the maximum empo werment, and by comparing the heatmaps in figure 3, we see that the empo werment landscape matches between the exact and the v ariational solution, and hence, will lead to the same agent-behaviour . In each image in figure 3, we show a heat-map of the empo werment for each location in the en viron- ment. W e then analyze the point of highest empowerment: for the large room it is in the centre of the room; for the cross-shaped room it is at the centre of the cross, and in a tw o-rooms en vironment, it is located near both doors. In addition, we show that the empowerment v alues obtained by our method constitute a close approximation to the true empowerment for the two-rooms en vironment (correlation coeff = 1.00, R 2 =0.90). These results match those by authors such as Klyubin et al. [11] (using empowerment) and W issner-Gross & Freer [28] (using a different information-theoretic measure — the causal entropic force). The advantage of the v ariational approach is clear from this discussion: we are able to obtain solutions of the same quality as the exact computation, we hav e f ar more fa vourable computational scaling (one that is not e xponential in the size of the state space and planning horizon), and we are able to plan directly from pixel information. 5.2 Dynamic En vironments Having established the usefulness of the bound and some further understanding of empowerment, we no w examine the empowerment behaviour in environments with dynamic characteristics. Even in small en vironments, the number of states becomes extremely large if there are objects that can be mov ed, or added and removed from the en vironment, making enumerative algorithms (such as B A) quickly infeasible, since we hav e an exponential explosion in the number of states. W e first reproduce an experiment from Salge et al. [21, § 4.5.3] that considers the empowered behaviour of an agent in a room-en vironment, a room that: is empty , has a fixed box, has a moveable box, has a row of moveable boxes. Salge et al. [21] explore this setup to discuss the choice of the state representation, and that not including the existence of the box se verely limits the planning ability of the agent. In our approach, we do not face this problem of choosing the state representation, since the agent will reason about all objects that appear within its visual observ ations, obviating the need for hand-designed state representations. Figure 4 sho ws that in an empty room, the empo werment is uniform almost e verywhere e xcept close to the w alls; in a room with a fix ed box, the fixed box limits the set of future reachable states, and as expected, empowerment is lo w around the box; in a room where the box can be moved, the box can now be seen as a tool and we hav e high empowerment near the box; similarly , when we hav e four boxes in a row , the empowerment is highest around the 7 Stay Right Left Down Up Box+Right Box+Left Box+Down Box+Up t =1 t =2 t =4 t =3 t =5 C ( s, a ) Figure 6: Empowerment planning in a lav a-filled maze en vironment. Black panels show the path taken by the agent. 1 1 2 3 4 5 6 6 Figure 7: Predator (red) and agent (blue) sce- nario. Panels 1, 6 show the 3D simulation. Other panels show a trace of the path that the predator and prey take at points on its trajectory . The blue/red sho ws path history; cyan sho ws the direction to the maximum empowerment. boxes. These results match those of Salge et al. [21] and show the effecti veness of reasoning from pixel information directly . Figure 6 shows ho w planning with empo werment works in a dynamic maze en vironment, where lava flows from a source at the bottom that eventually engulfs the maze. The only w ay the agent is able to safeguard itself, is to stem the flo w of lav a by b uilding a wall at the entrance to one of the corridors. At ev ery point in time t , the agent decides its next action by computing the expected empo werment after taking one action. In this en vironment, we show the planning for all 9 av ailable actions and a bar graph with the empo werment values for each resulting state. The action that leads to the highest empowerment is tak en and is indicated by the black panels 1 . Figure 5(left) shows two-rooms separated by a door . The agent is able to collect a key that allows it to open the door . Before collecting the ke y , the maximum empowerment is in the region around the key , once the agent has collected the key , the region of maximum empowerment is close to the door 2 . Figure 5(right) shows an agent in a corridor and must protect itself by building a wall of bricks, which it is able to do successfully using the same empo werment planning approach described for the maze setting. 5.3 Predator -Pr ey Scenario W e demonstrate the applicability of our approach to continuous settings, by studying a simple 3D physics simulation [27], sho wn in figure 7. Here, the agent (blue) is follo wed by a predator (red) and is randomly reset to a ne w location in the en vironment if caught by the predator . Both the agent and the predator are represented as spheres in the environment that roll on a surface with friction. The state is the position, v elocity and angular momentum of the agent and the predator , and the action is a 2D force v ector . As expected, the maximum empo werment lies in regions a way from the predator , which results in the agent learning to escape the predator 3 . 6 Conclusion W e hav e developed a ne w approach for scalable estimation of the mutual information by exploiting recent advances in deep learning and variational inference. W e focussed specifically on intrinsic motiv ation with a reward measure known as empowerment, which requires at its core the ef ficient computation of the mutual information. By using a variational lo wer bound on the mutual infor- mation, we dev eloped a scalable model and efficient algorithm that expands the applicability of empowerment to high-dimensional problems, with the complexity of our approach being extremely fa vourable when compared to the complexity of the Blahut-Arimoto algorithm that is currently the standard. The overall system does not require a generative model of the en vironment to be built, learns using only interactions with the environment, and allows the agent to learn directly from vi- sual information or in continuous state-action spaces. While we chose to dev elop the algorithm in terms of intrinsic motiv ation, the mutual information has wide applications in other domains, all which stand to benefit from a scalable algorithm that allows them to exploit the abundance of data and be applied to large-scale problems. Acknowledgements: W e thank Daniel Polani for in valuable guidance and feedback. 1 V ideo: http://youtu.be/eA9jVDa7O38 2 V ideo: http://youtu.be/eSAIJ0isc3Y 3 V ideos: http://youtu.be/tMiiKXPirAQ ; http://youtu.be/LV5jYY- JFpE 8 References [1] Barber , D. and Agakov , F . The IM algorithm: a v ariational approach to information maximiza- tion. In NIPS , volume 16, pp. 201, 2004. [2] Brunel, N. and Nadal, J. Mutual information, Fisher information, and population coding. Neural Computation , 10(7):1731–1757, 1998. [3] Buhmann, J. M., Chehreghani, M. H., Frank, M., and Streich, A. P . Information theoretic model selection for pattern analysis. W orkshop on Unsupervised and T ransfer Learning , 2012. [4] Cover , T . M. and Thomas, J. A. Elements of information theory . John Wile y & Sons, 1991. [5] Duchi, J., Hazan, E., and Singer , Y . Adaptive subgradient methods for online learning and stochastic optimization. The Journal of Machine Learning Researc h , 12:2121–2159, 2011. [6] Gao, S., Steeg, G. V ., and Galstyan, A. Efficient estimation of mutual information for strongly dependent variables. , 2014. [7] Gretton, A., Herbrich, R., and Smola, A. J. The kernel mutual information. In ICASP , vol- ume 4, pp. IV –880, 2003. [8] Itti, L. and Baldi, P . F . Bayesian surprise attracts human attention. In NIPS , pp. 547–554, 2005. [9] Jaakkola, T . S. and Jordan, M. I. Improving the mean field approximation via the use of mixture distributions. In Learning in graphical models , pp. 163–173. 1998. [10] Jung, T ., Polani, D., and Stone, P . Empowerment for continuous agent-en vironment systems. Adaptive Behavior , 19(1):16–39, 2011. [11] Klyubin, A. S., Polani, D., and Nehaniv , C. L. Empowerment: A universal agent-centric measure of control. In IEEE Congress on Evolutionary Computation , pp. 128–135, 2005. [12] Koutn ´ ık, J., Schmidhuber , J., and Gomez, F . Evolving deep unsupervised conv olutional net- works for vision-based reinforcement learning. In GECCO , pp. 541–548, 2014. [13] LeCun, Y . and Bengio, Y . Con volutional networks for images, speech, and time series. The handbook of brain theory and neural networks , 3361:310, 1995. [14] Little, D. Y . and Sommer , F . T . Learning and exploration in action-perception loops. F r ontiers in neural cir cuits , 7, 2013. [15] Mnih, V ., Kavukcuoglu, K., and Silver , D., et al. Human-lev el control through deep reinforce- ment learning. Nature , 518(7540):529–533, 2015. [16] Nelson, J. D. Finding useful questions: on Bayesian diagnosticity , probability , impact, and information gain. Psychological re view , 112(4):979, 2005. [17] Ng, Andre w Y , Harada, Daishi, and Russell, Stuart. Policy in variance under re ward transfor- mations: Theory and application to reward shaping. In ICML , 1999. [18] Oudeyer , P . and Kaplan, F . How can we define intrinsic moti vation? In International confer- ence on epigenetic r obotics , 2008. [19] Rubin, J., Shamir , O., and T ishby , N. T rading value and information in MDPs. In Decision Making with Imperfect Decision Makers , pp. 57–74. 2012. [20] Salge, C., Glackin, C., and Polani, D. Changing the environment based on empowerment as intrinsic motiv ation. Entr opy , 16(5):2789–2819, 2014. [21] Salge, C., Glackin, C., and Polani, D. Empowerment–an introduction. In Guided Self- Or ganization: Inception , pp. 67–114. 2014. [22] Schmidhuber , J. Formal theory of creativity , fun, and intrinsic moti vation (1990–2010). IEEE T rans. Autonomous Mental Development , 2(3):230–247, 2010. [23] Singh, S. P ., Barto, A. G., and Chentanez, N. Intrinsically motiv ated reinforcement learning. In NIPS , 2005. [24] Still, S. and Precup, D. An information-theoretic approach to curiosity-driven reinforcement learning. Theory in Biosciences , 131(3):139–148, 2012. [25] Sutton, R. S. and Barto, A. G. Intr oduction to r einfor cement learning . MIT Press, 1998. [26] Tishby , N., Pereira, F . C., and Bialek, W . The information bottleneck method. In Allerton Confer ence on Communication, Contr ol, and Computing , 1999. [27] T odorov , E., Erez, T ., and T assa, Y . Mujoco: A physics engine for model-based control. In Intelligent Robots and Systems (IR OS) , pp. 5026–5033, 2012. [28] W issner-Gross, A. D. and Freer , C. E. Causal entropic forces. Phys. Rev . Let. , 110(16), 2013. [29] Y eung, R. W . The Blahut-Arimoto algorithms. In Information Theory and Network Coding , pp. 211–228. 2008. 9 A Empowerment as Path-counting W e can obtain a further intuitiv e understanding of empo werment by examining analytical properties of equation (2). For simplicity , we focus on deterministic and discrete en vironments. In this setting, the transition probability p ( s 0 | s , a ) is a delta distribution p ( s 0 | s , a ) = δ ( s 0 − T ( s , a )) , where T ( s , a ) is a transition function that starts in state s , ex ecutes the action sequence a and provides the resulting state. Solving equation (2) for the optimal source distribution ω ( a | s ) , using Blahut-Arimoto [4], yields the fixed-point iteration: ω ( k +1) ( a | s ) = 1 Z ( s ) ω ( k ) ( a | s ) P A 0 : T ( s,A 0 )= T ( s,A ) ω ( k ) ( a 0 | s ) , (9) where Z ( s ) is a normalising constant. By starting the recursion (9) with a uniform distribution, ω (1) ( a | s ) ∝ 1 , the solution to the recursion at the next iteration ω (2) ( a | s ) is: ω (2) ( a | s ) ∝ 1 n ( a , s ) , where n ( a , s ) is the number of alternativ e action-paths a 0 that terminate at the same state as the action-path a , starting from the state s . W e can also relate the quantity n ( a , s ) with a more intuiti ve number , n ( s 0 , s ) , which is the number of different action-paths that brings the agent from state s to the state s 0 , n ( a , s ) = P s 0 p ( s 0 | a , s ) n ( s 0 , s ) . If ω ( k ) ( a | s ) = ω ( k ) ( a 0 | s ) , for all a 0 such that T ( s , a 0 ) = T ( s , a ) then the r .h.s. of (9) does not depend on ω ( k ) ( a | s ) . Since the function n ( a , s ) satisfies this property , we conclude that ω (2) ( a | s ) is a fixed point of (9). The optimal source distribution and resulting empo werment are: ω ( ∞ ) ( a | s ) = 1 Z ( s ) 1 n ( a , s ) ; E ( s ) = log Z ( s ) = log P A 1 n ( a , s ) = log n ( s ) , (10) where n ( s ) is the number of different states that can be reached from state s at horizon K . W e demonstrate this reasoning using a tree-structured en vironment in figure 8. An agent selecting its actions uniformly will in general not visit all terminal states uniformly , unless ev ery action-path terminates at a different state. Ho wever , if an agent selects its actions according the the distribution ω ( ∞ ) ( a | s ) it will visit terminal states uniformly . This can be seen by computing the marginal distrib ution of terminal states p ( s 0 | s ) = P A δ ( s 0 − T ( s , a )) ω ( ∞ ) ( a | s ) = 1 n ( s ) . Therefore the distribution ω ( ∞ ) ( a | s ) can be seen as an efficient exploration policy , that allows the agent to explore all states uniformly . This adds to a similar analysis presented by Salge et al. [3, § 4.4.6]. s 1 D U actions s 6 s 2 s 5 s 3 s 4 Figure 8: T ree-structured en vironment. Each node represents a reachable state and sho wing possible transitions by taking the up or down action. B Model-based Empowerment The approach we described in the main te xt was ’model-free’ in the sense that it did not use a model of the transition dynamics of the environment. Building accurate transition models can be hard and much success in RL has been achieved with model-free methods. Ideally , we would like to use a model-based method, since this will allow for reasoning about task-independent aspects of the world, allo w for transfer learning across domains and potentially faster learning. W e describe here model-based approaches that we dev eloped for empo werment. These methods were not as efficient for reasons which we describe below , and hence were not part of our main text. 10 B.1 Importance Sampling Estimator The most-generic model-based empowerment method is to approximate the empowerment using generic importance-sampling estimator . W e assume that a model of the environment p ( s 0 | a i , s ) is av ailable, but at this point will not specify ho w this model is obtained. W e generate S samples a i from the source distribution ω t ( a | s ) with importance weights α t,i ( P i α t,i = 1 , α t,i > 0 ∀ i = 1 . . . S ) at iteration t , { a i , α t,i } ∼ ω t ( a | s ) . (11) The samples a i are kept constant through the optimization and only the importance weights are adapted to maximize the MI. Additionally , for each action-sequence sample a i we generate J future- state samples s 0 k,i from the transition model p ( s 0 | a i , s ) , s 0 k,i ∼ p ( s 0 | a i , s ) ∀ i = 1 . . . S , k = 1 . . . J. (12) W e could approximate all quantities required to compute the MI from the samples a i and s 0 k,i , b ut we shall instead, directly approximate the Blahut-Arimoto iteration. For this we compute a distortion D t,i at the t -th iteration using: D t,i ≈ 1 J J X k =1 ln p ( s 0 k,i | a i ) p t ( s 0 k,i ) , (13) where p t ( s 0 ) = P i p ( s 0 | a i , s ) α t,i and find a ne w set of normalized weights α t +1 ,i such that ω t +1 ( a | s ) best approximates the Blahut-Arimoto update. This yields a simple update rule for the importance weights, ln α t +1 ,i = ln α t,i + D t,i − c t +1 , (14) where c t +1 = P i α t,i exp D t,i is a normalizing constant. The algorithmic complexity of the update (14) is O ( S ) , b ut the cost of computing the distortion (13) scales as O ( J S 2 ) . This approach is applicable to the continuous domain, but typically requires a large number of samples for accurate estimation of the empowerment. B.2 Efficient Optimisation In the case of smooth transition models p ( s 0 | a i , s ) and polic y ω θ ( a | s ) , a more ef ficient algorithm can be derived using stochastic backpropagation [2, 1] in which we rewrite both the model and policy as s 0 = f ( a i , s , ξ m ) and a = h θ ( s , ξ p ) where ξ m , ξ p ∼ N (0 , I ) and f and h are differentiable func- tions. Using this representation, we can re write the v ariational objecti ve function 4 as an expectation under ξ m , ξ p : I ω ,q ( s ) = E ξ m ,ξ p [log q ξ ( h θ ( s , ξ p ) | f ( h θ ( s , ξ p ) , s , ξ m ) , s ) − log ω θ ( h θ ( s , ξ p ) | s )] (15) The reparametrised bound 15 can now be optimized with respect to the parameters θ of the policy using stochastic gradient ascent. C Deriving the Blahut-Arimoto Iterations fr om the V ariational Bound Here we show that the Blahut-Arimoto algorithm can be deriv ed from the variational bound (8). The v ariational distribution q ( a | s 0 , s ) that maximises the bound (8) is the posterior distrib ution over actions giv en present and future states, q ? ( a | s 0 , s ) = p ( a | s 0 , s ) ∝ p ( s 0 | a , s ) ω ( a | s ) . (16) The Blahut-Arimoto algorithm is obtained by replacing equation (16) in equation (10) and rearrang- ing the terms: ω t +1 ( a | s ) ∝ exp β E p ( s 0 | s,A ) [ln q t ( a | s , s 0 )] ∝ exp β E p ( s 0 | s,A ) [ln p t ( a | s 0 , s )] ∝ exp β E p ( s 0 | s,A ) ln p ( s 0 | a , s ) ω t ( a | s ) p t ( s 0 | s ) ∝ ω t ( a | s ) exp β E p ( s 0 | s,A ) ln p ( s 0 | a , s ) p t ( s 0 | s ) . (17) 11 D Neural Network Description D.1 State r epresentation using con volutional netw orks For observations that are images, we make use of a con volutional network to obtain a state rep- resentation. W e use the same con volutional network for all experiments. After each con volution we apply a rectified non-linearity . For all experiments , we make use a 10 filters for each layer of the conv olution. The first con volution consists of 4 × 4 kernels with a stride of 1, and the second con volution consists of 3 × 3 kernels with a stride of 2. The output of the conv olution is passed through a fully connected layer with 100 hidden units, followed by a rectified non-linearity . This 100-dimensional representation is what forms the state representation s , s 0 used for the variational information maximisation components that follow this processing stage. D.2 P arameterisation of other networks W e also use neural networks in the parameterisation of the decoder distribution q ξ ( a | s , s 0 ) and in the directed model h θ ( a | s ) . This distribution is of the form: q ξ ( a | s 0 , s ) = q ( a 1 | s , s 0 ) K Y k =2 q ( a k | f ξ ( a k − 1 , s , s 0 )) , (18) where we must specify the form of the per action distributions q ( a k ) . This distribution is Gaussian distribution whose mean and v ariance are parameterised by a two-layer neural network: q ( a k ) = N ( a k | µ ξ ( a k − 1 , s , s 0 ) , σ 2 ξ ( a k − 1 , s , s 0 )) (19) µ ξ ( a k − 1 , s , s 0 ) = g ( W µ η + b ) (20) log σ ξ ( a k − 1 , s , s 0 ) = g ( W σ η + b ) (21) η = ` ( W 2 g ( W 1 x + b 1 ) + b 2 ) (22) where η is a two-layer neural netw ork which forms the shared component of the distrib ution. g ( · ) is an element-wise non-linearity , which is the rectified non-linearity in our case: Rect ( x ) = max(0 , x ) . The scalar function ψ θ ( s ) , also is specified by a two-layer neural network. References [1] Kingma, Diederik P and W elling, Max. Stochastic gradient vb and the v ariational auto-encoder . International Confer ence on Learning Repr esentations , 2014. [2] Rezende, Danilo Jimenez, Mohamed, Shakir , and W ierstra, Daan. Stochastic back-propagation and variational inference in deep latent gaussian models. In International Conference on Ma- chine Learning , 2014. [3] Salge, C., Glackin, C., and Polani, D. Empowerment–an introduction. In Guided Self- Or ganization: Inception , pp. 67–114. 2014. [4] Y eung, R. W . The Blahut-Arimoto algorithms. In Information Theory and Network Coding , pp. 211–228. 2008. 12

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment