(Blue) Taxi Destination and Trip Time Prediction from Partial Trajectories

Real-time estimation of destination and travel time for taxis is of great importance for existing electronic dispatch systems. We present an approach based on trip matching and ensemble learning, in which we leverage the patterns observed in a dataset of roughly 1.7 million taxi journeys to predict the corresponding final destination and travel time for ongoing taxi trips, as a solution for the ECML/PKDD Discovery Challenge 2015 competition. The results of our empirical evaluation show that our approach is effective and very robust, which led our team – BlueTaxi – to the 3rd and 7th position of the final rankings for the trip time and destination prediction tasks, respectively. Given the fact that the final rankings were computed using a very small test set (with only 320 trips) we believe that our approach is one of the most robust solutions for the challenge based on the consistency of our good results across the test sets.

💡 Research Summary


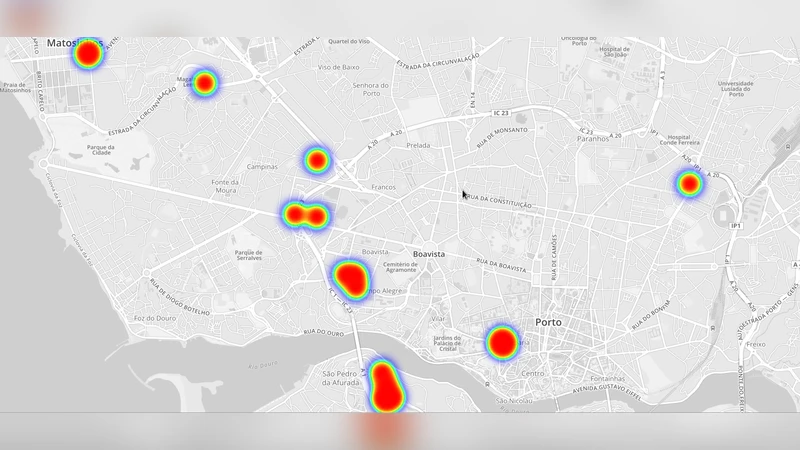

The paper presents “BlueTaxi,” a data‑driven solution for the ECML/PKDD 2015 Discovery Challenge that required real‑time prediction of a taxi’s final destination and remaining travel time from only a partial GPS trajectory. The authors leveraged a large dataset of approximately 1.7 million trips collected from 442 taxis operating in Porto, Portugal over one year. Each trip includes GPS points recorded every 15 seconds together with auxiliary metadata such as taxi ID, call ID, stand ID, and start timestamp.

Data cleaning and preprocessing

The raw data contained numerous inconsistencies: missing GPS points, erroneous timestamps, and trips where the meter remained on after the passenger was dropped off. The authors identified missing GPS points by detecting unrealistically large jumps between consecutive coordinates (exceeding a speed limit of 160 km/h). They also filtered out trips with unreliable start times and those that continued after the passenger had been dropped off. To reduce GPS noise, they applied the Ramer‑Douglas‑Peucker line‑simplification algorithm with three epsilon values (1e‑6, 5e‑6, 5e‑5), generating three levels of simplified trajectories.
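The simplification step can be sketched as follows. This is a minimal illustration of the Ramer-Douglas-Peucker algorithm, not the authors' code. It assumes that (latitude, longitude) pairs are treated as planar coordinates, so epsilon is expressed in degrees; the summary does not state the units. The function names `perpendicular_distance` and `rdp` are hypothetical.

```python
import math


def perpendicular_distance(p, a, b):
    """Distance from point p to the line through a and b (planar approximation)."""
    (x, y), (x1, y1), (x2, y2) = p, a, b
    dx, dy = x2 - x1, y2 - y1
    if dx == 0 and dy == 0:
        # Degenerate segment: fall back to point-to-point distance.
        return math.hypot(x - x1, y - y1)
    return abs(dy * (x - x1) - dx * (y - y1)) / math.hypot(dx, dy)


def rdp(points, epsilon):
    """Ramer-Douglas-Peucker simplification of a list of (lat, lon) tuples.

    Assumption: coordinates are treated as planar, so epsilon is in degrees.
    """
    if len(points) < 3:
        return list(points)
    first, last = points[0], points[-1]
    index, dmax = 0, 0.0
    for i in range(1, len(points) - 1):
        d = perpendicular_distance(points[i], first, last)
        if d > dmax:
            index, dmax = i, d
    if dmax > epsilon:
        # Keep the farthest point and simplify both halves recursively.
        left = rdp(points[: index + 1], epsilon)
        right = rdp(points[index:], epsilon)
        return left[:-1] + right
    return [first, last]


# Three simplification levels, using the epsilon values quoted above.
EPSILONS = (1e-6, 5e-6, 5e-5)
```

Calling `rdp(trajectory, eps)` once per value in `EPSILONS` yields the three simplified versions of a trajectory; larger values discard more intermediate points.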

Feature engineering for destination prediction

The core intuition is that two trips that share a similar route, especially in the final segment, are likely to end at the same or nearby locations. For a test trip A, the system searches for the ten most similar trips B in the training set. Similarity is measured by the average Haversine distance between corresponding points of the two trajectories; dynamic time warping was considered but discarded due to computational cost. For each of the ten nearest neighbors, the destination coordinates are collected and used as raw features.
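The trip-matching idea can be illustrated with a short sketch. It assumes that "corresponding points" means points at the same index in each trajectory, compared over the shorter of the two lengths; the summary does not specify the alignment. The helper names (`haversine_km`, `mean_haversine_km`, `most_similar_trips`) are hypothetical.

```python
import heapq
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(p, q):
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    lat1, lon1 = math.radians(p[0]), math.radians(p[1])
    lat2, lon2 = math.radians(q[0]), math.radians(q[1])
    a = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))


def mean_haversine_km(trip_a, trip_b):
    """Average distance between index-aligned points (assumed alignment)."""
    n = min(len(trip_a), len(trip_b))
    if n == 0:
        return math.inf
    return sum(haversine_km(a, b) for a, b in zip(trip_a, trip_b)) / n


def most_similar_trips(query, training_trips, k=10):
    """Return the k training trips closest to the query trajectory."""
    return heapq.nsmallest(
        k, training_trips, key=lambda trip: mean_haversine_km(query, trip)
    )
```

The destinations of the returned trips (their last points) would then serve as the raw neighbor features described above.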

Kernel regression (KR) is employed as a smoothed version of k‑NN. Three bandwidth parameters (0.005, 0.05, 0.5) generate three KR‑based destination estimates, which are added to the feature vector. The authors discovered that using only the last 500–700 meters of a trip yields the most informative KR predictions, reflecting the convergence of routes near the destination.
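As a rough illustration of kernel regression as smoothed k-NN, the sketch below computes a weighted average of neighbor destinations. It assumes a Gaussian kernel and that the bandwidth is in the same units as the trip distances; the summary specifies neither. The function name `kernel_regression` is hypothetical.

```python
import math


def kernel_regression(distances, destinations, bandwidth):
    """Kernel-weighted average of neighbour destinations.

    distances:    trajectory distance from the query trip to each neighbour
    destinations: (lat, lon) end point of each neighbour
    bandwidth:    kernel width (assumed Gaussian kernel; units match distances)
    """
    weights = [math.exp(-(d * d) / (2.0 * bandwidth ** 2)) for d in distances]
    total = sum(weights)
    if total == 0.0:
        # Every weight underflowed: fall back to the single nearest neighbour.
        nearest = min(range(len(distances)), key=distances.__getitem__)
        return destinations[nearest]
    lat = sum(w * p[0] for w, p in zip(weights, destinations)) / total
    lon = sum(w * p[1] for w, p in zip(weights, destinations)) / total
    return (lat, lon)


# One estimate per bandwidth, using the three values quoted above.
BANDWIDTHS = (0.005, 0.05, 0.5)
```

A small bandwidth makes the estimate follow the closest neighbor almost exclusively, while a large one approaches the plain average of all neighbors, which is why several bandwidths give complementary features.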

Contextual information (call ID, taxi ID, day of week, hour of day, stand ID) is incorporated by performing KR restricted to trips that share the same contextual value. Among these, call‑ID‑based KR gave the best performance. Additional basic features include Euclidean and Haversine distance between the first and last observed GPS points, direction relative to the city centre, number of GPS points, day of week, and the raw first/last coordinates.

Feature engineering for travel‑time prediction

The feature set mirrors that of destination prediction, but the target variable is the remaining travel time (log‑transformed). Additional time‑specific features are introduced: average speed and acceleration computed over the last d meters (d ∈ {10, 20, 50, 100, 200}) and over the whole observed segment; average speed of all trips observed within one hour of the snapshot (capturing traffic conditions); shape complexity, measured as the ratio of the distance travelled along the trajectory to the Haversine distance between its start and end points; and binary flags, one per threshold (100, 120, 140, 160 km/h), indicating whether the speed between any two consecutive GPS points exceeds that threshold, which signals missing GPS data. In total, 66 features are used for time prediction.
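Two of the time-specific features can be sketched as below. The sketch assumes the distances between consecutive GPS points have already been computed in meters and relies on the 15-second sampling interval mentioned earlier. The function names and the rule for selecting "the last d meters" (whole segments taken from the end until d is covered) are illustrative assumptions.

```python
SAMPLING_SECONDS = 15  # GPS points are recorded every 15 seconds
SPEED_THRESHOLDS_KMH = (100, 120, 140, 160)


def average_speed_kmh(segments_m, last_d_m=None):
    """Average speed over the whole trip, or over roughly the last d metres.

    segments_m: distances in metres between consecutive GPS points.
    Assumption: whole segments are taken from the end until d is covered.
    """
    if last_d_m is None:
        selected = list(segments_m)
    else:
        selected, covered = [], 0.0
        for length in reversed(segments_m):
            if covered >= last_d_m:
                break
            selected.append(length)
            covered += length
    if not selected:
        return 0.0
    seconds = len(selected) * SAMPLING_SECONDS
    return sum(selected) / seconds * 3.6  # m/s -> km/h


def speed_flags(segments_m):
    """One boolean per threshold: does any segment imply a higher speed?"""
    speeds = [length / SAMPLING_SECONDS * 3.6 for length in segments_m]
    return {t: any(s > t for s in speeds) for t in SPEED_THRESHOLDS_KMH}
```

For example, a segment of 800 m covered in one sampling interval implies 192 km/h, so all four flags would be set, hinting at a dropped GPS point.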

Modeling approach

For destination prediction, the authors chose Random Forest (RF) because of its robustness and its ability to provide feature‑importance scores. They performed feature selection with a random‑forest cross‑validation routine (rfcv, which is the name of the corresponding function in the R randomForest package). They removed outliers by training an initial RF with 2000 trees, discarding trips whose prediction error exceeded the 90th percentile, and retraining on the cleaned set.

Travel‑time prediction employed an ensemble of three regressors: Gradient Boosted Regression Trees (GBRT), Random Forest, and Extremely Randomized Trees (ERT). They were combined with stacked generalization (stacking). A validation set and a local test set of equal size were held out from the training data, and the base models were trained on the remainder. The base models' predictions on the validation set were then used to train a meta‑regressor, which finally combined the base predictions on the local test set.
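The meta-level step of stacking can be illustrated with a deliberately small sketch. It assumes only two base models and a linear meta-regressor without intercept, fitted by least squares; the summary does not say which meta-regressor the authors used, and their ensemble had three base models. The function names are hypothetical.

```python
def fit_linear_blend(pred_a, pred_b, targets):
    """Least-squares weights (wa, wb) such that target ~ wa*pred_a + wb*pred_b.

    The inputs are base-model predictions on the held-out validation set.
    Assumption: a linear meta-regressor without intercept.
    """
    a11 = sum(a * a for a in pred_a)
    a12 = sum(a * b for a, b in zip(pred_a, pred_b))
    a22 = sum(b * b for b in pred_b)
    b1 = sum(a * y for a, y in zip(pred_a, targets))
    b2 = sum(b * y for b, y in zip(pred_b, targets))
    det = a11 * a22 - a12 * a12
    if det == 0:
        # Base predictions are collinear: fall back to a plain average.
        return (0.5, 0.5)
    # Closed-form solution of the 2x2 normal equations.
    return ((b1 * a22 - b2 * a12) / det, (a11 * b2 - a12 * b1) / det)


def blend(pred_a, pred_b, weights):
    """Combine base predictions on the test set with the fitted weights."""
    wa, wb = weights
    return [wa * a + wb * b for a, b in zip(pred_a, pred_b)]
```

The important property is that the weights are fitted on validation predictions the base models never trained on, so the meta-regressor learns how much to trust each model on unseen data.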

To accelerate nearest‑neighbor searches, a Geohash‑based index was built. Each GPS point is encoded as a geohash, and range queries retrieve trips within a 1 km radius of the first point of the test trip. This heuristic dramatically reduces query time without noticeable loss in prediction accuracy.
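A geohash index of this kind can be sketched as follows. The encoder implements the standard geohash scheme. The index layout (training trips bucketed by the cell of their first point) and the precision of 6 characters are assumptions for illustration. A complete range query would also inspect neighboring cells and filter candidates by exact distance, which this sketch omits.

```python
from collections import defaultdict

_BASE32 = "0123456789bcdefghjkmnpqrstuvwxyz"


def geohash_encode(lat, lon, precision=6):
    """Standard geohash: interleave longitude/latitude bits, 5 bits per char."""
    lat_lo, lat_hi = -90.0, 90.0
    lon_lo, lon_hi = -180.0, 180.0
    chars, bits, value = [], 0, 0
    use_lon = True  # the first bit always refines longitude
    while len(chars) < precision:
        if use_lon:
            mid = (lon_lo + lon_hi) / 2
            if lon >= mid:
                value, lon_lo = (value << 1) | 1, mid
            else:
                value, lon_hi = value << 1, mid
        else:
            mid = (lat_lo + lat_hi) / 2
            if lat >= mid:
                value, lat_lo = (value << 1) | 1, mid
            else:
                value, lat_hi = value << 1, mid
        use_lon = not use_lon
        bits += 1
        if bits == 5:
            chars.append(_BASE32[value])
            bits, value = 0, 0
    return "".join(chars)


def build_index(trips, precision=6):
    """Map geohash cell -> trip ids whose first point falls in that cell.

    trips: dict of trip_id -> list of (lat, lon); layout is an assumption.
    """
    index = defaultdict(list)
    for trip_id, points in trips.items():
        if points:
            lat, lon = points[0]
            index[geohash_encode(lat, lon, precision)].append(trip_id)
    return index
```

Looking up `index[geohash_encode(lat, lon)]` for the first point of a test trip returns a small candidate set, so the more expensive trajectory comparison runs on far fewer trips than a full scan.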

Experimental results

The authors constructed a local training set of 13 301 trips drawn from five time snapshots that matched the test set’s temporal distribution. An additional 12 000 trips from snapshots one hour later were tested but did not improve public leaderboard scores, so they were omitted from the final models.

On the official test set of 320 trips, the BlueTaxi system achieved 7th place for destination prediction and 3rd place for travel‑time prediction. The performance was consistent across both the public and private leaderboards, indicating strong generalization despite the small test size.

Conclusions and future work

The study demonstrates that (1) careful preprocessing of noisy GPS data, (2) similarity‑based trip matching with kernel regression, (3) incorporation of contextual metadata, and (4) robust ensemble learning with stacking can together produce a highly accurate, real‑time taxi prediction system. The authors suggest future extensions such as (a) training on the full 1.7 M‑trip dataset, (b) comparing against deep‑learning sequence models, and (c) deploying online learning mechanisms for continuous adaptation to evolving traffic patterns.
