Recognition of Brain Waves of Left and Right Hand Movement Imagery with Portable Electroencephalographs

Mind control, applied both to the rehabilitation of disabled individuals and to auxiliary control for healthy users, has attracted considerable research attention. In this work, we attempt to recognize the brain waves of left- and right-hand movement imagery with a portable electroencephalograph. Because traditional multi-electrode electroencephalographs are inconvenient to wear, we collect data with Muse, a recently launched portable headband that offers a number of useful functions and channels and is much easier for the public to use. Previous research has generally discriminated the EEG of left- and right-hand movement imagery using data from the C3 and C4 electrodes, which are located on the top of the head. We instead use the gamma wave channels of F7 and F8 and record data while subjects imagine moving their left or right hand with their eyes rotated in the corresponding direction. We use the Common Spatial Pattern algorithm to extract the features that distinguish left-hand from right-hand movement imagery, and a Support Vector Machine to classify them. Whereas classification accuracy with EEG data from the C3 and C4 electrodes has traditionally been approximately 90%, our experiment reaches 95.1% with the gamma wave data from F7 and F8. Finally, we design a plane program in Python in which a plane moves left or right when the user imagines moving the left or right hand. Eight subjects were tested, and all of them could control the plane flexibly, which indicates that our model can be applied to hardware control that is useful for both disabled individuals and healthy users.

💡 Research Summary

The paper addresses the growing interest in brain‑computer interface (BCI) technologies for both rehabilitation of disabled individuals and auxiliary control for healthy users. Traditional BCI research on motor imagery (MI) of left‑ and right‑hand movements has relied on high‑density, laboratory‑grade EEG systems that require many electrodes placed on the scalp, especially at the C3 and C4 positions over the sensorimotor cortex. While such setups can achieve classification accuracies around 90 %, they are cumbersome, expensive, and unsuitable for everyday use.

To overcome these limitations, the authors selected the Muse headband, a commercially available, wireless, four‑channel EEG device. Muse places electrodes at F7, F8 (frontal) and P7, P8 (parietal) locations and provides access to the gamma band (30–50 Hz). The study’s novelty lies in (1) using the frontal gamma channels (F7, F8) instead of the traditional sensorimotor sites, and (2) coupling motor imagery with directed eye movements: participants were instructed to imagine moving their left or right hand while rotating their eyes toward the imagined hand. This dual‑task paradigm is intended to amplify frontal activity related both to eye movement and to the planning aspects of motor imagery.

Eight healthy volunteers (average age 24, mixed gender) participated. Each trial consisted of a 30‑second rest period followed by five 10‑second imagination epochs, during which subjects performed the left‑hand or right‑hand imagery task. EEG was sampled at 256 Hz. Data preprocessing involved segmenting the continuous stream into 2‑second sliding windows with 0.5‑second overlap, band‑pass filtering to isolate the gamma band, and artifact removal using independent component analysis (ICA) to suppress eye‑blink and muscle noise.
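
The windowing step can be illustrated with a minimal sketch. It assumes that "0.5‑second overlap" means consecutive 2‑second windows share 0.5 seconds (so window starts are 1.5 seconds apart) and that incomplete windows at the end of an epoch are dropped; the function name `sliding_windows` and its parameters are hypothetical, not taken from the paper.

```python
from typing import List, Sequence


def sliding_windows(samples: Sequence[float], fs: int = 256,
                    window_s: float = 2.0,
                    overlap_s: float = 0.5) -> List[Sequence[float]]:
    """Split one single-channel epoch into windows sharing `overlap_s` seconds."""
    win = int(round(window_s * fs))                  # 512 samples at 256 Hz
    step = int(round((window_s - overlap_s) * fs))   # 384 samples between starts
    if win <= 0 or step <= 0:
        raise ValueError("window must be positive and longer than the overlap")
    # Keep only windows that fit entirely inside the epoch.
    return [samples[i:i + win] for i in range(0, len(samples) - win + 1, step)]


# Stand-in for one 10-second epoch of a single channel (sample indices as values).
epoch = list(range(10 * 256))
windows = sliding_windows(epoch)
print(len(windows), len(windows[0]))
```

Under these assumptions a 10‑second epoch yields six windows of 512 samples each. If the overlap is instead read as a 0.5‑second step between windows, only `step` changes.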

Feature extraction employed the Common Spatial Pattern (CSP) algorithm. CSP learns spatial filters that maximize variance for one class while minimizing it for the other, thereby highlighting discriminative patterns in the multichannel data. Four CSP filters were retained; the log‑energy of each filtered signal formed the final feature vector for classification.
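
A compact way to see what CSP computes is the whitening‑then‑eigendecomposition formulation sketched below with NumPy. This is an illustrative sketch rather than the authors' code: the function names, the trace normalisation of the covariances, and the synthetic two‑channel data are all assumptions. With two input channels (such as F7 and F8), CSP can produce at most two filters, so the sketch returns two; with more channels one would typically keep the first and last few rows.

```python
import numpy as np


def fit_csp(class_a: np.ndarray, class_b: np.ndarray) -> np.ndarray:
    """Return CSP filters as rows, ordered from 'most class A' to 'most class B'.

    Inputs have shape (n_trials, n_channels, n_samples) and are assumed to be
    band-pass filtered, hence approximately zero-mean.
    """
    def mean_cov(trials: np.ndarray) -> np.ndarray:
        # Trace-normalised spatial covariance, averaged over trials.
        covs = [x @ x.T / np.trace(x @ x.T) for x in trials]
        return np.mean(covs, axis=0)

    cov_a, cov_b = mean_cov(class_a), mean_cov(class_b)
    # Whitening matrix P such that P (cov_a + cov_b) P^T = I.
    evals, evecs = np.linalg.eigh(cov_a + cov_b)
    whiten = np.diag(evals ** -0.5) @ evecs.T
    # In the whitened space both classes share eigenvectors, and their
    # eigenvalues sum to one, so high variance for A means low variance for B.
    lam, rot = np.linalg.eigh(whiten @ cov_a @ whiten.T)
    order = np.argsort(lam)[::-1]        # largest class-A variance first
    return rot[:, order].T @ whiten


def log_variance_features(trial: np.ndarray, filters: np.ndarray) -> np.ndarray:
    """Project one trial through the filters; return normalised log-variance."""
    var = np.var(filters @ trial, axis=1)
    return np.log(var / var.sum())


# Synthetic stand-in data: class "left" is stronger on channel 0, "right" on channel 1.
rng = np.random.default_rng(0)
left = rng.standard_normal((20, 2, 512)) * np.array([3.0, 1.0])[None, :, None]
right = rng.standard_normal((20, 2, 512)) * np.array([1.0, 3.0])[None, :, None]

W = fit_csp(left, right)
f_left = log_variance_features(left[0], W)
f_right = log_variance_features(right[0], W)
print(f_left, f_right)
```

The first feature is larger than the second for the "left" trial and smaller for the "right" trial, which is the separation a linear classifier can then exploit.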

A linear Support Vector Machine (SVM) served as the classifier. Hyper‑parameter C was tuned via 5‑fold cross‑validation. When the same pipeline was applied to conventional C3/C4 alpha‑beta data, the average accuracy across subjects was 90.2 %. In contrast, using the F7/F8 gamma data together with the eye‑movement cue yielded a mean accuracy of 95.1 %, a statistically significant improvement (p < 0.01). The authors attribute this gain to the higher signal‑to‑noise ratio of frontal gamma activity during combined eye‑movement and motor‑imagery tasks, which provides clearer separability for CSP.
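
Tuning C for a linear SVM with 5‑fold cross‑validation can be sketched with scikit‑learn as follows. The candidate grid for C and the synthetic two‑dimensional features (standing in for CSP log‑variance features) are assumptions made for illustration; the summary does not state which values or which library the authors used.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV
from sklearn.svm import SVC

rng = np.random.default_rng(0)
# Synthetic stand-in for CSP features: 40 windows per class, 2 features each.
X = np.vstack([rng.normal([-0.2, -2.0], 0.3, size=(40, 2)),
               rng.normal([-2.0, -0.2], 0.3, size=(40, 2))])
y = np.array([0] * 40 + [1] * 40)   # 0 = left-hand imagery, 1 = right-hand imagery

search = GridSearchCV(
    SVC(kernel="linear"),
    param_grid={"C": [0.01, 0.1, 1, 10, 100]},  # hypothetical candidate values
    cv=5,                                       # 5-fold cross-validation
)
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```

`best_score_` is the mean cross‑validated accuracy of the selected C. On real recordings, windows cut from the same epoch are strongly correlated, so splitting folds by epoch or by subject gives a more honest estimate than splitting by window.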

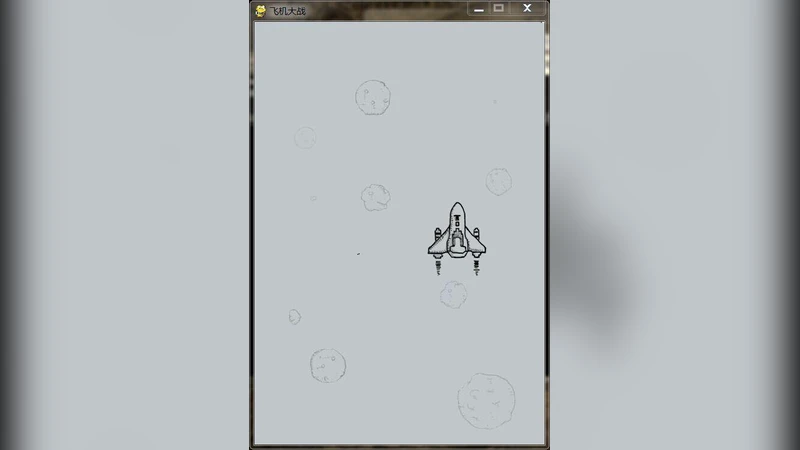

To demonstrate real‑time applicability, the authors built a simple Python program that streams Muse data, applies the trained CSP‑SVM pipeline, and controls a virtual airplane. The plane turns left or right according to the detected imagery. All eight participants were able to steer the plane reliably, with an average command latency of 0.73 seconds, confirming that the system can operate within a usable time frame for interactive applications.
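
The control step, mapping per‑window predictions to plane movement, might look like the sketch below. It is not the authors' program: the function `steer`, the step size, the screen bounds, and the short majority vote over recent labels (added here to damp isolated misclassifications) are all assumptions of this illustration.

```python
from collections import deque
from typing import Deque, Iterable, List

LEFT, RIGHT = 0, 1


def steer(predictions: Iterable[int], start_x: int = 0, step: int = 10,
          x_min: int = -100, x_max: int = 100, vote_len: int = 3) -> List[int]:
    """Turn a stream of per-window class labels into plane x-positions."""
    recent: Deque[int] = deque(maxlen=vote_len)
    x, path = start_x, []
    for label in predictions:
        recent.append(label)
        # Move right only if a strict majority of recent labels are RIGHT.
        go_right = sum(recent) * 2 > len(recent)
        x += step if go_right else -step
        x = max(x_min, min(x_max, x))   # keep the plane on screen
        path.append(x)
    return path


print(steer([LEFT, LEFT, RIGHT, LEFT, RIGHT, RIGHT, RIGHT]))
# -> [-10, -20, -30, -40, -30, -20, -10]
```

In a live system the `predictions` iterable would be fed by the trained CSP‑SVM pipeline, one label per incoming window. A longer vote makes steering steadier at the cost of added latency.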

Despite these promising results, the study has several limitations. The sample size is modest and confined to young adults, limiting generalizability to older populations or clinical groups. Long‑duration usage was not examined, leaving open questions about fatigue‑induced signal degradation. Moreover, Muse’s four‑channel configuration restricts the number of discriminable classes; extending the approach to multi‑class MI (e.g., foot, tongue) would likely require additional sensors or more sophisticated deep‑learning models.

In conclusion, the research demonstrates that a low‑cost, portable EEG device can achieve motor‑imagery classification performance equal to or better than traditional laboratory systems when the frontal gamma band and eye‑movement cues are exploited. The successful real‑time control of a virtual aircraft underscores the practical potential of such systems for assistive technologies, everyday BCI applications, and entertainment. Future work should expand participant demographics, assess long‑term stability, and explore advanced neural‑network architectures to further improve robustness and scalability.