IllinoisSL: A JAVA Library for Structured Prediction

IllinoisSL is a Java library for learning structured prediction models. It supports structured Support Vector Machines and structured Perceptron. The library consists of a core learning module and several applications, which can be executed from comm…

Authors: Kai-Wei Chang, Shyam Upadhyay, Ming-Wei Chang, Vivek Srikumar, Dan Roth

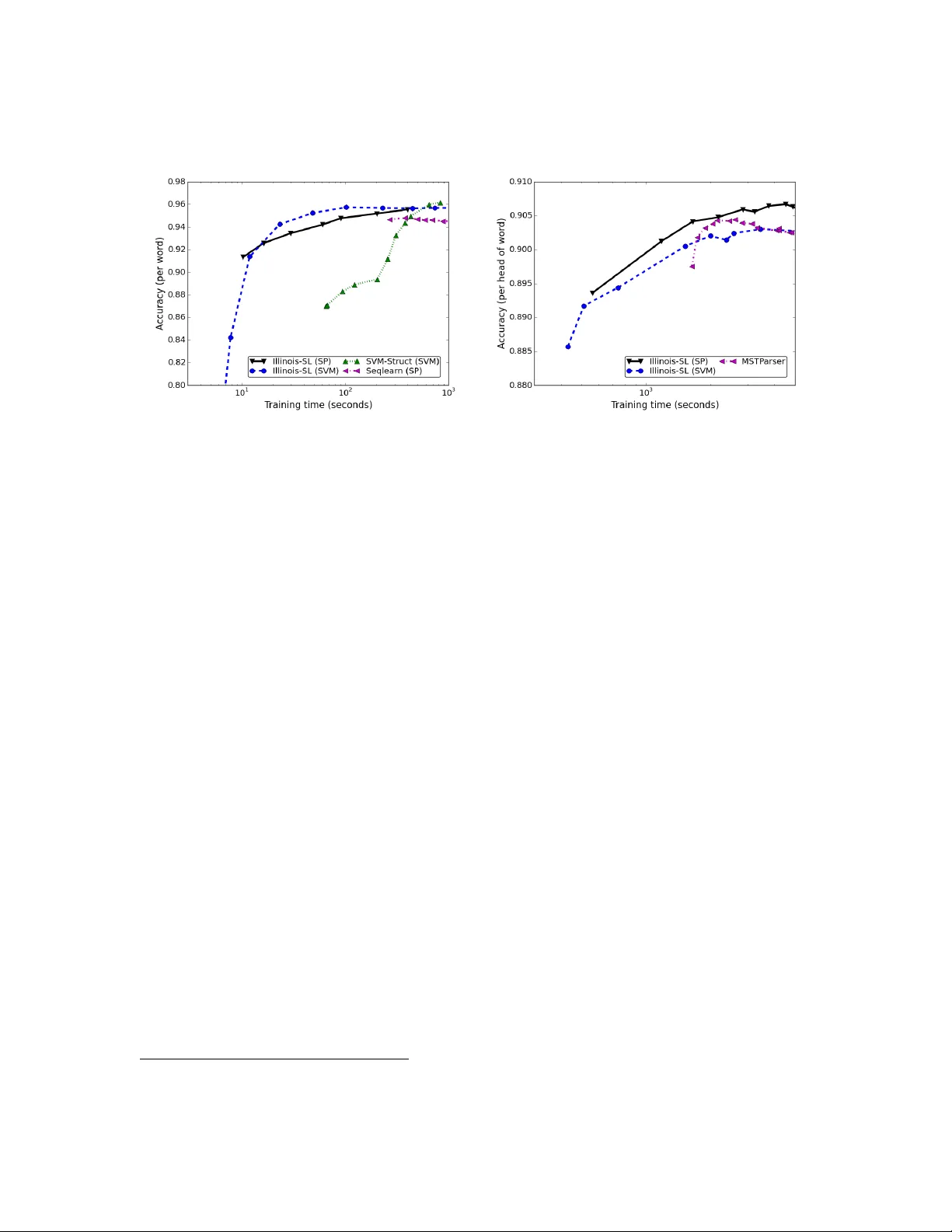

Kai-Wei Chang∗ (kw@kwchang.net), Microsoft Research New England, MA

Shyam Upadhyay (upadhya3@illinois.edu), Department of Computer Science, University of Illinois at Urbana-Champaign, IL

Ming-Wei Chang (minchang@microsoft.com), Microsoft Research, Redmond, WA

Vivek Srikumar∗ (svivek@cs.utah.edu), School of Computing at the University of Utah, UT

Dan Roth (danr@illinois.edu), Department of Computer Science, University of Illinois at Urbana-Champaign, IL

Abstract

IllinoisSL is a Java library for learning structured prediction models. It supports structured Support Vector Machines and structured Perceptron. The library consists of a core learning module and several applications, which can be executed from the command line. Documentation is provided to guide users. In comparison to other structured learning libraries, IllinoisSL is efficient, general, and easy to use.

1. Introduction

Structured prediction models have been widely used in several fields, including natural language processing, computer vision, and bioinformatics. To make structured prediction more accessible to practitioners, we present IllinoisSL, a Java library for implementing structured prediction models. Our library supports fast parallelizable variants of commonly used models like Structured Support Vector Machines (SSVM) and Structured Perceptron (SP), allowing users to use multiple cores to train models more efficiently. Experiments on part-of-speech (POS) tagging show that models implemented in IllinoisSL achieve the same level of performance as SVM^struct, a well-known C++ implementation of Structured SVM, in one-sixth of its training time. To the best of our knowledge, IllinoisSL is the first fully self-contained structured learning library in Java.
The library is released under the NCSA license [1], providing freedom for using and modifying the software.

∗ Most of this work was done while the author was at the University of Illinois, supported by DARPA under agreement number FA8750-13-2-0008. The U.S. Government is authorized to reproduce and distribute reprints for Governmental purposes notwithstanding any copyright notation thereon. The views and conclusions contained herein are those of the authors and should not be interpreted as necessarily representing the official policies or endorsements, either expressed or implied, of DARPA or the U.S. Government.

[1] http://opensource.org/licenses/NCSA

| Task | $\mathbf{x}$ | $\mathbf{y}$ | InfSolver | FeatureGenerator |
|---|---|---|---|---|
| POS Tagging | sentence | tag sequence | Viterbi | Emission and Transition Features |
| Dependency Parsing | sentence | dependency tree | Chu-Liu-Edmonds | Edge features |
| Cost-Sensitive Multiclass | document | document category | argmax | document features |

Table 1: Examples of applications implemented in the library.

IllinoisSL provides a generic interface for building algorithms to learn from data. A developer only needs to define the input and the output structures, and specify the underlying model and inference algorithm (see Sec. 3). Then, the parameters of the model can be estimated by the learning algorithms provided by the library. The generality of our interface allows users to switch seamlessly between several learning algorithms.

The library and documentation are available at http://cogcomp.cs.illinois.edu/page/software_view/illinois-sl.

2. Structured Prediction Models

This section introduces the notation and briefly describes the learning algorithms. We are given a set of training data $D = \{(\mathbf{x}_i, \mathbf{y}_i)\}_{i=1}^{l}$, where instances $\mathbf{x}_i \in \mathcal{X}$ are annotated with structured outputs $\mathbf{y}_i \in \mathcal{Y}_i$, and $\mathcal{Y}_i$ is the set of feasible structures for the $i$-th instance.
Structured SVM (Taskar et al., 2004; Tsochantaridis et al., 2005) learns a weight vector $\mathbf{w} \in \mathbb{R}^n$ by solving the following optimization problem:

$$
\min_{\mathbf{w}, \boldsymbol{\xi}} \; \frac{1}{2}\mathbf{w}^T\mathbf{w} + C\sum_i \xi_i^2
\quad \text{s.t.} \quad
\mathbf{w}^T\phi(\mathbf{x}_i, \mathbf{y}_i) - \mathbf{w}^T\phi(\mathbf{x}_i, \mathbf{y}) \ge \Delta(\mathbf{y}_i, \mathbf{y}) - \xi_i, \quad \forall i,\ \mathbf{y} \in \mathcal{Y}_i, \tag{1}
$$

where $\phi(\mathbf{x}, \mathbf{y})$ is a feature vector extracted from both the input $\mathbf{x}$ and the output $\mathbf{y}$. The constraints in (1) force the model to assign a higher score to the correct output structure $\mathbf{y}_i$ than to any other structure. $\xi_i$ is a slack variable, and we use the L2 loss to penalize violations in the objective function (1). IllinoisSL supports two algorithms to solve (1): a dual coordinate descent method (DCD) (Chang et al., 2010; Chang and Yih, 2013) and a parallel DCD algorithm, DEMI-DCD (Chang et al., 2013).

IllinoisSL also provides an implementation of Structured Perceptron (Collins, 2002). At each step, Structured Perceptron updates the model using one training instance $(\mathbf{x}_i, \mathbf{y}_i)$ by

$$
\bar{\mathbf{y}} \leftarrow \arg\max_{\mathbf{y} \in \mathcal{Y}_i} \mathbf{w}^T\phi(\mathbf{x}_i, \mathbf{y}), \qquad
\mathbf{w} \leftarrow \mathbf{w} + \eta\left(\phi(\mathbf{x}_i, \mathbf{y}_i) - \phi(\mathbf{x}_i, \bar{\mathbf{y}})\right),
$$

where $\eta$ is a learning rate. Our implementation includes the averaging trick introduced in Daumé III (2006).

3. IllinoisSL Library

We provide command-line tools that allow users to quickly learn a model for problems with common structures, such as a linear chain, a ranking, or a dependency tree. The user can also implement a custom structured prediction model through the library interface. We describe how to do the latter below.

Figure 1: Accuracy versus training time of two NLP tasks on PTB. (a) Part-of-speech tagging. (b) Dependency parsing.

Library Interface. IllinoisSL requires users to implement the following classes:

• `IInstance`: the input $\mathbf{x}$ (e.g., a sentence in POS tagging).
• `IStructure`: the output structure $\mathbf{y}$ (e.g., a tag sequence in POS tagging).
• `AbstractFeatureGenerator`: contains a function `FeatureGenerator` to extract features $\phi(\mathbf{x}, \mathbf{y})$ from an example pair $(\mathbf{x}, \mathbf{y})$.
• `AbstractInfSolver`: provides a method for solving inference (i.e., $\arg\max_{\mathbf{y}} \mathbf{w}^T\phi(\mathbf{x}_i, \mathbf{y})$) and loss-augmented inference ($\arg\max_{\mathbf{y}} \mathbf{w}^T\phi(\mathbf{x}_i, \mathbf{y}) + \Delta(\mathbf{y}_i, \mathbf{y})$), and a method for evaluating the loss $\Delta(\mathbf{y}_i, \mathbf{y})$. For example, in POS tagging, this class will include implementations of a Viterbi decoder and the Hamming loss, respectively.

Once these classes are implemented, the user can seamlessly switch between different learning algorithms.

Ready-To-Use Implementations. The IllinoisSL package contains implementations of several common NLP tasks, including a sequential tagger, a cost-sensitive multiclass classifier, and an MST dependency parser. Table 1 shows the implementation details of these learners. These implementations let users easily train a model for common problems from the command line, and also serve as examples of how to use the library. The README file provides the details of how to use the library.

Documentation. IllinoisSL comes with detailed documentation, including the Java API, command-line usage, and a tutorial. The tutorial provides step-by-step instructions for building a POS tagger in 350 lines of Java code. Users can post their comments and questions about the package to illinois-ml-nlp-users@cs.uiuc.edu.

4. Comparison

To show that IllinoisSL-based implementations of common NLP systems are on par with other structured learning libraries, we compare IllinoisSL with SVM^struct [2] and Seqlearn [3] on a part-of-speech (POS) tagging problem [4]. We follow the settings in Chang et al. (2013) and conduct experiments on the English Penn Treebank (PTB) (Marcus et al.).

[2] http://www.cs.cornell.edu/people/tj/svm_light/svm_struct.html

[3] https://github.com/larsmans/seqlearn
SVM^struct solves an L1-loss structured SVM problem using a cutting-plane method (Joachims et al., 2009). Seqlearn implements a Structured Perceptron algorithm for the sequential tagging problem. For IllinoisSL, we use 16 CPU cores to train the structured SVM model. Default parameters are used throughout. Figure 1a shows the accuracy of each model as a function of training time. Despite being a general-purpose package, IllinoisSL is more efficient than the others [5].

We also implemented a minimum spanning tree based dependency parser using the IllinoisSL API. The implementation was done in less than 1000 lines of code, with a few hours of coding effort. Figure 1b shows the performance of our system in accuracy of head words (i.e., unlabeled attachment score). IllinoisSL is competitive with MSTParser [6], a popular implementation of a dependency parser.

References

K.-W. Chang, V. Srikumar, and D. Roth. Multi-core structural SVM training. In ECML, 2013.

M. Chang and W. Yih. Dual coordinate descent algorithms for efficient large margin structural learning. TACL, 2013.

M. Chang, V. Srikumar, D. Goldwasser, and D. Roth. Structured output learning with indirect supervision. In ICML, 2010.

M. Collins. Discriminative training methods for hidden Markov models: Theory and experiments with perceptron algorithms. In EMNLP, 2002.

H. Daumé III. Practical Structured Learning Techniques for Natural Language Processing. PhD thesis, University of Southern California, 2006.

T. Joachims, T. Finley, and Chun-Nam Yu. Cutting-plane training of structural SVMs. Machine Learning, 2009.

M. P. Marcus, B. Santorini, and M. A. Marcinkiewicz. Building a large annotated corpus of English: The Penn Treebank. Computational Linguistics.

A. C. Müller and S. Behnke. pystruct - learning structured prediction in Python. JMLR, 2014.

B. Taskar, C. Guestrin, and D. Koller. Max-margin Markov networks. In NIPS, 2004.

I. Tsochantaridis, T. Joachims, T. Hofmann, and Y. Altun. Large margin methods for structured and interdependent output variables. JMLR, 2005.

[4] We do not compare with pyStruct (Müller and Behnke, 2014) because their package does not support sparse vectors. When representing the features using dense vectors, pyStruct suffers from large memory usage and computing time.

[5] Note that different learning packages use different training objectives. Therefore, the accuracies differ slightly.

[6] http://www.seas.upenn.edu/~strctlrn/MSTParser/MSTParser.html
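
As an illustration of the Structured Perceptron update described in Section 2, the following is a minimal Java sketch for the simplest structure listed in Table 1: a multiclass output with argmax inference. It is not the IllinoisSL API. The class `PerceptronSketch` and the methods `phi`, `infer`, and `train` are hypothetical names chosen for this example, the joint feature map is assumed to copy the input into a per-label block, and the averaging trick and misclassification costs are omitted for brevity.

```java
import java.util.Arrays;

/** Hypothetical sketch of the perceptron update; names are NOT from the IllinoisSL API. */
class PerceptronSketch {

    /** Assumed joint feature map phi(x, y): copy x into the weight block owned by label y. */
    static double[] phi(double[] x, int y, int numLabels) {
        double[] f = new double[x.length * numLabels];
        System.arraycopy(x, 0, f, y * x.length, x.length);
        return f;
    }

    static double dot(double[] a, double[] b) {
        double s = 0.0;
        for (int i = 0; i < a.length; i++) s += a[i] * b[i];
        return s;
    }

    /** Inference: argmax_y w^T phi(x, y), found by enumerating all labels. */
    static int infer(double[] w, double[] x, int numLabels) {
        int best = 0;
        double bestScore = Double.NEGATIVE_INFINITY;
        for (int y = 0; y < numLabels; y++) {
            double score = dot(w, phi(x, y, numLabels));
            if (score > bestScore) { bestScore = score; best = y; }
        }
        return best;
    }

    /** Applies w <- w + eta * (phi(x_i, y_i) - phi(x_i, yBar)) for a fixed number of epochs. */
    static double[] train(double[][] xs, int[] ys, int numLabels, double eta, int epochs) {
        double[] w = new double[xs[0].length * numLabels];
        for (int e = 0; e < epochs; e++) {
            for (int i = 0; i < xs.length; i++) {
                int yBar = infer(w, xs[i], numLabels);
                if (yBar == ys[i]) continue; // the update is zero when the prediction is correct
                double[] gold = phi(xs[i], ys[i], numLabels);
                double[] pred = phi(xs[i], yBar, numLabels);
                for (int j = 0; j < w.length; j++) w[j] += eta * (gold[j] - pred[j]);
            }
        }
        return w;
    }

    public static void main(String[] args) {
        // Toy data invented for this sketch: three 2-dimensional inputs, one per label.
        double[][] xs = { {1, 0}, {0, 1}, {1, 1} };
        int[] ys = { 0, 1, 2 };
        double[] w = train(xs, ys, 3, 1.0, 20);
        for (int i = 0; i < xs.length; i++) {
            System.out.println(Arrays.toString(xs[i]) + " -> " + infer(w, xs[i], 3));
        }
    }
}
```

For a richer structure such as a tag sequence, only `phi` and `infer` would change (for example, to emission and transition features with a Viterbi decoder), while the update in `train` stays the same. This mirrors the separation between feature generation, inference, and learning that the library interface in Section 3 describes.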
