Efficient reconstruction of transmission probabilities in a spreading process from partial observations

The problem of reconstructing diffusion networks and transmission probabilities from data has attracted considerable attention in the past several years. A number of recent papers introduced efficient algorithms for estimating spreading parameters by maximizing the likelihood of observed cascades, assuming that full information is available for all nodes in the network. In this work, we focus on a more realistic and restricted scenario in which only partial information on the cascades is available: either the set of activation times for a limited number of nodes, or the states of nodes for a subset of observation times. To tackle this problem, we first introduce a framework based on maximizing the likelihood of the incomplete diffusion trace. However, we argue that computing this incomplete likelihood is computationally hard, and show that a fast and robust reconstruction of transmission probabilities in sparse networks can be achieved with a new algorithm based on recently introduced dynamic message-passing equations for spreading processes. The suggested approach can be easily generalized to a large class of discrete and continuous dynamic models, as well as to dynamically changing networks and noisy information.

💡 Research Summary

The paper addresses the problem of inferring transmission probabilities (edge infection rates) in a diffusion process when only partial observations of cascades are available. Traditional approaches assume full knowledge of activation times for every node, allowing a maximum‑likelihood estimator (MLE) to be derived from the exact likelihood of the observed data. However, in realistic settings many nodes are hidden or only a subset of time points is recorded, making the exact likelihood intractable because it requires summation over all possible hidden activation times, a computation that scales as O(T^H) where H is the number of hidden nodes.
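To make the full-information baseline concrete, the following is a minimal sketch of the log-likelihood of one fully observed cascade. It assumes a discrete-time SI model in which every infected node k tries to infect each susceptible neighbor i independently with probability α_{ki} at every time step; the summary does not spell out this convention, so it is an assumption. The function name `si_cascade_log_likelihood` and the dictionary-based inputs are hypothetical and chosen for illustration only.

```python
import math

def si_cascade_log_likelihood(tau, parents, alpha, T):
    """Log-likelihood of one fully observed discrete-time SI cascade.

    tau     : dict node -> activation time (0 for seeds, None if never activated by T)
    parents : dict node -> list of neighbors that can infect it
    alpha   : dict (k, i) -> transmission probability, assumed to satisfy 0 <= alpha < 1
    T       : last observation time
    """
    log_l = 0.0
    for i, t_i in tau.items():
        if t_i == 0:
            continue  # seed nodes are given, they carry no likelihood term
        # Last time at which node i is known to be susceptible.
        last_susceptible = T if t_i is None else t_i - 1
        escape_final_step = 1.0
        for k in parents.get(i, []):
            t_k = tau.get(k)
            if t_k is None or t_k > last_susceptible:
                continue  # k was never in a position to infect i
            a = alpha[(k, i)]
            # Each step in which k was infected and i stayed susceptible is a failed attempt.
            failures = last_susceptible - t_k
            if failures > 0:
                log_l += failures * math.log1p(-a)
            escape_final_step *= 1.0 - a
        if t_i is not None:
            # At the activating step at least one infected parent must have succeeded.
            p_success = 1.0 - escape_final_step
            if p_success <= 0.0:
                return -math.inf  # activation without any infected parent is impossible
            log_l += math.log(p_success)
    return log_l
```

With hidden nodes, the entries of `tau` for those nodes are unknown, and the exact incomplete likelihood would have to sum the exponential of this quantity over every possible assignment of their activation times, which is the source of the O(T^H) cost mentioned above.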

The authors first propose a two‑stage heuristic (HTS) that alternates between completing the missing data using Monte‑Carlo sampling based on the current estimate of the transmission probabilities, and then applying the standard MLE on the “completed” cascades. While conceptually straightforward, this method remains computationally expensive and suffers from high variance in the gradient estimates, limiting its practicality for large networks.

To overcome these limitations, the authors develop a novel algorithm based on Dynamic Message‑Passing (DMP) equations, which are exact for marginal computation on trees and have been shown to be accurate on sparse loopy graphs. For the discrete‑time SI model, DMP defines two types of messages: θ_{k→i}(t), the probability that the infection has not been transmitted from neighbor k to node i up to time t, and φ_{k→i}(t), the probability that the infection has not been transmitted along edge (k,i) up to time t while node k is infected at time t. These messages obey simple recursive updates (equations (8)–(9)) that can be computed in O(N d T) time per iteration, where N is the number of nodes, d is the average degree and T is the observation horizon; this cost is independent of the number of cascades M and the number of hidden nodes H.
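The forward pass of such a scheme can be sketched as follows. The recursions used here are the form commonly given for SI dynamic message-passing, θ_{k→i}(t) = θ_{k→i}(t−1) − α_{ki} φ_{k→i}(t−1) and φ_{k→i}(t) = (1 − α_{ki}) φ_{k→i}(t−1) − [P_S^{k→i}(t) − P_S^{k→i}(t−1)]; that they coincide with the paper's equations (8)–(9) is an assumption, since the summary does not reproduce those equations. The function name `dmp_si_marginals` and the input layout are hypothetical.

```python
import numpy as np

def dmp_si_marginals(neighbors, alpha, p_s0, T):
    """Model-predicted infection marginals m_i(t) for t = 0..T (assumed SI DMP form).

    neighbors : dict node -> list of neighbors (undirected graph, listed in both directions)
    alpha     : dict (k, i) -> transmission probability from k to i
    p_s0      : dict node -> probability of being susceptible at t = 0
    Returns an array of shape (T + 1, N), columns ordered by sorted node label.
    """
    nodes = sorted(neighbors)
    edges = [(k, i) for i in nodes for k in neighbors[i]]

    def cavity_susceptible(theta):
        # P_S^{k->i}(t): k is still susceptible when the influence of i is removed.
        out = {}
        for (k, i) in edges:
            prob = p_s0[k]
            for l in neighbors[k]:
                if l != i:
                    prob *= theta[(l, k)]
            out[(k, i)] = prob
        return out

    # In this sketch theta[(k, i)] means "no transmission k -> i so far", and
    # phi[(k, i)] means "k is infected but has not yet transmitted to i".
    theta = {e: 1.0 for e in edges}
    phi = {(k, i): 1.0 - p_s0[k] for (k, i) in edges}
    ps_cav = cavity_susceptible(theta)

    m = np.zeros((T + 1, len(nodes)))
    for t in range(T + 1):
        if t > 0:
            theta = {e: theta[e] - alpha[e] * phi[e] for e in edges}
            ps_new = cavity_susceptible(theta)
            phi = {e: (1.0 - alpha[e]) * phi[e] - (ps_new[e] - ps_cav[e]) for e in edges}
            ps_cav = ps_new
        for idx, i in enumerate(nodes):
            p_s = p_s0[i]
            for k in neighbors[i]:
                p_s *= theta[(k, i)]
            m[t, idx] = 1.0 - p_s  # marginal probability that i is infected at time t
    return m
```

On a two-node graph with node 0 infected at t = 0 and α = 0.5 in both directions, this sketch gives m_1(t) = 1 − 0.5^t, which matches the exact answer for a single edge. Each time step touches every directed edge and its neighborhood once, in line with the O(N d T) scaling quoted above for bounded degree.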

The reconstruction procedure proceeds as follows. From the observed cascades Σ_O the empirical marginal activation probabilities m_i^*(t) are estimated by averaging over all cascades for which node i’s state is known at time t. The DMP equations, given a current set of transmission probabilities α, produce model‑predicted marginals m_i(t). A loss function J = Σ_t Σ_{i∈O} (m_i^*(t) − m_i(t))^2 measures the mismatch. Gradient descent updates the α’s, with gradients obtained by differentiating the DMP recursions, introducing auxiliary derivatives p_{k→i}^{rs}(t) = ∂θ_{k→i}(t)/∂α_{rs} and q_{k→i}^{rs}(t) = ∂φ_{k→i}(t)/∂α_{rs}. Because the loss aggregates over many cascades, the resulting optimization is robust to noise and missing data.
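The first and third steps of this procedure can be illustrated with a short sketch. It assumes the observations are stored as an array of shape (M, T + 1, N) holding 1.0 for an active node, 0.0 for a susceptible node and NaN where the state was not observed; this storage layout and the function names `empirical_marginals` and `marginal_loss` are hypothetical choices, not taken from the paper.

```python
import numpy as np

def empirical_marginals(states):
    """Average observed states over cascades, skipping unobserved entries.

    states : array (M, T + 1, N) with 1.0 = active, 0.0 = susceptible, NaN = unobserved
    Returns (m_star, counts); m_star is NaN wherever a (t, i) pair was never observed.
    """
    known = ~np.isnan(states)
    counts = known.sum(axis=0)
    sums = np.where(known, states, 0.0).sum(axis=0)
    with np.errstate(invalid="ignore", divide="ignore"):
        m_star = sums / counts  # 0 / 0 yields NaN for never-observed pairs
    return m_star, counts

def marginal_loss(m_star, m_model):
    """Squared mismatch J summed over all (t, i) pairs that have an empirical estimate."""
    observed = ~np.isnan(m_star)
    diff = np.where(observed, m_star - m_model, 0.0)
    return float((diff ** 2).sum())
```

Hidden nodes and unrecorded time points simply drop out of the sum, so the same loss covers both kinds of partial observation described above. The gradient step itself is not shown here; in the paper it comes from differentiating the DMP recursions.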

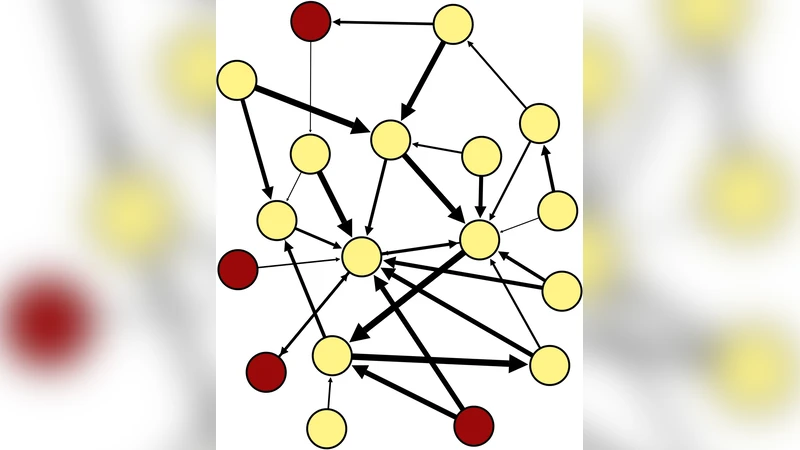

Extensive experiments on synthetic trees, power‑law random graphs, and a real‑world social network (Sampson’s monastery) compare four methods: full‑information MLE, the HTS heuristic, the basic DMP algorithm, and a hybrid DMP+ML where the MLE solution on the observed subgraph is used to initialise the DMP optimisation. Results show that while MLE achieves the lowest error when all data are available, its performance deteriorates sharply under measurement noise or partial observability. The DMP‑based approaches, particularly DMP+ML, maintain low reconstruction error even with substantial hidden‑node fractions (up to ~25 % of nodes) and are orders of magnitude faster, with per‑iteration cost independent of M and H. The authors also discuss a refinement (DMP*) that limits the DMP dynamics to a time window shorter than the length of the shortest cycle, thereby eliminating loop‑induced bias at the cost of increased solution degeneracy.
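The DMP* refinement needs the length of the shortest cycle in the network in order to choose its time window. The summary does not say how the authors obtain this length; one standard option, sketched here under that caveat, is a breadth-first search from every node of an undirected simple graph. The function name `shortest_cycle_length` is hypothetical.

```python
from collections import deque

def shortest_cycle_length(neighbors):
    """Length of the shortest cycle in an undirected simple graph (inf for a forest).

    neighbors : dict node -> list of neighbors, with each edge listed in both directions
    """
    best = float("inf")
    for src in neighbors:
        dist = {src: 0}
        parent = {src: None}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in neighbors[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    parent[v] = u
                    queue.append(v)
                elif parent[u] != v:
                    # A non-tree edge closes a cycle that passes through the BFS tree.
                    best = min(best, dist[u] + dist[v] + 1)
    return best
```

Under the description above, a DMP* time window would then be any horizon strictly smaller than the returned value; on a tree the function returns infinity and no restriction applies.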

In summary, the paper contributes a scalable, noise‑robust framework for learning diffusion parameters from incomplete cascade data. By leveraging dynamic message‑passing, it sidesteps the exponential complexity of exact likelihood marginalisation while retaining high accuracy on sparse networks. The methodology is readily extensible to other discrete or continuous diffusion models (e.g., SIR, threshold, rumor spreading), time‑varying graphs, and settings with noisy observations, making it a valuable tool for epidemiology, information propagation, and network science applications.
