Solving the subset-sum problem with a light-based device

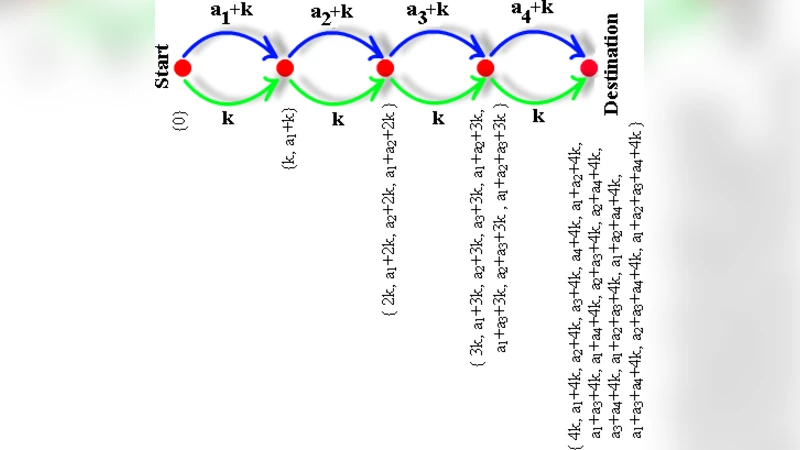

We propose a special computational device which uses light rays to solve the subset-sum problem. The device has a graph-like representation, and light traverses it by following the routes given by the connections between nodes. The nodes are connected by arcs in a special way which lets us generate all possible subsets of the given set. To each arc we assign either a number from the given set or a predefined constant. When light passes through an arc, it is delayed by the amount of time indicated by the number assigned to that arc. At the destination node we check whether there is a ray whose total delay equals the target value of the subset-sum problem (plus some constants).

💡 Research Summary

The paper introduces a novel optical computing device designed to solve the classic NP‑complete Subset‑Sum problem by exploiting the time delays that light accumulates along optical paths. The authors model the computation as a directed graph in which each node represents a decision point for one element of the input set S = {s₁,…,sₙ}. Each element gets two outgoing arcs: an “include” arc that carries a delay proportional to the element’s value, and an “exclude” arc that carries either zero delay or a fixed constant. Light emitted from a single source is split at each decision node, generating 2ⁿ simultaneous light paths, each corresponding to a distinct subset of S. As the photons travel, they accumulate a delay equal to the sum of the delays on the arcs they traverse. At the terminal node a high‑speed photodetector records the arrival time of each ray. If any ray arrives with a total delay exactly equal to the target sum T (plus any predefined offsets), the device declares that a subset with sum T exists; otherwise it reports that no such subset exists.
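The path model described above can be sketched in software. The following is a minimal simulation, not the authors' implementation: the function names and the `offset` parameter are hypothetical, and it assumes the same constant is added to every arc so that each of the n stages contributes exactly one offset to the total delay.

```python
from itertools import product


def arrival_delays(values, offset=0):
    """Return the set of total delays over all 2^n include/exclude paths.

    Assumption: an include arc delays by value + offset, an exclude arc
    delays by offset alone, so every path picks up n * offset in total.
    """
    delays = set()
    for choices in product((False, True), repeat=len(values)):
        total = sum((v if take else 0) + offset
                    for v, take in zip(values, choices))
        delays.add(total)
    return delays


def device_reports_solution(values, target, offset=0):
    """Mimic the detector: is there a ray arriving at target + n * offset?"""
    expected_arrival = target + len(values) * offset
    return expected_arrival in arrival_delays(values, offset)


if __name__ == "__main__":
    print(device_reports_solution([3, 5, 8, 13], 16))  # subset {3, 13}
    print(device_reports_solution([3, 5, 8, 13], 2))   # no subset sums to 2
```

The simulation enumerates the paths one after another, so it takes time exponential in n; the optical device is meant to explore the same paths simultaneously.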

The hardware implementation relies on precise optical delay lines, typically lengths of optical fiber or waveguide whose physical length Lᵢ is set so that Lᵢ = v·sᵢ·Δt, where v is the speed of light in the medium and Δt is the unit time corresponding to a unit weight. Beam splitters perform the required branching, while recombination optics gather all rays at the output. Detection is performed with ultra‑low‑noise photodiodes coupled to picosecond‑resolution time‑to‑digital converters, allowing the system to discriminate arrival times differing by as little as a few tens of picoseconds.
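To make the relation Lᵢ = v·sᵢ·Δt concrete, here is a small sketch that converts weights into delay-line lengths and length errors into timing errors. The refractive index of 1.468 (a typical figure for silica fiber) and the 1 ns unit time in the usage lines are illustrative assumptions, not values taken from the paper, and the function names are hypothetical.

```python
C_VACUUM = 299_792_458.0  # speed of light in vacuum, m/s


def speed_in_medium(refractive_index=1.468):
    """Speed of light v in the medium; 1.468 is an assumed fiber index."""
    return C_VACUUM / refractive_index


def delay_line_length(weight, unit_time_s, refractive_index=1.468):
    """Physical length L_i = v * s_i * dt needed to encode one weight."""
    return speed_in_medium(refractive_index) * weight * unit_time_s


def timing_error(length_error_m, refractive_index=1.468):
    """Timing error caused by a given error in delay-line length."""
    return length_error_m / speed_in_medium(refractive_index)


if __name__ == "__main__":
    # Assumed unit time of 1 ns per unit weight.
    for weight in (3, 5, 8, 13):
        print(weight, delay_line_length(weight, 1e-9), "m")
    # A 1 micrometre length error expressed as a timing error in seconds.
    print(timing_error(1e-6))
```

Under these assumptions a unit weight corresponds to roughly 20 cm of fiber, and a one-micrometre length error corresponds to a timing error of a few femtoseconds.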

The authors present a series of simulations for small instances (n = 4–6). In these idealised experiments, where lossless propagation and perfectly calibrated delays are assumed, the device correctly identifies the existence or non‑existence of a solution with >99 % accuracy. The simulations illustrate the theoretical advantage: because all subsets are explored in parallel, the decision time does not grow with n, suggesting an O(1) time complexity in the limit of perfect hardware.

However, the paper also discusses several practical constraints that severely limit scalability. First, the precision required to encode each integer as a physical delay becomes prohibitive for large numbers; sub‑micron variations in fiber length translate into sub‑picosecond timing errors, which can easily exceed the tolerance needed to distinguish a correct solution. Second, the exponential branching leads to an exponential attenuation of optical power: each split halves the intensity, so after n splits the power per path is 1/2ⁿ of the original. For n beyond roughly 20–30 the signal falls below the detection threshold, even with state‑of‑the‑art amplifiers. Third, the detection electronics must resolve arrival times with a resolution finer than the smallest weight‑induced delay; current commercial time‑to‑digital converters approach tens of picoseconds, still orders of magnitude coarser than required for many problem instances. Fourth, the system is not reconfigurable on the fly; any change in the input set or target value necessitates rebuilding the optical network or swapping delay modules, which is impractical for dynamic workloads.
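The attenuation argument is simple arithmetic and can be checked directly. The sketch below assumes ideal 50/50 splitters with no other losses, as in the paragraph above; the function names and the 1 W input power are hypothetical choices for illustration.

```python
import math


def power_per_path(input_power_w, n_splits):
    """Power left in one path after n ideal 50/50 splits: P0 / 2^n."""
    return input_power_w / 2 ** n_splits


def attenuation_db(n_splits):
    """Splitting loss in decibels after n ideal 50/50 splits."""
    return 10 * math.log10(2 ** n_splits)


if __name__ == "__main__":
    # Assumed 1 W source; print per-path power and loss for a few sizes.
    for n in (10, 20, 30):
        print(n, power_per_path(1.0, n), "W", round(attenuation_db(n), 1), "dB")
```

Each additional element costs about 3 dB, so 20 elements already imply roughly 60 dB of splitting loss before any fiber or coupling losses are counted.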

In comparison with other unconventional computing approaches—such as electronic parallel processors, optical Fourier‑transform based solvers, or quantum annealers—the proposed device offers a conceptually simple physical mapping but falls short in energy efficiency, robustness, and scalability. The authors acknowledge that the device is best suited for small‑scale, highly specialised tasks where the overhead of constructing the optical graph is justified, for example in cryptographic key‑search subroutines or educational demonstrations of combinatorial explosion.

The paper concludes by outlining future research directions: development of tunable optical delay elements (e.g., based on photonic crystals or MEMS‑controlled waveguides) to allow dynamic reconfiguration; integration of quantum‑enhanced detection schemes to improve signal‑to‑noise ratio; and hybrid architectures that combine electronic control with optical parallelism to mitigate loss. While the current prototype remains a proof‑of‑concept, the work contributes to the broader discourse on physical‑substrate computation, illustrating both the promise and the formidable engineering challenges of harnessing light for combinatorial problem solving.
