DeepCough: A Deep Convolutional Neural Network in A Wearable Cough Detection System

In this paper, we present a system that employs a wearable acoustic sensor and a deep convolutional neural network for detecting coughs. We evaluate the performance of our system on 14 healthy volunteers and compare it to that of other cough detection systems that have been reported in the literature. Experimental results show that our system achieves a classification sensitivity of 95.1% and a specificity of 99.5%.

💡 Research Summary

The paper presents “DeepCough,” a wearable cough‑detection system that combines a custom piezoelectric chest‑mounted acoustic sensor with a deep convolutional neural network (CNN). The sensor is designed to amplify respiratory vibrations while attenuating speech and ambient noise, thereby providing a cleaner signal than conventional condenser microphones. Acoustic data are segmented into 4 ms frames; 16‑frame (64 ms) windows with sufficient RMS energy are retained and transformed via a 128‑bin short‑time Fourier transform (STFT) into 64 × 16 spectrograms, which serve as the CNN input.
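
The preprocessing described above can be sketched roughly as follows. This is a minimal illustration, not the authors' code: the 16 kHz sample rate, the Hann taper, the zero-padding of each 4 ms frame to 128 points, the dropped Nyquist bin, and the `RMS_THRESHOLD` value are all assumptions chosen so that the shapes match the 64 × 16 spectrograms the summary mentions.

```python
import numpy as np

FS = 16_000                    # ASSUMED sample rate in Hz (not stated in the summary)
FRAME_LEN = FS * 4 // 1000     # 4 ms frame -> 64 samples at 16 kHz
FRAMES_PER_WINDOW = 16         # 16 frames -> one 64 ms window
N_FFT = 128                    # 128-point FFT per frame
RMS_THRESHOLD = 0.01           # HYPOTHETICAL energy gate, for illustration only


def window_to_spectrogram(window):
    """Turn one 64 ms window into a 64 x 16 magnitude spectrogram."""
    frames = window.reshape(FRAMES_PER_WINDOW, FRAME_LEN)
    tapered = frames * np.hanning(FRAME_LEN)            # assumed Hann taper
    spectrum = np.fft.rfft(tapered, n=N_FFT, axis=1)    # zero-padded, shape (16, 65)
    magnitude = np.abs(spectrum[:, : N_FFT // 2])       # drop Nyquist bin, shape (16, 64)
    return magnitude.T                                  # frequency x time, shape (64, 16)


def extract_spectrograms(signal):
    """Keep only non-overlapping windows whose RMS energy passes the gate."""
    win_len = FRAME_LEN * FRAMES_PER_WINDOW
    spectrograms = []
    for start in range(0, len(signal) - win_len + 1, win_len):
        window = signal[start : start + win_len]
        rms = np.sqrt(np.mean(window ** 2))
        if rms >= RMS_THRESHOLD:
            spectrograms.append(window_to_spectrogram(window))
    return spectrograms
```

With these assumed settings, a silent window is discarded by the RMS gate and a louder one yields a single array of shape `(64, 16)`.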

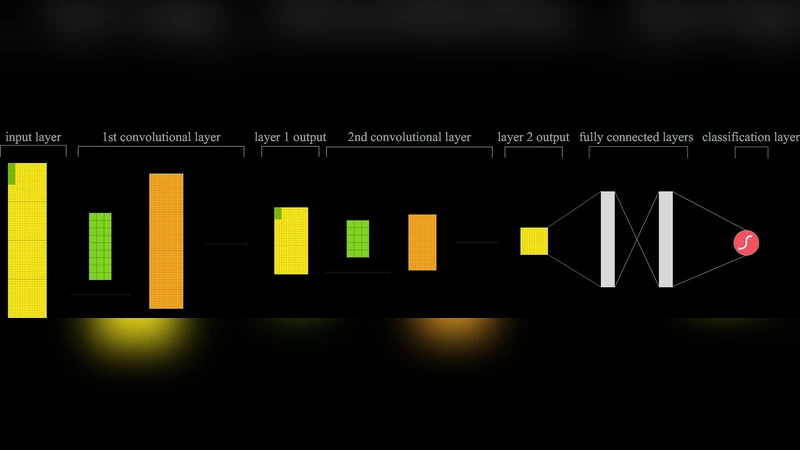

The CNN architecture consists of two convolutional layers, each with 16 ReLU filters (9 × 3 in the first layer, 5 × 3 in the second), followed by 2 × 1 max‑pooling, two fully‑connected layers of 256 units with 0.5 dropout, and a final softmax layer that outputs cough versus non‑cough probabilities. Training uses stochastic gradient descent (learning rate 0.001, batch size 20, momentum 0.9) on 10,279 overlapping 64 ms segments derived from recordings of 14 healthy volunteers (7 male, 7 female). Each participant contributed roughly 40 forced coughs, yielding 627 cough examples; an equal number of speech segments was extracted from Harvard Sentences. A parallel recording with a professional microphone (Olympus LS‑12) enables direct comparison between sensor modalities.
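
To make the layer sizes concrete, the sketch below traces tensor shapes through the architecture. Several details are assumptions, since the summary does not state them: unpadded ("valid") convolutions with stride 1, kernels oriented as frequency × time, and a 2 × 1 max‑pool after each convolutional layer. Under a different padding or pooling arrangement the numbers would change.

```python
def conv_out(size, kernel):
    """Output length of an unpadded, stride-1 convolution (ASSUMED settings)."""
    return size - kernel + 1


def trace_shapes(height=64, width=16, n_filters=16):
    """Return (layer name, output shape) pairs for a 64 x 16 spectrogram input."""
    shapes = [("input", (1, height, width))]

    h, w = conv_out(height, 9), conv_out(width, 3)      # 9 x 3 kernels
    shapes.append(("conv1", (n_filters, h, w)))
    h //= 2                                              # 2 x 1 pool halves frequency axis
    shapes.append(("pool1", (n_filters, h, w)))

    h, w = conv_out(h, 5), conv_out(w, 3)                # 5 x 3 kernels
    shapes.append(("conv2", (n_filters, h, w)))
    h //= 2                                              # ASSUMED second 2 x 1 pool
    shapes.append(("pool2", (n_filters, h, w)))

    shapes.append(("flatten", (n_filters * h * w,)))
    shapes.append(("fc1", (256,)))                       # dropout 0.5 applies in training
    shapes.append(("fc2", (256,)))
    shapes.append(("softmax", (2,)))                     # cough vs. non-cough
    return shapes


if __name__ == "__main__":
    for name, shape in trace_shapes():
        print(f"{name:8s} {shape}")
```

Under these assumptions the flattened feature vector feeding the first fully‑connected layer has 16 × 12 × 12 = 2,304 entries, which is consistent with the summary's description of the network as modest in size.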

The dataset is split 70 % for model building and 30 % for testing; the former is further divided 80 %/20 % for training/validation to tune hyper‑parameters. Data augmentation is performed by re‑buffering with a 4‑frame (25 %) overlap. Training converges after about 50 epochs (≈1 hour).
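
A rough sketch of the re‑buffering and the nested split is given below. It assumes the overlap is applied between consecutive 16‑frame windows (so the hop is 12 frames) and that the split is a random shuffle over segments; the summary does not say whether the authors split by segment or by participant, and the function names and seed are hypothetical.

```python
import numpy as np


def rebuffer(frames, window=16, overlap=4):
    """Slice a (n_frames, frame_len) array into windows sharing `overlap` frames.

    Requires at least `window` frames; shorter inputs raise a ValueError
    from np.stack because no window can be formed.
    """
    hop = window - overlap                       # 12-frame hop for a 25 % overlap
    starts = range(0, len(frames) - window + 1, hop)
    return np.stack([frames[s : s + window] for s in starts])


def split_indices(n_segments, seed=0):
    """70/30 build/test split, then 80/20 train/validation inside the build set."""
    rng = np.random.default_rng(seed)            # fixed seed is an illustrative choice
    order = rng.permutation(n_segments)
    n_build = int(round(0.7 * n_segments))
    build, test = order[:n_build], order[n_build:]
    n_train = int(round(0.8 * len(build)))
    return build[:n_train], build[n_train:], test
```

For example, 40 frames yield three windows starting at frames 0, 12, and 24, and the last four frames of each window reappear as the first four of the next.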

Two experiments evaluate the system. Experiment 1 compares the learned CNN features against traditional hand‑crafted MFCC features. A softmax classifier and a linear SVM are trained on MFCCs, and a linear SVM is trained on raw STFT data. The CNN achieves 94.0 % sensitivity and 91.7 % specificity, outperforming the STFT‑SVM by ~10 % and surpassing MFCC‑based classifiers, indicating that the CNN extracts more discriminative time‑frequency patterns for cough detection.
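
For reference, sensitivity and specificity are the true‑positive rate on cough segments and the true‑negative rate on non‑cough segments. The helper below is a generic sketch of those standard definitions, with label 1 assumed to mean "cough"; it is not taken from the paper, and the toy labels in the usage note are made up.

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels, 1 = cough (ASSUMED)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one positive and one negative example")
    return tp / (tp + fn), tn / (tn + fp)
```

With toy labels `[1, 1, 1, 1, 0, 0, 0, 0]` and predictions `[1, 1, 1, 0, 0, 0, 0, 1]`, one cough is missed and one non‑cough is flagged, so both measures equal 0.75.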

Experiment 2 pits the full DeepCough pipeline against a conventional MFCC‑based Hidden Markov Model (HMM) with 10 states per class. Test windows (~320 ms) are classified by averaging the CNN’s segment‑level probabilities, while the HMM uses log‑likelihood over MFCC sequences. On the piezo sensor data, DeepCough reaches 95.1 % sensitivity and 99.5 % specificity, markedly higher than the HMM’s performance. Comparable results are obtained on microphone recordings, confirming that the sensor design does not limit the method’s efficacy.
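
The window‑level decision can be illustrated with a short sketch. It assumes each ~320 ms test window is covered by five 64 ms segments and that the averaged cough probability is compared against a 0.5 threshold; the summary states only that segment‑level probabilities are averaged, so the segment count, the threshold, and the function name are hypothetical.

```python
import numpy as np


def classify_window(segment_cough_probs, threshold=0.5):
    """Average per-segment P(cough) over one test window and threshold the mean.

    `threshold` is an ASSUMED decision rule, not a value from the paper.
    """
    probs = np.asarray(segment_cough_probs, dtype=float)
    mean_prob = float(probs.mean())
    return mean_prob, mean_prob >= threshold
```

Averaging lets a single low‑confidence segment, such as the 0.2 in `[0.9, 0.8, 0.2, 0.7, 0.9]`, be outvoted by its neighbours within the same window.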

The authors conclude that (1) a purpose‑built piezo sensor can provide high‑quality cough‑relevant acoustic signals, (2) a modest CNN with two convolutional layers can learn robust features directly from short‑duration spectrograms, and (3) the end‑to‑end system outperforms prior MFCC‑HMM approaches in both sensitivity and specificity. Future work will explore recurrent or transformer architectures better suited to longer temporal contexts and will involve prolonged passive data collection from patients to capture spontaneous coughs in real‑world settings.
