Jet-Images: Computer Vision Inspired Techniques for Jet Tagging

We introduce a novel approach to jet tagging and classification through the use of techniques inspired by computer vision. Drawing parallels to the problem of facial recognition in images, we define a jet-image using calorimeter towers as the elements of the image and establish jet-image preprocessing methods. For the jet-image processing step, we develop a discriminant for classifying the jet-images derived using Fisher discriminant analysis. The effectiveness of the technique is shown within the context of identifying boosted hadronic W boson decays with respect to a background of quark- and gluon-initiated jets. Using Monte Carlo simulation, we demonstrate that this technique provides additional discriminating power over other substructure approaches and gives significant insight into the internal structure of jets.

💡 Research Summary

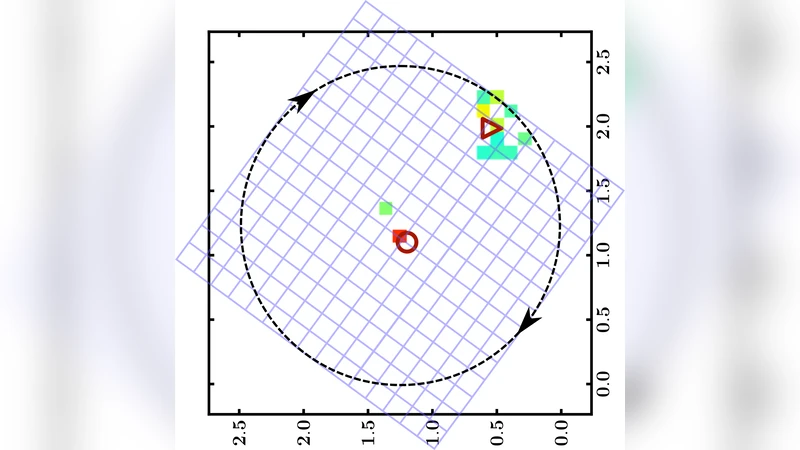

The paper “Jet‑Images: Computer Vision Inspired Techniques for Jet Tagging” introduces a novel methodology for classifying high‑energy jets by treating calorimeter tower deposits as pixels of an image and applying computer‑vision preprocessing and linear discriminant analysis. The authors first construct a “jet‑image” by mapping the transverse energy deposited in each calorimeter tower onto a fixed‑size η–φ grid with a granularity of 0.1 × 0.1, centered on the jet axis and spanning a 2R × 2R window. This representation yields a uniform‑dimensionality vector for every jet, enabling the direct use of image‑processing tools.
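The pixelization step described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code: the function name `make_jet_image`, its arguments, and the default jet radius `R=1.0` are assumptions made for the example; only the 0.1 × 0.1 cell size and the 2R × 2R window come from the summary.

```python
import numpy as np

def make_jet_image(eta, phi, et, jet_eta, jet_phi, R=1.0, cell=0.1):
    """Bin tower transverse energies onto an eta-phi grid centred on the jet axis.

    Hypothetical helper: R and the argument layout are illustrative assumptions.
    Returns a square array with 2R/cell pixels per side.
    """
    eta = np.asarray(eta, dtype=float)
    phi = np.asarray(phi, dtype=float)
    et = np.asarray(et, dtype=float)

    # Coordinates relative to the jet axis; wrap the azimuthal
    # difference into [-pi, pi) so jets near phi = +/-pi are handled.
    d_eta = eta - jet_eta
    d_phi = (phi - jet_phi + np.pi) % (2 * np.pi) - np.pi

    n_pix = int(round(2 * R / cell))
    edges = np.linspace(-R, R, n_pix + 1)

    # Each pixel holds the summed transverse energy of the towers inside it;
    # towers outside the 2R x 2R window are dropped by histogram2d.
    image, _, _ = np.histogram2d(d_eta, d_phi, bins=(edges, edges), weights=et)
    return image
```

Every jet then yields an array of the same shape, which can be flattened into a fixed-length vector for the later linear analysis.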

To make the images comparable, a series of preprocessing steps, directly inspired by facial‑recognition pipelines, is applied. Noise from pile‑up is mitigated using trimming, which removes soft sub‑jets. The leading sub‑jets (or “points of interest”) are identified, and the image is then aligned in three steps: a rotation makes the principal axis (or, for a two‑pronged decay, the line connecting the two leading sub‑jets) vertical; a translation moves the energy centroid (or leading sub‑jet) to the middle of the pixel array; and a reflection ensures that the hardest radiation always appears on the same side. After alignment, each image is normalized so that its self‑dot product (the sum of its squared pixel intensities) equals one, removing dependence on the absolute jet energy. Optionally, jets can be binned in auxiliary variables (e.g., total transverse energy or ΔR between sub‑jets) and separate discriminants trained per bin, analogous to grouping faces by expression.
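The alignment and normalization steps can be illustrated with the following sketch. It makes several assumptions that are not in the source: the positions of the leading and subleading sub‑jets are taken as given (sub‑jet finding and trimming are not shown), the alignment is applied to tower coordinates before pixelization rather than to the pixel array, and the helper names `align_towers` and `normalize` are hypothetical.

```python
import numpy as np

def align_towers(x, y, et, lead, sublead):
    """Translate, rotate and reflect tower coordinates (illustrative only).

    lead, sublead: (x, y) positions of the two leading sub-jets,
    assumed to have been found by an earlier step not shown here.
    """
    et = np.asarray(et, dtype=float)

    # Translation: put the leading sub-jet at the origin.
    x = np.asarray(x, dtype=float) - lead[0]
    y = np.asarray(y, dtype=float) - lead[1]

    # Rotation: place the subleading sub-jet directly below the leading one,
    # so the line connecting them is vertical.
    alpha = np.arctan2(sublead[1] - lead[1], sublead[0] - lead[0])
    theta = -np.pi / 2 - alpha
    c, s = np.cos(theta), np.sin(theta)
    xr = c * x - s * y
    yr = s * x + c * y

    # Reflection: flip horizontally if more energy lies on the left,
    # so the harder side is always at positive x.
    if et[xr < 0].sum() > et[xr > 0].sum():
        xr = -xr
    return xr, yr

def normalize(image):
    """Scale an image so that the sum of its squared pixels equals one."""
    norm = np.sqrt(np.sum(image ** 2))
    return image / norm if norm > 0 else image
```

Rotating continuous coordinates avoids the interpolation that rotating an already pixelized image would need; whether the original analysis does it this way is not stated in the summary.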

With preprocessed jet‑images in hand, the classification problem becomes a linear one in a high‑dimensional space. The authors evaluate several linear classifiers and find Fisher’s Linear Discriminant (FLD) to be superior to Principal Component Analysis (PCA) because FLD explicitly maximizes inter‑class separation while minimizing intra‑class scatter. A regularized implementation of FLD is employed to avoid over‑training on limited Monte‑Carlo samples. Training on two classes—boosted hadronic W‑boson jets (signal) and QCD‑initiated quark/gluon jets (background)—produces a Fisher‑jet, a weight image of the same dimensionality as the inputs. The discriminant value D for any jet is simply the dot product of its image with the Fisher‑jet; positive D indicates signal‑like, negative D background‑like. Because the Fisher‑jet itself can be visualized, the method offers immediate physical insight: pixels with large positive weights correspond to features characteristic of a two‑prong W decay (e.g., two localized energy deposits), while negative weights highlight regions typical of diffuse QCD radiation.
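As a rough illustration of how a Fisher‑jet and the discriminant D could be computed, the sketch below solves the standard two‑class Fisher problem with NumPy. The ridge term `reg` added to the within‑class scatter matrix is an assumed, simple form of regularization; the summary does not specify which scheme the authors use, and the function names are hypothetical.

```python
import numpy as np

def fisher_jet(signal, background, reg=1e-3):
    """Two-class Fisher discriminant returned as a weight image.

    signal, background: arrays of shape (n_jets, n_pix, n_pix).
    reg: ridge term added to the scatter matrix (an assumption,
         not necessarily the regularization used in the paper).
    """
    s = signal.reshape(len(signal), -1)
    b = background.reshape(len(background), -1)
    mu_s, mu_b = s.mean(axis=0), b.mean(axis=0)

    # Within-class scatter: summed scatter of each class about its own mean.
    s_w = (s - mu_s).T @ (s - mu_s) + (b - mu_b).T @ (b - mu_b)
    s_w += reg * np.eye(s_w.shape[0])

    # Fisher direction: S_w^{-1} (mu_signal - mu_background).
    w = np.linalg.solve(s_w, mu_s - mu_b)
    return w.reshape(signal.shape[1:])

def discriminant(image, fisher):
    """D = dot product of a jet-image with the Fisher-jet."""
    return float(np.sum(image * fisher))
```

Because the result has the same shape as the input images, it can be displayed directly (for example as a heat map) to see which pixels pull D towards signal or background.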

Performance is assessed using simulated 8 TeV proton‑proton collisions generated with Pythia 8, MadGraph, and Herwig++. Jets with transverse momenta between 200 and 250 GeV are selected, and pile‑up is added via minimum‑bias events. The Fisher‑jet classifier is compared against traditional substructure observables such as τ₂₁, mass‑drop, and girth, both individually and in multivariate combinations. Receiver‑Operating‑Characteristic (ROC) curves show that the jet‑image FLD achieves a higher area‑under‑curve (AUC) than any of the benchmark methods, especially in high‑pile‑up scenarios where the trimming‑based preprocessing preserves discriminating information.
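For readers unfamiliar with the ROC/AUC comparison, the following sketch shows how such a curve could be built from discriminant values for signal and background jets. The function names and inputs are hypothetical, and the example does not reproduce any numbers from the paper.

```python
import numpy as np

def roc_curve(d_sig, d_bkg):
    """Signal efficiency vs. background efficiency for cuts D >= threshold."""
    d_sig = np.asarray(d_sig, dtype=float)
    d_bkg = np.asarray(d_bkg, dtype=float)

    # Scan thresholds from the highest observed score to the lowest.
    thresholds = np.sort(np.concatenate([d_sig, d_bkg]))[::-1]
    tpr = np.array([(d_sig >= t).mean() for t in thresholds])
    fpr = np.array([(d_bkg >= t).mean() for t in thresholds])

    # Start the curve at (0, 0), i.e. a cut that rejects everything.
    return np.concatenate([[0.0], fpr]), np.concatenate([[0.0], tpr])

def auc(fpr, tpr):
    """Area under the ROC curve by the trapezoidal rule."""
    return float(np.sum(np.diff(fpr) * (tpr[1:] + tpr[:-1]) / 2))
```

An AUC of 0.5 corresponds to no separation and 1.0 to perfect separation, so a tagger with a higher AUC rejects more background on average at a given signal efficiency.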

The key contributions of the work are: (1) a systematic conversion of calorimeter data into a standardized image format, preserving raw constituent information; (2) a physics‑tailored preprocessing pipeline that removes irrelevant geometric and energy‑scale variations; (3) the application of a fast, interpretable linear discriminant that can be visualized as an image, providing both classification power and physical intuition; (4) demonstrable improvement over existing substructure techniques in a realistic simulation environment.

The authors suggest several avenues for future development. Extending the image representation to include additional channels—such as track‑based momentum, particle‑flow candidates, or longitudinal calorimeter segmentation—could increase resolution and enable richer feature extraction. Non‑linear deep‑learning models, particularly convolutional neural networks, could be trained on the same jet‑images to capture higher‑order correlations, potentially surpassing linear methods while still benefiting from the same preprocessing. Multi‑class extensions (e.g., distinguishing W, Z, top, and Higgs jets) are straightforward by training multiple FLDs or employing one‑vs‑rest schemes. Finally, applying the technique to real LHC data will require careful treatment of detector effects and systematic uncertainties, but the inherent interpretability of the Fisher‑jet image makes it a promising tool for both discovery and precision measurements.
