Hierarchical Deep Learning Architecture For 10K Objects Classification

The evolution of visual object recognition architectures based on Convolutional Neural Networks and Convolutional Deep Belief Networks has revolutionized artificial vision science. These architectures extract and learn real-world hierarchical visual features using supervised and unsupervised learning approaches, respectively. Neither approach, however, can yet scale realistically to recognize a very large number of objects, as many as 10K. We propose a two-level hierarchical deep learning architecture, inspired by the divide-and-conquer principle, that decomposes the large-scale recognition architecture into root-level and leaf-level model architectures. Each root and leaf model is trained separately, with the aim of providing better results than are possible with any single-level deep learning architecture prevalent today. The proposed architecture classifies objects in two steps. In the first step, the root-level model classifies the object into a high-level category. In the second step, the leaf-level recognition model for the recognized high-level category is selected from among all the leaf models and presented with the same input image, which it classifies into a specific category. We also propose a blend of leaf-level models trained with either supervised or unsupervised learning approaches; unsupervised learning is suitable whenever labelled data is scarce for a specific leaf-level model. Training of the leaf-level models is currently in progress: 25 of the 47 leaf-level models have been trained so far. The trained leaf models achieve a best-case top-5 error rate of 3.2% on the validation data set for the particular leaf models. We also demonstrate that the validation error of the leaf-level models saturates towards this error rate as the number of epochs is increased beyond sixty.

💡 Research Summary

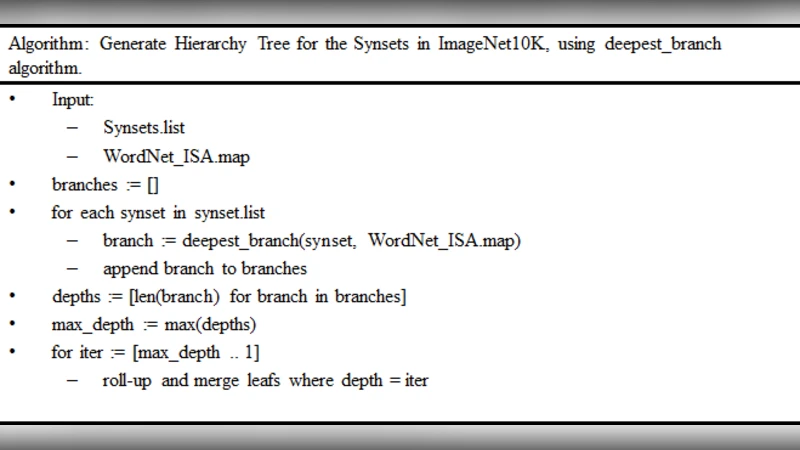

The paper addresses the challenging problem of recognizing ten thousand distinct visual object categories, a scale at which conventional single‑level deep convolutional networks or convolutional deep belief networks become impractical due to exploding parameter counts and computational demands. To overcome this limitation, the authors propose a two‑level hierarchical architecture inspired by the divide‑and‑conquer principle. The first level, called the “root” model, is a deep ResNet‑101‑style network (22 layers) pretrained on a massive ImageNet‑22K dataset and fine‑tuned to map any input image to a high‑level super‑category (e.g., animals, vehicles, furniture). The root model outputs a soft‑max distribution; the category with the highest probability is selected for the second stage.

The second level consists of a collection of “leaf” models, each specialized for one high‑level super‑category. There are 47 leaf models in total, each tasked with discriminating among several hundred to a few thousand fine‑grained classes within its domain. The leaf models adopt moderate‑size CNN backbones such as VGG‑16 or Inception‑V3. Crucially, the authors blend supervised and unsupervised learning across the leaf models, choosing the approach per model: when ample labeled data are available, standard cross‑entropy training is applied; when labels are scarce, a Restricted Boltzmann Machine‑based Deep Belief Network is first used to learn unsupervised feature representations, which are then fine‑tuned with the limited labeled samples. This hybrid strategy mitigates the label‑scarcity problem that often plagues fine‑grained sub‑tasks.
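The two-step inference described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: `classify_two_stage`, `toy_root`, and `toy_leaves` are hypothetical names, and the models are assumed to be plain callables that return logits.

```python
import numpy as np

def softmax(logits):
    """Numerically stable softmax over the last axis."""
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=-1, keepdims=True)

def classify_two_stage(image, root_model, leaf_models):
    """Route one image through the root model, then the selected leaf model.

    root_model:  callable, image -> logits over super-categories (assumed).
    leaf_models: list of callables, one per super-category, each mapping
                 image -> logits over that category's fine-grained classes.
    Returns (super_category_index, fine_class_index).
    """
    # Step 1: the root model picks the most probable super-category.
    root_probs = softmax(root_model(image))
    super_idx = int(np.argmax(root_probs))

    # Step 2: only the matching leaf model sees the same input image.
    leaf_probs = softmax(leaf_models[super_idx](image))
    fine_idx = int(np.argmax(leaf_probs))
    return super_idx, fine_idx

# Toy stand-ins for trained networks (hypothetical, fixed logits).
def toy_root(image):
    return np.array([0.1, 2.0, -1.0])

toy_leaves = [
    lambda image: np.array([1.0, 0.0]),
    lambda image: np.array([0.0, 0.5, 3.0]),
    lambda image: np.array([0.2, 0.1]),
]

result = classify_two_stage(np.zeros((8, 8, 3)), toy_root, toy_leaves)
```

Only one leaf model is evaluated per image, so inference cost stays close to that of two networks even though 47 leaf models exist. The fine-class index is local to the selected leaf model, which is why the sketch returns it together with the super-category index.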

Experimental results are reported for the 25 leaf models that have been fully trained so far. On their respective validation sets, these leaf models reach a best‑case top‑5 error rate of 3.2%. Training curves show that the validation error saturates once training runs past roughly 60 epochs, indicating that further epochs yield little additional gain on the given data. The root model itself attains a top‑1 accuracy of 96.8% on a held‑out set. However, the authors identify an “error propagation” issue: a misclassification at the root level prevents the correct leaf model from ever being invoked, causing a disproportionate drop in overall system accuracy. To alleviate this, they experiment with a top‑3 candidate selection at the root and apply a weighted ensemble of the corresponding leaf outputs, which yields an additional ~1.5% boost in overall top‑1 accuracy.
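One way to picture the top‑k selection with a weighted ensemble is the sketch below. The summary does not specify the weighting scheme, so this example assumes each leaf model's output is weighted by the renormalized root probability of its super‑category; `topk_weighted_ensemble` and the toy probabilities are hypothetical.

```python
import numpy as np

def topk_weighted_ensemble(root_probs, leaf_probs_by_category, k=3):
    """Combine leaf predictions from the k most probable super-categories.

    root_probs: 1-D array of root probabilities over super-categories.
    leaf_probs_by_category: list of 1-D arrays; entry c holds the leaf
        model's probabilities over the fine classes of super-category c.
    Returns (super_category_index, fine_class_index, joint_score).
    """
    # Indices of the k largest root probabilities, most probable first.
    top_categories = np.argsort(root_probs)[::-1][:k]
    # Assumed weighting: root probabilities renormalized over the top k.
    weights = root_probs[top_categories] / root_probs[top_categories].sum()

    best = None
    for c, w in zip(top_categories, weights):
        scores = w * leaf_probs_by_category[c]
        j = int(np.argmax(scores))
        if best is None or scores[j] > best[2]:
            best = (int(c), j, float(scores[j]))
    return best

# Toy case: the root slightly prefers category 0, but the leaf model of
# category 1 is far more confident about its best class.
root_probs = np.array([0.5, 0.4, 0.1])
leaf_probs = [
    np.array([0.4, 0.3, 0.3]),
    np.array([0.9, 0.1]),
    np.array([1.0]),
]

hard_routing = topk_weighted_ensemble(root_probs, leaf_probs, k=1)
soft_routing = topk_weighted_ensemble(root_probs, leaf_probs, k=3)
```

With `k=1` the function reduces to hard routing and selects category 0; with `k=3` the confident leaf model of category 1 wins. The example takes precomputed leaf outputs for brevity; in practice only the k selected leaf models would need to be run, so the cost grows with k.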

The paper also discusses practical constraints. Because each leaf model maintains its own set of parameters, the total memory footprint exceeds several gigabytes, which may be prohibitive for embedded or mobile deployments. The authors propose future work on parameter sharing, meta‑learning, and knowledge distillation to compress the leaf models, as well as joint optimization of root and leaf networks to reduce error propagation. They argue that the hierarchical design is well‑suited for real‑time vision applications, large‑scale image retrieval systems, and any scenario where the number of object categories far exceeds the capacity of a monolithic deep network.
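A back‑of‑the‑envelope estimate illustrates the scale of the memory concern. The sketch below makes loud assumptions: all 47 leaf models use a VGG‑16 backbone with its commonly cited size of roughly 138 million parameters, weights are stored as 32‑bit floats, and only the weights are counted (no activations or optimizer state). `footprint_gib` is a hypothetical helper, and the actual model sizes are not given in the summary.

```python
def footprint_gib(param_counts, bytes_per_param=4):
    """Total weight storage in GiB for a collection of models."""
    total_bytes = sum(param_counts) * bytes_per_param
    return total_bytes / 2**30

# Assumption: roughly 138 million parameters per VGG-16-sized leaf model.
VGG16_PARAMS = 138_000_000
NUM_LEAF_MODELS = 47  # number of leaf models stated in the text

leaf_param_counts = [VGG16_PARAMS] * NUM_LEAF_MODELS
estimate = footprint_gib(leaf_param_counts)            # float32 weights
estimate_fp16 = footprint_gib(leaf_param_counts, 2)    # float16 weights
```

Under these assumptions the weights alone come to roughly 24 GiB in float32 and about half that in float16, which suggests why parameter sharing and distillation are listed as future work. Smaller backbones would lower the figure considerably.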

In summary, the study contributes a scalable, two‑stage deep learning framework that combines supervised and unsupervised training to handle 10K‑class visual recognition. By decomposing the problem into a high‑level root classifier and multiple specialized leaf classifiers, the approach achieves competitive accuracy while offering a clear path toward further efficiency improvements and broader applicability.