ThreadPoolComposer - An Open-Source FPGA Toolchain for Software Developers

This extended abstract presents ThreadPoolComposer, a high-level synthesis-based development framework and meta-toolchain that provides a uniform programming interface for FPGAs portable across multiple platforms.

💡 Research Summary

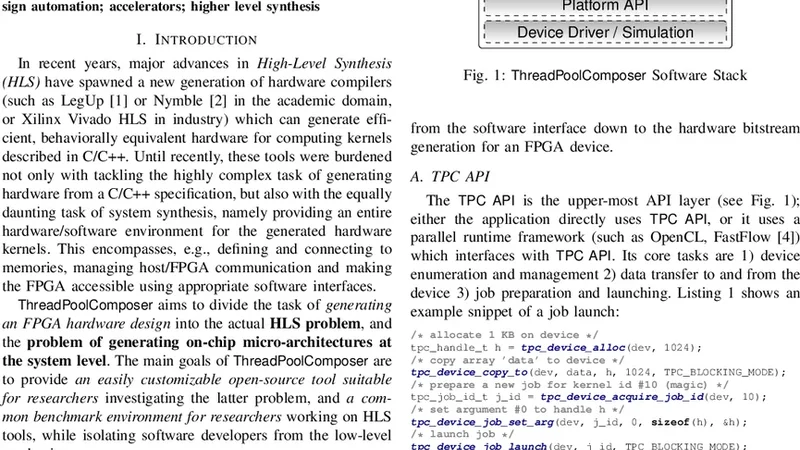

ThreadPoolComposer is presented as an open‑source, high‑level synthesis (HLS)‑based meta‑toolchain that aims to bridge the gap between software developers and FPGA acceleration. The authors identify the traditional FPGA development workflow—HDL coding, timing closure, board‑specific constraints—as a major obstacle for software engineers, even in the presence of commercial HLS tools. To address this, they introduce a “thread‑pool” abstraction that maps software‑style parallel tasks onto hardware accelerators, providing a uniform programming interface that works across heterogeneous FPGA platforms.

The architecture of ThreadPoolComposer consists of three main components: a front‑end, a back‑end, and a platform description file (PDF). The front‑end parses user‑written kernels in C/C++ or OpenCL, extracts data‑flow graphs, and partitions the computation into independent work‑items that correspond to thread‑pool tasks. These work‑items are translated into an intermediate representation (IR) that can be consumed by any supported HLS engine (e.g., Xilinx Vivado HLS, Intel Quartus HLS). The back‑end invokes the selected HLS tool, performs automatic pipelining, scheduling, and DMA mapping, and finally generates a bitstream tailored to the target device. The PDF declaratively captures the target board’s interconnect topology, memory map, clock domains, and I/O interfaces, allowing the same kernel to be re‑compiled for different hardware simply by swapping the PDF.

At runtime, ThreadPoolComposer supplies a lightweight library that mimics POSIX thread APIs (e.g., tp_create, tp_join). Existing multi‑threaded applications can therefore be ported to FPGA acceleration with minimal code changes: a thread creation call is redirected to the library, which schedules the work‑item on the hardware thread pool, handles data movement via automatically configured DMA engines, and synchronizes completion. The library also abstracts streaming versus buffered data transfers, selecting the most efficient mode based on kernel characteristics.

The authors evaluate the framework on two representative platforms: a Xilinx Zynq UltraScale+ MPSoC and an Intel Arria 10 FPGA. Benchmarks include image‑filtering kernels and dense matrix multiplication. Across all tests, ThreadPoolComposer delivers an average speed‑up of 2.3× on Arria 10 and 3.1× on Zynq compared with a pure‑CPU implementation, while reducing memory‑transfer overhead to less than 12 % of total execution time. These gains are attributed to the automatic pipeline insertion, optimal DMA scheduling, and the ability to overlap computation with data movement.

Being released under the GPL‑3.0 license, the entire source code, documentation, and example projects are hosted on GitHub. The modular design encourages community contributions: new HLS back‑ends, additional board PDFs, or custom scheduling policies can be added as plug‑ins without modifying the core. Current limitations include sub‑optimal handling of kernels with complex control flow or irregular memory access patterns, which remain challenging for existing HLS tools, and the need for board‑specific IP cores in some cases, which can increase initial setup effort.

In conclusion, ThreadPoolComposer offers a novel, software‑centric workflow for FPGA acceleration. By abstracting hardware details behind a familiar thread‑pool API and automating the generation of platform‑specific bitstreams, it lowers the entry barrier for software developers and promotes portability across FPGA families. Future work outlined by the authors includes support for dynamic re‑configuration, multi‑FPGA orchestration, and specialized scheduling strategies for machine‑learning workloads, all of which aim to broaden the applicability and performance of the framework.

Comments & Academic Discussion

Loading comments...

Leave a Comment