Two-Dimensional Kolmogorov Complexity and Validation of the Coding Theorem Method by Compressibility

We propose a measure based upon the fundamental theoretical concept in algorithmic information theory that provides a natural approach to the problem of evaluating $n$-dimensional complexity by using an $n$-dimensional deterministic Turing machine. The technique is interesting because it provides a natural algorithmic process for symmetry breaking, generating complex $n$-dimensional structures from perfectly symmetric and fully deterministic computational rules and producing a distribution of patterns as described by algorithmic probability. Algorithmic probability also elegantly connects the frequency of occurrence of a pattern with its algorithmic complexity, hence effectively providing estimates of the complexity of the generated patterns. Experiments to validate these estimates of algorithmic complexity are presented, showing that the measure is stable in the face of some changes in computational formalism and that the results agree with those obtained using lossless compression algorithms where the ranges of applicability of the two methods overlap. We then use the output frequency of the set of 2-dimensional Turing machines to classify the algorithmic complexity of the space-time evolutions of Elementary Cellular Automata.

💡 Research Summary

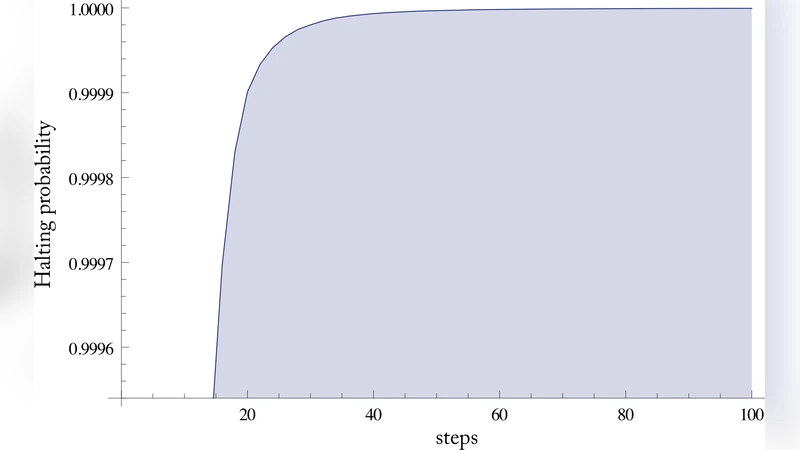

The paper introduces a practical method for estimating Kolmogorov complexity in two dimensions by applying the coding theorem (algorithmic probability) to a class of deterministic 2‑D Turing machines. The authors first formalize an n‑dimensional deterministic Turing machine, focusing on the 2‑D case where the tape is a rectangular grid and each cell holds a finite state. Transition rules read the current cell and its neighbourhood (typically the eight surrounding cells) and deterministically produce a new state together with a movement direction. By enumerating all machines within a bounded state space (e.g., 2–4 states) and running them from simple initial conditions (a single active cell on an otherwise blank grid), a large corpus of 2‑D patterns is generated. Each pattern is captured as a fixed‑size image; the frequency with which a pattern s appears across the whole ensemble approximates its algorithmic probability m(s). By the coding theorem, the Kolmogorov complexity K(s) can be estimated as −log₂ m(s) up to an additive constant, so frequently occurring symmetric or regular patterns receive low K values while rare, irregular patterns receive high K values.
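The enumerate-run-count pipeline described above can be sketched in a few lines of Python. This is a minimal illustration under simplifying assumptions, not the authors' implementation: the head reads only the cell under it (no neighbourhood), the machine starts on a fully blank grid, a halting transition writes its symbol without moving, and the output is the bounding box of visited cells. The names `run_machine`, `all_machines` and `ctm_estimates` are hypothetical.

```python
import itertools
import math
from collections import Counter

MOVES = [(0, 1), (0, -1), (1, 0), (-1, 0)]  # (row, col) offsets: right, left, down, up
HALT = -1                                   # assumed marker for the halting state


def normalise(grid, visited):
    """Crop the sparse grid to the bounding box of visited cells (assumed output convention)."""
    rows = [r for r, _ in visited]
    cols = [c for _, c in visited]
    return tuple(
        tuple(grid.get((r, c), 0) for c in range(min(cols), max(cols) + 1))
        for r in range(min(rows), max(rows) + 1)
    )


def run_machine(table, max_steps=50):
    """Run one machine from a blank grid; return its pattern, or None if it has not halted."""
    grid = {}                     # sparse grid, missing cells are blank (0)
    pos, state = (0, 0), 0
    visited = {pos}
    for _ in range(max_steps):
        new_state, write, move = table[(state, grid.get(pos, 0))]
        grid[pos] = write
        if new_state == HALT:
            return normalise(grid, visited)
        dr, dc = MOVES[move]
        pos = (pos[0] + dr, pos[1] + dc)
        visited.add(pos)
        state = new_state
    return None                   # treated as non-halting within the step budget


def all_machines(n_states, n_symbols=2):
    """Yield every transition table for the given number of states and symbols."""
    keys = [(q, s) for q in range(n_states) for s in range(n_symbols)]
    targets = list(range(n_states)) + [HALT]
    options = list(itertools.product(targets, range(n_symbols), range(len(MOVES))))
    for choice in itertools.product(options, repeat=len(keys)):
        yield dict(zip(keys, choice))


def ctm_estimates(n_states=1, max_steps=50):
    """Map each output pattern to -log2(frequency among halting machines)."""
    counts = Counter()
    for table in all_machines(n_states):
        pattern = run_machine(table, max_steps)
        if pattern is not None:
            counts[pattern] += 1
    total = sum(counts.values())
    return {p: -math.log2(c / total) for p, c in counts.items()}


if __name__ == "__main__":
    for pattern, k in sorted(ctm_estimates(n_states=1).items()):
        print(pattern, round(k, 3))
```

With a single state the example is deliberately trivial: under these conventions only machines that halt on their first step ever halt, so the two one-cell patterns share the probability mass equally. Setting `n_states=2` enumerates 331,776 tables and produces a richer distribution, at the cost of a noticeably longer run.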

Two validation experiments are reported. The first tests the stability of the measure under changes in the computational formalism: varying the number of machine states, randomising transition tables, or shifting the initial active cell does not substantially alter the estimated K for a given pattern, indicating that the distribution is robust and the sample of machines is representative. The second experiment compares the coding‑theorem‑based estimates with conventional lossless compression metrics (PNG, LZW, BZIP2). For the subset of patterns where compression yields meaningful size differences, the two methods show a strong positive correlation (Pearson r > 0.85). This demonstrates that the theoretical link between frequency and complexity translates into practical agreement with real‑world compression performance.
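The compression side of this comparison can be approximated with standard-library codecs. The sketch below is an assumption-laden stand-in rather than the paper's procedure: it flattens a 0/1 pattern row by row, one byte per cell, compresses it with `zlib` (Deflate, the scheme PNG builds on) or `bz2`, and provides a plain Pearson correlation that could be applied to paired lists of frequency-based estimates and compressed sizes. The helper names `compressed_bits` and `pearson` are hypothetical.

```python
import bz2
import math
import random
import zlib


def compressed_bits(pattern, codec="zlib"):
    """Compressed size in bits of a 2-D 0/1 pattern, flattened row by row (one byte per cell)."""
    raw = bytes(cell for row in pattern for cell in row)
    packed = zlib.compress(raw, 9) if codec == "zlib" else bz2.compress(raw)
    return 8 * len(packed)


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (undefined if either is constant)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the demo is repeatable
    size = 32
    patterns = {
        "blank": [[0] * size for _ in range(size)],
        "checkerboard": [[(r + c) % 2 for c in range(size)] for r in range(size)],
        "noise": [[rng.randint(0, 1) for _ in range(size)] for _ in range(size)],
    }
    for name, pattern in patterns.items():
        print(name, compressed_bits(pattern, "zlib"), compressed_bits(pattern, "bz2"))
```

General-purpose compressors carry a fixed header overhead, so on very small patterns their output sizes barely differ; this is one reason the comparison is only meaningful on the subset of patterns where compression yields distinguishable sizes.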

Having established reliability, the authors apply the frequency distribution of 2‑D Turing machines to classify the space‑time evolutions of the 256 Elementary Cellular Automata (ECA). Each ECA rule is visualised as a 2‑D image by stacking successive 1‑D configurations over time. The coding‑theorem estimator is then used to assign a complexity value to each rule’s evolution. The resulting ranking reproduces known qualitative categories (e.g., rule 30 as highly complex, rule 110 as intermediate, rules 0 and 255 as trivial) and provides finer granularity within the intermediate regime, revealing subtle differences that compression‑only approaches may miss.
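Stacking successive 1‑D configurations into a 2‑D array is straightforward to reproduce. The sketch below uses the standard Wolfram rule numbering; the single-cell initial condition, the periodic boundary and the default width and step count are assumptions chosen for illustration, and the function names are hypothetical.

```python
def eca_step(cells, rule):
    """Apply one step of an Elementary Cellular Automaton with periodic boundaries."""
    n = len(cells)
    return [
        # The neighbourhood (left, centre, right) read as a 3-bit number selects a bit of `rule`.
        (rule >> (cells[(i - 1) % n] * 4 + cells[i] * 2 + cells[(i + 1) % n])) & 1
        for i in range(n)
    ]


def eca_spacetime(rule, width=31, steps=15):
    """Return the space-time diagram as a list of rows, starting from a single active cell."""
    row = [0] * width
    row[width // 2] = 1
    rows = [row]
    for _ in range(steps):
        row = eca_step(row, rule)
        rows.append(row)
    return rows


if __name__ == "__main__":
    for rule in (30, 90, 110):
        print(f"rule {rule}")
        for row in eca_spacetime(rule):
            print("".join("#" if cell else "." for cell in row))
```

The resulting list of rows is the 2‑D object to which a complexity estimate would then be assigned.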

The paper’s contributions are threefold. First, it provides a concrete, computable framework for extending algorithmic‑information measures to higher‑dimensional objects, bridging a gap between theory and practice. Second, it empirically validates the coding‑theorem method against compression, confirming that algorithmic probability yields estimates consistent with established practical tools. Third, it demonstrates a novel application of the method to classify dynamical systems, suggesting that frequency‑based complexity can serve as a universal descriptor for a wide range of complex‑system models.

In the discussion, the authors note that the approach scales naturally to three or more dimensions and to non‑deterministic or probabilistic transition rules, opening the door to analysing video streams, volumetric medical imaging, neural recordings, or genomic data where spatial and temporal dimensions intertwine. They also acknowledge limitations: the exhaustive enumeration of machines becomes computationally expensive as the state space grows, and the constant term in the coding theorem remains unknown, so the estimated K values are meaningful only relative to one another, not as absolute quantities. Nevertheless, the work establishes a solid methodological foundation for using algorithmic probability as a quantitative lens on multidimensional data, offering a theoretically grounded alternative to purely compression‑based complexity measures.