Deep clustering: Discriminative embeddings for segmentation and separation

We address the problem of acoustic source separation in a deep learning framework we call "deep clustering." Rather than directly estimating signals or masking functions, we train a deep network to produce spectrogram embeddings that are discriminati…

Authors: John R. Hershey, Zhuo Chen, Jonathan Le Roux, Shinji Watanabe

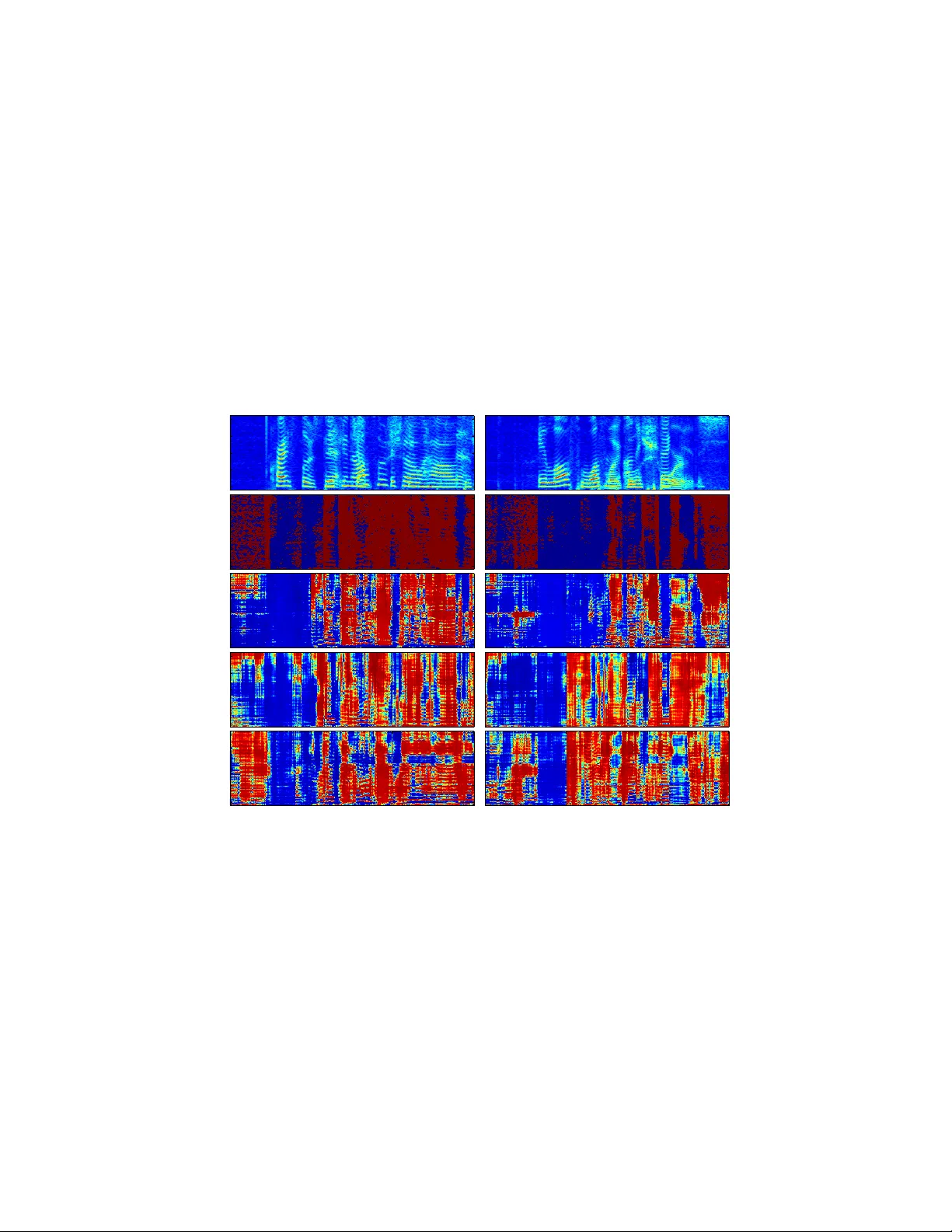

John R. Hershey (MERL, Cambridge, MA, hershey@merl.com); Zhuo Chen (Columbia University, New York, NY, zc2204@columbia.edu); Jonathan Le Roux (MERL, Cambridge, MA, leroux@merl.com); Shinji Watanabe (MERL, Cambridge, MA, watanabe@merl.com)

Abstract

We address the problem of acoustic source separation in a deep learning framework we call "deep clustering". Rather than directly estimating signals or masking functions, we train a deep network to produce spectrogram embeddings that are discriminative for partition labels given in training data. Previous deep network approaches provide great advantages in terms of learning power and speed, but it has been unclear how to use them to separate signals in a class-independent way. In contrast, spectral clustering approaches are flexible with respect to the classes and number of items to be segmented, but it has been unclear how to leverage the learning power and speed of deep networks. To obtain the best of both worlds, we use an objective function to train embeddings that yield a low-rank approximation to an ideal pairwise affinity matrix, in a class-independent way. This avoids the high cost of spectral factorization and instead produces compact clusters that are amenable to simple clustering methods. The segmentations are therefore implicitly encoded in the embeddings, and can be "decoded" by clustering. Preliminary experiments show that the proposed method can separate speech: when trained on spectrogram features containing mixtures of two speakers, and tested on mixtures of a held-out set of speakers, it can infer masking functions that improve signal quality by around 6 dB. We show that the model can generalize to three-speaker mixtures despite training only on two-speaker mixtures.
The framework can be used without class labels, and therefore has the potential to be trained on a diverse set of sound types, and to generalize to novel sources. We hope that future work will lead to segmentation of arbitrary sounds, with extensions to microphone array methods as well as image segmentation and other domains.

1 Introduction

In real world perception, we are often confronted with the problem of selectively attending to objects whose features are intermingled with one another in the incoming sensory signal. In computer vision, the problem of scene analysis is to partition an image or video into regions attributed to the visible objects present in the scene. In audio there is a corresponding problem known as auditory scene analysis [1, 2], which seeks to identify the components of audio signals corresponding to individual sound sources in a mixture signal. Both of these problems can be approached as segmentation problems, where we formulate a set of "elements" in the signal via an indexed set of features, each of which carries (typically multi-dimensional) information about part of the signal. For images, these elements are typically defined spatially in terms of pixels, whereas for audio signals they may be defined in terms of time-frequency coordinates. The segmentation problem is then solved by segmenting elements into groups or partitions, for example by assigning a group label to each element. Note that although clustering methods can be applied to segmentation problems, the segmentation problem is technically different in that clustering is classically formulated as a domain-independent problem based on simple objective functions defined on pairwise point relations, whereas partitioning may depend on complex processing of the whole input, and the task objective may be arbitrarily defined via training examples with given segment labels.
Segmentation problems can be broadly categorized into class-based segmentation problems, where the goal is to learn from training class labels to label known object classes, versus more general partition-based segmentation problems, where the task is to learn from labels of partitions, without requiring object class labels, to segment the input. Solving the partition-based problem has the advantage that unknown objects could then be segmented. In this paper, we propose a new partition-based approach which learns embeddings for each input element, such that the correct labeling can be determined by simple clustering methods. We focus on the single-channel audio domain, although our methods are applicable to other domains such as images and multi-channel audio. The motivation for segmenting in this domain, as we shall describe later, is that using the segmentation as a mask, we can extract parts of the target signals that are not corrupted by other signals.

Since class-based approaches are relatively straightforward, and have been tremendously successful at their task, we first briefly mention this general approach. In class-based vision models, such as [3–5], a hierarchical classification scheme is trained to estimate the class label of each pixel or super-pixel region. In the audio domain, single-channel speech separation methods, for example, segment the time-frequency elements of the spectrogram into regions dominated by a target speaker, either based on classifiers [6–8] or generative models [9–11]. In recent years, the success of deep neural networks for classification problems has naturally inspired their use in class-based segmentation problems [4, 12], where they have proven very successful.

However, class-based approaches have some important limitations.
First, of course, the assumed task of labeling known classes fundamentally does not address the general problem in real world signals that there may be a large number of possible classes, and many objects may not have a well-defined class. It is also not clear how to directly apply current class-based approaches to the more general problem. Class-based deep network models for separating sources require explicitly representing output classes and object instances in the output nodes, which leads to complexities in the general case. Although generative model-based methods can in theory be flexible with respect to the number of model types and instances after training, inference typically cannot scale computationally to the potentially larger problem posed by more general segmentation tasks.

In contrast, humans seem to solve the partition-based problem, since they can apparently segment well even with novel objects and sounds. This observation is the basis of Gestalt theories of perception, which attempt to explain perceptual grouping in terms of features such as proximity and similarity [13]. The partition-based segmentation task is closely related, and follows from a tradition of work in image segmentation and audio separation. Application of the perceptual grouping theory to audio segmentation is generally known as computational auditory scene analysis (CASA) [14, 15].

Spectral clustering is an active area of machine learning research with application to both image and audio segmentation. It uses local affinity measures between features of elements of the signal, and optimizes various objective functions using spectral decomposition of the normalized affinity matrix [16].
In contrast to conventional central clustering algorithms such as k-means, spectral clustering has the advantage that it does not require points to be tightly clustered around a central prototype, and can find clusters of arbitrary topology, provided that they form a connected sub-graph. Because of the local form of the pairwise kernel functions used, in difficult spectral clustering problems the affinity matrix has a sparse block-diagonal structure that is not directly amenable to central clustering, which works well when the block diagonal affinity structure is dense. The powerful but computationally expensive eigenspace transformation step of spectral clustering addresses this, in effect, by "fattening" the block structure, so that connected components become dense blocks, prior to central clustering [17].

Although affinity-based methods were originally unsupervised inference methods, multiple-kernel learning methods such as [17, 18] were later introduced to train weights used to combine separate affinity measures. This allows us to consider using them for partition-based segmentation tasks in which partition labels are available, but without requiring specific class labels. In [17], this was applied to speech separation by including a variety of complex features developed to implement various auditory scene analysis grouping principles, such as similarity of onset/offset, pitch, spectral envelope, and so on, as affinities between time-frequency regions of the spectrogram. The input features included a dual pitch-tracking model in order to improve upon the relative simplicity of kernel-based features, at the expense of generality.
Rather than using specially designed features and relying on the strength of the spectral clustering framework to find clusters, we propose to use deep learning to derive embedding features that make the segmentation problem amenable to simple and computationally efficient clustering algorithms such as k-means, using the partition-based training approach. Learned feature transformations known as embeddings have recently been gaining significant interest in many fields. Unsupervised embeddings obtained by auto-associative deep networks, used with relatively simple clustering algorithms, have recently been shown to outperform spectral clustering methods [19, 20] in some cases. Embeddings trained using pairwise metric learning, such as word2vec [21] using neighborhood-based partition labels, have also been shown to have interesting invariance properties. We present below an objective function that minimizes the distances between embeddings of elements within a partition, while maximizing the distances between embeddings for elements in different partitions. This appears to be an appropriate criterion for central clustering methods. The proposed embedding approach has the attractive property that all partitions and their permutations can be represented implicitly using the fixed-dimensional output of the network.

The experiments described below show that the proposed method can separate speech using a speaker-independent model with an open set of speakers at test time. As in [17], we derive partition labels by mixing signals together and observing their spectral dominance patterns. After training on a database of speaker mixtures labeled in this way, we show that without any modification the model shows a promising ability to separate three-speaker mixtures despite training only on two-speaker mixtures.
Although results are preliminary, the hope is that this work leads to methods that can achieve class-independent segmentation of arbitrary sounds, with additional application to image segmentation and other domains.

2 Learning deep embeddings for clustering

We define as $x$ a raw input signal, such as an image or a time-domain waveform, and as $X_n = g_n(x)$, $n \in \{1, \dots, N\}$, a feature vector indexed by an element $n$. In the case of images, $n$ typically may be a superpixel index and $X_n$ some vector-valued features of that superpixel; in the case of audio signals, $n$ may be a time-frequency index $(t, f)$, where $t$ indexes frames of the signal and $f$ frequencies, and $X_n = X_{t,f}$ the value of the complex spectrogram at the corresponding time-frequency bin. We assume that there exists a reasonable partition of the elements $n$ into regions, which we would like to find, for example to further process the features $X_n$ separately for each region. In the case of audio source separation, for example, these regions could be defined as the sets of time-frequency bins in which each source dominates, and estimating such a partition would enable us to build time-frequency masks to be applied to $X_n$, leading to time-frequency representations that can be inverted to obtain isolated sources.

To estimate the partition, we seek a $K$-dimensional embedding $V = f_\theta(x) \in \mathbb{R}^{N \times K}$, parameterized by $\theta$, such that performing some simple clustering in the embedding space will likely lead to a partition of $\{1, \dots, N\}$ that is close to the target one. In this work, $V = f_\theta(x)$ is based on a deep neural network that is a global function of the entire input signal $x$ (we allow for a feature extraction step to create the network input; in general, the input features may be completely different from $X_n$).
Thus our transformation can take into account global properties of the input, and the embedding can be considered a permutation- and cardinality-independent encoding of the network's estimate of the signal partition. Here we consider a unit-norm embedding, so that $|v_n|^2 = \sum_k v_{n,k}^2 = 1, \forall n$, where $v_n = \{v_{n,k}\}$ and $v_{n,k}$ is the value of the $k$-th dimension of the embedding for element $n$. We omit the dependency of $V$ on $\theta$ to simplify notations.

The partition-based training requires a reference label indicator $Y = \{y_{n,c}\}$, mapping each element $n$ to each of $c$ arbitrary partition classes, so that $y_{n,c} = 1$ if element $n$ is in partition $c$. For a training objective, we seek embeddings that enable accurate clustering according to the partition labels. To do this, we need a convenient expression that is invariant to the number and permutations of the partition labels from one training example to the next. One such objective for minimization is

$$
\begin{aligned}
C(\theta) = |V V^T - Y Y^T|_W^2
&= \sum_{i,j:\, y_i = y_j} \frac{(\langle v_i, v_j \rangle - 1)^2}{d_i} + \sum_{i,j:\, y_i \neq y_j} \frac{(\langle v_i, v_j \rangle - 0)^2}{\sqrt{d_i d_j}}, \qquad (1) \\
&= \sum_{i,j:\, y_i = y_j} \frac{|v_i - v_j|^2}{d_i} + \sum_{i,j} \frac{\left(|v_i - v_j|^2 - 2\right)^2}{4 \sqrt{d_i d_j}} - N, \qquad (2)
\end{aligned}
$$

where $|A|_W^2 = \sum_{i,j} w_{i,j} a_{i,j}^2$ is a weighted Frobenius norm, with $W = d^{-\frac{1}{2}} d^{-\frac{T}{2}}$, where $d = Y Y^T 1$ is an $(N \times 1)$ vector of partition sizes: that is, $d_i = |\{j : y_i = y_j\}|$. In the above we use the fact that $|v_n|^2 = 1, \forall n$. Intuitively, this objective pushes the inner product $\langle v_i, v_j \rangle$ to 1 when $i$ and $j$ are in the same partition, and to 0 when they are in different partitions. Alternately, we see from (2) that it pulls the squared distance $|v_i - v_j|^2$ to 0 for elements within the same partition, while preventing the embeddings from trivially collapsing into the same point. Note that the first term is the objective function minimized by k-means, as a function of cluster assignments, and in this context the second term is a constant.
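As a small numerical check of the two forms of the objective, the sketch below evaluates (1) as an explicit sum over pairs and (2) through squared distances, and confirms that they agree for unit-norm embeddings. The function names, the toy labels, and the random embeddings are illustrative assumptions and are not taken from the paper.

```python
import numpy as np

def partition_sizes(labels):
    """d[i] = number of elements that share the partition label of element i."""
    return np.bincount(labels)[labels].astype(float)

def objective_pairwise(V, labels):
    """Eq. (1): weighted squared error between V V^T and the ideal affinity."""
    d = partition_sizes(labels)
    gram = V @ V.T                                        # inner products <v_i, v_j>
    same = (labels[:, None] == labels[None, :]).astype(float)
    weights = 1.0 / np.sqrt(np.outer(d, d))               # equals 1/d_i inside a partition
    return float(np.sum(weights * (gram - same) ** 2))

def objective_distance(V, labels):
    """Eq. (2): the same value via squared distances (requires unit-norm rows)."""
    d = partition_sizes(labels)
    sq = np.sum((V[:, None, :] - V[None, :, :]) ** 2, axis=-1)
    same = (labels[:, None] == labels[None, :]).astype(float)
    pull = np.sum(same * sq / d[:, None])                 # k-means-like term
    spread = np.sum((sq - 2.0) ** 2 / (4.0 * np.sqrt(np.outer(d, d))))
    return float(pull + spread - len(labels))

# Toy data (hypothetical): 6 elements, 3 partitions, K = 4.
rng = np.random.default_rng(0)
labels = np.array([0, 0, 1, 1, 1, 2])
V = rng.normal(size=(6, 4))
V /= np.linalg.norm(V, axis=1, keepdims=True)             # unit-norm embeddings
one_hot = np.eye(3)[labels]                               # an ideal embedding

print(objective_pairwise(V, labels), objective_distance(V, labels))
print(objective_pairwise(one_hot, labels))                # zero for the ideal embedding
```

A one-hot embedding of the partition labels reaches the minimum of zero, which matches the intuition that inner products are pushed to 1 within a partition and to 0 across partitions.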
So the objective reasonably tries to lower the k-means score for the labeled cluster assignments at training time.

This formulation can be related to spectral clustering as follows. We can define an ideal affinity matrix $A^* = Y Y^T$, that is block diagonal up to permutation, and use an inner-product kernel, so that $A = V V^T$ is our affinity matrix. Our objective becomes $C = |A - A^*|_F^2$, which measures the deviation of the model's affinity matrix from the ideal affinity. Note that although this function ostensibly sums over all pairs of data points $i, j$, the low-rank nature of the objective leads to an efficient implementation, defining $D = \mathrm{diag}(Y Y^T 1)$:

$$
C = |V V^T - Y Y^T|_W^2 = |V^T D^{-\frac{1}{2}} V|_F^2 - 2 |V^T D^{-\frac{1}{2}} Y|_F^2 + |Y^T D^{-\frac{1}{2}} Y|_F^2, \qquad (3)
$$

which avoids explicitly constructing the $N \times N$ affinity matrix. In practice, $N$ is orders of magnitude greater than $K$, leading to a significant speedup. To optimize a deep network, we typically need to use first-order methods. Fortunately, derivatives of our objective function with respect to $V$ are also efficiently obtained due to the low-rank structure:

$$
\frac{\partial C}{\partial V^T} = 4 D^{-\frac{1}{2}} V V^T D^{-\frac{1}{2}} V - 4 D^{-\frac{1}{2}} Y Y^T D^{-\frac{1}{2}} V. \qquad (4)
$$

This low-rank formulation also relates to spectral clustering in that the latter typically requires the Nyström low-rank approximation to the affinity matrix [22] for efficiency, so that the singular value decomposition (SVD) of an $N \times K$ matrix can be substituted for the much more expensive eigenvalue decomposition of the $N \times N$ normalized affinity matrix. Rather than following spectral clustering in making a low-rank approximation of a full-rank model, our method can be thought of as directly optimizing a low-rank affinity matrix so that processing is more efficient and parameters are tuned to the low-rank structure.

At test time, we compute the embeddings $V$ on the test signal, and cluster the rows $v_i \in \mathbb{R}^K$, for example using k-means.
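The low-rank expressions (3) and (4) can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions: `Y` is a one-hot indicator matrix, `V` is treated as a free matrix (the unit-norm constraint is not enforced inside the function), and the function names and toy sizes are hypothetical. The dense version is included only to check the low-rank one on a small problem.

```python
import numpy as np

def objective_dense(V, Y):
    """Reference implementation that builds the N x N matrices explicitly."""
    d = Y @ Y.sum(axis=0)                      # d = Y Y^T 1, partition size per element
    A = V @ V.T - Y @ Y.T
    return float(np.sum(A ** 2 / np.sqrt(np.outer(d, d))))

def objective_lowrank(V, Y):
    """Eq. (3) and its gradient, Eq. (4), using only K x K, K x C, C x C products."""
    d = Y @ Y.sum(axis=0)
    s = 1.0 / np.sqrt(d)                       # diagonal of D^{-1/2}
    Vs = V * s[:, None]                        # D^{-1/2} V
    Ys = Y * s[:, None]                        # D^{-1/2} Y
    VtV = V.T @ Vs                             # V^T D^{-1/2} V  (K x K)
    VtY = V.T @ Ys                             # V^T D^{-1/2} Y  (K x C)
    YtY = Y.T @ Ys                             # Y^T D^{-1/2} Y  (C x C)
    cost = np.sum(VtV ** 2) - 2.0 * np.sum(VtY ** 2) + np.sum(YtY ** 2)
    grad = 4.0 * Vs @ VtV - 4.0 * Ys @ VtY.T   # same shape as V
    return float(cost), grad

# Toy problem (hypothetical sizes): N = 50 elements, K = 5, C = 3 partitions.
rng = np.random.default_rng(1)
labels = rng.integers(0, 3, size=50)
Y = np.eye(3)[labels]
V = rng.normal(size=(50, 5))
cost, grad = objective_lowrank(V, Y)
print(cost, objective_dense(V, Y))
```

The cost of the low-rank version grows linearly in N for fixed K and C, whereas the dense version needs memory and time quadratic in N.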
We also alternately perform a spectral-clustering style dimensionality reduction before clustering, starting with a singular value decomposition (SVD), $\tilde{V} = U S R^T$, of normalized $\tilde{V} = D^{-\frac{1}{2}} V$, where $D = V V^T 1_N$, sorted by decreasing eigenvalue, and clustering the normalized rows of the matrix of $m$ principal left singular vectors, with the $i$'th row given by $\tilde{u}_{i,r} = u_{i,r} / \sqrt{\sum_{r'=1}^{m} u_{i,r'}^2} : r \in [1, m]$, similar to [23].

3 Speech separation experiments

3.1 Experimental setup

We evaluate the proposed model on a speech separation task: the goal is to separate each speech signal from a mixture of multiple speakers. While separating speech from non-stationary noise is in general considered to be a difficult problem, separating speech from other speech signals is particularly challenging because all sources belong to the same class, and share similar characteristics. Mixtures involving speech from same-gender speakers are the most difficult since the pitch of the voice is in the same range. We here consider mixtures of two speakers and three speakers (the latter always containing at least two speakers of the same gender). However, our method is not limited in the number of sources it can handle or the vocabulary and discourse style of the speakers.

To investigate the effectiveness of our proposed model, we built a new dataset of speech mixtures based on the Wall Street Journal (WSJ0) corpus, leading to a more challenging task than in existing datasets. Existing datasets are too limited for evaluation of our model because, for example, the speech separation challenge [24] only contains mixtures of two speakers, with a limited vocabulary and insufficient training data. The SISEC challenge (e.g., [25]) is limited in size and designed for the evaluation of multi-channel separation, which can be easier than single-channel separation in general.
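The spectral-clustering style reduction described at the start of this passage can be sketched as below. This is an illustrative reading rather than the authors' code: it assumes the entries of `V` are non-negative (as with a logistic output layer) so that the row sums of $V V^T$ are positive, it computes those row sums without forming the N x N matrix, and the name `spectral_reduce` is hypothetical.

```python
import numpy as np

def spectral_reduce(V, m):
    """Return the row-normalized m principal left singular vectors of D^{-1/2} V."""
    d = V @ V.sum(axis=0)                      # V V^T 1_N, computed in O(N K)
    V_tilde = V / np.sqrt(d)[:, None]          # D^{-1/2} V; assumes d > 0
    # numpy returns singular values in decreasing order
    U, S, Rt = np.linalg.svd(V_tilde, full_matrices=False)
    U_m = U[:, :m]
    norms = np.linalg.norm(U_m, axis=1, keepdims=True)
    return U_m / np.maximum(norms, 1e-12)      # guard against all-zero rows

# Toy embeddings (hypothetical): N = 40 elements, K = 6, non-negative entries.
rng = np.random.default_rng(2)
V = np.abs(rng.normal(size=(40, 6)))
V /= np.linalg.norm(V, axis=1, keepdims=True)
U_red = spectral_reduce(V, m=3)
print(U_red.shape)
```

The rows of `U_red` would then be passed to a central clustering method such as k-means in place of the rows of `V`.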
A training set consisting of 30 hours of two-speaker mixtures was generated by randomly selecting utterances by different speakers from the WSJ0 training set si_tr_s, and by mixing them at various signal-to-noise ratios (SNR) between 0 dB and 5 dB. We also designed two training subsets of this whole training set (whole): one balanced the gender composition of the mixtures (balanced, 22.5 hours), and the other used only mixtures of female speakers (female, 7.5 hours). A 10-hour cross-validation set was generated similarly from the WSJ0 training set; it was used to optimize some tuning parameters and to evaluate source separation performance in the closed speaker experiments (closed speaker set). Five hours of evaluation data were generated similarly using utterances from sixteen speakers from the WSJ0 development set si_dt_05 and evaluation set si_et_05, whose speakers differ from those in our training and closed speaker sets (open speaker set). Note that many existing speech separation methods (e.g., [5, 26]) cannot handle the open speaker problem without special adaptation procedures, and generally require knowledge of the speakers in the evaluation. For the evaluation data, we also created 100 utterances of three-speaker mixtures for each of the closed and open speaker sets as an advanced setup.

All data were downsampled to 8 kHz before processing to reduce computational and memory costs. The input features $X$ were the log short-time Fourier spectral magnitudes of the mixture speech, computed with a 32 ms window length, an 8 ms window shift, and the square root of the Hann window. To ensure local coherency, the mixture speech was split into segments of 100 frames, roughly the length of one word in speech, and each segment was processed separately to output the embedding $V$ based on the proposed model. The ideal binary mask was used to build the target $Y$ when training our network.
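To make the sizes in this setup concrete, the sketch below converts the stated STFT settings into sample counts and builds a target indicator $Y$ from an ideal binary mask, assigning each time-frequency bin to the source with the largest magnitude. The random magnitudes stand in for real source spectrograms, the number of frequency bins assumes an FFT size equal to the window length, and all names are illustrative assumptions.

```python
import numpy as np

SAMPLE_RATE = 8000                      # Hz, after downsampling
WIN = 32 * SAMPLE_RATE // 1000          # 32 ms window -> 256 samples
HOP = 8 * SAMPLE_RATE // 1000           # 8 ms shift   -> 64 samples
N_BINS = WIN // 2 + 1                   # 129 bins, assuming FFT size == window length
SEG_FRAMES = 100                        # frames per segment

# Samples spanned by one 100-frame segment, and its duration in seconds.
seg_samples = (SEG_FRAMES - 1) * HOP + WIN
seg_seconds = seg_samples / SAMPLE_RATE

def ideal_binary_mask(source_mags):
    """source_mags: (C, T, F) magnitudes. Returns one-hot Y of shape (T*F, C)."""
    n_src = source_mags.shape[0]
    owner = source_mags.argmax(axis=0).reshape(-1)   # dominant source per bin
    return np.eye(n_src)[owner]

# Stand-in magnitudes for two sources over one segment (hypothetical data).
rng = np.random.default_rng(3)
mags = np.abs(rng.normal(size=(2, SEG_FRAMES, N_BINS)))
Y = ideal_binary_mask(mags)

print(seg_samples, seg_seconds)         # 6592 samples, 0.824 s
print(Y.shape)                          # (12900, 2): N = 12900 elements per segment
```

With N = 12900 elements per segment, an explicit $Y Y^T$ would hold about 1.66e8 entries, which illustrates why an objective that never forms the N x N affinity matrix is attractive.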
The ideal binary mask gives ownership of a time-frequency bin to the source whose magnitude is maximum among all sources in that bin. The mask values were set to 1 for active and 0 otherwise (binary), making $Y Y^T$ the ideal affinity matrix for the mixture.

To avoid problems due to the silence regions during separation, a binary weight for each time-frequency bin was used during the training process, only retaining those bins such that each source's magnitude at that bin is greater than some ratio (arbitrarily set to -40 dB) of the source's maximum magnitude. Intuitively, this binary weight guides the neural network to ignore bins that are not important to all sources.

3.2 Training procedure

Networks in the proposed model were trained given the above input $X$ and the ideal affinity matrix $Y Y^T$. The network structure used in our experiments has two bi-directional long short-term memory (BLSTM) layers, followed by one feedforward layer. Each BLSTM layer has 600 hidden cells, and the size of the feedforward layer corresponds to the embedding dimension (i.e., $K$). Stochastic gradient descent with momentum 0.9 and a fixed learning rate of $10^{-5}$ was used for training. In each updating step, Gaussian noise with zero mean and variance 0.6 was added to the weights. We prepared several networks for the speech separation experiments using different embedding dimensions from 5 to 60. In addition, two different activation functions (logistic and tanh) were explored to form the embedding $V$ with different ranges of $v_{n,k}$. For each embedding dimension, the weights of the corresponding network were initialized randomly from scratch according to a normal distribution with zero mean and variance 0.1, using the tanh activation and the whole training set. In the experiments with a different activation (logistic) and different training subsets (balanced and female), the network was initialized from the one trained with the tanh activation on the whole training set. The implementation was
The implementation was 5 T able 1: SDR improv ements (in dB) for dif ferent clustering methods. method closed speaker set open speaker set oracle NMF 5.06 - DC oracle k -means 6.54 6.45 DC oracle spectral 6.35 6.26 DC global k -means 5.87 5.81 T able 2: SDR impro vements (in dB) for different embedding dimensions K and acti v ation functions closed speaker set open speaker set model DC oracle DC global DC oracle DC global K = 5 -0.77 -0.96 -0.74 -1.07 K = 10 5.15 4.52 5.29 4.64 K = 20 6.25 5.56 6.38 5.69 K = 40 6.54 5.87 6.45 5.81 K = 60 6.00 5.19 6.08 5.28 K = 40 logistic 6.59 5.86 6.61 5.95 based on CURRENNT , a publicly a vailable training softw are for DNN and (B)LSTM networks with GPU support ( https://sourceforge.net/p/currennt ). 3.3 Speech separation pr ocedure In the test stage, the speech separation was performed by constructing a time-domain speech sig- nal based on time-frequency masks for each speaker . The time-frequency masks for each source speaker were obtained by clustering the row vectors of embedding V , where V was outputted from the proposed model for each segment (100 frames), similarly to the training stage. The number of clusters corresponds to the number of speakers in the mixture. W e ev aluated various types of cluster- ing methods: k -means on the whole utterance by concatenating the embeddings V for all segments; k -means clustering within each segment; spectral clustering within each segment. For the within- segment clusterings, one needs to solve a permutation problem, as clusters are not guaranteed to be consistent across se gments. For those cases, we report oracle permutation results (i.e., permutations that minimize the L 2 distance between the masked mixture and each source’ s comple x spectrogram) as an upper bound on performance. One interesting property of the proposed model is that it can potentially generalize to the case of three-speaker mixtures without changing the training procedure in Section 3.2. 
To verify this, speech separation experiments on three-speaker mixtures were conducted using the network trained with two-speaker mixtures, simply changing the above clustering step from 2 to 3 clusters. Of course, training the network on mixtures involving more than two speakers should improve performance further, but we shall see that the method does surprisingly well even without retraining.

As a standard speech separation method, supervised sparse non-negative matrix factorization (SNMF) was used as a baseline [26]. While SNMF may stand a chance of separating speakers in male-female mixtures when using a concatenation of bases trained separately on speech by other speakers of each gender, it would not make sense to use it in the case of same-gender mixtures. To give SNMF the best possible advantage, we use an oracle: at test time we give it the basis functions trained on the actual speakers in the mixture. For each speaker, 256 bases were learned on the clean training utterances of that speaker. Magnitude spectra with 8 consecutive frames of left context were used as input features. At test time, the basis functions for the two speakers in the test mixture were concatenated, and their corresponding activations computed on the mixture. The estimated models for each speaker were then used to build a Wiener-filter-like mask applied to the mixture, and the corresponding signals were reconstructed by inverse STFT.

For all experiments, performance was evaluated in terms of averaged signal-to-distortion ratio (SDR) using the bss_eval toolbox [27]. The initial SDR averaged over the mixtures was 0.16 dB for two-speaker mixtures and -2.95 dB for three-speaker mixtures.

Table 3: SDR improvement (in dB) for each type of mixture. Scores averaged over male-male (m+m), female-female (f+f), female-male (f+m), or all mixtures.
| method | training gender distribution | closed m+m | closed f+f | closed f+m | closed all | open m+m | open f+f | open f+m | open all |
|---|---|---|---|---|---|---|---|---|---|
| oracle NMF | speaker dependent | 3.25 | 3.31 | 6.53 | 4.90 | - | - | - | - |
| DC oracle permute | whole | 3.79 | 4.29 | 9.04 | 6.54 | 4.49 | 3.21 | 8.69 | 6.45 |
| DC oracle permute | balanced | 3.89 | 4.35 | 8.74 | 6.42 | 4.61 | 3.49 | 8.27 | 6.41 |
| DC oracle permute | female | - | 5.03 | - | - | - | 4.04 | - | - |
| DC global k-means | whole | 2.54 | 2.85 | 9.07 | 5.87 | 3.51 | 1.42 | 8.57 | 5.80 |
| DC global k-means | balanced | 2.78 | 2.87 | 8.63 | 5.72 | 3.89 | 1.74 | 8.27 | 5.83 |
| DC global k-means | female | - | 3.88 | - | - | - | 2.56 | - | - |

Table 4: SDR improvement (in dB) for three-speaker mixtures.

| method | closed speaker set | open speaker set |
|---|---|---|
| oracle NMF | 4.42 | - |
| DC oracle | 3.50 | 2.81 |
| DC global | 2.74 | 2.22 |

4 Results and discussion

As shown in Table 1, both the oracle and non-oracle clustering methods for the proposed system significantly outperform the oracle NMF baseline, even though the oracle NMF is a strong model with the important advantage of knowing the speaker identity and having speaker-dependent models. For the proposed system, the open speaker performance is similar to the closed speaker results, indicating that the system can generalize well to unknown speakers, without any explicit adaptation methods. For different clustering methods, the oracle k-means outperforms the oracle "spectral clustering" by 0.19 dB, showing that the embedding represents centralized clusters. To be fair, what we call spectral clustering here uses our outer product kernel instead of a local kernel function such as a Gaussian, as commonly used in spectral clustering. However, a Gaussian kernel could not be used here due to computational complexity. Also note that the oracle clustering method in our experiment resolves the permutation of two (or three in Table 4) speakers in each segment. In the dataset, each utterance usually contains 6 to 8 segments, so the permutation search space is relatively small for each utterance. Hence this problem may have an easy solution to be explored in future work.
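To put a rough number on the remark about the permutation search space, the following sketch counts the joint label alignments across the segments of one utterance. The counting convention is an assumption made here for illustration: each segment can take any of the k! orderings of its clusters, and one global relabeling is factored out, giving (k!)^(S-1) distinct alignments for S segments.

```python
from math import factorial

def joint_alignments(n_speakers, n_segments):
    """Distinct ways to align cluster labels across segments (up to global relabeling)."""
    return factorial(n_speakers) ** (n_segments - 1)

for n_segments in (6, 8):
    print(n_segments,
          joint_alignments(2, n_segments),   # two-speaker mixtures
          joint_alignments(3, n_segments))   # three-speaker mixtures
```

Under this convention, two-speaker utterances with 6 to 8 segments have between 32 and 128 alignments, so an exhaustive search is cheap; the count grows faster with three speakers (7776 to 279936), though it remains enumerable.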
For the non-oracle experiments, the whole-utterance clustering also performs relatively well compared to the baseline. Given that the system was only trained with individual segments, the effectiveness of the whole-utterance clustering suggests that the network learns features that are globally important, such as pitch, timbre, etc.

In Table 2, the K = 5 system completely fails, either because optimization of the current network architecture fails, or because the embedding fundamentally requires more dimensions. The performance of K = 20, K = 40, and K = 60 is similar, showing that the system can operate in a wide range of parameter values. We arbitrarily used tanh networks in most of the experiments because the tanh network has a larger embedding space than the logistic network. However, in Table 2, we show that in retrospect the logistic network performs slightly better than the tanh one.

In Table 3, since the female and male mixture is an intrinsically easier segmentation problem, the performance on female-male mixtures is significantly better than on same-gender mixtures in all situations. As mentioned in Section 3, the random selection of speakers would also be a factor in the large gap. With more balanced training data, the system has better performance for same-gender separation, at the cost of some performance on different-gender mixtures. If we only focus on female mixtures, the performance is still better.

Figure 2 shows an example of embeddings for two different mixtures (female-female and male-female), in which a few embedding dimensions are plotted for each time-frequency bin in order to show how they are sensitive to different aspects of each signal.

In Table 4, the proposed system can also separate mixtures of three speakers, even though it is only trained on two-speaker mixtures.
As discussed in previous sections, unlike many separation algorithms, deep clustering can naturally scale up to more sources, which makes it suitable for many real-world tasks where the number of sources is unknown or not fixed. Figure 1 shows one example of the separation for a three-speaker mixture in the open speaker set case. Note that we also did experiments with mixtures of three fixed speakers for the training and testing data, and the SDR improvement of the proposed system is 6.15 dB.

Figure 1: An example of three-speaker separation (axes: time in s, frequency in kHz). Top: log spectrogram of the input mixture. Middle: ideal binary mask for three speakers. The dark blue shows the silence part of the mixture. Bottom: output mask from the proposed system trained on two-speaker mixtures.

Deep clustering has been evaluated in a variety of conditions and parameter regimes, on a challenging speech separation problem. Since these are just preliminary results, we expect that further refinement of the model will lead to significant improvement. For example, by combining the clustering step into the embedding BLSTM network using the deep unfolding technique [28], the separation could be jointly trained with the embedding and potentially lead to better results. Also, in this work, the BLSTM network has a relatively uniform structure. Alternative architectures with different time and frequency dependencies, such as deep convolutional neural networks [3], or hierarchical recursive embedding networks [4], could also be helpful in terms of learning and regularization. Finally, scaling up training on databases of more disparate audio types, as well as applications to other domains such as image segmentation, would be prime candidates for future work.
Figure 2: Examples of embeddings for two mixtures: f+f (left) and f+m (right). 1st row: spectrogram; 2nd row: ideal binary mask; 3rd-5th rows: embeddings. (Axes: time in seconds, frequency in kHz.)

References

[1] A. S. Bregman, Auditory scene analysis: The perceptual organization of sound, MIT Press, 1994.
[2] C. J. Darwin and R. P. Carlyon, “Auditory grouping,” in Hearing, B. Moore, Ed. Elsevier, 1995.
[3] C. Farabet, C. Couprie, L. Najman, and Y. LeCun, “Learning hierarchical features for scene labeling,” IEEE Trans. PAMI, vol. 35, no. 8, pp. 1915–1929, 2013.
[4] A. Sharma, O. Tuzel, and M.-Y. Liu, “Recursive context propagation network for semantic scene labeling,” in Proc. NIPS, 2014, pp. 2447–2455.
[5] P. Smaragdis, “Convolutive speech bases and their application to supervised speech separation,” IEEE Trans. Audio, Speech, Language Process., vol. 15, no. 1, pp. 1–12, 2007.
[6] R. J. Weiss and D. P. Ellis, “Estimating single-channel source separation masks: Relevance vector machine classifiers vs. pitch-based masking,” in Proc. SAPA, 2006, pp. 31–36.
[7] G. Kim, Y. Lu, Y. Hu, and P. C. Loizou, “An algorithm that improves speech intelligibility in noise for normal-hearing listeners,” J. Acoust. Soc. Am., vol. 126, no. 3, pp. 1486–1494, 2009.
[8] Y. Wang, K. Han, and D. Wang, “Exploring monaural features for classification-based speech segregation,” IEEE Trans. Audio, Speech, Language Process., vol. 21, no. 2, pp. 270–279, 2013.
[9] S. T. Roweis, “Factorial models and refiltering for speech separation and denoising,” in Proc. Eurospeech, 2003, pp. 1009–1012.
[10] M. Schmidt and R. Olsson, “Single-channel speech separation using sparse non-negative matrix factorization,” in Proc. Interspeech, 2006, pp. 3111–3119.
[11] J. R. Hershey, S. J. Rennie, P. A. Olsen, and T. T. Kristjansson, “Super-human multi-talker speech recognition: A graphical modeling approach,” Comput. Speech Lang., vol. 24, no. 1, pp. 45–66, 2010.
[12] Y. Wang and D. Wang, “Towards scaling up classification-based speech separation,” IEEE Trans. Audio, Speech, Language Process., vol. 21, no. 7, pp. 1381–1390, 2013.
[13] M. Wertheimer, “Laws of organization in perceptual forms,” in A Source Book of Gestalt Psychology, W. A. Ellis, Ed., pp. 71–88. Routledge and Kegan Paul, 1938.
[14] M. P. Cooke, Modelling auditory processing and organisation, Ph.D. thesis, Univ. of Sheffield, 1991.
[15] D. P. W. Ellis, Prediction-Driven Computational Auditory Scene Analysis, Ph.D. thesis, MIT, 1996.
[16] J. Shi and J. Malik, “Normalized cuts and image segmentation,” IEEE Trans. PAMI, vol. 22, no. 8, pp. 888–905, 2000.
[17] F. R. Bach and M. I. Jordan, “Learning spectral clustering, with application to speech separation,” JMLR, vol. 7, pp. 1963–2001, 2006.
[18] C. Fowlkes, D. Martin, and J. Malik, “Learning affinity functions for image segmentation: Combining patch-based and gradient-based approaches,” in Proc. CVPR, 2003, vol. 2, pp. 54–61.
[19] F. Tian, B. Gao, Q. Cui, E. Chen, and T.-Y. Liu, “Learning deep representations for graph clustering,” in Proc. AAAI, 2014.
[20] P. Huang, Y. Huang, W. Wang, and L. Wang, “Deep embedding network for clustering,” in Proc. ICPR, 2014, pp. 1532–1537.
[21] T. Mikolov, I. Sutskever, K. Chen, G. S. Corrado, and J. Dean, “Distributed representations of words and phrases and their compositionality,” in Proc. NIPS, 2013, pp. 3111–3119.
[22] C. Fowlkes, S. Belongie, F. Chung, and J. Malik, “Spectral grouping using the Nyström method,” IEEE Trans. PAMI, vol. 26, no. 2, pp. 214–225, 2004.
[23] A. Y. Ng, M. I. Jordan, Y. Weiss, et al., “On spectral clustering: Analysis and an algorithm,” in Proc. NIPS, 2002, pp. 849–856.
[24] M. Cooke, J. R. Hershey, and S. J. Rennie, “Monaural speech separation and recognition challenge,” Comput. Speech Lang., vol. 24, no. 1, pp. 1–15, 2010.
[25] E. Vincent, S. Araki, F. Theis, G. Nolte, P. Bofill, H. Sawada, A. Ozerov, V. Gowreesunker, D. Lutter, and N. Q. Duong, “The signal separation evaluation campaign (2007–2010): Achievements and remaining challenges,” Signal Processing, vol. 92, no. 8, pp. 1928–1936, 2012.
[26] J. Le Roux, F. J. Weninger, and J. R. Hershey, “Sparse NMF – half-baked or well done?,” Tech. Rep. TR2015-023, MERL, Cambridge, MA, USA, Mar. 2015.
[27] E. Vincent, R. Gribonval, and C. Févotte, “Performance measurement in blind audio source separation,” IEEE Trans. Audio, Speech, Language Process., vol. 14, no. 4, pp. 1462–1469, 2006.
[28] J. R. Hershey, J. Le Roux, and F. Weninger, “Deep unfolding: Model-based inspiration of novel deep architectures,” Sep. 2014, arXiv:1409.2574.
