Multimodal Deep Learning for Robust RGB-D Object Recognition

Robust object recognition is a crucial ingredient of many, if not all, real-world robotics applications. This paper leverages recent progress on Convolutional Neural Networks (CNNs) and proposes a novel RGB-D architecture for object recognition. Our architecture is composed of two separate CNN processing streams, one for each modality, which are subsequently combined with a late fusion network. We focus on learning with imperfect sensor data, a typical problem in real-world robotics tasks. For accurate learning, we introduce a multi-stage training methodology and two crucial ingredients for handling depth data with CNNs. The first is an effective encoding of depth information for CNNs that enables learning without the need for large depth datasets. The second is a data augmentation scheme for robust learning with depth images that corrupts them with realistic noise patterns. We present state-of-the-art results on the RGB-D object dataset and show recognition in challenging, noisy real-world RGB-D settings.

Research Summary

This paper addresses the challenge of robust object recognition for robotics by exploiting both RGB and depth modalities through a novel multimodal deep learning architecture. The proposed system consists of two parallel convolutional neural network (CNN) streams—one processing the RGB image and the other processing the depth image—and a late‑fusion network that merges the high‑level features from both streams before the final classification layer.

Depth Encoding (Colorization)

A major obstacle is that depth images are single‑channel and differ substantially from the three‑channel natural images on which ImageNet‑pretrained CNNs were trained. Rather than using complex encodings such as HHA (height above ground, horizontal disparity, angle with gravity), the authors introduce a simple yet effective colorization scheme: depth values are first normalized to the 0‑255 range and then mapped to a Jet colormap, producing a three‑channel RGB‑like image where near objects appear red, mid‑range objects green, and far objects blue. This transformation distributes depth information across all three channels, preserving edges and object boundaries while keeping the computational overhead minimal. Experiments demonstrate that this colorization outperforms HHA and other handcrafted encodings on the RGB‑D Object dataset.
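The colorization step can be sketched in a few lines. The following is a minimal, dependency-free illustration, not the authors' implementation: the function names `jet` and `colorize_depth` are hypothetical, the colormap is a common piecewise-linear approximation of Jet, and treating non-positive depth readings as missing (rendered black) is an assumption made here for simplicity. A real pipeline would more likely use an array library and its built-in colormap.

```python
def jet(v):
    """Piecewise-linear approximation of the Jet colormap.

    v is a float in [0, 1]; v = 0 gives dark blue, v = 1 gives dark red.
    Returns an (R, G, B) tuple of ints in 0..255.
    """
    def clamp(x):
        return max(0.0, min(1.0, x))

    r = clamp(1.5 - abs(4.0 * v - 3.0))
    g = clamp(1.5 - abs(4.0 * v - 2.0))
    b = clamp(1.5 - abs(4.0 * v - 1.0))
    return (round(255 * r), round(255 * g), round(255 * b))


def colorize_depth(depth):
    """Turn a 2-D list of depth values into a 2-D list of RGB tuples.

    Assumption: values <= 0 are missing readings and are rendered black.
    Valid depths are normalized to integer levels 0..255, then mapped so
    that near pixels land at the red end and far pixels at the blue end.
    """
    valid = [d for row in depth for d in row if d > 0]
    if not valid:
        return [[(0, 0, 0) for _ in row] for row in depth]

    lo, hi = min(valid), max(valid)
    span = (hi - lo) or 1.0  # avoid division by zero on flat images

    out = []
    for row in depth:
        out_row = []
        for d in row:
            if d <= 0:
                out_row.append((0, 0, 0))
            else:
                level = round(255 * (d - lo) / span)  # 0 = nearest, 255 = farthest
                out_row.append(jet(1.0 - level / 255))  # invert: near -> red
        out.append(out_row)
    return out


if __name__ == "__main__":
    # One row with a near, a mid-range, and a far pixel (metres, illustrative).
    print(colorize_depth([[0.5, 1.0, 1.5]]))
```

Because the result has three channels in the 0-255 range, it can be fed to a network that expects ordinary RGB input without changing the first convolutional layer.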

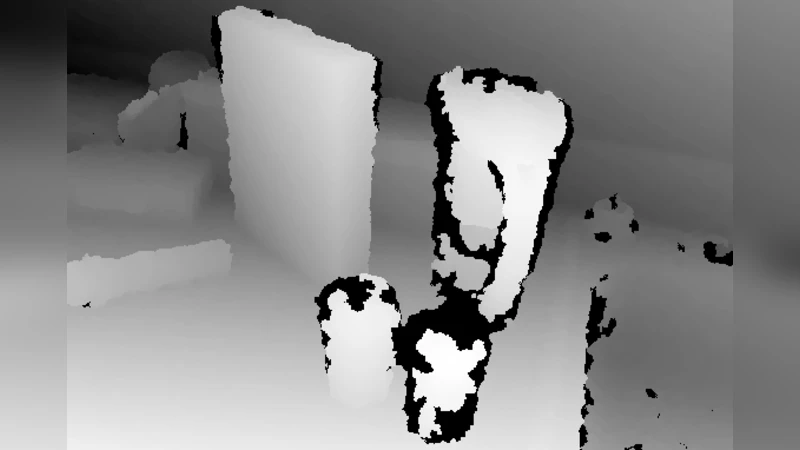

Noise‑Based Data Augmentation

Real‑world RGB‑D sensors frequently suffer from missing data, speckle noise, and occlusions. To make the depth stream robust to such imperfections, the authors generate synthetic training samples by corrupting depth images with realistic missing‑pixel patterns sampled from actual sensor recordings. The corrupted regions are set to zero (or a sentinel value) and surrounding pixels are tiled to simulate the “hole” effect. This augmentation is applied only to the depth stream during the final fine‑tuning stage, encouraging the network to learn features that are invariant to sensor noise and to rely more heavily on the complementary RGB information when depth is unreliable.
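As a rough illustration of the corruption step, the sketch below applies a boolean missing-pixel pattern to a depth image. It is a simplified assumption-laden example rather than the paper's pipeline: `corrupt_depth`, `augment_depth`, the `missing_value` default of zero, and the application probability `p` are all hypothetical names and choices, and the pool of patterns is assumed to have been extracted from real sensor recordings beforehand. Only the zeroing of corrupted pixels is shown.

```python
import random


def corrupt_depth(depth, pattern, missing_value=0.0):
    """Return a copy of `depth` with pixels marked True in `pattern` removed.

    depth   : 2-D list of depth values
    pattern : 2-D list of bools with the same shape (True = missing pixel)
    """
    if len(depth) != len(pattern) or any(
        len(d_row) != len(p_row) for d_row, p_row in zip(depth, pattern)
    ):
        raise ValueError("depth image and noise pattern must have the same shape")

    return [
        [missing_value if hole else d for d, hole in zip(d_row, p_row)]
        for d_row, p_row in zip(depth, pattern)
    ]


def augment_depth(depth, pattern_pool, rng, p=0.5):
    """With probability p, corrupt `depth` using a randomly chosen pattern.

    pattern_pool is assumed to hold masks sampled from real recordings;
    rng is a random.Random instance so that runs can be reproduced.
    """
    if pattern_pool and rng.random() < p:
        return corrupt_depth(depth, rng.choice(pattern_pool))
    return [row[:] for row in depth]  # unchanged copy


if __name__ == "__main__":
    clean = [[1.0, 2.0], [3.0, 4.0]]
    pool = [[[True, False], [False, True]]]
    print(augment_depth(clean, pool, random.Random(0), p=1.0))
```

Drawing the masks from recorded data, rather than from a parametric noise model, is what lets the corrupted training images resemble the holes a real sensor produces.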

Three‑Stage Training Procedure

- Individual Stream Pre‑training – Both RGB and depth streams are initialized with weights from a CaffeNet (AlexNet‑style) model trained on ImageNet. Each stream is then fine‑tuned separately on the target RGB‑D dataset by attaching a randomly initialized softmax classifier and minimizing the cross‑entropy loss.

- Fusion Network Training – After the streams have learned modality‑specific representations, their penultimate fully‑connected layers (fc7) are concatenated. A new fusion network (an inner‑product layer followed by a softmax) is added on top, and the whole system is fine‑tuned jointly. The authors also experiment with freezing the stream weights and training only the fusion layer, showing comparable results.

- Noise Augmentation Fine‑tuning – In the final stage, depth images are augmented with the synthetic missing‑pixel patterns described above. The network is trained again, allowing it to adapt its fusion strategy to noisy depth inputs.
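The late-fusion step in the second stage can be made concrete with a small forward-pass sketch. This is an illustration under stated assumptions, not the authors' network: the function names are hypothetical, the feature vectors are toy-sized stand-ins for the 4096-dimensional fc7 activations of each stream, and the weights are supplied by hand rather than learned.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def late_fusion(rgb_feat, depth_feat, weights, bias):
    """Concatenate per-stream features, apply one inner-product layer, then softmax.

    rgb_feat, depth_feat : feature vectors from the two streams (stand-ins for fc7)
    weights              : one row per class, each of length len(rgb_feat) + len(depth_feat)
    bias                 : one value per class
    Returns class probabilities.
    """
    fused = list(rgb_feat) + list(depth_feat)
    if any(len(row) != len(fused) for row in weights) or len(weights) != len(bias):
        raise ValueError("weight/bias shapes do not match the fused feature size")

    logits = [
        sum(w * x for w, x in zip(row, fused)) + b
        for row, b in zip(weights, bias)
    ]
    return softmax(logits)


if __name__ == "__main__":
    # Two classes, two features per stream (toy values).
    probs = late_fusion(
        rgb_feat=[2.0, 0.0],
        depth_feat=[0.0, 0.5],
        weights=[[1.0, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 1.0]],
        bias=[0.0, 0.0],
    )
    print(probs)
```

Since the fusion layer sees both feature vectors at once, its weights can learn to lean on the RGB features when the depth features are uninformative, which is the behavior the third training stage is meant to encourage.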

Experimental Validation

The method is evaluated on two benchmarks:

- Washington RGB‑D Object Dataset – The proposed architecture achieves state‑of‑the‑art classification accuracy, surpassing previous approaches that used hand‑crafted features, hierarchical sparse coding, or un‑fine‑tuned CNN features.

- RGB‑D Scenes Dataset – This dataset contains real‑world clutter and sensor noise. The authors show that both the depth colorization and the noise‑augmentation significantly improve performance; combined, they yield the highest reported accuracy on this challenging set.

Additional ablation studies compare image warping (resizing directly to the network input size, which distorts the aspect ratio) with the authors' "long-side scaling + border tiling" preprocessing. The latter preserves object aspect ratios and shape cues, leading to a measurable boost in recognition rates.
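One plausible reading of the "long-side scaling + border tiling" preprocessing is sketched below. The details are assumptions made for illustration: the helper names are hypothetical, nearest-neighbor sampling stands in for whatever interpolation the authors used, and "tiling" is interpreted as replicating the outermost rows or columns to fill the square.

```python
def scale_long_side(img, size):
    """Nearest-neighbor resize so the longer side equals `size`.

    img is a 2-D list of pixels (any pixel type); aspect ratio is preserved.
    """
    h, w = len(img), len(img[0])
    scale = size / max(h, w)
    new_h = max(1, round(h * scale))
    new_w = max(1, round(w * scale))
    return [
        [img[min(h - 1, int(y / scale))][min(w - 1, int(x / scale))] for x in range(new_w)]
        for y in range(new_h)
    ]


def tile_borders(img, size):
    """Pad to size x size by repeating the border rows/columns of `img`.

    The image is centered; positions outside it copy the nearest edge pixel.
    """
    h, w = len(img), len(img[0])
    top, left = (size - h) // 2, (size - w) // 2
    out = []
    for y in range(size):
        src_row = img[min(max(y - top, 0), h - 1)]
        out.append([src_row[min(max(x - left, 0), w - 1)] for x in range(size)])
    return out


def preprocess(img, size):
    """Scale the long side to `size`, then fill the short side from the borders."""
    return tile_borders(scale_long_side(img, size), size)


if __name__ == "__main__":
    wide = [[1, 2, 3, 4],
            [5, 6, 7, 8]]
    for row in preprocess(wide, 4):
        print(row)
```

Unlike plain warping, the object itself is never stretched here: only the padded region is synthetic, so silhouettes keep their original proportions.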

Contributions and Impact

- A practical depth‑to‑RGB colorization that enables direct reuse of ImageNet‑pretrained CNNs for depth data without costly retraining on large depth corpora.

- A realistic noise‑based data augmentation pipeline that enhances robustness to missing depth values, a common issue in robotic perception.

- A staged training strategy that efficiently adapts large‑scale CNNs to modest RGB‑D datasets while learning an end‑to‑end multimodal fusion.

Limitations and Future Work

While the colorization scheme is computationally cheap, it discards the absolute metric nature of depth, which may be important for tasks requiring precise geometry (e.g., pose estimation). Moreover, the current architecture processes each modality independently before fusion; tighter early‑fusion or 3‑D convolutional approaches could capture cross‑modal interactions earlier in the network. Future research may explore integrating point‑cloud‑based 3‑D CNNs, temporal sequences for dynamic scenes, or self‑supervised pre‑training on large unlabeled RGB‑D collections to further close the domain gap between synthetic and real sensor data.

Overall, the paper presents a well‑engineered, empirically validated solution that pushes RGB‑D object recognition toward the robustness required for real‑world robotic applications.
