Sparsity Based Methods for Overparameterized Variational Problems

Two complementary approaches have been extensively used in signal and image processing leading to novel results, the sparse representation methodology and the variational strategy. Recently, a new sparsity based model has been proposed, the cosparse …

Authors: Raja Giryes, Michael Elad, Alfred M. Bruckstein

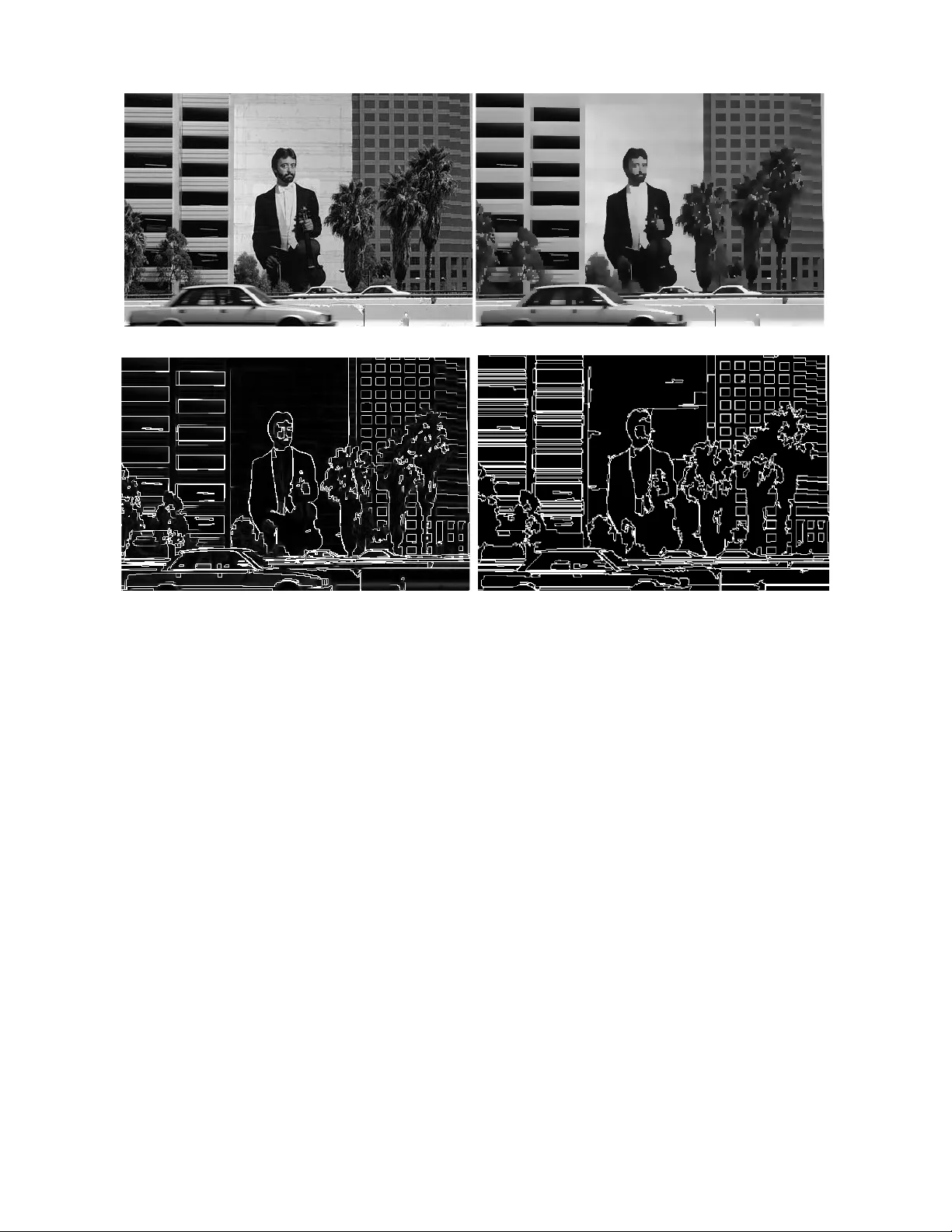

1 Sparsity Based Methods for Ov erparame terized V ariati onal Problems Raja Giryes * , Michael Elad ** , and Al fred M. Brucks tein ** * Department of Electrical and Computer En gineering, Duke Un i versity , Durham, North Carolina, 27 708, US A. raja.giryes@d uke.edu . ** Department of Compute r Science, T he T echnion - Israel Ins titute of T echnology , Haifa, 32000, Israel. { elad, freddy } @cs.technio n.ac.il Abstract —T wo complementary approaches ha ve been exten- siv ely used in signal and image proce ssin g leading to nov el results, the sparse representation methodology and the var iational strat- egy . Recently , a new sp arsity based model has been proposed, the cosparse analysis framewor k, which may potentially help in bridging sparse approximation based methods to the traditional total-varia tion minimization. Based on this, we intro du ce a spar - sity based framework fo r solving over parameterized variational problems. The latter has been used to improv e t he estimation of optical flow and also for general denoisin g of signals and images. Howe ver , the recov ery of the space vary in g p arameters i n volve d was n ot adeq uately addressed by traditional variational methods. W e fi rst demonstrate the efficiency of the new framework for one dimensional signals in reco vering a piecewise linear and polynomial function. Then, we illustrate how th e new technique can be used for denoising and segmentation of images. I . I N T R O D U C T I O N Many succ essful s ign al and imag e pro cessing tech niques rely on the fact that the given signals or images of in terest belong to a c lass descr ibed b y a cer tain a prio ri kn own model. Giv en the mod el, the signal is pro cessed b y estimating th e “correct” parameter s of the model. F or examp le, in the sparsity framework the assumption is th at the signals belo ng to a union of low dimension al subspace s [1], [ 2], [3], [4]. In the variational strategy , a model is imposed o n the variations of the signa l, e.g., its deriv atives are required to be smo oth [5], [6], [ 7], [8]. Thoug h both sparsity-based an d variational-based ap- proach es a re widely used for signa l processing and com puter vision, they are often viewed as two different m ethods with little in commo n between them. On e of the well known v ari- atonal too ls is th e total-variation regularization , used mainly for de noising an d inv erse pro blems. It can be formu lated as [6] min ˜ f g − M ˜ f 2 2 + λ ∇ ˜ f 1 , (1) where g = Mf + e ∈ R m are the giv en no isy measurements, M ∈ R m × d is a measurem ent matrix , e ∈ R m is an additive (typically white Gaussian) noise, λ is a regularizatio n parameter, f ∈ R d is the o riginal u nknown signal to be recovered, and ∇ f is its grad ients vector . The anisotrop ic version of (1), which we will use in th is work, is min ˜ f g − M ˜ f 2 2 + λ Ω DIF ˜ f 1 , (2) where Ω DIF is the finite-difference oper ator th at returns the deriv atives of th e signal. In the 1 D case it applies the filter [1 , − 1] , i.e., Ω DIF = Ω 1D-DIF = 1 − 1 0 . . . . . . 0 0 1 − 1 . . . . . . 0 . . . . . . . . . . . . . . . . . . 0 0 . . . 1 − 1 0 0 0 . . . . . . 1 − 1 , (3 ) For imag es it retu rns th e ho rizontal an d vertical deriv atives using the filters [1 , − 1 ] and [1 , − 1] T respectively . Note that for one dimen sional sign als th ere is no difference betwee n (1) an d (2) as the g radient eq uals the deriv ative. Howe ver , in the 2 D case the fir st (Eq. (1)) co nsiders the sum of g radients (square r oot o f the squared sum of the directional d eriv atives), while the seco nd ( Eq. (2)) con siders the absolute sum o f the directional der i vati ves, appro ximated b y finite differences. Recently , a very inter esting con nection has been drawn between the total-variation minimization p roblem and the sparsity m odel. It h as be en shown that (2) can be viewed as an ℓ 1 -relaxation techn ique f or appro ximating signals th at are sparse in their derivati ves domain, i. e., after apply ing the operator Ω DIF on them [ 9], [1 0], [1 1]. Such signals are said to be cospar se under the oper ator Ω DIF in the an alysis (co)sparsity model [10]. Notice that the TV regularization is only one examp le f rom the variational framework. An other recen t tec hnique, which is the focu s of this p aper, is th e overparameterizatio n idea, which represents the signal as a combina tion o f known functio ns weighted by spa ce-variant p arameters of the model [12], [1 3]. Let us introduce this overparameterize d model via an ex- ample. If a 1D sign al f is known to be piecewise linear, its i -th elem ent can be written as f ( i ) = a ( i ) + b ( i ) i , where a ( i ) and b ( i ) are the local coefficients describing the loca l line-curve. As such, the vectors a and b should be piecewise constant , with discon tinuities in the same locations. E ach constant inter val in a and b corresp onds to o ne linear segmen t 2 in f . When put in matrix- vector notatio n, f can be written alternatively as f = a + Zb , (4) where Z ∈ R d × d is a diagon al m atrix with the values 1 , 2 , . . . , d on its m ain diag onal. For imag es th is p arameteriza- tion wou ld similarly be f ( i, j ) = a ( i, j ) + b 1 ( i, j ) i + b 2 ( i, j ) j . This strategy is ref erred to as ”overparameter ization” be- cause the nu mber of representatio n parameter s is larger th an the signal size . In th e ab ove 1D example, while the original signal contain s d un known values, the r ecovery problem that seeks a and b ha s twice a s many variables. Clearly , th ere are m any other parameterization o ptions fo r signa ls, beyond the lin ear one. Such parameter izations have b een sh own to improve the denoising p erforman ce of the solutio n of th e problem posed in (1) in some cases [12], an d to provide very high q uality results fo r optical flow estimation [13], [1 4]. A. Ou r Contribution The true f orce behind overparameterization is that wh ile it uses more variables than n eeded fo r rep resenting th e sig nals, these are o ften more naturally suited to descr ibe its structure. For example, if a signal is piecewise linear then we m ay impose a constraint on the overpar ameterization coefficients a and b to be piecewise constant. Note that piecewise con stant signals are spar se under th e Ω DIF operator . Therefor e, for each o f the coefficients we can use the tools developed in the an alysis sparsity model [11], [15], [16], [1 7], [1 8]. H owe ver , in our case a and b ar e jointly sparse, i. e., the ir chan ge poin ts are collocated an d the refore an extension is necessary . Constraints o n the structur e in the sparsity pattern o f a representatio n have alread y be en an alyzed in the literature. They ar e comm only ref erred to as joint spar sity models, and those are foun d in the literature, both in the co ntext of h andling group s of signals [19], [2 0], [21], [22], [2 3], [24], or wh en considerin g bloc ks of non-zero s in a sin gle repr esentation vector [25], [26], [2 7], [28], [29] . W e use these tools to extend the existing analysis tech niques to handle the blo ck sparsity in o ur overparameterized scheme . In this paper we in troduce a general sparsity based fr ame- work for solving overparam eterized variational problem s. As the stru cture of these prob lems en ables segmentatio n wh ile recovering the signal, we provide an elegant way for r ecover - ing a signal f rom its deteriorated measuremen ts by usin g a n ℓ 0 approa ch, which is accompanied b y th eoretical g uarantees. W e demo nstrate the efficiency of the new fram e work for one dimensiona l functions in re covering piecewise polynomial sig- nals. Then we shift o ur view to imag es and d emonstrate how the n e w a pproach can be used f or deno ising and segmen tation. B. Organization This paper is organized as follows: In Sectio n II we present the overpara meterized variational mode l with mor e d etails. In Section III we describ e b riefly the synthesis a nd analy sis sparsity mo dels. In Sections IV an d V we introdu ce a new framework for solving overparam eterized v ariational pro blems using sparsity . Section IV pro poses a r ecovery strategy for the 1 D polynom ial case based on the SSCoSaMP tec hnique with optim al projections [30], [31], [32]. W e provide stable recovery g uarantees f or this algorithm fo r the case o f an additive adversarial n oise and den oising gu arantees for the case of a zero-mean wh ite Gaussian no ise. I n Section V we extend ou r scheme beyond the 1D case to higher dimensional polyno mial functions such as im ages. W e employ an extension of the GAPN alg orithm [3 3] for block spar sity for this task . In Section VI we present exper iments f or linear overparame teri- zation of imag es and on e dimensional signals. W e demonstra te how th e propo sed method can be used for image denoising and segmentation. Section VII concludes our work and proposes future direc tions of resear ch. I I . T H E O V E R PA R A M E T E R I Z E D V A R I AT I O N A L F R A M E W O R K Considering again the linear relation ship between the mea- surements and the un known sign al, g = Mf + e , (5) note th at witho ut a p rior k nowledge on f we cann ot recover it fro m g if m < d or e 6 = 0 . In the variational framework, a regular ization is imposed on the variations o f the signal f . O ne popu lar strategy f or recovering the signal in this framework is by solving th e following minimization pro blem: min ˜ f g − M ˜ f 2 2 + λ A ( ˜ f ) p , (6) where λ is the regularization weigh t and p ≥ 1 is the type of norm used with the regulariza tion operator A , whic h is typically a local operator . For examp le, for p = 1 and A = ∇ we g et the TV minimization (Eq. (2)). Another example for a regularization op erator is the Laplace operator A = ∇ 2 . Other types o f regularization op erators and variational formu lations can be f ound in [34], [3 5], [36], [3 7]. Recently , the overamete rized v ariatio nal framework has been intro duced as an exten sion to the trad itional variational methodo logy [12], [13], [ 14], [38]. Instead of a pplying a regularization on the signal itself, it is ap plied on the coef - ficients of the signal und er a global parameteriz ation of the space. Ea ch element o f the signal can be modeled as f ( i ) = P n j =1 b j ( i ) x j ( i ) , wh ere { b j } n j =1 are th e coefficients vectors and { x j } n j =1 contain th e parameterizatio n b asis fu nctions fo r the space. Denoting by X i , diag( x i ) the diagon al matrix that has the vector x i on its diagona l, we ca n rewrite th e above as f = P n j =1 X j b j . With these notations, the overparameterize d minimization pr oblem becomes min ˜ b i , 1 ≤ i ≤ n g − M n X i =1 X i ˜ b i 2 2 + n X i =1 λ i A i ( ˜ b i ) p i , (7) where each coefficient b i is regularized separately b y the op- erator A i (which can b e the sam e one fo r all the coefficients) 1 . 1 W e note that it is possible to have more than one regulari zation for each coef ficient, as pract iced in [38]. 3 Returning to the example of a linear overpar ameterization, we hav e that f = a + Zb , whe re in this case X 1 = I (the identity matrix) and X 2 = Z = diag (1 , . . . , d ) , a d iagonal matrix with 1 , . . . , d on its diagon al. If f is a piecewise linear functio n then the co efficients vectors a and b shou ld be piecewise con stant, an d th erefore it would be n atural to regularize these co effi cien ts with th e gradient o perator . T his leads to the f ollowing m inimization pro blem: min ˜ a , ˜ b g − M ˜ a + Z ˜ b 2 2 + λ 1 k∇ ˜ a k 1 + λ 2 ∇ ˜ b 1 , (8) which is a special case o f ( 7). The two main advantages of using the overpara meterized formu lation are these: (i) the new unknowns have a simpler form (e.g. a piece wise linear signal is treated by piecewise constant unknowns), and thus are easier to r ecover; an d (ii) this formu lation leads to recovering the parameters of the sign al along with the sig nal itself. The overparametriza tion idea, as introdu ced in [12], [13], [14], [38] builds upon the vast work in signal processing that refers to variational me thods. As such, there are n o k nown guaran tees for the qu ality of the recovery o f the signal, when using the form ulation po sed in (8) or its variants. M oreover , it has been shown in [38] that even for the case of M = I (and obviously , e 6 = 0 ), a po or recovery is achiev ed in recovering f and its param eterization coefficients. No te that the same happen s ev en if more sophisticated regularization s are comb ined and ap plied on a , b , an d eventually on f [38]. This leads us to lo ok for ano ther strategy to ap proach the problem of recovering a piecewise linear fun ction fr om its deterio rated m easurement g . Before d escribing o ur new scheme, we introduce in the n ext section the sparsity m odel that will aid us in d ev elop ing this alternative strategy . I I I . T H E S Y N T H E S I S A N D A N A L Y S I S S PA R S I T Y M O D E L S A popu lar p rior for recovering a sign al f fr om its distorted measuremen ts (as p osed in (5)) is the sparsity m odel [1], [2]. The idea behind it is that if we know a priori that f r esides in a union o f low d imensional subspaces, wh ich d o n ot in tersect trivially with the nu ll space o f M , th en we can estimate f stably by selecting the signal th at belo ngs to this unio n o f subspaces and is the closest to g [3], [ 4]. In the classical sparsity model, the signal f is assumed to have a sparse rep resentation α under a g i ven dictionary D , i.e., f = D α , k α k 0 ≤ k , where k·k 0 is th e ℓ 0 pseudo- norm that co unts the n umber of n on-zero entries in a vector , and k is the spar sity of the signal. Note that each low dimensional subspace in the stand ard sparsity m odel, known also as the synthesis m odel, is spanned b y a co llection of k colum ns from D . Wit h this model we can re cover f by solving min α k g − MD α k 2 2 s.t. k α k 0 ≤ k , (9) if k is k nown, or min α k α k 0 s.t. k g − MD α k 2 2 ≤ k e k 2 2 , (10) if we have information about the energy of the noise e . Obviously , on ce we g et α , the d esired r ecovered signal is simply D α . As both of these m inimization p roblems are NP- hard [39], many approx imation tech niques have been pro posed to approximate th eir so lution, a ccompanied with recovery guaran tees that d epend o n th e pro perties of the matrices M and D . These includ e ℓ 1 -relaxation [ 40], [ 41], [ 42], k nown also as LASSO [4 3], matching pursuit (MP) [44], o rthogon al matching pursuit (OMP) [45], [46], comp ressi ve sampling matching pu rsuit (CoSaMP) [47], sub space pursuit (SP) [48], iterativ e hard thresholding (IHT) [49] an d h ard thresholding pursuit (HTP) [50]. Another fra mew ork for m odeling a union o f low dimen- sional subspaces is the a nalysis one [10], [40]. This model considers the behavior of Ωf , the signal after applying a given operator Ω on it, and assumes that this vector is sp arse. Note that here the zero s a re those that characteriz e the subspace in which f resides, as e ach zer o in Ωf correspon ds to a row in Ω to which f is orthog onal to. Th erefore, f resides in a subspace orthog onal to th e o ne spanned by th ese rows. W e say that f is cosparse u nder Ω with a cosuppor t Λ if Ω Λ f = 0 , where Ω Λ is a sub-matr ix of Ω with the rows co rrespond ing to th e set Λ . The analy sis variants of (9) an d (10) for estimatin g f are min ˜ f g − M ˜ f 2 2 s.t. Ω ˜ f 0 ≤ k , (11) where k is the number o f non- zeros in Ωf , and min ˜ f Ω ˜ f 0 s.t. g − M ˜ f 2 2 ≤ k e k 2 2 . (12) As in the sy nthesis case, these m inimization prob lems are also NP-hard [1 0] and appr oximation techniqu es have bee n propo sed including Greed y Analysis Pu rsuit (GAP) [1 0], GAP noise (GAPN) [33], an alysis CoSAMP (ACoSaMP), analy sis SP (ASP), analysis IHT (AI HT) and analysis HTP (AHTP) [11]. I V . O V E R PA R A M E T E R I Z AT I O N V I A T H E A NA L Y S I S S PA R S I T Y M O D E L W ith the sparsity mo dels now defined , we re visit the overparameterization variational problem. I f we know that our signal f is piecewis e linear, then it is clear that the coefficients param eters should be piecewise co nstant with the same discontinuity locations , when lin ear overparameterization is used. W e denote by k the numb er of these d iscontinuity locations. As a reminder we rewrite f = [ I , Z ] a T , b T T . Note that a and b are jointly sparse un der Ω DIF , i.e, Ω DIF a and Ω DIF b have the same non -zero loc ations. With this o bservation we can extend the analysis minimization p roblem (11) to suppo rt the structu red sparsity in the vector a T , b T T , leading to the following minimization pro blem: min a , b g − M [ I , Z ] a b 2 2 (13) s.t. k| Ω DIF a | + | Ω DIF b |k 0 ≤ k , where | Ω DIF a | denotes applyin g eleme nt-wise absolute value on the entries o f Ω DIF a . 4 Note that we can ha ve a similar formulation for this problem also in the synth esis framework using the Heaviside diction ary D H S = 1 1 . . . 1 1 0 1 . . . . . . 1 . . . 0 . . . . . . . . . . . . . . . . . . 1 1 0 0 . . . 0 1 , (14) whose atoms are step functions o f different len gth. W e use the known observation that ev ery one dimension al signal with k change points c an be sparsely represented using k + 1 atom s from D H S ( k co lumns for representin g the change p oints plu s one for the DC). One way to ob serve that is b y the fact that Ω DIF ˜ D H S = I , wh ere ˜ D H S is a subma trix of D H S obtained by removing the last column of D H S (the DC compon ent). Therefo re, o ne may recover th e coefficient parameter s a and b , by their spar se repr esentations α and β , solv ing min α , β g − M [ I , Z ] D H S 0 0 D H S α β 2 2 (15) s.t. k| α | + | β |k 0 ≤ k , where a = D H S α an d b = D H S β . This minim ization problem ca n b e app roximated using block- sparsity techniqu es such as the gr oup-LASSO estimator [ 25], the mixed- ℓ 2 /ℓ 1 relaxation (extension of th e ℓ 1 relaxation) [26], [ 27], the Block OMP (BOMP) algorithm [28] or the extensions o f CoSaMP and IHT f or structured sparsity [29]. The joint sparsity frame- work can also b e used with (15) [19], [20], [21], [22], [2 3], [24]. The problem with the abov e synthesis techniques is tw of old: (i) No r ecovery guaran tees exist f or this formu lation with the dictionary D H S ; (ii) It is hard to g eneralize the model in (9) to h igher order sign als, e.g., imag es. The reason that no theoretical guaran tees are pr ovided for the D H S dictionary is the high co rrelation between its columns. Th ese create h igh ambigu ity , causing th e classical synthesis techn iques to fail in recovering the represen tations α an d β . This pro blem has bee n addr essed in sev era l con- tributions that h av e treated the signal dire ctly and not its representatio n [ 30], [31], [32], [5 1], [52], [5 3]. W e introdu ce an algorith m that appr oximates the solution s of both ( 9) and ( 11) and has theoretical reco nstruction per- forman ce gu arantees for one d imensional fu nctions f with matrices M that are near isometric for piecewise po lynomial function s. In th e next section we shall pr esent another algo - rithm that d oes not have su ch gu arantees but is gen eralizable to h igher order fu nctions. Thoug h till n ow we h a ve restricted our discussion on ly to piec e wise linear function s, we tu rn now to look at the more general c ase of piecewise 1D polyno mial functio ns of degree n . Note that this metho d approx imates th e following minimization pr oblem, which is a gen eralization of (13) to any polyno mial o f degree n , min b 0 , b 1 ,..., b n g − M I , Z , Z 2 , . . . , Z n b 0 b 1 . . . b n 2 2 (16) s.t. n X i =0 | Ω DIF b i | 0 ≤ k , where | Ω DIF b i | is an e lement-wise op eration that c alculates the absolute value of each entry in Ω DIF b i . W e employ the sig nal space CoSaMP ( SSCoSaMP) strategy [30], [31] 2 to ap proximate the solution of (16). This algo rithm assumes the existence of a pr ojection that for a given signal finds its closest signal (in th e ℓ 2 -norm sense) that b elongs to the mod el 3 , wh ere in our case the model is piecewise polyn o- mial function s with k jump points. This algorithm , alon g with the pro jection required , ar e pre sented in App endix A . A. Re covery Guarantees for Piecewise P olynom ial Functions T o provide theo retical guarantees for the recovery by SSCoSaMP we employ two theorem s fr om [31] an d [32]. These lead to re construction erro r bo unds for SSCoSaMP that guaran tee stable recovery if th e noise is adversarial, and an effecti ve denoising effect if it is zer o-mean white Ga ussian. Both theorems rely o n the following pro perty of the mea- surement m atrix M , which is a special case of th e D -RIP [18] and Ω -RIP [11]. Definition 4.1 : A matr ix M has a po lynomial restricted isometry proper ty of order n ( P n -RIP) with a constant δ k if for any piec e wise polyn omial function f of o rder n with k jumps we have (1 − δ k ) k f k 2 2 ≤ k Mf k 2 2 ≤ (1 + δ k ) k f k 2 2 . (17) Having th e P n -RIP definition we tu rn to pr esent the first theorem, whic h treats the adversarial noise case. Theor em 4 .2 (Based o n Cor ollary 3 .2 in [31] 4 ): Let f be a piecewise p olynom ial f unction of order n , e be an adversarial bound ed n oise and M satisfy the P n -RIP (17) with a co n- stant δ 4 k < 0 . 046 . Then after a finite nu mber of iteration s, SSCoSaMP yields ˆ f − f 2 ≤ C k e k 2 , (18) where C > 2 is a con stant depending on δ 4 k . Note th at the above the orem implies that we may co m- pressiv ely sense piecewise polyn omial func tions and ach ie ve a per fect recovery in the noiseless case e = 0 . Note also that if M is a subg aussian random matrix then it is sufficient to use o nly m = O ( k ( n + lo g( d )) m easurements [3], [11]. Thoug h the above theo rem is important for co mpressed sensing, it does not gu arantee noise redu ction, e ven for the 2 In a very similar way we could ha ve used the analysis CoSaMP (A CoSaMP) [11 ], [16]. 3 Note that in [30], [31] the projectio n might be allo wed to be nea r-optimal in the sense that the projection error is close to the optimal error up to a multipli cati ve constan t. 5 0 50 100 150 200 250 300 −1.5 −1 −0.5 0 0.5 1 1.5 (a) Noisy Function σ = 0 . 1 0 50 100 150 200 250 300 −1.5 −1 −0.5 0 0.5 1 1.5 original BGAPN Recovery BGAPN Continuous Recovery (b) Funct ion Recove ry for σ = 0 . 1 0 50 100 150 200 250 300 −10 −5 0 5 10 a Estimate b Estimate 0 50 100 150 200 250 300 −10 −5 0 5 10 a true b true (c) Coef ficients Paramete rs Rec overy for σ = 0 . 1 0 50 100 150 200 250 300 −1.5 −1 −0.5 0 0.5 1 1.5 (d) Noisy Function σ = 0 . 25 0 50 100 150 200 250 300 −1.5 −1 −0.5 0 0.5 1 1.5 original BGAPN Recovery BGAPN Continuous Recovery (e) Functi on Recove ry for σ = 0 . 25 0 50 100 150 200 250 300 −10 −5 0 5 10 a Estimate b Estimate 0 50 100 150 200 250 300 −10 −5 0 5 10 a true b true (f) Coef ficients Paramet ers Reco very for σ = 0 . 25 Fig. 1. Recov ery of a piece wise linea r function using the BGAPN algorith m with and without a constraint on the conti nuity . case M = I , as C > 2 . Th e reason for this is that the noise here is adversarial, leading to a worst-case b ound. By introdu cing a ra ndom distribution fo r th e noise, one may get better reco nstruction gu arantees. Th e fo llowing theorem assumes that the noise is rand omly Gaussian distributed, this way enab ling to provide effectiv e denoising gu arantees. Theor em 4 .3 (Based on Theo r em 1.7 in [32] 5 ): Assum e the condition s of Theor em 4.2 suc h that e is a random zero-mea n white G aussian no ise with a variance σ 2 . Then after a finite n umber of iterations, SSCoSaMP yields ˆ f − f 2 ≤ (19) C p (1 + δ 3 k )3 k 1 + p 2(1 + β ) log( nd ) σ , with p robability exceeding 1 − 2 (3 k )! ( nd ) − β . The boun d in the theo rem can be given on the expected error instead of being given only with high pro bability using the proof techniqu e in [5 4]. W e remark that if we were given an o racle th at for eknows the locations of the jumps in the parameteriz ation, the error we would get would be O ( √ k σ ) . As the log ( nd ) factor in ou r bound is ine vitab le [ 55], we may conclud e th at our gu arantee is optimal up to a constant factor . V . S PA R S I T Y B A S E D O V E R PA R A M E T E R I Z E D V A R I AT I O NA L A L G O R I T H M F O R H I G H D I M E N S I O N A L F U N C T I O N S W e now turn to gener alize the mo del in (13) to sup port other overparam eterization for ms, including hig her dimen - sional functions suc h a s images. W e consider th e case wher e an upper-boun d for the noise en ergy is g i ven and not the sparsity k , as is co mmon in many app lications. Notice that for th e synthesis m odel, such a gener alization is not trivial because while it is easy to extend the Ω DIF operator to high dime nsions, it is not clea r how to do this fo r the Heaviside dictiona ry . Therefo re we consider an o verpa rameterized version of (12), where the noise energy is known a nd the analysis mo del is used. L et X 1 , . . . X n be matrices o f the space variables and b 1 . . . b n their coe ffi cien ts parameters. For example, in a 2D (im age) case o f piecewise linea r constan t, X 1 will be th e identity matrix, X 2 will b e a diagon al matrix with the values [1 , 2 , . . . , d, 1 , 2 , . . . , d, . . . 1 , 2 , . . . , d ] on its main diagona l, and X 3 will similarly b e a diagon al matr ix with [1 , 1 , . . . , 1 , 2 , 2 , . . . , 2 , . . . d, d, . . . , d ] on its main diagon al. Assuming th at all th e co efficient p arameters are jointly sparse under a g eneral operator Ω , we may r ecover these coefficients by solvin g h ˆ b T 1 , . . . , ˆ b T n i T = min ˜ b 1 ,..., ˜ b n n X i =1 Ω ˜ b i 0 (20) s.t. g − M [ X 1 , . . . X n ] ˜ b 1 . . . ˜ b n 2 ≤ k e k 2 . Having an estimate for all these c oefficients, our approximatio n for the original signa l f is ˆ f = [ X 1 , . . . , X n ] h ˆ b T 1 , . . . , ˆ b T n i T . As th e min imization pro blem in (20) is NP-hard we sugg est 6 0 50 100 150 200 250 300 −1.5 −1 −0.5 0 0.5 1 1.5 (a) Noisy Function σ = 0 . 1 0 50 100 150 200 250 300 −1.5 −1 −0.5 0 0.5 1 1.5 original BGAPN Recovery BGAPN Continuous Recovery (b) Funct ion Recove ry for σ = 0 . 1 0 50 100 150 200 250 300 −10 −5 0 5 10 a Estimate b Estimate c Estimate 0 50 100 150 200 250 300 −10 −5 0 5 10 a true b true c true (c) Coef ficients Paramete rs Rec overy for σ = 0 . 1 0 50 100 150 200 250 300 −1.5 −1 −0.5 0 0.5 1 1.5 (d) Noisy Function σ = 0 . 25 0 50 100 150 200 250 300 −1.5 −1 −0.5 0 0.5 1 1.5 original BGAPN Recovery BGAPN Continuous Recovery (e) Functi on Recove ry for σ = 0 . 25 0 50 100 150 200 250 300 −10 −5 0 5 10 a Estimate b Estimate c Estimate 0 50 100 150 200 250 300 −10 −5 0 5 10 a true b true c true (f) Coef ficients Paramet ers Reco very for σ = 0 . 25 Fig. 2. Recov ery of a piece wise second-ord er polynomial function using the BGAPN algorithm with and without a constraint on the continui ty . to solve it b y a generalization of th e GAPN algorithm [33] – the block GA PN (BGAPN). W e introdu ce this extension in Append ix B . This a lgorithm aims at finding in a greedy way the rows of Ω th at are orthogon al to the space v ariab les b 1 . . . b n . No tice that o nce we fin d th e ind ices of these rows , the set Λ that satisfies Ω Λ b i = 0 for i = 1 . . . n ( Ω Λ is the submatrix of Ω with the rows corr esponding to the set Λ ) , w e m ay approx imate b 1 . . . b n by solvin g h ˆ b T 1 , . . . , ˆ b T n i T = min ˜ b 1 ,..., ˜ b n n X i =1 Ω Λ ˜ b i 2 2 (21) s.t. g − M [ X 1 , . . . X n ] ˜ b 1 . . . ˜ b n 2 ≤ k e k 2 . Therefo re, BGAPN approx imates b 1 . . . b n by finding Λ first . It starts with Λ th at includes all the rows o f Ω and then gradua lly rem oves elements fro m it by solving the pro blem posed in (21) at each iteration and then finding the row in Ω that h as the largest co rrelation with the cur rent tempora l solution h ˆ b T 1 , . . . , ˆ b T n i T . Note that there are no known recovery guaran tees fo r BGAPN of the for m we have had for SSCoSaMP befo re. Therefo re, we present its efficiency in se veral experiments in the next section. As explained in Appendix B, th e advantages of BGAPN over SSCoSaMP , desp ite the lack of theoretical guaran tees, are that (i) it d oes not need k to b e f oreknown and (ii) it is easier to use w ith hig her dimensiona l functions. Before we move to th e next section we note that o ne of the advantages of the ab ove fo rmulation and the BGAPN algo - rithm is th e relativ e ease o f add ing to it new con straints. For example, we may encou nter piecewise po lynomial f unctions that are also contin uous. Howe ver, we do no t h av e such a continuity constrain t in the curren t fo rmulation . As we shall see in the next section, the absence o f such a constraint allows jumps in the discontinuity points between the poly nomial segments and therefor e it is important to add it to the algorithm to get a be tter reconstru ction. One possibility to solve this p roblem is to ad d a continuity constraint on the jump points o f the signal. In Append ix B we present also a mod ified version of th e BGAPN alg orithm th at imposes such a contin uity constrain t, and in the next sectio n we sh all see h ow th is handles the problem . Note that this is only o ne examp le of a co nstraint th at on e may add to the BGAPN techn ique. For example, in images o ne may add a smoothne ss co nstraint on the edges’ directions. V I . E X P E R I M E N T S For demonstratin g the efficiency of the prop osed method we perfor m several tests. W e start with the one dimensional case, testing o ur p olynomial fitting appr oach with the continu ity constraint and without it f or continuous piecewise polyn omials of first and second degrees. W e com pare the se results with the optima l poly nomial app roximation scheme p resented in Section IV and to the variational app roach in [38] . W e con- tinue with a c ompressed sensing experiment fo r discon tinuous 7 0.05 0.1 0.15 0.2 0.25 0.3 0.35 0.4 0.45 0.5 0 2 4 6 8 10 12 14 16 18 20 σ Mean Squared Error BGAPN BGAPN Continuous Optimal Projection Optimal Continuous TVOPNL 0.05 0.1 0.15 0.2 0.25 0.3 0.35 0.4 0.45 0.5 0 2 4 6 8 10 12 14 16 18 20 σ Mean Squared Error BGAPN BGAPN Continuous Optimal Projection Optimal Continuous TVOPNL Fig. 3. MSE of the reco vered piece wise linear functions (left ) and piece wise second-order polynomial functions (right) as a funct ion of the noise v ariance σ for the m ethods BGAPN with and wit hout the continuity constr aint and the optimal approximati on with and without continuit y post-proce ssing. As a reference we compare to the non local over parameterize d TV (TVOPNL) approac h introd uced in [38]. 0 0.05 0.1 0.15 0.2 0.25 0.3 0.35 0.4 0.45 0.5 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 Sampling Rate: m/d Recovery Rate BGAPN SSCoSaMP Fig. 4. Recov ery rat e of piece wise second-orde r polynomia l functions as a function of the sampling rate m/d for the methods BGAPN and SSCoSaMP . piecewise po lynomials and compare BGAPN with SSCoSaMP . Then we p erform some tests o n images using BGAPN. W e start by deno ising carto on imag es u sing the piecewise linear model. W e compar e o ur outcome with the one of TV d enoising [6] and show that our result d oes not suffer from a staircasing effect [56]. W e co mpare also to a TV d enoising version with overparameterization [ 12]. Then we show how our framework may be used for image segmentation, drawing a c onnection to the Mum ford-Sh ah functional [ 57], [58]. W e comp are o ur results with the ones o btained by the p opular g raph-cu ts based segmentation [59]. A. Con tinuous Piecewise P olynomia l Functions Denoising In order to ch eck th e p erforma nce of the polyn omial fitting, we ge nerate rand om continu ous p iecewise-linear and secon d- order polynom ial functions with 300 samples, 6 jumps and a dynamic rang e [ − 1 , 1] . Then we contam inate th e signal with a wh ite Gaussian noise with a stan dard d eviation fr om the set { 0 . 05 , 0 . 1 , 0 . 1 5 , . . . , 0 . 5 } . W e comp are the recovery result of BGAPN with a nd without the co ntinuity constraint with th e one of the optimal approx imation 6 . Figs. 1 and 2 pr esent BGAPN re construction results for the linear and seco nd order polyn omial cases respectively , for two different n oise le vels. It c an be o bserved that the addition of the con tinuity con straint is essential for the correctn ess o f th e recovery . I ndeed, with out it we get jumps b etween th e segments. Note also th at the numb er of jumps in our recovery may be different than the one of the orig inal sign al as BGAPN do es not have a preliminar y informa tion a bout it. Howe ver, it still manag es to recover th e parameteriz ation in a good way , especially in the lower no ise case. The po ssibility to pr ovide a para metric representation is one of the advantages o f our metho d. In deed, o ne m ay achieve good d enoising results without using the linear m odel in 6 W e hav e done the same experimen t with the BOMP algorit hm [28], adoptin g the synthesis frame work, with and without the continuity constrai nt, and observed that it performs very similarly to BGAPN. 8 terms of mean sq uared erro r (MSE) using m ethods such as free-kn ot spline [6 0]. Howe ver, the approx imated fun ction is not gu aranteed to b e piecewise linear an d therefo re learning the ch ange points f rom it is sub-optim al. See [38] and the referenc es th erein fo r more details. T o evaluate our method with r espect to its MSE we comp are it with the optimal ap proxim ation for piecewise polyno mial function pre sented in Appendix A-A. No te that the target signals are co ntinuous while this alg orithm does not u se this assumption. The refore, we add th e con tinuity constraint to this method as a post pro cessing ( unlike BGAPN that merges this in its steps). W e take the chan ging points it has recovered and p roject the noisy measure ment g to its closest continuous piecewise polynomial fun ction with the same discon tinuities . Figure 3 presents the recovery pe rforman ce of BGAPN and the projectio n alg orithm with and withou t the c ontinuou s constraint. Without the c onstraint, it can be observed that BGAPN ac hiev es better rec overy perf ormance. Th is is du e to the fact that it is not r estricted to the number of change points in the initial signal an d ther efore it can use more points and thus adapt itself better to the signal, achieving lower MSE. Howe ver , a fter adding the constrain t in the piecewise linear case the optim al projection ac hiev es a better recovery error . The reason is that, as the op timal projection uses the exact numb er of p oints, it finds the chan ging locatio ns more accurately . Note tho ugh that in the c ase of second or der poly- nomial fu nctions, BGAPN g ets better recovery . This hap pens because this p rogram uses the con tinuity constraint also within its iterations and not only at the final step, as is the c ase with the p rojection alg orithm. As the second order p olynomial case is mor e complex th an the piec e wise line ar one, the impact of the u sage of the co ntinuity prior is h igher and more sign ificant than the infor mation on the n umber of c hange points. W e com pare also to the non -local opverap ameterized TV algorithm (TV OPNL) in [38] 7 , which was shown to b e bet- ter for the task of line segmen tation, when com pared with se veral alter nativ es in cluding the o nes rep orted in [12] and [13]. Clearly , our pr oposed sch eme ach iev es better recovery perfor mance than TV OPNL, demon strating the supremacy of our line segmentation strategy . B. Comp r essed Sensing of Piecewise P olyno mial Functions W e perform also a co mpressed sensing exper iment in wh ich we comp are the p erforma nce of SSCoSAMP , with the opti- mal p rojection, and BGAPN fo r recovering a second o rder polyno mial fu nction with 6 jum ps from a small set of linear measuremen ts. Each en try in the m easurement matrix M is selected f rom an i.i.d normal d istribution and then all colu mns are norma lized to have a unit no rm. The p olynomial functions are selected as in the previous expe riment but with two differences: (i) we om it the con tinuity constraint; and (ii) we normalize the signals to be with a unit nor m. Fig. 4 presents the r ecovery rate (noiseless case σ = 0 ) of each progr am as a function of th e number of measurem ents m . Note that fo r a very small o r large num ber of samples BGAPN behaves better . Howe ver , in the middle rang e SSCoSaMP 7 Code provided by the authors. (a) Origina l Image (b) Noisy Image σ = 20 (c) BGAPN wit h Ω DIF . PSNR = 40.09dB. (d) TV recov ery . PSNR = 38.95dB. (e) TV OP recov ery . PSNR = 37.41dB. Fig. 5. D enoisi ng of swoosh using the BGAPN algorith m with and without diagona l deriv ati ves. Notice that we do not have the staircasing effe ct that appears in the TV reconstruc tion. achieves a b etter rec onstruction rate. Noneth eless, we may say that their perfo rmance is mo re or less the sam e. C. Cartoon Image Denoising W e tur n to ev aluate th e p erforman ce of our appro ach on images. A p iecewise smooth model is con sidered to be a good model for images, and especially to the ones with n o texture, i.e., cartoo n imag es [ 61], [62]. Th erefore, we use a linear overparameterization of the two dimensional plane and employ the two dimensiona l difference ope rator Ω DIF that c alculates the horizontal and vertical discrete deri vativ es of an image by applying the filters [1 , − 1 ] and [1 , − 1 ] T on it. In th is case, the 9 (a) Origina l Image (b) Noisy Image σ = 20 (c) BGAPN wit h Ω DIF . PSNR = 34.02dB. (d) TV recov ery . PSNR =33.69dB. (e) TV OP reco very . PSNR =31.83dB. Fig. 6. Denoising of sign using the BGAPN algorithm. T he results of TV and OP-TV are presented as a reference . problem in (20) tu rns to be (no tice that M = I ) h ˆ b T 0 , ˆ b T h , ˆ b T v i T = (22) min ˜ b 0 , ˜ b h , ˜ b v Ω DIF ˜ b 0 2 + Ω DIF ˜ b h 2 + Ω DIF ˜ b v 2 0 s.t. g − [ X 0 , X h , X v ] ˜ b 0 ˜ b h ˜ b v 2 ≤ k e k 2 , where X 0 , X h , X v are th e matrices that contain the DC, the horizon tal and the vertical par ameterizations respectively ; and ˆ b 0 , ˆ b h , ˆ b v are their c orrespon ding spac e variables. W e app ly this scheme f or denoising two cartoon im ages, (a) Origina l Image (b) Noisy Image σ = 20 (c) BGAPN wit h Ω DIF . PSNR = 30.77dB. (d) TV recov ery . PSNR = 31.44dB. (e) TV OP recov ery . PSNR = 30.6dB. Fig. 7. D enoisi ng of house using the BGAPN algorithm. Notic e that we do not have the staircasing ef fect that appear s in the TV reconstruc tion. Because our model is linear we do not reco ver the texture and thus we get slightly inferior results compared to TV with respect to PSNR. Note tha t if we use a cubic ove rparameteriza tion with BGAPN instead of linea r w e get PSNR (=31.81dB) better than that of T V . swoosh an d sign . W e com pare ou r results with the on es o f TV denoising [6]. Figs. 5 an d 6 present t he recovery of swoo sh and sign f rom their n oisy version contamina ted w ith an ad ditiv e white Gaussian n oise with σ = 20 . Note that we ach ie ve better recovery results than T V a nd d o n ot suffer f rom its staircasing effect. W e have tuned the parameters of TV separately for each image to optimize its outp ut quality , while we have used the same setup for o ur me thod in all the den oising experim ents. T o get a go od quality with BGAPN, we run the algo rithm se veral times with d ifferent set of para meters (which are the same fo r all im ages) and th en p rovide as an o utput the a verage image of all the run s. Notice th at using this tech nique with TV degrades its results. T o test wh ether our better den oising is just a result of using 10 (a) Origina l Image Gradients (b) Recove red Image Gradients Fig. 8. Gradient map of the clean house image and our reco vered image from Fig. 7. overparameterization or an outcom e o f our ne w framew ork , we compare also to TV with linear overparam eterization [ 12] 8 . Notice that while plug ging overpar ameterization directly in TV impr oves the results in some cases [12], this is not the case with the images here . Theref ore, we see that ou r new framework that link s sparsity with overparameter ization has an advantage over the old approa ch that still acts within the variational scheme. W e could use other form s of overpar ameterizations such as c ubical instead o f plan ar or ad d other d irections of the deriv atives in addition to the ho rizontal and vertical ones. For example, on e may app ly our scheme also using an operator that calculates also the d iagonal deriv atives using th e filters 1 0 0 − 1 and 0 1 − 1 0 . Such ch oices may lead to an improvement in different scen arios. A future work shou ld focus on learn ing the overparameterization s and the type o f deriv atives that sho uld be used for deno ising an d for other tasks. W e be lie ve that such a learning has the potential to lead to state-o f-the-art results. D. Image Segmentation As a motiv ation for the task of segmentation we present the denoising of an image with a textur e. W e contin ue using the model in (2 2) and conside r th e house image as an example. Fig. 7 demo nstrates the denoising r esult we get f or th is imag e. Note that here as well we do not suffer fro m the staircasing effect th at app ears in the TV rec overy . Howe ver, due to the nature o f ou r model we loo se the textur e and ther efore achieve an infer ior PSNR com pared to the TV d enoising 9 . Thoug h the remov al of te xtur e is not fa vorable for th e task of denoising , it makes the recovery of salient edges in the original image easier . In Fig. 8 we present th e grad ient map of o ur recovered image and the one of th e or iginal image. It can be seen th at while the gr adients of the o riginal image cap ture also 8 Code provided by the authors. 9 The lower PSNR we get with our method is because our model is linear and therefore is less capable to ada pt itself to the te xture. By using a cubic ov erparameteri zation we get PSNR which is equal to the one of TV . Note also that for larger noise magnitudes the rec overy performanc e of our algori thm in terms of PSN R becomes better than TV also with the linea r model, as in these conditions, we tend to loose the te xture anyway . the textur e changes, ou r method finds only the m ain ed ges 10 . This motivates us to use our sch eme for segmentation. Since our scheme divides the image into piecewise linear re- gions, we c an view ou r strategy as a n app roach that min imizes the Mu mford- Shah function al [57], [5 8]. On the other hand , if the imag e h as only two region s, our segmen tation result can be viewed as a solution of the Chan-V ese functional with the difference that we mo del each region by a polyn omial functio n instead of approx imating it by a constan t [63]. W e present ou r segmentation results for th ree images, and for eac h we display th e piecewise co nstant version of each image to gether with its bo undary map . Our segmen tation results appear in Figs. 9, 10 and 11 . W e co mpare o ur r esults to th e pop ular grap h-cuts based segmentation [5 9]. N otice that we achieve a compa rable performan ce, where in some p laces our method behaves b etter and in oth ers the strategy in [59] provides a better result. Thoug h we g et a good segmentation , it is c lear that ther e is still a large r oom f or improvement com pared to the curre nt state-of-the- art. On e direction f or improvement is to u se more filters with in Ω . Anothe r one is to calculate the gradien ts of the coefficients p arameters and not of the recovered image as they are suppo sed to b e tru ly piecewise constan t. W e leave these ideas to a futur e work. V I I . C O N C L U S I O N A N D F U T U R E W O R K This work has presented a novel framework for solv ing the overparameterized variational problem using sparse represen- tations. W e have demo nstrated how this framework can b e used both f or o ne d imensional an d two dimensional f unctions, while a gen eralization to oth er higher dimension s (such as 3D) is straightf orward. W e have solved the prob lem o f line fitting for p iecewis e polyno mial 1D sign als and then shown how the new tec hnique can b e used f or comp ressed sensing, deno ising and segmentatio n. Thoug h this work has focused m ainly on linear overparam - eterizations, the extension to other forms is straightfor ward. Howe ver , to keep th e discussion as simple as po ssible, we have chosen to use simple fo rms of overparameter izations in the experiments section . As a fu ture research, we b elie ve that a lea rning pr ocess sh ould b e adde d to our scheme. It should adapt the fu nctions of the space variables X 1 , . . . , X n and the filters in Ω to the sign al at ha nd. W e believe that this has the potential to lead to state-of-th e-art results in segmen tation, denoising and other sign al pro cessing tasks. Combining of our scheme with the standard sparse representation app roach may provide the po ssibility to add support to images with texture. This will lead to a schem e th at works glob ally on the image for th e cartoo n part a nd loca lly for the texture part. Another route for fu ture work is to integrate o ur scheme in the state- of-the- art overparameterized based algorith m fo r op tical flow in [13]. A C K N O W L E D G M E N T The authors would like to thank T al Nir and Guy Roseman for providing their code for the experimen ts. The research 10 W e hav e tested our approac h also in the presence of a blu r operator . The edges in this case are preserved as well. 11 (a) Origina l Image (b) Piec ewise linear version of the image (c) Image Segmentat ion (d) Image Segmentat ion using Graph-Cuts [59] Fig. 9. Piece wise li near version of coins image together with the segmentat ion result. W e compare to the popular graph-cuts based segmentatio n [59]. leading to these results has received f unding from the E u- ropean Research Cou ncil under Eur opean Unions Sev en th Framework Program, ERC Gra nt ag reement no. 3206 49. This research is p artially suppo rted by AFOSR, ARO, NSF , ONR, and NGA. Th e author s would like to th ank the ano nymous revie wers for their help ful an d constructive co mments that greatly co ntributed to impr oving th is pape r . A P P E N D I X A T H E S S C O S A M P A L G O R I T H M For app roximating (16), we u se a block sparsity variant of SSCoSaMP [32] and ada pt it to our mod el. It is p resented in Algorith m 1. Du e to the eq uiv alence between D H S and Ω DI F , we use the latter in the algorithm . This method uses a projectio n S n ( · , k ) that giv en a signal finds its clo sest piecewise p olynom ial function s with k jump points. W e calculate this projection using dyna mic pro gram- ming. Our strategy is a generalizatio n of the one that ap pears in [1 1], [64] and is presented in th e next sub section. The halting criter ion we use in our work in Algorithm 1 is k g t r k 2 ≤ ǫ f or a given sma ll con stant ǫ . Other option s fo r stopping criter ia are discussed in [4 7]. A. Op timal Appr oximation using Piecewise P olyno mial Func- tions Our projectio n techn ique uses th e fact that on ce th e jum p points are set, the op timal p arameters of th e polyno mial in a segment [ t, l ] can b e calcula ted optimally by solving a least squares min imization prob lem [ I [ t, l ] , Z [ t, l ] , . . . , Z n [ t, l ]] b 0 [ t, l ] b 1 [ t, l ] . . . b n [ t, l ] − g [ t, l ] 2 2 , (23) where g [ t, l ] is the sub-vector of g suppor ted by the ind ices t to l ( t ≤ l ) a nd Z i [ t, l ] is the (square) sub -matrix o f Z i correspo nding to the indices t to l . W e denote by P n ( g [ t, l ]) the polyno mial function we get by solving (23). Indeed , in 12 (a) Origina l Image (b) Piec ewise linear version of the image (c) Image Segmentat ion (d) Image Segmentat ion using Graph-Cuts [59] Fig. 10. Piece wise linear version of airplane image together with the segmenta tion result. W e compare to the popula r grap h-cuts based segment ation [59]. the case that the size of the segment [ t, l ] is smaller than the number o f pa rameters, e.g . segment o f size on e fo r a linear function , the ab ove minim ization prob lem has in finitely many options for setting the p arameters. Howev er, all o f them lead to the same resu lt, wh ich is keep ing the values of the points in th e segment, i.e., h aving P n ( g [ t, l ]) = g [ t, l ] . Denote by S n ( g [1 , ˜ d ] , k ) the o ptimal appro ximation of the signal g [1 , ˜ d ] by a piecewis e po lynomial function with k jumps. I t can be calcu lated by solving the following recursive minimization pr oblem ˆ t = argmin 1 ≤ t< ˜ d k S n ( g [1 , t ] , k − 1) − g [1 , t ] k 2 2 (24) + P n ( g [ t + 1 , ˜ d ]) − g [ t + 1 , ˜ d ] 2 2 , and setting S n ( g [1 , ˜ d ] , k ) = S n ( g [1 , ˆ t ] , k − 1 ) P n ( g [ ˆ t + 1 , ˜ d ]) . (25) The vectors S n ( g [1 , t ] , k − 1) can b e calculated r ecur- si vely using (2 4). The recursion ends with the b ase case S n ( g [1 , t ] , 0) = P n ( g [1 , t ]) . This lead s us to the following alg orithm for calcu lating an op timal appro ximation for a signal g . No tice that this algorithm provid es u s also with the para meterization of a piecewise polynomial. 1) Calculate S n ( g [1 , t ] , 0) = P n ( g [1 , t ]) for 1 ≤ t ≤ d . 2) For ˜ k = 1 : k − 1 do • Calculate S n ( g [1 , ˜ d ] , ˜ k ) for 1 ≤ ˜ d ≤ d using (2 4) and (2 5). 3) Calculate S n ( g [1 , d ] , k ) using (24) and (25). Denoting by T the worst case comp lexity of calculating P n ( g [ t, l ]) for any pair t, l , we hav e that the com plexity o f step 1) is O ( dT ) ; o f step 2) is O ( k d 2 ( T + d )) , as the computation of the pro jection erro r is of complexity O ( d ) ; and of step 3) O ( d ( T + d )) . Su mming all together we get a total comp lexity of O ( k d 2 ( T + d )) fo r the algorithm, wh ich is a po lynomial complexity since T is poly nomial. A P P E N D I X B T H E B L O C K G A P N A L G O R I T H M For a pproxim ating (2 0), we extend the GAPN te chnique [33] to block spar sity and adapt it to our m odel. It is pre sented in Alg orithm 2. Notice tha t th is p rogram, un like SSCoSaMP , does not assum e the kn owledge of k or the existence of an optimal pro jection onto the signals’ low dim ensional unio n of subspaces. Note also that it suits a gen eral form of overpar am- eterization an d n ot on ly 1D piecewise po lynomial functio ns. It is possible to accelerate BGAPN for highly scaled prob lems by rem oving fro m the cosuppor t several elem ents at a time instead of one in the up date cosuppo rt stage. 13 (a) Origina l Image (b) Piec ewise linear version of the image (c) Image Segmentat ion (d) Image Segmentat ion using Graph-Cuts [59] Fig. 11. Piece wise linear version of man image together with the segment ation resul t. W e compare to the popular graph-cuts based segmen tation [59]. Ideally , we would expe ct that after several iteration s of updating the cosuppo rt in BGAPN we would have Ω Λ ˆ b i = 0 . Howe ver , m any signals are only nearly cospar se, i.e., have k significantly la rge values in Ωb i while the rest are smaller than a sm all constant ǫ . T herefore , a natur al stopp ing cr iterion in this case would be to stop when the m aximal value in Ω ˆ b i is smaller th an ǫ . This is the stopping criterion we use throug hout this pape r for BGAPN. Of cou rse, this is no t the only o ption for a stopping criterio n, e.g. on e may loo k at th e relativ e so lution change in ea ch iteration or use a c onstant number o f iterations if k is for eknown. W e present also a mod ified version of BGAPN in Algo - rithm 3 that imposes a con tinuity constraint on the chan ge points. This is d one b y cre ating a binary diag onal matrix W = dia g ( w 1 , . . . , w p ) su ch that in each iteration of the progr am the i -th element w i is 1 if it correspond s to a change point and zero other wise. This matr ix serves a s a weights matrix to penalize disco ntinuity in the change poin t. Th is is done by addin g the regularizing term γ WΩ [ X 1 , . . . X n ] ˜ b 1 . . . ˜ b n 2 2 to the minimization pro blem in ( 26) (Eq . (27) in Alg orithm 3), which lea ds to the ad ditional step (Eq. ( 28)) in the modified progr am. R E F E R E N C E S [1] A. M. Bruckstein, D. L. Donoho, and M. Elad, “From sparse solutions of systems of equations to sparse modeling of signals and images, ” SIAM Revie w , vol. 51, no. 1, pp. 34–81, 2009. [2] R. Gribon val and M. Nielsen, “Sparse represent ations in unions of bases, ” IEEE T rans. Inf. Theory . , vol. 49, no. 12, pp. 3320–3325, Dec. 2003. [3] T . Blumensath and M. Davie s, “Sampling theor ems for signa ls from the union of finite-di mensional linear subspaces, ” IEEE T rans. Inf. Theory . , vol. 55, no. 4, pp. 1872 –1882, april 2009. [4] Y . M. L u and M. N. D o, “ A theory for sampling signals from a union of s ubspace s, ” IEEE T rans. Signal Proc ess. , vol. 56, no. 6, pp. 2334 –2345, Jun. 2008. [5] P . Pero na and J. Mal ik, “Scale-space and edge detecti on using anisotrop ic diffusion, ” P attern Analysis and Mach ine Intellig ence, IEEE T ransactions on , vol. 12, no. 7, pp. 629–639, Jul 1990. [6] L . I. Rudin , S. Osher , and E. Fat emi, “Nonlinea r total varia tion based noise remov al algorithms, ” Physica D: Nonline ar Phenomena , vol. 60, no. 1-4, pp. 259–268, 1992. [7] V . Caselles, R. Kimmel, and G. Sapiro, “Geodesic acti ve contours, ” Internati onal Journal of Computer V ision , vol. 22, no. 1, pp. 61–79, 1997. [8] J. W eick ert, Anisotr opic diffusion in imag e pr ocessing . T eubner , Stuttga rt, Germany , 1998. [9] D. Needell and R. W ard, “Stable image reconstru ction using total v ariation minimizat ion, ” SIAM J ournal on Imaging Scienc es , vol. 6, no. 2, pp. 1035–1058 , 2013. [10] S. Nam, M. E. Davies, M. Elad, and R. Gribon val, “The cosparse analysi s model and alg orithms, ” Appl. Comput. Harmon. Anal. , vol. 34, no. 1, pp. 30 – 56, 2013. 14 Algorithm 1 Sign al Space CoSaMP (SSCoSaMP) for Piece- wise Polyn omial Functions Input: k , M , g , γ , where g = Mf + e , f = I , Z , Z 2 , . . . , Z n b T 0 , b T 1 , . . . , b T n T is a piecewise polyno mial fun ction of or der n , k = k P n i =0 | Ω DIF b i |k 0 is the numb er of jumps in the r epresentation coefficients of f , e is an additive n oise and γ is a parame ter o f the algorithm . S n ( · , k ) is a p rocedur e that ap proximates a giv en sign al by a piecewise polynom ial functio n o f order n with k jumps. Output: ˆ f : A p iecewis e polynomial with k + 1 segments that approx imates f . • Initialize the jum ps’ location s T 0 = ∅ , th e residu al g 0 r = g and set t = 0 . while halting criterion is not satisfied do • t = t + 1 . • Find th e parameterization b r, 0 , b r, 1 , . . . , b r,n of the residual’ s polynomial approxima tion by calculating S n ( M T g t − 1 r , γ k ) . • Find ne w temporal ju mp locations: T ∆ = the support of P n i =0 | Ω DIF b r,i | . • Update the ju mps locations’ indices: ˜ T t = T t − 1 ∪ T ∆ . • Compute tempo ral parameters: [ b p, 0 , . . . , b p,n ] = argmin ˜ b 0 ,..., ˜ b n g − M I , Z , Z 2 . . . , Z n ˜ b 0 ˜ b 1 . . . ˜ b n 2 2 s.t. ( Ω DIF ˜ b 0 ) ( ˜ T t ) C = 0 , . . . ( Ω DIF ˜ b n ) ( ˜ T t ) C = 0 . • Calcu late a po lynomial app roximation of orde r n : f t = S n ( I , Z , Z 2 , . . . , Z n b T p, 0 , . . . , b T p,n T , k ) . • Find new ju mp loc ations: T t = the locatio ns of the jumps in the par ameterization of f t . • Up date the re sidual: g t r = g − M f t . end while • Form final solution ˆ f = f t . [11] R. Giryes, S. Nam, M. Elad, R. Gribon val , and M. E. Da vies, “Greedy- lik e algorithms for the cosparse analysi s model, ” Linear Algebra and its Applicat ions , vol. 441, no. 0, pp. 22 – 60, Jan. 2014, special Issue on Sparse Approximate Solution of Linear Systems. [12] T . Nir and A. Bruckstein , “On over -parameteriz ed model based TV- denoisin g, ” in Signals, Circ uits and Systems, 2007. ISSCS 2007. Inter- national Symposium on , vol . 1, July 2007, pp. 1–4. [13] T . Nir , A. M. Bruc kstein, and R. Kimmel, “Over -parameteriz ed v aria- tional optical flow, ” Internati onal J ournal of Computer V ision , vol. 76, no. 2, pp. 205–216, 2008. [14] G. Rosman, S. Shem-T ov , D. Bitton, T . Nir , G. Adiv , R. Kimmel, A. Feuer , and A. M. Bruckst ein, “Over -parameteri zed optic al flow using a stereosc opic constraint , ” in Scale Space and V ariationa l Methods in Computer V ision , ser . Lecture Notes in Computer S cienc e, A. M. Bruckste in, B. M. Haar Romeny , A. M. Bronstein, and M. M. Bronstein, Eds. Springer Berlin Heidelbe rg, 2012, vol. 6667, pp. 761–772. [15] R. Giryes, S. Nam, R. Gribon val , and M. E. Davies, “Iterati ve cosparse project ion algorithms for the reco very of cosparse ve ctors, ” in The 19th Eur opean Signal Proc essing Confer ence (EUSIPCO-2011) , Barcelona, Spain, 2011. [16] R. Giryes and M. E lad, “CoSaMP and SP for the cosparse anal- ysis m odel, ” in The 20th Europe an Signal Proce ssing Conferen ce (EUSIPCO-2012) , Bucharest, Romania, 2012. [17] T . Pele g and M. E lad, “Performance guarante es of the thresholding algorit hm for the Co-Sparse analysis model, ” submitte d to IEEE T rans. Algorithm 2 Th e Block GAPN Algo rithm Input: M , g , Ω , where g = Mf + e , f = [ X 1 , . . . , X n ] b T 1 , . . . , b T n T such that P n i | Ωb i | is sparse, and e is an a dditiv e noise. Output: ˆ f = [ X 1 , . . . , X n ] h ˆ b T 1 , . . . , ˆ b T n i T : an estimate for f such that P n i Ω ˆ b i is sp arse. Initialize cosup port Λ = { 1 , . . . , p } and set t = 0 . while halting criterion is not satisfied do t = t + 1 . Calculate a new estimate: h ˆ b T 1 , . . . , ˆ b T n i T = a rgmin ˜ b 1 ,..., ˜ b n n X i =1 Ω Λ ˜ b i 2 2 (26) s.t. g − M [ X 1 , . . . X n ] ˜ b 1 . . . ˜ b n 2 ≤ k e k 2 . Update cosup port: Λ = Λ \ argmax j P n i =1 Ω j ˆ b i 2 2 . end while Form an estimate for th e original sign al: ˆ f = [ X 1 , . . . , X n ] h ˆ b T 1 , . . . , ˆ b T n i T . on Information Theory . [18] E. J. Cand ` es, Y . C. Eldar , D . Needell , and P . Randall, “Compressed sens- ing with coherent and redunda nt dic tionaries, ” Appl. Comput. Harmon. Anal. , vol. 31, no. 1, pp. 59 – 73, 2011. [19] S. F . Cotter , B. D. Rao, K. Engan, and K. Kreutz-Del gado, “Sparse solutions to linear in verse problems with multiple measurement vectors, ” IEEE T rans. Signal Pr ocess. , vol. 53, no. 7, pp. 2477–2488, July 2005. [20] J. A. Tro pp, A. C. Gilbert, and M. J. Strauss, “ Algorith ms for simulta- neous sparse approximation. Part I: Greedy pursuit , ” Signal Pr ocessing , vol. 86, no. 3, pp. 572 – 588, 2006, sparse Approximations in Signal and Image Processing. [21] J. A. Tro pp, “ Algorithms for simultaneou s sparse approximati on. Part II: Conv ex rela xation, ” Signal P r ocessing , vol. 86, no. 3, pp. 589 – 602, 2006, sparse Approximatio ns in Signal and Image Processing. [22] D. Wip f and B. Rao, “ An empirica l bayesian strategy for s olving the simultan eous sparse approximation problem, ” IEEE T rans. Signal Pr ocess. , vol. 55, no. 7, pp. 3704–3716, July 2007. [23] M. Mishali and Y . C. E ldar , “Reduce and boost: Reco vering arbitrary sets of jointly sparse vect ors, ” IEEE T rans. Signal Proce ss. , vol. 56, no. 10, pp. 4692–4702, Oct. 2008. [24] M. Fornasier and H. Rauhut, “Reco very algorithms for vector- value d data with joint sparsity constrain ts, ” SIAM J ournal on Numerical Anal- ysis , vol. 46, no. 2, pp. 577–613, 2008. [25] M. Y uan and Y . Lin, “Model selection and estimation in re gression with grouped varia bles, ” J ournal of the Royal Statist ical Society: Series B (Statist ical Met hodology) , vol. 68, no. 1, pp. 49–67, 2006. [26] Y . C. E ldar and M. Mishali, “Robust recov ery of signals from a structure d union of subspaces, ” Information Theory , IEEE T ransacti ons on , vol. 55, no. 11, pp. 5302–5316, Nov 2009. [27] M. Stojni c, F . Parv aresh, and B. Hassibi, “On the recon struction of block-spa rse signal s with an optimal number of measurements, ” Signal Pr ocessing, IEEE T ransactio ns on , vol. 57, no. 8, pp. 3075–3085, Aug 2009. [28] Y . C. Eldar , P . Kuppinge r, and H. Bolcskei, “Block-spar se signals: Uncerta inty relations and efficien t recove ry , ” Signal Proce ssing, IEEE T ransactions on , vol. 58, no. 6, pp. 3042–3054, June 2010. [29] R. Baraniuk , V . Ce vher , M. D uarte , and C. Hegde, “Model-base d com- pressi ve sensing, ” Information Theory , IEEE T ransactions on , v ol. 56, no. 4, pp. 1982–2001 , April 2010. [30] M. A. Dav enport, D. Needell , and M. B. W akin, “Signal space cosamp for sparse recov ery with redundant dictiona ries, ” IEEE T rans. Inf. Theory , vol. 59, no. 10, pp. 6820–6829, Oct 2013. 15 Algorithm 3 The Bloc k GAPN Alg orithm with Continuity Constraint Input: M , g , Ω , γ , where g = M f + e , f = [ X 1 , . . . , X n ] b T 1 , . . . , b T n T such that P n i | Ωb i | is spar se, e is an a dditiv e n oise, and γ is a weigh t for the contin uity constraint. Output: ˆ f = [ X 1 , . . . , X n ] h ˆ b T 1 , . . . , ˆ b T n i T : an estimate for f such that P n i Ω ˆ b i is sparse. Initialize cosup port Λ = { 1 , . . . , p } and set t = 0 . while halting criterion is not satisfied do t = t + 1 . Calculate a new estimate: h ˆ b T 1 , . . . , ˆ b T n i T = a rgmin ˜ b 1 ,..., ˜ b n n X i =1 Ω Λ ˜ b i 2 2 (27) s.t. g − M [ X 1 , . . . X n ] ˜ b 1 . . . ˜ b n 2 ≤ k e k 2 . Update co support: Λ = Λ \ argmax j P n i =1 Ω j ˆ b i 2 2 . Create W eight Matrix : W = diag( w 1 , . . . , w p ) , wher e w i = 0 if i ∈ Λ or w i = 1 othe rwise. Recalculate the estimate: h ˆ b T 1 , . . . , ˆ b T n i T = a rgmin ˜ b 1 ,..., ˜ b n n X i =1 Ω Λ ˜ b i 2 2 (28) + γ WΩ [ X 1 , . . . X n ] ˜ b 1 . . . ˜ b n 2 2 s.t. g − M [ X 1 , . . . X n ] ˜ b 1 . . . ˜ b n 2 ≤ k e k 2 . end while Form an estimate for th e original sign al: ˆ f = [ X 1 , . . . , X n ] h ˆ b T 1 , . . . , ˆ b T n i T . [31] R. Giryes and D. Needell, “Greedy signal space methods for inco herence and beyond, ” A ppl. Comput. Harmon. Anal. , vol. 39, no. 1, pp. 1 – 20, Jul. 2015. [32] ——, “Near oracle performance and block analysi s of signal space greedy m ethods, ” Journal of Appr oximation Theory , vol. 194, pp. 157 – 174, Jun. 2015. [33] S. Nam, M. E. Da vies, M. Elad, and R. Gribon val, “Recov ery of cosparse signals with greedy analy sis pursuit in the presence of noise, ” in 4th IEEE Internat ional W orkshop on Computat ional Advances in Multi- Sensor Adaptive Proc essing (CAMSAP) , Dec 2011, pp. 361–364. [34] J. M. Morel and S. Solimini, V ariationa l Methods in Imag e Seg menta- tion . Cambridge, MA, USA: Birkha user Boston Inc., 1995. [35] T . Chan and J. Shen, Image Proce ssing and Analysis . Soci ety for Industria l and Applied Mathe matics, 2005. [36] J. W eickert , A. Bruhn, T . Brox, and N. Papenber g, “ A survey on v ariational optic flo w methods for small displace ments, ” in Mathemati cal Models for Re gistration and A pplic ations to Medical Imaging , ser . Mathemat ics in industry . Springer Berlin Heidelbe rg, 2006, vol. 10, pp. 103–136. [37] G. Aubert and P . Korn probst, Mathe matical pr oblems in image pr o- cessing: partial dif feren tial equati ons and the calcul us of variations . Springer , 2006, vol. 147. [38] S. Shem-T ov , G. Rosman, G. Adiv , R. Kimmel, and A. M. Bruckstei n, “On glo bally optimal local model ing: From moving le ast squares to o ver- parametri zation, ” in Innovati ons for Shape A nalysis . Springer , 2013, pp. 379–405. [39] G. Da vis, S . Malla t, and M. A vel laneda, “ Adapti ve gree dy approxima- tions, ” Constructi ve Appr oximation , vol. 50, pp. 57–98, 1997. [40] M. Elad, P . Milanfar , and R. Rubinstein, “ Analysis ve rsus synthe sis in signal priors, ” In verse P r oblems , vol. 23, no. 3, pp. 947–968, June 2007. [41] S. S. Chen, D. L. Donoho, and M. A. Saunde rs, “ Atomic dec omposition by basis pursuit, ” SIAM Jou rnal on Sci entific Computing , vol. 20, no. 1, pp. 33–61, 1998. [42] D. L. Donoho and M. Elad, “On the stabili ty of the basis pursuit in the presence of noise, ” Signal P r ocess. , vol. 86, no. 3, pp. 511–532, 2006. [43] R. Tibshiran i, “Regression shrinkage and s elec tion via the lasso, ” J. Roy . Statist . Soc. B , vol . 58, no. 1, pp. 267–288, 1996. [44] S. Mallat and Z. Zhang, “Matching pursuits with time-frequ ency dicti o- naries, ” IEEE T rans. Signal Proce ss. , vol. 41, pp. 3397–3415, 1993. [45] S. Chen, S. A. Billings, and W . Luo, “Orthogonal least squar es methods and their applicati on to non-linear system identificati on, ” International J ournal of Contr ol , vol. 50, no. 5, pp. 1873–1896, 1989. [46] G. Davis, S. Mallat, and M. A vellan eda, “ Adapti ve time-freque ncy decomposit ions, ” Optical Engineering , vol. 33, no. 7, pp. 2183–2191, July 1994. [47] D. Needell and J. Tropp, “CoSaMP: Iterati ve s ignal recov ery from incomple te and inaccurate samples, ” Appl. Comput. Harmon. Anal. , vol. 26, no. 3, pp. 301 – 321, May 2009. [48] W . Dai and O. Milenko vic, “Subspac e pursuit for compressiv e sensing signal reconstructi on, ” IEEE T rans. Inf. Theory . , vo l. 55, no. 5, pp. 2230 –2249, May 2009. [49] T . Blumensath and M. E . Davie s, “Iterati ve hard threshold ing for compressed sensing, ” Appl. Comput. Harmon. Anal. , vol. 27, no. 3, pp. 265 – 274, 2009. [50] S. Foucart, “Hard thresholdin g pursuit: an algorit hm for compressi ve sensing, ” SIAM J. Numer . Anal. , vol. 49, no. 6, pp. 2543–2563, 2011. [51] R. Giryes and M. E lad, “Can we allo w line ar depe ndencies in the dic- tionary in the synthesis framew ork?” in IEEE International Confe rence on A coustic s, Speech and Signal Pr ocessing (ICASSP) , 2013. [52] ——, “Iterati ve hard thresholdi ng for signal recove ry using near optimal project ions, ” in 10th Int. Conf. on Sampling Theory Appl. (SAMPT A) , 2013. [53] ——, “OMP with highly coherent dictionarie s, ” in 10th Int. Conf. on Sampling Theory Appl. (SA MPT A) , 2013. [54] ——, “RIP-based near-oracl e performance guara ntees for SP, CoSaMP, and IHT, ” IEEE T rans. Signal Proc ess. , vol. 60, no. 3, pp. 1465–1468, March 2012. [55] E. J. Cand ` es, “Modern statistic al estimati on via oracle inequali ties, ” Acta Numerica , vol. 15, pp. 257–325, 2006. [56] J. Sav age and K. Chen, “On multigri ds for solvi ng a class of improv ed total varia tion based staircasi ng reduct ion models, ” in Ima ge Pr ocessing Based on P artial Dif fere ntial E quation s , ser . Mathema tics and V isual- izati on, X.-C. T ai, K.-A. Lie, T . Chan, and S. Osher, Eds. Springer Berlin Heidelber g, 2007, pp. 69–94. [57] D. Mumford and J. Shah, “Optimal approximations by piece wise smooth functio ns and associa ted vari ational problems, ” Communicati ons on Pure and Applied Mathemati cs , vol. 42, no. 5, pp. 577–685, 1989. [58] L. Am brosio and V . M. T ortorelli, “ Approximati on of funct ional depe nd- ing on jumps by ellipti c functiona l via t-c onv ergenc e, ” Communicat ions on Pure and Applied Mathematics , vol. 43, no. 8, pp. 999–1036, 1990. [59] P . F . Felze nszwalb and D. P . Huttenlocher , “Ef ficient graph-ba sed image segment ation, ” International J ournal of Computer V ision , vol. 59, no. 2, pp. 167–181, 2004. [60] D. Jupp, “ Approximatio n to data by splines with free knots, ” SIAM J ournal on Numerical Analysis , vol . 15, no. 2, pp. 328–343, 1978. [61] E. J. Cand ` es and D. L. Donoho, “Curv elets? a surprisingly ef fecti ve nonadapt iv e representati on for objects with edges, ” in Curves and Surface Fi tting: Saint-M alo 99 , C. R. A. Cohen and L. S chumak er , E ds. V anderbilt Uni versity Press, Nashville, 2000, p. 105?120. [62] D. L. Donoho, “Sparse components of images and optimal atomic de- compositio ns, ” Constructiv e Appr oximatio n , v ol. 17, no. 3, p. 353?382, 2001. [63] T . F . Chan and L. V ese, “ Acti ve contours without edges, ” IEEE T rans- actions on Image Proc essing , vol. 10, no. 2, pp. 266–277, Feb 2001. [64] T . Han, S. Kay , and T . Huang, “Optimal s egme ntation of signals and its applic ation to image denoising and boundary feature extracti on, ” in 16 IEEE International Confere nce on Image P r ocessing (ICIP). , vol. 4, oct. 2004, pp. 2693 – 2696 V ol. 4.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment